AI Chatbots Show Promise in Debunking Conspiracy Theories, Study Finds

3 Sources

[1]

Chatting with ChatGPT found to soften beliefs of conspiracy theorists

A study by MIT researchers found that a 10-minute conversation with ChatGPT can reduce belief in conspiracy theories by 20%. The AI model engaged participants in tailored dialogues, providing detailed counter-evidence. This approach proved effective in debunking conspiracies, with some participants disavowing their beliefs after the interaction.

A 'conversation' with a chatbot lasting under 10 minutes was found to soften the views of people who believed some of the most deeply entrenched conspiracy theories, such as those involving the COVID-19 pandemic, a new study has found. A team, including researchers from the Massachusetts Institute of Technology, US, instructed the AI model ChatGPT to be highly persuasive and to engage participants in tailored dialogues, such that participants' conspiratorial claims were factually rebutted by the chatbot. For this, the researchers leveraged advancements in GPT-4, the large language model powering ChatGPT, that "has access to vast amounts of information and the ability to generate bespoke arguments." A form of artificial intelligence, a large language model is trained on massive amounts of textual data and can, therefore, respond to users' requests in natural language.

The team found that among over 2,000 self-identified conspiracy believers, conversations with ChatGPT lowered the average participant's belief by about 20 per cent. Further, about a fourth of the participants, all of whom had previously believed a certain conspiracy theory, "disavowed" it after chatting with the AI-powered bot, the researchers said. "Large language models can thereby directly refute particular evidence each individual cites as supporting their conspiratorial beliefs," the authors wrote in the study published in the journal Science.

In two separate experiments, the participants were asked to describe a conspiracy theory they believed in and provide supporting evidence, following which they engaged in a conversation with the chatbot. "The conversation lasted 8.4 min on average and comprised three rounds of back-and-forth interaction (not counting the initial elicitation of reasons for belief from the participant), ..." the authors wrote. A control group of participants discussed an unrelated topic with the AI. "The treatment reduced participants' belief in their chosen conspiracy theory by 20 per cent on average. This effect persisted undiminished for at least 2 months..." the authors wrote.

Lead author Thomas Costello, an assistant professor of psychology at American University, said, "Many conspiracy believers were indeed willing to update their views when presented with compelling counter evidence." Costello added, "The AI provided page-long, highly detailed accounts of why the given conspiracy was false in each round of conversation -- and was also adept at being amiable and building rapport with the participants."

The researchers explained that until now, delivering persuasive, factual messages to a large sample of conspiracy theorists in a lab experiment has proved challenging. For one, conspiracy theorists are often well-informed about the conspiracy, sometimes more so than skeptics, they said. They added that conspiracy theories also vary widely, such that the evidence cited in support of a given theory can differ from one believer to another.

"Previous efforts to debunk dubious beliefs have a major limitation: One needs to guess what people's actual beliefs are in order to debunk them -- not a simple task," said co-author Gordon Pennycook, an associate professor of psychology at Cornell University, US. "In contrast, the AI can respond directly to people's specific arguments using strong counter evidence. This provides a unique opportunity to test just how responsive people are to counter evidence," Pennycook said.

As society debates the pros and cons of AI, the authors said that the AI's ability to connect across diverse topics within seconds can help tailor counterarguments to a believer's specific conspiracy theory in ways that aren't possible for a human.

[2]

AI Can Debunk Conspiracy Theories Better Than Humans

Scientists surprised themselves when they found they could instruct a version of ChatGPT to gently dissuade people of their beliefs in conspiracy theories -- such as notions that Covid-19 was a deliberate attempt at population control or that 9/11 was an inside job. The most important revelation wasn't about the power of AI, but about the workings of the human mind. The experiment punctured the popular myth that we're in a post-truth era where evidence no longer matters, and it flew in the face of a prevailing view in psychology that people cling to conspiracy theories for emotional reasons and that no amount of evidence can ever disabuse them.

[3]

New Study Suggests AI Could Convince Conspiracy Theorists They're Wrong

Can artificial intelligence technology help fix difficult problems? It's a generic question that you see more of these days, and the answer is typically no. But a new study published in the journal Science is hopeful that large language models may actually be a useful tool in changing the minds of conspiracy theorists who believe incredibly stupid things.

If you've ever talked with someone who believes ridiculous conspiracy theories -- from a belief that the Earth is flat to the idea humans never actually landed on the Moon -- you know they can be pretty set in their ways. They're often resistant to changing their minds, getting more and more mentally dug in as they insist something about the world is actually explained by some very implausible theory.

A new paper titled "Durably Reducing Conspiracy Beliefs Through Dialogues With AI" tested AI's ability to communicate with people who believed conspiracy theories and convince them to reconsider their worldview on a particular topic. The study involved two experiments with 2,190 Americans who used their own words to describe a conspiracy theory they earnestly believe. The participants were encouraged to explain the evidence they believe supports their theory, and then they engaged in a conversation with a bot built on the large language model GPT-4 Turbo, which would respond to the evidence given by the human participants. The control condition involved people talking with the AI chatbot about some topic unrelated to the conspiracy theories.

The study's authors wanted to try using AI because they guessed that the problem with tackling conspiracy theories is that there are so many of them, meaning that combatting those beliefs requires a level of specificity that can be difficult without special tools. And the authors, who recently spoke with Ars Technica, were encouraged by the results.

"The AI chatbot's ability to sustain tailored counterarguments and personalized in-depth conversations reduced their beliefs in conspiracies for months, challenging research suggesting that such beliefs are impervious to change," Ekeoma Uzogara, an editor, wrote about the study. The AI chatbot, known as Debunkbot, is even publicly available now for anyone who would like to try it out.

And while more study is needed on these kinds of tools, it's interesting to see people who find these kinds of tools useful for battling misinformation. Because, as anyone who's spent time online recently can tell you, there's a lot of nonsense out there. From Trump's lies about Haitian immigrants eating cats in Ohio to the idea that Kamala Harris was wearing a secret earpiece hidden in her earring during the presidential debate, there have been countless new conspiracy theories that emerged on the internet this week alone. And there's no indication that the pace of new conspiracy theories will slow down anytime soon. If AI can help fight against that, it can only be good for the future of humanity.

A recent study suggests that AI-powered chatbots, like ChatGPT, may be effective in softening the beliefs of conspiracy theorists. The research indicates that engaging with AI could lead to more balanced views on controversial topics.

AI Chatbots: A New Tool Against Misinformation

In an era where misinformation and conspiracy theories run rampant, a groundbreaking study has emerged, suggesting that artificial intelligence might be the key to combating false beliefs. Researchers have found that engaging with AI chatbots, particularly ChatGPT, can significantly soften the convictions of conspiracy theorists [1].

The Study's Methodology and Findings

The study, conducted by a team that included researchers from the Massachusetts Institute of Technology, American University, and Cornell University, was published in the journal Science [1]. Across two experiments, 2,190 Americans described in their own words a conspiracy theory they believed and the evidence they felt supported it [3]. These included notions that COVID-19 was a deliberate attempt at population control and that 9/11 was an inside job [2]. Participants then held a short conversation with a chatbot built on GPT-4 Turbo that responded to the evidence they had given, while a control group talked with the AI about an unrelated topic [3].

Participants who discussed their chosen conspiracy theory with the chatbot reduced their belief in it by about 20 per cent on average, and about a quarter of them disavowed the theory after the conversation. The authors report that the effect persisted undiminished for at least two months [1].

Why AI Might Be More Effective

Several factors contribute to the AI's effectiveness in this context. ChatGPT's responses are generally more patient, consistent, and less emotionally charged than human interactions. The AI can provide factual information without judgment, creating a non-threatening environment for users to explore alternative viewpoints [1].

Moreover, the anonymity of chatting with an AI might make individuals more open to challenging their beliefs without fear of social repercussions or embarrassment [2].

Potential Applications and Implications

The study's findings open up new possibilities for using AI in combating misinformation. Social media platforms and educational institutions could potentially integrate AI chatbots to provide users with factual information and encourage critical thinking [3].

However, experts caution that while AI shows promise, it should not be seen as a silver bullet. The technology still has limitations, and there are ethical considerations regarding the use of AI to influence beliefs [2].

Challenges and Limitations

Despite the positive results, several challenges remain. The effect was tracked for about two months, so its durability over longer periods remains unknown, and there's a risk that conspiracy theorists might eventually develop resistance to AI-based interventions. Additionally, because participants chose which conspiracy theory to discuss, results may vary from one topic to another [1].

Furthermore, as AI technology evolves, there's a concern that it could be used to spread misinformation as effectively as it debunks it. This underscores the importance of responsible AI development and deployment [3].

Summarized by Navi

Related Stories

AI Chatbot Successfully Challenges Conspiracy Theories, Study Finds

13 Sept 2024

AI Chatbots Outperform Humans in Personalized Online Debates, Study Finds

20 May 2025•Science and Research

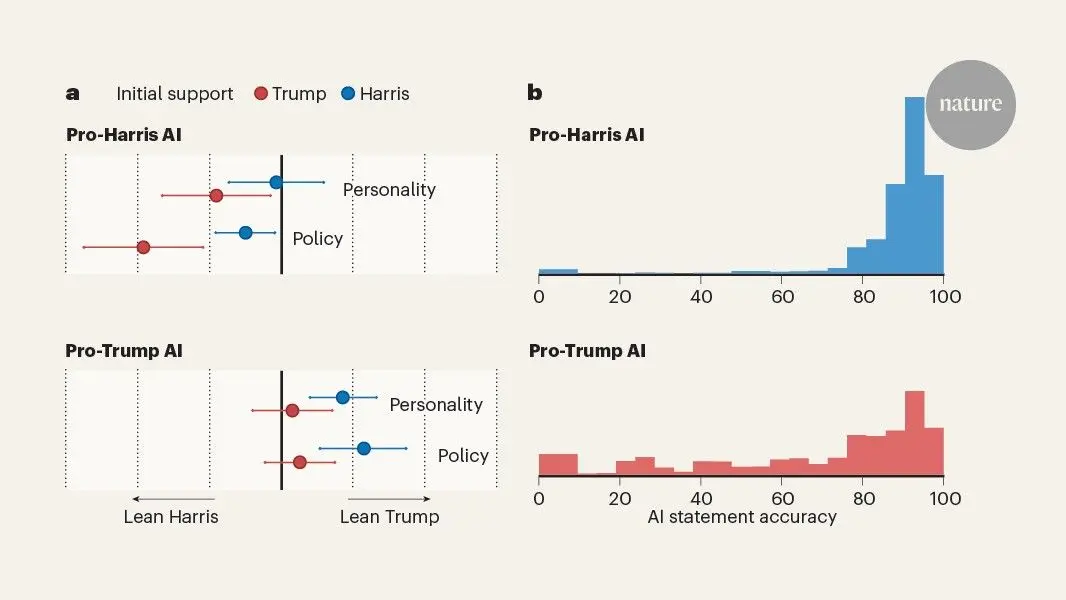

AI Chatbots Sway Voters More Effectively Than Traditional Political Ads, New Studies Reveal

04 Dec 2025•Science and Research
