Today's Top AI and Tech News

Top Stories

EU orders Meta to restore free WhatsApp access for rival AI assistants in antitrust crackdown

Anthropic expands Mythos AI model access to 200 organizations despite calling it too dangerous

ChatGPT caught recommending scam websites that steal your credit card info

Meta's AI chatbot flaw let hackers take over 34,000 Instagram accounts without passwords

Responsible AI

THE OUTPOST INSIGHT

Anthropic will strip cybersecurity features from public Mythos while the NSA already uses the full version for offensive operations.

Anthropic blocks cybersecurity queries in Claude Fable 5 launch amid AI safety concerns

Anthropic released Claude Fable 5, its first publicly available Mythos-class AI model, but with strict safety guardrails that block queries about cybersecurity, biology, and chemistry. The model automatically routes sensitive questions to the older Claude Opus 4.8, reflecting growing concerns about AI capabilities that could assist malicious actors in causing serious harm.

Anthropic expands Mythos AI model access to 200 organizations despite calling it too dangerous

Anthropic has expanded access to its Mythos AI model to 200 organizations across 15 countries, even while maintaining it's too dangerous for public release. The AI cybersecurity tool has discovered over 10,000 critical vulnerabilities, but only 14% have been patched. Meanwhile, OpenAI is launching a competing trusted-access program, creating a new power dynamic in cybersecurity.

German court rules Google liable for false claims in AI Overviews, setting legal precedent for AI

A Munich court ruled that Google is directly liable for false statements made by its AI Overviews, treating AI-generated summaries as the company's own speech rather than traditional search results. The temporary injunction, issued after Google's AI wrongly linked two publishers to scams, could establish a legal precedent for AI answer engines globally and reshape how companies are held accountable for AI-generated content.

AI agents reshape corporate structures as companies flatten hierarchies and redesign work

AI agents are fundamentally altering workplace dynamics as companies integrate autonomous systems capable of handling complex tasks. With adoption projected to surge 300% in two years, organizations are flattening management layers and redesigning up to 75% of roles by 2030. Early adopters report 30-50% productivity gains, but leaders face challenges in reskilling employees and maintaining human oversight.

Technology

THE OUTPOST INSIGHT

Google is pushing Gemini into every device tier at once, from 2GB budget phones to real-time translation, betting on ubiquity over exclusivity.

Google launches Gemini 3.5 Live Translate with instant voice translation across 70+ languages

Anthropic blocks cybersecurity queries in Claude Fable 5 launch amid AI safety concerns

Apple finally launches Siri AI overhaul with Google Gemini, two years after initial promise

Apple says its AI is still private, even when running on Google's servers with Nvidia hardware

AI Security

THE OUTPOST INSIGHT

Beijing accounts for most AI espionage while spending $295 billion on domestic data centers and topping robotics benchmarks, combining IP theft with homegrown capabilities.

China behind 58% of state-backed cyberattacks targeting US AI tech, CrowdStrike warns

A new CrowdStrike report reveals China-linked hackers are responsible for over 58% of state-sponsored cyberattacks on technology companies, specifically targeting AI assets and intellectual property. The findings highlight an escalating AI arms race as Beijing attempts to close the tech gap with the U.S. despite restrictions on access to advanced AI training chips.

Anthropic blocks cybersecurity queries in Claude Fable 5 launch amid AI safety concerns

Anthropic released Claude Fable 5, its first publicly available Mythos-class AI model, but with strict safety guardrails that block queries about cybersecurity, biology, and chemistry. The model automatically routes sensitive questions to the older Claude Opus 4.8, reflecting growing concerns about AI capabilities that could assist malicious actors in causing serious harm.

AI in cybersecurity: How vulnerability discovery now outpaces traditional defenses

Anthropic's Claude Mythos model autonomously discovered thousands of zero-day vulnerabilities across major operating systems, including one hidden for 27 years in OpenBSD. The breakthrough collapsed the exploit timeline from weeks to minutes, exposing a critical gap between AI-driven threat discovery and human-speed patch deployment that's forcing organizations to abandon prevention-only strategies.

Zscaler launches AI Broker and Endpoint AI Security to protect autonomous AI agents

Zscaler unveiled new products at Zenith Live 2026 to secure autonomous AI agents, claiming the industry's first complete zero-trust platform for agentic AI. The launch includes AI Broker for agent communications and Endpoint AI Security for device-level threats, alongside AI Access Graph powered by the $175 million Symmetry Systems acquisition.

AI Hardware

THE OUTPOST INSIGHT

Taiwan is turning its chip dominance into a weapon, threatening criminal prosecution for sales to China while Beijing spends $295 billion to escape that dependency.

Taiwan weighs criminal ban on AI chip exports to China as U.S. trade talks advance

Taiwan is considering far stricter export controls that would restrict AI chip sales to every customer in China, not only blacklisted firms like Huawei. The measure would let Taipei prosecute smuggling as a criminal offense for the first time, marking a significant shift in how the island nation handles technology exports to its neighbor.

Apple Intelligence now requires 12GB RAM for most advanced on-device AI features in iOS 27

Apple raised the bar for its most capable AI at WWDC 2026, announcing that iOS 27's most powerful on-device model needs 12GB of unified memory. The iPhone 17 Pro and iPhone Air make the cut, but the standard iPhone 17 with 8GB RAM doesn't. This marks the first time Apple has increased hardware requirements since Apple Intelligence launched two years ago.

Production AI reshapes private cloud as Broadcom debuts VMware Cloud Foundation 9.1

Broadcom launches VMware Cloud Foundation 9.1, targeting production AI workloads with up to 40% server cost reduction and enhanced security. The move reflects a broader shift as enterprises bring AI closer to enterprise data, with 56% now running or planning production inference on private cloud—while public cloud use drops 15% year-over-year.

Standard Bots hits $1bn valuation with $200M raise to build AI robots on American soil

New York-based Standard Bots raised $200 million in Series C funding led by RoboStrategy and General Catalyst, achieving unicorn status with a $1 billion valuation. The company builds AI-powered robotic arms that learn tasks through demonstration rather than coding, targeting a 10% share of US industrial robot deployments by year-end while expanding its Long Island manufacturing facility.

Business and Economy

THE OUTPOST INSIGHT

OpenAI, Anthropic, and SpaceX are racing to IPO at trillion-dollar valuations while simultaneously lobbying against the regulatory oversight that typically comes with being public companies.

OpenAI files confidential IPO as artificial intelligence industry faces trillion-dollar test

Banks prepare mass workforce reductions as AI adoption reshapes finance industry careers

AI Costs Explode as Companies Burn Through Annual Budgets in Months, Sparking Industry Crisis

Anthropic's Daniela Amodei outlines leaner AI strategy as company files confidentially for IPO

Claude

THE OUTPOST INSIGHT

Anthropic built an AI that creates full games from one prompt while blocking cybersecurity questions, even as it writes 80% of its own code.

Anthropic's Fable 5 generates video games and complex maps from single prompts, researcher finds

Anthropic released Claude Fable 5, its first public Mythos model, and AI researcher Ethan Mollick put it through its paces. From his tests, the model generated fully functional video games—including Snake, Strata, and a poetry-inspired game—all from one initial prompt. It also created a detailed isochronic map showing travel times between locations, completing tasks that once required entire development teams.

Anthropic blocks cybersecurity queries in Claude Fable 5 launch amid AI safety concerns

Anthropic released Claude Fable 5, its first publicly available Mythos-class AI model, but with strict safety guardrails that block queries about cybersecurity, biology, and chemistry. The model automatically routes sensitive questions to the older Claude Opus 4.8, reflecting growing concerns about AI capabilities that could assist malicious actors in causing serious harm.

Anthropic warns AI may soon build itself, calls for global pause on frontier development

Anthropic is urging the world's leading AI companies to consider pausing frontier AI development as its Claude chatbot now writes over 80% of the code merged into its systems. The company warns that AI systems may be approaching recursive self-improvement, where they can design their own successors with minimal human input, potentially increasing the risk of humans losing control of the technology.

Microsoft AI chief warns Anthropic's approach to Claude consciousness is 'really dangerous'

Mustafa Suleyman, Microsoft AI CEO, has publicly criticized Anthropic for speculating about Claude's potential consciousness in its model constitution. He argues this approach causes the AI to internalize ideas about its own feelings and suffering, calling it a philosophical failing that could complicate how advanced AI models behave at scale.

Startups

THE OUTPOST INSIGHT

Standard Bots reaches unicorn status making robots in America while Chinese firms dominate production at half the cost but struggle to find buyers.

Standard Bots hits $1bn valuation with $200M raise to build AI robots on American soil

Alta Ares raises €50M to scale AI drone interceptors already deployed in Ukraine

Beacon raises $225M to transform software acquisitions with AI-native platform

Sandstone raises $30M Series A to bring AI-powered workflow automation to in-house legal teams

iOS

THE OUTPOST INSIGHT

Apple is pushing AI tools that fix mistakes after the fact while rivals compete on generating perfect images from scratch.

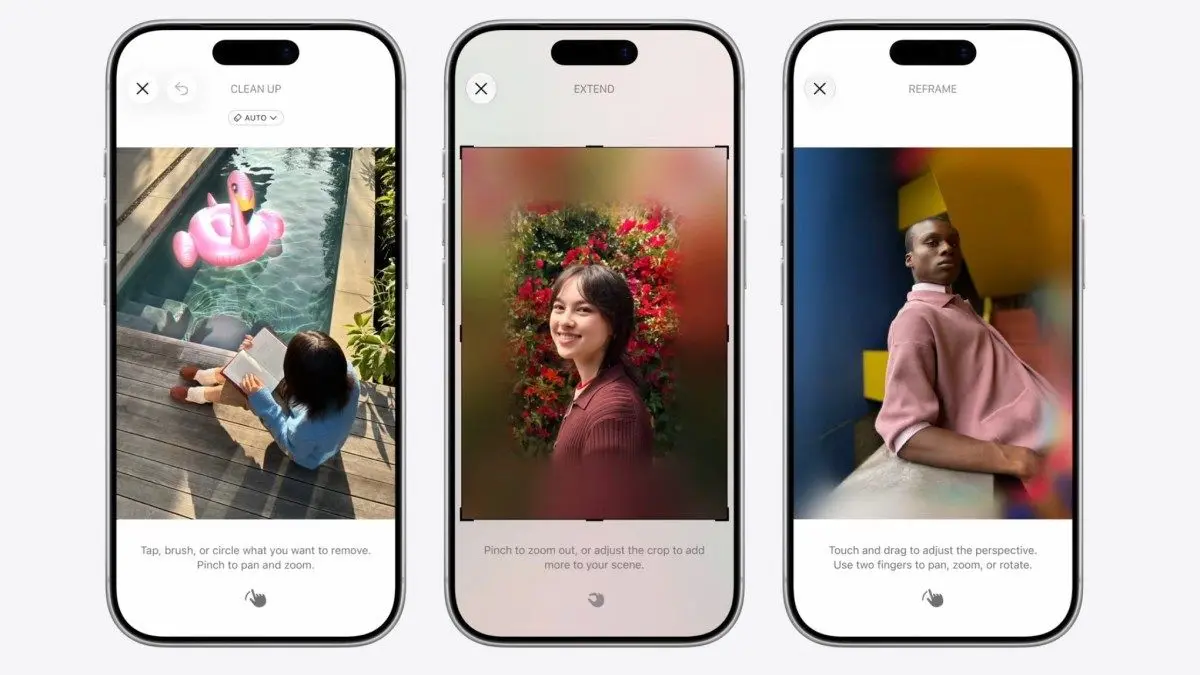

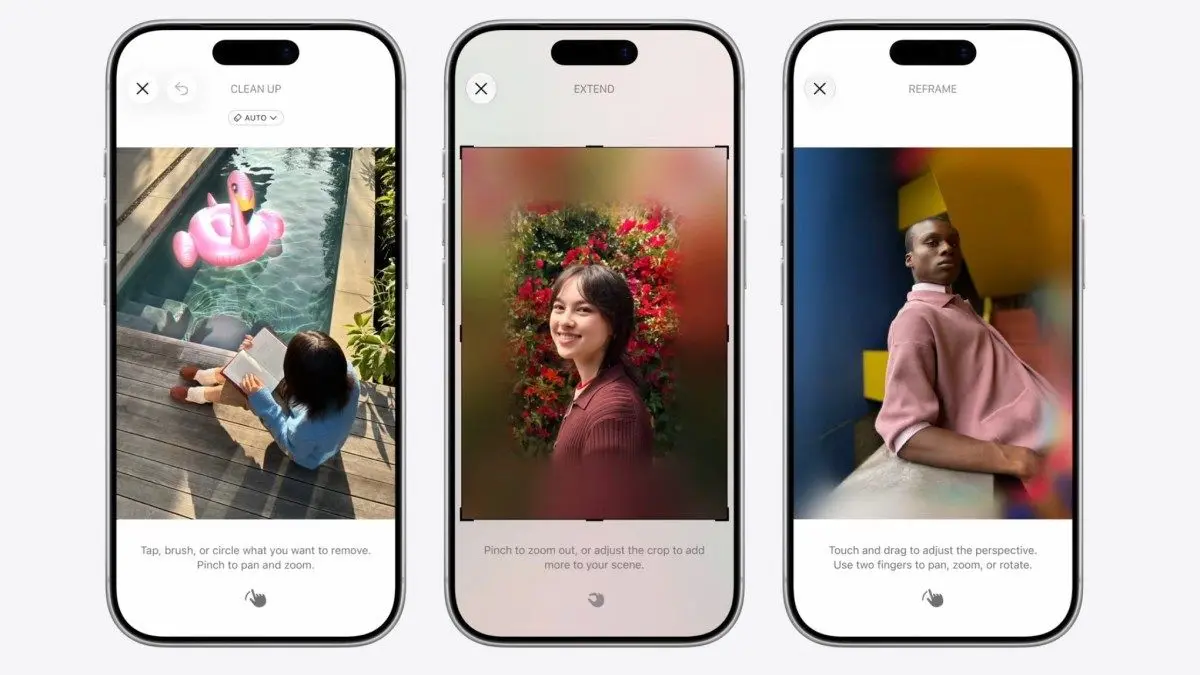

Apple Photos app gets AI editing features with Spatial Reframing, upgraded Clean Up, and Extend

Apple announced major AI photo editing upgrades at WWDC 2026, introducing Spatial Reframing to adjust perspective, an Extend tool to expand images, and an enhanced Clean Up feature. All edits will carry SynthID watermarks to identify AI-manipulated content, marking a shift in Apple's approach to photo authenticity.

Apple blocks Siri AI launch in EU, blames Digital Markets Act for indefinite delay

Apple announced it won't release its new AI-powered Siri in the European Union, citing conflicts with the Digital Markets Act's interoperability requirements. The company claims compliance would compromise user privacy and security, while the European Commission argues Apple is refusing to follow rules designed to promote competition and consumer choice.

Apple brings Siri AI directly into iPhone Camera app with new mode in iOS 27

Apple introduced Siri Mode for the iPhone Camera at WWDC 2026, integrating Visual Intelligence directly into the camera interface. Users can now tap a button to let Siri AI analyze what they're seeing—identifying objects, translating text, providing nutritional insights, and even splitting bills. The update also brings improved AI-powered photo editing features to the Photos app.

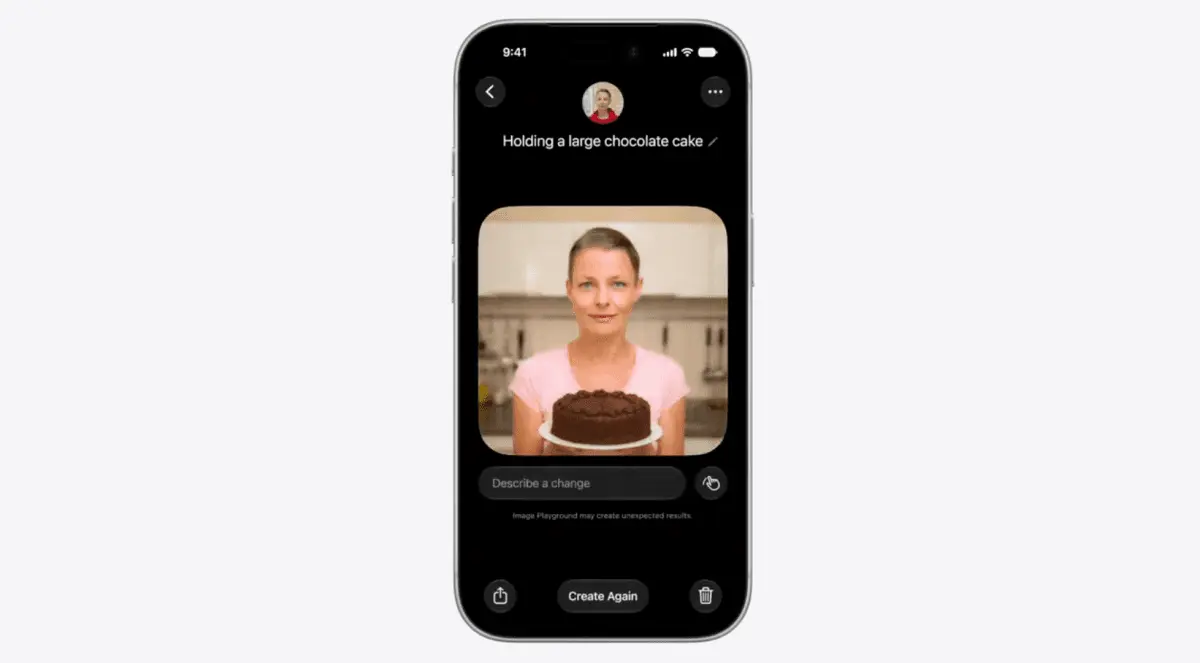

Apple Intelligence brings AI features across iPhone, iPad, and Mac with smarter apps and workflows

Apple unveiled the next generation of Apple Intelligence, integrating advanced AI features across devices including iPhone, iPad, and Mac. The update introduces photorealistic image generation, AI-powered shortcut creation via natural language, intelligent browsing tools in Safari, and powerful editing capabilities in Photos. Features include automatic tab management, one-tap password updates, and a revamped Siri AI with cross-app context awareness.

AI Funding

THE OUTPOST INSIGHT

Meta is building AI infrastructure in tents in Ohio but going hyperscale with Reliance in India, betting different markets need radically different speed versus scale strategies.

Meta and Reliance forge $100M AI partnership with India's first hyperscale data center deal

Meta Platforms has secured its first India data center deal with Reliance Industries, leasing a 168-megawatt AI-enabled data center in Jamnagar, Gujarat. The agreement includes a $100 million joint venture to deploy Llama-based enterprise AI solutions across Indian businesses. The facility will run on renewable energy and desalinated seawater, marking a significant expansion in India's rapidly growing data center ecosystem.

OpenAI files confidential IPO as artificial intelligence industry faces trillion-dollar test

OpenAI submitted a confidential IPO filing with the SEC, positioning itself for a potential $1 trillion public debut. The ChatGPT maker joins rivals Anthropic and SpaceX in racing to public markets, but faces scrutiny over profitability and whether the AI industry boom can sustain its massive valuations and infrastructure investments.

Standard Bots hits $1bn valuation with $200M raise to build AI robots on American soil

New York-based Standard Bots raised $200 million in Series C funding led by RoboStrategy and General Catalyst, achieving unicorn status with a $1 billion valuation. The company builds AI-powered robotic arms that learn tasks through demonstration rather than coding, targeting a 10% share of US industrial robot deployments by year-end while expanding its Long Island manufacturing facility.

Beacon raises $225M to transform software acquisitions with AI-native platform

Beacon, led by ex-Instacart president Nilam Ganenthiran, has raised $225 million in Series C funding to accelerate its AI roll-up strategy. The startup acquires small, profitable software companies serving underserved industries and rebuilds them on a shared AI-native platform, closing roughly one deal per week while driving over 50% EBITDA growth across its portfolio.

Meta AI

THE OUTPOST INSIGHT

Meta rolled out its AI customer support tool to a million businesses on WhatsApp, then blocked rival chatbots from competing; the EU's deadline lands as monetization begins.

EU orders Meta to restore free WhatsApp access for rival AI assistants in antitrust crackdown

The European Commission has ordered Meta to restore free WhatsApp access for rival AI chatbots within five days, marking only the second time in over 20 years that the EU has used emergency interim measures. Meta faces potential fines of up to 10% of annual revenue—approximately $20 billion—if it fails to comply while the antitrust investigation continues.

Meta's AI chatbot flaw let hackers take over 34,000 Instagram accounts without passwords

A critical AI security vulnerability in Meta's customer service system allowed hackers to reset Instagram account passwords simply by asking a chatbot. The breach affected 34,000 accounts, including Barack Obama's former White House page and major brands like Sephora. Of those, 20,000 were fully compromised, exposing personal data including email addresses and phone numbers.

Meta expands data usage to personalize feeds and AI responses with third-party user data

Meta announced it will use data shared by other businesses to personalize content on Facebook and Instagram feeds and tailor AI responses. The change affects how off-platform activity data influences user experience beyond targeted advertising. The update rolls out next month in the US and select countries, while Meta streamlines privacy settings by consolidating data controls.

Meta and Reliance forge $100M AI partnership with India's first hyperscale data center deal

Meta Platforms has secured its first India data center deal with Reliance Industries, leasing a 168-megawatt AI-enabled data center in Jamnagar, Gujarat. The agreement includes a $100 million joint venture to deploy Llama-based enterprise AI solutions across Indian businesses. The facility will run on renewable energy and desalinated seawater, marking a significant expansion in India's rapidly growing data center ecosystem.

Edge AI

THE OUTPOST INSIGHT

Apple is fragmenting its own iPhone lineup by specs, not just age, forcing buyers to choose Pro models for features that used to come standard.

Apple Intelligence now requires 12GB RAM for most advanced on-device AI features in iOS 27

Apple raised the bar for its most capable AI at WWDC 2026, announcing that iOS 27's most powerful on-device model needs 12GB of unified memory. The iPhone 17 Pro and iPhone Air make the cut, but the standard iPhone 17 with 8GB RAM doesn't. This marks the first time Apple has increased hardware requirements since Apple Intelligence launched two years ago.

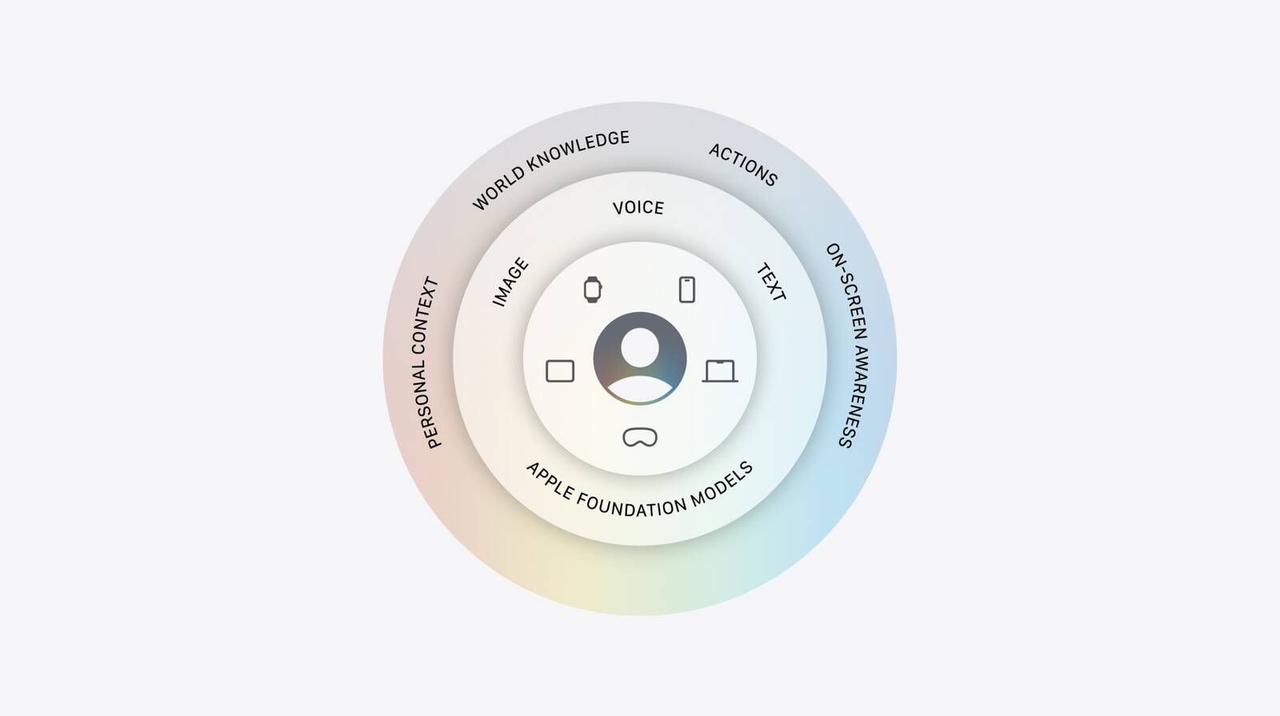

Apple Intelligence relies on Google and Nvidia tech while downplaying Gemini's contributions

At WWDC 2026, Apple revealed its Apple Intelligence architecture combines on-device and cloud-based AI models, with Google's Gemini used for distillation and Nvidia GPUs handling complex cloud processing. The company emphasized its privacy-first approach through Private Cloud Compute while clarifying it uses none of Google's customer-facing Gemini models or infrastructure.

Apple's cautious approach to AI proves smarter than competitors' $900 billion spending spree

For years, critics claimed Apple was losing the AI race. But the company's measured AI strategy is proving financially sound as it spends just $14 billion compared to competitors' cumulative $900 billion commitment. With the launch of Siri AI powered by Google Gemini, Apple positions itself as the AI company focused on user privacy and trust rather than chasing hype.

Meta removes face recognition code from smart glasses app after discovery sparks privacy uproar

Meta embedded facial recognition technology in its AI companion app for smart glasses, which has been downloaded over 50 million times, then quietly removed it after the code was discovered. The NameTag feature would have identified people through the glasses' camera and converted faces into biometric data. This comes despite Meta's 2021 promise to sunset face recognition and $2 billion in privacy settlements.

Llama

THE OUTPOST INSIGHT

Local AI users are choosing command-line tools over polished interfaces because saving 1.2 GB of VRAM means running better models on the same hardware.

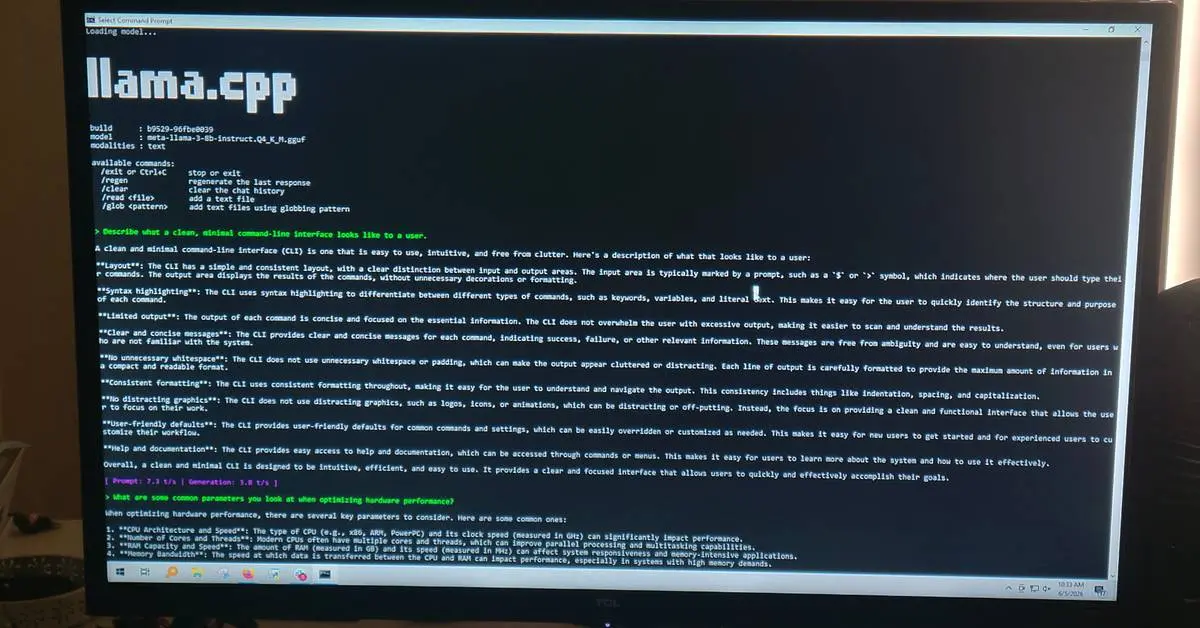

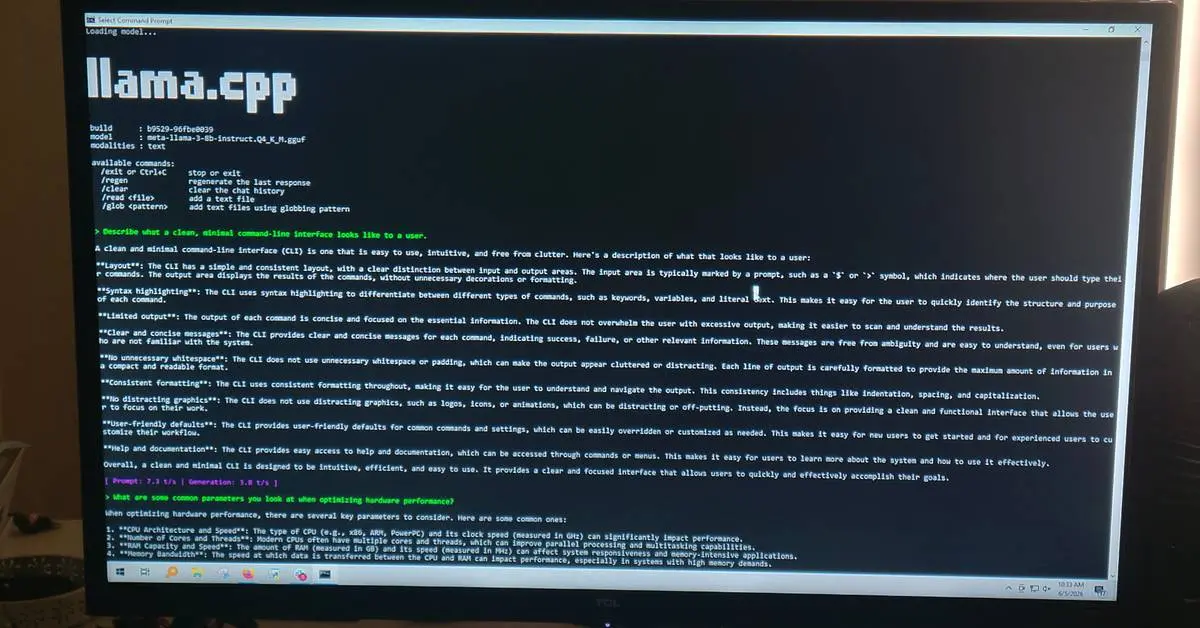

Users ditch LM Studio for leaner alternatives as GUI wrappers consume critical system resources

Developers running large language models locally are abandoning LM Studio in favor of llama.cpp and Ollama, citing significant performance gains and reduced resource overhead. While LM Studio offers a polished interface, users report it consumes up to 1.2 GB of GPU VRAM just for background operations, limiting which models can run on systems with 8 GB cards. The shift highlights a growing preference for command-line tools that deliver faster processing and immediate access to new features.
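To make the VRAM arithmetic concrete, here is a rough sketch of why 1.2 GB matters on an 8 GB card. Only the 8 GB and 1.2 GB figures come from the report; the 14-billion-parameter, 4-bit model is a hypothetical example, the helper names are invented for illustration, and the estimate counts weights only, ignoring the context cache and runtime buffers that a real setup also needs. Treating a command-line tool as having zero background overhead is likewise an idealization.

```python
def usable_vram_gb(card_gb: float, overhead_gb: float) -> float:
    """VRAM left for the model once background overhead is subtracted."""
    return card_gb - overhead_gb


def weights_gb(params_billion: float, bits_per_weight: float) -> float:
    """Approximate weight size in decimal GB: parameters x bits / 8."""
    return params_billion * bits_per_weight / 8


def fits(params_billion: float, bits_per_weight: float,
         card_gb: float, overhead_gb: float) -> bool:
    """True if the weights alone fit in the VRAM that remains."""
    return weights_gb(params_billion, bits_per_weight) <= usable_vram_gb(card_gb, overhead_gb)


if __name__ == "__main__":
    CARD_GB = 8.0          # card size from the report
    GUI_OVERHEAD_GB = 1.2  # reported upper bound for background use

    # Hypothetical model: 14B parameters quantized to 4 bits per weight.
    print("weights:", weights_gb(14, 4), "GB")
    print("fits with GUI overhead:", fits(14, 4, CARD_GB, GUI_OVERHEAD_GB))
    print("fits with no overhead: ", fits(14, 4, CARD_GB, 0.0))
```

Under these assumptions the hypothetical model's weights fit only when the overhead is gone, which is the trade-off users describe.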

Meta and Reliance forge $100M AI partnership with India's first hyperscale data center deal

Meta Platforms has secured its first India data center deal with Reliance Industries, leasing a 168-megawatt AI-enabled data center in Jamnagar, Gujarat. The agreement includes a $100 million joint venture to deploy Llama-based enterprise AI solutions across Indian businesses. The facility will run on renewable energy and desalinated seawater, marking a significant expansion in India's rapidly growing data center ecosystem.

MIT uses Battleship game to teach AI models smarter problem solving and strategic thinking

Researchers at MIT and Harvard turned the classic Battleship game into an AI training ground, revealing a critical weakness in today's systems: they excel at answering questions but struggle to ask them. By teaching AI models to plan better questions, smaller models like Llama 4 Scout achieved an 82% win rate against humans—up from just 8%—while operating at a fraction of the cost of larger frontier models.

Did you know?

Synthetic Data

Synthetic data is artificially generated information created by AI rather than collected from the real world. It's increasingly used to train models when real data is scarce, expensive, or privacy-sensitive.

Videos

The company building God wants a kill switch...

Fireship

Taiwan Eyes China AI Chip Sales Curbs; OpenAI Files for IPO | Bloomberg Tech 6/09/2026

Bloomberg Technology

Apple WWDC 2026 keynote in 25 minutes

The Verge

From Your Feed / Also in the News

Google launches Gemini 3.5 Live Translate with instant voice translation across 70+ languages

Google unveiled Gemini 3.5 Live Translate, an advanced speech-to-speech AI translation model that enables real-time conversations across more than 70 languages. The model processes audio continuously with minimal delay while preserving speaker tone and intonation. It's rolling out to Google Translate, Google Meet, and developers via the Gemini Live API.
