Anthropic accuses Chinese AI labs of industrial-scale theft as chip export debate intensifies

38 Sources

[1]

Anthropic accuses Chinese AI labs of mining Claude as US debates AI chip exports | TechCrunch

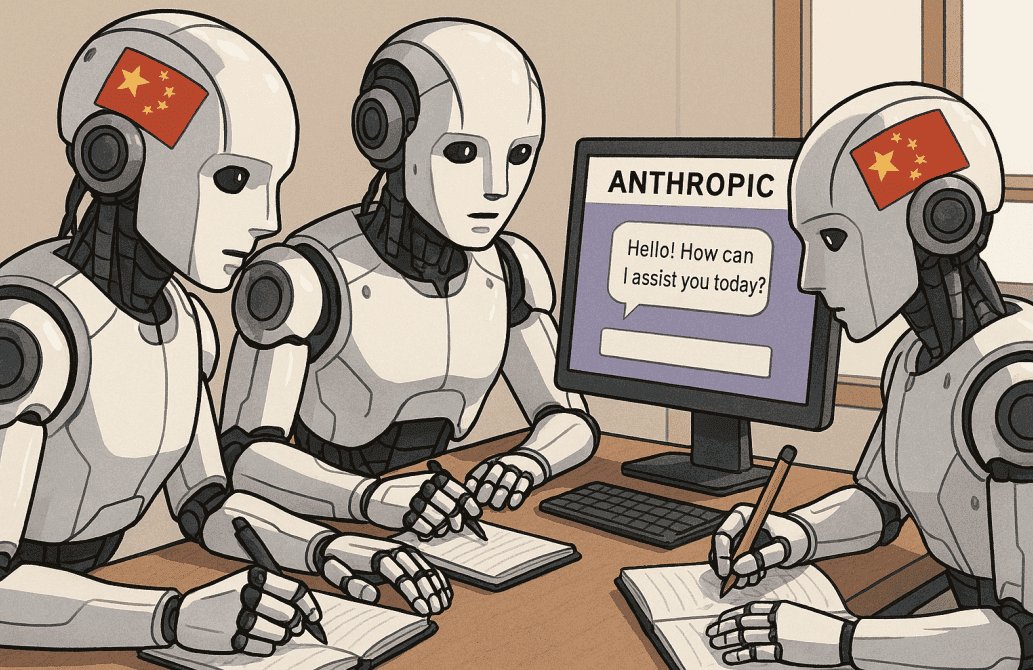

Anthropic is accusing three Chinese AI companies of setting up more than 24,000 fake accounts with its Claude AI model to improve their own models. The labs -- DeepSeek, Moonshot AI, and MiniMax -- allegedly generated more than 16 million exchanges with Claude through those accounts using a technique called "distillation." Anthropic said the labs "targeted Claude's most differentiated capabilities: agentic reasoning, tool use, and coding." The accusations come amid debates over how strictly to enforce export controls on advanced AI chips, a policy aimed at curbing China's AI development. Distillation is a common training method that AI labs use on their own models to create smaller, cheaper versions, but competitors can use it to essentially copy the homework of other labs. OpenAI sent a memo to House lawmakers earlier this month accusing DeepSeek of using distillation to mimic its products. DeepSeek first made waves a year ago when it released its open-source R1 reasoning model that nearly matched American frontier labs in performance at a fraction of the cost. DeepSeek is expected to soon release DeepSeek V4, its latest model, which reportedly can outperform Anthropic's Claude and OpenAI's ChatGPT in coding. The three campaigns differed in scale and scope. Anthropic tracked more than 150,000 exchanges from DeepSeek that seemed aimed at improving foundational logic and alignment, specifically around censorship-safe alternatives to policy-sensitive queries. Moonshot AI had more than 3.4 million exchanges targeting agentic reasoning and tool use, coding and data analysis, computer-use agent development, and computer vision. Last month, the firm released a new open-source model, Kimi K2.5, and a coding agent. MiniMax's 13 million exchanges targeted agentic coding and tool use and orchestration. Anthropic said it was able to observe MiniMax in action as it redirected nearly half its traffic to siphon capabilities from the latest Claude model when it was launched. 
Anthropic says it will continue to invest in defenses that make distillation attacks harder to execute and easier to identify, but is calling on "a coordinated response across the AI industry, cloud providers, and policymakers." The distillation attacks come at a time when American chip exports to China are still hotly debated. Last month, the Trump administration formally allowed U.S. companies like Nvidia to export advanced AI chips (like the H200) to China. Critics have argued that this loosening of export controls increases China's AI computing capacity at a critical time in the global race for AI dominance. Anthropic says that the scale of extraction DeepSeek, MiniMax, and Moonshot performed "requires access to advanced chips." "Distillation attacks therefore reinforce the rationale for export controls: restricted chip access limits both direct model training and the scale of illicit distillation," per Anthropic's blog. Dmitri Alperovitch, chairman of the Silverado Policy Accelerator think-tank and co-founder of CrowdStrike, told TechCrunch he's not surprised to see these attacks. "It's been clear for a while now that part of the reason for the rapid progress of Chinese AI models has been theft via distillation of US frontier models. Now we know this for a fact," Alperovitch said. "This should give us even more compelling reasons to refuse to sell any AI chips to any of these [companies], which would only advantage them further." Anthropic also said distillation doesn't only threaten to undercut American AI dominance, but could also create national security risks. "Anthropic and other U.S. companies build systems that prevent state and non-state actors from using AI to, for example, develop bioweapons or carry out malicious cyber activities," reads Anthropic's blog post. "Models built through illicit distillation are unlikely to retain those safeguards, meaning that dangerous capabilities can proliferate with many protections stripped out entirely." 
Anthropic pointed to authoritarian governments deploying frontier AI for things like "offensive cyber operations, disinformation campaigns, and mass surveillance," a risk that is multiplied if those models are open-sourced. TechCrunch has reached out to DeepSeek, MiniMax, and Moonshot for comment.

[2]

Anthropic accuses DeepSeek and other Chinese firms of using Claude to train their AI

Anthropic claims DeepSeek and two other Chinese AI companies misused its Claude AI model in an attempt to improve their own products. In an announcement on Monday, Anthropic says the "industrial-scale campaigns" involved the creation of around 24,000 fraudulent accounts and more than 16 million exchanges with Claude, as reported earlier by The Wall Street Journal. The three companies -- DeepSeek, MiniMax, and Moonshot -- are accused of "distilling" Claude, or training a smaller AI model based on a more advanced one. Though Anthropic says that distillation is a "legitimate training method," it adds that it can "also be used for illicit purposes," including "to acquire powerful capabilities from other labs in a fraction of the time, and at a fraction of the cost, that it would take to develop them independently." Anthropic adds that illicitly distilled models are "unlikely" to carry over existing safeguards. "Foreign labs that distill American models can then feed these unprotected capabilities into military, intelligence, and surveillance systems -- enabling authoritarian governments to deploy frontier AI for offensive cyber operations, disinformation campaigns, and mass surveillance," Anthropic writes. DeepSeek, which caused a stir in the AI industry for its powerful but more efficient models, held over 150,000 exchanges with Claude and targeted its reasoning capabilities, according to Anthropic. It's also accused of using Claude to generate "censorship-safe alternatives to politically sensitive questions about dissidents, party leaders, or authoritarianism." In a letter to lawmakers last week, OpenAI similarly accused DeepSeek of "ongoing efforts to free-ride on the capabilities developed by OpenAI and other U.S. frontier labs." Moonshot and MiniMax had more than 3.4 million and 13 million exchanges with Claude, respectively. 
Anthropic is calling on other members in the AI industry, cloud providers, and lawmakers to address distillation, adding that "restricted chip access" could limit model training and "the scale of illicit distillation."
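
As a quick sanity check, the per-lab figures quoted above can be added up and compared with the headline total of "more than 16 million" exchanges. The sketch below uses only the lower bounds reported in the coverage (the exact counts are not given), and the variable names are illustrative.

```python
# Reported per-lab exchange counts. These are the lower bounds quoted in the
# coverage ("over 150,000", "more than 3.4 million", "13 million"), not exact figures.
reported_exchanges = {
    "DeepSeek": 150_000,
    "Moonshot": 3_400_000,
    "MiniMax": 13_000_000,
}

total = sum(reported_exchanges.values())
shares = {lab: count / total for lab, count in reported_exchanges.items()}

print(f"combined lower bound: {total:,}")
for lab, share in shares.items():
    print(f"{lab}: {share:.1%} of reported exchanges")
```

The lower bounds sum to 16.55 million, which is consistent with the headline figure, and MiniMax accounts for roughly four-fifths of the reported traffic.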

[3]

Anthropic Slams China for AI Theft, But Critics Say the Outrage Is Hypocritical

Anthropic is accusing Chinese developers of stealing Claude chatbot trade secrets, but critics note that Anthropic itself has a record of scraping the internet for AI training. On Monday, the San Francisco company said the Chinese firms behind DeepSeek, Moonshot AI, and MiniMax "created over 24,000 fraudulent accounts and generated over 16 million exchanges with Claude, extracting its capabilities to train and improve their own models." The company claims the Chinese developers are essentially trying to clone Claude by tricking the chatbot into revealing "the internal reasoning behind a completed response." Last year, OpenAI accused DeepSeek of doing the same, but for its own AI models. In Anthropic's case, the company already blocks commercial access to China for national security reasons. However, the Chinese AI developers allegedly bypass those restrictions by tapping "commercial proxy services which resell access to Claude and other frontier AI models at scale." These "hydra clusters" then use "sprawling networks of fraudulent accounts that distribute traffic across our API as well as third-party cloud platforms," the company says. In one case, a single proxy network managed more than 20,000 fraudulent accounts simultaneously. Anthropic examined the metadata on Claude chatbot requests and traced them to staffers at DeepSeek and Moonshot AI. "These campaigns are growing in intensity and sophistication," the company warned. "The window to act is narrow, and the threat extends beyond any single company or region. Addressing it will require rapid, coordinated action among industry players, policymakers, and the global AI community." But rather than receiving support, Anthropic is facing claims of hypocrisy on social media since it has a controversial record of scraping data across the internet to train its AI. This has allegedly included using numerous copyrighted books without the authors' permission to develop Claude. 
"Sorry but Anthropic can't have it both ways," tweeted programmer Gergely Orosz, who publishes a newsletter for software engineers. "Also let's not forget how Anthropic itself trained Claude: on copyrighted books, only paying copyright holders after a lawsuit." Elon Musk, who founded xAI, also chimed in, tweeting, "How dare they [China] steal the stuff Anthropic stole from human coders??" alleging that the company has been training its AI on projects from software developers without their permission. Still, the news from Anthropic suggests the company plans on taking stronger action to prevent China from accessing its technology. In addition to shutting off access, Anthropic also called for more export controls to prevent advanced US chips from falling into China's hands. "The apparently rapid advancements made by these [Chinese] labs are incorrectly taken as evidence that export controls are ineffective and able to be circumvented by innovation. In reality, these advancements depend in significant part on capabilities extracted from American models, and executing this extraction at scale requires access to advanced chips," Anthropic says. DeepSeek and the developers of Moonshot AI and MiniMax didn't immediately respond to a request for comment.

[4]

Anthropic accuses DeepSeek, other Chinese AI developers of 'industrial-scale' copying -- Claims 'distillation' included 24,000 fraudulent accounts and 16 million exchanges to train smaller models

Anthropic on Monday accused three leading Chinese developers of frontier AI models of using large-scale distillation to improve their own models by drawing on the capabilities of Anthropic's Claude. In total, DeepSeek, Moonshot, and MiniMax made 16 million exchanges using 24,000 fraudulent accounts. Distillation is a machine learning technique in which a smaller or less capable model is trained on the outputs of a stronger model instead of using actual data to train. It can save time, create cheaper, more specialized models, extract capabilities from competitors, and/or lower requirements for hardware capabilities. While distillation is generally a legitimate technique, when a China-based entity subject to heavy restrictions does it, it violates both U.S. export controls and the end-user license agreement with Anthropic. "Distillation can be legitimate: AI labs use it to create smaller, cheaper models for their customers," a statement by Anthropic published on X reads. "But foreign labs that illicitly distill American models can remove safeguards, feeding model capabilities into their own military, intelligence, and surveillance systems." American companies like OpenAI have long accused DeepSeek of using distillation to train some of its frontier models using outputs of ChatGPT and other services, but have not presented a detailed explanation, unlike Anthropic.

How Chinese companies use distillation from American AI models

According to Anthropic, the perpetrators followed the same pattern: they used commercial services that resell access to frontier models and built what the company calls 'hydra cluster' networks -- large pools of accounts that spread traffic across Anthropic's API and third-party clouds. In one case, a single proxy setup allegedly controlled more than 20,000 fraudulent accounts at once. To avoid raising flags, it mixed extraction traffic with ordinary use requests. 
However, its prompt patterns stood out: very high volumes, tightly focused on specific capabilities, and highly repetitive. Such behavior was consistent with model training, but certainly not typical end-user interaction. DeepSeek alone generated over 150,000 exchanges that targeted reasoning tasks, rubric-based grading suitable for reinforcement learning reward models, and censorship-safe rewrites of politically sensitive queries, according to Anthropic. Anthropic also observed prompts designed to produce step-by-step internal reasoning and therefore reveal chain-of-thought training data. Moonshot, known for its Kimi models, accounted for more than 3.4 million exchanges, according to Anthropic. Its focus areas included agentic reasoning, tool use, coding, data analysis, computer-use agents, and computer vision. Moonshot allegedly used hundreds of fraudulent accounts spanning multiple access pathways and later tried to extract and reconstruct Claude's reasoning traces. MiniMax conducted the largest campaign with over 13 million exchanges that targeted agentic coding and orchestration. Anthropic says it detected this operation while it was still ongoing, as MiniMax was training its model that was to be released in the future, which provides the American company a unique view on the lifecycle of the extraction. After Anthropic introduced a new Claude model, MiniMax allegedly redirected nearly half its traffic within 24 hours to capture capabilities from the latest model.

Anthropic's response

To fight future distillation attempts, Anthropic says it is strengthening defenses to make large-scale distillation harder to carry out and easier to detect. The company has deployed classifiers and behavioral fingerprinting systems to identify extraction patterns in API traffic, including chain-of-thought elicitation and coordinated multi-account activity. 
The company is also sharing technical indicators of large-scale distillation operations with other AI labs, cloud providers, and authorities, as well as tightening verification for educational, research, and startup accounts often used to create fraudulent access. In parallel, it is developing product-, API-, and model-level safeguards to reduce the usefulness of outputs for illicit training without harming legitimate users. At the same time, the company admits that countering attacks at this scale requires coordinated industry and policy action.
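
The traffic pattern described above (very high volume, a narrow capability focus, and heavy repetition) can be illustrated with a toy per-account heuristic. This is a minimal sketch under stated assumptions, not Anthropic's classifier, whose design is not public. The `AccountStats` type, the per-prompt topic labels, and every threshold are hypothetical, and exact-match deduplication would miss the slightly varied prompts that real campaigns reportedly use.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class AccountStats:
    """Hypothetical per-account summary of API traffic."""
    account_id: str
    prompts: list[str]  # raw prompt texts sent by the account
    topics: list[str]   # one assumed topic label per prompt


def repetition_ratio(prompts: list[str]) -> float:
    """Fraction of prompts that duplicate another prompt after normalization."""
    if not prompts:
        return 0.0
    normalized = [" ".join(p.lower().split()) for p in prompts]
    return 1.0 - len(set(normalized)) / len(normalized)


def topic_concentration(topics: list[str]) -> float:
    """Share of prompts that fall under the single most common topic label."""
    if not topics:
        return 0.0
    return Counter(topics).most_common(1)[0][1] / len(topics)


def looks_like_extraction(
    stats: AccountStats,
    min_volume: int = 1000,          # assumed threshold, illustration only
    min_repetition: float = 0.5,     # assumed threshold, illustration only
    min_concentration: float = 0.8,  # assumed threshold, illustration only
) -> bool:
    """Flag an account only when all three signals are present at once."""
    return (
        len(stats.prompts) >= min_volume
        and repetition_ratio(stats.prompts) >= min_repetition
        and topic_concentration(stats.topics) >= min_concentration
    )


# A synthetic account that cycles through ten rubric-grading templates.
heavy = AccountStats(
    account_id="acct-hypothetical-1",
    prompts=[f"Grade this answer against rubric {i % 10}" for i in range(2000)],
    topics=["rubric-grading"] * 2000,
)
print(looks_like_extraction(heavy))
```

Requiring all three signals together keeps a merely busy customer or a merely repetitive one from being flagged. A production system would also need to correlate activity across accounts, since the reported campaigns spread traffic over thousands of them.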

[5]

The AI Power Struggle Is Out of Silicon Valley's Control

Anthropic PBC's latest complaint against three Chinese labs is a warning sign for Silicon Valley: Don't expect to earn too much from the competitive edge your model gives you. Companies from the developing world will line up to swim across your moat, if they can -- and neither the US government nor your lawyers will be able to help you. As the music industry and Big Pharma could tell them, nobody will eliminate your rivals, you have to accommodate them. The company says it has pretty solid evidence that DeepSeek, MiniMax Group Inc. and Moonshot ran "industrial-scale campaigns" to "illicitly extract" the capabilities of its own model, Claude. That involved over 24,000 accounts that Anthropic described as fraudulent, and 16 million exchanges that were "in violation of our terms of service and regional access restrictions." There's just one problem: Laws that are unenforceable might get ignored. And if they are both unenforceable and profitable to break, then they certainly will be brushed aside. It might sound like this is straightforward theft, or industrial espionage. Anthropic owns Claude and these three labs in China are stealing it. Unfortunately, that doesn't hold up to closer examination. First, "distillation" -- what DeepSeek et al are accused of -- is, as the American company itself admits, a "widely used and legitimate training method." Where it gets murky is when your competitor decides to use that legitimate tactic to try and narrow the gap between the two of you. Anthropic says this is "illicit," which is one of the most wonderfully misleading words in the English language. It can mean "illegal." But it could also mean "frowned upon by custom and morality." In this case, the wronged party wants us to think the first, when it can really only claim the second. But many legal scholars argue that "generative AI companies probably don't own rights over either their models or the output of those models." That's why the three upstarts can't be accused of theft. 
Instead, they are "in violation of the terms of reference." As everyone who has grown up on the internet knows, only three people have ever read terms of reference online, and those were the first three lawyers to log on to the World Wide Web in 1993. After that, even they started clicking through automatically. People can be, and are, successfully prosecuted for unauthorized access to systems or for copyright infringement -- but not for breaching a website's terms of reference. Such claims don't seem to have real legal force at the moment. But even if they do, Silicon Valley will have to deal with an additional problem: How will they enforce them across the world? The more important that AI becomes, and the more revenue that the frontline labs try to make from it, the more likely it is that both governments and companies in other parts of the globe will refuse to comply with Silicon Valley's expectations and Washington's demands. Look at pharmaceuticals, for example. India never accepted the notion that life-saving drugs could be fully protected. If a generics manufacturer could replicate it through a different process, they were allowed to. The same will happen with AI: If it is possible to copy it, distill it, break the license, or force it open politically, then countries will allow -- perhaps even encourage -- their companies to do so. Distillation by hostile or irresponsible actors is a genuine concern. A frontline model that's stripped of safety and privacy guardrails could lead to nightmare scenarios. But then, if the US is forcing Anthropic to ditch its own safety pledges -- as the Pentagon is attempting to do -- can it really complain about other governments?
Companies can't rely on US law to protect them internationally. They will have to throw more resources into preventing distillation at this scale, and simultaneously expose their systems to millions of queries, while preventing any combination of those queries from revealing too much to any one hidden actor. Perhaps some brilliant technical countermeasures will succeed at this task. But, right now, it seems like a tough ask. The music industry could remind Silicon Valley that it ended piracy not by complaining about the law, but by making products more accessible and raising revenues elsewhere. Big Pharma had to accept compulsory licensing by governments, as well as make its peace with the generics sector. They did so because otherwise they faced the possibility that the very idea of intellectual property would be contested. Give the world a stake in the system you want to legitimize, or it will find a way to break it. Illicitly, perhaps, but they won't care.

[6]

Anthropic misanthropic toward China's AI labs

Says DeepSeek, Moonshot, and MiniMax are using 'distillation' to gin up their own models

Having built a business by remixing content created by others, Anthropic worries that Chinese AI labs are stealing its data. The US-based maker of Claude models on Monday accused China-based DeepSeek, Moonshot AI, and MiniMax of conducting "industrial-scale campaigns" to siphon knowledge from its models through a technique known as "distillation." Model distillation is a deep learning technique in which a large "teacher" model can be made to transfer its learned patterns to a smaller "student" model. It's a form of data compression that ideally produces a smaller, more efficient model without significant performance loss. Useful for explainable AI - shining a light into black box algorithms - it's also a handy way to copy a model. Anthropic, like its leading rivals, has been sued for alleged copyright infringement or unauthorized web scraping several times. Claims include: Bartz v. Anthropic; Carreyrou v. Anthropic; Concord Music Group, Inc. v. Anthropic; MacKinnon v. Anthropic (Canada); and Reddit, Inc. v. Anthropic. While courts mull whether training AI models on copyrighted material without consent violates the law, Anthropic and its peers worry that Chinese companies are ripping them off. DeepSeek, Moonshot AI, and MiniMax, the company said, have been using networks of fraudulent accounts to probe Claude models on a vast scale. "These labs generated over 16 million exchanges with Claude through approximately 24,000 fraudulent accounts, in violation of our terms of service and regional access restrictions," the company said in a blog post. These attacks take the form of slightly varied prompts designed to elicit responses that can be used to train models. 
Anthropic refers to the distributed infrastructure used for model distillation as "hydra clusters," though it failed to establish that the underlying technology differs enough from commercial proxy services to warrant the menacing multi-headed mythological reference. Anthropic expressed concern that the unwelcome distillation of its models by foreign AI labs will enable authoritarian regimes to undertake cyberattacks, disinformation campaigns, and mass surveillance. It's unclear how this would be any different from the world we now inhabit. But the AI biz suggests that things would be worse still if these developers of distilled models open source their work. "If distilled models are open-sourced, this risk multiplies as these capabilities spread freely beyond any single government's control," the company said. Two weeks ago, Anthropic's arch-competitor OpenAI sent a memo [PDF] to the US House Select Committee on China warning that adversaries in China and to a lesser extent Russia have ramped up their efforts to raid frontier models. "For example, Chinese actors have moved beyond Chain-of-Thought (CoT) extraction toward more sophisticated, multi-stage pipelines that blend synthetic-data generation, large-scale data cleaning, and reinforcement-style preference optimization," OpenAI said. "We have also seen Chinese companies rely on networks of unauthorized resellers of OpenAI's services to evade our platform's controls." OpenAI specifically cited the predation of DeepSeek, warning that its models "lack meaningful guardrails against dangerous outputs in high-risk domains like chemistry and biology, or offer limited protections for copyrighted material." (We note that OpenAI is the defendant in "In re: OpenAI, Inc. Copyright Infringement Litigation," a consolidation of 16 copyright lawsuits.) OpenAI's memo calls for the US to protect the national AI industry and Anthropic's blog post follows suit, arguing that foreign model makers threaten national security. 
"Illicitly distilled models lack necessary safeguards, creating significant national security risks," the company said. "Anthropic and other US companies build systems that prevent state and non-state actors from using AI to, for example, develop bioweapons or carry out malicious cyber activities. Models built through illicit distillation are unlikely to retain those safeguards, meaning that dangerous capabilities can proliferate with many protections stripped out entirely." According to the Forecasting Research Institute's fifth wave report of predictions by the Longitudinal Expert AI Panel (LEAP), released on Monday, "Experts and superforecasters expect the performance gap between US and Chinese AI models to narrow by 2031, with parity anticipated by 2041." DeepSeek, Moonshot, and MiniMax did not immediately respond to requests for comment. ®

[7]

Anthropic Says Chinese AI Firms Used 16 Million Claude Queries to Copy Model

Anthropic on Monday said it identified "industrial-scale campaigns" mounted by three artificial intelligence (AI) companies, DeepSeek, Moonshot AI, and MiniMax, to illegally extract Claude's capabilities to improve their own models. The distillation attacks generated over 16 million exchanges with its large language model (LLM) through about 24,000 fraudulent accounts in violation of its terms of service and regional access restrictions. All three companies are based in China, where the use of its services is prohibited due to "legal, regulatory, and security risks." Distillation refers to a technique where a less capable model is trained on the outputs generated by a stronger AI system. While distillation is a legitimate way for companies to produce smaller, cheaper versions of their own frontier models, it's illegal for competitors to leverage it to acquire such capabilities from other AI companies at a fraction of the time and cost it would take to develop them on their own. "Illicitly distilled models lack necessary safeguards, creating significant national security risks," Anthropic said. "Models built through illicit distillation are unlikely to retain those safeguards, meaning that dangerous capabilities can proliferate with many protections stripped out entirely." Foreign AI companies that distill American models can weaponize these unprotected capabilities to facilitate malicious activities, cyber-related or otherwise, thereby serving as a foundation for military, intelligence, and surveillance systems that authoritarian governments can deploy for offensive cyber operations, disinformation campaigns, and mass surveillance. The campaigns detailed by the AI upstart entail the use of fraudulent accounts and commercial proxy services to access Claude at scale while avoiding detection. 
Anthropic said it was able to attribute each campaign to a specific AI lab based on request metadata, IP address correlation, and infrastructure indicators. The details of the three distillation attacks are below - "The volume, structure, and focus of the prompts were distinct from normal usage patterns, reflecting deliberate capability extraction rather than legitimate use," Anthropic added. "Each campaign targeted Claude's most differentiated capabilities: agentic reasoning, tool use, and coding." The company also pointed out that the attacks relied on commercial proxy services that resell access to Claude and other frontier AI models at scale. These services are powered by "hydra cluster" architectures that contain massive networks of fraudulent accounts to distribute traffic across their API. The access is then used to generate large volumes of carefully crafted prompts that are designed to extract specific capabilities from the model for the purpose of training their own models by harvesting the high-quality responses. "The breadth of these networks means that there are no single points of failure," Anthropic said. "When one account is banned, a new one takes its place. In one case, a single proxy network managed more than 20,000 fraudulent accounts simultaneously, mixing distillation traffic with unrelated customer requests to make detection harder." To counter the threat, Anthropic said it has built several classifiers and behavioral fingerprinting systems to identify suspicious distillation attack patterns in API traffic, strengthened verification for educational accounts, security research programs, and startup organizations, and implemented enhanced safeguards to reduce the efficacy of model outputs for illicit distillation. 
The disclosure comes weeks after Google Threat Intelligence Group (GTIG) disclosed it identified and disrupted distillation and model extraction attacks aimed at Gemini's reasoning capabilities through more than 100,000 prompts. "Model extraction and distillation attacks do not typically represent a risk to average users, as they do not threaten the confidentiality, availability, or integrity of AI services," Google said earlier this month. "Instead, the risk is concentrated among model developers and service providers."

[8]

Chinese companies used Claude to improve own models, Anthropic says

Feb 23 (Reuters) - Three Chinese companies have tried to use Claude to improperly obtain capabilities to improve their own models, the chatbot's creator Anthropic said in a blog post on Monday. DeepSeek, Moonshot and MiniMax created more than 16 million interactions with Claude using roughly 24,000 fake accounts, in violation of Anthropic's terms of service and regional access restrictions. They used a technique called "distillation," which involves having an older, more established and powerful AI model evaluate the quality of the answers coming out of a newer model, effectively transferring the older model's learnings, Anthropic said. "These campaigns are growing in intensity and sophistication. The window to act is narrow, and the threat extends beyond any single company or region." DeepSeek AI and MiniMax did not immediately respond to requests for comment. Reporting by Juby Babu in Mexico City; Editing by Alan Barona

[9]

Anthropic joins OpenAI in flagging 'industrial-scale' distillation campaigns by Chinese AI firms

Anthropic on Monday accused three Chinese AI enterprises of engaging in coordinated campaigns to extract information from its model, making it the latest American tech firm to level such claims after OpenAI issued similar complaints. According to a statement from Anthropic, DeepSeek, Moonshot AI and MiniMax -- the three firms in question -- engaged in concerted "distillation attack" campaigns, flooding Claude with large volumes of specially-crafted prompts to train proprietary models. Through distillation, smaller AI models are able to mimic the performance of larger, pre-trained models by extracting knowledge from the better-trained model, a technique particularly useful for smaller teams with fewer resources. Despite Anthropic's service restrictions preventing commercial access to Claude in China, the three firms allegedly engaged commercial proxy services to sidestep Anthropic's restrictions, enabling access to networks running tens of thousands of Claude accounts simultaneously. "Once access is secured, the labs generate large volumes of carefully crafted prompts designed to extract specific capabilities from the model," Anthropic said in the statement. Claude's responses to these prompts are farmed en masse either for direct training of the Chinese models, or to run a process known as reinforcement learning, a data-intensive process where AI models learn decision-making through trial and error, in the absence of human guidance. Anthropic estimated that the three Chinese firms were collectively able to generate over 16 million exchanges with Claude from around 24,000 fraudulently created accounts. Of the three enterprises, Anthropic found MiniMax to have driven the most traffic, with over 13 million exchanges. DeepSeek, Moonshot AI and MiniMax have yet to respond to a request for comment from CNBC.
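
The teacher-student idea behind distillation can be sketched in a few lines. The example below shows one common textbook formulation, in which a student is penalized for diverging from the teacher's temperature-softened output distribution. It is an illustration under stated assumptions rather than the method any of the named labs is alleged to have used: extraction through a chat API exposes only generated text, so a student in that setting would more plausibly be fine-tuned on collected prompt and response pairs. The function names and the logit values are hypothetical.

```python
import math


def softmax(logits, temperature=1.0):
    """Convert raw scores into probabilities, softened by the temperature."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def distillation_loss(teacher_logits, student_logits, temperature=2.0):
    """KL(teacher || student) over temperature-softened distributions."""
    p = softmax(teacher_logits, temperature)
    q = softmax(student_logits, temperature)
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)


# Hypothetical next-token scores over a three-word vocabulary.
teacher = [4.0, 1.0, 0.5]
untrained_student = [0.0, 0.0, 0.0]
trained_student = [3.8, 1.1, 0.4]

print(distillation_loss(teacher, untrained_student))
print(distillation_loss(teacher, trained_student))
```

The loss shrinks as the student's distribution approaches the teacher's, which is the signal a training loop would minimize.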

[10]

Anthropic accuses three Chinese AI labs of abusing Claude to improve their own models

Anthropic is issuing a call to action against AI "distillation attacks," after accusing three AI companies of misusing its Claude chatbot. On its website, Anthropic claimed that DeepSeek, Moonshot and MiniMax have been conducting "industrial-scale campaigns...to illicitly extract Claude's capabilities to improve their own models." Distillation in the AI world refers to when less capable models lean on the responses of more powerful ones to train themselves. While distillation isn't a bad thing across the board, Anthropic said that these types of attacks can be used in a more nefarious way. According to Anthropic, these three Chinese AI firms were responsible for more than "16 million exchanges with Claude through approximately 24,000 fraudulent accounts." From Anthropic's perspective, these competing companies were using Claude as a shortcut to develop more advanced AI models, which could also lead to circumventing certain safeguards. Anthropic said in its post that it was able to link each of these distilling attack campaigns to the specific companies with "high confidence" thanks to IP address correlation, metadata requests and infrastructure indicators, along with corroborating with others in the AI industry who have noticed similar behaviors. Early last year, OpenAI made similar claims of rival firms distilling its models and banned suspected accounts in response. As for Anthropic, the company behind Claude said it would upgrade its system to make distillation attacks harder to do and easier to identify. While Anthropic is pointing fingers at these other firms, it's also facing a lawsuit from music publishers who accused the AI company of using illegal copies of songs to train its Claude chatbot.

[11]

Anthropic Accuses 3 Chinese Companies of Harvesting Its Data

The San Francisco artificial intelligence start-up Anthropic has accused three Chinese companies of improperly harvesting large amounts of data from its A.I. technologies in an effort to accelerate the development of their own systems. Anthropic said in a blog post that DeepSeek, Moonshot and MiniMax -- three prominent Chinese start-ups -- used about 24,000 fraudulent accounts to generate over 16 million conversations with its Claude chatbot that could be used to teach skills to their own chatbots. Using data from one A.I. system to train another -- a process called distillation -- is common in A.I. work. But Anthropic's terms of service forbid anyone from surreptitiously harvesting data for distillation and do not allow its technologies to be used in China. OpenAI, Anthropic's primary rival, has also accused Chinese companies of lifting large amounts of data from its chatbot, ChatGPT, for similar purposes. In a memo sent to the House Select Committee on China last week, OpenAI said that DeepSeek and other Chinese start-ups were using new and "obfuscated" distillation methods as part of their "ongoing efforts to free-ride" on technologies developed by OpenAI and other U.S. companies. Like OpenAI, Anthropic said the practice was a national security risk, adding that it could allow China to build A.I. technologies to create bioweapons or tools for mass surveillance. The start-up has guardrails on its technologies designed to prevent them from being used in those ways, but the guardrails can be stripped away during distillation. Anthropic called on government officials and other A.I. companies to help prevent Chinese companies from distilling American models. "These campaigns are growing in intensity and sophistication," Anthropic said in its post. "The window to act is narrow, and the threat extends beyond any single company or region. Addressing it will require rapid, coordinated action among industry players, policymakers and the global A.I. community."
DeepSeek, Moonshot and MiniMax did not immediately respond to requests for comment. Anthropic published its post amid a tussle with the Defense Department over the Pentagon's use of its technologies. The Pentagon has approved Anthropic's technologies for use with classified tasks, but it is threatening to sever ties with the start-up because Anthropic does not want its technologies used in situations involving autonomous weapons or domestic surveillance. Last year, DeepSeek spooked Silicon Valley tech companies and sent the U.S. financial markets into a tailspin after releasing A.I. technologies that matched the performance of anything else on the market. Until then, the prevailing wisdom in Silicon Valley had been that the most powerful systems could not be built without billions of dollars in specialized computer chips. But DeepSeek said it had created its technologies using far fewer resources. Like U.S. companies, DeepSeek, Moonshot and MiniMax build their A.I. technologies using computer code and data corralled from across the internet. A.I. companies across the globe lean heavily on a practice called open sourcing, which means they freely share the code that underpins their technologies and reuse code shared by others. They see this as a way of accelerating technological development. A.I. companies also need enormous amounts of online data to train their A.I. systems. The leading systems learn their skills by analyzing just about all of the text on the internet. Distillation is often used to train new systems. This is often allowed by open source technologies. But if a company takes data from proprietary technology, the practice may be legally problematic. Anthropic, which is now valued at $380 billion, is facing multiple lawsuits accusing it of illegally using copyrighted internet data to train its systems.
In September as part of a landmark legal settlement, Anthropic agreed to pay $1.5 billion to a group of authors and publishers after a judge ruled it had illegally downloaded and stored millions of copyrighted books. It was the largest payout in the history of U.S. copyright cases. OpenAI and other A.I. companies face similar suits, including a lawsuit brought by The New York Times against OpenAI and its partner Microsoft. That suit contends that millions of articles published by The Times were used to train automated chatbots that now compete with the news outlet as a source of reliable information. Both OpenAI and Microsoft deny the claims.

[12]

Anthropic Says DeepSeek, MiniMax Distilled AI Models for Gains

Anthropic said it has built better detection and verification systems to mitigate the risk of distillation campaigns and is sharing information with other AI labs to help address the issue. Anthropic PBC said three leading artificial intelligence developers in China worked to "illicitly extract" results from its AI models to bolster the capabilities of rival products, adding to growing concerns in the US about Chinese firms improperly gaining an edge. The San Francisco-based company said DeepSeek, MiniMax Group Inc. and Moonshot violated its terms of service by generating more than 16 million exchanges in total with its Claude models using thousands of fraudulent accounts. With this tactic, known as distillation, Chinese AI labs can rapidly improve their models by training them on the outputs from more powerful systems, Anthropic said. Anthropic rival OpenAI warned US lawmakers earlier this month that DeepSeek had used distillation techniques as part of "ongoing efforts to free-ride on the capabilities developed by OpenAI and other US frontier labs." Other US officials, including White House AI czar David Sacks, have also expressed concerns that DeepSeek used this method. "These campaigns are growing in intensity and sophistication," Anthropic said in a blog post on Monday. "The window to act is narrow, and the threat extends beyond any single company or region." Representatives for DeepSeek, MiniMax and Moonshot did not respond to requests for comment after normal business hours. DeepSeek upended the AI landscape a year ago with the release of R1, a competitive model purportedly built at a fraction of the cost of leading US alternatives. Since then, China has flooded the market with a wave of affordable text, video and image models. That has threatened to undercut adoption of AI software from US firms, making it harder to monetize the technology. MiniMax made its public market debut in January. 
Moonshot, meanwhile, is targeting a $10 billion valuation in a new financing round. Anthropic said the three firms each used "fraudulent accounts and proxy services to access Claude at scale while evading detection." Proxy networks can be used to provide a cloak of invisibility on the internet, allowing users in prohibited markets to make large numbers of accounts for various online services. DeepSeek generated more than 150,000 exchanges with Claude, and MiniMax generated more than 13 million, Anthropic said, with a focus on reconstructing Claude's more advanced capabilities. The Claude maker said it was able to pinpoint the three companies with "high confidence" based on information on internet protocol addresses, metadata and "corroboration from industry partners who observed the same actors and behaviors on their platforms." Anthropic said it has built better detection and verification systems to mitigate the risk of distillation campaigns. It's also sharing information with other AI labs to help address the issue. "No company can solve this alone," the company said. "Distillation attacks at this scale require a coordinated response across the AI industry, cloud providers and policymakers."

[13]

Anthropic Says Chinese AI Companies Improved Models By 'Illicitly' Copying Its Capabilities

Did you know that there's a way of using outputs from LLMs that may involve no hacking -- essentially just taking large quantities of text and repurposing it as training data -- that upsets AI companies a great deal? In a blog post on Monday, Anthropic said that the China-based AI companies DeepSeek, Moonshot, and MiniMax broke Anthropic's rules in order to "illicitly extract" the capabilities of its signature AI model, Claude. Distillation is a normal practice used by AI companies in which a "teacher" model is prompted with specifically tailored inputs, and the answers provided allow a "student" model to rapidly improve. For example, Anthropic writes, "frontier AI labs routinely distill their own models to create smaller, cheaper versions for their customers." So to distinguish the actions Anthropic is complaining about from uses of distillation perceived as legitimate, these actions are referred to as "distillation attacks." Are distillation attacks criminal offenses in the eyes of Anthropic? No such thing seems to be alleged here, but these acts were carried out, Anthropic says, "in violation of our terms of service and regional access restrictions." Anthropic, which is itself dealing with the threat of being labeled a "supply chain risk" by the Pentagon, strikes a patriotic note in the post. Circumventing regional use restrictions and breaking rules allows "foreign labs, including those subject to the control of the Chinese Communist Party, to close the competitive advantage that export controls are designed to preserve through other means," it claims. Among the three China-based companies mentioned, Shanghai-based MiniMax, creator of the viral character chat app Talkie, offended Anthropic the most with the scale of its distillation effort: over 13 million alleged exchanges. That's compared to Moonshot with over 3.4 million, and the most famous company named in the post, DeepSeek, with only an estimated 150,000.
OpenAI, Anthropic's main competitor, is also mad about distillation from at least one Chinese AI company, having sent a memo to the House of Representatives earlier this month, accusing DeepSeek of "ongoing efforts to free-ride on the capabilities developed by OpenAI and other U.S. frontier labs." DeepSeek is expected to release its latest flagship model, DeepSeek V4, any day now, and CNBC has warned that this release could cause chaos on Wall Street, at a time when there's already enough AI-related chaos on Wall Street to go around.

[14]

Anthropic says DeepSeek, other Chinese AI firms extracted Claude data

Anthropic has accused three major Chinese AI firms of using fraudulent accounts to extract data from its Claude models in an effort to boost rival systems. The San Francisco-based company said DeepSeek, MiniMax Group Inc. and Moonshot collectively generated more than 16 million exchanges with Claude by creating thousands of fake accounts and using proxy services to avoid detection. The tactic, known as distillation, allows developers to train their own models on the outputs of more advanced systems.

[15]

Anthropic exposes how Chinese AI firms try to steal LLM tech

Anthropic is accusing three Chinese artificial intelligence companies of "industrial-scale campaigns" to "illicitly extract" its technology using distillation attacks. Anthropic says these companies created 24,000 fraudulent accounts to hide these efforts. In a blog post detailing the attacks, Anthropic named three AI firms, including DeepSeek, the maker of the popular DeepSeek AI models. Anthropic explicitly framed the attack as an issue of national security. "We have identified industrial-scale campaigns by three AI laboratories -- DeepSeek, Moonshot, and MiniMax -- to illicitly extract Claude's capabilities to improve their own models," reads the blog post. "These labs generated over 16 million exchanges with Claude through approximately 24,000 fraudulent accounts, in violation of our terms of service and regional access restrictions." In January, OpenAI also accused DeepSeek of engaging in distillation attacks, effectively stealing its technology. At the time, many people reacted not with sympathy, but with mocking, as OpenAI and other AI companies have claimed they have the absolute right to train their models on copyrighted works without permission or payment. Typically, AI industry supporters say they have no choice but to train on copyrighted works because Chinese competitors are sure to ignore copyright laws anyway. "You can't be expected to have a successful AI program when every single article, book, or anything else that you've read or studied, you're supposed to pay for," President Donald Trump said at an AI event in July 2025. "When a person reads a book or an article, you've gained great knowledge. That does not mean that you're violating copyright laws or have to make deals with every content provider." He also added, "China's not doing it."
That puts AI companies in the awkward position of claiming their intellectual property is off-limits for model training, while also engaging in similar behavior themselves. Distillation is a common training technique for large-language models; however, it can also be used to effectively reverse-engineer some aspects of the technology. In distillation, AI researchers run variations of the same prompt repeatedly to see how a particular model responds. "Distillation is a widely used and legitimate training method. For example, frontier AI labs routinely distill their own models to create smaller, cheaper versions for their customers. But distillation can also be used for illicit purposes: competitors can use it to acquire powerful capabilities from other labs in a fraction of the time, and at a fraction of the cost, that it would take to develop them independently." Chinese companies have a reputation for flagrantly ignoring intellectual property treaties and copyright laws, and reverse-engineering technology from Western companies. However, while Anthropic says the distillation attacks it uncovered violated its terms of service, it's not clear that they violated any international laws, or what remedy Anthropic has besides suspending the violating accounts. To prevent attacks like this, Anthropic called for cooperation between AI companies, government agencies, and other stakeholders. AI companies like Anthropic, xAI, Meta, and OpenAI are in the midst of one of the largest spending booms ever seen, with tens of billions of dollars being poured into AI infrastructure, data centers, and research and development. If rival foreign AI companies can cheaply recreate their LLM technology using distillation, they would clearly have an advantage over their U.S. rivals. "These campaigns are growing in intensity and sophistication," the blog post reads. "The window to act is narrow, and the threat extends beyond any single company or region. 
Addressing it will require rapid, coordinated action among industry players, policymakers, and the global AI community." Mashable reached out to Anthropic with questions about the distillation attacks, and we'll update this article if we receive a response.

[16]

Anthropic says DeepSeek, Moonshot, and MiniMax used 24,000 fake accounts to rip off Claude

Anthropic dropped a bombshell on the artificial intelligence industry Monday, publicly accusing three prominent Chinese AI laboratories -- DeepSeek, Moonshot AI, and MiniMax -- of orchestrating coordinated, industrial-scale campaigns to siphon capabilities from its Claude models using tens of thousands of fraudulent accounts. The San Francisco-based company said the three labs collectively generated more than 16 million exchanges with Claude through approximately 24,000 fake accounts, all in violation of Anthropic's terms of service and regional access restrictions. The campaigns, Anthropic said, are the most concrete and detailed public evidence to date of a practice that has haunted Silicon Valley for months: foreign competitors systematically using a technique called distillation to leapfrog years of research and billions of dollars in investment. "These campaigns are growing in intensity and sophistication," Anthropic wrote in a technical blog post published Monday. "The window to act is narrow, and the threat extends beyond any single company or region. Addressing it will require rapid, coordinated action among industry players, policymakers, and the global AI community." The disclosure marks a dramatic escalation in the simmering tensions between American and Chinese AI developers -- and it arrives at a moment when Washington is actively debating whether to tighten or loosen export controls on the advanced chips that power AI training. Anthropic, led by CEO Dario Amodei, has been among the most vocal advocates for restricting chip sales to China, and the company explicitly connected Monday's revelations to that policy fight. To understand what Anthropic alleges, it helps to understand what distillation actually is -- and how it evolved from an academic curiosity into the most contentious issue in the global AI race. 
At its core, distillation is a process of extracting knowledge from a larger, more powerful AI model -- the "teacher" -- to create a smaller, more efficient one -- the "student." The student model learns not from raw data, but from the teacher's outputs: its answers, reasoning patterns, and behaviors. Done correctly, the student can achieve performance remarkably close to the teacher's while requiring a fraction of the compute to train. As Anthropic itself acknowledged, distillation is "a widely used and legitimate training method." Frontier AI labs, including Anthropic, routinely distill their own models to create smaller, cheaper versions for customers. But the same technique can be weaponized. A competitor can pose as a legitimate customer, bombard a frontier model with carefully crafted prompts, collect the outputs, and use those outputs to train a rival system -- capturing capabilities that took years and hundreds of millions of dollars to develop. The technique burst into public consciousness in January 2025 when DeepSeek released its R1 reasoning model, which appeared to match or approach the performance of leading American models at dramatically lower cost. Databricks CEO Ali Ghodsi captured the industry's anxiety at the time, telling CNBC: "This distillation technique is just so extremely powerful and so extremely cheap, and it's just available to anyone." He predicted the technique would usher in an era of intense competition for large language models. That prediction proved prescient. In the weeks following DeepSeek's release, researchers at UC Berkeley said they recreated OpenAI's reasoning model for just $450 in 19 hours. Researchers at Stanford and the University of Washington followed with their own version built in 26 minutes for under $50 in compute credits. The startup Hugging Face replicated OpenAI's Deep Research feature as a 24-hour coding challenge. 
DeepSeek itself openly released a family of distilled models on Hugging Face -- including versions built on top of Qwen and Llama architectures -- under the permissive MIT license, with the model card explicitly stating that the DeepSeek-R1 series supports commercial use and allows for any modifications and derivative works, "including, but not limited to, distillation for training other LLMs." But what Anthropic described Monday goes far beyond academic replication or open-source experimentation. The company detailed what it characterized as deliberate, covert, and large-scale intellectual property extraction by well-resourced commercial laboratories operating under the jurisdiction of the Chinese government. Anthropic attributed each campaign "with high confidence" through IP address correlation, request metadata, infrastructure indicators, and corroboration from unnamed industry partners who observed the same actors on their own platforms. Each campaign specifically targeted what Anthropic described as Claude's most differentiated capabilities: agentic reasoning, tool use, and coding. DeepSeek, the company that ignited the distillation debate, conducted what Anthropic described as the most technically sophisticated of the three operations, generating over 150,000 exchanges with Claude. Anthropic said DeepSeek's prompts targeted reasoning capabilities, rubric-based grading tasks designed to make Claude function as a reward model for reinforcement learning, and -- in a detail likely to draw particular political attention -- the creation of "censorship-safe alternatives to policy sensitive queries." Anthropic alleged that DeepSeek "generated synchronized traffic across accounts" with "identical patterns, shared payment methods, and coordinated timing" that suggested load balancing to maximize throughput while evading detection. 
In one particularly notable technique, Anthropic said DeepSeek's prompts "asked Claude to imagine and articulate the internal reasoning behind a completed response and write it out step by step -- effectively generating chain-of-thought training data at scale." The company also alleged it observed tasks in which Claude was used to generate alternatives to politically sensitive queries about "dissidents, party leaders, or authoritarianism," likely to train DeepSeek's own models to steer conversations away from censored topics. Anthropic said it was able to trace these accounts to specific researchers at the lab. Moonshot AI, the Beijing-based creator of the Kimi models, ran the second-largest operation by volume at over 3.4 million exchanges. Anthropic said Moonshot targeted agentic reasoning and tool use, coding and data analysis, computer-use agent development, and computer vision. The company employed "hundreds of fraudulent accounts spanning multiple access pathways," making the campaign harder to detect as a coordinated operation. Anthropic attributed the campaign through request metadata that "matched the public profiles of senior Moonshot staff." In a later phase, Anthropic said, Moonshot adopted a more targeted approach, "attempting to extract and reconstruct Claude's reasoning traces." MiniMax, the least publicly known of the three but the most prolific by volume, generated over 13 million exchanges -- more than three-quarters of the total. Anthropic said MiniMax's campaign focused on agentic coding, tool use, and orchestration. The company said it detected MiniMax's campaign while it was still active, "before MiniMax released the model it was training," giving Anthropic "unprecedented visibility into the life cycle of distillation attacks, from data generation through to model launch." 
In a detail that underscores the urgency and opportunism Anthropic alleges, the company said that when it released a new model during MiniMax's active campaign, MiniMax "pivoted within 24 hours, redirecting nearly half their traffic to capture capabilities from our latest system." Anthropic does not currently offer commercial access to Claude in China, a policy it maintains for national security reasons. So how did these labs access the models at all? The answer, Anthropic said, lies in commercial proxy services that resell access to Claude and other frontier AI models at scale. Anthropic described these services as running what it calls "hydra cluster" architectures -- sprawling networks of fraudulent accounts that distribute traffic across Anthropic's API and third-party cloud platforms. "The breadth of these networks means that there are no single points of failure," Anthropic wrote. "When one account is banned, a new one takes its place." In one case, Anthropic said, a single proxy network managed more than 20,000 fraudulent accounts simultaneously, mixing distillation traffic with unrelated customer requests to make detection harder. The description suggests a mature and well-resourced infrastructure ecosystem dedicated to circumventing access controls -- one that may serve many more clients than just the three labs Anthropic named. Anthropic did not treat this as a mere terms-of-service violation. The company embedded its technical disclosure within an explicit national security argument, warning that "illicitly distilled models lack necessary safeguards, creating significant national security risks." The company argued that models built through illicit distillation are "unlikely to retain" the safety guardrails that American companies build into their systems -- protections designed to prevent AI from being used to develop bioweapons, carry out cyberattacks, or enable mass surveillance. 
"Foreign labs that distill American models can then feed these unprotected capabilities into military, intelligence, and surveillance systems," Anthropic wrote, "enabling authoritarian governments to deploy frontier AI for offensive cyber operations, disinformation campaigns, and mass surveillance." This framing directly connects to the chip export control debate that Amodei has made a centerpiece of his public advocacy. In a detailed essay published in January 2025, Amodei argued that export controls are "the most important determinant of whether we end up in a unipolar or bipolar world" -- a world where either only the U.S. and its allies possess the most powerful AI, or one where China achieves parity. He specifically noted at the time that he was "not taking any position on reports of distillation from Western models" and would "just take DeepSeek at their word that they trained it the way they said in the paper." Monday's disclosure is a sharp departure from that earlier restraint. Anthropic now argues that distillation attacks "undermine" export controls "by allowing foreign labs, including those subject to the control of the Chinese Communist Party, to close the competitive advantage that export controls are designed to preserve through other means." The company went further, asserting that "without visibility into these attacks, the apparently rapid advancements made by these labs are incorrectly taken as evidence that export controls are ineffective." In other words, Anthropic is arguing that what some observers interpreted as proof that Chinese labs can innovate around chip restrictions was actually, in significant part, the result of stealing American capabilities. Anthropic's decision to frame this as a national security issue rather than a legal dispute may reflect the difficult reality that intellectual property law offers limited recourse against distillation. 
As a March 2025 analysis by the law firm Winston & Strawn noted, "the legal landscape surrounding AI distillation is unclear and evolving." The firm's attorneys observed that proving a copyright claim in this context would be challenging, since it remains unclear whether the outputs of AI models qualify as copyrightable creative expression. The U.S. Copyright Office affirmed in January 2025 that copyright protection requires human authorship, and that "mere provision of prompts does not render the outputs copyrightable." The legal picture is further complicated by the way frontier labs structure output ownership. OpenAI's terms of use, for instance, assign ownership of model outputs to the user -- meaning that even if a company can prove extraction occurred, it may not hold copyrights over the extracted data. Winston & Strawn noted that this dynamic means "even if OpenAI can present enough evidence to show that DeepSeek extracted data from its models, OpenAI likely does not have copyrights over the data." The same logic would almost certainly apply to Anthropic's outputs. Contract law may offer a more promising avenue. Anthropic's terms of service prohibit the kind of systematic extraction the company describes, and violation of those terms is a more straightforward legal claim than copyright infringement. But enforcing contractual terms against entities operating through proxy services and fraudulent accounts in a foreign jurisdiction presents its own formidable challenges. This may explain why Anthropic chose the national security frame over a purely legal one. By positioning distillation attacks as threats to export control regimes and democratic security rather than as intellectual property disputes, Anthropic appeals to policymakers and regulators who have tools -- sanctions, entity list designations, enhanced export restrictions -- that go far beyond what civil litigation could achieve. Anthropic outlined a multipronged defensive response. 
The company said it has built classifiers and behavioral fingerprinting systems designed to identify distillation attack patterns in API traffic, including detection of chain-of-thought elicitation used to construct reasoning training data. It is sharing technical indicators with other AI labs, cloud providers, and relevant authorities to build what it described as a more holistic picture of the distillation landscape. The company has also strengthened verification for educational accounts, security research programs, and startup organizations -- the pathways most commonly exploited for setting up fraudulent accounts -- and is developing model-level safeguards designed to reduce the usefulness of outputs for illicit distillation without degrading the experience for legitimate customers. But the company acknowledged that "no company can solve this alone," calling for coordinated action across the industry, cloud providers, and policymakers. The disclosure is likely to reverberate through multiple ongoing policy debates. In Congress, the bipartisan No DeepSeek on Government Devices Act has already been introduced. Federal agencies including NASA have banned DeepSeek from employee devices. And the broader question of chip export controls -- which the Trump administration has been weighing amid competing pressures from Nvidia and national security hawks -- now has a new and vivid data point. For the AI industry's technical decision-makers, the implications are immediate and practical. If Anthropic's account is accurate, the proxy infrastructure enabling these attacks is vast, sophisticated, and adaptable -- and it is not limited to targeting a single company. Every frontier AI lab with an API is a potential target. The era of treating model access as a simple commercial transaction may be coming to an end, replaced by one in which API security is as strategically important as the model weights themselves. 
Anthropic has now put names, numbers, and forensic detail behind accusations that the industry had only whispered about for months. Whether that evidence galvanizes the coordinated response the company is calling for -- or simply accelerates an arms race between distillers and defenders -- may depend on a question no classifier can answer: whether Washington sees this as an act of espionage or just the cost of doing business in an era when intelligence itself has become a commodity.

[17]

US AI giant accuses Chinese rivals of mass data theft

Anthropic says three Chinese firms used 'distillation' technique to extract information from its Claude chatbot

US artificial intelligence company Anthropic said on Monday it had uncovered campaigns by three Chinese AI firms to illicitly extract capabilities from its Claude chatbot, in what it described as industrial-scale intellectual property theft. OpenAI leveled similar charges last month. Anthropic said DeepSeek, Moonshot AI, and MiniMax used a technique known as "distillation" - using outputs from a more powerful AI system to rapidly boost the performance of a less capable one. "These campaigns are growing in intensity and sophistication," the company said in a statement. "The window to act is narrow." Distillation is a common practice within AI development, often used by companies to create cheaper, smaller versions of their own models. The practice grabbed headlines a year ago when a low-cost generative AI model released by DeepSeek performed at a similar level to ChatGPT and other top American chatbots, upending assumptions of US dominance in the sensitive sector. Anthropic said the companies achieved their ends through approximately 16 million exchanges with its Claude model and 24,000 fake accounts. These allowed the three labs to siphon off capabilities they had not independently developed, at a fraction of the cost - and in so doing circumvented export controls on powerful US technology intended to preserve American dominance in AI. The company argued the practice posed national security risks, saying models built through illicit distillation are unlikely to retain safety guardrails designed to prevent misuse - such as restrictions on helping develop bioweapons or enabling cyberattacks. 
Anthropic's arch-rival OpenAI, creator of ChatGPT, made similar accusations to US lawmakers earlier this month, saying Chinese companies were using the technique amid "ongoing efforts to free-ride on the capabilities developed by OpenAI and other US frontier labs". Anthropic said MiniMax ran the largest operation, generating more than 13 million exchanges. Each campaign concentrated heavily on coding, agentic reasoning, and tool use - areas where Claude is considered a leader. To circumvent Anthropic's ban on commercial access from China, the labs allegedly routed traffic through proxy services that managed the vast networks of fraudulent accounts. Anthropic called for coordinated industry and government responses to address what it said no single company could tackle alone.
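
For a rough sense of scale, the figures reported above can be combined in a short back-of-envelope calculation. This is only a sketch: the inputs are the rounded figures quoted in the article, the variable names are illustrative, and the results should be read as estimates rather than numbers Anthropic itself published.

```python
# Rounded figures as reported in the article; treat the results as estimates.
total_exchanges = 16_000_000    # "approximately 16 million exchanges"
fake_accounts = 24_000          # "24,000 fake accounts"
minimax_exchanges = 13_000_000  # "more than 13 million exchanges"

# Average number of exchanges per fraudulent account.
avg_per_account = total_exchanges / fake_accounts

# Fraction of all reported exchanges attributed to MiniMax.
minimax_share = minimax_exchanges / total_exchanges

print(f"Average exchanges per account: about {avg_per_account:,.0f}")
print(f"MiniMax share of reported exchanges: about {minimax_share:.0%}")
```

On these rounded inputs, each account averaged several hundred exchanges, and MiniMax alone accounted for roughly four-fifths of the reported traffic.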

[18]

Anthropic claims 3 Chinese companies ripped it off, using its AI tools to train their models: 'How the turn tables' | Fortune

Anthropic has accused three prominent Chinese artificial intelligence firms of using its Claude chatbot on a massive scale to secretly train rival models, an unexpected development in a years-long global debate over where fraud ends and industry standard practice begins. In a blog post on Monday, San Francisco-based Anthropic alleged that Chinese labs DeepSeek, Moonshot AI, and MiniMax violated corporate law by interacting with Claude, its market-reshaping vibe-coding tool. "We have identified industrial-scale campaigns by three AI laboratories -- DeepSeek, Moonshot, and MiniMax -- to illicitly extract Claude's capabilities to improve their own models," the company said. "These labs generated over 16 million exchanges with Claude through approximately 24,000 fraudulent accounts, in violation of our terms of service and regional access restrictions." According to Anthropic, the Chinese companies relied on a technique known as "distillation," in which one model is trained on the outputs of another, often a more capable system. The campaigns allegedly focused on areas that Anthropic considers key differentiators for Claude, including complex reasoning, coding assistance, and tool use. Anthropic argues that while distillation is a "widely used and legitimate training method," the Chinese firms' use of it in this manner may have been "for illicit purposes." Using sprawling networks of fake accounts to replicate a competitor's proprietary model violates its terms of service and undermines U.S. export controls aimed at constraining China's access to cutting‑edge AI, Anthropic said, urging "rapid, coordinated action among industry players, policymakers, and the global AI community." Though not quite distillation, Anthropic itself was recently accused of copyright violations by thousands of authors, who alleged that it downloaded books in bulk from shadow libraries to train its AI models rather than buying copies and scanning them itself. 
In a historic move, Anthropic settled that lawsuit for $1.5 billion in September 2025, paying authors around $3,000 per book for roughly 500,000 works. The company claims the three labs bypassed geofencing and business restrictions that limit Claude's commercial availability in China by routing traffic through proxy services that resell access to major Western AI models. One such "hydra cluster," Anthropic said, operated tens of thousands of accounts simultaneously to spread requests across different API keys and cloud providers. Once those accounts were in place, the labs allegedly scripted long, high‑token conversations designed to extract detailed, step‑by‑step answers that could be fed back into their own systems as training data. In Anthropic's telling, the result was an off‑the‑books pipeline that turned Claude into an unwilling teacher for models being developed inside China's increasingly competitive AI sector. Anthropic has not yet announced specific lawsuits against the three companies, but it has signaled that it has cut off known access points and is urging Washington to tighten export controls on advanced chips and AI services to prevent similar efforts in the future. If Anthropic hoped for sympathy, the reaction online and among industry watchers has been notably skeptical. Commentators quickly pointed out that Anthropic itself has faced high‑profile accusations of overreaching in its own data collection practices beyond the copyright case from authors, such as a separate case over scraping Reddit content. "How the turn tables," wrote a commenter on the subreddit r/singularity, a play on words often attributed to a meme derived from the television show The Office. Behind the sniping lies a broader fight over who sets the rules for an industry built on remixing human work. 
U.S. firms such as Anthropic and OpenAI have increasingly pushed for aggressive enforcement against foreign competitors they accuse of copying proprietary systems, even as they defend their own sprawling data collection under the banner of fair use. Chinese labs, many of which release more open‑source models, are racing to close the performance gap with Western rivals using any legal advantage they can find. With Washington already debating tighter restrictions on exporting AI chips and cloud services to China, Anthropic's allegations are likely to feed calls for new guardrails -- while giving critics one more chance to note the uncomfortable symmetry at the heart of modern AI.

[19]

Anthropic Furious at DeepSeek for Copying Its AI Without Permission, Which Is Pretty Ironic When You Consider How It Built Claude in the First Place

Earlier this month, Google publicly griped that "commercially motivated" actors were trying to clone its Gemini AI through agents that queried the chatbot up to 100,000 times to "extract" the underlying model. The hypocrisy of Google's accusations was palpable. For years, the search giant has relied on indiscriminately scraping the internet for content to train its AI models, without compensating copyright holders -- and racking up lawsuits as a result. Now Anthropic has entered the fray. Unlike Google, the company behind chatbot Claude was willing to point fingers, accusing Chinese AI firms DeepSeek, Moonshot, and MiniMax of "distilling" its AI model. The company claimed in a new blog post that the accused firms created more than 24,000 fake accounts that queried Claude 16 million times, a "violation of our terms of service and regional access restrictions." Distillation is when a small "student" model is trained to replicate the performance of a much larger "teacher" model -- a convoluted term essentially denoting the act of copying someone's homework without express permission. Even ChatGPT maker OpenAI accused DeepSeek of distilling its AI models in a statement earlier this month, highlighting a broader backlash to Chinese entities reverse-engineering extremely costly AI software -- despite the companies themselves amassing intellectual property and feeding it to their models with little regard for securing the required permissions. In its blog post, Anthropic cried foul and accused the Chinese companies of "illicitly" extracting "Claude's capabilities to improve their own models." "Distillation is a widely used and legitimate training method," it wrote. "For example, frontier AI labs routinely distill their own models to create smaller, cheaper versions for their customers." 
"But distillation can also be used for illicit purposes: competitors can use it to acquire powerful capabilities from other labs in a fraction of the time, and at a fraction of the cost, that it would take to develop them independently," the blog post reads. Some queries the company identified involved asking Claude to "imagine and articulate the internal reasoning behind a completed response and write it out step by step -- effectively generating chain-of-thought training data at scale." The allegations come as DeepSeek prepares to release its V4 model, an occasion that could rattle the American AI industry. Perhaps the biggest perpetrator, according to Anthropic, was MiniMax, a Shanghai-based company that IPO'd on the Hong Kong Stock Exchange last month. Anthropic says it identified "over 13 million exchanges" from it. "When we released a new model during MiniMax's active campaign, they pivoted within 24 hours, redirecting nearly half their traffic to capture capabilities from our latest system," the company claimed. As a result, Anthropic is asking for action "across the AI industry, cloud providers, and policymakers." "These campaigns are growing in intensity and sophistication," Anthropic wrote. "The window to act is narrow, and the threat extends beyond any single company or region." But given the reckless approach of some of the biggest players in the AI space, it remains to be seen whether politicians will jump into action. In early 2025, DeepSeek turned Silicon Valley upside down after proving that its AI model could be created far more cheaply and efficiently than pioneering models at the time, resulting in a panicked selloff that led to over $1 trillion in valuations being wiped out. Whether this time will be any different remains to be seen. The tone has shifted considerably over the last year, with investors starting to balk at the hundreds of billions of dollars AI companies are committing to build data centers. 
If DeepSeek can prove once again that AI models can be trained more cheaply, perhaps there will be pressure to learn from its approach instead. Netizens, meanwhile, had little sympathy for Anthropic. "They robbed the robbers," one Reddit user wrote. "Poor billionaires." "This is like when the zoo accuses you of 'stealing' the animals that they rightfully kidnapped from the jungle," another user argued.

[20]

Critics Mock Anthropic's Claims Chinese AI Labs Are Stealing Its Data - Decrypt

Critics on X are accusing Anthropic of hypocrisy over how AI models are trained. Anthropic has accused three Chinese AI labs of extracting millions of responses from its Claude chatbot to train competing systems, a move the company claims violates its terms of service and weakens U.S. export controls. In a blog post published Monday, Anthropic said it identified "industrial-scale campaigns" by AI developers DeepSeek, Moonshot, and MiniMax to extract Claude's capabilities through model distillation. The company alleged the labs generated more than 16 million exchanges using roughly 24,000 fraudulent accounts. Anthropic's announcement drew skepticism and mockery on X, where critics questioned its stance given how major AI models, including Claude, are trained, reflecting the broader ongoing debate over intellectual property, copyright, and fair use. "You trained on the open internet and then call it 'distillation attacks' when others learn from you," wrote Tory Green, co-founder of AI infrastructure firm IO.Net. "Labs that like to preach 'open research' suddenly crying about open access." "Ohhh nooo not my private IP, how dare someone use that to train an AI model, only Anthropic has the right to use everyone else's IP nooooo, this cannot stand!" another X user wrote. Distillation is an AI training method in which a smaller model learns from the outputs of a larger one. In cybersecurity contexts, it can also describe model extraction attacks, where an attacker uses legitimate access to systematically query a system and use its responses to train a competing model. "These campaigns are growing in intensity and sophistication," Anthropic wrote Monday. "The window to act is narrow, and the threat extends beyond any single company or region. Addressing it will require rapid, coordinated action among industry players, policymakers, and the global AI community." 
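
The query-and-train pattern described above can be illustrated with a deliberately tiny toy. This is a sketch under loud assumptions, not a description of what any lab allegedly did: the "teacher" here is a hypothetical hidden linear function standing in for a proprietary model, the "student" is a two-parameter least-squares fit, and all names are invented for illustration. Real distillation of language models trains a neural network on text outputs, which is far more involved.

```python
import random


def teacher(x: float) -> float:
    """Hypothetical stand-in for a proprietary model; the student never sees these weights."""
    return 3.0 * x + 2.0


def collect_exchanges(n: int, seed: int = 0) -> list[tuple[float, float]]:
    """Query the teacher n times and record (query, response) pairs."""
    rng = random.Random(seed)
    queries = [rng.uniform(-10.0, 10.0) for _ in range(n)]
    return [(q, teacher(q)) for q in queries]


def fit_student(pairs: list[tuple[float, float]]) -> tuple[float, float]:
    """Fit y = w * x + b to the recorded pairs by ordinary least squares."""
    n = len(pairs)
    mean_x = sum(x for x, _ in pairs) / n
    mean_y = sum(y for _, y in pairs) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in pairs)
    var = sum((x - mean_x) ** 2 for x, _ in pairs)
    w = cov / var
    b = mean_y - w * mean_x
    return w, b


# The student recovers the teacher's behavior using only its outputs.
pairs = collect_exchanges(200)
w, b = fit_student(pairs)
print(f"student learned w={w:.3f}, b={b:.3f}")
```

The point of the toy is only that the student needs nothing but query access: it reconstructs the teacher's input-output behavior without ever seeing how the teacher was built.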
"Distillation can be legitimate: AI labs use it to create smaller, cheaper models for their customers," Anthropic wrote in a separate X post. "But foreign labs that illicitly distill American models can remove safeguards, feeding model capabilities into their own military, intelligence, and surveillance systems." In June, Reddit sued Anthropic, accusing it of scraping more than 100,000 posts and comments and using the data to fine-tune Claude. The case joins lawsuits against OpenAI, Meta, and Google over the large-scale scraping of online content without permission. "[There's] the public face that attempts to ingratiate itself into the consumer's consciousness with claims of righteousness and respect for boundaries and the law, and the private face that ignores any rules that interfere with its attempts to further line its pockets," the Reddit lawsuit said. Anthropic said it is expanding detection, tightening account verification, sharing intelligence with other labs and authorities, and adding safeguards to limit future distillation attempts. "But no company can solve this alone," Anthropic wrote. "As we noted above, distillation attacks at this scale require a coordinated response across the AI industry, cloud providers, and policymakers."

[21]

The AI Cold War: US firms claim Chinese companies stole research