AWS Kiro Powers Launch with Stripe, Figma, and Datadog to Fix AI Coding Assistant Bottleneck

2 Sources

[1]

AWS launches Kiro powers with Stripe, Figma, and Datadog integrations for AI-assisted coding

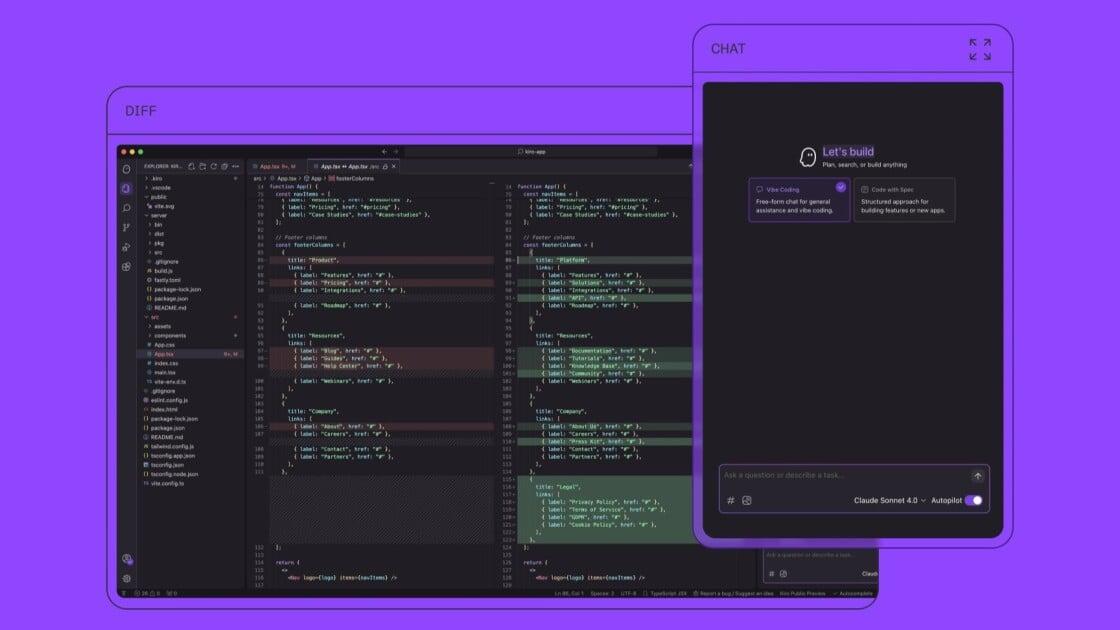

Amazon Web Services on Wednesday introduced Kiro powers, a system that allows software developers to give their AI coding assistants instant, specialized expertise in specific tools and workflows -- addressing what the company calls a fundamental bottleneck in how artificial intelligence agents operate today. AWS made the announcement at its annual re:Invent conference in Las Vegas.

The capability marks a departure from how most AI coding tools work today. Typically, these tools load every possible capability into memory upfront -- a process that burns through computational resources and can overwhelm the AI with irrelevant information. Kiro powers takes the opposite approach, activating specialized knowledge only at the moment a developer actually needs it.

"Our goal is to give the agent specialized context so it can reach the right outcome faster -- and in a way that also reduces cost," said Deepak Singh, Vice President of Developer Agents and Experiences at Amazon, in an exclusive interview with VentureBeat.

The launch includes partnerships with nine technology companies: Datadog, Dynatrace, Figma, Neon, Netlify, Postman, Stripe, Supabase, and AWS's own services. Developers can also create and share their own powers with the community.

Why AI coding assistants choke when developers connect too many tools

To understand why Kiro powers matters, it helps to understand a growing tension in the AI development tool market. Modern AI coding assistants rely on something called the Model Context Protocol, or MCP, to connect with external tools and services. When a developer wants their AI assistant to work with Stripe for payments, Figma for design, and Supabase for databases, they connect MCP servers for each service. The problem: each connection loads dozens of tool definitions into the AI's working memory before it writes a single line of code.
According to AWS documentation, connecting just five MCP servers can consume more than 50,000 tokens -- roughly 40 percent of an AI model's context window -- before the developer even types their first request.

Developers have grown increasingly vocal about this issue. Many complain that they don't want to burn through their token allocations just to have an AI agent figure out which tools are relevant to a specific task. They want to get to their workflow instantly -- not watch an overloaded agent struggle to sort through irrelevant context. This phenomenon, which some in the industry call "context rot," leads to slower responses, lower-quality outputs, and significantly higher costs -- since AI services typically charge by the token.

Inside the technology that loads AI expertise on demand

Kiro powers addresses this by packaging three components into a single, dynamically-loaded bundle. The first component is a steering file called POWER.md, which functions as an onboarding manual for the AI agent. It tells the agent what tools are available and, crucially, when to use them. The second component is the MCP server configuration itself -- the actual connection to external services. The third includes optional hooks and automation that trigger specific actions.

When a developer mentions "payment" or "checkout" in their conversation with Kiro, the system automatically activates the Stripe power, loading its tools and best practices into context. When the developer shifts to database work, Supabase activates while Stripe deactivates. The baseline context usage when no powers are active approaches zero.

"You click a button and it automatically loads," Singh said. "Once a power has been created, developers just select 'open in Kiro' and it launches the IDE with everything ready to go."

How AWS is bringing elite developer techniques to the masses

Singh framed Kiro powers as a democratization of advanced development practices.
Before this capability, only the most sophisticated developers knew how to properly configure their AI agents with specialized context -- writing custom steering files, crafting precise prompts, and manually managing which tools were active at any given time.

"We've found that our developers were adding in capabilities to make their agents more specialized," Singh said. "They wanted to give the agent some special powers to do a specific problem. For example, they wanted their front end developer, and they wanted the agent to become an expert at backend as a service."

This observation led to a key insight: if Supabase or Stripe could build the optimal context configuration once, every developer using those services could benefit. "Kiro powers formalizes that -- things that people, only the most advanced people were doing -- and allows anyone to get those kind of skills," Singh said.

Why dynamic loading beats fine-tuning for most AI coding use cases

The announcement also positions Kiro powers as a more economical alternative to fine-tuning, the process of training an AI model on specialized data to improve its performance in specific domains.

"It's much cheaper," Singh said, when asked how powers compare to fine-tuning. "Fine-tuning is very expensive, and you can't fine-tune most frontier models."

This is a significant point. The most capable AI models from Anthropic, OpenAI, and Google are typically "closed source," meaning developers cannot modify their underlying training. They can only influence the models' behavior through the prompts and context they provide.

"Most people are already using powerful models like Sonnet 4.5 or Opus 4.5," Singh said. "What those models need is to be pointed in the right direction."

The dynamic loading mechanism also reduces ongoing costs. Because powers only activate when relevant, developers aren't paying for token usage on tools they're not currently using.

Where Kiro powers fits in Amazon's bigger bet on autonomous AI agents

Kiro powers arrives as part of a broader push by AWS into what the company calls "agentic AI" -- artificial intelligence systems that can operate autonomously over extended periods. Earlier at re:Invent, AWS announced three "frontier agents" designed to work for hours or days without human intervention: the Kiro autonomous agent for software development, the AWS security agent, and the AWS DevOps agent. These represent a different approach from Kiro powers -- tackling large, ambiguous problems rather than providing specialized expertise for specific tasks.

The two approaches are complementary. Frontier agents handle complex, multi-day projects that require autonomous decision-making across multiple codebases. Kiro powers, by contrast, gives developers precise, efficient tools for everyday development tasks where speed and token efficiency matter most. The company is betting that developers need both ends of this spectrum to be productive.

What Kiro powers reveals about the future of AI-assisted software development

The launch reflects a maturing market for AI development tools. GitHub Copilot, which Microsoft launched in 2021, introduced millions of developers to AI-assisted coding. Since then, a proliferation of tools -- including Cursor, Cline, and Claude Code -- have competed for developers' attention.

But as these tools have grown more capable, they've also grown more complex. The Model Context Protocol, which Anthropic open-sourced last year, created a standard for connecting AI agents to external services. That solved one problem while creating another: the context overload that Kiro powers now addresses.

AWS is positioning itself as the company that understands production software development at scale.
Singh emphasized that Amazon's experience running AWS for 20 years, combined with its own massive internal software engineering organization, gives it unique insight into how developers actually work.

"It's not something you would use just for your prototype or your toy application," Singh said of AWS's AI development tools. "If you want to build production applications, there's a lot of knowledge that we bring in as AWS that applies here."

The road ahead for Kiro powers and cross-platform compatibility

AWS indicated that Kiro powers currently works only within the Kiro IDE, but the company is building toward cross-compatibility with other AI development tools, including command-line interfaces, Cursor, Cline, and Claude Code. The company's documentation describes a future where developers can "build a power once, use it anywhere" -- though that vision remains aspirational for now.

For the technology partners launching powers today, the appeal is straightforward: rather than maintaining separate integration documentation for every AI tool on the market, they can create a single power that works everywhere Kiro does. As more AI coding assistants crowd into the market, that kind of efficiency becomes increasingly valuable.

Kiro powers is available now to developers using Kiro IDE version 0.7 or later at no additional charge beyond the standard Kiro subscription.

The underlying bet is a familiar one in the history of computing: that the winners in AI-assisted development won't be the tools that try to do everything at once, but the ones smart enough to know what to forget.

[2]

How Kiro levels up agent-based software development - SiliconANGLE

From hobby projects to enterprise apps: How AWS Kiro levels up 'vibe coding'

The rise of artificial intelligence has sparked a new era of agent-based software development, fundamentally changing how code is created, tested and deployed. While "vibe coding" -- writing software through casual conversation with a coding agent -- has captured the imagination of hobbyists, enterprise information technology requires a level of rigor and maintainability that standard chat windows often lack, according to Deepak Singh, vice president of developer agents and experiences at Amazon Web Services Inc.

"The challenge with [vibe coding] is five days after you've written an application, you've kind of forgotten why," Singh told theCUBE. "In a team environment, it becomes even more interesting because three months later, nobody has any idea why you wrote the software you did."

Singh spoke with John Furrier as part of theCUBE's coverage of AWS re:Invent, for an exclusive interview on theCUBE, SiliconANGLE Media's livestreaming studio. They discussed agent-based software development and Kiro, AWS' bid to turn loose "vibe coding" chats into spec-driven, testable software that enterprises can actually ship.

Since its announcement at the AWS Summit in July, Kiro -- an intelligent assistant for software development -- has seen rapid adoption, logging over 250,000 users in its first three months. However, the tool is evolving beyond simple code generation toward "spec-driven development," an approach that models the behavior of Amazon's own principal engineers, who focus on defining the problem before writing a single line of code, Singh explained.

"Senior engineers, our principal engineering community, [are] really good at helping people understand the problem, break it into smaller parts and maybe work with others to clarify exactly what they need to do," he said.
Kiro allows developers to convert conversational intent into a formal specification, followed by a design and finally a set of executable tasks. This ensures that the convenience of AI-assisted coding does not come at the expense of documentation or structural integrity, Singh noted. The method also goes beyond standard unit tests by using the agent to extract functional requirements from the specification and automatically generating tests to verify those properties.

"Here the agent is saying, 'Here's all the things that code should functionally do. We are going to actually test if it actually does that,'" Singh said. "[Meaning] your code is much more likely to be actually good and do what you want it to do."

Developers can create specific profiles -- such as an "AWS operator" or a "frontend developer" -- that automatically load the correct tools, steering files and best practices for that specific domain, according to Singh. This flexibility allows teams to enforce security guidelines and architectural standards automatically, effectively shifting compliance to the earliest stages of development.

"You can switch back and forth between these custom agents that are specialized at other tasks and their manifestations of what you [would] like to do," he explained. "It's a different way of building. In some ways, it's very familiar. In some ways, it's unique."

Ultimately, these tools are lowering the barrier to entry, allowing senior engineers to code more and bringing lapsed developers back into the fold, Singh noted. This means that more people can write more code, faster than ever.

"I've seen senior engineers who have written more code in the last six months than they had written in the three years [previously]," he said. "These tools allow them to express themselves in ways that they just couldn't before."

Amazon Web Services unveiled Kiro powers at re:Invent, introducing dynamic loading for AI coding assistants with launch partners including Stripe, Figma, and Datadog. The system addresses context rot by activating specialized knowledge on demand, so that tool definitions which can otherwise fill roughly 40 percent of a model's context window are loaded only when a task calls for them. AWS says this reduces cost and helps the agent reach the right outcome faster.

AWS Kiro Tackles Context Rot in AI Coding Assistant Workflows

Amazon Web Services announced Kiro powers at its annual re:Invent conference in Las Vegas, introducing a system that changes how AI coding assistants access specialized knowledge. The capability allows developers to give their agents instant expertise in specific tools and workflows, addressing what the company calls a fundamental bottleneck in how AI agents operate today [1]. Deepak Singh, Vice President of Developer Agents and Experiences at Amazon, presented the launch as a way to give agents specialized context at lower cost [1].

Unlike traditional AI coding assistant approaches that load every possible capability into memory upfront, Kiro powers activates specialized knowledge only when developers actually need it. This departure from conventional methods tackles a growing problem in software development: context rot. When developers connect multiple Model Context Protocol (MCP) servers to work with services like Stripe for payments, Figma for design, and Datadog for monitoring, each connection loads dozens of tool definitions into the AI's working memory before the assistant writes a single line of code [1](https://venturebeat.com/ai/aws-launches-kiro-powers-with-stripe-figma-and-datadog-integrations-for-ai).

Five MCP Servers Can Fill 40 Percent of the Context Window Before Work Begins

According to AWS documentation, connecting just five MCP servers can consume more than 50,000 tokens, roughly 40 percent of an AI model's context window, before developers even type their first request [1]. This overhead leads to slower responses, lower-quality outputs, and significantly higher costs, since AI services typically charge by the token.

The launch includes partnerships with nine technology companies: Datadog, Dynatrace, Figma, Neon, Netlify, Postman, Stripe, Supabase, and AWS's own services. Developers can also create and share their own powers with the community. When a developer mentions "payment" or "checkout" in their conversation with AWS Kiro, the system automatically activates the Stripe power, loading its tools and best practices into context. When the developer shifts to database work, Supabase activates while Stripe deactivates. With no powers active, baseline context usage approaches zero [1].
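The figures above imply a rough token budget, and the on-demand behavior can be sketched in a few lines of Python. This is a minimal illustration, not Kiro's implementation: the `Power` class, the keyword sets, the registry, and the per-power token costs are all hypothetical. The only numbers taken from the article are the 50,000-token overhead, the five servers, and the 40 percent share.

```python
import re
from dataclasses import dataclass

# Figures quoted in the article (AWS documentation, as reported).
EAGER_OVERHEAD_TOKENS = 50_000   # five MCP servers loaded upfront
SERVER_COUNT = 5
WINDOW_SHARE = 0.40              # "roughly 40 percent" of the context window

# Back-of-envelope values implied by those figures.
implied_window = round(EAGER_OVERHEAD_TOKENS / WINDOW_SHARE)  # about 125,000 tokens
avg_per_server = EAGER_OVERHEAD_TOKENS // SERVER_COUNT         # about 10,000 tokens


@dataclass(frozen=True)
class Power:
    """Hypothetical stand-in for a power; not Kiro's real data model."""
    name: str
    keywords: frozenset
    token_cost: int  # assumed cost of loading this power's tools and guidance


# Hypothetical registry: keywords and costs are invented for illustration.
REGISTRY = [
    Power("stripe", frozenset({"payment", "checkout"}), avg_per_server),
    Power("supabase", frozenset({"database", "table"}), avg_per_server),
    Power("figma", frozenset({"design", "mockup"}), avg_per_server),
]


def active_powers(message: str) -> list:
    """Return the powers whose keywords appear in the developer's message."""
    words = set(re.findall(r"[a-z]+", message.lower()))
    return [p for p in REGISTRY if p.keywords & words]


def context_cost(message: str) -> int:
    """Tokens spent on tool context for this message under on-demand loading."""
    return sum(p.token_cost for p in active_powers(message))
```

Under these assumptions, a message such as "Add a checkout page" is charged only for the Stripe power, and a message that matches no keyword costs nothing, which mirrors the claim that baseline usage approaches zero. A production system would presumably match intent more robustly than exact word lookup.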

Dynamic AI Agent Configuration Democratizes Elite Developer Techniques

Kiro powers packages three components into a single, dynamically loaded bundle. The first is a steering file called POWER.md, which functions as an onboarding manual telling the agent what tools are available and when to use them. The second is the MCP server configuration itself, the actual connection to external services. The third is a set of optional hooks and automation that trigger specific actions [1].

"Our goal is to give the agent specialized context so it can reach the right outcome faster -- and in a way that also reduces cost," Singh told VentureBeat in an exclusive interview [1]. Singh framed the capability as democratizing advanced development practices. Before this, only the most sophisticated developers knew how to properly configure their AI agents with specialized context: writing custom steering files, crafting precise prompts, and manually managing which tools were active.
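To make the three-part bundle described above concrete, here is a hedged Python sketch of how such a bundle might be modeled. Only the file name POWER.md and the three roles (steering file, MCP server configuration, optional hooks) come from the article. The `PowerBundle` class, its field names, the shape of the `mcp_servers` dictionary, and the example command are hypothetical and do not reflect Kiro's actual file format.

```python
from dataclasses import dataclass, field


@dataclass
class PowerBundle:
    """Hypothetical model of a power: steering text, MCP config, optional hooks."""
    name: str
    steering_markdown: str                      # contents of POWER.md
    mcp_servers: dict                           # assumed shape: server name -> launch settings
    hooks: list = field(default_factory=list)   # optional callables run on activation

    def activate(self, context: list) -> list:
        """Append this bundle's guidance and tools to the context, then run hooks."""
        context.append(f"[steering:{self.name}] {self.steering_markdown}")
        for server in self.mcp_servers:
            context.append(f"[mcp:{self.name}] {server}")
        for hook in self.hooks:
            hook(self.name)
        return context

    def deactivate(self, context: list) -> list:
        """Return a new context without the entries this bundle contributed."""
        tags = (f"[steering:{self.name}]", f"[mcp:{self.name}]")
        return [entry for entry in context if not entry.startswith(tags)]


activation_log = []

# Example values are placeholders, not a real Stripe power definition.
stripe = PowerBundle(
    name="stripe",
    steering_markdown="Use these tools for payment and checkout tasks.",
    mcp_servers={"stripe-mcp": {"command": "example-stripe-mcp-server"}},
    hooks=[lambda name: activation_log.append(f"activated {name}")],
)

context = stripe.activate([])
after = stripe.deactivate(context)
```

Keeping the steering text, server configuration, and hooks in one object is what lets a bundle be loaded and unloaded as a unit; in this sketch, deactivating the bundle leaves the context empty again.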

From Vibe Coding to Spec-Driven Processes for Enterprise Applications

Since its announcement at the AWS Summit in July, AWS Kiro has logged over 250,000 users in its first three months [2]. The tool is evolving beyond simple code generation toward spec-driven development, an approach modeled on Amazon's own principal engineers, who focus on defining the problem before writing code.

"The challenge with [vibe coding] is five days after you've written an application, you've kind of forgotten why," Singh explained to theCUBE. "In a team environment, it becomes even more interesting because three months later, nobody has any idea why you wrote the software you did" [2].

AWS Kiro allows developers to convert conversational intent into a formal specification, followed by a design and finally a set of executable tasks, so the reasoning behind the code stays documented for enterprise teams. The system goes beyond standard unit tests by using the agent to extract functional requirements from the specification and automatically generating tests to verify those properties [2].
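As a rough illustration of testing properties drawn from a specification rather than hand-picked cases, the Python sketch below checks three requirements against many random inputs. The discount function, the requirements, and every name here are hypothetical. The article does not describe how Kiro extracts requirements or which test framework it generates, so this shows only the general idea of property-style checks.

```python
import random


def apply_discount(price_cents: int, percent: int) -> int:
    """Hypothetical function under test; the discount amount is rounded down to whole cents."""
    return price_cents - (price_cents * percent) // 100


# Hypothetical functional requirements, written as checkable properties.
PROPERTIES = {
    "result is never negative": lambda p, pct: apply_discount(p, pct) >= 0,
    "result never exceeds the original price": lambda p, pct: apply_discount(p, pct) <= p,
    "a 0 percent discount changes nothing": lambda p, pct: apply_discount(p, 0) == p,
}


def check_properties(trials: int = 1000, seed: int = 0) -> dict:
    """Run every property on random inputs; map property name to its first counterexample."""
    rng = random.Random(seed)
    failures = {}
    for _ in range(trials):
        price = rng.randint(0, 1_000_000)
        percent = rng.randint(0, 100)
        for name, holds in PROPERTIES.items():
            if name not in failures and not holds(price, percent):
                failures[name] = (price, percent)
    return failures


failures = check_properties()
```

An empty `failures` dictionary means no counterexample was found in the sampled inputs, which is evidence rather than proof. The appeal of the approach is that each property traces back to a stated requirement instead of to an individual example.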

Developer Productivity Gains Signal Shift in Software Development Practices

Developers can create specific profiles, such as an "AWS operator" or a "frontend developer," that automatically load the correct tools, steering files, and best practices for that specific domain. This flexibility allows teams to enforce security guidelines and architectural standards automatically, shifting compliance to the earliest stages of development [2].

The reported impact on developer productivity has been substantial. "I've seen senior engineers who have written more code in the last six months than they had written in the three years [previously]," Singh noted. "These tools allow them to express themselves in ways that they just couldn't before" [2]. According to Singh, the tools are lowering the barrier to entry and bringing lapsed developers back into software development [2]. Separately, AWS positions Kiro powers' dynamic loading as a more economical alternative to fine-tuning for most AI coding use cases [1].

Summarized by

Navi
