Family sues OpenAI over Tumbler Ridge shooting, alleging company knew of attack plans

5 Sources

[1]

Tumbler Ridge shooting: Family of victim Maya Gebala sues OpenAI

The family of a girl critically injured during a mass shooting at a Canadian school is suing ChatGPT-maker OpenAI, claiming it had been aware the suspect had been planning an attack but failed to alert the authorities. Twelve-year-old Maya Gebala was shot in the neck and head in the shooting in Tumbler Ridge on 10 February and remains in hospital. An initial ChatGPT account linked to the suspect, 18‑year‑old Jesse Van Rootselaar, was banned by OpenAI in June 2025 due to the nature of her conversations with the chatbot. The AI company - which the BBC has contacted for comment - previously said it did not alert police because the account did not meet its threshold of a credible or imminent plan for serious physical harm to others. Eight people were killed in the attack, including five young children and the suspect's mother, in one of the deadliest shootings in Canadian history. The civil lawsuit, brought by Gebala's mother Cia Edmonds, alleges Rootselaar set up an account with ChatGPT before she turned 18 - something users can do with parental consent. The plaintiffs allege no age verification took place on the site. The lawsuit claims the suspect saw the chatbot as a "trusted confidante" and described "various scenarios involving gun violence" to it over several days in late spring or early summer 2025. Twelve OpenAI employees then reportedly flagged the posts as "indicating an imminent risk of serious harm to others" and recommended Canadian law enforcement was informed, the lawsuit alleges. Instead, it is alleged the request to contact the authorities was "rebuffed" and the only action taken was to ban Rootselaar's account. The suspect was able to then open a second ChatGPT account, despite being flagged by OpenAI systems in the past, and "continue planning scenarios involving gun violence". The lawsuit claims the company "had specific knowledge of the shooter's long-range planning of a mass casualty event," but "took no steps to act upon this knowledge". 
The plaintiffs state as a result of the company's conduct, Gebala, who was shot at three times after trying to lock a library door to keep out the shooter, has suffered a "catastrophic brain injury". OpenAI did not immediately respond to requests for a statement regarding the lawsuit. But on 4 March, the CEO of OpenAI, Sam Altman, virtually met Canada's artificial intelligence minister, Evan Solomon, and the premier of British Columbia, David Eby. According to the Wall Street Journal, Altman "pledged to strengthen protocols on notifying police over potentially harmful interactions" and to apologise to the Tumbler Ridge community. In an open letter to Canadian officials on 26 February, penned by OpenAI's vice-president of global policy and shared with media outlets, the company said it had implemented a series of changes in recent months, including enlisting the help of "mental health and behavioural experts" to assess cases and making the criteria for referral to police "more flexible". Because of the changes, OpenAI said it would have reported the suspect's ChatGPT account under the new guidelines. "We commit to strengthening our detection systems to better prevent attempts to evade our safeguards and prioritize identifying the highest risk offenders," the company wrote. OpenAI said it would also establish a direct point of contact with Canadian law enforcement so it can quickly flag any possible future cases with "potential for real world violence". Canada's AI minister Evan Solomon said on 27 February that while legislators saw a willingness by the tech firm to improve its protocols, "we have not yet seen a detailed plan for how these commitments will be implemented in practice".

[2]

Family of Tumbler Ridge shooting victim sues OpenAI alleging they could've prevented attack

The family of a child critically injured in one of Canada's worst mass shootings is suing OpenAI, arguing the technology company could have prevented the attack on a school last month. The lawsuit comes days after the head of OpenAI said he would apologize to the families of a remote Canadian town after violence shattered the tight-knit community. Eight people - including five school students, aged 12 to 13, and a 39-year-old teaching assistant - were killed by an 18-year-old shooter in the mountain town of Tumbler Ridge on 10 February. It later emerged that the shooter, Jesse Van Rootselaar, who died of a self-inflicted injury, had described violent scenarios involving guns to ChatGPT over several days in June, which an automated review system flagged, according to the Wall Street Journal. But OpenAI, which owns the chatbot, said it felt the account activity did not identify "credible or imminent planning" and so banned Van Rootselaar's account, but did not notify authorities in Canada. The company later said it found a second account linked to the shooter after the first was suspended. On Monday, Cia Edmonds filed a lawsuit against the company on behalf of herself and her two daughters, Maya and Dahlia Gebala, both of whom were present during the shooting. "The purpose of this lawsuit is to learn the whole truth about how and why the Tumbler Ridge mass shooting happened, to impose accountability, to seek redress for harms and losses, and to help prevent another mass-shooting atrocity in Canada," the law firm Rice Parsons Leoni & Elliott LLP, which is representing the family, said in a statement. The allegations have not been tested in court. Maya, 12, was shot three times. One bullet entered her head above her left eye and another hit her neck. A third bullet grazed her cheek and part of her ear, the lawsuit says.
She remains in hospital after suffering a catastrophic traumatic brain injury, permanent cognitive and physical disability, right-sided hemiplegia, scarring and physical deformities, according to the claim. Both Edmonds and her daughter Dahlia, who was not injured physically in the shooting, have experienced PTSD, anxiety, depression and sleep disturbances. Edmonds' civil claim alleges ChatGPT was rushed to market by OpenAI without adequate safety studies. The family is seeking undisclosed punitive damages, saying the company's conduct "is reprehensible and morally repugnant" to both the plaintiffs and the "community at large". Last week, OpenAI CEO Sam Altman met virtually with British Columbia premier David Eby and Darryl Krakowka, mayor of Tumbler Ridge amid mounting frustration that existing policies within the tech giant did not require it to report violent content to police. "Everybody on the call recognized that an apology is nowhere near sufficient, but also that it is completely necessary," Eby said. "And the mayor of Tumbler Ridge is going to work with OpenAI to make sure that any public statements relating to that are done in the way that is appropriate and meaningful, as much as possible, [and] doesn't retraumatize people in the community." Asked to comment on the lawsuit, a spokesperson for the company called the shooting an "unspeakable tragedy" and said Altman will work with Eby and Krakowka "to find the best way to convey his apology and support to the Tumbler Ridge community" but did not give a timeline. "OpenAI remains committed to working with provincial and local officials to make meaningful changes that help prevent tragedies like this in the future." The company did not say if the lawsuit would change Altman's plans to apologize. "OpenAI had the opportunity to notify authorities and potentially even to stop this tragedy from happening," Eby told reporters after the meeting with Altman. 
The premier said while the company could have done more, he flagged a lack of mental health support and the shooter's access to firearms. Eby, who delivered an emotional speech to the community at a vigil in the days after the shooting has emerged as a staunch critic of the largely non-existent regulatory framework governing how artificial intelligence companies operate in Canada - and how OpenAI handled the situation. "It's not acceptable that it's up to the companies about whether or not to report, and that needs to change." Eby refused meetings with members of the company's leadership team, demanding instead that he speak directly with Altman. In the 30-minute call, the premier said he did not ask about interactions between the shooter and OpenAI's chatbot. Already, under pressure from lawmakers, the company has changed how it works to better identify potential warning signals of serious violence. Canada's AI minister Evan Solomon said he had asked the company to apply their new safety standards retroactively and review previously flagged cases. "This will determine whether additional incidents that would have been referred to law enforcement under OpenAI's new safety standards were missed, and ensure they are promptly reported to the RCMP." While Eby said OpenAI's leadership has been "responsive" to the concerns of governments, he warned other companies with similar chatbots hadn't yet changed their policies. "The status quo doesn't work, didn't work, and it very much presents the threat that it might fail again," said Eby. "And so change needs to be made quite urgently."

[3]

Mother Sues OpenAI for Not Telling Police About Mass Shooter Before Deadly Rampage

The mother of a girl who was horrifically wounded in a school shooting in Canada in February is suing OpenAI for not warning police about the killer, Jesse Van Rootselaar, according to reports. Some eight months before the shooting in British Columbia, which killed eight people including the perpetrator and injured 25 others, OpenAI employees had already been aware of Van Rootselaar's alarming conversations with ChatGPT after they were flagged by an automated review system, a story broken by the Wall Street Journal in the wake of the massacre. Around a dozen staffers debated notifying authorities about Rootselaar's disturbing conversations, which included "scenarios involving gun violence," but leadership ultimately decided not to. Now, a lawsuit filed by Cia Edmonds, the mother of a 12-year-old named Maya Gebala who survived the shooting but remains in critical condition, argues that OpenAI had "specific knowledge of the shooter utilizing ChatGPT to plan a mass casualty event like the Tumbler Ridge mass shooting," per the Associated Press, and demands punitive damages from the company. Maya was shot three times at close range while she was trying to lock a door to keep out the shooter, including in the head and the neck, according to the suit. She remains hospitalized with a catastrophic brain injury and is paralyzed on the right side of her body. Her sister Dahlia was also at the school during the shooting and while she wasn't physically injured, she's now suffering PTSD, anxiety, and depression, the suit said. In the original WSJ reporting, OpenAI said that it banned Van Rootselaar's account but admitted that at the time it didn't consider her activity a credible and imminent risk of serious physical harm to others. Later, the company revealed that Van Rootselaar had made a second account to subvert the ban, claiming it only discovered the alt after the shooter's name was released publicly.
The lawsuit alleges the "shooter used their second account to continue planning scenarios involving gun violence, including a mass casualty event like the Tumbler Ridge mass shooting, with ChatGPT, and to receive mental health counseling and pseudo-therapy from ChatGPT." It also accuses OpenAI of rushing ChatGPT to a global market without conducting proper safety studies and implementing strong safeguards, echoing claims made by many of the company's critics. OpenAI, more so than other AI leaders, has been under the microscope for ongoing reports of so-called AI psychosis, the term some experts are using to describe delusional episodes caused by a chatbot's sycophantic responses, which comes as millions of users treat chatbots as their personal confidante or therapist. Specific versions of ChatGPT have been singled out as being especially obsequious, and extreme cases have led to breaks with reality and explosions of violence. Some users, including teenagers, have taken their own lives after extensively discussing their thoughts of suicide with ChatGPT, and others have committed murder. The February shooting in British Columbia has only heightened these questions about what OpenAI is doing to ensure its platform is safe. "The purpose of this lawsuit is to learn the whole truth about how and why the Tumbler Ridge mass shooting happened, to impose accountability, to seek redress for harms and losses, and to help prevent another mass-shooting atrocity in Canada," read a statement from the law firm representing Edmonds. After the shooting OpenAI vowed to make its AI safer, including measures to prevent users from circumventing bans on the platform. Last week, CEO Sam Altman met virtually with Canada's AI Minister Evan Solomon to discuss why the company had failed to alert authorities, after which Solomon said he was ordering a government safety review of OpenAI's technology. 
The next day, Altman met with BC premier David Eby, promising to make an apology to the victims of the shooting. No apology has yet appeared publicly.

[4]

Mother of British Columbia shooting victim sues OpenAI

TORONTO -- The family of a student who was critically wounded during a mass shooting in British Columbia last month has sued the artificial intelligence company OpenAI, accusing it of failing to warn the police of disturbing information about the shooter's ChatGPT account. Lawyers for the family of the 12-year-old student, Maya Gebala, said she had been trying to lock a library door to protect her classmates when she was shot three times at close range, including once in the head. The girl remains hospitalized in Vancouver and has undergone multiple brain surgeries. On Feb. 10, Jesse Van Rootselaar, 18, took two firearms from her home in Tumbler Ridge, British Columbia, and killed her mother and 11-year-old brother, the authorities said. She then traveled to the Tumbler Ridge Secondary School and killed five students and one educator and shot two other students, including Gebala. Van Rootselaar died of a self-inflicted gunshot wound, authorities said. Eight months before the attack, OpenAI had suspended a ChatGPT account associated with Van Rootselaar for violating its user agreement, the company said. She had documented her fascination with violence and weapons across several social media accounts, according to a review by The New York Times. The lawsuit, filed Monday by Gebala's mother, Cia Edmonds, claims that OpenAI was "aware of the shooter's violent intentions" and use of its AI chatbot to plan "scenarios involving gun violence, including a mass casualty event." "The purpose of this lawsuit is to learn the whole truth about how and why the Tumbler Ridge mass shooting happened," the law firm representing Gebala's family, Rice Parsons Leoni and Elliott LLP, said in a statement. OpenAI said Van Rootselaar's account was suspended after messages from her ChatGPT chatbot account were detected by an automated system and manually reviewed by its team. The company did not provide more details about the contents of the messages. 
While the company considered alerting officials in Canada, OpenAI said it had ultimately decided that the messages did not meet its reporting threshold, which weighs whether there is imminent planning on the part of a user. The company said it had considered the privacy of users when making referrals to law enforcement and did not want to distress them by involving the police. Canada summoned top safety officials from OpenAI to Ottawa for a meeting in late February, after which the company revealed in a public letter that Van Rootselaar had created a second ChatGPT account when her other one was banned. In the letter, OpenAI said it had "taken steps to strengthen our safeguards," including improving systems to detect banned users who try to establish new accounts and adding assessments by mental health and behavioral experts for complex cases. David Eby, the premier of British Columbia, said that the CEO of OpenAI, Sam Altman, had agreed to apologize to the community of Tumbler Ridge. OpenAI released a statement after they spoke on March 5. Altman "will work with them to find the best way to convey his apology and support to the Tumbler Ridge community," Jamie Radice, a spokesperson for OpenAI, said in a statement. After the shooting, Gebala and the other injured student were airlifted to the children's hospital in Vancouver, about 750 miles south of Tumbler Ridge. The other student has since returned home, but Gebala's long-term prospects of recovery are uncertain. The chief coroner of British Columbia has ordered a public inquest into the circumstances that led to the attack.

[5]

OpenAI sued over Canada school shooting

The family of a girl critically wounded in a Canada school shooting has filed a lawsuit against ChatGPT-maker OpenAI, accusing the artificial intelligence firm of being aware of the suspect's planned shooting but failing to alert authorities. The suit was filed Monday in the Supreme Court of British Columbia by the parents of 12-year-old Maya Gebala, who was one of the more than two dozen individuals injured in a shooting at the Tumbler Ridge Secondary School on Feb. 10. Eight people, including a teacher and five students, were killed. Gebala's lawyers pointed to the two ChatGPT accounts allegedly used by 18-year-old suspect Jesse Van Rootselaar, who they say described various situations involving gun violence to ChatGPT over the course of several days. ChatGPT's monitoring system flagged the posts, which were sent to employees for review, per the suit. The suit stated about 12 OpenAI employees identified the posts as posing an "imminent risk of serious harm to others" and recommended law enforcement be contacted. When the recommendations were sent to leadership at OpenAI, the defendants allegedly "rebuffed their employees request," to contact law enforcement and only banned the first account. The suspect allegedly opened a second ChatGPT account to continue planning gun violence scenarios, including a mass casualty event. "OpenAI harvested such harmful information and data in an indiscriminate manner and then supplied such information and data to ChatGPT," the suit stated. "OpenAI took no steps -- adequate or at all -- to avoid providing ChatGPT with such information and data, or impose any safeguards to prevent users from obtaining such information from ChatGPT." Gebala's family said she was shot three times, leaving her with a traumatic brain injury, permanent cognitive and physical disability, and other mental and physical injuries.
The suit went into further detail over ChatGPT's development, including the GPT-4o model, which incorporated a memory tool to tailor responses over time to a user, and sycophancy, which the company admitted made responses overly supportive when reversing the change. Lawyers alleged OpenAI's features were "intentionally designed to foster dependency" between the user and ChatGPT, which they said "assumed the role" of a mental health counselor or pseudo-therapist. Gebala's family is seeking financial damages for OpenAI's alleged negligence and failure to warn. OpenAI did not immediately respond to a request for comment. The lawsuit is the latest in a series of legal challenges for OpenAI, as courts weigh whether AI developers and their systems are responsible for certain mental health episodes.

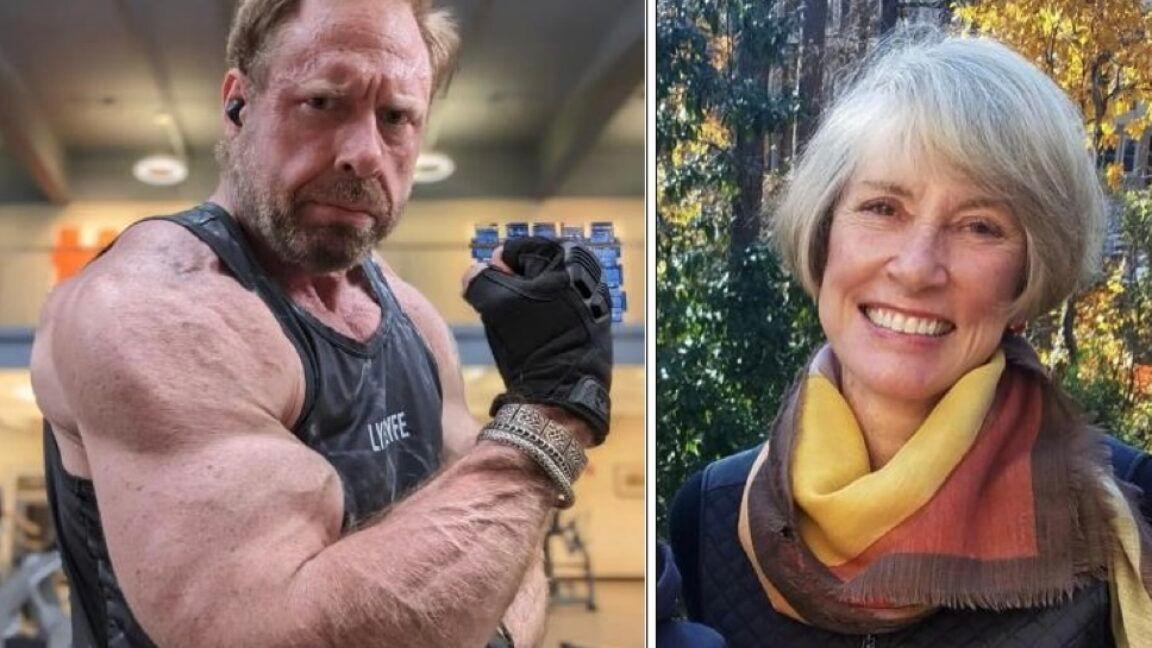

The family of Maya Gebala, critically injured in the Tumbler Ridge shooting, has filed a lawsuit against OpenAI. The suit alleges that 12 employees flagged the shooter's ChatGPT conversations as posing imminent risk but leadership failed to alert authorities. The February attack killed eight people, including five children, in one of Canada's deadliest mass shootings.

OpenAI Faces Lawsuit Over Tumbler Ridge Shooting

The family of a victim is suing OpenAI over the Tumbler Ridge shooting, which claimed eight lives on February 10 and ranks among Canada's deadliest mass shootings. Cia Edmonds filed the lawsuit on behalf of her daughter Maya Gebala, a 12-year-old who was shot three times while attempting to lock a library door to protect classmates [1]. Maya remains hospitalized with a catastrophic brain injury, permanent cognitive and physical disability, and right-sided hemiplegia after bullets struck her head and neck [2].

Source: Seattle Times

The civil lawsuit, filed in the Supreme Court of British Columbia, centers on allegations that OpenAI possessed specific knowledge of shooter Jesse Van Rootselaar planning an attack but failed to alert authorities [4]. The 18-year-old suspect killed her mother, her 11-year-old brother, five students, and one educator before dying from a self-inflicted gunshot wound [4].

ChatGPT Account Flagged Months Before Attack

According to the lawsuit, Jesse Van Rootselaar described "various scenarios involving gun violence" to the AI chatbot over several days in late spring or early summer 2025 [1]. An automated review system flagged these conversations, prompting manual review by OpenAI staff. Approximately 12 OpenAI employees identified the posts as "indicating an imminent risk of serious harm to others" and recommended that Canadian law enforcement be informed [5].

The lawsuit alleges that leadership "rebuffed" the request to contact authorities, instead choosing only to ban Van Rootselaar's initial ChatGPT account in June 2025 [1]. OpenAI defended its decision by stating the account did not meet its reporting threshold, which requires evidence of a credible or imminent plan for serious physical harm [1]. The company said it considered user privacy when making referrals to law enforcement and did not want to distress users by involving police [4].

Inadequate Safeguards Allowed Second Account

After the initial ban, Van Rootselaar created a second ChatGPT account to circumvent the restriction, a fact OpenAI only revealed after Canadian officials summoned the company to Ottawa in late February [4]. The lawsuit claims the suspect used this second account to "continue planning scenarios involving gun violence, including a mass casualty event like the Tumbler Ridge mass shooting" [3]. This breach highlights what critics describe as inadequate safeguards in OpenAI's detection systems to prevent banned users from re-accessing the platform.

Source: The Hill

The suit accuses OpenAI of rushing ChatGPT to market without conducting proper safety studies and implementing strong safeguards [3]. Lawyers argue the company's features were "intentionally designed to foster dependency" between users and the AI chatbot, which assumed the role of a mental health counselor or pseudo-therapist [5]. The lawsuit describes the shooter viewing ChatGPT as a "trusted confidante" [1].

Questions of Accountability and AI Safety

The legal action seeks undisclosed punitive damages, with the family's lawyers stating OpenAI's conduct "is reprehensible and morally repugnant" [2]. "The purpose of this lawsuit is to learn the whole truth about how and why the Tumbler Ridge mass shooting happened, to impose accountability, to seek redress for harms and losses, and to help prevent another mass-shooting atrocity in Canada," Rice Parsons Leoni & Elliott LLP stated [2].

Source: Futurism

British Columbia Premier David Eby emerged as a vocal critic, stating "OpenAI had the opportunity to notify authorities and potentially even to stop this tragedy from happening" [2]. Eby refused meetings with company leadership, demanding to speak directly with Sam Altman, OpenAI's CEO [2].

OpenAI Pledges Safety Improvements

Following intense pressure, Sam Altman met virtually with Canadian artificial intelligence minister Evan Solomon and Premier Eby in early March, pledging to strengthen protocols on notifying police over potentially harmful interactions [1]. Altman promised to apologize to the Tumbler Ridge community, though no public apology has yet materialized [3].

In an open letter to Canadian officials on February 26, OpenAI outlined several changes implemented in recent months, including enlisting mental health and behavioral experts to assess complex cases and making criteria for referral to police "more flexible" [1]. The company acknowledged it would have reported Van Rootselaar's account under the new guidelines and committed to strengthening detection systems to prevent attempts to evade safeguards [1]. OpenAI also pledged to establish a direct point of contact with Canadian law enforcement for quickly flagging cases with potential for real-world violence [1].

Canada's AI minister expressed skepticism, stating "we have not yet seen a detailed plan for how these commitments will be implemented in practice" [1]. Solomon ordered a government safety review of OpenAI's technology and asked the company to apply new safety standards retroactively to review previously flagged cases [2][3]. This lawsuit adds to mounting legal challenges facing OpenAI as courts examine whether AI developers bear responsibility for mental health episodes and violence linked to their systems [5].

Summarized by

Navi
