Intel Launches Gaudi 3 AI Accelerator: A Cost-Effective Alternative to NVIDIA's H100

3 Sources

[1]

Intel's new Gaudi 3 AI accelerator launched: cheaper than NVIDIA H100 AI GPU, but also slower

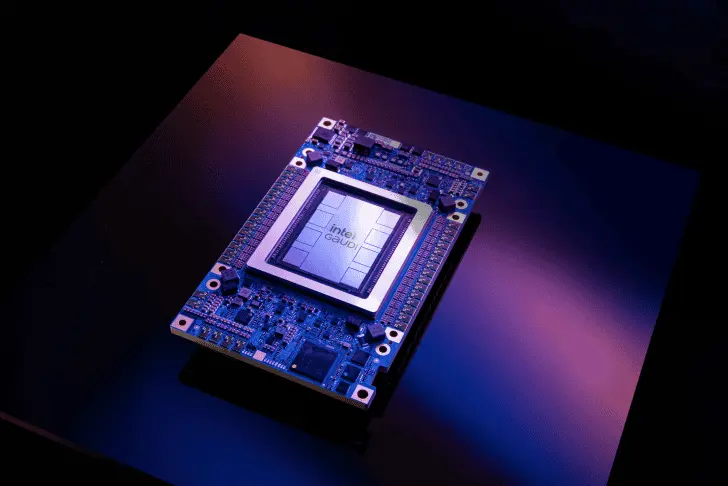

Intel has officially launched its Gaudi 3 AI accelerator. The new AI chip is slower than NVIDIA's dominant H100 and its new HBM3E-fueled H200 AI GPUs, so Intel is pitching Gaudi 3 on its lower price and lower total cost of ownership (TCO).

Inside, the new Intel Gaudi 3 AI accelerator features two chiplets with 64 tensor processor cores (TPCs, 256-byte-wide SIMD vector processors), eight matrix multiplication engines (MMEs, each a 256x256 MAC array with FP32 accumulators), and 96MB of on-die SRAM cache with 19.2TB/s of bandwidth. Gaudi 3 also features 24 x 200GbE networking interfaces and 14 media engines, which can handle H.265, H.264, and VP9 to support vision processing. The accelerator carries 128GB of HBM2E memory with up to 3.67TB/s of memory bandwidth.

Intel says Gaudi 3 offers up to 1856 BF16/FP8 matrix TFLOPS and up to 28.7 BF16 vector TFLOPS at around 600W TDP. Compared to the NVIDIA H100 AI GPU, Gaudi 3 is slightly slower in BF16 matrix performance (1856 vs 1979 TFLOPS), 2x slower in FP8 matrix performance (1856 vs 3958 TFLOPS), and massively slower in BF16 vector performance (28.7 vs 1979 TFLOPS), yikes.

Justin Hotard, Intel executive vice president and general manager of the Data Center and Artificial Intelligence Group, said: "Demand for AI is leading to a massive transformation in the datacenter, and the industry is asking for choice in hardware, software, and developer tools. With our launch of Xeon 6 with P-cores and Gaudi 3 AI accelerators, Intel is enabling an open ecosystem that allows our customers to implement all of their workloads with greater performance, efficiency, and security."
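To express the comparison as percentages, the short Python sketch below divides each quoted Gaudi 3 figure by the matching H100 figure. It uses only the on-paper peak numbers quoted above; the dictionary names and the `spec_ratios` helper are illustrative, and peak TFLOPS are not a prediction of real-world throughput.

```python
"""Compare the quoted peak throughput figures of Gaudi 3 and H100."""

# Peak TFLOPS as quoted in the article (on-paper vendor figures, not benchmarks).
GAUDI3_TFLOPS = {"bf16_matrix": 1856.0, "fp8_matrix": 1856.0, "bf16_vector": 28.7}
H100_TFLOPS = {"bf16_matrix": 1979.0, "fp8_matrix": 3958.0, "bf16_vector": 1979.0}


def spec_ratios(candidate: dict[str, float], baseline: dict[str, float]) -> dict[str, float]:
    """Return candidate/baseline for every metric present in both dicts."""
    return {name: candidate[name] / baseline[name] for name in candidate if name in baseline}


if __name__ == "__main__":
    for metric, ratio in spec_ratios(GAUDI3_TFLOPS, H100_TFLOPS).items():
        # Show the Gaudi 3 figure as a share of the H100 figure.
        print(f"{metric}: Gaudi 3 = {ratio:.1%} of H100")
```

On these numbers, Gaudi 3 reaches roughly 94% of the H100's BF16 matrix peak, about 47% of its FP8 matrix peak, and under 2% of the quoted BF16 vector figure.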
Intel highlights its Gaudi 3 AI accelerator: Specifically optimized for large-scale generative AI, Gaudi 3 boasts 64 Tensor processor cores (TPCs) and eight matrix multiplication engines (MMEs) to accelerate deep neural network computations. It includes 128GB of HBM2e memory for training and inference, and 24 x 200Gb Ethernet ports for scalable networking. Gaudi 3 also offers seamless compatibility with the PyTorch framework and advanced Hugging Face transformer and diffuser models. Intel recently announced a collaboration with IBM to deploy Intel Gaudi 3 AI accelerators as a service on IBM Cloud. Through this collaboration, Intel and IBM aim to lower the total cost of ownership to leverage and scale AI, while enhancing performance.

[2]

Intel launches Gaudi 3 accelerator for AI: Slower than H100 but also cheaper

Intel formally introduced its Gaudi 3 accelerator for AI workloads today. The new processors are slower than Nvidia's popular H100 and H200 GPUs for AI and HPC, so Intel is betting the success of Gaudi 3 on its lower price and lower total cost of ownership (TCO).

Intel's Gaudi 3 processor uses two chiplets that pack 64 tensor processor cores (TPCs, 256-byte-wide SIMD vector processors), eight matrix multiplication engines (MMEs, each a 256x256 MAC array with FP32 accumulators), and 96MB of on-die SRAM cache with 19.2 TB/s of bandwidth. Gaudi 3 also integrates 24 x 200 GbE networking interfaces and 14 media engines, the latter capable of handling H.265, H.264, JPEG, and VP9 to support vision processing. The processor is accompanied by 128GB of HBM2E memory in eight memory stacks offering 3.67 TB/s of bandwidth.

Gaudi 3 represents a major step up from Gaudi 2, which has 24 TPCs and two MMEs and carries 96GB of HBM2E memory. However, Intel appears to have simplified both the TPCs and the MMEs: Gaudi 3 supports only FP8 matrix operations plus BFloat16 matrix and vector operations (i.e., no more FP32, TF32, or FP16).

When it comes to performance, Intel says that Gaudi 3 can offer up to 1856 BF16/FP8 matrix TFLOPS as well as up to 28.7 BF16 vector TFLOPS at around 600W TDP. Compared to Nvidia's H100, at least on paper, Gaudi 3 offers slightly lower BF16 matrix performance (1,856 vs 1,979 TFLOPS), half the FP8 matrix performance (1,856 vs 3,958 TFLOPS), and significantly lower BF16 vector performance (28.7 vs 1,979 TFLOPS).

More important than the raw specs will be Gaudi 3's real-world performance. It needs to compete with AMD's Instinct MI300-series as well as Nvidia's H100 and B100/B200 processors, and how it fares remains to be seen, since a lot depends on software and other factors.
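The generational jump can be summarized as simple multipliers. The minimal Python sketch below uses only the unit counts and memory sizes quoted above (the names are illustrative). As the article notes, Gaudi 3's TPCs and MMEs support fewer data types, so these ratios describe resources, not a measured speedup.

```python
"""Express the Gaudi 2 -> Gaudi 3 resource increase as multipliers."""

# Unit counts and HBM capacity (GB) as quoted in the article.
GAUDI2_RESOURCES = {"tpcs": 24, "mmes": 2, "hbm_gb": 96}
GAUDI3_RESOURCES = {"tpcs": 64, "mmes": 8, "hbm_gb": 128}


def scaling_factors(old: dict[str, int], new: dict[str, int]) -> dict[str, float]:
    """Return new/old for every resource listed in both generations."""
    return {name: new[name] / old[name] for name in old if name in new}


if __name__ == "__main__":
    for name, factor in scaling_factors(GAUDI2_RESOURCES, GAUDI3_RESOURCES).items():
        print(f"{name}: {factor:.2f}x")
```

That works out to about 2.7x the TPCs, 4x the MMEs, and 1.33x the HBM capacity.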
For now, Intel has shown slides claiming that Gaudi 3 offers a significant price-performance advantage over Nvidia's H100. Earlier this year, Intel indicated that an accelerator kit based on eight Gaudi 3 processors on a baseboard will cost $125,000, which works out to around $15,625 per chip. By contrast, an Nvidia H100 card is currently available for $30,678, so Intel indeed plans to have a big price advantage over its competitor. Yet, with the potentially massive performance advantages offered by Blackwell-based B100/B200 GPUs, it remains to be seen whether the blue company will be able to maintain its advantage over its rival.

"Demand for AI is leading to a massive transformation in the datacenter, and the industry is asking for choice in hardware, software, and developer tools," said Justin Hotard, Intel executive vice president and general manager of the Data Center and Artificial Intelligence Group. "With our launch of Xeon 6 with P-cores and Gaudi 3 AI accelerators, Intel is enabling an open ecosystem that allows our customers to implement all of their workloads with greater performance, efficiency, and security."

Intel's Gaudi 3 AI accelerators will be available from IBM Cloud and Intel Tiber Developer Cloud. Also, systems based on Intel's Xeon 6 and Gaudi 3 will be generally available from Dell, HPE, and Supermicro in the fourth quarter, with systems from Dell and Supermicro shipping in October and machines from HPE shipping in December.
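The pricing arithmetic above can be checked with a few lines of Python. The sketch derives the per-accelerator price from the $125,000 eight-chip kit and, purely as an illustration, combines it with the quoted BF16 matrix peaks to get peak TFLOPS per $1,000. The constant and function names are illustrative, and the comparison is rough: the kit price covers a baseboard with eight accelerators, while the H100 figure is a single-card price.

```python
"""Rough price and peak-TFLOPS-per-dollar comparison from the article's figures."""

KIT_PRICE_USD = 125_000        # eight-accelerator Gaudi 3 baseboard kit (article)
CHIPS_PER_KIT = 8
H100_CARD_PRICE_USD = 30_678   # H100 card price cited in the article

GAUDI3_BF16_MATRIX_TFLOPS = 1856.0  # quoted peak
H100_BF16_MATRIX_TFLOPS = 1979.0    # quoted peak


def tflops_per_thousand_usd(peak_tflops: float, price_usd: float) -> float:
    """Peak TFLOPS delivered per $1,000 of hardware price."""
    return peak_tflops / (price_usd / 1000)


gaudi3_unit_price = KIT_PRICE_USD / CHIPS_PER_KIT       # 15625.0
price_ratio = H100_CARD_PRICE_USD / gaudi3_unit_price   # H100 costs ~1.96x as much

if __name__ == "__main__":
    g3 = tflops_per_thousand_usd(GAUDI3_BF16_MATRIX_TFLOPS, gaudi3_unit_price)
    h100 = tflops_per_thousand_usd(H100_BF16_MATRIX_TFLOPS, H100_CARD_PRICE_USD)
    print(f"Gaudi 3 unit price: ${gaudi3_unit_price:,.0f}")
    print(f"H100 / Gaudi 3 price ratio: {price_ratio:.2f}")
    print(f"Peak BF16 TFLOPS per $1k: Gaudi 3 {g3:.1f}, H100 {h100:.1f}")
```

On paper, this puts Gaudi 3 at roughly 119 peak BF16 matrix TFLOPS per $1,000 versus about 65 for the H100, which is the kind of gap Intel's price-performance slides lean on.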

[3]

Intel Announces General Availability of Gaudi 3 AI Accelerators In Q4: The Cost-Effective AI Solution

Intel today announced the general availability of its latest Gaudi 3 AI accelerators, which will start shipping next month. Intel's Gaudi lineup is well regarded in the AI industry for its cost-effective positioning. Today, Intel is announcing the full Gaudi 3 product stack, which includes the accelerator cards (HL-325L OAM-Compliant), the Universal Baseboard (HLB-325), and the PCIe CEM (HL-388 Add-In-Card).

The Intel Gaudi 3 PCIe CEM, detailed in today's announcement, brings up to 1835 TFLOPS of FP8 (peak) compute along with 128 GB of HBM2e memory, a 600W TDP, 8 matrix multiplication engines, 64 TPCs, and 22 x 200 GbE RDMA NICs, all in a dual-slot, full-height 10.5" form factor. The OAM solution is equipped with 96 MB of SRAM in two 48 MB SRAM stacks, with a total HBM bandwidth of 3.67 TB/s and a total on-die SRAM (L2) bandwidth of 19.2 TB/s.

Each matrix multiplication engine is fully configurable (not programmable) and comes with a 256 x 256 MAC array structure with FP32 accumulators, delivering 64K MACs/cycle for BF16 and FP8. The TPC (Tensor Processing Core) features a 256B-wide SIMD vector processor that is programmable in an enhanced C with TPC intrinsics, a VLIW design with 4 separate pipeline slots, and an integrated address generation unit, and it supports the main 1/2/4-byte datatypes (floating point and integer).

The universal baseboard will be equipped with four Gaudi 3 AI accelerators featuring 4 x 200 GbE interconnect links and 400 GbE through the QSFP-DD controller. Each OAM solution will have an x16 PCIe Gen5 link, offering up to 800 GB/s of scale-out and 1800 GB/s of scale-up bandwidth. The system itself will pack 512 GB/s of PCIe bandwidth. This solution is best suited for inferencing, fine-tuning, and small model training.
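The MME figures allow a back-of-the-envelope check on the quoted peaks. Assuming all eight MMEs run in parallel and each MAC counts as two floating-point operations (the usual convention for peak FLOPS), the 1856 TFLOPS and 1835 TFLOPS figures imply a matrix-engine clock of roughly 1.75 to 1.77 GHz. That clock is an inference from the article's numbers, not a specification Intel is quoted as publishing, and the function name below is illustrative.

```python
"""Back out the clock implied by the quoted MME peak throughput (an estimate)."""

MACS_PER_MME_PER_CYCLE = 256 * 256  # 256 x 256 MAC array = 65,536 ("64K") MACs/cycle
NUM_MMES = 8
FLOPS_PER_MAC = 2                   # assumption: multiply + accumulate = two operations


def implied_clock_ghz(peak_tflops: float,
                      num_mmes: int = NUM_MMES,
                      macs_per_cycle: int = MACS_PER_MME_PER_CYCLE,
                      flops_per_mac: int = FLOPS_PER_MAC) -> float:
    """Clock (GHz) at which the MMEs alone would reach peak_tflops."""
    flops_per_cycle = num_mmes * macs_per_cycle * flops_per_mac
    return peak_tflops * 1e12 / flops_per_cycle / 1e9


if __name__ == "__main__":
    for label, tflops in [("quoted Gaudi 3 peak", 1856.0), ("PCIe CEM peak", 1835.0)]:
        print(f"{label} ({tflops:.0f} TFLOPS): ~{implied_clock_ghz(tflops):.2f} GHz implied")
```

The slightly lower PCIe figure is consistent with that card running its matrix engines a little slower, though the article does not say so directly.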
In terms of performance, the Intel Gaudi 3 AI accelerator will offer up to 9% higher inference throughput on LLaMA 3 8B while delivering 80% better performance per dollar versus the H100. On LLaMA 70B, Gaudi 3 will offer 19% higher inference throughput and 2x the performance per dollar of the H100. The Intel Gaudi 3 reference server (HLS-3) node will come with two Intel Xeon host CPUs, such as the latest Xeon 6900P series, and feature 8 OAM cards, offering a total bandwidth of 67.2 Tb/s (scale-up) and 9.6 Tb/s (scale-out). The AI solution will be backed by the Gaudi software suite, which supports the most commonly used GenAI frameworks as well as FP16, BF16, and FP8 quantization. Intel is working with various partners on the Gaudi ecosystem, including Dell Technologies, HPE, and Supermicro as system providers and IBM, LUMEN, Infosys, Naver, and many others as software enablers.
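The HLS-3 bandwidth totals can be reproduced from the 24 x 200 GbE ports per accelerator if one assumes that each OAM dedicates 21 ports to scale-up links inside the node and 3 ports to scale-out, and that the totals count both directions. That 21/3 split is an assumption of this sketch (the article does not state it), as are the names below.

```python
"""Reproduce the HLS-3 node bandwidth totals under an assumed port split."""

PORT_SPEED_GBPS = 200   # each Gaudi 3 port is 200 GbE
OAMS_PER_NODE = 8       # HLS-3 node with 8 OAM cards
SCALE_UP_PORTS = 21     # assumption: ports per OAM used inside the node
SCALE_OUT_PORTS = 3     # assumption: ports per OAM used to leave the node


def node_bandwidth_tbps(ports_per_oam: int,
                        oams: int = OAMS_PER_NODE,
                        port_gbps: int = PORT_SPEED_GBPS,
                        bidirectional: bool = True) -> float:
    """Aggregate node bandwidth in Tb/s, optionally counting both directions."""
    directions = 2 if bidirectional else 1
    return ports_per_oam * oams * port_gbps * directions / 1000


# The assumed split must account for all 24 ports on each accelerator.
assert SCALE_UP_PORTS + SCALE_OUT_PORTS == 24

if __name__ == "__main__":
    print(f"scale-up:  {node_bandwidth_tbps(SCALE_UP_PORTS):.1f} Tb/s")
    print(f"scale-out: {node_bandwidth_tbps(SCALE_OUT_PORTS):.1f} Tb/s")
```

Both printed values match the 67.2 Tb/s and 9.6 Tb/s quoted for the node, which is consistent with, though not proof of, the assumed port split.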

Intel has introduced its new Gaudi 3 AI accelerator, positioning it as a more affordable option compared to NVIDIA's H100 GPU. While it offers lower performance, the Gaudi 3 aims to provide a cost-effective solution for AI workloads.

Intel Unveils Gaudi 3 AI Accelerator

Intel has officially launched its latest AI accelerator, the Gaudi 3, aimed at challenging NVIDIA's dominance in the AI hardware market. The Gaudi 3 is positioned as a more affordable alternative to NVIDIA's H100 GPU, offering a balance between performance and cost for AI workloads [1].

Performance and Specifications

The Gaudi 3 accelerator boasts impressive specifications, including 128GB of HBM2e memory and a TDP of around 600W. It is built on a 5nm process node and features 64 Tensor Processor Cores (TPCs) across two chiplets. Intel claims that the Gaudi 3 can deliver up to 1.6x higher performance than its predecessor, the Gaudi 2, for natural language processing workloads.

Comparison with NVIDIA H100

While the Gaudi 3 offers improved performance over its predecessor, it still falls short of NVIDIA's H100 GPU in terms of raw power. Intel acknowledges that the H100 is approximately 1.5x faster than the Gaudi 3 for certain AI workloads [1]. However, Intel's strategy focuses on providing a more cost-effective solution, with the Gaudi 3 priced significantly lower than the H100.

Cost-Effectiveness and Market Strategy

Intel is positioning the Gaudi 3 as a value proposition for companies looking to build AI infrastructure. The company claims that the total cost of ownership (TCO) for a Gaudi 3-based system could be up to 40% lower than an H100-based system over a three-year period [3]. This approach aims to attract customers who prioritize cost-efficiency over absolute peak performance.

Availability and Future Outlook

The Gaudi 3 AI accelerator is expected to be available in the fourth quarter of 2024 [3]. Intel's entry into the AI accelerator market with a competitive offering signals its commitment to challenging NVIDIA's dominance and providing alternatives to customers in the rapidly growing AI hardware sector.

Industry Impact and Competition

The launch of the Gaudi 3 accelerator is likely to intensify competition in the AI hardware market. As companies increasingly invest in AI infrastructure, the availability of cost-effective solutions like the Gaudi 3 could reshape market dynamics and give businesses more options for implementing AI technologies at scale.

References

Summarized by Navi

[1]

Related Stories

Intel Unveils Next-Generation AI Solutions: Xeon 6 CPUs and Gaudi 3 AI Accelerators

25 Sept 2024

Dell Pioneers Integration of Intel's Gaudi 3 AI Chips in PowerEdge Servers

17 Sept 2025•Technology

Intel Challenges AI Cloud Market with Gaudi 3-Powered Tiber AI Cloud and Inflection AI Partnership

08 Oct 2024•Technology