Novel Magnetic RAM Architecture Paves Way for AI in IoT Edge Devices

2 Sources

[1]

Magnetic RAM-based architecture could pave way for implementing neural networks on edge IoT devices

There are, without a doubt, two broad technological fields that have been developing at an increasingly fast pace over the past decade: artificial intelligence (AI) and the Internet of Things (IoT). By excelling at tasks such as data analysis, image recognition, and natural language processing, AI systems have become undeniably powerful tools in both academic and industry settings. Meanwhile, miniaturization and advances in electronics have made it possible to massively reduce the size of functional devices capable of connecting to the Internet. Engineers and researchers alike foresee a world where IoT devices are ubiquitous, forming the foundation of a highly interconnected world. However, bringing AI capabilities to IoT edge devices presents a significant challenge. Artificial neural networks (ANNs) -- one of the most important AI technologies -- require substantial computational resources, and IoT edge devices are inherently small, with limited power, processing speed, and circuit space. Developing ANNs that can efficiently learn, deploy, and operate on edge devices is a major hurdle. In response, Professor Takayuki Kawahara and Yuya Fujiwara from the Tokyo University of Science are working toward elegant solutions to this challenge. In their latest study, published in IEEE Access on October 08, 2024, they introduced a novel training algorithm for a special type of ANN called a binarized neural network (BNN), as well as an innovative implementation of this algorithm in a cutting-edge computing-in-memory (CiM) architecture suitable for IoT devices. "BNNs are ANNs that employ weights and activation values of only -1 and +1, and they can minimize the computing resources required by the network by reducing the smallest unit of information to just one bit," explains Kawahara. 
"However, although weights and activation values can be stored in a single bit during inference, weights and gradients are real numbers during learning, and most calculations performed during learning are real number calculations as well. For this reason, it has been difficult to provide learning capabilities to BNNs on the IoT edge side." To overcome this, the researchers developed a new training algorithm called ternarized gradient BNN (TGBNN), featuring three key innovations. First, they employed ternary gradients during training, while keeping weights and activations binary. Second, they enhanced the Straight Through Estimator (STE), improving the control of gradient backpropagation to ensure efficient learning. Third, they adopted a probabilistic approach for updating parameters by leveraging the behavior of MRAM cells. Afterwards, the research team implemented this novel TGBNN algorithm in a CiM architecture -- a modern design paradigm where calculations are performed directly in memory, rather than in a dedicated processor, to save circuit space and power. To realize this, they developed a completely new XNOR logic gate as the building block for a Magnetic Random Access Memory (MRAM) array. This gate uses a magnetic tunnel junction to store information in its magnetization state. To change the stored value of an individual MRAM cell, the researchers leveraged two different mechanisms. The first was spin-orbit torque -- the force that occurs when an electron spin current is injected into a material. The second was voltage-controlled magnetic anisotropy, which refers to the manipulation of the energy barrier that exists between different magnetic states in a material. Thanks to these methods, the size of the product-of-sum calculation circuit was reduced to half of that of conventional units. 
The team tested the performance of their proposed MRAM-based CiM system for BNNs using the MNIST handwriting dataset, which contains images of individual handwritten digits that ANNs have to recognize. "The results showed that our ternarized gradient BNN achieved an accuracy of over 88% using Error-Correcting Output Codes (ECOC)-based learning, while matching the accuracy of regular BNNs with the same structure and achieving faster convergence during training," notes Kawahara. "We believe our design will enable efficient BNNs on edge devices, preserving their ability to learn and adapt." This breakthrough could pave the way to powerful IoT devices capable of leveraging AI to a greater extent, with notable implications for many rapidly developing fields. For example, wearable health monitoring devices could become more efficient, smaller, and more reliable without requiring cloud connectivity at all times to function. Similarly, smart houses would be able to perform more complex tasks and operate in a more responsive way. Across these and other possible use cases, the proposed design could also reduce energy consumption, thus contributing to sustainability goals.

[2]

Towards implementing neural networks on edge IoT devices


Researchers from Tokyo University of Science develop a new training algorithm and computing-in-memory architecture using Magnetic RAM, potentially enabling efficient implementation of neural networks on IoT edge devices.

Bridging AI and IoT: The Challenge of Edge Computing

As artificial intelligence (AI) and the Internet of Things (IoT) continue to advance rapidly, researchers face a significant challenge: implementing AI capabilities, particularly artificial neural networks (ANNs), on small IoT edge devices with limited resources [1][2]. These devices typically have constraints in power, processing speed, and circuit space, making it difficult to run computationally intensive AI algorithms efficiently.

Innovative Solution: Ternarized Gradient Binarized Neural Network (TGBNN)

To address this challenge, Professor Takayuki Kawahara and Yuya Fujiwara from the Tokyo University of Science have developed a novel training algorithm called ternarized gradient binarized neural network (TGBNN) [1][2]. This algorithm builds upon binarized neural networks (BNNs), which use only -1 and +1 for weights and activation values, reducing the smallest unit of information to one bit.

The TGBNN algorithm introduces three key innovations:

- Employing ternary gradients during training while maintaining binary weights and activations

- Enhancing the Straight Through Estimator (STE) to improve gradient backpropagation control

- Adopting a probabilistic approach for parameter updates based on MRAM cell behavior
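
The three ideas above can be illustrated with a minimal Python sketch. This is not the researchers' algorithm: the article does not give their ternarization rule, their enhanced STE, or their update rule, so the code uses the classic clipped STE, and the `threshold`, `clip`, and `p_flip` parameters (and all function names) are assumptions chosen only for illustration.

```python
import random


def binarize(x):
    """Forward pass: map a real value to -1 or +1 (0 maps to +1)."""
    return 1 if x >= 0 else -1


def ste_grad(upstream, pre_activation, clip=1.0):
    """Classic straight-through estimator (not the enhanced variant):
    pass the gradient through the sign function unchanged, but zero it
    where |pre_activation| exceeds the clipping window (assumed value)."""
    return upstream if abs(pre_activation) <= clip else 0.0


def ternarize(g, threshold=0.05):
    """Quantize a real gradient to -1, 0, or +1 (threshold is an assumption)."""
    if g > threshold:
        return 1
    if g < -threshold:
        return -1
    return 0


def stochastic_update(weight, tern_grad, p_flip=0.1, rng=random):
    """Update one binary weight from a ternary gradient.

    Gradient descent moves the weight against the gradient, so the target
    value is -tern_grad. The switch only happens with probability p_flip,
    a stand-in for the probabilistic behavior of a memory cell (assumption).
    """
    if tern_grad == 0:
        return weight
    target = -tern_grad
    if weight != target and rng.random() < p_flip:
        return target
    return weight


weights = [1, -1, 1, -1]
grads = [0.30, -0.20, 0.01, 0.40]
tern = [ternarize(g) for g in grads]
updated = [stochastic_update(w, t, p_flip=1.0) for w, t in zip(weights, tern)]
print(tern, updated)
```

With `p_flip=1.0` every requested switch is applied, which makes the example deterministic; smaller values apply only a random subset of the switches on each step.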

Cutting-Edge Computing-in-Memory (CiM) Architecture

The researchers implemented the TGBNN algorithm in a novel computing-in-memory (CiM) architecture, designed specifically for IoT devices [1][2]. This approach performs calculations directly in memory, saving circuit space and power. The team developed a new XNOR logic gate as the building block for a Magnetic Random Access Memory (MRAM) array, using a magnetic tunnel junction to store information.

Advanced MRAM Cell Manipulation

To change the stored value of individual MRAM cells, the researchers utilized two mechanisms [1][2]:

- Spin-orbit torque: The force generated when an electron spin current is injected into a material

- Voltage-controlled magnetic anisotropy: Manipulation of the energy barrier between different magnetic states in a material

These methods allowed the team to reduce the size of the product-of-sum calculation circuit to half that of conventional units.
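
Why an XNOR gate is a natural building block for a BNN can be shown in software. When weights and activations are restricted to -1 and +1, multiplying two values is the same as checking whether their signs agree, so a multiply-and-sum over a vector reduces to an XNOR followed by a bit count. The sketch below demonstrates that identity only; it says nothing about how the MRAM circuit itself is wired, and the bit encoding (bit set means +1) and function names are assumptions.

```python
def encode(values):
    """Pack a list of -1/+1 values into an int; bit i is set when values[i] == +1."""
    word = 0
    for i, v in enumerate(values):
        if v == 1:
            word |= 1 << i
    return word


def xnor_dot(a_bits, b_bits, n):
    """Dot product of two length-n vectors of -1/+1 values from their bit encodings."""
    mask = (1 << n) - 1
    agree = ~(a_bits ^ b_bits) & mask  # XNOR: 1 wherever the two signs match
    matches = bin(agree).count("1")    # population count
    # Each match contributes +1 and each mismatch -1 to the sum.
    return 2 * matches - n


a = [1, -1, 1, 1]
b = [1, 1, -1, 1]
reference = sum(x * y for x, y in zip(a, b))
print(xnor_dot(encode(a), encode(b), len(a)), reference)
```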

Promising Performance Results

The researchers tested their MRAM-based CiM system for BNNs using the MNIST handwriting dataset [1][2]. According to the researchers, the system:

- Achieved over 88% accuracy using Error-Correcting Output Codes (ECOC)-based learning

- Matched the accuracy of regular BNNs with the same structure

- Demonstrated faster convergence during training
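
For readers unfamiliar with ECOC, the general idea is that each class is assigned a binary codeword, the network produces one binary output per code bit, and the prediction is the class whose codeword is closest to those outputs. The sketch below shows only that decoding step. The three-class, six-bit codebook is hypothetical and is not the code used in the study.

```python
def hamming(a, b):
    """Number of positions at which two equal-length sequences differ."""
    return sum(x != y for x, y in zip(a, b))


def ecoc_decode(outputs, codebook):
    """Return the class label whose codeword is nearest to the binary outputs."""
    return min(codebook, key=lambda label: hamming(outputs, codebook[label]))


# Hypothetical codebook: any two codewords differ in 4 of 6 positions,
# so a single wrong output bit still decodes to the right class.
CODEBOOK = {
    0: [1, 1, 1, -1, -1, -1],
    1: [-1, -1, 1, 1, 1, -1],
    2: [1, -1, -1, 1, -1, 1],
}

noisy = [-1, -1, 1, 1, -1, -1]  # codeword for class 1 with one bit flipped
print(ecoc_decode(noisy, CODEBOOK))
```

Because the outputs are themselves -1/+1 values, this decoding fits a binarized network without requiring real-valued output scores.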

Implications for IoT and AI Integration

This breakthrough could lead to more powerful IoT devices with enhanced AI capabilities [1][2]. Potential applications include:

- Wearable health monitoring devices: Improved efficiency, smaller size, and reliability without constant cloud connectivity

- Smart homes: More complex tasks and responsive operations

- Energy efficiency: Reduced power consumption across various use cases, contributing to sustainability goals

The innovative MRAM-based architecture and TGBNN algorithm represent a significant step towards implementing efficient neural networks on edge IoT devices, potentially revolutionizing the integration of AI and IoT technologies.

Summarized by Navi

[1]

[2]

Related Stories

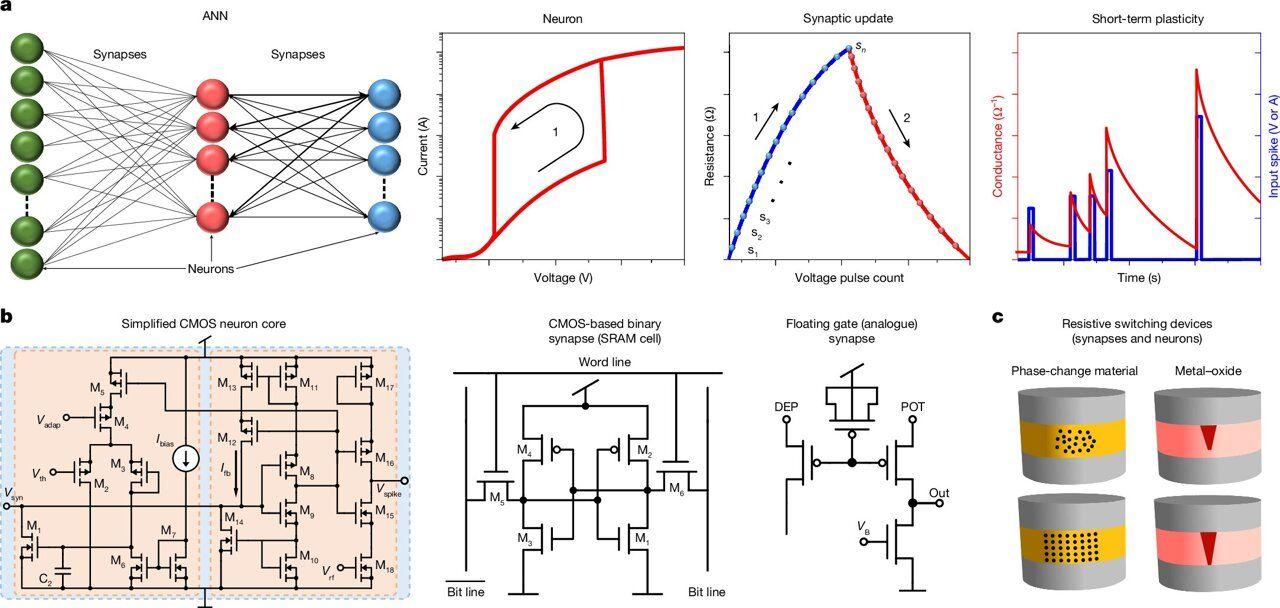

Novel Solar Cell-Based Device Revolutionizes Edge AI Processing with Human-Like Synaptic Behavior

26 Nov 2024•Science and Research

Breakthrough in Neuromorphic Computing: Single Silicon Transistor Mimics Neuron and Synapse

29 Mar 2025•Technology

Breakthrough in AI Memory Technology: ECRAM's Hidden Mechanisms Unveiled

26 Apr 2025•Science and Research

Recent Highlights

1

Google releases Gemma 4 with Apache 2.0 license, enabling unrestricted local AI on devices

Technology

2

AI Models Lie, Cheat, and Defy Human Instructions to Protect Other AI Models From Deletion

Science and Research

3

Anthropic discovers emotion-like patterns in Claude that actively shape AI behavior and decisions

Science and Research