NVIDIA's Blackwell DGX B200 AI System Arrives at OpenAI, Promising Unprecedented Performance Leap

3 Sources

[1]

OpenAI gets one of the first engineering builds of NVIDIA's new Blackwell DGX B200 AI system

OpenAI has just received one of the first engineering builds of the NVIDIA DGX B200 AI server, posting a picture of its new delivery on X. The NVIDIA DGX B200 is a unified AI platform for training, fine-tuning, and inference using NVIDIA's new Blackwell B200 AI GPUs. Each DGX B200 system has eight B200 AI GPUs with up to 1.4TB of HBM3E memory and up to 64TB/sec of memory bandwidth. According to NVIDIA, the DGX B200 delivers 72 petaFLOPS of training performance and 144 petaFLOPS of inference performance. OpenAI CEO Sam Altman is well aware of the advancements in NVIDIA's new Blackwell GPU architecture, recently saying: "Blackwell offers massive performance leaps, and will accelerate our ability to deliver leading-edge models. We're excited to continue working with NVIDIA to enhance AI compute."

[2]

NVIDIA Delivers "One of The First" Blackwell DGX B200 AI System To OpenAI

OpenAI is set to use NVIDIA's Blackwell B200 data center GPUs for its AI training through the latest DGX B200 platform.

Demand for the Blackwell-based B200 is surging. Several major companies have already started placing orders for the B200 GPUs, which are the fastest data center GPUs NVIDIA has ever produced. NVIDIA had said that OpenAI was expected to use the B200, and the company now appears set on expanding its AI compute with it. OpenAI's official X account has posted a picture of its team with one of the first engineering samples of the DGX B200. The platform has arrived at the company's office, which means OpenAI is set to test and train its AI models on the B200.

The DGX B200 is a unified AI platform for training, fine-tuning, and inference using the upcoming Blackwell B200 GPUs. Each DGX B200 uses eight B200 GPUs to deliver up to 1.4 TB of HBM3E GPU memory with a memory bandwidth of up to 64 TB/s. According to NVIDIA, the DGX B200 offers up to 72 petaFLOPS of training performance and 144 petaFLOPS of inference performance.

OpenAI has long shown interest in Blackwell GPUs, and its CEO, Sam Altman, has spoken about using them to train the company's models: "Blackwell offers massive performance leaps, and will accelerate our ability to deliver leading-edge models. We're excited to continue working with NVIDIA to enhance AI compute."

OpenAI is unlikely to miss out on Blackwell, since several major companies have already decided to train their AI models with these GPUs. These include Amazon, Google, Meta, Microsoft, Tesla, xAI, and Dell Technologies. We previously reported that xAI plans to use 50,000 B200 GPUs in addition to its currently deployed 100,000 H100 GPUs. Foxconn has also announced plans to build the fastest supercomputer in Taiwan using B200 GPUs.

With Blackwell, OpenAI should be able to train its AI models faster than on NVIDIA Hopper GPUs and with much better power efficiency. According to NVIDIA, the DGX B200 offers 3x the training performance and 15x the inference performance of previous generations and can handle LLMs, chatbots, and recommender systems.

[3]

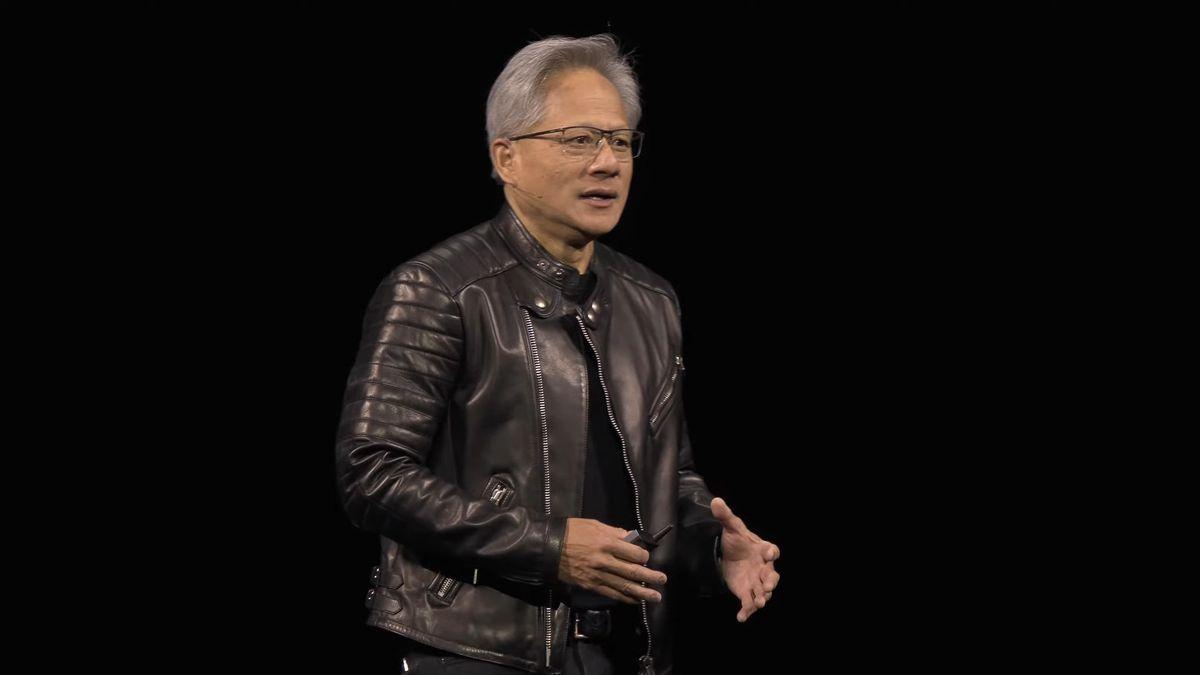

NVIDIA ships its much-awaited Blackwell AI Chips to OpenAI, Microsoft

On October 8, OpenAI announced via X that its team had received one of the first engineering builds of NVIDIA's DGX B200. These new builds promise three times faster training speeds and fifteen times greater inference performance than previous models.

Contrary to tradition, this time the technical team, rather than the founders, received the first system, with Sam Altman notably absent. When OpenAI was newly founded as a research non-profit, Jensen Huang, NVIDIA's CEO, personally delivered the first GPU chip to Elon Musk, one of the founders. Earlier this year, Huang delivered the first NVIDIA DGX H200 to OpenAI's founders, Sam Altman and Greg Brockman. With senior leaders such as Mira Murati recently leaving OpenAI, many see this as the start of a new era.

Similarly, Microsoft shared that its Azure platform is the first cloud to employ NVIDIA's Blackwell system, featuring AI servers powered by the GB200. "Our long-standing partnership with NVIDIA and deep innovation continues to lead the industry, powering the most sophisticated AI workloads," said Satya Nadella, Microsoft's CEO.

Hints about these developments emerged earlier this year, with NVIDIA reporting a record $22.6 billion in data centre revenue, marking a 23% sequential increase and a staggering 427% year-on-year growth. The growth was driven by continued strong demand for the NVIDIA Hopper GPU computing platform. During the company's earnings call, Huang mentioned that "after Blackwell, there's another chip. We're on a one-year rhythm."

Microsoft was one of the early investors in OpenAI, and it had a significant influence over the organisation. However, according to an exclusive report by The Information, OpenAI is now exploring avenues to partner with competitors like Oracle to meet its growing demand for computing power. This move could help OpenAI gain control over its data centre plans and maintain an edge over Anthropic and xAI, which are not tied to a single cloud provider. At the same time, Microsoft is actively seeking to reduce its dependency on OpenAI's technologies, reflecting a shift in the dynamics between the two companies following OpenAI's recent funding round.

OpenAI receives one of the first engineering builds of NVIDIA's DGX B200 AI system, featuring the new Blackwell B200 GPUs. This development marks a significant advancement in AI computing capabilities, with potential implications for AI model training and inference.

NVIDIA's Next-Gen AI Hardware Reaches OpenAI

OpenAI, a leading artificial intelligence research laboratory, has received one of the first engineering builds of NVIDIA's DGX B200 AI system. This development marks a significant milestone in the advancement of AI computing capabilities [1].

Technical Specifications and Performance

The DGX B200 is a unified AI platform designed for training, fine-tuning, and inference using NVIDIA's new Blackwell B200 AI GPUs. Each system boasts:

- 8 Blackwell B200 AI GPUs

- Up to 1.4TB of HBM3E memory

- Up to 64TB/sec of memory bandwidth

- 72 petaFLOPS of training performance

- 144 petaFLOPS of inference performance
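As a rough illustration, the system totals listed above can be divided across the eight GPUs to get per-GPU figures. This is a minimal sketch, not an official NVIDIA breakdown: it assumes the quoted "up to" totals are split evenly, that 1 TB is 1000 GB, and the constant and function names are made up for this example.

```python
# System-level figures quoted above for a single DGX B200 (rounded "up to" values).
GPU_COUNT = 8
MEMORY_TB = 1.4
BANDWIDTH_TB_PER_S = 64
TRAINING_PFLOPS = 72
INFERENCE_PFLOPS = 144


def per_gpu(system_total: float, gpu_count: int = GPU_COUNT) -> float:
    """Split a system-wide figure evenly across the GPUs (an assumption)."""
    return system_total / gpu_count


# Assumes decimal units: 1 TB = 1000 GB.
memory_gb_per_gpu = per_gpu(MEMORY_TB) * 1000
bandwidth_tb_s_per_gpu = per_gpu(BANDWIDTH_TB_PER_S)
training_pflops_per_gpu = per_gpu(TRAINING_PFLOPS)
inference_pflops_per_gpu = per_gpu(INFERENCE_PFLOPS)

# Ratio of the quoted inference figure to the quoted training figure.
inference_to_training = INFERENCE_PFLOPS / TRAINING_PFLOPS

print(f"Memory per GPU:      ~{memory_gb_per_gpu:.0f} GB")
print(f"Bandwidth per GPU:   ~{bandwidth_tb_s_per_gpu:.0f} TB/s")
print(f"Training per GPU:    ~{training_pflops_per_gpu:.0f} petaFLOPS")
print(f"Inference per GPU:   ~{inference_pflops_per_gpu:.0f} petaFLOPS")
print(f"Inference/training:  {inference_to_training:.1f}x")
```

Under these assumptions, each B200 accounts for roughly 175 GB of memory, 8 TB/s of bandwidth, and 9 and 18 petaFLOPS of training and inference performance respectively.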

Compared to previous generations, the DGX B200 offers 3x the training performance and 15x the inference performance, making it particularly suitable for handling large language models, chatbots, and recommender systems [2].

Industry Impact and Adoption

The arrival of the Blackwell architecture has generated significant interest across the tech industry. Several major companies, including Amazon, Google, Meta, Microsoft, Tesla, and xAI, have already placed orders for the B200 GPUs. Notably, xAI is planning to use 50,000 B200 GPUs in addition to its currently deployed 100,000 H100 GPUs [2].

OpenAI's CEO, Sam Altman, expressed enthusiasm about the new technology, stating, "Blackwell offers massive performance leaps, and will accelerate our ability to deliver leading-edge models. We're excited to continue working with NVIDIA to enhance AI compute" [1].

Changing Dynamics in the AI Industry

The delivery of the DGX B200 to OpenAI comes amidst shifting dynamics in the AI industry:

- Microsoft, an early investor in OpenAI, announced that its Azure platform is the first cloud to employ NVIDIA's Blackwell system [3].

- OpenAI is reportedly exploring partnerships with competitors like Oracle to diversify its computing resources and reduce dependency on a single cloud provider [3].

- NVIDIA reported a record $22.6 billion in data center revenue, marking a 427% year-on-year growth, driven by strong demand for their GPU computing platforms [3].
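To put the revenue growth figure in context, the earlier baseline it implies can be back-calculated. This is a minimal sketch using only the numbers quoted in this article: the $22.6 billion total, the 427% year-on-year growth, and the 23% sequential increase reported in source [3]. The function name is hypothetical, and the results are approximations derived from rounded percentages, not reported figures.

```python
# Figures quoted in the article (billions of US dollars and growth rates).
DATA_CENTER_REVENUE_B = 22.6
YOY_GROWTH = 4.27         # 427% year-on-year growth
SEQUENTIAL_GROWTH = 0.23  # 23% quarter-on-quarter growth, per source [3]


def implied_baseline(current: float, growth: float) -> float:
    """Back out the earlier value from: current = baseline * (1 + growth)."""
    return current / (1 + growth)


year_ago_revenue_b = implied_baseline(DATA_CENTER_REVENUE_B, YOY_GROWTH)
prior_quarter_revenue_b = implied_baseline(DATA_CENTER_REVENUE_B, SEQUENTIAL_GROWTH)

print(f"Implied year-ago quarter: ~${year_ago_revenue_b:.1f}B")
print(f"Implied prior quarter:    ~${prior_quarter_revenue_b:.1f}B")
```

On these inputs, the year-ago quarter works out to roughly $4.3 billion and the prior quarter to roughly $18.4 billion, which shows that a 427% increase means revenue grew to more than five times its earlier level.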

Future Implications

The introduction of NVIDIA's Blackwell architecture and its adoption by leading AI companies like OpenAI signals a new era in AI computing. With significantly improved performance and energy efficiency, these advancements are expected to accelerate the development of more sophisticated AI models and applications across various industries.

Summarized by Navi
