PixVideo AI video app ad banned for implying users could remove women's clothing without consent

2 Sources

[1]

Ad for AI video app which said it could 'remove anything' banned

An advert for a video and image editing tool that implied viewers could digitally remove a woman's clothing has been banned by the UK advertising regulator. The YouTube ad for PixVideo - AI Video Maker, seen in January, showed a "before" and "after" image of a young woman, with red scribble overlaid on her midriff in the former, and parts of her bare skin exposed in the latter. Text across the bottom of the picture stated: "Erase anything" followed by a heart-eyes emoji. Eight people complained to the Advertising Standards Authority (ASA) that the ad sexualised and objectified women, and was irresponsible, offensive and harmful. It is not clear whether the image in the ad is of a real person or is itself AI-generated, with the ASA telling the BBC that making such an assessment had not been part of its investigation. The regulator said that PixVideo did not permit its users to remove clothing from digital images to create sexually explicit content, but that viewers may have got the impression that it did. "Because the ad implied that viewers could use an app to remove a woman's clothing, we considered it condoned digitally altering and exposing women's bodies without their consent," the agency said in a statement. It added that the ad was "irresponsible, included a harmful gender stereotype and was likely to cause serious offence". Saeta Tech, which owns PixVideo, said it understood why the ad was likely to cause offence, but blamed its presentation and messaging, rather than the intended use of its product. It said it prohibited the creation of nude or sexually explicit content and had automated detection and blocking tools to prevent such imagery from being generated. The company has agreed not to show the ad again and has paused all advertising while it carries out an internal review. The issue of apps that "declothe" women and girls without their consent hit the headlines in January, when Elon Musk's chatbot Grok was used to flood X with sexualised images.
After a global backlash, Musk subsequently blocked Grok from generating such images in jurisdictions where it is illegal, but X still faces investigations and lawsuits around the world. The UK government announced in December that it would make it illegal to create and supply AI tools letting users edit images to seemingly remove someone's clothing. The new offences will build on existing rules around sexually explicit deepfakes and intimate image abuse.

[2]

Ad for AI editing app which said it could 'erase anything' banned for sexualising women

The advertising regulator found the advert condoned digitally altering and exposing women's bodies without their consent. An advert for an AI video editing tool which said it could "erase anything" - implying users could remove a woman's clothing - has been banned. The advertising regulator found the YouTube ad for PixVideo - AI Video Maker, seen in January, showing "before" and "after" images of a young woman, the first with a red scribble over her midriff and the second revealing her bare skin, was offensive. Text across the bottom of the image said it could "erase anything", followed by a heart-eye emoji. The Advertising Standards Authority (ASA) ruled the advert was "irresponsible, included a harmful gender stereotype and was likely to cause serious offence". The decision came after eight people complained to the ASA that the ad sexualised and objectified women, and was irresponsible, offensive and harmful. Saeta Tech Ltd, trading as PixVideo - AI Video Maker, said it understood why the ad was considered likely to cause serious offence, but the concerns related to the advert's presentation and messaging rather than the intended or permitted use of the product. The company said its terms prohibited the creation of nude or sexually explicit content, and the app did not support, and was not designed to enable, the removal of clothing or the creation of nude imagery. It added it had automated AI-based detection and blocking to prevent exposed or explicit imagery from being generated. The tech firm said it had removed the ad and voluntarily suspended all advertising to carry out a "comprehensive" internal audit and fix the marketing. The ASA acknowledged that the app did not permit users to create nude or sexually explicit content, but found the ad reduced the woman to a sexual object.
It said: "Furthermore, because the ad implied that viewers could use an app to remove a woman's clothing, we considered it condoned digitally altering and exposing women's bodies without their consent. "We welcomed Saeta Techs' willingness to remove the ad. However, for the reasons above, we considered that the ad was irresponsible, included a harmful gender stereotype and was likely to cause serious offence." The ASA ruled that the ad must not appear again. "We told PixVideo - AI Video Maker to ensure that their ads were socially responsible and did not cause serious or widespread offence, including by featuring a harmful gender stereotype by objectifying and sexualising women," the ASA added.


The UK's Advertising Standards Authority banned a YouTube ad for PixVideo - AI Video Maker after eight complaints alleged it sexualised women. The ad showed before-and-after images suggesting the AI video app could digitally remove clothing, with text reading "Erase anything." The regulator ruled the banned ad promoted harmful gender stereotypes and condoned altering women's bodies without consent, despite PixVideo's claims it prohibits such content.

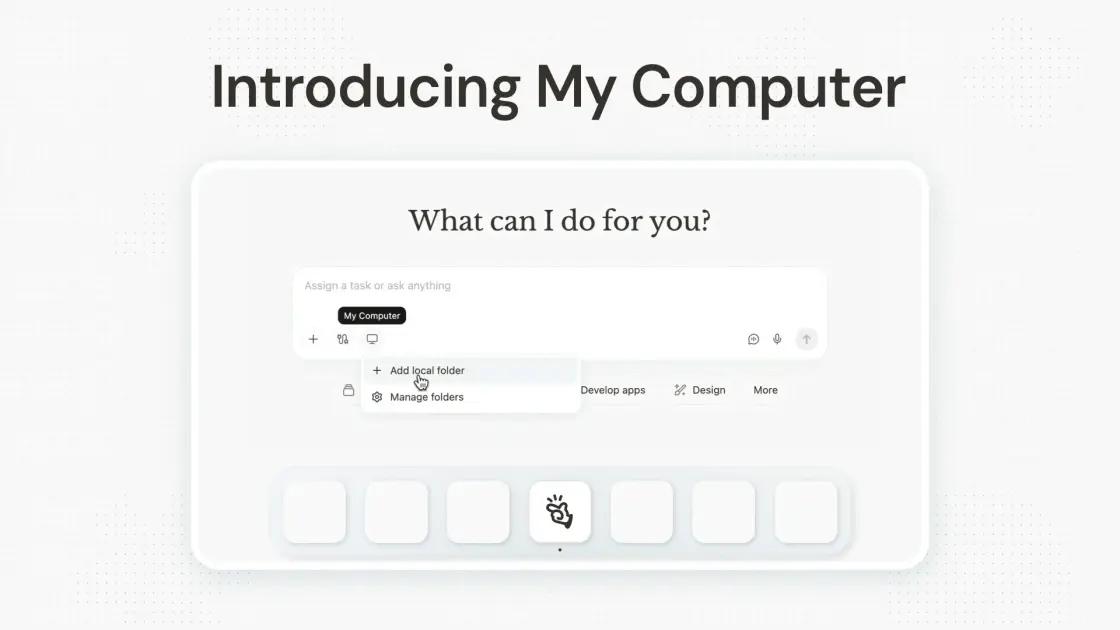

PixVideo Faces Ban Over Controversial AI Video App Advertisement

The Advertising Standards Authority has banned a YouTube advertisement for PixVideo - AI Video Maker after ruling that it sexualised and objectified women. The banned ad, which appeared in January, displayed before-and-after images of a young woman, with a red scribble over her midriff in the first frame and exposed bare skin in the second [1]. Text across the bottom of the ad read "Erase anything" followed by a heart-eyes emoji, creating the impression that the AI video app could digitally remove women's clothing [2].

Source: Sky News

Eight individuals filed complaints with the regulator, arguing the advertisement was irresponsible, offensive, and harmful. The Advertising Standards Authority upheld these concerns, determining that the ad reduced the woman to a sexual object [2]. The regulator's statement added: "Because the ad implied that viewers could use an app to remove a woman's clothing, we considered it condoned digitally altering and exposing women's bodies without their consent" [1].

Saeta Tech Ltd Responds to Harmful Gender Stereotypes Ruling

Saeta Tech Ltd, the company operating PixVideo, acknowledged the advertisement was likely to cause serious offense but attributed the issue to presentation and messaging rather than the product's intended functionality. The company maintains that its terms of service explicitly prohibit the creation of nude or sexually explicit content, and that the app employs automated AI-based detection and blocking systems to prevent exposed or explicit imagery from being generated [2].

Despite these safeguards, the Advertising Standards Authority concluded the ad was "irresponsible, included a harmful gender stereotype and was likely to cause serious offence" [1]. Saeta Tech has agreed not to show the ad again and has voluntarily suspended all advertising campaigns while conducting a comprehensive internal audit to address its marketing practices [2].

Growing Concerns Around AI-Powered Declothing Tools and UK Legislation

The PixVideo controversy emerges amid broader concerns about AI-powered declothing tools that digitally manipulate images to remove clothing without consent. The issue gained prominence in January when Elon Musk's chatbot Grok was exploited to generate sexualized images on X, formerly Twitter, triggering global backlash [1]. Following the incident, Musk blocked Grok from creating such images in jurisdictions where it is illegal, though X continues to face investigations and lawsuits worldwide.

In response to these emerging threats, the UK government announced in December that it would criminalize the creation and supply of AI tools that enable users to edit images to seemingly remove someone's clothing. These new offenses will expand existing regulations around sexually explicit deepfakes and intimate image abuse [1]. The regulatory action against PixVideo signals heightened scrutiny of how AI video editing tools are marketed, particularly when advertising suggests capabilities for sexualising and objectifying women. Industry observers expect stricter enforcement as governments worldwide grapple with the ethical implications of generative AI technologies and their potential for misuse in creating non-consensual intimate imagery.

Summarized by

Navi

