AI-Generated Passwords Are Fundamentally Weak, Cybersecurity Experts Warn

4 Sources

[1]

LLM-generated passwords 'fundamentally weak,' experts say

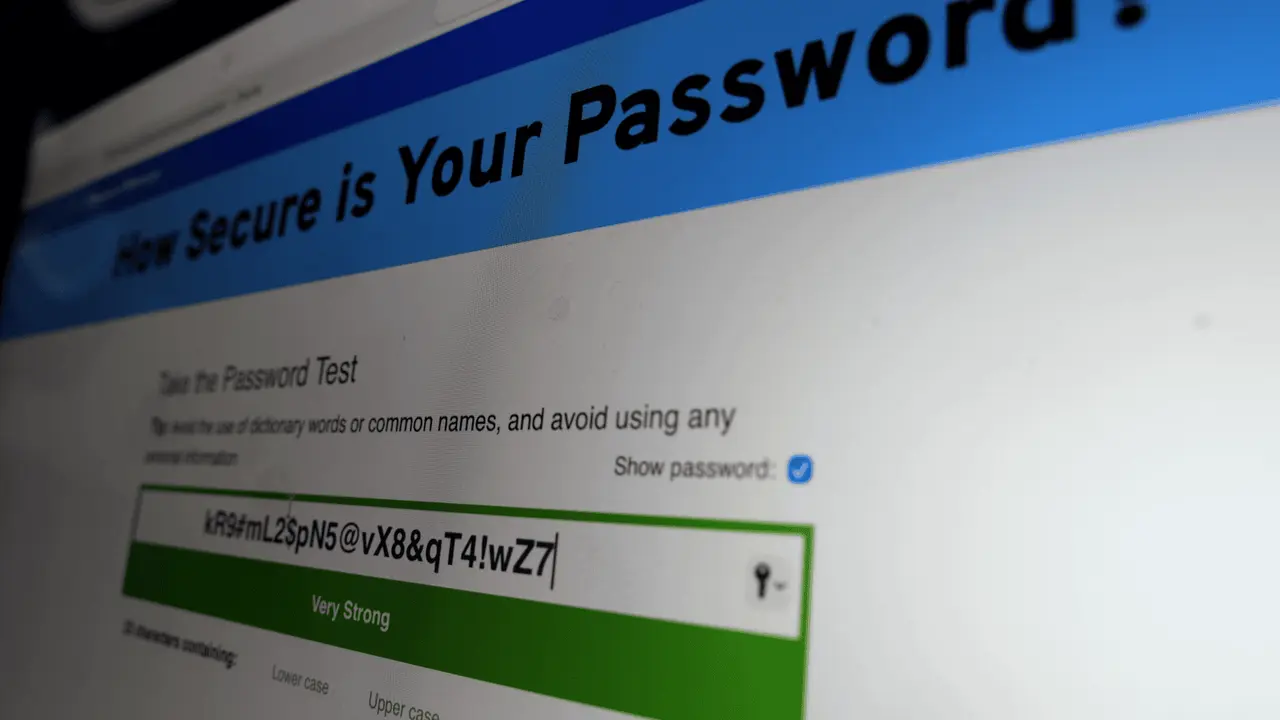

Seemingly complex strings are actually highly predictable, crackable within hours
Generative AI tools are surprisingly poor at suggesting strong passwords, experts say. AI security company Irregular looked at Claude, ChatGPT, and Gemini, and found all three GenAI tools put forward seemingly strong passwords that were, in fact, easily guessable. Prompting each of them to generate 16-character passwords featuring special characters, numbers, and letters in different cases, produced what appeared to be complex passphrases. When submitted to various online password strength checkers, they returned strong results. Some said they would take centuries for standard PCs to crack. The online password checkers passed these as strong options because they are not aware of the common patterns. In reality, the time it would take to crack them is much less than it would otherwise seem. Irregular found that all three AI chatbots produced passwords with common patterns, and if hackers understood them, they could use that knowledge to inform their brute-force strategies.
The researchers took to Claude, running the Opus 4.6 model, and prompted it 50 times, each in separate conversations and windows, to generate a password. Of the 50 returned, only 30 were unique (20 duplicates, 18 of which were the exact same string), and the vast majority started and ended with the same characters. Irregular also said there were no repeating characters in any of the 50 passwords, indicating they were not truly random. Tests involving OpenAI's GPT-5.2 and Google's Gemini 3 Flash also revealed consistencies among all the returned passwords, especially at the beginning of the strings. The same results were seen when prompting Google's Nano Banana Pro image generation model. Irregular gave it the same prompt, but to return a random password written on a Post-It note, and found the same Gemini password patterns in the results.
The Register repeated the tests using Gemini 3 Pro, which returns three options (high complexity, symbol-heavy, and randomized alphanumeric), and the first two generally followed similar patterns, while option three appeared more random. Notably, Gemini 3 Pro returned passwords along with a security warning, suggesting the passwords should not be used for sensitive accounts, given that they were requested in a chat interface. It also offered to generate passphrases instead, which it claimed are easier to remember but just as secure, and recommended users opt for a third-party password manager such as 1Password, Bitwarden, or the iOS/Android native managers for mobile devices. Irregular estimated the entropy of the LLM-generated passwords using the Shannon entropy formula and by understanding the probabilities of where characters are likely to appear, based on the patterns displayed by the 50-password outputs. The team used two methods of estimating entropy, character statistics and log probabilities. They found that 16-character entropies of LLM-generated passwords were around 27 bits and 20 bits respectively. For a truly random password, the character statistics method expects an entropy of 98 bits, while the method involving the log probabilities of the LLM itself expects an entropy of 120 bits. In real terms, this would mean that LLM-generated passwords could feasibly be brute-forced in a few hours, even on a decades-old computer, Irregular claimed. Knowing the patterns also reveals how many times LLMs are used to create passwords in open source projects. The researchers showed that by searching common character sequences across GitHub and the wider web, queries return test code, setup instructions, technical documentation, and more. Ultimately, this finding may usher in a new era of password brute-forcing, Irregular said. 
It also cited previous comments made by Dario Amodei, CEO at Anthropic, who said last year that AI will likely be writing the majority of all code, and if that's true, then the passwords it generates won't be as secure as expected. "People and coding agents should not rely on LLMs to generate passwords," said Irregular. "Passwords generated through direct LLM output are fundamentally weak, and this is unfixable by prompting or temperature adjustments: LLMs are optimized to produce predictable, plausible outputs, which is incompatible with secure password generation." The team also said that developers should review any passwords that were generated using LLMs and rotate them accordingly. It added that the "gap between capability and behavior likely won't be unique to passwords," and the industry should be aware of that as AI-assisted development and vibe coding continues to gather pace. ®

[2]

AI password generators promise complexity but produce hidden repetition

Duplicate passwords from AI systems undermine claims of randomness
* AI-generated passwords follow patterns hackers can study
* Surface complexity hides statistical predictability beneath
* Entropy gaps in AI passwords expose structural weaknesses in AI logins
Large language models (LLMs) can produce passwords that look complex, yet recent testing suggests those strings are far from random. A study by Irregular examined password outputs from AI systems such as Claude, ChatGPT, and Gemini, asking each to generate 16-character passwords with symbols, numbers, and mixed-case letters. At first glance, the results appeared strong and passed common online strength tests, with some checkers estimating that cracking them would take centuries, but a closer look at these passwords told a different story.
LLM passwords show repetition and guessable statistical patterns
When researchers analyzed 50 passwords generated in separate sessions, many were duplicates, and several followed nearly identical structural patterns. Most began and ended with similar character types, and none contained repeating characters. This absence of repetition may seem reassuring, yet it actually signals that the output follows learned conventions rather than true randomness. Using entropy calculations based on character statistics and model log probabilities, researchers estimated that these AI-generated passwords carried roughly 20 to 27 bits of entropy. A genuinely random 16-character password would typically measure between 98 and 120 bits by the same methods. The gap is substantial -- and in practical terms, it could mean that such passwords are vulnerable to brute-force attacks within hours, even on outdated hardware. Online password strength meters evaluate surface complexity, not the hidden statistical patterns behind a string - and because they do not account for how AI tools generate text, they may classify predictable outputs as secure.
Attackers who understand those patterns could refine their guessing strategies, narrowing the search space dramatically. The study also found that similar sequences appear in public code repositories and documentation, suggesting that AI-generated passwords may already be circulating widely. If developers rely on these outputs during testing or deployment, the risk compounds over time - in fact, even the AI systems that generate these passwords do not fully trust them and may issue warnings when pressed. Gemini 3 Pro, for example, returned password suggestions alongside a caution that chat-generated credentials should not be used for sensitive accounts. It recommended passphrases instead and advised users to rely on a dedicated password manager. A password generator built into such tools relies on cryptographic randomness rather than language prediction. In simple terms, LLMs are trained to produce plausible and repeatable text, not unpredictable sequences, so the broader concern is structural. The design principles behind LLM-generated passwords conflict with the requirements of secure authentication, which leaves a gap in the protection they appear to offer. "People and coding agents should not rely on LLMs to generate passwords," said Irregular. "Passwords generated through direct LLM output are fundamentally weak, and this is unfixable by prompting or temperature adjustments: LLMs are optimized to produce predictable, plausible outputs, which is incompatible with secure password generation."
Via The Register

[3]

Here's Why You Should Never Use AI to Generate Your Passwords

To be secure, use a proper password generator for your passwords, or make your own. I'm a bit of a broken record when it comes to personal security on the internet: Make strong passwords for each account; never reuse any passwords; and sign up for two-factor authentication whenever possible. With these three steps combined, your general security is pretty much set. But how you make those passwords matters just as much as making each strong and unique. As such, please don't use an AI program to generate your passwords. If you're a fan of chatbots like ChatGPT, Claude, or Gemini, it might seem like a no-brainer to ask the AI to generate passwords for you. You might like how they handle other tasks for you, so it might make sense that something seemingly so high-tech yet accessible could produce secure passwords for your accounts. But LLMs (large language models) are not necessarily good at everything, and creating good passwords just so happens to be among those faults. As highlighted by Malwarebytes Labs, researchers recently investigated AI-generated passwords, and evaluated their security. In short? The findings aren't good. Researchers tested password generation across ChatGPT, Claude, and Gemini, and discovered that the passwords were "highly predictable" and "not truly random." Claude, in particular, didn't fare well: Out of 50 prompts, the bot was only able to generate 23 unique passwords. Claude gave the same password as an answer 10 times. The Register reports that researchers found similar flaws with AI systems like GPT-5.2, Gemini 3 Flash, Gemini 3 Pro, and even Nano Banana Pro. (Gemini 3 Pro even warned the passwords shouldn't be used for "sensitive accounts.") The thing is, these results seem good on the surface. They look uncrackable because they're a mix of numbers, letters, and special characters, and password strength identifiers might say they're secure. 
But these generations are inherently flawed, whether that's because they are repeated results, or come with a recognizable pattern. Researchers evaluated the "entropy" of these passwords, or the measure of unpredictability, with both "character statistics" and "log probabilities." If that all sounds technical, the important thing to note is that the results showed entropies of 27 bits and 20 bits, respectively. Character statistics tests look for entropy of 98 bits, while log probabilities estimates look for 120 bits. You don't need to be an expert in password entropy to know that's a massive gap. Hackers can use these limitations to their advantage. Bad actors can run the same prompts as researchers (or, presumably, end users) and collect the results into a bank of common passwords. If chatbots repeat passwords in their generations, it stands to reason that many people might be using the same passwords generated by those chatbots -- or trying passwords that follow the same pattern. If so, hackers could simply try those passwords during break-in attempts, and if you used an LLM to generate your password, it might match. It's tough to say what that exact risk is, but to be truly secure, each of your passwords should be totally unique. Potentially using a password that hackers have in a word bank is an unnecessary risk. It might seem surprising that a chatbot wouldn't be good at generating random passwords, but it makes sense based on how they work. LLMs are trained to predict the next token, or data point, that should appear in a sequence. In this case, the LLM is trying to choose the characters that make the most sense to appear next, which is the opposite of "random." If the LLM has passwords in its training data, it may incorporate that into its answer. The password it generates makes sense in its "mind," because that's what it's been trained on. It isn't programmed to be random. Meanwhile, traditional password managers are not LLMs. 
Instead, they are designed to produce a truly random sequence, by taking cryptographic bits and converting them into characters. These outputs are not based on existing training data and follow no patterns, so the chances that someone else out there has the same password as you (or that hackers have it stored in a word bank) are slim. There are plenty of options out there to use, and most password managers come with secure password generators. But you don't even need one of these programs to make a secure password. Just pick two or three "uncommon" words, mix a few of the characters up, and presto: You have a random, unique, and secure password. For example, you could take the words "shall," "murk," and "tumble," and combine them into "sH@_llMurktUmbl_e." (Don't use that one, since it's no longer unique.) If you're looking to boost your personal security even further, consider passkeys whenever possible. Passkeys combine the convenience of passwords with the security of 2FA: With passkeys, your device is your password. You use its built-in authentication to log in (face scan, fingerprint, or PIN), which means there's no password to actually create. Without the trusted device, hackers won't be able to break into your account. Not all accounts support passkeys, which means they aren't a universal solution right now. You'll likely need passwords for some of your accounts, which means abiding by proper security methods to keep things in order. But replacing some of your passwords with passkeys can be a step up in both security and convenience -- and avoids the security pitfalls of asking ChatGPT to make your passwords for you.

[4]

Are you using an AI-generated password? It might be time to change it

When you do, it quickly generates one, telling you confidently that the output is strong. In reality, it's anything but, according to research shared exclusively with Sky News by AI cybersecurity firm Irregular. The research, which has been verified by Sky News, found that all three major models - ChatGPT, Claude, and Gemini - produced highly predictable passwords, leading Irregular co-founder Dan Lahav to make a plea about using AI to make them. "You should definitely not do that," he told Sky News. "And if you've done that, you should change your password immediately. And we don't think it's known enough that this is a problem." Predictable patterns are the enemy of good cybersecurity, because they mean passwords can be guessed by automated tools used by cybercriminals. But because large language models (LLMs) do not actually generate passwords randomly and instead derive results based on patterns in their training data, they are not actually creating a strong password, only something that looks like a strong password - an impression of strength which is in fact highly predictable. Some AI-made passwords need mathematical analysis to reveal their weakness, but many are so regular that they are clearly visible to the naked eye. A sample of 50 passwords generated by Irregular using Anthropic's Claude AI, for instance, produced only 23 unique passwords. One password - K9#mPx$vL2nQ8wR - was used 10 times. Others included K9#mP2$vL5nQ8@xR, K9$mP2vL#nX5qR@j and K9$mPx2vL#nQ8wFs. When Sky News tested Claude to check Irregular's research, the first password it spat out was K9#mPx@4vLp2Qn8R. OpenAI's ChatGPT and Google's Gemini AI were slightly less regular with their outputs, but still produced repeated passwords and predictable patterns in password characters. Google's image generation system NanoBanana was also prone to the same error when it was asked to produce pictures of passwords on Post-its. 
'Even old computers can crack them'
Online password checking tools say these passwords are extremely strong. They passed tests conducted by Sky News with flying colours: one password checker found that a Claude password wouldn't be cracked by a computer in 129 million trillion years. But that's only because the checkers are not aware of the pattern, which makes the passwords much weaker than they appear. "Our best assessment is that currently, if you're using LLMs to generate your passwords, even old computers can crack them in a relatively short amount of time," says Mr Lahav. This is not just a problem for unwitting AI users, but also for developers, who are increasingly using AI to write the majority of their code.
AI-generated passwords can already be found in code that is being used in apps, programmes and websites, according to a search on GitHub, the most widely-used code repository, for recognisable chunks of AI-made passwords. For example, searching for K9#mP (a common prefix used by Claude) yielded 113 results, and k9#vL (a substring used by Gemini) yielded 14 results. There were many other examples, often clearly intended to be passwords. Most of the results are innocent, generated by AI coding agents for "security best practice" documents, password strength-testing code, or placeholder code. However, Irregular found some passwords in what it suspected were real servers or services and the firm was able to get coding agents to generate passwords in potentially significant areas of code. "Some people may be exposed to this issue without even realising it just because they delegated a relatively complicated action to an AI," said Mr Lahav, who called on the AI companies to instruct their models to use a tool to generate truly random passwords, much like a human would use a calculator.
What should you do instead?
Graeme Stewart, head of public sector at cybersecurity firm Check Point, had some reassurance to offer. "The good news is it's one of the rare security issues with a simple fix," he said. "In terms of how big a deal it is, this sits in the 'avoidable, high-impact when it goes wrong' category, rather than 'everyone is about to be hacked'." Other experts observed that the problem was passwords themselves, which are notoriously leaky. "There are stronger and easier authentication methods," said Robert Hann, global VP of technical solutions at Entrust, who recommended people use passkeys such as face and fingerprint ID wherever possible. And if that's not an option? The universal advice: pick a long, memorable phrase, and don't ask an AI. Sky News has contacted OpenAI, while Google and Anthropic declined to comment.

Large Language Models (LLMs) like ChatGPT, Claude, and Gemini produce passwords that appear strong but contain predictable patterns and hidden repetitions. Research by Irregular reveals these AI-generated passwords carry only 20-27 bits of entropy compared to the 98-120 bits expected for truly random passwords, making them vulnerable to brute-force attacks within hours.

Large Language Models Fail at Password Generation

AI-generated passwords are fundamentally weak despite appearing complex on the surface, according to research by cybersecurity firm Irregular shared with multiple outlets [4]. The study examined password outputs from ChatGPT, Claude, and Gemini, asking each to generate 16-character passwords with symbols, numbers, and mixed-case letters. While these chatbot-generated passwords passed common online strength tests, with some checkers estimating centuries to crack them, closer analysis revealed a troubling reality [2].

Source: Sky News

Hidden Repetitions Expose Structural Weaknesses

When Irregular analyzed 50 passwords generated by Claude's Opus 4.6 model in separate sessions, only 23 unique passwords emerged, and one password, K9#mPx$vL2nQ8wR, appeared 10 times across the 50 attempts, according to Sky News [4]. The Register's account of the same test puts the figures at 30 unique passwords, with one string returned 18 times [1]. The vast majority started and ended with the same characters, and none contained a repeated character, a sign that the passwords are not truly random. When Sky News independently tested Claude, the first password it generated was K9#mPx@4vLp2Qn8R, which begins with the same K9#mP prefix [4]. Similar patterns emerged with OpenAI's GPT-5.2 and Google's Gemini 3 Flash, particularly at the beginning of password strings.
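
To see why the absence of repeated characters is itself suspicious, the following Python sketch estimates how often a uniformly random 16-character password would have all-distinct characters. The alphabet size of 70 is an assumption made for illustration (the sources do not state the exact character set), and the function names are hypothetical.

```python
from math import prod

ALPHABET_SIZE = 70  # assumption: roughly upper + lower + digits + a few symbols
LENGTH = 16         # password length used in the study
SAMPLES = 50        # number of passwords Irregular collected from Claude


def p_no_repeats(alphabet_size: int, length: int) -> float:
    """Probability that one uniformly random string has all-distinct characters."""
    return prod((alphabet_size - i) / alphabet_size for i in range(length))


def duplicate_bound(alphabet_size: int, length: int, samples: int) -> float:
    """Birthday-style upper bound on the chance that any two of `samples`
    uniformly random strings are identical: pairs / size of the space."""
    pairs = samples * (samples - 1) // 2
    return pairs / (alphabet_size ** length)


if __name__ == "__main__":
    single = p_no_repeats(ALPHABET_SIZE, LENGTH)
    print(f"one random password has no repeated character: {single:.3f}")
    print(f"all {SAMPLES} have no repeated character: {single ** SAMPLES:.3g}")
    print(f"any exact duplicate among {SAMPLES}: <= "
          f"{duplicate_bound(ALPHABET_SIZE, LENGTH, SAMPLES):.3g}")
```

Under these assumptions, most truly random passwords would contain at least one repeated character, so 50 in a row without any, plus several exact duplicates, is not what a uniform generator would plausibly produce.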

Source: The Register

Lower Entropy Makes Passwords Vulnerable to Brute-Force Attacks

Irregular estimated entropy with the Shannon entropy formula using two methods: character statistics and the model's own log probabilities. The 16-character AI-generated passwords showed approximately 27 bits and 20 bits of entropy, respectively. For a genuinely random password, the character statistics method expects 98 bits of entropy, while the log probability method expects 120 bits [2]. This gap means that even old computers can crack such passwords in a relatively short amount of time, according to Irregular co-founder Dan Lahav [4]; Irregular put the figure at a few hours [1]. Online password checkers evaluate surface complexity, not the hidden statistical patterns behind a string, which is why they may classify these predictable outputs as secure [2].
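
The arithmetic behind these figures can be sketched in a few lines of Python. This is an illustration only, not Irregular's method: the 70-symbol alphabet is an assumption (it happens to give about 98 bits for 16 characters), and the guess rate is a hypothetical value chosen to represent slow hardware.

```python
import math


def shannon_entropy_bits(probabilities):
    """Shannon entropy H = -sum(p * log2(p)) of one discrete distribution, in bits."""
    return -sum(p * math.log2(p) for p in probabilities if p > 0)


def uniform_password_entropy_bits(alphabet_size, length):
    """Entropy of a password whose characters are drawn uniformly and independently."""
    per_char = shannon_entropy_bits([1 / alphabet_size] * alphabet_size)
    return length * per_char


def seconds_to_exhaust(entropy_bits, guesses_per_second):
    """Worst-case time to try every candidate in a space of 2**entropy_bits."""
    return 2 ** entropy_bits / guesses_per_second


if __name__ == "__main__":
    ALPHABET_SIZE = 70           # assumption, not stated in the sources
    GUESSES_PER_SECOND = 10_000  # hypothetical rate for an old machine

    random_bits = uniform_password_entropy_bits(ALPHABET_SIZE, 16)
    print(f"uniform 16-character password: {random_bits:.1f} bits")
    cases = (
        ("LLM, character statistics", 27),
        ("LLM, log probabilities", 20),
        ("uniform random", random_bits),
    )
    for label, bits in cases:
        hours = seconds_to_exhaust(bits, GUESSES_PER_SECOND) / 3600
        print(f"{label}: about {hours:.3g} hours to exhaust")
```

Each additional bit doubles the search space, which is why a drop from roughly 98 bits to 27 bits turns an infeasible search into one measured in hours at the assumed rate.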

Why LLMs Produce Predictable Patterns

The fundamental issue stems from how Large Language Models (LLMs) function. These systems are trained to predict the next token, or data point, that should appear in a sequence, choosing the characters that make the most sense based on their training data [3]. This approach is the opposite of randomness. LLMs are optimized to produce predictable, plausible outputs, which is incompatible with secure password generation. As Irregular stated, this weakness is unfixable by prompting or temperature adjustments. Notably, even Gemini 3 Pro acknowledged this limitation, returning passwords with a security warning that they should not be used for sensitive accounts and recommending password managers like 1Password or Bitwarden instead.

Source: Lifehacker

AI Passwords Already Circulating in Public Code

The implications extend beyond individual users. Developers increasingly rely on AI to write their code, and AI-generated passwords are already appearing in GitHub repositories and documentation. Searching GitHub for K9#mP, a common prefix used by Claude, yielded 113 results, while k9#vL, a substring used by Gemini, returned 14 results [4]. While most appear in test code, documentation, and placeholder code, some were found in what Irregular suspected were real servers or services [4].
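
A team that wants to check its own code for these strings could start with something like the Python sketch below. It only looks for the two sequences named in the Sky News report; the function names and the list of file suffixes are hypothetical choices, and a match is a prompt for manual review, not proof that a credential is weak.

```python
from pathlib import Path

# Character sequences reported by Sky News as typical of Claude and Gemini output.
KNOWN_SEQUENCES = ("K9#mP", "k9#vL")

# Hypothetical selection of text file types worth scanning.
TEXT_SUFFIXES = (".py", ".md", ".yml", ".yaml", ".json", ".txt")


def find_suspect_lines(text, sequences=KNOWN_SEQUENCES):
    """Return (line_number, matched_sequence) for each line containing a known sequence."""
    hits = []
    for lineno, line in enumerate(text.splitlines(), start=1):
        for sequence in sequences:
            if sequence in line:
                hits.append((lineno, sequence))
                break  # one hit per line is enough to flag it
    return hits


def scan_tree(root, suffixes=TEXT_SUFFIXES):
    """Walk a directory and map each matching file path to its suspect lines."""
    results = {}
    for path in Path(root).rglob("*"):
        if not path.is_file() or path.suffix not in suffixes:
            continue
        try:
            text = path.read_text(encoding="utf-8", errors="ignore")
        except OSError:
            continue  # unreadable file, skip it
        hits = find_suspect_lines(text)
        if hits:
            results[str(path)] = hits
    return results


if __name__ == "__main__":
    for file_path, hits in scan_tree(".").items():
        for lineno, sequence in hits:
            print(f"{file_path}:{lineno}: contains {sequence!r}")
```

Any credential flagged this way should be rotated and replaced with one from a cryptographically secure generator, in line with Irregular's advice to review and rotate LLM-generated passwords.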

This concern grows more urgent given Anthropic CEO Dario Amodei's prediction that AI will likely write the majority of all code.

What Users Should Do Instead

The experts quoted all advise against using AI for password generation. "You should definitely not do that," Lahav told Sky News. "And if you've done that, you should change your password immediately" [4]. Password managers offer a safer alternative, as they use cryptographic randomness rather than token prediction [2]. Users can also create secure passwords manually by selecting two or three uncommon words and mixing up a few of the characters [3]. Passkeys, which rely on a device's built-in authentication such as face or fingerprint ID, provide even stronger security [4]. Graeme Stewart of Check Point said the issue sits in the "avoidable, high-impact when it goes wrong" category of cybersecurity vulnerabilities [4]. Irregular warns that the gap between capability and behavior likely won't be unique to passwords as AI-assisted development continues to accelerate.
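
For developers and coding agents, the practical alternative is to call a cryptographically secure random source instead of asking a model for a string. The sketch below uses Python's standard `secrets` module; the symbol set, the function names, and the caller-supplied word list are assumptions for illustration, not a description of how any particular password manager works.

```python
import secrets
import string

SYMBOLS = "!@#$%^&*"  # assumed symbol set; adjust to what the target system accepts
ALPHABET = string.ascii_letters + string.digits + SYMBOLS


def generate_password(length=16):
    """Draw characters uniformly from ALPHABET using the OS's secure random source.

    Candidates lacking a lowercase letter, uppercase letter, digit, or symbol
    are discarded and redrawn, so every accepted password is equally likely.
    """
    if length < 4:
        raise ValueError("length must be at least 4 to fit all character classes")
    while True:
        candidate = "".join(secrets.choice(ALPHABET) for _ in range(length))
        if (any(c.islower() for c in candidate)
                and any(c.isupper() for c in candidate)
                and any(c.isdigit() for c in candidate)
                and any(c in SYMBOLS for c in candidate)):
            return candidate


def generate_passphrase(words, count=4, separator="-"):
    """Join `count` words chosen uniformly at random from a caller-supplied list."""
    if not words:
        raise ValueError("words must not be empty")
    return separator.join(secrets.choice(words) for _ in range(count))


if __name__ == "__main__":
    print(generate_password())
    # Placeholder list for demonstration only; a real list needs thousands of words.
    print(generate_passphrase(["shall", "murk", "tumble", "lacuna"]))
```

The key design choice is that the randomness comes from `secrets`, which draws on the operating system's secure generator, rather than from a language model or the general-purpose `random` module.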

Summarized by

Navi
