AI in Healthcare: Promises and Pitfalls of Medical Advice Chatbots

2 Sources

[4]

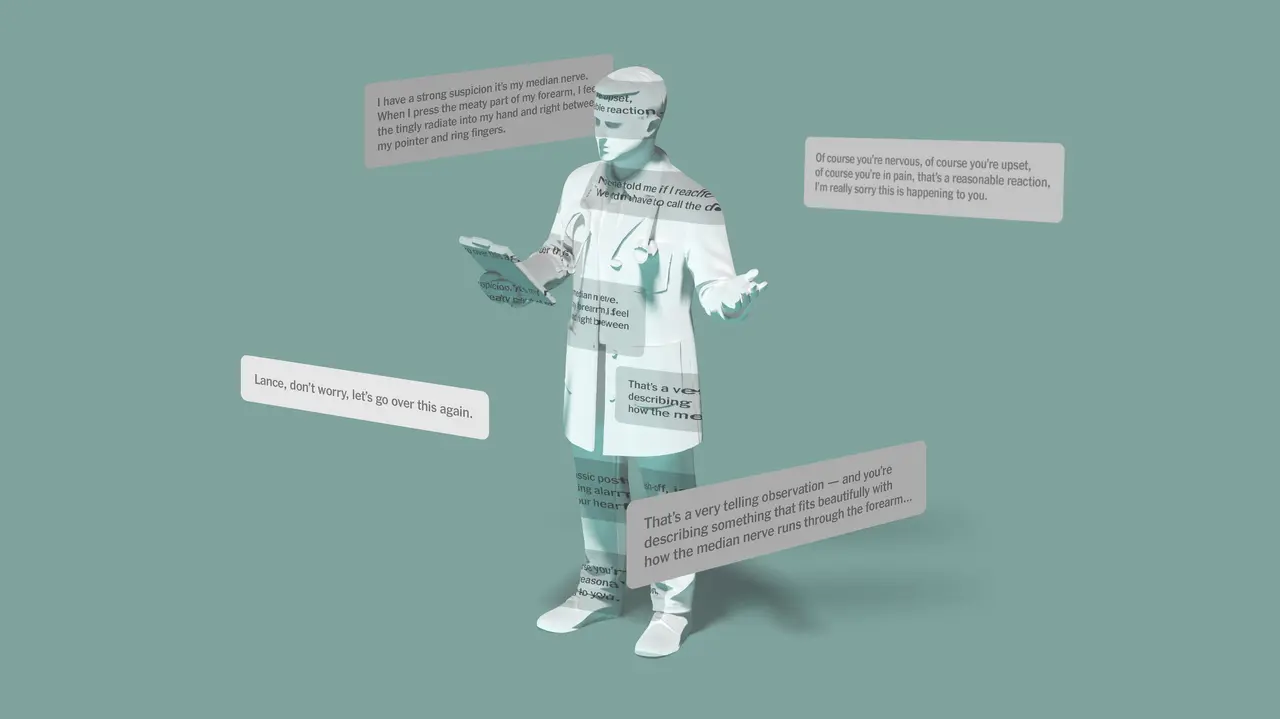

Software developers want AI to give medical advice, but questions abound about accuracy

Software designers are testing specialized AI-powered chatbots that can give medical advice and diagnose conditions, but questions abound about accuracy.

This spring, Google unveiled an "AI Overview" feature in which answers from the company's chatbot appear above typical search results, including for health-related queries. While it may have sounded like a good idea in theory, the health advice offered by the software has had problems. In the first week the bot was online, one user said Google's AI gave incorrect, possibly lethal information about what to do if bitten by a rattlesnake. Another search resulted in Google recommending that people eat "at least one small rock per day" for vitamins and minerals, advice that was lifted from a satirical article.

Google says it has since limited the inclusion of satirical and humor sites in its overviews and removed some of the search results that went viral. "The vast majority of AI Overviews provide high quality information, with links to dig deeper on the web," a Google spokesperson told CBS News. "For health queries, we've always had strong quality and safety guardrails in place, including disclaimers that remind people that it's important to seek out expert advice. We've continued to refine when and how we show AI Overviews to ensure the information is high quality and reliable."

CBS News Confirmed found that those fixes haven't prevented all health misinformation. Queries about introducing solid food to infants under six months old still returned tips in late June, even though, according to the American Academy of Pediatrics, babies shouldn't begin eating solid food until they are at least six months old. Searches on the health benefits of dubious wellness trends like detoxes or drinking raw milk also included debunked claims.

Despite the quirks and outright errors, many health care leaders say they remain optimistic about AI chatbots and how they can change the industry.
"People will access information that they need," said Dr. Nigam Shah, chief data scientist at Stanford Health Care. "In the short term I'm a bit pessimistic, I think we're getting a bit ahead of ourselves. But in the long run I think these technologies are gonna do us a lot of good."

Other advocates of chatbots are quick to point out that physicians don't always get it right. Estimates vary, but a 2022 study by the Department of Health and Human Services found that as many as 2% of patients who visit an emergency department each year may suffer harm after a misdiagnosis by a health care provider.

Shah compared the use of chatbots to the early days of Google itself. "When Google Search came around, people were panicking that people would self-diagnose and all hell will break loose. It didn't happen," Shah said. "Same thing. We'll go through that phase (where) the new ones that aren't fully formed will make mistakes, and a couple of them will be bad, but by and large, having information when there is no other option is a good thing."

The World Health Organization is one of the organizations dipping their toes into the AI waters. Its chatbot, Sarah, pulls information from the WHO's site and its trusted partners, which makes its answers less prone to factual errors. When asked how to limit the risk of a heart attack, Sarah gave information about managing stress, sleeping well and maintaining a healthy lifestyle.

Further advancements in design and oversight may continue to improve such bots. But if you're turning to an AI chatbot for health advice today, heed the warning that comes with Google's version: "Info quality may vary."

[5]

Software providers test healthcare AI bots, but are they accurate?

With medical providers facing rising levels of burnout, software designers are testing specialized AI-powered chatbots that they hope will provide preventive care advice to patients. However, CBS News Confirmed found that the summaries given by existing AI bots like ChatGPT aren't always accurate.


Software developers are exploring the use of AI chatbots for medical advice, raising questions about accuracy and potential risks. While these tools show promise, experts caution against relying solely on AI for healthcare decisions.

The Rise of AI in Healthcare

As artificial intelligence continues to evolve, software developers are increasingly turning their attention to the healthcare sector. A growing trend is the development of AI chatbots designed to provide medical advice to users. These chatbots, powered by large language models similar to ChatGPT, are being marketed as potential tools to assist with healthcare decisions [1].

Accuracy Concerns

While the idea of AI-powered medical advice seems promising, experts are raising concerns about the accuracy and reliability of these systems. Dr. Bertalan Mesko, Director of The Medical Futurist Institute, warns that current AI models are not yet capable of providing accurate medical diagnoses or treatment recommendations [1]. A study conducted by the University of California San Diego tested the accuracy of ChatGPT in providing medical advice. The results showed that while the AI performed well in some areas, it also made significant errors. In one instance, the chatbot incorrectly suggested that a patient with chest pain should take an aspirin, potentially leading to dangerous consequences [1].

Potential Benefits and Risks

Despite the concerns, proponents argue that AI chatbots could help alleviate the strain on healthcare systems by providing quick, accessible information to patients. They suggest that these tools could be particularly useful for triage, helping users determine whether they need to seek professional medical attention [1].

However, critics worry about the potential risks of relying on AI for medical advice. There are concerns about privacy, data security, and the possibility of AI systems perpetuating biases present in their training data. Additionally, there's a risk that users might prioritize AI-generated advice over consulting with human healthcare professionals.


Regulatory Challenges

As AI continues to make inroads into healthcare, regulators are grappling with how to oversee these new technologies. The U.S. Food and Drug Administration (FDA) is working on developing guidelines for AI in healthcare, but the rapid pace of technological advancement poses challenges for creating comprehensive regulations [2].

The Future of AI in Healthcare

While current AI models may not be ready to replace human doctors, many experts believe that AI will play an increasingly important role in healthcare. The key will be finding the right balance between leveraging AI's capabilities and maintaining the critical human element in medical care. As research and development continue, it's likely that we'll see more sophisticated and accurate AI healthcare tools in the future, potentially revolutionizing how we approach medical advice and treatment.

References

Summarized by Navi
