arXiv Bans AI-Generated Computer Science Reviews Amid Quality Crisis

3 Sources

[1]

Preprint site arXiv is banning computer-science reviews: here's why

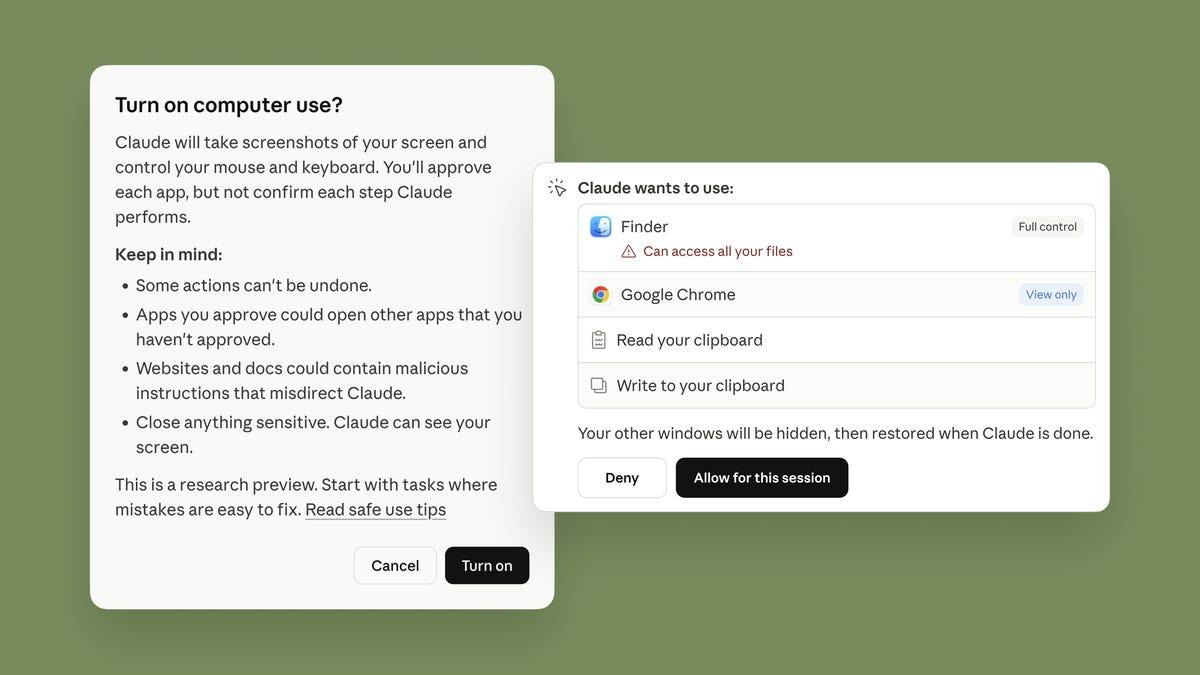

The oldest and best-known preprint repository, arXiv, has announced that it will no longer accept review or position papers in computer science. The website will make exceptions only for papers that have been previously accepted by a peer-reviewed venue, such as a journal or conference. These types of article were never officially on arXiv's list of 'accepted content types', but they had previously been allowed at moderator discretion, said a blog post announcing the move on 31 October. arXiv management says the move was made necessary by a surge in low-quality papers, including many that appear to be written using generative artificial intelligence (AI) tools. "I think this is a wise move by arXiv," says Richard Sever, chief science and strategy officer of the non-profit organisation openRxiv in New York City, which runs the biomedical-sciences preprint servers bioRxiv and medRxiv. He adds both of those platforms have had a 'no narrative reviews' policy from the outset. Preprints submitted to arXiv are checked by automated tools. Before going live on the site, around 20% of submissions are flagged to one of 240 volunteer moderators, says arXiv executive director Ramin Zabih, a computer scientist at Cornell University in Ithaca, New York. Until a year ago, only 2-3% of submissions were ultimately rejected by moderators, says Steinn Sigurðsson, an astrophysicist at Penn State University in University Park who is arXiv's scientific director. But in the last year, that number has gone up to 10%. The number of computer-science review and position papers that were flagged or rejected was not particularly large, but these articles took a disproportionate amount of time for moderators to check, says Thomas Dietterich, a computer scientist at Oregon State University in Corvallis who chairs arXiv's computer-science section. 
"What we are seeing is many surveys that are just annotated bibliographies without analysis, synthesis or road mapping," he says, adding that the citations often include papers on completely unrelated subjects. "We suspect there may be markets where such citations can be purchased, because the papers being cited can be scattered across the academic landscape." Stephanie Orphan, arXiv's programme director, says that chatbots are now sophisticated enough that the review papers they produce can escape simple plagiarism checks, whether performed by people or software. "The tools have gotten more clever." It is too soon to measure the impact of arXiv's new policy and determine whether it will reduce moderators' workload, says Zabih, adding that it is probably only the first in a series of similar changes. "We are actively contemplating more measures along these lines."

[2]

arXiv Changes Rules After Getting Spammed With AI-Generated 'Research' Papers

Cornell University's academic paper repository will no longer accept Computer Science papers still under review. arXiv, a preprint publication for academic research that has become particularly important for AI research, has announced it will no longer accept computer science articles and papers that haven't been vetted by an academic journal or a conference. Why? A tide of AI slop has flooded the computer science category with low-effort papers that are "little more than annotated bibliographies, with no substantial discussion of open research issues," according to a press release about the change. arXiv has become a critical place for preprint and open access scientific research to be published. Many major scientific discoveries are published on arXiv before they finish the peer review process and are published in other, peer-reviewed journals. For that reason, it's become an important place for new breaking discoveries and has become particularly important for research in fast-moving fields such as AI and machine learning (though there are also sometimes preprint, non-peer-reviewed papers there that get hyped but ultimately don't pass peer review muster). The site is a repository of knowledge where academics upload PDFs of their latest research for public consumption. It publishes papers on physics, mathematics, biology, economics, statistics, and computer science and the research is vetted by moderators who are subject matter experts. But because of an onslaught of AI-generated research, specifically in the computer science (CS) section, arXiv is going to limit which papers can be published. "In the past few years, arXiv has been flooded with papers," arXiv said in a press release. "Generative AI / large language models have added to this flood by making papers -- especially papers not introducing new research results -- fast and easy to write." The site noted that this was less a policy change and more about stepping up enforcement of old rules. 
"When submitting review articles or position papers, authors must include documentation of successful peer review to receive full consideration," it said. "Review/survey articles or position papers submitted to arXiv without this documentation will be likely to be rejected and not appear on arXiv." According to the press release, arXiv has been inundated by "review" submissions -- papers that are still pending peer review -- but that CS was the worst category. "We now receive hundreds of review articles every month," arXiv said. "The advent of large language models have made this type of content relatively easy to churn out on demand." The plan is to enforce a blanket ban on papers still under review in the CS category and free the moderators to look at more substantive submissions. arXiv stressed that it does not often accept review articles, but had been doing so when it was of academic interest and from a known researcher. "If other categories see a similar rise in LLM-written review articles and position papers, they may choose to change their moderation practices in a similar manner to better serve arXiv authors and readers," arXiv said. AI-generated research articles are a pressing problem in the scientific community. Scam academic journals that run pay-to-publish schemes are an issue that plagued academic publishing long before AI, but the advent of LLMs has supercharged it. But scam journals aren't the only ones affected. Last year, a serious scientific journal had to retract a paper that included an AI-generated image of a giant rat penis. Peer reviewers, the people who are supposed to vet scientific papers for accuracy, have also been caught cutting corners using ChatGPT in part because of the large demands placed on their time.

[3]

ArXiv Blocks AI-Generated Survey Papers After 'Flood' of Trashy Submissions - Decrypt

Researchers are divided, with some warning the rule hurts early-career authors while others call it necessary to stop AI spam. ArXiv, a free repository founded at Cornell University that has become the go-to hub for thousands of scientists and technologists worldwide to publish early research papers, will no longer accept review articles or position papers in its Computer Science category unless they've already passed peer review at a journal or conference. The policy shift, announced October 31, comes after a "flood" of AI-generated survey papers that moderators describe as "little more than annotated bibliographies." The repository now receives hundreds of these submissions monthly, up from a small trickle of high-quality reviews historically written by senior researchers. "In the past few years, arXiv has been flooded with papers," an official statement on the site explained. "Generative AI/large language models have added to this flood by making papers -- especially papers not introducing new research results -- fast and easy to write." "We were driven to this decision by a big increase in LLM-assisted survey papers," added Thomas G. Dietterich, an arXiv moderator and former president of the Association for the Advancement of Artificial Intelligence, on X. "We don't have the moderator resources to examine these submissions and identify the good surveys from the bad ones." Research published in Nature Human Behaviour found that nearly a quarter of all computer science abstracts showed evidence of large language model modification by September 2024. A separate study in Science Advances showed that the use of AI in research papers published in 2024 skyrocketed since the launch of ChatGPT. ArXiv's volunteer moderators have always filtered submissions for scholarly value and topical relevance, but they don't conduct peer review. 
Review articles and position papers were never officially accepted content types, though moderators made exceptions for work from established researchers or scientific societies. That discretionary system broke under the weight of AI-generated submissions. The platform now handles a submission volume that's multiplied several times over in recent years, with generative AI making it trivially easy to produce superficial survey papers. The response from the research community has been mixed. Stephen Casper, an AI safety researcher, raised concerns that the policy might disproportionately affect early-career researchers and those working on ethics and governance topics. "Review/position papers are disproportionately written by young people, people without access to lots of compute, and people who are not at institutions that have lots of publishing experience," he wrote in a critique. One problem is that AI detection tools have proven unreliable, with high false-positive rates that can unfairly flag legitimate work. On the other hand, a recent study found that researchers failed to identify one-third of ChatGPT-generated medical abstracts as machine-written. The American Association for Cancer Research reported that less than 25% of authors disclosed AI use despite mandatory disclosure policies. The new requirement means authors must submit documentation of successful peer review, including journal references and DOIs. Workshop reviews won't meet the standard. ArXiv emphasized that the change affects only the Computer Science category for now, though other sections may adopt similar policies if they face comparable surges in AI-generated submissions. The move reflects a broader reckoning in academic publishing. Major conferences like CVPR 2025 have implemented policies to desk-reject papers from reviewers flagged for irresponsible conduct. 
Publishers are grappling with papers that contain obvious AI tells, like one that began, "Certainly, here is a possible introduction for your topic."

The prominent preprint repository arXiv has banned computer science review and position papers unless peer-reviewed, responding to a flood of low-quality AI-generated submissions that overwhelmed volunteer moderators.

arXiv Implements Strict New Policy Against AI-Generated Papers

The prestigious preprint repository arXiv has announced a significant policy change that will ban computer science review and position papers unless they have already undergone peer review at an academic journal or conference. The decision, announced on October 31, represents a direct response to what administrators describe as a "flood" of low-quality, AI-generated submissions that have overwhelmed the platform's volunteer moderation system [1].

The policy shift affects one of academia's most important platforms for early research dissemination, particularly in fast-moving fields like artificial intelligence and machine learning. arXiv has become critical infrastructure for scientific communication, allowing researchers to share findings before the lengthy peer review process is complete [2].

Surge in AI-Generated Submissions Overwhelms Moderators

The numbers tell a stark story of the platform's quality crisis. According to Steinn Sigurðsson, arXiv's scientific director and an astrophysicist at Penn State University, the share of submissions ultimately rejected by moderators has risen from just 2-3% a year ago to 10% [1]. The platform now receives hundreds of review articles every month, a dramatic increase from the small number of high-quality reviews historically submitted by senior researchers [3].

Thomas Dietterich, a computer scientist at Oregon State University who chairs arXiv's computer science section, described the problematic submissions as "many surveys that are just annotated bibliographies without analysis, synthesis or road mapping." He noted that citations often include papers on completely unrelated subjects, suggesting potential citation manipulation schemes [1].

The platform's 240 volunteer moderators have found these papers particularly time-consuming to evaluate, even though the number of computer science review and position papers flagged or rejected was not especially large. Stephanie Orphan, arXiv's programme director, explained that modern chatbots have become sophisticated enough to produce review papers that escape simple plagiarism checks, whether performed by people or software [1].

Broader Impact on Academic Publishing

The arXiv policy change reflects a wider crisis in academic publishing as AI tools make it trivially easy to generate superficial research papers. Recent studies have documented the scope of this problem: research published in Nature Human Behaviour found that nearly a quarter of all computer science abstracts showed evidence of large language model modification by September 2024 [3].

The issue extends beyond preprint servers to peer-reviewed journals, where AI-generated content has led to embarrassing retractions and raised questions about the integrity of the review process. Even peer reviewers themselves have been caught using ChatGPT to assist with their evaluations, partly due to the overwhelming demands placed on their time [2].

Mixed Reactions from Research Community

The research community's response to arXiv's new policy has been divided. Stephen Casper, an AI safety researcher, raised concerns that the restrictions might disproportionately harm early-career researchers and those working on ethics and governance topics. "Review/position papers are disproportionately written by young people, people without access to lots of compute, and people who are not at institutions that have lots of publishing experience," he argued [3].

However, others in the academic community have supported the move as necessary. Richard Sever, chief science and strategy officer of openRxiv, which operates bioRxiv and medRxiv, called it "a wise move," noting that both biomedical preprint servers have maintained "no narrative reviews" policies from their inception [1].

Future Implications and Enforcement

Under the new policy, authors submitting computer science review or position papers must provide documentation of successful peer review, including journal references and DOIs. Workshop reviews will not meet this standard. arXiv emphasized that the change currently affects only the computer science category, though other sections may adopt similar policies if they experience comparable surges in AI-generated submissions [3].

Executive director Ramin Zabih indicated that this policy change is likely just the beginning, stating that arXiv is "actively contemplating more measures along these lines" as the platform continues to grapple with the challenges posed by AI-generated content [1].

Summarized by Navi
