Brain Implant Breakthrough: Real-Time Thought-to-Speech Translation

18 Sources

[1]

Brain implant translates thoughts to speech in an instant

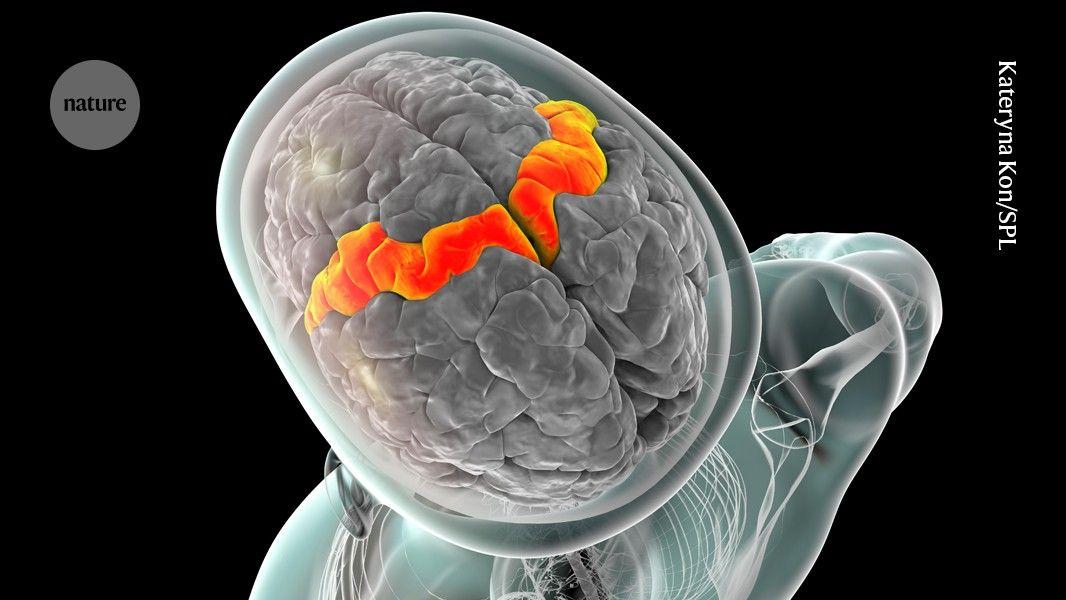

A brain-reading implant that translates neural signals into audible speech has allowed a woman with paralysis to hear what she intends to say nearly instantly. Researchers enhanced the device -- known as a brain-computer interface (BCI) -- with artificial intelligence (AI) algorithms that decoded sentences as the woman thought of them, and then spoke them out loud using a synthetic voice. Unlike previous efforts, which could produce sounds only after users finished an entire sentence, the current approach can simultaneously detect words and turn them into speech within three seconds. Older speech-generating BCIs are similar to "a WhatsApp conversation", says Christian Herff, a computational neuroscientist at Maastricht University, the Netherlands, who was not involved with the work. "I write a sentence, you write a sentence and you need some time to write a sentence again... It just doesn't flow like a normal conversation." BCIs that stream speech in real time are "the next level" in research because they allow users to convey the tone and emphasis that are characteristic of natural speech, he adds. The study participant, Ann, lost her ability to speak after a stroke in her brainstem in 2005. Some 18 years later, she underwent a surgery to place a paper-thin rectangle containing 253 electrodes on the surface of her brain cortex. The implant can record the combined activity of thousands of neurons at the same time. Researchers personalized the synthetic voice to sound like Ann's own voice from before her injury, by training AI algorithms on recordings from her wedding video. During the latest study, Ann silently mouthed 100 sentences from a set of 1,024 words and 50 phrases that appeared on a screen. The BCI device captured her neural signals every 80 milliseconds, starting 500 milliseconds before Ann started to silently say the sentences. It produced between 47 and 90 words per minute (natural conversation happens at around 160 words per minute).
The results represent a marked improvement compared with an older version of the technology that Ann tested in a previous study, and with the current assistive-communication device she uses, which takes more than 20 seconds to stream out a single sentence. Herff says that, although the BCI works for short sentences, it still operates with "quite a big delay" compared with a natural conversation. Studies show that, "when the delay is larger than 50 milliseconds, it starts to really confuse you", he adds. "This is where we are right now," says study co-author Edward Chang, a neurosurgeon at the University of California, San Francisco. "But you can imagine, with more sensors, with more precision and with enhanced signal processing, those things are only going to change and get better."

[2]

Brain implant helps woman with paralysis speak with her own voice again

The new method decodes brain signals while simultaneously feeding them through a text-to-speech AI model. Researchers have developed a new method for intercepting neural signals from the brain of a person with paralysis and translating them into audible speech -- all in near real-time. The result is a brain-computer interface (BCI) system similar to an advanced version of Google Translate, but instead of converting one language to another, it deciphers neural data and transforms it into spoken sentences. Recent advancements in machine learning have enabled researchers to train AI voice synthesizers using recordings of the individual's own voice, making the generated speech more natural and personalized. Patients with paralysis have already used BCI to improve physical motor control function by controlling computer mice and prosthetic limbs. This particular system addresses a more specific subsection of patients who have also lost their capacity to speak. In testing, the paralyzed patient was able to silently read full text sentences, which were then converted into speech by the AI voice with a delay of less than 80 milliseconds. Results of the study were published this week in the journal Nature Neuroscience by a team of researchers from the University of California, Berkeley and the University of California, San Francisco. "Our streaming approach brings the same rapid speech decoding capacity of devices like Alexa and Siri to neuroprostheses," UC Berkeley professor and co-principal investigator of the study Gopala Anumanchipalli said in a statement. "Using a similar type of algorithm, we found that we could decode neural data and, for the first time, enable near-synchronous voice streaming. The result is more naturalistic, fluent speech synthesis." Researchers worked with a paralyzed woman named Ann, who lost her ability to speak following an unspecified accident. 
To collect neural data, the team implanted a 253-channel high-density electrocorticography (ECoG) array over the area of her brain responsible for speech motor control. They recorded her brain activity as she silently mouthed or mimed phrases displayed on a screen. Ann was ultimately presented with hundreds of sentences, all based on a limited vocabulary of 1,024 words. This initial data collection phase allowed researchers to begin decoding her thoughts. "We are essentially intercepting signals where the thought is translated into articulation and in the middle of that motor control," study co-author Cheol Jun Cho said in a statement. "So what we're decoding is after a thought has happened, after we've decided what to say, after we've decided what words to use and how to move our vocal-tract muscles." The decoded neural data was then processed through a text-to-speech AI model trained on real voice recordings of Ann from before her injury. While various tools have long existed to help individuals with paralysis communicate, they are often too slow for natural, back-and-forth conversation. The late theoretical physicist Stephen Hawking, for example, used a computer and voice synthesizer to speak, but the system's limited interface allowed him to produce only 10 to 15 words per minute. More advanced brain-computer interface (BCI) models have significantly improved communication speed, but they have still struggled with input lag. A previous version of this AI model, developed by the same research team, for instance, had an average delay of eight seconds between decoding neural data and producing speech. This latest breakthrough reduced input delay to less than a second -- an improvement researchers attribute to rapid advancements in machine learning across the tech industry in recent years.
Unlike previous models, which waited for Ann to complete a full thought before translating it, this system "continuously decodes" speech while simultaneously vocalizing it. For Ann, this means she can now hear herself speak a sentence in her own voice within a second of thinking it. A video demonstration of the clinical trial shows Ann looking at the phrase "you love me" on a screen in front of her. Moments later, the AI model -- trained on her own voice -- speaks the words aloud. Seconds after that, she successfully repeats the phrases "so did you do it" and "where did you get this?" Ann reportedly appreciated that the synthesized speech sounded like her own voice. "Hearing her own voice in near-real time increased her sense of embodiment," Anumanchipalli said. This advancement comes as BCIs are gaining public recognition. Neuralink, founded by Elon Musk in 2016, has already successfully implanted its BCI device in three human patients. The first, a 30-year-old man named Noland Arbaugh with quadriplegia, says the device has allowed him to control a computer mouse and play video games using only his thoughts. Since then, Neuralink has upgraded the system with more electrodes, which the company says should provide greater bandwidth and longer battery life. Neuralink recently received a special designation from the Food and Drug Administration (FDA) to explore a similar device aimed at restoring eyesight. Meanwhile, Synchron, another leading BCI company, recently demonstrated that a patient living with ALS could operate an Apple Vision Pro mixed reality headset using only neural inputs. "Using this type of enhanced reality is so impactful and I can imagine it would be for others in my position or others who have lost the ability to engage in their day-to-day life," a Synchron patient with ALS named Mark said in a statement. "It can transport you to places you never thought you'd see or experience again." 
Though the field is mostly dominated by US startups, other countries are catching up. Just this week, a Chinese BCI company called NeuCyber NeuroTech announced it had inserted its own semi-invasive BCI chip into three patients over the past month. The company, according to Reuters, plans to implant its "Beinao No.1" device into 10 more patients by the end of the year. All of that said, it will still take time before BCIs can meaningfully bring back conversational dialogue in day-to-day life for those who no longer have the capacity for speech. The California researchers say their next steps involve improving their interception methods and AI models to better reflect changes in vocal tone and pitch, two elements crucial for communicating emotion. They are also working on bringing their already low latency down even further. "That's ongoing work, to try to see how well we can actually decode these paralinguistic features from brain activity," UC Berkeley PhD student and paper co-author Kaylo Littlejohn said.

[3]

Speech neuroprosthesis unlocks near-real-time communication for patients with paralysis

University of California Berkeley College of Engineering, Mar 31 2025
Marking a breakthrough in the field of brain-computer interfaces (BCIs), a team of researchers from UC Berkeley and UC San Francisco has unlocked a way to restore naturalistic speech for people with severe paralysis. This work solves the long-standing challenge of latency in speech neuroprostheses, the time lag between when a subject attempts to speak and when sound is produced. Using recent advances in artificial intelligence-based modeling, the researchers developed a streaming method that synthesizes brain signals into audible speech in near-real time. As reported in Nature Neuroscience, this technology represents a critical step toward enabling communication for people who have lost the ability to speak. The study is supported by the National Institute on Deafness and Other Communication Disorders (NIDCD) of the National Institutes of Health. "Our streaming approach brings the same rapid speech decoding capacity of devices like Alexa and Siri to neuroprostheses. Using a similar type of algorithm, we found that we could decode neural data and, for the first time, enable near-synchronous voice streaming. The result is more naturalistic, fluent speech synthesis," said Gopala Anumanchipalli, Robert E. and Beverly A. Brooks Assistant Professor of Electrical Engineering and Computer Sciences at UC Berkeley and co-principal investigator of the study. "This new technology has tremendous potential for improving quality of life for people living with severe paralysis affecting speech," said neurosurgeon Edward Chang, senior co-principal investigator of the study. Chang leads the clinical trial at UCSF that aims to develop speech neuroprosthesis technology using high-density electrode arrays that record neural activity directly from the brain surface. "It is exciting that the latest AI advances are greatly accelerating BCIs for practical real-world use in the near future."
The researchers also showed that their approach can work well with a variety of other brain sensing interfaces, including microelectrode arrays (MEAs) in which electrodes penetrate the brain's surface, or non-invasive recordings (sEMG) that use sensors on the face to measure muscle activity. "By demonstrating accurate brain-to-voice synthesis on other silent-speech datasets, we showed that this technique is not limited to one specific type of device," said Kaylo Littlejohn, Ph.D. student at UC Berkeley's Department of Electrical Engineering and Computer Sciences and co-lead author of the study. "The same algorithm can be used across different modalities provided a good signal is there."
Decoding neural data into speech
According to study co-lead author Cheol Jun Cho, UC Berkeley Ph.D. student in electrical engineering and computer sciences, the neuroprosthesis works by sampling neural data from the motor cortex, the part of the brain that controls speech production, then uses AI to decode brain function into speech. "We are essentially intercepting signals where the thought is translated into articulation and in the middle of that motor control," he said. "So what we're decoding is after a thought has happened, after we've decided what to say, after we've decided what words to use and how to move our vocal-tract muscles." To collect the data needed to train their algorithm, the researchers first had Ann, their subject, look at a prompt on the screen - like the phrase: "Hey, how are you?" - and then silently attempt to speak that sentence. "This gave us a mapping between the chunked windows of neural activity that she generates and the target sentence that she's trying to say, without her needing to vocalize at any point," said Littlejohn. Because Ann does not have any residual vocalization, the researchers did not have target audio, or output, to which they could map the neural data, the input. They solved this challenge by using AI to fill in the missing details.
"We used a pretrained text-to-speech model to generate audio and simulate a target," said Cho. "And we also used Ann's pre-injury voice, so when we decode the output, it sounds more like her."
Streaming speech in near real time
In their previous BCI study, the researchers had a long latency for decoding, about an 8-second delay for a single sentence. With the new streaming approach, audible output can be generated in near-real time, as the subject is attempting to speak. To measure latency, the researchers employed speech detection methods, which allowed them to identify the brain signals indicating the start of a speech attempt. "We can see relative to that intent signal, within 1 second, we are getting the first sound out," said Anumanchipalli. "And the device can continuously decode speech, so Ann can keep speaking without interruption." This greater speed did not come at the cost of precision. The faster interface delivered the same high level of decoding accuracy as their previous, non-streaming approach. "That's promising to see," said Littlejohn. "Previously, it was not known if intelligible speech could be streamed from the brain in real time." Anumanchipalli added that researchers don't always know whether large-scale AI systems are learning and adapting, or simply pattern-matching and repeating parts of the training data. So the researchers also tested the real-time model's ability to synthesize words that were not part of the training dataset vocabulary - in this case, 26 rare words taken from the NATO phonetic alphabet, such as "Alpha," "Bravo," "Charlie" and so on. "We wanted to see if we could generalize to the unseen words and really decode Ann's patterns of speaking," he said. "We found that our model does this well, which shows that it is indeed learning the building blocks of sound or voice."
Ann, who also participated in the 2023 study, shared with researchers how her experience with the new streaming synthesis approach compared to the earlier study's text-to-speech decoding method. "She conveyed that streaming synthesis was a more volitionally controlled modality," said Anumanchipalli. "Hearing her own voice in near-real time increased her sense of embodiment."
Future directions
This latest work brings researchers a step closer to achieving naturalistic speech with BCI devices, while laying the groundwork for future advances. "This proof-of-concept framework is quite a breakthrough," said Cho. "We are optimistic that we can now make advances at every level. On the engineering side, for example, we will continue to push the algorithm to see how we can generate speech better and faster." The researchers also remain focused on building expressivity into the output voice to reflect the changes in tone, pitch or loudness that occur during speech, such as when someone is excited. "That's ongoing work, to try to see how well we can actually decode these paralinguistic features from brain activity," said Littlejohn. "This is a longstanding problem even in classical audio synthesis fields and would bridge the gap to full and complete naturalism."
In addition to the NIDCD, support for this research was provided by the Japan Science and Technology Agency's Moonshot Research and Development Program, the Joan and Sandy Weill Foundation, Susan and Bill Oberndorf, Ron Conway, Graham and Christina Spencer, the William K. Bowes, Jr. Foundation, the Rose Hills Innovator and UC Noyce Investigator programs, and the National Science Foundation.
University of California Berkeley College of Engineering
Journal reference: Littlejohn, K. T., et al. (2025). A streaming brain-to-voice neuroprosthesis to restore naturalistic communication. Nature Neuroscience. doi.org/10.1038/s41593-025-01905-6.

[4]

Mind-reading brain implant continuously 'streams' thoughts through speaker

The brain-computer interface can provide a synthetic voice to those unable to speak. A brain implant that uses artificial intelligence (AI) can almost instantaneously decode a person's thoughts and stream them through a speaker, new research shows. This is the first time researchers have achieved near-synchronous brain-to-voice streaming. The experimental mind-reading technology is designed to give a synthetic voice to people with severe paralysis who cannot speak. It works by putting electrodes onto the brain's surface as part of an implant called a neuroprosthesis, which allows scientists to identify and interpret speech signals. The brain-computer interface (BCI) uses AI to decode neural signals and can stream intended speech from the brain in close to real time, according to a statement released by the University of California (UC), Berkeley. The team previously unveiled an earlier version of the technology in 2023, but the new version is quicker and less robotic. "Our streaming approach brings the same rapid speech decoding capacity of devices like Alexa and Siri to neuroprostheses," study co-principal investigator Gopala Anumanchipalli, an assistant professor of electrical engineering and computer sciences at UC Berkeley, said in the statement. "Using a similar type of algorithm, we found that we could decode neural data and, for the first time, enable near-synchronous voice streaming." Anumanchipalli and his colleagues shared their findings in a study published Monday (March 31) in the journal Nature Neuroscience. The first person to trial this technology, identified as Ann, suffered a stroke in 2005 that left her severely paralyzed and unable to speak.
She has since allowed researchers to implant 253 electrodes onto her brain to monitor the part of our brains that controls speech -- called the motor cortex -- to help develop synthetic speech technologies. "We are essentially intercepting signals where the thought is translated into articulation and in the middle of that motor control," study co-lead author Cheol Jun Cho, a doctoral student in electrical engineering and computer sciences at UC Berkeley, said in the statement. "So what we're decoding is after a thought has happened, after we've decided what to say, after we've decided what words to use and how to move our vocal-tract muscles." AI decodes data sampled by the implant to help convert neural activity into synthetic speech. The team trained their AI algorithm by having Ann silently attempt to speak sentences that appeared on a screen before her, and then by matching the neural activity to the words she wanted to say. The system sampled brain signals every 80 milliseconds (0.08 seconds) and could detect words and convert them into speech with a delay of up to around 3 seconds, according to the study. That's a little slow compared to normal conversation, but faster than the previous version, which had a delay of about 8 seconds and could only process whole sentences. The new system benefits from converting shorter windows of neural activity than the old one, so it can continuously process individual words rather than waiting for a finished sentence. The researchers say the new study is a step toward achieving more natural-sounding synthetic speech with BCIs. "This proof-of-concept framework is quite a breakthrough," Cho said. "We are optimistic that we can now make advances at every level. On the engineering side, for example, we will continue to push the algorithm to see how we can generate speech better and faster."
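The timing difference between sentence-level and streaming decoding can be sketched with a toy model. This is an illustration only, not the study's code: the function names and the 2-second example are assumptions, and model compute time, which accounts for most of the reported delay, is ignored.

```python
from typing import Iterator, List

CHUNK_MS = 80  # sampling window reported in the article


def chunk_signal(samples: List[float], samples_per_chunk: int) -> Iterator[List[float]]:
    """Yield consecutive fixed-size windows of a recorded signal (hypothetical helper)."""
    for start in range(0, len(samples), samples_per_chunk):
        yield samples[start:start + samples_per_chunk]


def first_output_delay_ms(n_chunks: int, streaming: bool) -> int:
    """Earliest time at which any output could be emitted, ignoring compute time.

    A streaming decoder can emit after the first window arrives; a
    sentence-level decoder must wait until every window has been recorded.
    """
    return CHUNK_MS if streaming else n_chunks * CHUNK_MS


# A hypothetical 2-second speech attempt spans 25 windows of 80 ms each.
n_chunks = 2000 // CHUNK_MS
print(first_output_delay_ms(n_chunks, streaming=True))   # 80
print(first_output_delay_ms(n_chunks, streaming=False))  # 2000
```

In this simplified picture the streaming floor is a single window, while the sentence-level floor grows with the length of the utterance.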

[5]

A stroke survivor speaks again with the help of an experimental brain-computer implant

Scientists have developed a device that can translate thoughts about speech into spoken words in real time. Although it's still experimental, they hope the brain-computer interface could someday help give voice to those unable to speak. A new study described testing the device on a 47-year-old woman with quadriplegia who couldn't speak for 18 years after a stroke. Doctors implanted it in her brain during surgery as part of a clinical trial. It "converts her intent to speak into fluent sentences," said Gopala Anumanchipalli, a co-author of the study published Monday in the journal Nature Neuroscience. Other brain-computer interfaces, or BCIs, for speech typically have a slight delay between thoughts of sentences and computerized verbalization. Such delays can disrupt the natural flow of conversation, potentially leading to miscommunication and frustration, researchers said. This is "a pretty big advance in our field," said Jonathan Brumberg of the Speech and Applied Neuroscience Lab at the University of Kansas, who was not part of the study. A team in California recorded the woman's brain activity using electrodes while she spoke sentences silently in her brain. The scientists used a synthesizer they built using her voice before her injury to create a speech sound that she would have spoken. They trained an AI model that translates neural activity into units of sound. It works similarly to existing systems used to transcribe meetings or phone calls in real time, said Anumanchipalli, of the University of California, Berkeley. The implant itself sits on the speech center of the brain so that it's listening in, and those signals are translated to pieces of speech that make up sentences. It's a "streaming approach," Anumanchipalli said, with each 80-millisecond chunk of speech - about half a syllable - sent into a recorder. "It's not waiting for a sentence to finish," Anumanchipalli said. "It's processing it on the fly."
Decoding speech that quickly has the potential to keep up with the fast pace of natural speech, said Brumberg. The use of voice samples, he added, "would be a significant advance in the naturalness of speech." Though the work was partially funded by the National Institutes of Health, Anumanchipalli said it wasn't affected by recent NIH research cuts. More research is needed before the technology is ready for wide use, but with "sustained investments," it could be available to patients within a decade, he said. The Associated Press Health and Science Department receives support from the Howard Hughes Medical Institute's Science and Educational Media Group and the Robert Wood Johnson Foundation. The AP is solely responsible for all content.

[6]

Brain-to-Voice AI Streams Natural Speech for People with Paralysis - Neuroscience News

Summary: Researchers have developed a brain-computer interface that can synthesize natural-sounding speech from brain activity in near real time, restoring a voice to people with severe paralysis. The system decodes signals from the motor cortex and uses AI to transform them into audible speech with minimal delay -- less than one second. Unlike previous systems, this method preserves fluency and allows for continuous speech, even generating personalized voices. This breakthrough brings scientists closer to giving people with speech loss the ability to communicate in real-time, using only their brain activity. Marking a breakthrough in the field of brain-computer interfaces (BCIs), a team of researchers from UC Berkeley and UC San Francisco has unlocked a way to restore naturalistic speech for people with severe paralysis. This work solves the long-standing challenge of latency in speech neuroprostheses, the time lag between when a subject attempts to speak and when sound is produced. Using recent advances in artificial intelligence-based modeling, the researchers developed a streaming method that synthesizes brain signals into audible speech in near-real time. As reported in Nature Neuroscience, this technology represents a critical step toward enabling communication for people who have lost the ability to speak. The study is supported by the National Institute on Deafness and Other Communication Disorders (NIDCD) of the National Institutes of Health. "Our streaming approach brings the same rapid speech decoding capacity of devices like Alexa and Siri to neuroprostheses," said Gopala Anumanchipalli, Robert E. and Beverly A. Brooks Assistant Professor of Electrical Engineering and Computer Sciences at UC Berkeley and co-principal investigator of the study. "Using a similar type of algorithm, we found that we could decode neural data and, for the first time, enable near-synchronous voice streaming. The result is more naturalistic, fluent speech synthesis." 
"This new technology has tremendous potential for improving quality of life for people living with severe paralysis affecting speech," said neurosurgeon Edward Chang, senior co-principal investigator of the study. Chang leads the clinical trial at UCSF that aims to develop speech neuroprosthesis technology using high-density electrode arrays that record neural activity directly from the brain surface. "It is exciting that the latest AI advances are greatly accelerating BCIs for practical real-world use in the near future." The researchers also showed that their approach can work well with a variety of other brain sensing interfaces, including microelectrode arrays (MEAs) in which electrodes penetrate the brain's surface, or non-invasive recordings (sEMG) that use sensors on the face to measure muscle activity. "By demonstrating accurate brain-to-voice synthesis on other silent-speech datasets, we showed that this technique is not limited to one specific type of device," said Kaylo Littlejohn, Ph.D. student at UC Berkeley's Department of Electrical Engineering and Computer Sciences and co-lead author of the study. "The same algorithm can be used across different modalities provided a good signal is there."
Decoding neural data into speech
According to study co-lead author Cheol Jun Cho, UC Berkeley Ph.D. student in electrical engineering and computer sciences, the neuroprosthesis works by sampling neural data from the motor cortex, the part of the brain that controls speech production, then uses AI to decode brain function into speech. "We are essentially intercepting signals where the thought is translated into articulation and in the middle of that motor control," he said. "So what we're decoding is after a thought has happened, after we've decided what to say, after we've decided what words to use and how to move our vocal-tract muscles."
To collect the data needed to train their algorithm, the researchers first had Ann, their subject, look at a prompt on the screen -- like the phrase: "Hey, how are you?" -- and then silently attempt to speak that sentence. "This gave us a mapping between the chunked windows of neural activity that she generates and the target sentence that she's trying to say, without her needing to vocalize at any point," said Littlejohn. Because Ann does not have any residual vocalization, the researchers did not have target audio, or output, to which they could map the neural data, the input. They solved this challenge by using AI to fill in the missing details. "We used a pretrained text-to-speech model to generate audio and simulate a target," said Cho. "And we also used Ann's pre-injury voice, so when we decode the output, it sounds more like her."
Streaming speech in near real time
In their previous BCI study, the researchers had a long latency for decoding, about an 8-second delay for a single sentence. With the new streaming approach, audible output can be generated in near-real time, as the subject is attempting to speak. To measure latency, the researchers employed speech detection methods, which allowed them to identify the brain signals indicating the start of a speech attempt. "We can see relative to that intent signal, within 1 second, we are getting the first sound out," said Anumanchipalli. "And the device can continuously decode speech, so Ann can keep speaking without interruption." This greater speed did not come at the cost of precision. The faster interface delivered the same high level of decoding accuracy as their previous, non-streaming approach. "That's promising to see," said Littlejohn. "Previously, it was not known if intelligible speech could be streamed from the brain in real time."
Anumanchipalli added that researchers don't always know whether large-scale AI systems are learning and adapting, or simply pattern-matching and repeating parts of the training data. So the researchers also tested the real-time model's ability to synthesize words that were not part of the training dataset vocabulary -- in this case, 26 rare words taken from the NATO phonetic alphabet, such as "Alpha," "Bravo," "Charlie" and so on. "We wanted to see if we could generalize to the unseen words and really decode Ann's patterns of speaking," he said. "We found that our model does this well, which shows that it is indeed learning the building blocks of sound or voice." Ann, who also participated in the 2023 study, shared with researchers how her experience with the new streaming synthesis approach compared to the earlier study's text-to-speech decoding method. "She conveyed that streaming synthesis was a more volitionally controlled modality," said Anumanchipalli. "Hearing her own voice in near-real time increased her sense of embodiment."
Future directions
This latest work brings researchers a step closer to achieving naturalistic speech with BCI devices, while laying the groundwork for future advances. "This proof-of-concept framework is quite a breakthrough," said Cho. "We are optimistic that we can now make advances at every level. On the engineering side, for example, we will continue to push the algorithm to see how we can generate speech better and faster." The researchers also remain focused on building expressivity into the output voice to reflect the changes in tone, pitch or loudness that occur during speech, such as when someone is excited. "That's ongoing work, to try to see how well we can actually decode these paralinguistic features from brain activity," said Littlejohn. "This is a longstanding problem even in classical audio synthesis fields and would bridge the gap to full and complete naturalism."
Funding: In addition to the NIDCD, support for this research was provided by the Japan Science and Technology Agency's Moonshot Research and Development Program, the Joan and Sandy Weill Foundation, Susan and Bill Oberndorf, Ron Conway, Graham and Christina Spencer, the William K. Bowes, Jr. Foundation, the Rose Hills Innovator and UC Noyce Investigator programs, and the National Science Foundation.
A streaming brain-to-voice neuroprosthesis to restore naturalistic communication
Natural spoken communication happens instantaneously. Speech delays longer than a few seconds can disrupt the natural flow of conversation. This makes it difficult for individuals with paralysis to participate in meaningful dialogue, potentially leading to feelings of isolation and frustration. Here we used high-density surface recordings of the speech sensorimotor cortex in a clinical trial participant with severe paralysis and anarthria to drive a continuously streaming naturalistic speech synthesizer. We designed and used deep learning recurrent neural network transducer models to achieve online large-vocabulary intelligible fluent speech synthesis personalized to the participant's preinjury voice with neural decoding in 80-ms increments. Offline, the models demonstrated implicit speech detection capabilities and could continuously decode speech indefinitely, enabling uninterrupted use of the decoder and further increasing speed. Our framework also successfully generalized to other silent-speech interfaces, including single-unit recordings and electromyography. Our findings introduce a speech-neuroprosthetic paradigm to restore naturalistic spoken communication to people with paralysis.

[7]

Breakthrough brain-to-voice tech brings natural speech within reach for paralyzed patients

Forward-looking: Researchers from the University of California, Berkeley, and the University of California, San Francisco, have developed a brain-computer interface system capable of restoring naturalistic speech to individuals with severe paralysis. The innovation, which addresses a long-standing challenge in speech neuroprostheses, was detailed in a study published in Nature Neuroscience and represents a significant leap forward in enabling real-time communication for those who have lost the ability to speak. The research team overcame the issue of latency - the delay between a person's intent to speak and the production of sound - by leveraging advances in artificial intelligence. Their streaming system decodes neural signals into audible speech in near-real time. "Our streaming approach brings the same rapid speech decoding capacity of devices like Alexa and Siri to neuroprostheses," explained Gopala Anumanchipalli, co-principal investigator and assistant professor at UC Berkeley. "Using a similar type of algorithm, we found that we could decode neural data and, for the first time, enable near-synchronous voice streaming. The result is more naturalistic, fluent speech synthesis." The technology holds immense promise for improving the lives of individuals with conditions such as ALS or stroke-induced paralysis. "It is exciting that the latest AI advances are greatly accelerating BCIs for practical real-world use in the near future," said Edward Chang, a neurosurgeon at UCSF and senior co-principal investigator of the study. The system works by sampling neural data from the motor cortex - the part of the brain responsible for controlling speech production - and using AI to decode this activity into spoken words. The researchers tested their method on Ann, a 47-year-old woman who has been unable to speak since a stroke 18 years ago. 
Ann participated in a clinical trial where electrodes implanted on her brain surface recorded neural activity as she silently attempted to speak sentences displayed on a screen. These signals were then decoded into audible speech using an AI model trained with her pre-injury voice. "We are essentially intercepting signals where the thought is translated into articulation," explained Cheol Jun Cho, a UC Berkeley Ph.D. student and co-lead author of the study. "So what we're decoding is after a thought has happened - after we've decided what to say and how to move our vocal-tract muscles." This approach allowed researchers to map Ann's neural activity to target sentences without requiring her to vocalize. One of the key breakthroughs was achieving near real-time speech synthesis. Previous BCI systems had significant delays - up to eight seconds for decoding a single sentence - but this new method reduced latency dramatically. "We can see relative to that intent signal, within one second, we are getting the first sound out," noted Anumanchipalli. The system also demonstrated continuous decoding capabilities, allowing Ann to "speak" without interruptions. Despite its speed, the system maintained high accuracy in decoding speech. To test its adaptability, researchers evaluated whether it could synthesize words outside its training dataset. Using rare words from the NATO phonetic alphabet like "Alpha" and "Bravo," they confirmed that their model could generalize beyond familiar vocabulary. "We found that our model does this well, which shows that it is indeed learning the building blocks of sound or voice," said Anumanchipalli. Ann herself noted a profound difference between this new streaming approach and earlier text-to-speech methods used in prior studies. According to Anumanchipalli, she described hearing her own voice in near-real time as increasing her sense of embodiment - a critical step toward making BCIs feel more natural. 
The researchers also explored how their system could work with different brain-sensing technologies, including microelectrode arrays (MEAs) that penetrate brain tissue, and non-invasive surface electromyography (sEMG) sensors that detect muscle activity on the face. This versatility suggests broader potential applications across various BCI platforms. The team is now focused on further enhancing and optimizing their technology. One area of ongoing research involves enhancing expressivity by incorporating paralinguistic features such as tone, pitch, and loudness into synthesized speech. "This is a longstanding problem even in classical audio synthesis fields," said Kaylo Littlejohn, another co-lead author and Ph.D. student at UC Berkeley. "It would bridge the gap to full and complete naturalism." While still experimental, this breakthrough offers hope that BCIs capable of restoring fluent speech could become widely available within the next decade with sustained investment and development. The project received funding from organizations including the National Institute on Deafness and Other Communication Disorders (NIDCD), Japan Science and Technology Agency's Moonshot Program, and several private foundations. "This proof-of-concept framework is quite a breakthrough," said Cho. "We are optimistic that we can now make advances at every level."

[8]

Brain-to-voice interface converts thoughts to speech in near-real time

Marking a breakthrough in the field of brain-computer interfaces (BCIs), a team of researchers from UC Berkeley and UC San Francisco has unlocked a way to restore naturalistic speech for people with severe paralysis. This work solves the long-standing challenge of latency in speech neuroprostheses, the time lag between when a subject attempts to speak and when sound is produced. Using recent advances in artificial intelligence-based modeling, the researchers developed a streaming method that synthesizes brain signals into audible speech in near-real time. As reported in Nature Neuroscience, this technology represents a critical step toward enabling communication for people who have lost the ability to speak. "Our streaming approach brings the same rapid speech decoding capacity of devices like Alexa and Siri to neuroprostheses," said Gopala Anumanchipalli, Robert E. and Beverly A. Brooks Assistant Professor of Electrical Engineering and Computer Sciences at UC Berkeley and co-principal investigator of the study. "Using a similar type of algorithm, we found that we could decode neural data and, for the first time, enable near-synchronous voice streaming. The result is more naturalistic, fluent speech synthesis." "This new technology has tremendous potential for improving quality of life for people living with severe paralysis affecting speech," said neurosurgeon Edward Chang, senior co-principal investigator of the study. Chang leads the clinical trial at UCSF that aims to develop speech neuroprosthesis technology using high-density electrode arrays that record neural activity directly from the brain surface. "It is exciting that the latest AI advances are greatly accelerating BCIs for practical real-world use in the near future." 
The researchers also showed that their approach can work well with a variety of other brain sensing interfaces, including microelectrode arrays (MEAs) in which electrodes penetrate the brain's surface, or non-invasive recordings (sEMG) that use sensors on the face to measure muscle activity. "By demonstrating accurate brain-to-voice synthesis on other silent-speech datasets, we showed that this technique is not limited to one specific type of device," said Kaylo Littlejohn, Ph.D. student at UC Berkeley's Department of Electrical Engineering and Computer Sciences and co-lead author of the study. "The same algorithm can be used across different modalities provided a good signal is there." Decoding neural data into speech According to study co-lead author Cheol Jun Cho, UC Berkeley Ph.D. student in electrical engineering and computer sciences, the neuroprosthesis works by sampling neural data from the motor cortex, the part of the brain that controls speech production, then uses AI to decode brain function into speech. "We are essentially intercepting signals where the thought is translated into articulation and in the middle of that motor control," he said. "So what we're decoding is after a thought has happened, after we've decided what to say, after we've decided what words to use and how to move our vocal-tract muscles." To collect the data needed to train their algorithm, the researchers first had Ann, their subject, look at a prompt on the screen -- like the phrase: "Hey, how are you?" -- and then silently attempt to speak that sentence. "This gave us a mapping between the chunked windows of neural activity that she generates and the target sentence that she's trying to say, without her needing to vocalize at any point," said Littlejohn. Because Ann does not have any residual vocalization, the researchers did not have target audio, or output, to which they could map the neural data, the input. 
They solved this challenge by using AI to fill in the missing details. "We used a pretrained text-to-speech model to generate audio and simulate a target," said Cho. "And we also used Ann's pre-injury voice, so when we decode the output, it sounds more like her." Streaming speech in near-real time In their previous BCI study, the researchers had a long latency for decoding, about an 8-second delay for a single sentence. With the new streaming approach, audible output can be generated in near-real time, as the subject is attempting to speak. To measure latency, the researchers employed speech detection methods, which allowed them to identify the brain signals indicating the start of a speech attempt. "We can see relative to that intent signal, within 1 second, we are getting the first sound out," said Anumanchipalli. "And the device can continuously decode speech, so Ann can keep speaking without interruption." This greater speed did not come at the cost of precision. The faster interface delivered the same high level of decoding accuracy as their previous, non-streaming approach. "That's promising to see," said Littlejohn. "Previously, it was not known if intelligible speech could be streamed from the brain in real time." Anumanchipalli added that researchers don't always know whether large-scale AI systems are learning and adapting, or simply pattern-matching and repeating parts of the training data. So the researchers also tested the real-time model's ability to synthesize words that were not part of the training dataset vocabulary -- in this case, 26 rare words taken from the NATO phonetic alphabet, such as "Alpha," "Bravo," "Charlie" and so on. "We wanted to see if we could generalize to the unseen words and really decode Ann's patterns of speaking," he said. "We found that our model does this well, which shows that it is indeed learning the building blocks of sound or voice." 
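The latency measurement described above can be illustrated with a small sketch. It assumes a hypothetical per-chunk speech-detection score and a hypothetical record of which chunks produced audible output. The threshold, function names, and sample values are illustrative assumptions and are not taken from the study; only the 80-millisecond increment comes from the reporting.

```python
CHUNK_MS = 80  # decoding increment reported for the streaming system


def detect_onset(scores, threshold=0.5):
    """Return the index of the first chunk whose (hypothetical)
    speech-detection score reaches the threshold, or None."""
    for i, score in enumerate(scores):
        if score >= threshold:
            return i
    return None


def first_sound_latency_ms(scores, audio_emitted, threshold=0.5):
    """Time in ms from the detected speech onset to the first chunk
    that produced audible output, or None if either is missing."""
    onset = detect_onset(scores, threshold)
    if onset is None:
        return None
    for i in range(onset, len(audio_emitted)):
        if audio_emitted[i]:
            return (i - onset) * CHUNK_MS
    return None


# Made-up example: intent is detected at chunk 2, first audio at chunk 6.
scores = [0.1, 0.2, 0.7, 0.9, 0.9, 0.8, 0.9, 0.9]
audio = [False, False, False, False, False, False, True, True]
latency = first_sound_latency_ms(scores, audio)
print(latency)
```

In this made-up example the gap is four chunks, which is well inside the one-second figure quoted by Anumanchipalli. The real system's detection method is not described in enough detail here to reproduce it.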
Ann, who also participated in the 2023 study, shared with researchers how her experience with the new streaming synthesis approach compared to the earlier study's text-to-speech decoding method. "She conveyed that streaming synthesis was a more volitionally controlled modality," said Anumanchipalli. "Hearing her own voice in near-real time increased her sense of embodiment." Future directions This latest work brings researchers a step closer to achieving naturalistic speech with BCI devices, while laying the groundwork for future advances. "This proof-of-concept framework is quite a breakthrough," said Cho. "We are optimistic that we can now make advances at every level. On the engineering side, for example, we will continue to push the algorithm to see how we can generate speech better and faster." The researchers also remain focused on building expressivity into the output voice to reflect the changes in tone, pitch or loudness that occur during speech, such as when someone is excited. "That's ongoing work, to try to see how well we can actually decode these paralinguistic features from brain activity," said Littlejohn. "This is a longstanding problem even in classical audio synthesis fields and would bridge the gap to full and complete naturalism."

[9]

Brain waves become spoken words in AI breakthrough for paralysis

Researchers from UC Berkeley and UC San Francisco connect their subject Ann's brain implant to the voice synthesizer computer

California-based researchers have developed an AI-powered system to restore natural speech for paralyzed people in real time and using their own voices. This new technology, from researchers at the University of California, Berkeley and the University of California, San Francisco, takes advantage of devices that can tap into the brain to measure neural activity, along with AI that actually learns how to build the sounds of a patient's voice. That's far ahead of advancements as recent as last year in the field of brain-computer interfaces for synthesizing speech. "Our streaming approach brings the same rapid speech decoding capacity of devices like Alexa and Siri to neuroprostheses," explained Gopala Anumanchipalli, an assistant professor of electrical engineering and computer sciences at UC Berkeley and co-principal investigator of the study that appeared this week in Nature Neuroscience. "Using a similar type of algorithm, we found that we could decode neural data and, for the first time, enable near-synchronous voice streaming. The result is more naturalistic, fluent speech synthesis." What's neat about this tech is that it can work effectively with a range of brain sensing interfaces. That includes high-density electrode arrays that record neural activity directly from the brain surface (like the setup the researchers used), as well as microelectrodes that penetrate the brain's surface, and non-invasive surface electromyography (sEMG) sensors on the face to measure muscle activity. Here's how it works. First, the neuroprosthesis fitted to the patient samples neural data from their brain's motor cortex, which controls speech production. AI then decodes that data into speech.

Cheol Jun Cho, who co-authored the paper, explained, "... what we're decoding is after a thought has happened, after we've decided what to say, after we've decided what words to use and how to move our vocal-tract muscles." That AI was trained on brain function data captured from the patient silently attempting to speak the words that appeared on a screen in front of them. This allowed the team to map the neural activity to the words they were trying to say. In addition, a text-to-speech model - developed using the patient's own voice before they were injured and paralyzed - generates the audio that you can hear from the patient 'speaking.' In the proof-of-concept demonstration, it appears the resulting speech isn't entirely perfect or completely naturally paced, but it's darn close. The system begins decoding brain signals and outputting speech within a second of the patient attempting to speak; that's down from 8 seconds in a previous study the team conducted in 2023. This could greatly improve the quality of life for people with paralysis and similar debilitating conditions like ALS, by helping them communicate everything from their day-to-day needs to their complex thoughts, and connect with loved ones more naturally. The researchers' next steps will see them speed up the AI's processing to generate speech more quickly, and explore ways to make the output voice more expressive.

[10]

US: Brain-to-speech breakthrough helps paralyzed people talk again

They created a real-time method for converting brain signals into spoken words. The key to this development is an AI-powered streaming method. By decoding brain signals directly from the motor cortex - the brain's speech control center - the AI synthesizes audible speech almost instantly. "Our streaming approach brings the same rapid speech decoding capacity of devices like Alexa and Siri to neuroprostheses," said Gopala Anumanchipalli, co-principal investigator of the study. Anumanchipalli added, "Using a similar type of algorithm, we found that we could decode neural data and, for the first time, enable near-synchronous voice streaming. The result is more naturalistic, fluent speech synthesis." This neuroprosthesis translates brain activity related to speech (from the motor cortex) into spoken words using AI. "We are essentially intercepting signals where the thought is translated into articulation and in the middle of that motor control. So what we're decoding is after a thought has happened, after we've decided what to say, after we've decided what words to use and how to move our vocal-tract muscles," explained Cheol Jun Cho, co-lead author.

[11]

Mind-reading device allows paralysed people to speak fluently

A mind-reading device that allows paralysed patients to speak fluently just by thinking has been developed. The technology can quickly decode brain signals produced by the motor cortex when a person wants to say a word before translating them into sound waves that can be "spoken" by a synthesised voice. Although similar devices have been trialled in the past, there has always been a lengthy delay between a person thinking a word and it being said out loud, making it tricky to form coherent sentences. However, a team at the University of California have used the same rapid speech-decoding capacity of AI devices such as Alexa and Siri to help speed up the process and produce more natural speech.

'Intercepting signals'

Cheol Jun Cho, a doctoral student at UC Berkeley, said: "We are essentially intercepting signals where the thought is translated into articulation.

"So what we're decoding is after a thought has happened, after we've decided what to say, after we've decided what words to use and how to move our vocal-tract muscles.

"This proof-of-concept framework is quite a breakthrough. We will continue to push the algorithm to see how we can generate speech better and faster."

Many people are unable to speak because of paralysis, disease or injury and current speech generators - which often involve gazing at individual words or letters on a screen - are time-consuming and laborious. The new device was trialled on a paralysed patient named Ann, who had an electronic array implanted over her motor cortex, to pick up her brain signals. She was then asked to look at phrases on a screen, such as "Hey, how are you?" and silently attempt to speak the sentences. The programme was able to pick out chunks of neural activity behind certain sounds so they could be reproduced as a synthesised voice.

[12]

Brain implant turns thoughts into speech in near real-time

A brain implant using artificial intelligence was able to turn a paralyzed woman's thoughts into speech almost simultaneously, US researchers said Monday. Though still at the experimental stage, the latest achievement using an implant linking brains and computers raised hopes that these devices could allow people who have lost the ability to communicate to regain their voice. The California-based team of researchers had previously used a brain-computer interface (BCI) to decode the thoughts of Ann, a 47-year-old with quadriplegia, and translate them into speech. However, there was an eight-second delay between her thoughts and the speech being read aloud by a computer. This meant a flowing conversation was still out of reach for Ann, a former high school math teacher who has not been able to speak since suffering a stroke 18 years ago. But the team's new model, revealed in the journal Nature Neuroscience, turned Ann's thoughts into a version of her old speaking voice in 80-millisecond increments. "Our new streaming approach converts her brain signals to her customized voice in real time, within a second of her intent to speak," senior study author Gopala Anumanchipalli of the University of California, Berkeley told AFP. Ann's eventual goal is to become a university counselor, he added. "While we are still far from enabling that for Ann, this milestone takes us closer to drastically improving the quality of life of individuals with vocal paralysis."

'Excited to hear her voice'

For the research, Ann was shown sentences on a screen -- such as "You love me then" -- which she would say to herself in her mind. Then her thoughts would be converted into her voice, which the researchers built up from recordings of her speaking before she was injured. Ann was "very excited to hear her voice, and reported a sense of embodiment," Anumanchipalli said.
The BCI intercepts brain signals "after we've decided what to say, after we've decided what words to use and how to move our vocal tract muscles," study co-author Cheol Jun Cho explained in a statement. The model uses an artificial intelligence method called deep learning that was trained on Ann previously attempting to silently speak thousands of sentences. It was not always accurate -- and still has a limited vocabulary of 1,024 words. Patrick Degenaar, a neuroprosthetics professor at the UK's Newcastle University not involved in the study, told AFP that this is "very early proof of principle" research. But it is still "very cool," he added. Degenaar pointed out that this system uses an array of electrodes that do not penetrate the brain, unlike the BCI used by billionaire Elon Musk's Neuralink firm. The surgery for installing these arrays is relatively common in hospitals for diagnosing epilepsy, which means this technology would be easier to roll out en masse, he added. With proper funding, Anumanchipalli estimated the technology could be helping people communicate in five to 10 years.

[13]

AI Restores Speech to Paralyzed Stroke Survivor After Decades of Silence - Decrypt

After 18 years without speech, a woman paralyzed by a stroke has regained her voice through an experimental brain-computer interface developed by researchers at the University of California, Berkeley, and UC San Francisco. The research, published in Nature Neuroscience on Monday, utilized artificial intelligence to translate the thoughts of the participant, known as "Ann," into natural speech in real time. "Unlike vision, motion, or hunger -- shared with other species -- speech sets us apart. That alone makes it a fascinating research topic," Gopala Anumanchipalli, assistant professor of electrical engineering and computer sciences at UC Berkeley, told Decrypt. "It's still one of the big unknowns: how intelligent behavior emerges from neurons and cortical tissue." The study used a brain-computer interface to create a direct pathway between the electrical signals of Ann's brain and a computer. As Anumanchipalli explained, the interface reads neural signals using a grid of electrodes placed on the brain's speech center. "But it became clear there are conditions -- like ALS, brainstem stroke, or injury -- where the body becomes inaccessible, and the person is 'locked in.' They're cognitively intact but unable to move or speak," Anumanchipalli said. Anumanchipalli noted that while significant progress has been made in creating artificial limbs, restoring speech remains more complex. "Both are motor systems, but limb movement is a simpler problem than mouth movement, which involves more joints and muscles," he said. "Arm restoration is also something we pursue." Emphasizing the importance of rapid responses in conversation, Anumanchipalli said that with machine learning and custom AI algorithms, the brain-computer interface converted Ann's brain signals into speech within a second using a synthetic voice generator.

"We recorded Ann's attempts and used audio from before her injury -- her wedding video. It was a 20-year-old clip, but we digitally recreated a synthetic voice," Anumanchipalli said. "Then, we matched her brain's attempt to speak with that voice to generate synthetic speech." While technology enabled Ann to speak, Anumanchipalli gave her credit for doing the most difficult part of the process. "The real driver here is Ann. Her brain does the heavy lifting -- we're just reading what it's trying to do," Anumanchipalli said. "AI fills in some gaps, but Ann is the main character. The brain evolved over millions of years to do this -- fluid communication is what it was built for." Ann's breakthrough is part of a broader movement in brain-computer interface research, which has attracted major players in neuroscience and tech, including Elon Musk's Neuralink. On Wednesday, Neuralink opened the patient registry for its PRIME Study to applicants worldwide. Rather than relying on publicly available artificial intelligence models, the team built a system from the ground up specifically for Ann. "We haven't used anything off the shelf. Everything we're using is custom-made for Ann. We're not licensing AI from any other company," Anumanchipalli said. "We're AI engineers and scientists -- we design our own work, customized for Ann. AI as a black box isn't appropriate, especially in healthcare, where one size doesn't fit all. We have to reimagine and custom-make solutions for each person." Anumanchipalli said building a proprietary AI was not only about specialization but preserving user privacy as well. "The goal is to preserve privacy. We're not sending her signals to a company in Silicon Valley. We're designing software that stays with her," he said. "Eventually, this will be a standalone device, powered by her own body, working locally -- so no one else controls what she's trying to say." Anumanchipalli highlighted the importance of public funding in developing brain-computer interface research.

"Projects like this drive innovation beyond what we can imagine, with downstream applications. Federal funding from the U.S. National Institutes of Health and National Institute on Deafness and Other Communication Disorders made this possible," he said. "Philanthropic and private funding is also welcome to push it forward. This is the frontier of what we can achieve together." Looking to the future, Anumanchipalli hopes researchers will double down on efforts to return speech using technology. "Fortunately, the effort has received strong support. I hope the human element remains central," he said. "People like Ann have volunteered their time and effort into something that doesn't promise them anything but explores therapies for others like them -- and that's important."

[14]

A stroke survivor speaks again with the help of an experimental brain-computer implant

Scientists have developed a device that can translate thoughts about speech into spoken words in real time. Although it's still experimental, they hope the brain-computer interface could someday help give voice to those unable to speak. A new study described testing the device on a 47-year-old woman with quadriplegia who couldn't speak for 18 years after a stroke. Doctors implanted it in her brain during surgery as part of a clinical trial. It "converts her intent to speak into fluent sentences," said Gopala Anumanchipalli, a co-author of the study published Monday in the journal Nature Neuroscience. Other brain-computer interfaces, or BCIs, for speech typically have a slight delay between thoughts of sentences and computerized verbalization. Such delays can disrupt the natural flow of conversation, potentially leading to miscommunication and frustration, researchers said. This is "a pretty big advance in our field," said Jonathan Brumberg of the Speech and Applied Neuroscience Lab at the University of Kansas, who was not part of the study. A team in California recorded the woman's brain activity using electrodes while she spoke sentences silently in her brain. The scientists used a synthesizer they built using her voice before her injury to create a speech sound that she would have spoken. They trained an AI model that translates neural activity into units of sound. It works similar to existing systems used to transcribe meetings or phone calls in real time, said Anumanchipalli, of the University of California, Berkeley. The implant itself sits on the speech center of the brain so that it's listening in, and those signals are translated to pieces of speech that make up sentences. It's a "streaming approach," Anumanchipalli said, with each 80-millisecond chunk of speech -- about half a syllable -- sent into a recorder. "It's not waiting for a sentence to finish," Anumanchipalli said. "It's processing it on the fly." 
Decoding speech that quickly has the potential to keep up with the fast pace of natural speech, said Brumberg. The use of voice samples, he added, "would be a significant advance in the naturalness of speech." Though the work was partially funded by the National Institutes of Health, Anumanchipalli said it wasn't affected by recent NIH research cuts. More research is needed before the technology is ready for wide use, but with "sustained investments," it could be available to patients within a decade, he said.
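The streaming approach described in this article, in which each 80-millisecond chunk is processed as it arrives rather than after a full sentence, can be sketched roughly as follows. This is a minimal illustration only: the sample rate, the function names, and the placeholder decoder (a simple average) are assumptions made for the example and do not represent the researchers' model.

```python
from typing import Callable, Iterator, List

CHUNK_MS = 80  # chunk length reported in the article


def chunk_signal(samples: List[float], sample_rate_hz: int) -> Iterator[List[float]]:
    """Yield consecutive, non-overlapping 80 ms windows of the signal."""
    size = sample_rate_hz * CHUNK_MS // 1000
    for start in range(0, len(samples) - size + 1, size):
        yield samples[start:start + size]


def stream_decode(
    chunks: Iterator[List[float]],
    decode_chunk: Callable[[List[float]], float],
) -> Iterator[float]:
    """Decode each chunk as soon as it is available, on the fly."""
    for chunk in chunks:
        yield decode_chunk(chunk)


def placeholder_decoder(chunk: List[float]) -> float:
    """Stand-in for a real neural decoder: returns the chunk average."""
    return sum(chunk) / len(chunk)


# Hypothetical 200 Hz signal: 16 samples per 80 ms chunk, 3 chunks total.
signal = [float(i) for i in range(48)]
stream = stream_decode(chunk_signal(signal, 200), placeholder_decoder)
first_output = next(stream)  # available after one chunk, not the whole signal
remaining = list(stream)
print(first_output, remaining)
```

Because `stream_decode` is a generator, the first output is produced after a single chunk has been read, which mirrors the "not waiting for a sentence to finish" behaviour Anumanchipalli describes.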

[15]

Experimental implant translates thoughts to speech

Scientists trained an AI model that translates brain activity into sound to help a stroke survivor regain speech. Scientists have developed a device that can translate thoughts about speech into spoken words in real time. Although it's still experimental, they hope the brain-computer interface could someday help give voice to those unable to speak. A new study described testing the device on a 47-year-old woman with quadriplegia who couldn't speak for 18 years after a stroke. Doctors implanted it in her brain during surgery as part of a clinical trial. It "converts her intent to speak into fluent sentences," said Gopala Anumanchipalli, a co-author of the study, which was published in the journal Nature Neuroscience. Other brain-computer interfaces (BCIs) for speech typically have a slight delay between thoughts of sentences and computerised verbalisation. Such delays can disrupt the natural flow of conversation, potentially leading to miscommunication and frustration, researchers said. This is "a pretty big advance in our field," said Jonathan Brumberg of the Speech and Applied Neuroscience Lab at the University of Kansas in the US. He was not part of the study. How the implant works A team in California recorded the woman's brain activity using electrodes while she spoke sentences silently in her brain. The scientists used a synthesiser they had built using her voice before her injury to create a speech sound that she would have spoken. Then they trained an artificial intelligence (AI) model that translates neural activity into units of sound. It works similarly to existing systems used to transcribe meetings or phone calls in real time, said Anumanchipalli, of the University of California, Berkeley, in the US. The implant itself sits on the speech centre of the brain so that it's listening in, and those signals are translated to pieces of speech that make up sentences. 
It's a "streaming approach," Anumanchipalli said, with each 80-millisecond chunk of speech - about half a syllable - sent into a recorder. "It's not waiting for a sentence to finish," Anumanchipalli said. "It's processing it on the fly". Decoding speech that quickly has the potential to keep up with the fast pace of natural speech, said Brumberg. The use of voice samples, he added, "would be a significant advance in the naturalness of speech". Though the work was partially funded by the US National Institutes of Health (NIH), Anumanchipalli said it wasn't affected by recent NIH research cuts. More research is needed before the technology is ready for wide use, but with "sustained investments," it could be available to patients within a decade, he said.

[16]

A stroke survivor speaks again with the help of an experimental brain-computer implant

Scientists have developed a device that can translate thoughts about speech into spoken words in real time. Although it's still experimental, they hope the brain-computer interface could someday help give voice to those unable to speak. A new study described testing the device on a 47-year-old woman with quadriplegia who couldn't speak for 18 years after a stroke. Doctors implanted it in her brain during surgery as part of a clinical trial. It "converts her intent to speak into fluent sentences," said Gopala Anumanchipalli, a co-author of the study published Monday in the journal Nature Neuroscience. Other brain-computer interfaces, or BCIs, for speech typically have a slight delay between thoughts of sentences and computerized verbalization. Such delays can disrupt the natural flow of conversation, potentially leading to miscommunication and frustration, researchers said. This is "a pretty big advance in our field," said Jonathan Brumberg of the Speech and Applied Neuroscience Lab at the University of Kansas, who was not part of the study. A team in California recorded the woman's brain activity using electrodes while she spoke sentences silently in her brain. The scientists used a synthesizer they built using her voice before her injury to create a speech sound that she would have spoken. They trained an AI model that translates neural activity into units of sound. It works similar to existing systems used to transcribe meetings or phone calls in real time, said Anumanchipalli, of the University of California, Berkeley. The implant itself sits on the speech center of the brain so that it's listening in, and those signals are translated to pieces of speech that make up sentences. It's a "streaming approach," Anumanchipalli said, with each 80-millisecond chunk of speech - about half a syllable - sent into a recorder. "It's not waiting for a sentence to finish," Anumanchipalli said. "It's processing it on the fly." 
Decoding speech that quickly has the potential to keep up with the fast pace of natural speech, said Brumberg. The use of voice samples, he added, "would be a significant advance in the naturalness of speech." Though the work was partially funded by the National Institutes of Health, Anumanchipalli said it wasn't affected by recent NIH research cuts. More research is needed before the technology is ready for wide use, but with "sustained investments," it could be available to patients within a decade, he said. ___ The Associated Press Health and Science Department receives support from the Howard Hughes Medical Institute's Science and Educational Media Group and the Robert Wood Johnson Foundation. The AP is solely responsible for all content.

[18]

Brain Implant Lets Woman Talk After 18 Years of Silence Due to Stroke

TUESDAY, April 1, 2025 (HealthDay News) -- For nearly two decades, a stroke had left a woman unable to speak -- until now. Thanks to a new brain implant, her thoughts are being turned into real-time speech, giving her a voice again for the first time in 18 years. The device was tested on a 47-year-old woman with quadriplegia who lost her ability to speak after a stroke. Doctors placed the brain-computer implant during surgery as part of a clinical trial, The Associated Press reported. It "converts her intent to speak into fluent sentences," said Gopala Anumanchipalli, an assistant professor of electrical engineering and computer sciences at UC Berkeley and a co-author of the study published Monday in the journal Nature Neuroscience. Unlike other brain-computer systems, which introduce a delay, this new technology works in real time. Scientists say that the delay in existing systems makes conversations hard and, sometimes, frustrating. Jonathan Brumberg of the Speech and Applied Neuroscience Lab at the University of Kansas, who reviewed the findings, welcomed the advances. This is "a pretty big advance in our field," he told The Associated Press. Here's how it works: The team recorded the woman's brain activity using electrodes as she silently imagined saying sentences. They also used a synthesizer built from recordings of her voice before her stroke to re-create the sound she would have made. An AI model was trained to translate her brain signals into sounds. The system sends each small sound unit -- roughly a half-syllable -- into a recorder every 80 milliseconds. That means it works quickly, much like real-time transcription of a phone call. "It's not waiting for a sentence to finish," Anumanchipalli said. "It's processing it on the fly." Decoding speech that fast could help the device keep up with the speed of natural conversation, Brumberg added. Using a person's actual voice "would be a significant advance in the naturalness of speech."
Though the research was partly funded by the National Institutes of Health, Anumanchipalli said it was not affected by recent cuts to NIH funding. He added that more research is still needed, but with "sustained investments," the device could be available to patients within 10 years.
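
The streaming idea described above, in which a sound unit is emitted for every 80-millisecond chunk rather than after a full sentence, can be illustrated with a minimal Python sketch. It is not the researchers' code: the sample rate, the function names `stream_chunks` and `decode_stream`, and the `toy_decoder` stand-in for the trained AI model are all assumptions made for illustration. Only the 80-millisecond chunk length comes from the reporting.

```python
from typing import Callable, Iterable, Iterator, List, Tuple

CHUNK_MS = 80  # chunk length reported in the coverage above


def stream_chunks(samples: Iterable[float], sample_rate_hz: int,
                  chunk_ms: int = CHUNK_MS) -> Iterator[List[float]]:
    """Yield fixed-length chunks as soon as each one is full."""
    chunk_len = sample_rate_hz * chunk_ms // 1000  # samples per chunk
    if chunk_len <= 0:
        raise ValueError("sample rate too low for the chosen chunk length")
    buffer: List[float] = []
    for sample in samples:
        buffer.append(sample)
        if len(buffer) == chunk_len:
            yield buffer
            buffer = []
    # A trailing partial chunk is simply dropped in this sketch.


def decode_stream(samples: Iterable[float], sample_rate_hz: int,
                  decode_chunk: Callable[[List[float]], str],
                  ) -> Iterator[Tuple[int, str]]:
    """Decode chunk by chunk, without waiting for the whole signal.

    Yields (time in ms at which the chunk was complete, decoded unit).
    """
    for index, chunk in enumerate(stream_chunks(samples, sample_rate_hz)):
        yield (index + 1) * CHUNK_MS, decode_chunk(chunk)


def toy_decoder(chunk: List[float]) -> str:
    """Hypothetical placeholder for the trained model (assumption)."""
    return "unit" if sum(chunk) / len(chunk) > 0 else "silence"


if __name__ == "__main__":
    # One second of made-up signal at an assumed 200 Hz sample rate.
    signal = [1.0] * 100 + [-1.0] * 100
    for ready_at_ms, unit in decode_stream(signal, 200, toy_decoder):
        print(ready_at_ms, unit)
```

In this sketch the first output is available after 80 milliseconds of signal. A sentence-level decoder would instead have to collect the entire one-second signal before producing anything, which is the delay the streaming design is meant to avoid.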

Researchers develop a brain-computer interface that can translate thoughts into audible speech almost instantly, potentially revolutionizing communication for people with severe paralysis.

Breakthrough in Brain-Computer Interface Technology

Researchers from the University of California, Berkeley and the University of California, San Francisco have achieved a significant breakthrough in brain-computer interface (BCI) technology. They have developed a system that can translate neural signals from the brain of a person with paralysis into audible speech in near real time [1].

The Technology Behind the Breakthrough

The system uses a brain implant with 253 electrodes placed on the surface of the brain's cortex, specifically in the area responsible for speech motor control. This implant, known as a high-density electrocorticography (ECoG) array, can record the combined activity of thousands of neurons simultaneously [2].

AI-Powered Decoding and Speech Synthesis

The breakthrough relies heavily on artificial intelligence algorithms. These algorithms decode the neural signals and transform them into spoken sentences using a synthetic voice. The voice is personalized to sound like the patient's own voice before her injury, using AI algorithms trained on recordings from her wedding video [1].

Near Real-Time Performance

Unlike previous efforts that could only produce sounds after users finished an entire sentence, this approach can detect words and convert them into speech within three seconds. The system samples brain signals every 80 milliseconds, starting 500 milliseconds before the patient begins to silently say the sentences [1][3].

Clinical Trial and Patient Experience

The study's participant, identified as Ann, lost her ability to speak after a stroke in 2005. During the trial, Ann silently mouthed 100 sentences from a set of 1,024 words and 50 phrases that appeared on a screen. The BCI device captured her neural signals and produced between 47 and 90 words per minute [1][4].

Potential Impact and Future Developments

This technology represents a critical step toward enabling communication for people who have lost the ability to speak due to severe paralysis. The researchers are optimistic about further improvements, including enhancing the algorithm to generate speech better and faster [3][5].

Broader Applications and Compatibility

The researchers demonstrated that their approach could work well with various brain sensing interfaces, including microelectrode arrays and non-invasive recordings. This versatility suggests potential applications beyond the current focus on paralysis patients [3].

While the technology is still experimental, researchers hope that with sustained investments, it could be available to patients within a decade, potentially transforming the lives of those unable to communicate verbally [5].

Summarized by Navi
