ByteDance's Seedance 2.0 AI video tool impresses audiences but raises deepfake concerns

10 Sources

[1]

TikTok-Linked AI Video Tool Debuts With a Catch for the US

It remains unclear when general audiences will be able to access Seedance 2.0 and whether it will be available in the US, given that the company had to relinquish most of its TikTok ownership due to security and privacy concerns. So far, Seedance 2.0 is impressing AI enthusiasts, many of whom say it is more advanced than offerings from companies such as OpenAI, which offers video generation through its Sora 2 AI tool. A representative for ByteDance did not immediately respond to a request for comment. (Disclosure: Ziff Davis, CNET's parent company, in April filed a lawsuit against OpenAI, alleging it infringed Ziff Davis copyrights in training and operating its AI systems.) According to information on various ByteDance websites, Seedance 2.0 is an AI video model that will be available across several of its tools, including Dreamina, a creator software suite from its video editing service CapCut. Seedance can pull together multiple types of clips, such as video, audio and images, generate videos based on a thumbnail of a person's face, and do things that other video generators struggle with, like maintaining consistent character and object features and displaying unified fonts and text. Some of the examples that have made their way onto social media include realistic-looking dance scenes, martial-arts fights, explosive battles and entire short films generated from a text prompt. Other AI software such as TopView AI and Atlas Cloud appear to be planning to incorporate Seedance 2.0 into their video-generator suites. On the Atlas Cloud website, Seedance 2.0 AI generation is said to be arriving later this month. Video generators are proving to be popular, but companies making these AI tools have faced legal issues and other troubles. Disney and other companies last year sued AI image and video company Midjourney over copyright issues. Kuaishou was recently fined by the Chinese government for failing to curb pornography on its AI platforms.

[2]

ByteDance's next-gen AI model can generate clips based on text, images, audio, and video

Big Tech's race to leapfrog the latest AI models continues with the launch of ByteDance's next-gen video generator. In a blog post, ByteDance - the China-based company behind TikTok - says Seedance 2.0 supports prompts that combine text, images, video, and audio. The company claims it "delivers a substantial leap in generation quality," offering improvements in generating complex scenes with multiple subjects and its ability to follow instructions. Users can refine their text prompts by feeding Seedance 2.0 up to nine images, three video clips, and three audio clips. The model can generate up to 15-second clips with audio, while taking camera movement, visual effects, and motion into account. It can also reference text-based storyboards, according to ByteDance. AI-powered video generation models have only gotten more advanced within the past year, with Google Veo 3 adding the ability to generate audio-supported clips, and OpenAI launching Sora 2 along with a new app that allows users to create videos with "hyperreal motion and sound." The AI startup, Runway, has also released a new version of its AI video model that it claims has "unprecedented" accuracy. In one example shared by ByteDance, which shows two figure skaters performing a routine together, the company says Seedance 2.0 can "reliably perform a sequence of high-difficulty movements -- including synchronized takeoffs, mid-air spins and precise ice landings -- while strictly following real-world physical laws." Users on social media have already started showing off what the new tool can do, with one person posting an AI-generated video with the likenesses of Brad Pitt and Tom Cruise in a cinematic fight sequence. Deadpool writer Rhett Reese reposted the video with the comment, "I hate to say it. It's likely over for us." Other posts demonstrate Seedance 2.0's ability to generate anime-style clips, cartoons, cinematic sci-fi scenes, and videos that look like a content creator made them. 
It's not clear what (if any) copyright protections Seedance 2.0 offers, as a quick search on X reveals a bunch of clips featuring characters from Dragon Ball Z, Family Guy, Pokémon, and more. For now, Seedance 2.0 is only available through ByteDance's Dreamina AI platform and through its AI assistant, Doubao. It's unclear whether it will make its way to TikTok -- especially now that the app is under new ownership in the US.

[3]

A New AI Video Model From ByteDance is Making Waves

It may have just lost control of TikTok U.S., but this weekend ByteDance released Seedance 2.0 -- an AI video generator that is turning heads. CNBC reports that Seedance's headline feature is something called "multi-lens storytelling". AI video is often short, but this model will create several scenes while keeping the same style and characters. The AI can reportedly generate 2K video 30% faster than other models. It can be prompted with an image, a short video, text, or audio. There have been some impressive examples shared online. See below. Beijing-friendly newspaper South China Morning Post reports that the release of the beta model and subsequent buzz has sent the stock prices of Chinese AI firms upwards. SCMP notes that Seedance V2 is currently only available to a few select users of Jimeng AI, which is ByteDance's AI video platform. The feedback has been overwhelmingly positive. Seedance 2.0 provides precise camera controls and editing tools. "With its reality enhancements, I feel it's very hard to tell whether a video is generated by AI," Wang Lei, a programmer from China's southern Guangdong province, tells SCMP. That AI video is getting good is no surprise. It was always obvious that video would eventually catch up with still images and text generators. But where AI video will lead to is an intriguing question. Last week, PetaPixel reported that famed director Darren Aronofsky has released a series of AI-generated short films called 'On This Day... 1776' that mark the semiquincentennial anniversary of the American Revolution. Aronofsky's project has been met with widespread derision. Yet the two videos released so far have racked up hundreds of thousands of views. Whether those numbers come from people who are morbidly curious about a respected filmmaker apparently damaging his own career, or they're genuinely popular remains to be seen. AI is undoubtedly being used to enhance productions in small, background ways. 
But a fully AI-generated production still seems unlikely to be loved. Despite countless AI influencers declaring "Hollywood is cooked", will audiences really ever connect with dead-eyed AI characters? Right now it's difficult to see that happening.

[4]

China's new AI video tools close the uncanny valley for good

Every TV and movie critic is loving to hate on Darren Aronofsky these days. The Academy Award-nominated filmmaker -- creator of lyrical, surreal, and deeply human movies like Black Swan, The Whale, Mother!, and Pi -- has released an AI-generated series called On This Day . . . 1776 to commemorate the semiquincentennial anniversary of the American Revolution. Though the series has garnered millions of views, commentators everywhere call it "a horror," slamming Aronofsky's work for how stiff the faces look, how everything morphs unrealistically. Although calling it "requiem for a filmmaker" seems excessive, they are not wrong about these faults. The series, created using real human voice-overs and Google's generative video AI, does suffer from "uncanny valley syndrome" (our brains can very easily detect what's off with faces, and we don't buy it as real, feeling an automatic repulsion). But this month, two new generative AI models from China have closed the valley's gap: Kling 3.0 and Seedance 2.0. For the first time, AI is generating video content that is truly indistinguishable from film, with the time and subject coherence that will make the 2020s "It's AI slop!" crybabies disappear like their predecessors in the aughts ("It's CGI!") and the 1990s ("It's Photoshop!"). Seedance 2.0, developed by TikTok parent company ByteDance, released in beta on February 9 -- exclusively in China for now. It's widely considered the first "director's tool." Unlike previous models that gave the feeling you were pulling a slot machine lever and hoping for a coherent result, Seedance allows for what analysts at Chinese investment firm Kaiyuan Securities call director-level control. It achieves this through a breakthrough multimodal input system. ByteDance has redesigned its model to accept images, videos, audio, and text simultaneously as inputs, rather than relying on text prompts alone. 
A creator can upload up to a dozen reference files -- mixing character sheets, specific camera movement demos, and audio tracks -- and the AI will synthesize them into a scene that follows cinematic logic.

[5]

ByteDance's new Seedance 2.0 supposedly 'surpasses Sora 2'

Seedance 2.0 says it generates 'cinematic content' with 'seamless video extension and natural language control'. TikTok-parent ByteDance launched the pre-release version of its new AI video model, called Seedance 2.0, over the weekend. Positive response to the launch drove up shares in Chinese AI firms. Seedance 2.0 markets itself as a "true" multi-modal AI creator, allowing users to combine images, videos, audio, and text to generate "cinematic content" with "precise reference capabilities, seamless video extension, and natural language control". The model is currently available to select users of Jimeng AI, ByteDance's AI video platform. The new Seedance model allows exporting in 2K with 30pc faster generation than the previous version 1.5, the company's website read. Swiss-based consultancy CTOL called it the "most advanced AI video generation model available", "surpassing OpenAI's Sora 2 and Google's Veo 3.1 in practical testing". Data compiled by Bloomberg earlier today showed publishing company COL Group Co hit its 20pc daily price ceiling, while Shanghai Film Co and gaming and entertainment firm Perfect World Co rose by 10pc. Meanwhile, the Shanghai Shenzhen CSI 300 Index is up by 1.63pc at the time of publishing. Consumer AI video generators have advanced by leaps in just a short period of time. The usual tells in AI videos - blurry fingers, overly smooth and unrealistic skin, and inexplicable changes from frame to frame - are all becoming extremely hard to notice. While rivalling AI video generator Sora 2 by OpenAI produces results with watermarks (although there's no shortage of tutorials on how to remove them), and Google's Veo 3.1 comes with a metadata watermark called SynthID, Seedance boasts that its results are "completely watermark-free". The prevalence of advanced AI tools coupled with ease of access has opened the gates to a new wave of AI deepfakes, with the likes of xAI's Grok at the centre of the issue.
Last month, the EU launched a new investigation into X to probe whether the Elon Musk-owned social media site properly assessed and mitigated risks stemming from its in-platform AI chatbot Grok after it was outfitted with the ability to edit images. Users on the social media site quickly prompted the tool to undress people - generally women and children - in images and videos. Millions of such pieces of content were generated on X, The New York Times found.

[6]

ByteDance's new AI video tool is getting scary good - Phandroid

ByteDance dropped Seedance 2.0 over the weekend, and the Seedance 2.0 AI video generator is already turning heads. The company behind TikTok quietly released its latest AI video tool without much fanfare, but the results flooding social media speak for themselves. People are already comparing it to OpenAI's Sora 2 and Google's Veo 3.1. The Seedance 2.0 AI video generator creates 15-second clips from text, images, audio, or video inputs. What sets it apart is quality. Early users are praising its physical accuracy, character consistency, and properly synced audio. The tool generates dialogue, ambient sounds, and sound effects that actually match what's happening on screen. According to ByteDance, Seedance 2.0 achieves a 90% usable output rate on the first try. That's a massive jump from previous AI video tools that typically deliver around 20% usable results. The difference comes down to architecture. ByteDance built Seedance 2.0 with a dual-branch system that generates video and audio simultaneously instead of adding sound after the fact. Seedance 2.0 supports text-to-video and image-to-video generation at 1080p resolution. It can handle up to nine images, three video clips, and three audio files as reference inputs. You can upload character poses, action sequences, and audio samples, then let the AI build a scene around them. The tool also understands cinematic language. Describe camera movements and shot transitions, and Seedance 2.0 will generate a multi-shot sequence that looks semi-professional. One viral clip showed someone uploading static photos and getting back a photorealistic digital clone, though ByteDance quickly banned face uploads after that went viral. Right now, the Seedance 2.0 AI video generator is available through ByteDance's Dreamina platform and Doubao in China. It's already integrated into CapCut, but there's no word on TikTok integration given the app's ownership situation in the US. The reaction from creatives has been mixed. 
Some see massive disruption coming to film and advertising. Others worry about deepfakes and copyright, since early samples already show celebrities being used without permission. This comes just months after ByteDance's OmniHuman-1 raised similar concerns about hyper-realistic AI video.
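To put the reported usable-output rates in perspective, here is a rough back-of-the-envelope sketch. It assumes each generation attempt is independent and succeeds with a fixed probability, which is a simplification and not something ByteDance states; the function name `expected_attempts` is purely illustrative. Under that assumption, the mean number of attempts needed to get one usable clip is the reciprocal of the success rate.

```python
def expected_attempts(usable_rate: float) -> float:
    """Mean number of generations until the first usable clip.

    Assumes independent attempts with a constant success probability
    (a geometric distribution); this is an illustrative simplification.
    """
    if not 0.0 < usable_rate <= 1.0:
        raise ValueError("usable_rate must be in (0, 1]")
    return 1.0 / usable_rate


# Rates as reported in the excerpt above; they are claims, not measurements.
reported_rates = {
    "Seedance 2.0 (reported 90%)": 0.90,
    "earlier tools (reported ~20%)": 0.20,
}

for label, rate in reported_rates.items():
    print(f"{label}: about {expected_attempts(rate):.2f} attempts per usable clip")
```

If the reported figures hold, that works out to roughly 1.1 attempts per usable clip versus about 5, which is why the claim matters for production costs.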

[7]

From Titanic to Harry Potter to LOTR, epic endings reimagined by Seedance 2.0 AI that's going viral

ByteDance has launched Seedance 2.0, a new video generation tool. It creates realistic clips from simple text prompts. The technology is gaining significant attention globally. Users are comparing its impact to DeepSeek's AI models. Seedance 2.0 is designed for professional use in filmmaking and advertising. It processes multiple data types to reduce production costs and time. ByteDance's new video-generation tool, Seedance 2.0, is grabbing global attention for its ability to create ultra-realistic clips from just two lines of text. The model has already drawn praise from tech leaders including Elon Musk and is trending heavily in China, where many users are comparing its impact to DeepSeek. While text-based AI platforms like ChatGPT and DeepSeek's R1 became mainstream over the past year, advanced video-generation models are now being seen as the next big leap in artificial intelligence. Unveiled officially on Thursday, ByteDance said Seedance 2.0 is built for professional use across filmmaking, advertising, and e-commerce. The company highlighted that the system can process text, images, audio, and video together, significantly reducing production costs and time. Its launch comes as investors and tech observers search for the next breakthrough following the global splash made by DeepSeek's R1 and V3 models earlier this year. On China's Weibo platform, users have been sharing AI-generated clips demonstrating the model's cinematic quality -- even when prompts are unusual or absurd. One widely viewed two-minute video imagined rapper Ye (formerly known as Kanye West) and reality TV star Kim Kardashian as characters in an Imperial Chinese palace drama, speaking and singing in Mandarin. The clip reportedly crossed one million views. Hashtags linked to Seedance 2.0 have accumulated tens of millions of views on Weibo. Even state-run publication Beijing Daily joined the conversation, posting: "From DeepSeek to Seedance, China's AI has succeeded." 
Since its February 12 debut, social media has been flooded with Seedance-created videos. Among the most viral are playful alternate endings to Titanic, Harry Potter, and The Lord of the Rings, turning beloved classics into meme-worthy reimaginings.

[8]

ByteDance launches Seedance 2.0; insane 'cinematic' AI videos spark rally in China tech stocks

ByteDance's new AI video model, Seedance 2.0, has launched, impressing users with its ability to create cinematic content from diverse inputs. This advanced tool reportedly surpasses competitors like OpenAI's Sora and Google's Veo, offering faster generation and sophisticated "multi-lens storytelling." The release has already sparked interest in Chinese AI and entertainment stocks. TikTok parent company ByteDance has launched a pre-release version of its new artificial intelligence video model, Seedance 2.0, triggering a rally in shares of several Chinese AI and entertainment companies. According to the company, Seedance 2.0 is designed as a "true" multi-modal AI creator, allowing users to combine images, video, audio and text to generate what ByteDance describes as "cinematic content" with "precise reference capabilities, seamless video extension, and natural language control." The model is currently available to select users on Jimeng AI, ByteDance's AI video platform, reports Silicon Republic. ByteDance said Seedance 2.0 supports 2K video exports and delivers generation speeds about 30% faster than its previous version, Seedance 1.5, according to information published on the company's website. Swiss-based consultancy CTOL described the new model as the "most advanced AI video generation model available," saying it is "surpassing OpenAI's Sora 2 and Google's Veo 3.1 in practical testing." One of the model's standout features is what ByteDance calls "multi-lens storytelling," CNBC explains. A single prompt can be expanded into multiple connected scenes, with the AI maintaining consistent characters, lighting, and tone throughout. This significantly reduces the need for manual editing and stitching in post-production software. Seedance 2.0 has already become very popular and drawn attention online for a series of highly produced AI-generated videos circulating on social platforms; here are a few clips. 
According to CNBC, official materials from ByteDance state that the model generates 2K video roughly 30% faster than some competitors, including Kling. The system also supports multiple input formats, text, still images, short video clips and audio, giving creators greater flexibility in how projects begin. The launch appears to have fueled investor optimism around China's AI and media sector. Data compiled by Bloomberg on February 9 showed publishing firm COL Group Co. hitting its daily 20% price limit, while Shanghai Film Co. and gaming and entertainment company Perfect World Co. both rose by about 10%, notes siliconrepublic.

[9]

ByteDance's Seedance 2.0 Builds Buzz in Expanding Video Generation Market | PYMNTS.com

In a marketplace hungry for innovation beyond text bots, Seedance 2.0 has captured widespread attention for its ability to turn simple prompts into complex, cinematic videos -- including multishot scenes with synchronized audio, fueled by a design that processes text, imagery, sound and motion all at once. ByteDance rolled out the system on Thursday (Feb. 12), positioning it as a tool for professional film, eCommerce and advertising content creation that could cut production costs and time dramatically. The model's popularity has been amplified across China's Weibo platform, where users have posted videos demonstrating Seedance 2.0's capabilities, and hashtags about the AI have generated tens of millions of clicks. One particularly viewed clip reimagined U.S. music and television personalities in an ancient Chinese palace drama, complete with Mandarin dialogue and singing. Chinese media have openly drawn parallels between the Seedance launch and the impact of DeepSeek's R1 and V3 AI models, whose debut in early 2025 generated intense debate about China's role in the global AI race. Last year's DeepSeek models shook up the field by showcasing large language model capabilities, and the arrival of a standout video-focused generator adds another dimension to China's AI ambitions. The rise of Seedance 2.0 also ties into a larger ecosystem shift toward multimodal AI, where the ability to blend text, visual and auditory outputs seamlessly is becoming a core differentiator among leading models. While text-centric systems such as OpenAI's ChatGPT remain widely used, video and multimedia generation represent a rapidly growing frontier with implications for creative industries and commercial content workflows. 
OpenAI unveiled its own text-to-video model, Sora, designed to generate realistic video clips from written prompts. Sora demonstrated the ability to create minute-long, high-fidelity scenes with consistent characters and complex motion, signaling that video generation is moving from experimental novelty to production-grade capability. At the same time, as reported by PYMNTS, social media platforms are retooling their products to respond to the surge in AI-generated content. Companies including Meta and Pinterest have begun overhauling feeds and labeling systems to more clearly distinguish between human-created and AI-generated posts, reflecting mounting pressure around transparency and trust.

[10]

Seedance 2.0: This Chinese AI video tool is outpacing Veo 3 and Sora 2

The landscape of generative video has shifted rapidly in early 2026, and the "Sora-killer" narrative has found a new protagonist in ByteDance's Seedance 2.0. While Western giants like OpenAI and Google have focused on increasing clip duration and perfecting lighting physics, ByteDance has taken a different route by prioritizing surgical control and production reliability. Launched in early February, Seedance 2.0 is quickly becoming the preferred tool for creators who need predictable results rather than "luck-based" generations. The model represents a fundamental move toward an industrial-grade workflow, offering features that directly address the most common frustrations in AI filmmaking: character drift, lack of audio synchronization, and the inability to follow complex directorial cues. The most significant technical leap in Seedance 2.0 is its "quad-modal" input system, which supports up to 12 reference files in a single generation task. While Sora 2 and Veo 3.1 generally rely on a single text prompt and perhaps one reference image, Seedance allows users to upload a mix of up to nine images, three video clips, and three audio files. This creates a "Universal Reference" ecosystem where a creator can use a specific @mention system to direct the AI. For instance, a user can tag a specific image to lock in a character's face, a video clip to mirror a complex camera pan like a Hitchcock zoom, and an audio file to dictate the rhythmic pacing of the cuts. This level of granularity effectively turns the AI from a creative partner into a digital production crew that follows exact specifications rather than vague descriptions. This reference-heavy approach has led to a reported "usability rate" of over 90 percent. 
In professional environments where time is money, the ability to get a usable 15-second clip on the first or second try is a massive competitive advantage. By contrast, even the most advanced Western models often require multiple "re-rolls" to fix artifacts or unintended character morphing. By allowing creators to provide a comprehensive "mood board" of inputs, Seedance 2.0 has essentially solved the consistency problem that has plagued AI video since its inception. Beyond its control stack, Seedance 2.0 is outperforming its rivals in raw output specifications and integrated features. It is currently one of the few flagship models to offer native 2K resolution, providing a level of detail and texture that surpasses the 1080p cap currently seen in Sora 2 and Veo 3.1. This higher resolution is matched by a sophisticated audio-visual joint generation architecture. Unlike other tools that add sound as a post-production layer, Seedance generates synchronized dialogue, environmental sound effects, and background music simultaneously with the video. This results in far more accurate lip-syncing across multiple languages, including English, Mandarin, and Cantonese, making it an indispensable tool for global marketing and localized content. While Sora 2 still holds a slight edge in pure physical simulation - handling complex object collisions and fluid dynamics with unmatched realism - Seedance 2.0 has closed the gap significantly. Its retrained physics engine manages momentum and gravity with a natural fluidity that avoids the "floaty" look of earlier versions. For the modern creator, the trade-off is clear: while other models might offer longer clips or slightly better water physics, Seedance 2.0 provides the most comprehensive and controllable production suite available today. It is no longer just a toy for generating viral clips; it is a serious piece of infrastructure for the future of digital cinema. 

ByteDance released Seedance 2.0, an AI video generation model that many consider more advanced than OpenAI's Sora 2. According to ByteDance, the tool exports 2K video, generates about 30% faster than the previous version, and accepts multimodal prompts that combine text, images, audio, and video. While it is driving up Chinese AI firms' stock prices and impressing users with realistic output, questions remain about US availability and copyright protections.

ByteDance Unveils Advanced AI Video Generation Model

ByteDance, TikTok's parent company, launched the pre-release version of Seedance 2.0 over the weekend, introducing what many AI enthusiasts are calling the most advanced AI video generation model currently available [1][5]. Swiss-based consultancy CTOL stated that the model surpasses OpenAI's Sora 2 and Google's Veo 3.1 in practical testing [5]. The model has impressed early users with its ability to generate realistic video content that, according to some commentators, closes the uncanny valley: the unsettling feeling viewers experience when AI-generated faces and movements appear almost, but not quite, human [4].

Source: Digit

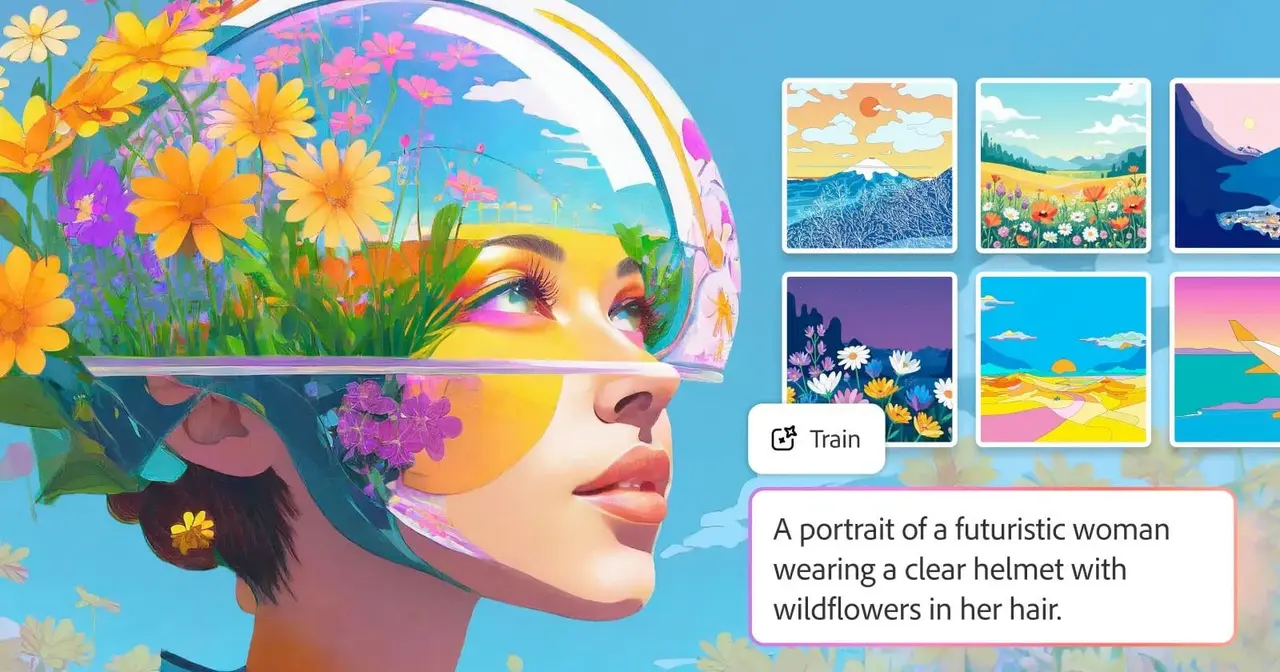

Multimodal Input System Enables Director-Level Control

The breakthrough feature of Seedance 2.0 is its multimodal input system, which lets users combine text, images, video, and audio in a single prompt rather than relying on text descriptions alone [2][4]. Users can upload up to nine images, three video clips, and three audio clips to refine their prompts, giving creators what analysts at Chinese investment firm Kaiyuan Securities describe as director-level control [4]. The model generates clips of up to 15 seconds with audio while accounting for camera movement, visual effects, and motion [2]. ByteDance claims the model can export in 2K resolution with 30% faster generation than the previous version, Seedance 1.5 [3][5].
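The reported input limits can be illustrated with a small validation sketch. This is not ByteDance's API: the `ReferenceBundle` class, the `check_bundle` function, and the constant names are hypothetical, and the numbers are simply the limits quoted in the coverage above (nine images, three video clips, three audio clips, 15-second output).

```python
from dataclasses import dataclass

# Limits as reported in press coverage; not taken from any official API.
MAX_IMAGES = 9
MAX_VIDEOS = 3
MAX_AUDIO = 3
MAX_CLIP_SECONDS = 15.0


@dataclass
class ReferenceBundle:
    """Hypothetical container for the references attached to one prompt."""
    images: int = 0
    videos: int = 0
    audio: int = 0
    clip_seconds: float = MAX_CLIP_SECONDS


def check_bundle(bundle: ReferenceBundle) -> list[str]:
    """Return a list of limit violations; an empty list means the bundle fits."""
    problems = []
    if bundle.images > MAX_IMAGES:
        problems.append(f"too many images: {bundle.images} > {MAX_IMAGES}")
    if bundle.videos > MAX_VIDEOS:
        problems.append(f"too many video clips: {bundle.videos} > {MAX_VIDEOS}")
    if bundle.audio > MAX_AUDIO:
        problems.append(f"too many audio clips: {bundle.audio} > {MAX_AUDIO}")
    if bundle.clip_seconds > MAX_CLIP_SECONDS:
        problems.append(
            f"clip too long: {bundle.clip_seconds}s > {MAX_CLIP_SECONDS}s"
        )
    return problems


# A bundle at the reported maximums passes; one over the limits does not.
print(check_bundle(ReferenceBundle(images=9, videos=3, audio=3)))
print(check_bundle(ReferenceBundle(images=10, clip_seconds=20.0)))
```

Some reports also mention an overall cap of about a dozen reference files per generation; that total is left out of the sketch because the sources do not agree on how it interacts with the per-type limits.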

Source: CNET

Technical Capabilities Demonstrate Complex Scene Generation

Seedance 2.0 maintains consistent character and object features across scenes and displays unified fonts and text, capabilities that other video generators struggle with [1]. In one example shared by ByteDance, featuring two figure skaters performing together, the company says the model can "reliably perform a sequence of high-difficulty movements -- including synchronized takeoffs, mid-air spins and precise ice landings -- while strictly following real-world physical laws" [2]. Users on social media have posted AI-generated videos showing anime-style clips, cinematic sci-fi scenes, martial-arts fights, and even the likenesses of Brad Pitt and Tom Cruise in a fight sequence [1][2]. The headline feature is "multi-lens storytelling," which creates several scenes while maintaining the same style and characters [3].

Source: Fast Company

Chinese AI Firms See Stock Price Surge

The positive response to Seedance 2.0 drove up shares in Chinese AI firms immediately following the launch. Data compiled by Bloomberg showed publishing company COL Group Co hit its 20% daily price ceiling, while Shanghai Film Co and gaming and entertainment firm Perfect World Co rose by 10% [5]. The Shanghai Shenzhen CSI 300 Index increased by 1.63% [5]. Wang Lei, a programmer from China's southern Guangdong province, told the South China Morning Post that "with its reality enhancements, I feel it's very hard to tell whether a video is generated by AI" [3].

Limited Availability Raises Questions for US Users

Seedance 2.0 is currently available only to select users through ByteDance's Dreamina AI platform and its AI assistant, Doubao [2][5]. The model will also be available across several ByteDance tools, including CapCut, its video editing service [1]. It remains unclear when general audiences will be able to access the tool or whether it will be available in the US, given that ByteDance had to relinquish most of its TikTok ownership over security and privacy concerns [1]. Other AI software providers such as TopView AI and Atlas Cloud appear to be planning to incorporate Seedance 2.0 into their video-generator suites, with Atlas Cloud stating on its website that Seedance 2.0 generation will arrive later this month [1].

Copyright Issues and Deepfake Concerns Emerge

Seedance 2.0 boasts that its results are completely watermark-free, whereas OpenAI's Sora 2 adds watermarks and Google's Veo 3.1 uses a metadata watermark called SynthID; the absence of any watermark raises significant concerns [5]. It's unclear what copyright protections Seedance 2.0 offers, as users have already posted clips featuring characters from Dragon Ball Z, Family Guy, and Pokémon [2]. The prevalence of advanced AI tools, coupled with ease of access, has opened the gates to a new wave of deepfakes [5]. Video generators have faced legal troubles, with Disney and other companies suing AI image and video company Midjourney over copyright issues last year [1].

Industry Implications and Creative Resistance

The release has sparked debate about AI's role in filmmaking. Darren Aronofsky recently released an AI-generated series called "On This Day... 1776," made with Google's generative video AI, to commemorate the American Revolution; the series has been met with widespread criticism for its stiff faces and unrealistic morphing [3][4]. Deadpool writer Rhett Reese reposted an AI-generated video showing the likenesses of Brad Pitt and Tom Cruise, commenting, "I hate to say it. It's likely over for us" [2]. Despite AI influencers declaring "Hollywood is cooked," it remains an open question whether audiences will connect with AI-generated characters and whether fully AI-generated productions will gain acceptance [3]. The emergence of tools like Seedance 2.0 and Kling 3.0 suggests that AI video generation is closing the gap between artificial and authentic cinematic content [4].

Summarized by Navi
