ChatGPT caricature trend sweeps social media, but privacy concerns emerge over user data

6 Sources

[1]

9 ChatGPT caricature trends that went wrong

If you've been online in the past week, then you've probably seen the ChatGPT caricatures trend. We've covered a lot of ChatGPT image trends here at Mashable, but none has gone this viral since the original Studio Ghibli trend. In the latest viral ChatGPT trend, people are going to ChatGPT with a simple prompt: "Create a caricature of me based on everything you know about me." You can vary the prompt to your liking, but that's the most basic version. In the best-case scenario, you should get a cute and wholesome caricature with a cartoon version of you and some artwork reflecting your hobbies or career.

Of course, not everyone's ChatGPT caricature turns out quite the way they hoped. On Reddit and X, we've seen some particularly strange results from this simple prompt. In some cases, ChatGPT seems to be calling out the user. In other examples, ChatGPT includes some very questionable details. One image of Reddit user crunchy-wraps looks fairly normal at first, until you zoom in...

Other users seem to have received a more traditional caricature. Many of the caricatures being shared offer a cute and cartoonish rendering of the user, but some show the more insulting caricature style you might get from a rude and underpaid boardwalk caricature artist.

On X, some users are using Grok Imagine to generate caricatures, and the results with Grok are especially bad. At least Grok isn't undressing people without their consent, for once. And at least one disgruntled X user has reported that free users of the AI chatbot are getting bad results. Head to ChatGPT to try creating your own caricature, or visit X to see some of the latest examples of the trend in action.

[2]

I tried the new ChatGPT caricature trend and was shocked how well the AI chatbot knows me

A viral AI art craze turns personal details into playful portraits. ChatGPT and other AI chatbots can cause a lot of trouble when they exaggerate. Luckily, the latest viral AI image fad actually encourages it, at least when it comes to your hair, teeth, or chin. Following the trends of turning pictures into Renaissance paintings and 3D figurines, there's a new vogue for asking AI chatbots to draw a caricature of you, the kind you might get on a beach boardwalk, but specifically about your job. Instead of showing you skateboarding or surfing or whatever else the artist decided you might like, these caricatures are based on what the AI knows about you, your job, and your life. The results might shock you with their accuracy or their wild errors, but they are both entertaining and revealing about how AI models process details about users, and what they hold in their memories. Here's how you can do it too.

It's a bit like if the caricature artist at the beach was also a mind reader, one who could see everything you've said about yourself and describe your life and work. It's easy enough to do, assuming your AI chatbot of choice has absorbed enough information about you through conversation. Upload a straightforward, fairly close-up picture of yourself, particularly your head and upper body. Then pair it with a prompt like: "Create a caricature of me and my job based on everything you know about me."

It nailed my career as a science and tech reporter and added lots of other little details like my love of books and coffee. It even pulled a memory of me describing my desk and office. That's why there's a window onto a yard where two dogs who look very much like my actual dogs are playing. I don't have a "Press" coffee mug, but I'd be lying if I said it was far removed from some of my actual mugs. If you've been a long-time ChatGPT user, the chatbot will already have a context bank built up from your past conversations. It doesn't remember them in the human sense, but it can link together pieces of your self-description into a composite vision for a caricature.

Also, while "caricature" should be enough, you might want to add more detail about the visual style if the result isn't quite right. I suggest a phrase like, "a classic, funny caricature with exaggerated facial features and a vibrant, hand-drawn art style." Part of the reason this works is how ChatGPT integrates visuals with text. When you ask it to generate an image, the model doesn't just look at your selfie and apply a style. Instead, it interprets your words and your image together, drawing on descriptive cues to build a scene. Models trained on massive image-text datasets have internalized associations between words and visual elements. If your chat history with it is thin, you can supply details about your job, interests, hobbies, pets, or anything else, and the image generator will stitch them together. I've talked with ChatGPT enough that it didn't need anything extra to do a pretty good job.

For fun, I then asked ChatGPT to do the same for itself. ChatGPT's caricature of itself suggests an AI trained on a lot of data, and it describes the chatbot as friendly and helpful (and apparently a Corgi lover). The notebook may be upside down, but the fact that the caricature has also drawn an image of a cartoon that itself is holding a pencil is pretty deep. The results of asking ChatGPT for a caricature of yourself can be evocative, more like little narratives than static images. And they show something about how AI "sees" us, and the caricature versions of ourselves we share online.

[3]

ChatGPT caricatures are taking over social media -- but at what cost?

Have you seen larger-than-life depictions of your friends lately? They might have been sucked into the latest social trend: creating AI-generated caricatures. The trend itself is simple. Users enter a common prompt, "Create a caricature of me and my job based on everything you know about me," upload a photo of themselves, and voila! ChatGPT (or any AI image platform) spits out an over-the-top, cartoon-style image of you, your job, and anything else it's learned about you. This ability depends on a robust ChatGPT (or other AI) chat history. Those who don't have a close, personal relationship with the AI might need to give additional information to get a more accurate depiction. Notably, that raises yet another potential AI privacy concern. It's not the first AI image trend. Other social media challenges have had users posting themselves as AI-generated cartoons, Renaissance paintings, or fantasy characters.

[4]

ChatGPT caricature trend goes viral: How to turn yourself into a cartoon

A fresh ChatGPT trend is sweeping social media, enabling users to see themselves in an exaggerated, cartoon style. People are converting regular photos into playful digital caricatures with OpenAI's chatbot, emphasizing facial features, work environments, and even personal quirks. The process is simple: upload a photo to ChatGPT and ask it to "turn you into a caricature." Instead of a street artist, the AI handles the creative work, turning real-life photos into whimsical, cartoon-style portraits. The trend is gaining popularity among teachers, journalists, and office employees, who are happily sharing their AI-generated versions online.

To try the trend yourself, log into ChatGPT, upload a regular photo, and give a prompt like "Create a caricature of me." Including additional details such as your role, outfit, or setting improves the results, especially for new users whose profiles may not contain much detail yet. If the generated image isn't satisfactory, you can modify your prompt for better results. Paid subscriptions provide unlimited caricature creations, but free users can create up to five images each day. The AI depends on your interaction history, so those who have shared more details with the chatbot will generally receive more accurate results. If the chatbot has little history with you, giving clear instructions will still produce accurate images.

Before caricatures, social media was abuzz with other AI image trends such as Ghibli-style characters, Barbie-core avatars, and humanized pets. Several of these trends vanished quickly, but the caricature craze continues because the AI results closely resemble the person while humorously exaggerating their features. This perfect combination of humor and realism is why users can't stop posting their images.

Q1: What is the ChatGPT caricature trend? It's a trend where users turn their photos into exaggerated cartoon-style portraits using AI. People upload images and prompt ChatGPT to create a playful version of themselves.

Q2: How do I make a caricature on ChatGPT? Log into your ChatGPT account, upload a clear photo, and give a prompt like "Create a caricature of me." Adding details about your role, outfit, or setting improves results.

[5]

This Viral AI Caricature Trend Is Taking over the World and Your Privacy

You might have seen people sharing caricatures of themselves on X, Reddit, or Instagram. They're part of a new viral trend where people upload their photo and ask ChatGPT to turn it into a caricature. But it's not just any caricature; they explicitly ask the AI to use everything it knows about them from previous chats to make it more personal, more accurate, and more "them." Soon, ChatGPT churns out a comical, cartoonish image of you, exaggerated features and all. It is packed with your personality traits, interests, hobbies, job, and even your relationship drama, plus maybe something you mentioned in passing six months ago. And that is the most unsettling part about this trend.

On its own, the caricature trend is just another harmless fad, like many others we have seen in the past year: the Studio Ghibli trend, creating a 3D model of yourself, or the saree trend, which had women sharing their photos in traditional Indian attire. What's especially problematic about this viral phenomenon is that it exposes how much the AI knows about you. To make the output "better" and more "personalized," ChatGPT references all the other chats you have had with it in the past. These chats could contain discussions about personal habits, emotional vulnerabilities, career struggles, inside jokes, and relationship details, or even confidential documents that are not meant to be disclosed elsewhere. In one recent example, it was revealed that Trump's acting cyber chief had shared official documents with AI. It is worth noting that OpenAI retains this information to train its models, which poses a serious privacy risk.

It's not just about your information. This viral trend also requires you to share your photo, so that the AI can make a caricature in your likeness. This means that when you share your photo with ChatGPT, it learns about your facial geometry, skin color, texture, body type, ethnicity, and more. The company might not use it for an inherently malicious purpose, but there is always a risk of a data breach, which could put your details in the wrong hands. Last year, for instance, Discord's third-party vendor suffered a breach that led to over 70,000 government IDs being leaked. Even if the company has privacy policies in place, the fact remains that we are feeding AI. The more we share, the more it knows and learns, and the better it gets at mimicking us. So, suffice it to say, this trend is not healthy, especially from a privacy point of view.

Even if you don't care about your privacy, there's another layer of issues underlying this trend that no one is discussing. Caricature used to be a fun and very creative form of artwork. It was up to the artist's interpretation to decide which features to highlight, and the effort behind the drawing was part of what made people laugh. Caricatures made by ChatGPT, on the other hand, just feel like AI slop. The ones I have seen online, and the one I created myself, all look pretty much the same. There doesn't seem to be a shred of creativity in sight; it's more of an algorithmic exaggeration based on patterns learned from millions of existing styles. Honestly, that takes away the WOW factor of a caricature. The same was the case with the Studio Ghibli trend, which ripped off a hand-drawn art style for a mundane, by-the-numbers effect with nothing distinct to speak of. Many people voiced their opposition to that trend, but their voices went unheard amid its virality. Again, I don't mind this viral caricature trend. But are you really comfortable with AI knowing so much about you?

[6]

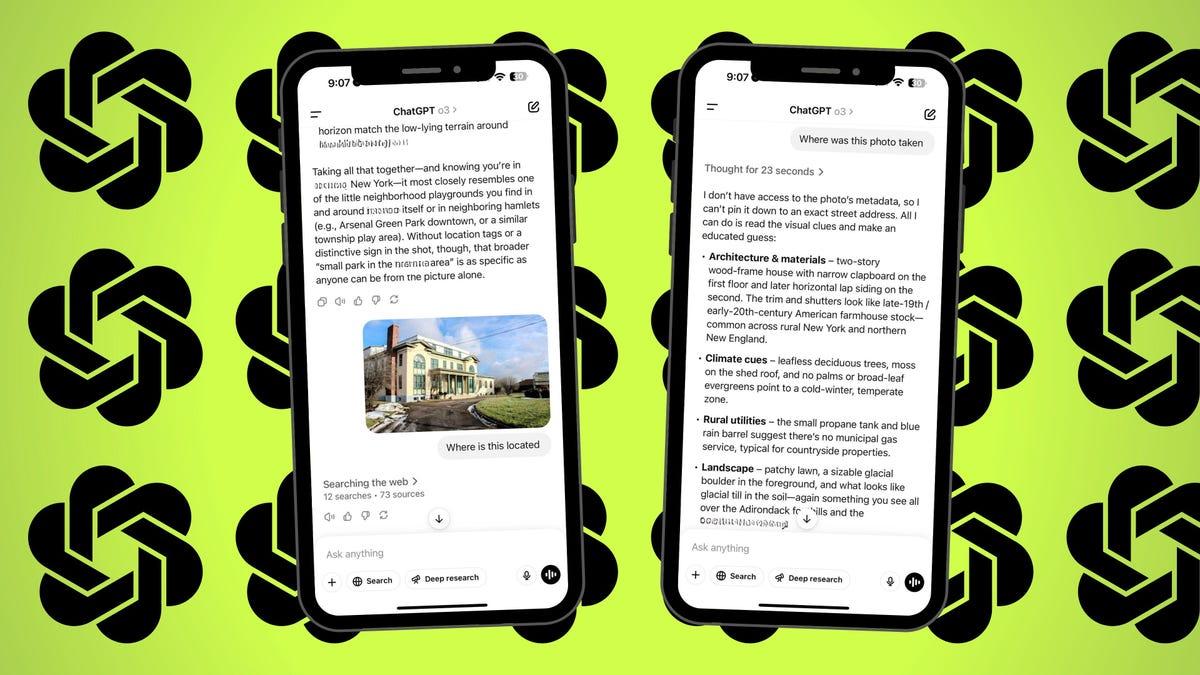

ChatGPT's viral caricature trend proves how well it knows you -- ...

There's a new AI trend that's making us wonder if it knows us a little too well. Worldwide, people have taken to asking the AI platform ChatGPT to create a caricature of them based on what it already knows about them. This information usually comes from their chat history, and the accuracy can be pretty astounding. Users on X have been sharing their caricatures, pointing to how well they've been captured by the AI tool. Many include background details that round out the picture: books with uncanny titles, screens showing graphs or editing software, flags, and even fantasy football stats. While similar images can be made in apps like Cartoonify, ChatGPT offers the twist of proving how well it "knows" you based on what you've previously asked or searched on the platform. However, the excitement around the trend feels a little paradoxical, given the concern many hold that AI "knows too much." If it knows us better than we know ourselves, what does that say about our privacy, and about what AI platforms choose to do with the data we provide to them? While it seems like a harmless trend, AI platforms aren't bound by confidentiality obligations the way doctors, lawyers, and psychologists are. David Grover, Senior Director of Cyber Initiatives at Baylor University, told KWTX that once you upload information, you lose control of it. "In general, all of them are going to save your information, and that information is going to go into some kind of storage, and at that point, you don't really know what that company is doing with your information," he explained. "You need to be careful about any image that you put in and anything that you put online because that becomes who you are, and the more we get into the digital world, the more challenging it's going to become to protect."

A viral social media trend has users asking ChatGPT to create AI-generated caricatures based on everything the AI chatbot knows about them. While the results are entertaining and often surprisingly accurate, the trend exposes how much personal information these AI systems retain, from job details to relationship struggles, raising questions about privacy and data security.

ChatGPT Powers Latest Viral Social Media Trend

A new caricature trend is taking over social media platforms, with users flocking to ChatGPT to generate exaggerated, cartoon-style portraits of themselves. The process is simple: upload a photo and use a prompt like "Create a caricature of me based on everything you know about me," and the AI chatbot produces personalized images that reflect not just physical features but also careers, hobbies, and personal quirks [1][2].

Source: New York Post

This AI-driven image trend follows previous viral phenomena like AI-generated cartoons and Renaissance painting transformations [3], and it has become the most viral ChatGPT image trend since the Studio Ghibli craze, spreading across X, Reddit, and Instagram [1][5].

The trend's appeal lies in how OpenAI's ChatGPT synthesizes information from past conversations to create surprisingly accurate representations. One tech reporter who tested the feature found that the AI caricature included details like his love of books and coffee, his desk setup, and even his two dogs playing in a yard, all pulled from previous chat history [2]. Users can enhance results by adding context to their prompts about their role, outfit, or setting, particularly if they lack extensive interaction history with the platform [4].

Source: Fast Company

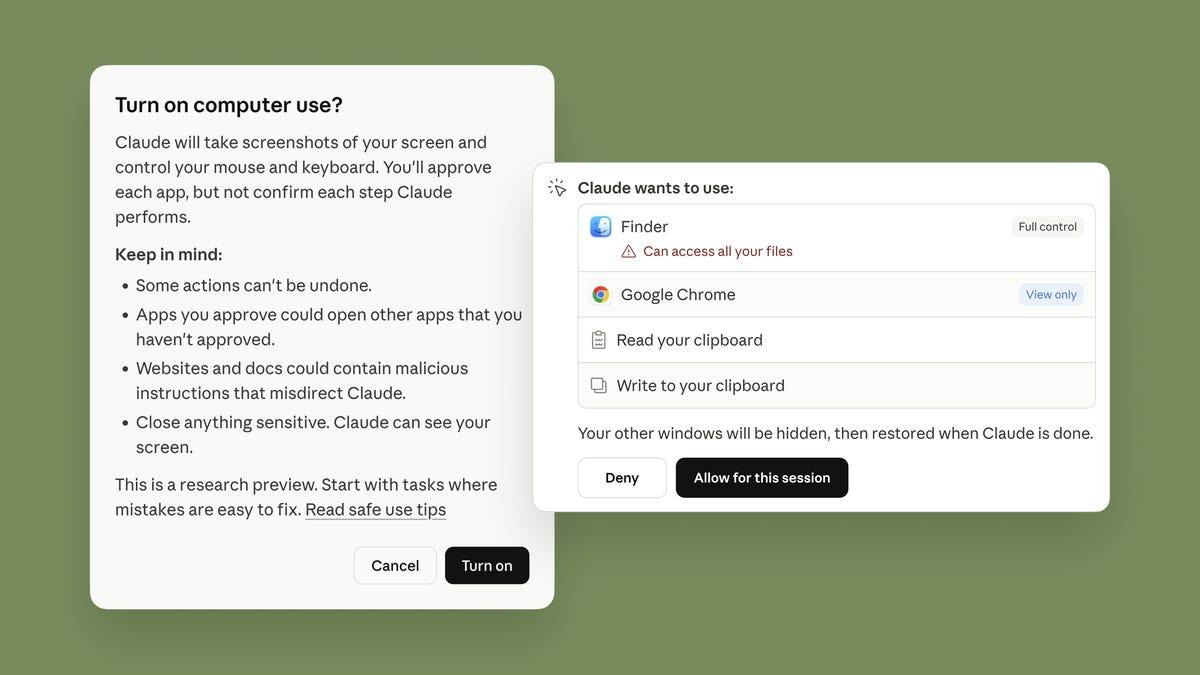

How User Information Processing Reveals Privacy Concerns

While the AI-generated caricatures offer entertainment value, they also expose significant privacy concerns about how much personal information AI systems retain and process. To create these personalized images, ChatGPT references conversations that may contain discussions about personal habits, emotional vulnerabilities, career struggles, relationship details, and even confidential documents [5]. OpenAI retains this information to train its models, creating potential risks if data breaches occur. In a separate incident last year, a breach at Discord's third-party vendor leaked over 70,000 government IDs, highlighting the tangible dangers of centralized data storage [5].

Source: Beebom

Beyond text-based chat history, the trend requires users to upload photos, allowing AI systems to learn facial geometry, skin color, texture, body type, and ethnicity [5]. This ability to turn yourself into a cartoon comes at the cost of feeding increasingly detailed personal information into AI systems. Free users of ChatGPT can create up to five images daily, while paid subscriptions offer unlimited caricature generation [4]. At least one X user has reported that free users are getting bad results [1].

Mixed Results and Creative Concerns

Not all AI caricature attempts produce flattering or accurate results. Social media showcases numerous examples where ChatGPT includes questionable details or produces images that seem to call out users in unflattering ways [1]. Users experimenting with alternative platforms like Grok Imagine report especially poor outcomes [1]. Beyond technical failures, some critics argue that AI-generated caricatures lack the creative interpretation and effort that traditional caricature artists bring to their work. The algorithmic exaggeration based on learned patterns produces what some describe as "AI slop": generic outputs with little distinct character or artistic merit [5].

As this viral social media trend continues to spread, users should consider what they're trading for a few moments of entertainment. The more personal information shared with AI systems, the more these models learn to mimic human behavior and preferences. While OpenAI may not use collected data for inherently malicious purposes, the accumulation of detailed personal profiles creates vulnerabilities that extend beyond individual control [5]. The caricature trend serves as a visible reminder of the invisible data trails users leave behind with every interaction with AI chatbots.

Summarized by Navi
