Explainable AI to drive LLM observability investments to 50% of GenAI deployments by 2028

2 Sources

[1]

Gartner Predicts Growth in Explainable AI for Secure Generative AI Deployment

Gartner defines XAI as a set of capabilities that describes a model, highlights its strengths and weaknesses, predicts its likely behavior and identifies any potential biases. It can clarify a model's functioning to a specific audience to enable accuracy, fairness, accountability, stability and transparency in algorithmic decision making. LLM observability solutions monitor, analyze and provide actionable insights into the behavior and performance of LLMs. They go beyond standard IT measurements, such as response times, to look at specific LLM metrics such as hallucinations, bias and token utilization. These tools are used by teams that develop and operationalize AI systems, and increasingly by IT operations and SREs responsible for the performance and resilience of these systems in production.

[2]

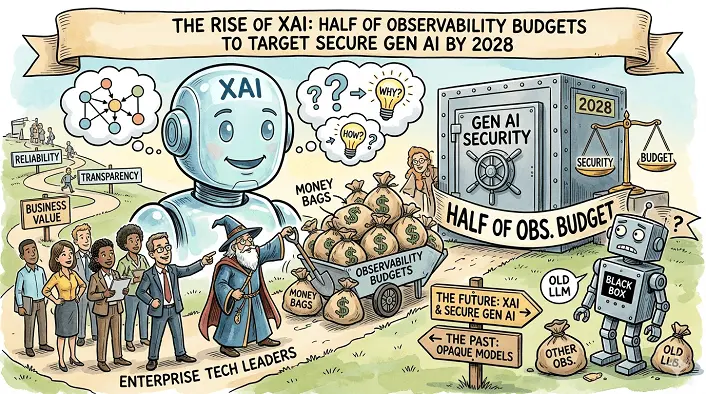

The Rise of XAI: Half of Observability Budgets to Target Secure GenAI by 2028

LLM Observability and Explainable AI Form Critical Trust Layers for Scaling GenAI Initiatives

Gartner, Inc. predicts that by 2028, the growing importance of explainable AI (XAI) will drive large language model (LLM) observability investments to 50% of GenAI deployments, up from 15% today.

Gartner defines XAI as a set of capabilities that describes a model, highlights its strengths and weaknesses, predicts its likely behavior and identifies any potential biases. It can clarify a model's functioning to a specific audience to enable accuracy, fairness, accountability, stability and transparency in algorithmic decision making.

LLM observability solutions monitor, analyze and provide actionable insights into the behavior and performance of LLMs. They go beyond standard IT measurements, such as response times, to look at specific LLM metrics such as hallucinations, bias and token utilization. These tools are used by teams that develop and operationalize AI systems, and increasingly by IT operations and SREs responsible for the performance and resilience of these systems in production.

"As enterprises scale GenAI, the trust requirement grows faster than the technology itself," said Pankaj Prasad, Sr Principal Analyst at Gartner. "XAI provides visibility into why a model responded a certain way, while LLM observability validates how that response was generated and whether it can be relied on.

"Without robust XAI and observability foundations, GenAI initiatives will be restricted to low risk, internal, or noncritical tasks where output verification is easily managed or inconsequential, severely limiting the potential return on investment."

Growing Need for XAI and LLM Observability as Mandatory Trust Mechanisms

Gartner forecasts the global GenAI models market will exceed $25 billion in 2026 and reach $75 billion by 2029, driven by rapid adoption across industries. As usage increases, so does the need for mechanisms that verify AI-generated content and protect against hallucinations, factual inaccuracies and biased reasoning.

"Traditional observability is focused on speed and cost, but the priority is now moving toward deeper quality measures such as factual accuracy, logical correctness and sycophancy. This shift requires new governance-focused metrics and evaluation methods, such as human-in-the-loop validation of the generated content's narrative and citation accuracy," said Prasad.

"Explainability turns a GenAI output into a defensible, auditable insight. LLM observability ensures the model behaves as expected over time. Without both, GenAI cannot mature beyond controlled lab environments."

To maximize the reliability and business value of GenAI, organizations must adopt a rigorous, multi-layered strategy centered on transparency and performance. This begins with mandating verifiable XAI tracing for high-impact use cases to document reasoning and data sources, alongside the deployment of multidimensional observability platforms that track everything from latency and drift to cost and output quality. Furthermore, teams should integrate automated evaluation metrics -- such as safety checks and accuracy benchmarks -- directly into CI/CD pipelines to ensure continuous validation. Finally, fostering cross-functional alignment is essential; by educating legal and compliance stakeholders on explainability requirements, organizations can navigate governance hurdles and ensure a secure, high-performing AI deployment.

Gartner predicts that explainable AI (XAI) will drive LLM observability investments to 50% of GenAI deployments by 2028, up from 15% today. As enterprises scale generative AI, the trust requirement grows faster than the technology itself, making XAI and observability critical for ensuring accuracy, fairness, and transparency in AI-generated outputs.

Explainable AI Emerges as Critical Foundation for Secure Generative AI Deployment

Gartner, Inc. has issued a significant forecast that reveals how establishing trust in AI will reshape enterprise technology investments over the next four years. By 2028, the growing importance of Explainable AI (XAI) will drive LLM observability investments to 50% of Generative AI (GenAI) deployments, a substantial increase from just 15% today [2]. This shift reflects a fundamental recognition that as enterprises scale GenAI initiatives, the trust requirement grows faster than the technology itself.

Source: CXOToday

Understanding XAI and LLM Observability Solutions

Gartner defines Explainable AI (XAI) as a set of capabilities that describes a model, highlights its strengths and weaknesses, predicts its likely behavior and identifies any potential biases [1]. It can clarify an AI model's functioning to a specific audience to enable accuracy, fairness, accountability, stability and transparency in algorithmic decision making. Meanwhile, LLM observability solutions monitor, analyze and provide actionable insights into the behavior and performance of large language models, going beyond standard IT operations measurements, such as response times, to examine specific metrics including hallucinations, bias and token utilization [2].
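As a rough illustration of what tracking LLM-specific metrics alongside standard IT measurements could look like, the sketch below aggregates per-request records into summary rates. It is not drawn from Gartner or any particular observability product: the `LLMCallRecord` class, its field names, and the `summarize` function are all hypothetical, and the hallucination and bias flags are assumed to come from some separate evaluator.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class LLMCallRecord:
    """One logged LLM request (hypothetical schema, for illustration only)."""
    latency_ms: float          # standard IT measurement: response time
    prompt_tokens: int         # token utilization, input side
    completion_tokens: int     # token utilization, output side
    hallucination_flag: bool   # assumed to be set by an upstream evaluator
    bias_flag: bool            # assumed to be set by an upstream evaluator


def summarize(records: list[LLMCallRecord]) -> dict[str, float]:
    """Aggregate per-call records into summary metrics."""
    if not records:
        raise ValueError("no records to summarize")
    n = len(records)
    return {
        "mean_latency_ms": mean(r.latency_ms for r in records),
        "mean_total_tokens": mean(
            r.prompt_tokens + r.completion_tokens for r in records
        ),
        # Booleans sum as 0/1, so these are fractions of flagged calls.
        "hallucination_rate": sum(r.hallucination_flag for r in records) / n,
        "bias_rate": sum(r.bias_flag for r in records) / n,
    }
```

Keeping response time and token counts in the same record as the quality flags is one simple way to view operational and LLM-specific signals side by side.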

Source: DT

The Trust Gap Limiting GenAI Business Value

"As enterprises scale GenAI, the trust requirement grows faster than the technology itself," said Pankaj Prasad, Sr Principal Analyst at Gartner [2]. XAI provides visibility into why a model responded a certain way, while monitoring and analysis of large language models validate how that response was generated and whether it can be relied on. Without robust XAI and observability foundations, GenAI initiatives will be restricted to low risk, internal, or noncritical tasks where output verification is easily managed or inconsequential, severely limiting the potential return on investment.

Market Growth Demands New Quality Measures

Gartner forecasts the global GenAI models market will exceed $25 billion in 2026 and reach $75 billion by 2029, driven by rapid adoption across industries [2]. As usage increases, so does the need for mechanisms that verify AI-generated content and protect against hallucinations, factual inaccuracies and biased reasoning. Traditional observability has focused on speed and cost, but the priority is now moving toward deeper quality measures such as factual accuracy, logical correctness and sycophancy. This requires new governance-focused evaluation metrics and methods, such as human-in-the-loop validation of generated content's narrative and citation accuracy.

Building Multi-Layered Strategy for Ensuring Accuracy and Fairness

These tools are used by teams that develop and operationalize AI systems, and increasingly by IT operations and SREs responsible for the performance and resilience of these systems in production [1]. To maximize reliability and business value, organizations must adopt a rigorous strategy centered on transparency and performance. This begins with mandating verifiable XAI tracing for high-impact use cases to document reasoning and data sources, alongside deployment of multidimensional observability platforms that track everything from latency and drift to cost and output quality [2]. Teams should integrate automated evaluation metrics such as safety checks and accuracy benchmarks directly into CI/CD pipelines to ensure continuous validation. Fostering cross-functional alignment by educating legal and compliance stakeholders on explainability requirements will help organizations navigate governance hurdles and ensure secure, high-performing AI deployment.
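One way the CI/CD recommendation might be put into practice is a small gate script that compares evaluation scores against minimum thresholds and returns a nonzero exit code when any check falls short. The sketch below is only an assumption about how such a gate could look: the metric names, the threshold values, and the `failed_checks` and `exit_code` helpers are hypothetical and do not come from Gartner or any specific tool.

```python
# Hypothetical minimums; real values depend on the use case and its risk level.
THRESHOLDS = {
    "accuracy": 0.90,          # fraction of benchmark answers judged correct
    "safety_pass_rate": 0.99,  # fraction of prompts passing safety checks
}


def failed_checks(scores: dict[str, float],
                  thresholds: dict[str, float]) -> list[str]:
    """Return names of metrics that are missing or below their threshold."""
    return [
        name
        for name, minimum in thresholds.items()
        if name not in scores or scores[name] < minimum
    ]


def exit_code(scores: dict[str, float]) -> int:
    """Return 0 if every check passes, 1 otherwise (for a CI step to use)."""
    failures = failed_checks(scores, THRESHOLDS)
    for name in failures:
        print(f"FAIL {name}: got {scores.get(name)}, need >= {THRESHOLDS[name]}")
    return 1 if failures else 0
```

A pipeline step could pass the scores from an evaluation run to `exit_code` and hand the result to `sys.exit`, so that a build with, say, a `safety_pass_rate` of 0.97 is blocked. Treating a missing metric as a failure is a deliberate choice here, so that a skipped evaluation cannot silently pass the gate.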

Summarized by

Navi
