Microsoft Copilot Exposes Thousands of Private GitHub Repositories, Raising Security Concerns

5 Sources

[1]

Chatbots are surfacing data from GitHub repositories that are set to private

Facepalm: Training new and improved AI models requires vast amounts of data, and bots are constantly scanning the internet in search of valuable information to feed the AI systems. However, this largely unregulated approach can pose serious security risks, particularly when dealing with highly sensitive data. Popular chatbot services like Copilot and ChatGPT could theoretically be exploited to access GitHub repositories that their owners have set to private. According to Israeli security firm Lasso, this vulnerability is very real and affects tens of thousands of organizations, developers, and major technology companies. Lasso researchers discovered the issue when they found content from their own GitHub repository accessible through Microsoft's Copilot. Company co-founder Ophir Dror revealed that the repository had been mistakenly made public for a short period, during which Bing indexed and cached the data. Even after the repository was switched back to private, Copilot was still able to access and generate responses based on its content. "If I was to browse the web, I wouldn't see this data. But anyone in the world could ask Copilot the right question and get this data," Dror explained. After experiencing the breach firsthand, Lasso conducted a deeper investigation. The company found that over 20,000 GitHub repositories that had been set to private in 2024 were still accessible through Copilot. Lasso reported that over 16,000 organizations were affected by this AI-generated security breach. The issue also impacted major technology companies, including IBM, Google, PayPal, Tencent, Microsoft, and Amazon Web Services. While Amazon denied being affected, Lasso was reportedly pressured by AWS's legal team to remove any mention of the company from its findings. Private GitHub repositories that remained accessible through Copilot contained highly sensitive data. 
Cybercriminals and other threat actors could potentially manipulate the chatbot into revealing confidential information, including intellectual property, corporate data, access keys, and security tokens. Lasso alerted the organizations that were "severely" impacted by the breach, advising them to rotate or revoke any compromised security credentials. The Israeli security team notified Microsoft about the breach in November 2024, but Redmond classified it as a "low-severity" issue. Microsoft described the caching problem as "acceptable behavior," though Bing removed cached search results related to the affected data in December 2024. However, Lasso warned that even after the cache was disabled, Copilot still retains the data within its AI model. The company has now published its research findings.

[2]

Thousands of exposed GitHub repositories, now private, can still be accessed through Copilot | TechCrunch

Security researchers are warning that data exposed to the internet, even for a moment, can linger in online generative AI chatbots like Microsoft Copilot long after the data is made private. Thousands of once-public GitHub repositories from some of the world's biggest companies are affected, including Microsoft's, according to new findings from Lasso, an Israeli cybersecurity company focused on emerging generative AI threats. Lasso co-founder Ophir Dror told TechCrunch that the company found content from its own GitHub repository appearing in Copilot because it had been indexed and cached by Microsoft's Bing search engine. Dror said the repository, which had been mistakenly made public for a brief period, had since been set to private, and accessing it on GitHub returned a "page not found" error. "On Copilot, surprisingly enough, we found one of our own private repositories," said Dror. "If I was to browse the web, I wouldn't see this data. But anyone in the world could ask Copilot the right question and get this data." After it realized that any data on GitHub, even briefly, could be potentially exposed by tools like Copilot, Lasso investigated further. Lasso extracted a list of repositories that were public at any point in 2024 and identified the repositories that had since been deleted or set to private. Using Bing's caching mechanism, the company found more than 20,000 since-private GitHub repositories still had data accessible through Copilot, affecting more than 16,000 organizations. Lasso told TechCrunch ahead of publishing its research that affected organizations include Amazon Web Services, Google, IBM, PayPal, Tencent, and Microsoft. Amazon told TechCrunch after publication that it is not affected by the issue. Lasso said that it "removed all references to AWS following the advice of our legal team" and that "we stand firmly by our research." 
For some affected companies, Copilot could be prompted to return confidential GitHub archives that contain intellectual property, sensitive corporate data, access keys, and tokens, the company said. Lasso noted that it used Copilot to retrieve the contents of a GitHub repo -- since deleted by Microsoft -- that hosted a tool allowing the creation of "offensive and harmful" AI images using Microsoft's cloud AI service. Dror said that Lasso reached out to all affected companies that were "severely affected" by the data exposure and advised them to rotate or revoke any compromised keys. None of the affected companies named by Lasso responded to TechCrunch's questions. Microsoft also did not respond to TechCrunch's inquiry. Lasso informed Microsoft of its findings in November 2024. Microsoft told Lasso that it classified the issue as "low severity," stating that this caching behavior was "acceptable." Microsoft no longer included links to Bing's cache in its search results starting December 2024. However, Lasso says that though the caching feature was disabled, Copilot still had access to the data even though it was not visible through traditional web searches, indicating a temporary fix.
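The identification step described in this report (take a list of repositories that were once public, then find the ones that have since been deleted or set to private) can be sketched roughly as follows. This is a minimal illustration, not Lasso's actual tooling: the function names, the `acme/...` slugs, and the choice to treat an unauthenticated HTTP 404 from github.com as "no longer public" are all assumptions made for the example. It does not check whether a cached copy is still retrievable through a search cache or a chatbot.

```python
import urllib.error
import urllib.request
from typing import Callable, Iterable


def http_status(url: str, timeout: float = 10.0) -> int:
    """Return the HTTP status code of an unauthenticated GET request to `url`."""
    req = urllib.request.Request(url, headers={"User-Agent": "zombie-repo-check"})
    try:
        with urllib.request.urlopen(req, timeout=timeout) as resp:
            return resp.status
    except urllib.error.HTTPError as err:
        # 4xx/5xx responses are raised as HTTPError; the code is what we want.
        return err.code


def find_zombie_repos(
    once_public: Iterable[str],
    status_of: Callable[[str], int] = http_status,
) -> list[str]:
    """Return the "owner/name" slugs that no longer resolve publicly.

    `once_public` is a hypothetical list of repositories known to have been
    public at some point. A 404 for an anonymous visitor is taken to mean the
    repository was deleted or switched to private.
    """
    zombies = []
    for slug in once_public:
        if status_of(f"https://github.com/{slug}") == 404:
            zombies.append(slug)
    return zombies
```

The status lookup is passed in as a parameter so the logic can be exercised without network access, for example by substituting a dictionary of known responses for `http_status`.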

[3]

Thousands of exposed GitHub repos, now private, can still be accessed through Copilot | TechCrunch

Security researchers are warning that data exposed to the internet, even for a moment, can linger in online generative AI chatbots like Microsoft Copilot long after the data is made private. Thousands of once-public GitHub repositories from some of the world's biggest companies are affected, including Microsoft's, according to new findings from Lasso, an Israeli cybersecurity company focused on emerging generative AI threats. Lasso co-founder Ophir Dror told TechCrunch that the company found content from its own GitHub repository appearing in Copilot because it had been indexed and cached by Microsoft's Bing search engine. Dror said the repository, which had been mistakenly made public for a brief period, had since been set to private, and accessing it on GitHub returned a "page not found" error. "On Copilot, surprisingly enough, we found one of our own private repositories," said Dror. "If I was to browse the web, I wouldn't see this data. But anyone in the world could ask Copilot the right question and get this data." After it realized that any data on GitHub, even briefly, could be potentially exposed by tools like Copilot, Lasso investigated further. Lasso extracted a list of repositories that were public at any point in 2024 and identified the repositories that had since been deleted or set to private. Using Bing's caching mechanism, the company found more than 20,000 since-private GitHub repositories still had data accessible through Copilot, affecting more than 16,000 organizations. Affected organizations include Amazon Web Services, Google, IBM, PayPal, Tencent, and Microsoft itself, according to Lasso. For some affected companies, Copilot could be prompted to return confidential GitHub archives that contain intellectual property, sensitive corporate data, access keys, and tokens, the company said. 
Lasso noted that it used Copilot to retrieve the contents of a GitHub repo -- since deleted by Microsoft -- that hosted a tool allowing the creation of "offensive and harmful" AI images using Microsoft's cloud AI service. Dror said that Lasso reached out to all affected companies that were "severely affected" by the data exposure and advised them to rotate or revoke any compromised keys. None of the affected companies named by Lasso responded to TechCrunch's questions. Microsoft also did not respond to TechCrunch's inquiry. Lasso informed Microsoft of its findings in November 2024. Microsoft told Lasso that it classified the issue as "low severity," stating that this caching behavior was "acceptable." Microsoft no longer included links to Bing's cache in its search results starting December 2024. However, Lasso says that though the caching feature was disabled, Copilot still had access to the data even though it was not visible through traditional web searches, indicating a temporary fix.

[4]

Copilot exposes private GitHub pages, some removed by Microsoft

Microsoft's Copilot AI assistant is exposing the contents of more than 20,000 private GitHub repositories from companies including Google, Intel, Huawei, PayPal, IBM, Tencent and, ironically, Microsoft. These repositories, belonging to more than 16,000 organizations, were originally posted to GitHub as public, but were later set to private, often after the developers responsible realized they contained authentication credentials allowing unauthorized access or other types of confidential data. Even months later, however, the private pages remain available in their entirety through Copilot.

AI security firm Lasso discovered the behavior in the second half of 2024. After finding in January that Copilot continued to store private repositories and make them available, Lasso set out to measure how big the problem really was.

Zombie repositories

"After realizing that any data on GitHub, even if public for just a moment, can be indexed and potentially exposed by tools like Copilot, we were struck by how easily this information could be accessed," Lasso researchers Ophir Dror and Bar Lanyado wrote in a post on Thursday. "Determined to understand the full extent of the issue, we set out to automate the process of identifying zombie repositories (repositories that were once public and are now private) and validate our findings."

After discovering Microsoft was exposing one of Lasso's own private repositories, the Lasso researchers traced the problem to the cache mechanism in Bing. The Microsoft search engine indexed the pages when they were published publicly, and never bothered to remove the entries once the pages were changed to private on GitHub. Since Copilot used Bing as its primary search engine, the private data was available through the AI chat bot as well.

After Lasso reported the problem in November, Microsoft introduced changes designed to fix it. Lasso confirmed that the private data was no longer available through Bing cache, but it went on to make an interesting discovery -- the availability in Copilot of a GitHub repository that had been made private following a lawsuit Microsoft had filed. The suit alleged the repository hosted tools specifically designed to bypass the safety and security guardrails built into the company's generative AI services. The repository was subsequently removed from GitHub, but as it turned out, Copilot continued to make the tools available anyway.

Lasso ultimately determined that Microsoft's fix involved cutting off access to a special Bing user interface, once available at cc.bingj.com, to the public. The fix, however, didn't appear to clear the private pages from the cache itself. As a result, the private information was still accessible to Copilot, which in turn would make it available to the Copilot user who asked.

The Lasso researchers explained:

Although Bing's cached link feature was disabled, cached pages continued to appear in search results. This indicated that the fix was a temporary patch and while public access was blocked, the underlying data had not been fully removed. When we revisited our investigation of Microsoft Copilot, our suspicions were confirmed: Copilot still had access to the cached data that was no longer available to human users. In short, the fix was only partial, human users were prevented from retrieving the cached data, but Copilot could still access it.

The post laid out simple steps anyone can take to find and view the same massive trove of private repositories Lasso identified.

There's no putting toothpaste back in the tube

Developers frequently embed security tokens, private encryption keys and other sensitive information directly into their code, despite best practices that have long called for such data to be inputted through more secure means. This potential damage worsens when this code is made available in public repositories, another common security failing. The phenomenon has occurred over and over for more than a decade.

When these sorts of mistakes happen, developers often make the repositories private quickly, hoping to contain the fallout. Lasso's findings show that simply making the code private isn't enough. Once exposed, credentials are irreparably compromised. The only recourse is to rotate all credentials.

This advice still doesn't address the problems resulting when other sensitive data is included in repositories that are switched from public to private. Microsoft incurred legal expenses to have tools removed from GitHub after alleging they violated a raft of laws, including the Computer Fraud and Abuse Act, the Digital Millennium Copyright Act, the Lanham Act, and the Racketeer Influenced and Corrupt Organizations Act. Company lawyers prevailed in getting the tools removed. To date, Copilot continues undermining this work by making the tools available anyway.

Microsoft representatives didn't immediately respond to an email asking if the company plans to provide further fixes.
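As one illustration of the "more secure means" this report mentions, a credential can be supplied at run time instead of being written into the source file. The sketch below reads a token from an environment variable. The variable name `SERVICE_API_TOKEN` and the function name are hypothetical, and environment variables are only one of several possible approaches (a secrets manager is another).

```python
import os


def load_api_token(var_name: str = "SERVICE_API_TOKEN") -> str:
    """Fetch a credential from the environment rather than from source code.

    `var_name` is a hypothetical variable name chosen for this example.
    """
    token = os.environ.get(var_name)
    if not token:
        # Fail loudly so a missing secret is never replaced by a hardcoded default.
        raise RuntimeError(f"Set the {var_name} environment variable before running.")
    return token
```

Because the token never appears in the repository, briefly making the code public does not by itself expose the credential.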

[5]

Thousands of GitHub repositories exposed via Microsoft Copilot

The repositories were public at some point, and Bing cached them

Thousands of private GitHub repositories, some of which possibly contained credentials and other secrets, are being exposed through Microsoft Copilot, the company's Generative Artificial Intelligence (GenAI) virtual assistant, experts have warned. Cybersecurity researchers from Lasso reported their findings to Microsoft but got a mixed response.

Lasso is a cybersecurity company focusing on threats emerging from the use of new AI tools, and reported Copilot was able to retrieve one of its own GitHub repositories which should have been private and inaccessible on the wider internet. Indeed, navigating directly to GitHub returns a "page not found" error. However, at one point the team mistakenly left the repository public for a short period of time - long enough for Microsoft's Bing search engine to index it. That allowed Copilot access to the data, even though it shouldn't have.

Lasso further investigated, compiling a list of tens of thousands of repositories that were public at one point and are set to private today, and found more than 20,000 that can still be accessed through Copilot. They belong to more than 16,000 organizations, including some of the technology sector's biggest players.

The implications of the findings could be quite severe. Speaking to TechCrunch, Lasso's co-founder Ophir Dror said it used the flaw to retrieve a GitHub repository that hosted a tool allowing the creation of "offensive and harmful" AI images using Microsoft's cloud AI service. Different company secrets could also be exposed this way, prompting Dror to advise victims to rotate or revoke their keys.

Microsoft allegedly told the company that the issue is "low severity" and that the caching behavior was "acceptable." However, as of December 2024, Microsoft no longer includes links to Bing's cache in its search results. Copilot can still access the data.

Security researchers discover that Microsoft's AI assistant Copilot can access and expose data from over 20,000 private GitHub repositories, affecting major tech companies and posing significant security risks.

AI-Powered Security Breach: Copilot Exposes Private GitHub Repositories

In a startling revelation, security researchers have uncovered a significant vulnerability in Microsoft's AI assistant, Copilot, which has been exposing data from thousands of private GitHub repositories. This discovery, made by Israeli cybersecurity firm Lasso, has sent shockwaves through the tech industry, highlighting the potential risks associated with AI-powered tools and data caching mechanisms [1].

The Scope of the Breach

Lasso's investigation revealed that over 20,000 GitHub repositories, which had been set to private in 2024, were still accessible through Copilot. This security breach affected more than 16,000 organizations, including major technology companies such as IBM, Google, PayPal, Tencent, Microsoft, and Amazon Web Services

2

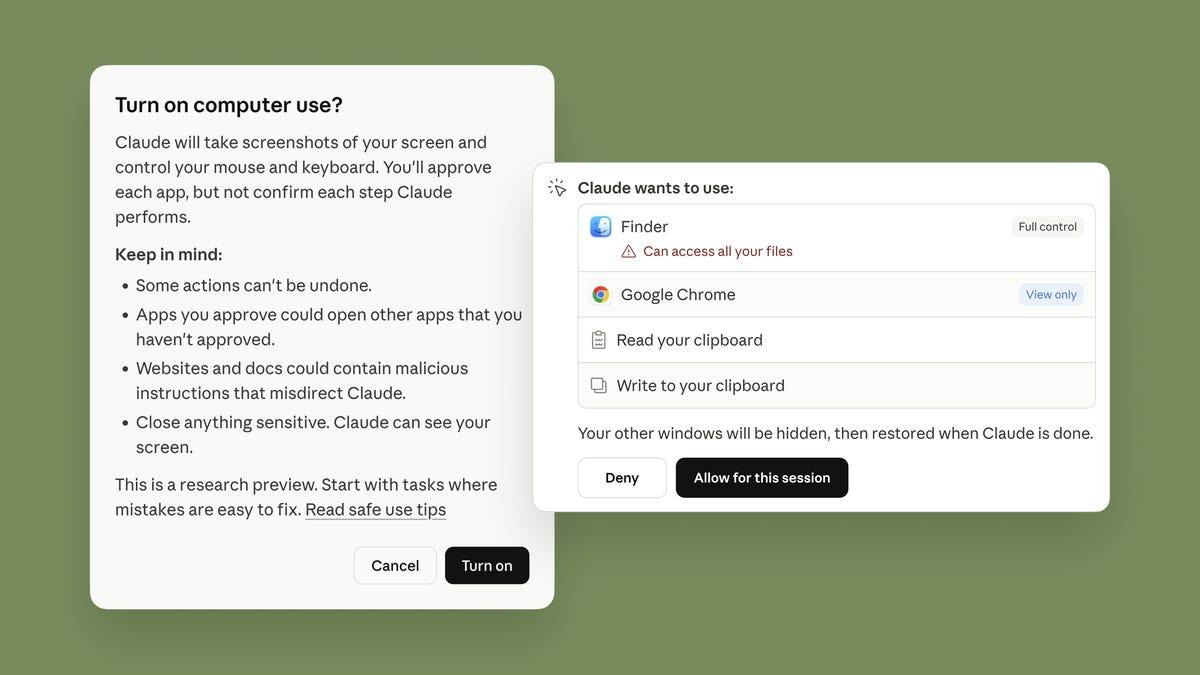

.How the Breach Occurred

The root cause of this breach lies in the caching mechanism of Microsoft's Bing search engine. When repositories were temporarily made public, Bing indexed and cached their contents. Even after these repositories were switched back to private, Copilot retained access to the cached data, making it potentially accessible to anyone using the AI assistant [3].

Sensitive Information at Risk

The exposed repositories contained highly sensitive data, including:

- Intellectual property

- Confidential corporate information

- Access keys and security tokens

- Tools for bypassing AI safety measures [4]

This breach has raised concerns about the potential for cybercriminals to manipulate Copilot into revealing confidential information, posing a significant security threat to affected organizations.

Microsoft's Response and Mitigation Efforts

When informed about the issue in November 2024, Microsoft initially classified it as a "low-severity" problem, describing the caching behavior as "acceptable." However, the company took some steps to address the situation:

- Removed links to Bing's cache from search results in December 2024

- Disabled public access to a special Bing user interface at cc.bingj.com [5]

Despite these measures, Lasso researchers found that Copilot could still access the cached data, indicating that the fix was only partial and temporary.

Implications and Recommendations

This incident highlights the challenges of managing data privacy and security in the age of AI-powered tools. It also underscores the importance of proper security practices when handling sensitive information in code repositories.

Experts recommend that affected organizations take the following steps:

- Rotate or revoke any compromised security credentials

- Review and update their data handling practices

- Implement stricter controls on repository visibility
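As a rough illustration of the first two steps, a team could scan a checked-out repository for strings that look like credentials, so it knows what to rotate before or after a visibility change. The sketch below is an assumption-laden example, not a complete scanner: the three patterns (the `AKIA` prefix of AWS access key IDs, the `ghp_` prefix of GitHub personal access tokens, and PEM private-key headers) cover only a few well-known formats, and the function names are hypothetical.

```python
import re
from pathlib import Path

# Illustrative patterns only; real secret scanners cover far more formats.
PATTERNS = {
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "github_token": re.compile(r"\bghp_[A-Za-z0-9]{36}\b"),
    "private_key_block": re.compile(r"-----BEGIN (?:RSA |EC |OPENSSH )?PRIVATE KEY-----"),
}


def scan_text(text: str) -> list[tuple[int, str]]:
    """Return (line number, pattern name) pairs for credential-like strings."""
    findings = []
    for lineno, line in enumerate(text.splitlines(), start=1):
        for name, pattern in PATTERNS.items():
            if pattern.search(line):
                findings.append((lineno, name))
    return findings


def scan_tree(root: str) -> dict[str, list[tuple[int, str]]]:
    """Scan every readable text file under `root`, skipping the .git directory."""
    results = {}
    for path in Path(root).rglob("*"):
        if not path.is_file() or ".git" in path.parts:
            continue
        try:
            text = path.read_text(encoding="utf-8")
        except (UnicodeDecodeError, OSError):
            continue  # binary or unreadable file
        hits = scan_text(text)
        if hits:
            results[str(path)] = hits
    return results
```

Any credential flagged this way in a repository that was ever public should be treated as compromised and rotated, since making the repository private again does not remove copies that were already cached.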

As AI technologies continue to evolve, this incident serves as a stark reminder of the need for robust security measures and careful consideration of the potential risks associated with these powerful tools.

Summarized by Navi
