TikTok accused of creating racist AI ads for indie publisher Finji without permission

3 Sources

[1]

Tunic publisher claims TikTok ran 'racist, sexist' AI ads for one of its games without its knowledge

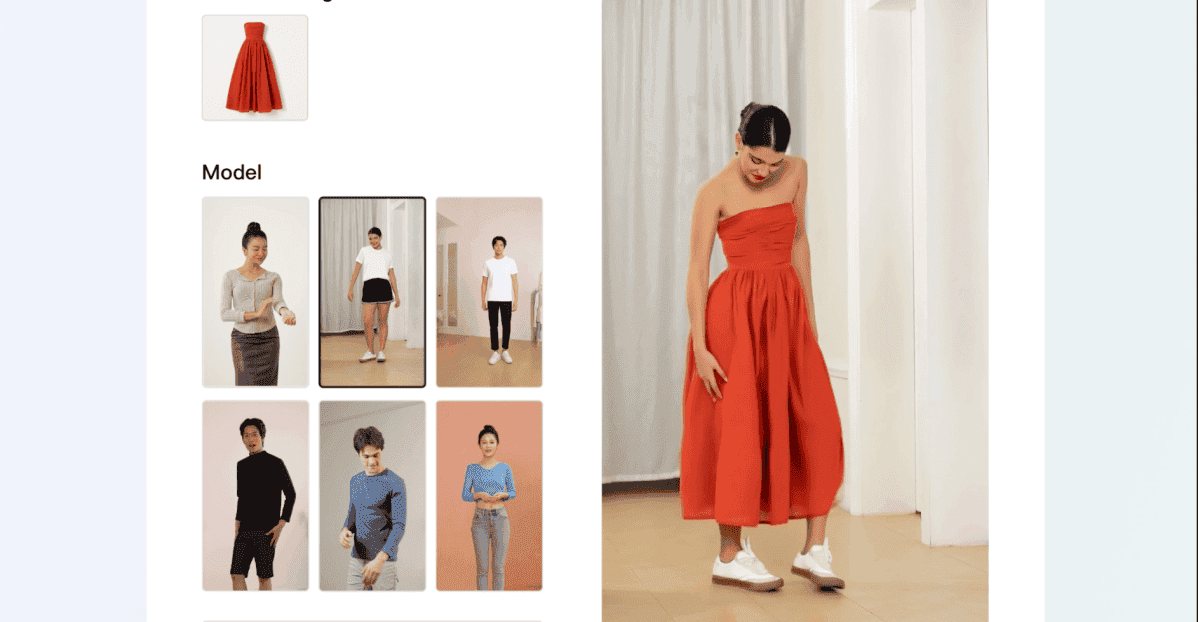

Indie publisher and developer Finji has accused TikTok of using generative AI to alter the ads for its games on the platform without its knowledge or permission. Finji, which published indie darlings like Night in the Woods and Tunic, said it only became aware of the seemingly modified ads after being alerted to them by followers of its official TikTok account. As reported by IGN, Finji alleges that one ad that went out on the platform was modified to display a "racist, sexualized" representation of a character from one of its games. Finji does advertise on TikTok, but it told IGN that it has AI "turned all the way off." After CEO and co-founder Rebekah Saltsman received screenshots of the ads in question from fans, she asked TikTok to investigate.

A number of Finji ads have appeared on TikTok, some that include montages of the company's games, and others that are game-specific, like one for Usual June. According to IGN, the offending AI-modified ads (which are still posted as if they're coming directly from Finji) appeared as slideshows. Some images don't appear to be that different from the source, but one possibly AI-generated example seen by IGN depicts Usual June's titular protagonist with "a bikini bottom, impossibly large hips and thighs, and boots that rise up over her knees." Needless to say (and obvious from the official screenshot used as the lead image for this article), this is not how the character appears in the game.

As for TikTok's response, IGN printed a number of the platform's replies to Finji's complaints, in which it initially said, in part, that it could find no evidence that "AI-generated assets or slideshow formats are being used." This was despite Finji having sent TikTok's customer support team a screenshot of the clearly edited image mentioned above. In a subsequent exchange, TikTok appeared to acknowledge the evidence and assured the publisher it was "no longer disputing whether this occurred." It added that it had escalated the issue internally and was investigating it thoroughly.

TikTok does have a "Smart Creative" option on its ad platform, which essentially uses generative AI to modify user-created ads so that multiple versions are pushed out, with the versions its audience responds to more positively used more often. Another option is the "Automate Creative" features, which use AI to automatically optimize things like music, audio effects and general visual "quality" to "enhance the user's viewing experience." Saltsman showed IGN evidence that Finji has both of these options turned off, which a TikTok agent also confirmed for the ad in question.

After a number of increasingly frustrated exchanges, in which TikTok eventually admitted to Saltsman that the ad "raises significant issues, including the unauthorized use of AI, the sexualization and misrepresentation of your characters, and the resulting commercial and reputational harm to your studio," the Finji co-founder was offered something of an explanation. TikTok said that Finji's campaign used a "catalog ads format" designed to "demonstrate the performance benefits of combining carousel and video assets in Sales campaigns." It said that this "initiative" helped advertisers "achieve better results with less effort," but did not address the harmful content directly. Finji also seemingly opted into this ad format without knowing it had done so.

TikTok declined to comment on the matter when approached by IGN. Saltsman was told the issue could not be escalated any higher, and the matter remained unresolved when IGN published its report. In a statement to the outlet, Saltsman said she was "a bit shocked by TikTok's complete lack of appropriate response to the mess they made." She went on to say that she expected both an apology and clear reassurance that a similar issue would not recur, but was "obviously not holding my breath for any of the above."

[2]

Indie publisher alleges TikTok is AI-generating offensive ads using its characters and they can't make it stop: 'Does TikTok want me to be grateful for the mistreatment of my company?'

Finji CEO Rebekah Saltsman called out TikTok for "a profound void where common sense and business sense usually reside." Night in the Woods and Tunic publisher Finji said TikTok is using its characters and art without its consent to run offensive ads "enhanced" with generative AI.

As reported by IGN, Finji CEO Rebekah Saltsman called attention to the issue publicly in a February 5 Bluesky post. "If you happen to see any Finji ads that look distinctly UN-Finji-like, send me a screencap," Saltsman told her followers. The full post read: "Posting this here for reasons 😒 If you happen to see any Finji ads that look distinctly UN-Finji-like, send me a screencap. If you are someone who writes intelligently about something like 'massive company running ai versions of paid ads without small company's consent' idk, maybe reach out." (@bexsaltsman.bsky.social)

We have reached out to TikTok for comment, and will update this story if we hear back.

Saltsman told IGN that, while Finji does use TikTok to serve ads, those ads don't make use of generative AI. Finji says it received screenshots from fans who were served AI versions of the ads. IGN was shown at least one example where the lead character of Finji's next game, a Black woman, was turned into a sexualized, racist caricature through AI alterations.

"After checking the creatives, we do not see any indication that AI-generated assets or slideshow formats are being used," TikTok Ads Support reportedly told Finji when contacted about the issue. When supplied with screenshots of the ads in question, TikTok Ads Support reportedly told Finji that "we are no longer disputing whether this occurred," and that the publisher would be put in contact with a "senior representative who has the authority to address the situation."

TikTok support reportedly told Finji that this was part of "an initiative aimed at helping advertisers like you achieve better results with less effort." Finji was told it could request to opt out. In a later reply, TikTok allegedly told Finji that it "would not be contacted by a 'senior representative' because the person currently speaking was 'the highest internal team available for this type of issue.'" In another reply, however, it said it would "re-escalate the issue internally."

In a statement, Saltsman told IGN: "It's one thing to have an algorithm that's racist and sexist, and another thing to use AI to churn content of your paying business partners, and another thing to do it against their consent, and then to also NOT respond to any of those mistakes in a coherent way? Really?

"What really is utterly baffling is what appears to be a profound void where common sense and business sense usually reside. Does TikTok want me to be grateful for the mistreatment of my company and our game?"

[3]

Tunic, Night in the Woods Publisher Says TikTok Is Creating and Running Racist GenAI Ads for Its Games Without Permission - IGN

Finji, publisher of beloved indie titles such as Night in the Woods and Tunic and the developer behind Overland and Usual June, says that TikTok has been using generative AI to modify its ads on the platform without permission and pushing those ads to its users without Finji's knowledge, including one ad that was modified to include a racist, sexualized stereotype of one of Finji's characters.

This was first brought up by Finji CEO and co-founder Rebekah Saltsman on Bluesky, where she shared a screencap of a social media post from another brand that appeared to be going through the same thing, and said the following: "If you happen to see any Finji ads that look distinctly UN-Finji-like, send me a screencap."

Saltsman told IGN that Finji's official account on TikTok does push ads for its games, but has "AI turned all the way off." The team first learned that generative AI ads were being created without their knowledge thanks to social media comments on Finji's actual, regular ads from users concerned about what they were seeing. Saltsman was able to get screenshots from audience members showing the offending ads, which prompted her to escalate the issue to TikTok support.

The original ads in question appear to be videos advertising Finji's games, with one showing off several games and the other focused on Usual June. The AI-"enhanced" versions, which appear on TikTok as if posted directly from the official Finji account, seem to consist of slideshows rather than videos, as indicated by a number of comments on both ads. Finji has sent IGN screenshots submitted by viewers who claim they saw the AI versions of those ads.

While several of the AI-"enhanced" images seem to be relatively unedited compared to their official counterparts, one image seen by IGN is noticeably modified. The offending image depicts an edited version of the official cover art, the original version of which is pictured above.
In the seemingly AI-edited version, the main character June (center in the image above) is depicted alone, but the image extends down to her ankles. She is depicted with a bikini bottom, impossibly large hips and thighs, and boots that rise up over her knees, seemingly invoking a harmful stereotype. This is extremely distinct from June's actual depiction in the game.

IGN has viewed a conversation between the official Finji account and TikTok customer support, including a part of the discussion where the customer support agent confirmed Finji did have TikTok's "Smart Creative" option shut off. "Smart Creative" is essentially a TikTok function that uses generative AI to create multiple versions of user-created ads. So if a company makes Ad A with Image A and Text A, and Ad B with Image B and Text B, generative AI will mix and match these in different combinations to test which versions of the ads work best with users, and then surface the best ones more frequently.

There's also an "Automate Creative" feature that uses AI to "automatically optimize" assets, such as "improving" images, music, audio, and other elements to make an ad allegedly more pleasing to an audience. Saltsman confirms that Finji has both of those options shut off, and showed screenshots of the TikTok backend for several of the ads in question to confirm this.

Finji also says it is unable to view or edit the AI-generated versions of its own ads, and is only aware of them through numerous comments on the ads, as well as through users in its official Discord reporting the problem and sharing screenshots. Saltsman says she suspects at least one other inappropriate generative AI ad is circulating, based on comments on some of the ads regarding another character in Usual June, Frankie, but she is unable to see the modifications herself and thus cannot confirm.

In that same support conversation, the TikTok support agent was unable to find an immediate solution for Finji.
At one point, the agent suggests that one of Finji's ads was inadvertently using the Automate Creative feature, to which Finji replies, "I have never turned that on," and has the agent confirm that option was not on for the ads described above. Later in the conversation, the agent says, "I am checking all the possible cause [sic] why this can happen but as per checking all the setup is clear and there should be no ai generated content included." The agent offers to "raise a ticket" for further investigation, but ignores repeated requests from Finji to share a timeline for when the ticket might be responded to.

Since this incident took place, Finji staff have made efforts to follow up and get answers, only to be repeatedly rebuffed by TikTok support. Finji has sent IGN screenshots of all of the following messages to TikTok, and their responses.

The above conversation happened on February 3. On February 6, after a follow-up message to support from Finji asking for an update, TikTok Ads Support responded as follows:

After checking the creatives, we do not see any indication that AI-generated assets or slideshow formats are being used. Both ads are confirmed as video creatives sourced directly from your Creative Library / TikTok posts, and creatives appear unchanged at the ad level. There is no evidence that AI-generated content or auto-assembled slideshow assets were added by the system. [All emphasis TikTok's.]

A Finji representative responded that same day with the screenshot of the offensive ad (which Finji had already sent during the initial support request) and asked for TikTok to escalate the issue, which prompted the following response from TikTok:

We acknowledge receipt of the evidence you've provided and understand the seriousness of your concerns.
Based on the materials and context you've shared, we recognize that this situation raises significant issues, including the unauthorized use of AI, the sexualization and misrepresentation of your characters, and the resulting commercial and reputational harm to your studio. We want to be clear that we are no longer disputing whether this occurred. We understand that you have provided documentation and that audience comments on the ads further corroborate your claims. This matter will be escalated immediately for further review at the highest appropriate level. We are intiating [sic] an internal escalation to ensure this issue is investigated thoroughly, and we will work to connect you with a senior representative who has the authority to address the situation and discuss next steps toward resolution.

On February 10, having neither received further responses nor been connected with a "senior representative," Finji followed up again to ask about the status of the ticket. It received a message containing the following:

I understand how surprising it was to see AI-generated or automatically created content appear in your ads, especially when you weren't expecting any changes to your creatives. Here's what happened and why you saw those assets: Your campaign recently included an ad that used a catalog ads format designed to demonstrate the performance benefits of combining carousel and video assets in Sales campaigns. This is part of an initiative aimed at helping advertises [sic] like you achieve better results with less effort. Campaigns that use these mixed assets typically see a 1.4x ROAS [return on ad spend] lift, and we wanted to ensure you had access to that potential improvement. [All emphasis TikTok's.]

The message from support went on to describe the claimed improvements gained from a catalog ads format, followed by an offer to request to be added to an "opt-out blocklist" for which approval "isn't guaranteed."
Finji responded, understandably pretty irate at this point, demanding to know why it had not been put in touch with a senior representative, why TikTok wasn't addressing the "SEXUALIZED, RACIST, and SEXIST representation of [the] studio's work" [emphasis Finji's], why Finji couldn't track AI-generated versions of its ads, why it had been opted into this without its consent, and why TikTok couldn't guarantee an opt-out.

TikTok responded again, stating that the most recent response it sent was in fact from its escalation team, and that Finji would not be contacted by a "senior representative" because the person currently speaking was "the highest internal team available for this type of issue." The representative went on to say the escalation team had already reviewed the situation and "their findings were included in the previous response" and that the feedback "had been taken seriously." It said that Finji had been included in "a broader automated initiative" and concluded that the escalation team had "already provided their final findings and actions on this matter."

After another reply from Finji, the TikTok representative promised to "re-escalate the issue internally," but this was the final communication received as of publication time, even after another check-in from Finji on February 17. When contacted by IGN, TikTok declined to comment on the record.

"I have to admit I am a bit shocked by TikTok's complete lack of appropriate response to the mess they made," said Saltsman in a statement to IGN today. "It's one thing to have an algorithm that's racist and sexist, and another thing to use AI to churn content of your paying business partners, and another thing to do it against their consent, and then to also NOT respond to any of those mistakes in a coherent way? Really?

"What really is utterly baffling is what appears to be a profound void where common sense and business sense usually reside.
Does TikTok want me to be grateful for the mistreatment of my company and our game? Based on the wild response through the weeks of customer service correspondence we have received, I think this is their stance and take on their obvious offensive and racist technology and process and how they secretly use it on the assets of their paying clients without consent or knowledge.

"This is just simply embarrassing but not for me as an individual. For me- I am just super pissed off. This is my work, my team's work and mine and my company's reputation- which I have spent over a decade building. My expectation was a proper apology, systemic changes in how they use this technology for paying clients and a hard look at why their technology is so obviously racist and sexist. I am obviously not holding my breath for any of the above."

Indie game publisher Finji alleges TikTok used generative AI to modify its game advertisements without consent, creating racist and sexualized depictions of characters. Despite having AI features disabled, Finji discovered the problematic AI-generated ads only after fans reported them. TikTok's response has been inconsistent, initially denying the issue before acknowledging it but offering no clear resolution.

Indie Game Publisher Finji Confronts TikTok Over Unauthorized AI Ads

Finji, the indie game publisher behind beloved titles like Tunic and Night in the Woods, has accused TikTok of using generative AI to alter advertisements for its games without permission or knowledge. The company only discovered the problematic AI-generated ads after followers of its official TikTok account sent screenshots showing disturbing modifications to game characters [1]. CEO and co-founder Rebekah Saltsman publicly addressed the issue on Bluesky, asking followers to send screencaps of any Finji ads that looked "distinctly UN-Finji-like" [2].

Source: PC Gamer

The most egregious example involved unauthorized advertisements for Usual June, Finji's upcoming game featuring a Black woman protagonist. According to evidence shared with IGN, one AI-modified image depicted the character June with "a bikini bottom, impossibly large hips and thighs, and boots that rise up over her knees," creating what Finji describes as a racist, sexualized caricature that bears no resemblance to the character's actual in-game appearance [3]. These unauthorized advertisements appeared as slideshows rather than the video format Finji had created, and were displayed on TikTok as if posted directly from Finji's official account.

Source: Engadget

TikTok's Inconsistent Response Raises Platform Control Questions

When Saltsman escalated the issue to TikTok customer support, the platform's response was both inconsistent and inadequate. Initially, TikTok stated it could find "no evidence" that "AI-generated assets or slideshow formats are being used," despite Finji providing clear screenshots of the modified content [1]. In subsequent exchanges, TikTok appeared to acknowledge the evidence, stating it was "no longer disputing whether this occurred," and promised to escalate the issue internally [1].

Finji had explicitly disabled TikTok's AI features, including the Smart Creative and Automate Creative options. Smart Creative uses generative AI to create multiple versions of user-created ads, mixing and matching different elements to test which combinations perform best with audiences. The Automate Creative feature uses AI to automatically optimize assets like images, music, and audio effects [3]. Saltsman provided evidence to IGN showing both features were turned off, which a TikTok agent confirmed for the ads in question [1].

Catalog Ads Format Reveals Deeper Platform Control Issues

After multiple frustrated exchanges, TikTok eventually admitted the ad "raises significant issues, including the unauthorized use of AI, the sexualization and misrepresentation of your characters, and the resulting commercial and reputational harm to your studio" [1]. The platform explained that Finji's campaign used a "catalog ads format" designed to "demonstrate the performance benefits of combining carousel and video assets in Sales campaigns." TikTok described this as "an initiative aimed at helping advertisers like you achieve better results with less effort," but notably did not address the harmful content directly [2]. Finji apparently opted into this ad format without knowing it had done so [1].

The incident highlights critical questions about platform control over AI-generated content and advertiser consent. Finji reports being unable to view or edit the AI-generated versions of its own ads, only becoming aware of them through comments and Discord reports from users [3]. Saltsman suspects at least one other inappropriate ad featuring the character Frankie is circulating based on user comments, but cannot confirm without seeing the modifications herself.

Brand Integrity and Reputational Damage for Small Studios

Saltsman was told the issue could not be escalated any higher, and the matter remained unresolved. In her statement to IGN, she expressed shock at "TikTok's complete lack of appropriate response to the mess they made." She expected both an apology and clear reassurance about how similar issues would be prevented, but was "obviously not holding my breath for any of the above" [1]. Saltsman elaborated on the severity: "It's one thing to have an algorithm that's racist and sexist, and another thing to use AI to churn content of your paying business partners, and another thing to do it against their consent, and then to also NOT respond to any of those mistakes in a coherent way?" [2]

The situation raises urgent concerns for other advertisers about brand integrity when platforms deploy AI tools without explicit consent. For small indie game publishers like Finji, such misrepresentation carries significant commercial and reputational risks. The case demonstrates how automated systems can produce harmful content that contradicts a company's values and damages its relationship with its audience. As Saltsman pointedly asked: "Does TikTok want me to be grateful for the mistreatment of my company and our game?" [2]

TikTok declined to comment when approached by IGN [1].
