Nvidia CEO Jensen Huang projects $1 trillion in AI chips revenue through 2027, doubling forecast

39 Sources

[1]

Jensen just put Nvidia's Blackwell and Vera Rubin sales projections into the $1 trillion stratosphere | TechCrunch

Nvidia CEO Jensen Huang threw out a lot of numbers -- mostly of the technical variety -- during his keynote Monday to kick off the company's annual GTC Conference in San Jose, California. But there was one financial figure that investors surely took notice of: his projection that there will be $1 trillion worth of orders for Nvidia's Blackwell and Vera Rubin chips, a monetary reflection of a booming AI business. About an hour into his keynote, Jensen noted that last year Nvidia saw about $500 billion in demand for its Blackwell and upcoming Rubin chips through 2026. "Now, I don't know if you guys feel the same way, but $500 billion is an enormous amount of revenue," he said. "Well, I'm here to tell you that right now where I stand -- a few short months after GTC DC, one year after last GTC -- right here where I stand, I see through 2027, at least $1 trillion." The Rubin computing chip architecture, which was first announced in 2024, has been described by Jensen as the state of the art in AI hardware that outperforms its Blackwell predecessor. The company said in January, when it officially started production of Rubin, it would operate 3.5x faster than the Blackwell architecture on model-training tasks and 5x faster on inference tasks, reaching as high as 50 petaflops. Nvidia has said it expects to ramp up production in the second half of the year.

[2]

Jensen Huang expects Nvidia to sell $1 trillion of AI hardware through 2027 -- AI buildout intensifies as Agentic AI takes hold

Jensen Huang, chief executive and a co-founder of Nvidia, expects his company to earn $1 trillion selling AI hardware through 2027, he revealed at his keynote at the GTC 2026 event. If this happens, then Nvidia will be the first company in history to earn $1 trillion by selling AI hardware, which will once again prove its strong position as an indisputable AI hardware market leader. "I see [sales of AI hardware], through 2027, at least one trillion dollars," Huang said on stage at GTC 2026. There are currently no companies in the world generating $1 trillion in annual revenue, though Nvidia expects its AI hardware revenue for the 2025 - 2027 period to be $1 trillion. The biggest companies are still well below $1 trillion per year, although some are getting closer. Even the world's largest company by sales, Walmart, earned $681 billion in annual revenue last fiscal year, so it is still more than $300 billion short of $1 trillion. Amazon earned $638 billion in revenue last year, followed by Apple with $391 billion. If Nvidia crossed $1 trillion in revenue in 2027, then it would likely earn more than Apple and Amazon did in 2025 combined. Nvidia earned $215 billion in its fiscal year 2026 that ended on January 31, 2026, up from $130.5 billion in FY2025. For the first quarter of its fiscal 2027, Nvidia projects revenue to hit $78 billion, up from $44.062 billion in Q1 FY2026. If Nvidia continues to grow its revenue at a pace of 164% per year (that is, multiplying by about 1.64 each year), then its revenues will hit $578.264 billion in fiscal 2028, which is more than Apple's or UnitedHealth Group's revenue in 2025. Some analysts think Nvidia could reach $1 trillion annual revenue by around 2030 if global AI infrastructure spending continues to grow and will be in the multi-trillion-dollar range around 2030. But is it really possible? Perhaps, the only way for Nvidia's revenue to reach $1 trillion is to grow faster than the market, increase the volumes of products it sells, and possibly increase the average sales price of its products. 
This may not be too hard as Nvidia's Rubin Ultra AI GPU will increase its compute chiplet count from two in the case of Blackwell and Rubin to four. As a result, Nvidia will have to increase its price accordingly, which will increase its revenue. As it is highly likely that Feynman GPUs will retain the quad chiplet design, those AI GPU prices will be here to stay. A big question, however, is whether Nvidia can meet demand for AI hardware worth $1 trillion in the coming years as the company's supplier TSMC expands its capacity at a rather conservative pace.
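
The compounding behind the fiscal 2028 figure quoted above can be checked with a few lines of Python. This is only a sketch: it assumes that "164% per year" means each year's revenue is about 1.64 times the previous year's, and that the projection starts from the $215 billion fiscal 2026 figure and runs for two years. The function name `project_revenue` is hypothetical and introduced here purely for illustration.

```python
def project_revenue(base_billion: float, yearly_multiplier: float, years: int) -> float:
    """Compound a base revenue (in $ billions) by a fixed yearly multiplier."""
    return base_billion * yearly_multiplier ** years


# Figures quoted in the article, in billions of dollars.
fy2025 = 130.5
fy2026 = 215.0

# Observed FY2025 -> FY2026 multiplier; close to the 1.64 used in the article.
observed_multiplier = fy2026 / fy2025

# Assumption: "164% per year" is read as multiplying by 1.64 each year.
fy2028 = project_revenue(fy2026, 1.64, 2)

print(f"observed multiplier: {observed_multiplier:.2f}")
print(f"projected FY2028 revenue: ${fy2028:.3f} billion")
```

Under these assumptions the result matches the $578.264 billion figure, which suggests the article applied the multiplier twice from fiscal 2026 rather than growing revenue by 164% on top of the base.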

[3]

Nvidia Expects to Make $1 Trillion From AI Chips Through 2027

Nvidia Corp., the company at the center of an explosive build-out of AI computing, expects to generate at least $1 trillion from its Blackwell and Rubin chips through the end of 2027. The company had previously forecast that the chips would bring $500 billion in sales by the end of 2026. The latest forecast, delivered by Chief Executive Officer Jensen Huang during a company event, extends out the outlook. The shares gained as much as 4.8% on the remarks. They had been down 3.4% this year heading into the event. Meta Platforms Inc. will pay as much as $27 billion over the next five years for access to artificial intelligence infrastructure from cloud provider Nebius Group NV as it spends aggressively to compete with the industry's top frontier models. Nebius, a so-called neocloud that operates data centers and has a strategic partnership with Nvidia Corp., will provide Meta $12 billion of dedicated capacity starting in early 2027, the Dutch company said in a statement Monday. Meta also committed to buying as much as $15 billion in additional capacity that the cloud provider is building for third-party customers. The outlay represents one of the biggest single contracts that Meta has signed, underscoring the Instagram and Facebook owner's push for more computing capacity to power the development of AI products. Last year, it signed a separate $3 billion deal with Nebius. Nebius shares jumped 15% in premarket trading. The stock had closed at $112.95 in New York on Friday and has nearly quadrupled in the past 12 months. Meta gained 2.8% before the market opened after previously closing at $613.71. Meta and some of its largest tech peers are expected to spend some $650 billion in 2026 to build data centers and purchase other infrastructure in anticipation of an AI services explosion in the coming years. 
Meta has made AI the company's top priority, and is investing heavily to compete with rivals like OpenAI and Google. It has also inked multi-billion dollar partnership agreements with Nvidia and Advanced Micro Devices Inc. for AI infrastructure since the start of the year. And Meta is developing its own chips in-house. Chief Executive Officer Mark Zuckerberg said last year that Meta will spend $600 billion on US infrastructure projects by 2028. To do so, Meta has leaned on profits generated by its advertising business, but has also raised outside financing to fund infrastructure projects. The company is developing its own high-end models and has built several AI products, including a chatbot, that is available inside its various apps. A spokesperson for Meta confirmed the Nebius deal, and said its strategy of diversifying its partnerships and technology stack for AI was part of "building a more resilient and flexible infrastructure." Nebius, which is based in Amsterdam and split off from the Russian internet giant Yandex in 2024, is one of a handful of newcomers to capitalize on the AI boom by building data centers tailor-made to train models and run services like ChatGPT. Nvidia has been using its enormous resources to finance this new breed of neoclouds that compete with larger cloud-computing providers like Google and Amazon.com Inc. Last week, Nvidia announced it will invest $2 billion in Nebius, fueling a 16% jump in the Dutch company's shares. Much of Nvidia's financing spree has gone to companies that buy its chips, leading to criticism that such circular investments are fueling a bubble. In January, Nvidia announced a similar $2 billion investment in Nebius competitor CoreWeave Inc. to deploy its products. It also put $30 billion into OpenAI this year, and participated in a $2 billion funding round for UK neocloud Nscale.

[4]

Nvidia sales opportunity for Blackwell, Rubin chips more than $1 trillion by 2027

SAN JOSE, California, March 17 (Reuters) - Nvidia (NVDA.O) CEO Jensen Huang said on Tuesday at a press conference that the revenue opportunity for the company's Blackwell and Rubin artificial intelligence chips is "likely to be larger than $1 trillion" by the end of 2027. The estimate does not include the company's networking chips and the new processors made with technology from the Groq licensing deal it signed in December. Reporting by Stephen Nellis and Max A. Cherney in San Jose; Editing by Franklin Paul

[5]

Nvidia's Jensen Huang predicts $1tn in AI chip revenue over 2 years

Nvidia chief executive Jensen Huang on Monday said he expects at least $1tn in AI hardware revenue over roughly the next two years, driven by rapid adoption of AI agents such as Anthropic's Claude Code. "Right now where I stand . . . I see through 2027 at least $1tn" in revenue, Huang said, adding he was "certain" demand would prove to be higher. Nvidia's shares briefly surged more than 2 per cent after Huang's comments on Monday at the semiconductor giant's flagship GTC event in Silicon Valley. The forecast is above Wall Street expectations. But the enthusiasm was short-lived because the stock gave up its gains to trade lower than before Huang's presentation. Huang's bullish forecasts for AI adoption have struggled to impress Wall Street in recent months amid concerns about the returns from huge investments in AI, threats to the semiconductor supply chain from conflict in the Middle East and shortages of the memory chips Nvidia's products need. The Nvidia co-founder had previously forecast $500bn in AI-driven revenue through to the end of 2026 based on "high confidence demand and purchase orders" for the company's latest Blackwell and Rubin hardware. Huang's prediction of $1tn in revenue from AI hardware is far higher than Wall Street consensus estimates for Nvidia's total revenue. Analysts' forecasts for its 2027 and 2028 fiscal years -- running through to the end of January 2028 -- total about $835bn, according to CapitalIQ. Huang said increasing demand for computing power was being driven by popular AI tools such as Anthropic's Claude Code and the need for "inference" -- the process of running AI models and applications. He also unveiled a new addition to Nvidia's family of chips, the Groq 3 "language processing unit", which has been designed to speed up how AI systems respond to users' queries. 
The move shows Nvidia is looking to shore up its leading position in the AI chip market by exploring new chip architectures, departing from its history of offering a single GPU chip for AI workloads. "We are in volume production now," he said. "We'll ship [Groq 3] in the second half [of 2026], probably in the Q3 timeframe." The chip will be manufactured by South Korea's Samsung, Huang said, a departure for Nvidia which has typically used Taiwan Semiconductor Manufacturing Company for building its AI processors. It will be the first new product to come out of Nvidia's licensing deal with chip start-up Groq late last year, under which it also hired its founder Jonathan Ross -- who previously helped create Google's AI chip.

[6]

Nvidia GTC 2026: CEO Jensen Huang sees $1 trillion in orders for Blackwell and Vera Rubin through '27

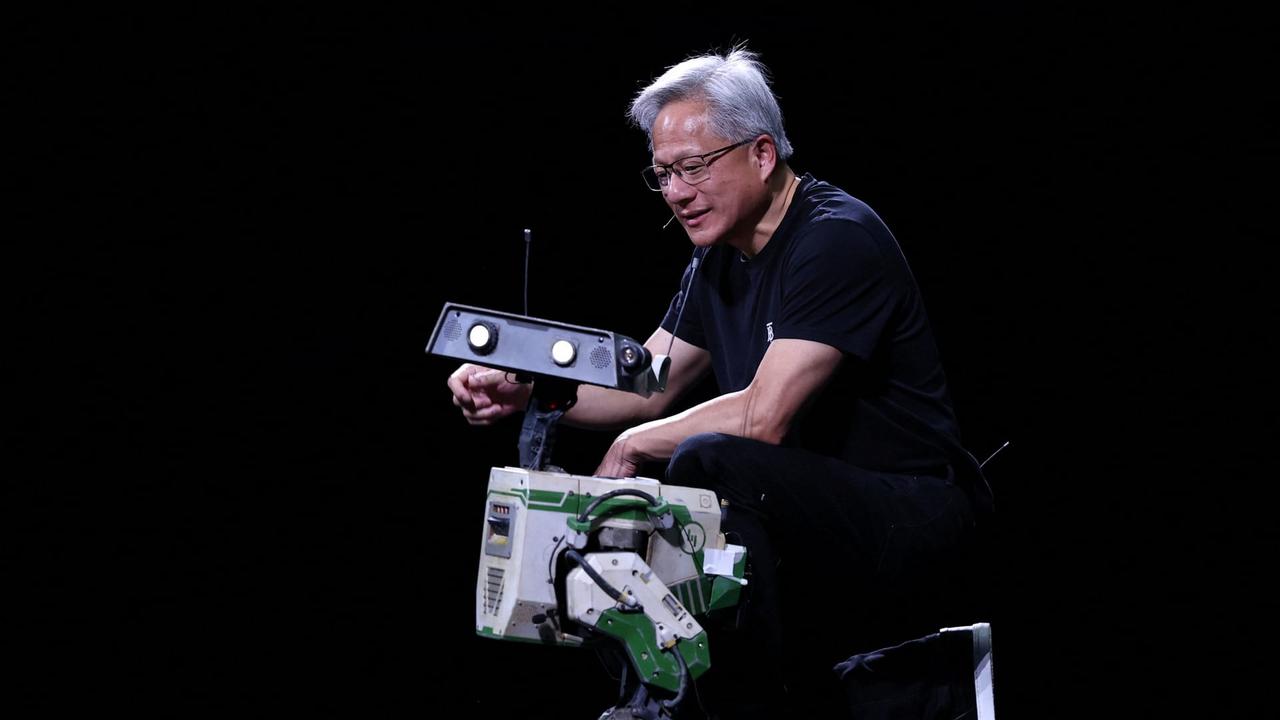

Nvidia's CEO Jensen Huang speaks during a keynote address at Nvidia's GTC Conference on March 16, 2026 in San Jose, California. Nvidia's GTC Conference focuses on recent developments and future uses of AI.

At Nvidia's annual developer conference on Monday, CEO Jensen Huang took the stage to a packed house and said he expects purchase orders between Blackwell and Vera Rubin to reach $1 trillion through 2027. Last year, the company had projections for a $500 billion revenue opportunity between the two chip technologies. Following Nvidia's earnings report last month, Finance chief Colette Kress said the company expects growth this year to exceed what was included in that estimate. Nvidia's graphics processing units for artificial intelligence have turned the brand into a household name and the most valuable public company in the world, worth about $4.5 trillion. As mass AI adoption shifts from chatbots to agentic apps that spawn off other agents to accomplish tasks, the number of tokens being generated has exploded, creating even greater need for running inference at faster speeds.

[7]

Nvidia CEO heralds 'inference inflection' as next phase of AI boom, backed by $1 trillion in orders

Nvidia CEO Jensen Huang on Monday elaborated on his vision for keeping his company at the forefront of the artificial intelligence boom that he predicted will produce a $1 trillion backlog in orders within the next year. Sporting his signature black leather jacket, Huang spent more than two hours sauntering across a stage in a packed arena in San Jose, California, explaining how Nvidia's processors became indispensable AI components and highlighting the products that he believes will keep the company in the catbird's seat. Huang, 63, also touched upon many of the themes that he has been trumpeting since he emerged as one of Silicon Valley's most influential voices during the past few years, including his thesis that the AI buildup remains in its infancy. "We reinvented computing, just like the PC (personal computer) revolution and the internet revolution," Huang proclaimed. "We are now at the beginning of a new platform change." To hammer home his points, Huang predicted that Nvidia will be grappling with a $1 trillion backlog in orders for its chips by the end of the year, doubling his estimate from a year ago. Nvidia has leveraged its dominant position in the AI chip market so far to increase its annual revenue from $27 billion in 2022 to $216 billion last year -- a growth rate that has translated into a $4.5 trillion market value for the Santa Clara, California, company. But Nvidia's once-torrid stock has cooled since the company briefly became the first to surpass a $5 trillion market value last October amid worries that the AI buzz is overblown. "This is just a white-knuckle period for the technology industry," said Wedbush Securities analyst Dan Ives. Even after Nvidia released a quarterly report in late February that far exceeded analyst forecasts and management provided a rosy outlook, the company's stock price is still down by 6% from where it stood before those numbers came out. 
While analysts expect Nvidia's revenue to surpass $330 billion for the upcoming year, the company is facing its first serious challenges in the AI chip market as other technology powerhouses such as Google and Facebook's corporate parent Meta Platforms try to develop their own processors. Nvidia's potential growth is being held back by security and trade barriers imposed by the U.S. that have impeded the company's ability to sell its advanced chips in China. Huang envisions Nvidia maintaining its instrumental role in AI by continuing to feed the feverish demand for chips that power chatbots like OpenAI's ChatGPT and Google's Gemini and expanding its reach into the emerging market for inference processors. Once an AI tool is trained, inference chips enable the technology to take what it has learned and produce responses -- whether it be writing a document or creating an image -- more efficiently than the processors that were used while the large language models were being built. "The inference inflection has arrived," Huang said. To help navigate its transition into the inference field, Nvidia struck a multi-billion dollar licensing deal with market specialist Groq that included the hiring of that startup's top engineers. "Nvidia isn't going to cede any market share to Google or Meta," said Ives, who believes Nvidia's market value will eclipse $6 trillion during the next year or so.

[8]

Jensen Huang predicts Nvidia AI chip revenue will hit $1 trillion by 2027

The big picture: The world's most valuable company is widening its ambitions once again. During Nvidia's annual GPU Technology Conference in Silicon Valley, chief executive Jensen Huang unveiled a sweeping roadmap that deepens the company's bets on artificial intelligence hardware and pushes its manufacturing footprint into new territory. "The inference inflection has arrived," Huang said during the keynote, framing the next stage of the AI boom around systems designed not just to train models but to run them at massive scale. Huang said Nvidia expects revenue from AI-focused chips to total at least $1 trillion through 2027, roughly double his earlier projection of $500 billion through the end of 2026, as enterprise demand for computational capacity continues to surge. The figure far exceeds Wall Street estimates, which peg Nvidia's total revenue for fiscal 2027 and 2028 at roughly $835 billion, according to Capital IQ data. Huang said his prediction stems from what he described as "high confidence demand and purchase orders" for the company's next-generation processors. The forecast also extends Nvidia's earlier cumulative outlook rather than representing a new annual revenue target, effectively stretching the company's previous $500 billion platform estimate by another year. Huang's message was based on a familiar theme: that the exponential growth in computing power needed for AI inference continues to reshape the semiconductor industry's economics. The CEO cited rising enterprise adoption of AI developer tools, such as Anthropic's Claude Code, as one of the main drivers of the need for more efficient compute systems. "Inference will only become more important as more companies adopt personal AI agent tools such as OpenClaw," he said. 
Nvidia increasingly frames this next phase as the rise of "AI factories" running agentic workloads at scale, where inference demand could eventually eclipse model training as the dominant computing task. To feed that demand, Nvidia is expanding beyond its longstanding GPU architecture. Huang announced a new chip, the Groq 3, described as a "language processing unit" designed to accelerate the responsiveness of AI systems during user interactions. The chip will be produced by Samsung Electronics. Groq 3 is also the first product to emerge from Nvidia's licensing agreement with chip startup Groq, signed late last year. The deal brought Groq founder Jonathan Ross - best known for his work on Google's early AI chips - into Nvidia's fold. Huang said the Groq 3 chips are already in volume production and scheduled to begin shipping in the second half of 2026, likely during the third quarter. Huang also used the stage to highlight Nvidia's growing presence across unconventional computing environments. He detailed partnerships involving robotaxi systems and introduced a chip concept intended for "orbital data centers" - an approach aimed at managing AI workloads in space. The idea aligns loosely with similar concepts Elon Musk has floated, including integrating his companies SpaceX and xAI through such orbital infrastructure. Analysts suggested that investors remain cautious, balancing near-term excitement over Nvidia's technological expansion with questions about the sustainability of long-term growth. Gene Munster, managing partner at Deepwater Asset Management, said the company's stock faces what he called a "wall of worry" despite sharply rising demand. "Bottom line from Jensen's keynote," he told the Financial Times, "demand is measurably stronger than even the highest expectations, and investors are still having a hard time getting comfortable with that."

[9]

Nvidia Expects Agentic AI To Drive $1 Trillion In Revenue

Nvidia is now expecting to generate $1 trillion in revenue through 2027 from its Blackwell and Vera Rubin platforms, CEO Jensen Huang said at the annual GPU Technology Conference in California. The revenue expectation is up 100% from the $500 billion in revenue through 2026 that the company previously projected at last year's GTC. "A trillion dollars is an enormous amount of infrastructure; you have to have complete confidence that the trillion dollars you're putting down will be utilized, would be performant, would be incredibly cost-effective and have useful life for as long as you could see," Huang said. That "complete confidence" is coming from a belief that demand for AI will rise exponentially, thanks to the rapid developments in agentic AI, Huang argued. That belief is based on a new token-driven AI economy that he detailed previously in the company's latest earnings report. That new revenue model is based on the rising importance of inference with the proliferation of agentic AI models. As agentic AI hype takes over Silicon Valley and models mature, the amount of data managed by an AI system expands, and the importance of inference outgrows that of training. The inflection point for this new order came with Anthropic's Claude Code AI agent, Huang argued. "Claude Code has revolutionized software engineering," Huang said. "There's not one software engineer [at Nvidia] today who is not assisted by one or many AI agents helping them code." At multiple points in his more than two-and-a-half-hour-long keynote speech, Huang compared agentic AI to other foundational tech breakthroughs, at one point calling it "the new computer." "Every single SaaS company will become an AgaaS company, an agentic as a service company," Huang predicted. It's not the first time Huang has sung the praises of agentic AI and how it's reshaping the tech industry, and it likely won't be the last. 
In January, agentic AI and the importance of inference were center stage in the launch of Nvidia's Rubin platform. Just a few weeks before that, the company made its largest purchase ever by acquiring Groq (not Grok), a chipmaker that specializes in inference. The fruits of Nvidia's renewed mission were three announcements: a major push into CPUs, Nvidia's first Groq chips that the company will ship in the second half of the year, and a collaboration with OpenClaw, the open-source AI agent software that made waves with its viral success earlier this year, after launching just a few months earlier under a different name. "OpenClaw has open-sourced, essentially, the operating system of agentic computers," Huang said. "It is no different than how Windows made it possible for us to create personal computers." He then talked about how, in the rise of the internet, every company needed to have an "HTML-strategy" and now, in the age of AI agents, every company will need to have "an OpenClaw strategy." But OpenClaw is a bit of a wild card. The agent requires full access to your computer and your files, creating a cybersecurity minefield. Top tech companies and even the Chinese government have reportedly advised employees against relying on OpenClaw and similar agentic AI platforms out of security fears. Giving an AI boundless control over your computer is also likely to have some big risks, like the off chance that OpenClaw's AI agent deletes your entire inbox, which actually happened to a Meta executive last month. In an attempt to address at least some of those concerns, Huang unveiled NemoClaw, Nvidia's attempt at making OpenClaw allegedly more secure and private for enterprises to use. Nvidia's push into the OpenClaw world is also symbolic of the company's desire to be more competitive in the open-source space, seeing its role as an emerging open-source model provider as a tool to drive further global reliance on the company's hardware. 
On top of the inference-focused announcements, Huang also announced that the company is working on a new Vera Rubin computer to be used in space-based AI data centers, and will be partnering with Hyundai, Nissan and the top Chinese automakers BYD and Geely to build 18 million robotaxis each year. The finance world has grown weary of the multibillion-dollar AI investments and spending commitments it once loved, and the rapid growth trajectory for AI demand that it once steadfastly believed in. You can see how much that skepticism has grown within the last few months by looking at how investors have become significantly tougher to please with each announcement. Investors have grown concerned that Nvidia's revenue growth might be peaking, per Bloomberg, with shares falling 5.5% the day following a stellar earnings report last month. Huang's proclamations on Monday and his confidence in agentic AI might not have changed that outlook, with shares of the company down a little less than 1% after market close despite the usually share-spiking keynote speech.

[10]

'We expect at least $1 trillion revenue': Nvidia CEO predicts huge windfall on Rubin and Blackwell chips as he reveals new Vera CPU and server racks

Nvidia is 'now a computing platform that runs all of AI', says Jensen Huang

* Nvidia CEO Jensen Huang predicts bumper times ahead
* Huang says he expects around $1 trillion from Rubin and Blackwell sales
* Nvidia unveils new Vera chips and server racks at GTC 2026

Jensen Huang has stated he expects Nvidia to see around $1 trillion from selling its AI hardware through 2027. Speaking in his opening keynote at Nvidia GTC 2026, the CEO and co-founder said sales of its Blackwell and Rubin chips are set to be a huge earner for the company in coming months. And this may not be all - as Nvidia announced a range of new hardware releases, expanding its range of offerings even further.

All in on compute

"I see (AI chip sales) through 2027 - at least one trillion dollars," Huang declared in a presentation full of announcements, but with a focus on meeting the increasing demand for compute in the AI era. "I believe that computing demand has increased by 1 million times in the last two years," Huang said. "It is the feeling that we all have. It is the feeling every startup has." The $1 trillion figure drew gasps from the thousands in attendance at Nvidia GTC 2026, especially as Huang noted the company had previously forecast that data center gear would bring $500 billion in sales through the end of 2026. In order to keep this momentum going, Huang had shown off several major announcements on stage, including no fewer than seven new Vera Rubin chips. These include a new Vera CPU, available in the second half of 2026, which the company says is 'purpose-built' for agentic AI, offering twice the efficiency and 50% faster than traditional CPUs, along with the highest single-thread performance and bandwidth per core around today. 
Nvidia also announced a new rack integrating 256 liquid-cooled Vera CPUs, enough to sustain more than 22,500 concurrent CPU environments, each running independently at full performance - a key part of the company's drive towards "AI factories" to power use cases from quantum computing to robotics. Huang also revealed the Groq 3 LPU (language processing unit) will now be part of Nvidia's product line-up, helping boost large language model (LLM) inference and improving how responses to AI prompts are generated.

[11]

Nvidia CEO Jensen Huang: $1 trillion in chip sales coming

Why it matters: The company is operating at the center of the AI universe, providing the critical processing infrastructure that powers models like OpenAI's ChatGPT and Anthropic's Claude.

Driving the news: Huang said at Nvidia GTC 2026 in San Jose that he anticipates the revenue benchmark from sales of the company's current Blackwell chips and its next-generation Vera Rubin chips through 2027.

* And "I am certain computing demand will be much higher than that," Huang said.
* He said in October 2025 that the company had $500 billion in AI chip orders through 2026.

State of play: The AI economy is transitioning to inference models, having moved beyond the training phase, bolstering demand for complex chips.

[12]

Nvidia's Jensen Huang thinks $1 trillion won't be enough to meet AI demand -- and he's paying engineers in AI tokens worth half their salary to prove it | Fortune

During his keynote address Monday at Nvidia's GTC conference in San Jose, Huang said the company doubled its demand forecast within the next year. "I see through 2027 at least $1 trillion," he said. "In fact, we are going to be short. I am certain computing demand will be much higher than that." And he's already preparing for that reality with an unusual incentive to attract top talent and wring more computing power from his workforce: offering engineers AI tokens worth nearly half their salary. The AI boom is pushing infrastructure investments to new heights. Tech companies are investing a staggering $700 billion into the data center buildout, a sum that rivals the GDP of developed economies like Sweden, and is more than double the total inflation-adjusted cost of the Apollo missions -- projects that sent humans to the moon. Nvidia is a critical supplier in that buildout, providing the processors that power AI factories. The $1 trillion demand figure is further proof that the buildout is all gas, no brakes, even as competitors like Advanced Micro Devices (AMD) struggle to close the gap. All of this comes despite looming fears of an AI bubble, as flagged by business leaders like Microsoft CEO Satya Nadella and "Big Short" investor Michael Burry. Huang made the prediction alongside claims that AI agents could soon run the world, as well as announcements around space-based computing designed to launch AI into orbit, a concept Elon Musk has spotlighted as a potential solution to the energy demands of expanding data centers. "We are completely resetting and starting the largest buildout of human history," Huang said. "Most of the world's industries building AI factories, building chip plants, building computer plants are represented here today." The company's recent earnings reports have added credibility to Huang's claims. Last month, Nvidia posted $215.9 billion in revenue for fiscal 2026, up 65% from a year ago, the highest annual result ever. 
Data center revenue alone rose 75% from a year ago, reaching $62.3 billion. As business leaders aim to harness AI to boost worker productivity, Huang offered a glimpse at how Nvidia plans to operationalize that ambition: paying engineers in tokens -- the currency of AI -- to amplify their output. "I could totally imagine in the future every single engineer in our company will need an annual token budget," he said. "They're going to make a few 100,000 a year as their base pay. I'm going to give them probably half of that on top of it as tokens so that they could be amplified 10 times." Tokens are the basic units of data or words that AI models use to process language and recognize patterns, making them critical to the future of AI deployment. AI company OpenAI estimates that one token is equal to approximately four characters, with a single one-to-two sentence prompt requiring about 30 tokens. "Fortune Magazine," for example, may be broken down into five tokens: "For" "tune" "Mag" "az" "ine." At the allowance levels Huang described, engineers would have access to billions of tokens annually, unleashing a torrent of compute power. In Huang's scenario, tokens would be an added employment perk for engineers at his firm, arming them with the power needed to conduct deep research for the company. The Nvidia CEO said other tech firms will quickly follow suit and use tokens as a recruiting tool to attract top industry talent. "It is now one of the recruiting tools in Silicon Valley: how many tokens come along with my job," he said. "The reason for that is very clear because every engineer that has access to tokens will be more productive."
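
The token arithmetic described above can be roughed out in Python. This is a back-of-the-envelope sketch, not Nvidia's or OpenAI's method: it uses the roughly four-characters-per-token rule of thumb cited in the article, and it assumes a hypothetical $300,000 base salary and a hypothetical price of $10 per million tokens, neither of which appears in the source. The function names are illustrative only.

```python
CHARS_PER_TOKEN = 4  # rough rule of thumb attributed to OpenAI in the article


def estimate_tokens(text: str) -> int:
    """Approximate a token count from character length (heuristic only)."""
    return max(1, round(len(text) / CHARS_PER_TOKEN))


def tokens_for_budget(budget_usd: float, usd_per_million_tokens: float) -> int:
    """Number of tokens a dollar budget buys at a flat per-million price."""
    return int(budget_usd / usd_per_million_tokens * 1_000_000)


# The heuristic will not always match a real tokenizer's split.
print(estimate_tokens("Fortune Magazine"))

# Hypothetical inputs: $300,000 base pay, half of it granted as tokens,
# priced at an assumed $10 per million tokens.
base_pay_usd = 300_000
token_budget_usd = base_pay_usd / 2
print(tokens_for_budget(token_budget_usd, 10.0))
```

With these assumed inputs the budget works out to tokens in the billions per year, consistent with the order of magnitude the article describes. The character heuristic gives a slightly different count for "Fortune Magazine" than the five-piece split quoted above, which is expected since real tokenizers split on learned subword units rather than fixed lengths.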

[13]

Nvidia forecasts $1tn in AI-driven orders by 2027

Chief executive Jensen Huang says surging demand for artificial intelligence tools could drive Nvidia's sales towards $1tn within two years. Nvidia has said demand for artificial intelligence could help drive up to $1tn in orders by 2027, as it unveiled a new generation of chips and technologies. Speaking at the company's annual GTC developer conference, chief executive Jensen Huang said demand for computing power continued to accelerate. Nvidia shares were up 0.3% in pre-market trading on Tuesday following the announcements. The company introduced a range of new products, including semiconductors built using technology from start-up Groq. It also outlined plans to develop chips for use in space-based data centres. Nvidia said its latest platforms would allow artificial intelligence systems to operate in orbit, supporting geospatial analysis and autonomous space operations. The company has been a major beneficiary of the boom in AI, with revenues rising from $27bn in 2022 to $216bn last year, helping lift its market value to about $4.5tn. However, its shares have cooled in recent months after briefly surpassing a $5tn valuation last October, amid concerns that enthusiasm for AI may be overstated. Analysts say Nvidia must now convince investors that its rapid growth can be sustained, even as competition intensifies. Technology companies including Google and Meta Platforms are developing their own AI chips, while US export restrictions have limited Nvidia's ability to sell advanced semiconductors in China. Despite those challenges, demand for Nvidia's latest Blackwell and Rubin chips remains strong. "This is just a white-knuckle period for the technology industry," said Dan Ives. Nvidia is also expanding into so-called "inference" chips, which allow AI systems to generate responses more efficiently after training, powering tools such as ChatGPT and Gemini. "The inference inflection has arrived," Huang said. 
To help navigate its transition into the inference field, Nvidia struck a multi-billion dollar licensing deal with market specialist Groq that included the hiring of that startup's top engineers. "Nvidia isn't going to cede any market share to Google or Meta," said Ives, who believes Nvidia's market value will eclipse $6 trillion during the next year or so.

[14]

Nvidia chief expects revenue of $1 trillion through 2027

San José (United States) (AFP) - Nvidia chief Jensen Huang on Monday said he expects the artificial intelligence chip powerhouse to bring in at least a trillion dollars in revenue through next year. Huang made the ramped-up revenue forecast while outlining Nvidia's latest innovations for a packed audience at the opening of its annual developers conference in Silicon Valley. "I see, through 2027, at least a trillion dollars (in revenue)," Huang said. "I am certain that computing demand will be higher than that." A year earlier, at the same event, Huang had projected revenue of half that much. The revenue is expected to be driven by demand for its premium graphics processing units (GPUs), which Huang touted as delivering high performance while reining in the cost of delivering AI services. Huang contended that demand for computing power has increased "a million-fold" in just two years and shows no sign of abating. He went on to show Nvidia's latest innovations when it came to GPUs and platforms for building AI into nearly everything, from robots and apps to data centers orbiting the planet. Nvidia is tailoring its technology for "agentic" AI and training models, as well as inferencing -- in which AI makes deductions or generates content, demonstrations showed. The entire tech world -- from big names like OpenAI and Anthropic to young startups -- feels like they could grow revenue and their AI "if they could just get more capacity," Huang told the audience. Nvidia is aiming its AI expertise at seemingly all sectors from automobiles to health care. "Every single enterprise company, every single software company in the world needs an AI agent strategy," Huang said. "This is going to become a multi trillion-dollar industry, offering not just tools for people to use, but agents that are specialized," he added.

[15]

5 takeaways from Nvidia CEO Jensen Huang's rare insider blog post on AI

Why it matters: Huang -- whose company underpins the AI boom -- rarely publishes long essays about the tech's broader impact, offering other industry players and investors a rare window into his thinking.

The big picture: Huang argues that chip demand, expansion and hiring are still in the early stages of what he calls a long buildout.
* "AI is one of the most powerful forces shaping the world today. It is not a clever app or a single model; it is essential infrastructure," he writes in his seventh blog post since 2016.
* "Every company will use it. Every country will build it."

AI is different from software

Huang made the case that AI breaks the model of how traditional software worked.
* Traditional software runs on pre-written rules coded by humans. AI systems, he argues, generate answers in real time based on context.
* "Every response is newly created. Every answer depends on the context you provide. This is not software retrieving stored instructions. This is software reasoning and generating intelligence on demand," he writes.

The boom can create more jobs

Huang argues AI will create new kinds of jobs, especially in infrastructure and skilled trades.
* As the technology handles routine tasks, he writes, companies can serve more customers and expand. This dynamic, he says, ultimately drives hiring.
* "Productivity creates capacity. Capacity creates growth," he writes.

Reality check: There's relentless debate on how AI impacts the labor market, including how it speeds up work and makes people busier.
* Huang has previously suggested "everybody's jobs will be different" from AI. He also famously said at the Milken conference in 2025: "You're not going to lose your job to an AI, but you're going to lose your job to somebody who uses AI."

AI is a five-layer cake

Zoom in: AI can be understood by looking at the "five-layer stack" that Huang describes as "Energy → chips → infrastructure → models → applications."
* "Every successful application pulls on every layer beneath it, all the way down to the power plant that keeps it alive," he writes.

Flashback: The "five-layer cake" framework was originally introduced at the World Economic Forum in Davos in January.

"Trillions" more needed for AI infrastructure

What's next: Huang notes that the AI boom is only just beginning and will require trillions of dollars in additional investment.
* "We have only just begun this buildout," he writes of data centers and infrastructure. "We are a few hundred billion dollars into it. Trillions of dollars of infrastructure still need to be built."

AI boom has only just begun

The bottom line: "We are still early. Much of the infrastructure does not yet exist. Much of the workforce has not yet been trained. Much of the opportunity has not yet been realized. But the direction is clear."

[16]

AI inflection point: As Nvidia's Jensen Huang outlines vision for agents and the AI factory, he forecasts big jump in revenue - SiliconANGLE

Artificial intelligence inference, the process of getting answers from AI models, has reached an inflection point and the AI factory is now poised to drive much of the global economy, Nvidia Corp. Chief Executive Jensen Huang declared today. During the AI chipmaking giant's GTC gathering in San Jose today, Huang said he believes Nvidia will see $1 trillion in chip orders through 2027. The growth in revenue will stem from what he views as a key shift in AI's main focus from training models to advanced inferencing, the ability to understand instructions and take action. "An AI that could generate became an AI that could reason, an AI that could reason became an AI that could do work," Huang said during his keynote remarks. "It's way past training now. Inference is your workloads and tokens are your new commodity. We have reached that moment, inference inflection has arrived." Huang's vision for the evolving AI economy was supported by a slew of announcements from Nvidia on Monday, ranging from new Vera Rubin GPUs and CPUs to an expansion of open model families. He noted that Nvidia's process of "extreme co-design" has revolutionized the cost of tokens, the text, images or audio that AI models use to understand and generate outputs. "This is your token factory, this is your AI factory, this is your revenue," Huang said. "Our cost per token is the lowest in the world. You can't beat it." Huang's comments reflected the pressure key vendors in the AI world are feeling to make the case for a return on investment. As SiliconANGLE's analysts have recently noted, enterprises have poured millions to tens of millions of dollars into building AI infrastructure, and many companies expect 2026 to be the year of ROI. 
As Huang's remarks about future revenue in the neighborhood of $1 trillion demonstrate -- double his figure for 2024-2025 issued six months ago -- Nvidia does not see a slowdown in spending for its advanced AI processors. This is being driven by its latest chip platform announcements, which the CEO noted will significantly increase the rates for token generation. "We're going to take our token generation rate from 2 million per second to 700 million," Huang said. "This is the power of extreme codesign," he added in a reference to Nvidia's method of engineering hardware, software, networking, models and data pipelines simultaneously. Nvidia also announced new open-source tools to enhance AI agentic capabilities. The most significant of these latest offerings for developers involved OpenClaw, a highly popular open-source personal AI assistant that has a reported 27 million monthly visitors. "OpenClaw is the most popular open-source project in the history of humanity, and it did it in just a few weeks," Huang told the GTC audience. "It has open-sourced essentially the operating system of agentic computers." Nvidia's version, NemoClaw, is linked to another Nvidia project called Nemotron, allowing users to access other AI models optimized for tasks such as generating text and analyzing graphs. "The OpenClaw event cannot be understated," Huang noted. "This is as big a deal as HTML. OpenClaw gave the industry exactly what it needed at exactly the right time. There's just one catch." That catch involves security. Enterprises interested in adopting OpenClaw must deal with the tool's lack of basic data protection. To address this issue, Nvidia has implemented a set of security protocols for NemoClaw that adds privacy and cybersecurity guardrails, along with limits to the agent's network access. Nvidia's announcements spotlighted the growing influence of agents and the shifting economics surrounding AI. 
The rapid adoption of OpenClaw and the speed with which Nvidia has moved to embrace the open-source model show that agents are beginning to build their own ecosystems. Nvidia does not intend to let this trend develop without taking an active role. It's also becoming clear that the rise of the AI factory has placed a premium on token generation and the power to drive it. As AI factories become the next generation of global infrastructure, phrases like "token cost per watt" are going to become increasingly important. Nvidia remains the central player in how AI will evolve. The company's Vera Rubin platform highlights Nvidia's vision for the shift taking place from computing as infrastructure to computing as production. Data centers have become factories, an essential part of the enterprise business model, and Nvidia's moves this week were designed to cement its leadership role in AI going forward. "Nvidia offers the only infrastructure in the world that you could go anywhere in the world and build with complete confidence," Huang said. "We are now a computing platform that runs all of AI. This is not a one-app technology. It is fundamental."
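To put Huang's quoted jump in token generation rate (from 2 million to 700 million tokens per second) in perspective, the short sketch below computes the implied speedup and how long a fixed workload would take at each rate. The one-trillion-token workload is a hypothetical figure chosen only for illustration, and the article does not say what system or scale the quoted rates refer to.

```python
# Back-of-the-envelope comparison of the two token generation rates quoted above.
OLD_RATE = 2_000_000    # tokens per second (quoted starting point)
NEW_RATE = 700_000_000  # tokens per second (quoted target)


def speedup(old_rate: float, new_rate: float) -> float:
    """Ratio of the new generation rate to the old one."""
    return new_rate / old_rate


def seconds_to_generate(tokens: float, rate: float) -> float:
    """Time in seconds to generate `tokens` at `rate` tokens per second."""
    return tokens / rate


if __name__ == "__main__":
    workload = 1_000_000_000_000  # hypothetical workload: one trillion tokens
    print(f"Speedup: {speedup(OLD_RATE, NEW_RATE):.0f}x")
    print(f"At old rate: {seconds_to_generate(workload, OLD_RATE) / 86_400:.1f} days")
    print(f"At new rate: {seconds_to_generate(workload, NEW_RATE) / 60:.1f} minutes")
```

The quoted figures imply a 350-fold increase, which turns a multi-day job at the old rate into well under an hour at the new one for this assumed workload.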

[17]

Jensen Huang says the $700 billion AI buildout is just the beginning: 'Trillions of dollars of infrastructure still need to be built' | Fortune

$700 billion. That's larger than the GDP of Sweden, Israel, or Argentina. $700 billion is roughly more than the value of Disney, Nike, and Target combined. $700 billion is even more than the total inflation-adjusted cost of the U.S. Apollo program, which sent humans to the moon -- twice over. It's a lot, to say the least. But that sky-high expenditure is just the beginning of the AI infrastructure buildout, according to Nvidia CEO Jensen Huang. In a blog post released on Tuesday, the billionaire, himself worth a paltry $154 billion in comparison, said the infrastructure expenditures could easily reach trillions of dollars. "We have only just begun this buildout," Huang wrote. "We are a few hundred billion dollars into it. Trillions of dollars of infrastructure still need to be built." He's not alone in his thinking. McKinsey estimates data center investment could reach a cumulative $6.7 trillion globally by 2030 to meet booming AI demand. That soaring capital expenditure forecast is one of the key forces driving the U.S. economy today. Harvard economist Jason Furman crunched the numbers last October and found that without data centers, U.S. GDP growth in the first half of 2025 would have been a paltry 0.1%. JPMorgan Chase global market strategist Stephanie Aliaga estimated AI-related capital expenditure contributed 1.1% to GDP growth, "outpacing the U.S. consumer as an engine of expansion." And that's not stopping anytime soon. Nvidia is currently one of the central drivers of the data center buildout. Its graphics processing units (GPUs) and other products serve as the backbone of hyperscale AI facilities. Other tech companies like Alphabet, Amazon, Meta, and Microsoft are fueling much of the buildout, dedicating up to $700 billion combined this year to the building of infrastructure across the U.S., with much of the construction concentrated in Virginia, and significant buildouts planned in Georgia and Pennsylvania. 
AI capex driving demand for skilled trades

Yet Huang's analysis extends beyond observing the high sums of cash fueling the AI infrastructure buildout. He says that investment is a boon for the labor market, fueling demand for an array of skilled workers. "The labor required to support this buildout is enormous," he wrote. "AI factories need electricians, plumbers, pipefitters, steelworkers, network technicians, installers, and operators," jobs long considered safe from AI, according to recent doomsday estimations. These roles require specialized training in the trades, but the talent to fill them is in short supply, leading to dire shortages of skilled workers such as electricians. The Bureau of Labor Statistics estimates demand for electricians will increase 9% through 2034, a rate much faster than for all occupations and averaging around 81,000 openings for the position each year. And it's not just electricians: demand for the construction and extraction industry will also grow faster than the average for all occupations over the next eight years, with an average of about 649,000 openings each year. However, experts warn the jobs produced by the data center buildout are typically short-term. According to Brookings Institution research, the temporary jobs offer little long-term or large-scale employment opportunities. That demand comes as AI development threatens white-collar jobs, especially entry-level roles. New research from the AI company Anthropic finds the technology is already theoretically capable of performing most tasks associated with coding, law, and business and finance. Some business leaders, such as Microsoft AI chief Mustafa Suleyman, think white-collar work will be automated by AI within 18 months. Despite those dismal predictions, Huang paints an optimistic picture of AI's role in the workforce, framing it as a tool that enhances human capability rather than a threat to someone's 9-to-5. 
"A radiologist's purpose is to care for patients," he wrote. "When AI takes on more of the routine work, radiologists can focus on judgment, communication, and care. Hospitals become more productive. They serve more patients. They hire more people."

[18]

Nvidia CEO heralds 'inference inflection' as next phase of AI boom, backed by $1 trillion in orders

Nvidia CEO Jensen Huang on Monday elaborated on his vision for keeping his company at the forefront of the artificial intelligence boom that he predicted will produce a $1 trillion backlog in orders within the next year. Sporting his signature black leather jacket, Huang spent more than two hours sauntering across a stage in a packed arena in San Jose, California, explaining how Nvidia's processors became indispensable AI components and highlighting the products that he believes will keep the company in the catbird's seat. Huang, 63, also touched upon many of the themes that he has been trumpeting since he emerged as one of Silicon Valley's most influential voices during the past few years, including his thesis that the AI buildup remains in its infancy. "We reinvented computing, just like the PC (personal computer) revolution and the internet revolution," Huang proclaimed. "We are now at the beginning of a new platform change." To hammer home his points, Huang predicted that Nvidia will be grappling with a $1 trillion backlog in orders for its chips by the end of the year, doubling his estimate from a year ago. Nvidia has leveraged its dominant position in the AI chip market so far to increase its annual revenue from $27 billion in 2022 to $216 billion last year -- a growth rate that has translated into a $4.5 trillion market value for the Santa Clara, California, company. But Nvidia's once-torrid stock has cooled since the company briefly became the first to surpass a $5 trillion market value last October amid worries that the AI buzz is overblown. "This is just a white-knuckle period for the technology industry," said Wedbush Securities analyst Dan Ives. Even after Nvidia released a quarterly report in late February that far exceeded analyst forecasts and management provided a rosy outlook, the company's stock price is still down by 6% from where it stood before those numbers came out. 
While analysts expect Nvidia's revenue to surpass $330 billion for the upcoming year, the company is facing its first serious challenges in the AI chip market as other technology powerhouses such as Google and Facebook's corporate parent Meta Platforms try to develop their own processors. Nvidia's potential growth is being held back by security and trade barriers imposed by the U.S. that have impeded the company's ability to sell its advanced chips in China. Huang envisions Nvidia maintaining its instrumental role in AI by continuing to feed the feverish demand for chips that power chatbots like OpenAI's ChatGPT and Google's Gemini and expanding its reach into the emerging market for inference processors. Once an AI tool is trained, inference chips enable the technology to take what it has learned and produce responses -- whether it be writing a document or creating an image -- more efficiently than the processors that were used while the large language models were being built. "The inference inflection has arrived," Huang said. To help navigate its transition into the inference field, Nvidia struck a multi-billion dollar licensing deal with market specialist Groq that included the hiring of that startup's top engineers. "Nvidia isn't going to cede any market share to Google or Meta," said Ives, who believes Nvidia's market value will eclipse $6 trillion during the next year or so.

[19]

AI Will Boost Jobs With Infrastructure Buildout: Huang

Nvidia founder Jensen Huang says AI will create countless jobs as buildout for the tech has only just started and will require many more workers. Artificial intelligence won't be the large-scale job-taker as feared, as the tech needs workers to build and then maintain the trillions of dollars worth of infrastructure for it to run, says Nvidia founder Jensen Huang. Huang argued in a blog post on Tuesday that AI has become "essential infrastructure, like electricity and the internet," and the facilities that make the chips, build computers and eventually house AI are "becoming the largest infrastructure buildout in human history." "We have only just begun this buildout. We are a few hundred billion dollars into it. Trillions of dollars of infrastructure still need to be built," he added. "The labor required to support this buildout is enormous." Huang said AI data centers require roles such as electricians, plumbers, steelworkers, network technicians and operators, which he added are "skilled, well-paid jobs, and they are in short supply." Nvidia (NVDA) is one of the biggest winners of the current AI boom, as it is the most dominant AI hardware supplier, with its chips in high demand. Its share price has risen by over 1,300% since 2023, shortly after OpenAI released the first public version of ChatGPT that kicked off an AI race. Huang described AI infrastructure as a "five-layer cake" involving energy, AI chips, infrastructure, AI models and then applications. He said the infrastructure backing AI "had to be reinvented" from the ground up due to the way it works, as software typically retrieves stored instructions, while AI is "reasoning and generating intelligence on demand." "Much of the infrastructure does not yet exist. Much of the workforce has not yet been trained. Much of the opportunity has not yet been realized," Huang said. "This is why the buildout is so large. 
This is why it touches so many industries at once. And this is why it will not be confined to a single country or a single sector," he added. "Every company will use AI. Every nation will build it." Huang's post comes as multiple companies across a broad range of industries have initiated large-scale layoffs, pointing to efficiencies gained through AI as the reason. Last month, Block, Inc. cut 40% of its staff, a decision co-founder Jack Dorsey attributed to AI use at the payments company. Social media platform Pinterest and the chemical company Dow also cited AI as the reason to cut a total of more than 5,000 employees between them earlier this year. Goldman Sachs analysts said last month that AI-driven job losses have been "visible but moderate," with the technology helping to raise the US unemployment rate slightly this year, from its current 4.4% to 4.5% by year-end.

[20]

Jensen Huang Sees a $1 Trillion AI Trade Next Year

Jensen Huang just buried the debate over whether AI spending has peaked. Speaking Monday at GTC 2026 in California, he told a packed audience that Nvidia has visibility into at least $1 trillion in demand through next year. The number he shared last year just for Blackwell and Vera Rubin was $500 billion. If the largest company in the world is seeing demand double effectively overnight, it's hard to bet against the broader trend. Huang went even further. "In fact, we are going to be short," Huang said. "I am certain computing demand will be much higher than that."

[21]

Nvidia Says AI Chip Orders Could Hit At Least $1 Trillion by 2027. Here's Why Demand Keeps Climbing.

CEO Jensen Huang made the projection at Nvidia's annual developer conference, doubling the company's previous $500 billion estimate. Jensen Huang is putting his chips on the table. The Nvidia CEO told a packed house at his company's developer conference Monday that AI chip orders could hit at least $1 trillion through 2027. That's double what the company projected just last year. The surge is being driven by a shift in how AI is being used. The industry is moving from training AI models to AI actually doing productive work. That transition is exploding demand for chips, according to CNBC. Agentic AI, which spawns other agents to autonomously complete tasks, is particularly chip-hungry. As companies deploy these systems, the number of tokens being generated has exploded. Huang said demand is booming from startups and big companies alike, with both groups eager to expand capacity.

[22]

Nvidia sales opportunity for Blackwell, Rubin chips more than $1 trillion by 2027

Blackwell and Rubin are Nvidia's flagship AI chips and capable of building the large language models that underpin chatbots such as OpenAI's ChatGPT. Nvidia CEO Jensen Huang said on Tuesday at a press conference that the revenue opportunity for the company's Blackwell and Rubin artificial intelligence chips is "likely to be larger than $1 trillion" by the end of 2027. Blackwell chips are available for purchase, while Rubin chips are Nvidia's next-generation processors and are in full production. The $1 trillion estimate Huang issued does not include a swath of the company's other products like its central processing units (CPUs), its range of networking chips or the forthcoming chips based on the technology it licensed from Groq. The estimate also does not include a Rubin variant known as Rubin Ultra. In December, Nvidia signed a deal to license Groq's tech and hired many of the startup's executives.

[23]

Nvidia Highlights $1 Trillion Opportunity: Jensen Huang Puts 13-Digit Figure In Reach - NVIDIA (NASDAQ:NVDA)

Nvidia's $1 Trillion Opportunity

Huang has been bullish on opportunities such as data centers and AI chips in recent commentary from quarterly results and conferences. None of those comments may compare to Huang saying the $1 trillion figure at Monday's event. Huang said he expects Nvidia's revenue to double to $1 trillion through 2027, which comes after the company previously guided for visibility of $500 billion for its AI chips. The new figure suggests demand for Blackwell and Vera Rubin chips could be even higher than the most optimistic bulls had been forecasting. The comments come as competition in the AI chip space could be rising and as the technology sector has faced pressure over whether the continued high spending by companies on AI and data center platforms and tools will continue. Huang's call for $1 trillion may provide new life into Nvidia stock, which is trading down year-to-date in 2026 as investors and analysts digest what exactly he meant and how revenue could play out in the future. Nvidia has beaten analyst estimates for revenue in 14 straight quarters. Guidance from the company calls for first-quarter revenue to be in a range of $76.44 billion to $79.56 billion, versus a previous Street estimate of $71.96 billion. Fiscal 2026 revenue for the company was $215.9 billion, up 65% year-over-year. Visibility of $1 trillion for AI chips could highlight the strong growth the company is likely to report in the coming fiscal years.

Nvidia's Other GTC Announcements

Huang's keynote wasn't the only headline for Nvidia on Monday. The company also announced an expanded partnership with Hyundai and Kia for autonomous driving built on Nvidia's DRIVE Hyperion Platform. The company also highlighted several new products. GTC 2026 continues through March 19, which could provide more opportunities for Nvidia to highlight upcoming products and partnerships. Nvidia shares could be volatile through the end of the trading week. 
Nvidia Stock Climbs

Nvidia stock closed Monday up 1.63% to $183.19 versus a 52-week trading range of $86.62 to $212.19. Shares hit an intraday high of $188.88 after Huang's $1 trillion comments. Nvidia shares are down 1.78% year-to-date in 2026, but remain up over 50% in the last year.
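The revenue figures quoted in this article can be cross-checked with simple arithmetic. The sketch below is illustrative only: it takes the first-quarter guidance range ($76.44 billion to $79.56 billion) and the $215.9 billion full-year figure with its 65% growth rate as inputs, and derives the guidance midpoint, the plus-or-minus band around it, and the prior-year revenue those numbers imply. The function names are hypothetical helpers, not part of any library.

```python
# Sanity checks on the revenue figures quoted above (all values in billions of USD).

def guidance_midpoint(low: float, high: float) -> tuple[float, float]:
    """Return the midpoint of a guidance range and its +/- band as a percentage."""
    midpoint = (low + high) / 2
    band_pct = (high - low) / 2 / midpoint * 100
    return midpoint, band_pct


def implied_prior_revenue(current: float, growth_pct: float) -> float:
    """Prior-period revenue implied by a current figure and its growth rate."""
    return current / (1 + growth_pct / 100)


if __name__ == "__main__":
    mid, band = guidance_midpoint(76.44, 79.56)
    print(f"Guidance midpoint: ${mid:.2f}B, plus or minus {band:.1f}%")

    prior = implied_prior_revenue(215.9, 65)
    print(f"Implied prior-year revenue: ${prior:.1f}B")
```

The guidance range works out to $78 billion plus or minus 2%, and the 65% growth rate implies prior-year revenue of roughly $131 billion.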

[24]

Nvidia expects to make $1 trillion from AI chips through 2027

Nvidia Chief Executive Officer Jensen Huang, addressing crowds at the company's biggest annual event, unveiled a variety of new products while predicting that its flagship AI processors would help generate $1 trillion in sales through 2027. During a 2½ hour keynote address, Huang announced plans to push deeper into central processing units -- Intel's home turf -- and introduced semiconductors made with technology acquired from startup Groq. The company even said it was developing chips for data centers in outer space. At the heart of Huang's message: Demand for computing power continues to soar, and Nvidia is uniquely equipped to meet the challenge.

[25]

NVIDIA Sees Compute Revenue Exploding to $1 Trillion in Just Two Years, as AI Hits an 'Inflection Point' With Inference

NVIDIA's CEO, Jensen Huang, has talked about what we anticipate in terms of compute demand growing in the coming years, and the figure he projects is beyond shocking. The AI industry is currently at a defining point, as we are seeing a massive shift from training workloads to inference, which drives increased compute demand and aggressive revenue growth. Back at GTC 2025, NVIDIA talked about achieving $500 billion in revenue in three quarters, credited to the company's performance with Blackwell and Vera Rubin, but Team Green has now taken the next step: Jensen now projects over $1 trillion in revenue from 2025 to 2027. Jensen signals that NVIDIA now "runs AI," which is why his estimate is 'conservative.' NVIDIA's CEO argues that, with inference hype becoming mainstream, AI labs are now demanding compute capabilities that are "off-the-charts," and that, according to him, the compute requirements have grown by a whopping 1,000,000 times in just 2 years. Jensen also talks about how spot pricing for GPUs that are several years old, such as Ampere and Hopper, is rising, which is an indirect indication that there's a compute bottleneck within the AI industry, and that in the process of addressing it, NVIDIA will bring in revenue that makes it a business entity of its own kind. A huge chunk of demand NVIDIA sees is driven by cloud-native adoption, such as that from hyperscalers, but at the same time, the portion of sovereign AI investments, such as those from the Middle East and the EU, has grown dramatically in recent times. Jensen says that NVIDIA's partnerships with the likes of Anthropic, OpenAI, and other AI labs are the main drivers of the infrastructure demand. At the same time, the hardware advancements NVIDIA has achieved in optimizing token/$ figures make it essentially unavoidable for the company to deploy Team Green's hardware. 
Several experts had doubted NVIDIA's revenue projections when they were unveiled at GTC 2025, and Jensen has decided to basically double the figure this time, showing that his company believes compute demand will remain aggressive in the coming years.

[26]

Nvidia eyes $1 trillion in revenue through 2027: What's driving this growth? - The Economic Times

Nvidia CEO Jensen Huang doubled down on the company's explosive growth at the company's annual GPU Technology Conference (GTC) 2026, projecting at least $1 trillion in cumulative revenue through 2027 from AI chips and infrastructure, double his earlier estimate of $500 billion. This signals Huang's confidence that Nvidia will remain the biggest company in the market for AI chips even as competition grows. Investors have also doubted whether its strategy of plowing back its profits into the AI ecosystem is paying off. At a hockey arena with a capacity of more than 18,000, Huang laid out how the top AI chipmaker plans to adapt to a rapidly changing AI landscape at the chipmaker's four-day developer conference. Huang did not offer more details on his $1 trillion forecast. What will drive the revenue projection? Surging compute demand: Huang said in his keynote that global computing needs have risen "a million times in two years", fuelling demand for Nvidia's Blackwell and Rubin chips plus networking gear. He noted that every company, from big names such as OpenAI and Anthropic to young startups, feel like they could grow revenue and their AI "if they could just get more capacity." Shift to inference "The inference inflection has arrived," Huang said. The company said a key driver is inference, where trained models run real-time queries and tasks at scale, shifting from training dominance. Huang said this is a multi-trillion-dollar opportunity, with Nvidia's GPUs offering superior performance and cost efficiency. To help navigate the transition into the inference field, Nvidia has struck a multi-billion-dollar licensing deal with market specialist Groq, including hiring that startup's top engineers. Sector-wide expansion Huang also demonstrated Nvidia's latest innovations when it came to GPUs and platforms for building AI into nearly everything, from robots and apps to data centres orbiting the planet. 
Nvidia is seemingly aiming its AI expertise at all sectors, from automobiles to health care. "Every single enterprise company, every single software company in the world needs an AI agent strategy," Huang said. "This is going to become a multi-trillion-dollar industry, offering not just tools for people to use, but agents that are specialized," he added.

Deals struck: Sovereign AI tie-ups were announced, including with Palantir for secure, localised deployment in regulated sectors and with Indian AI startup Sarvam AI as a key partner for localised model development. Nebius Group has also committed to multi-billion-dollar, gigawatt-scale AI factories. Amazon Web Services (AWS) said it will integrate Blackwell and Rubin GPUs, RTX PRO workstations, and Groq LPUs across its compute lineup. Microsoft Azure said it will add Nvidia's Cosmos world models and its open Alpamayo models for robotics and physical AI, accessible via GitHub and Foundry. Automotive and robotics deals included BYD, Hyundai, Nissan, and Geely. Uber also announced a partnership with Nvidia for ride-hailing AI integration. Huang said Samsung was producing Nvidia's new AI chips, which sent the South Korean company's shares up.

[27]

Nvidia's Jensen Huang Says AI Compute Could Near $1 Trillion by 2027 | PYMNTS.com

Less than 24 hours later, a moment at Nvidia's annual GTC conference made that joke feel a little less far-fetched. At the end of his keynote Monday, Nvidia CEO Jensen Huang played an animated video showing several robots, along with a digital version of himself, sitting around a campfire singing a country-style song about the conference. The robots joked about tokens, open-source software and artificial intelligence development, closing the event with a playful reminder of how quickly AI is moving from research labs into everyday life. The moment captured the unusual range of this year's GTC keynote. The presentation spanned everything from Disney's Olaf appearing on stage to discussions about computing systems designed for space exploration, and major announcements about the infrastructure needed to power the next wave of AI. But behind the playful demonstrations was a much bigger message about where the AI industry is heading. Huang said the industry is entering what he described as an "inference inflection point," a phase where the demand for computing power is shifting rapidly from training AI models to running them continuously in real-world applications. The scale of that shift, Huang argued, could drive one of the largest technology infrastructure expansions in history. "AI computing could approach a trillion dollars of data center infrastructure between now and 2027," Huang said. Much of that demand is tied to inference, the process where a trained AI model generates responses for users. Every time a chatbot answers a question, an AI assistant writes an email, or a coding tool produces software, the system generates pieces of output known as tokens. Tokens are the basic units of AI-generated text or data. 
A short sentence might contain dozens of tokens, while a longer response could contain hundreds. Because inference happens continuously as users interact with AI systems, the computing demand can far exceed the resources needed to train the models in the first place. While training large models requires massive bursts of computing power, inference workloads run constantly as millions of users interact with AI services. That shift means the long-term economics of AI are increasingly tied to how efficiently companies can generate tokens at scale. "Inference is your new workload, tokens are your new commodity," Huang said during the keynote. "You want to make sure that the architecture is as optimized as you can in the future." To underscore the point, Huang at one point lifted a championship-style belt on stage labeled "InferenceX," referencing analysis from SemiAnalysis that ranked Nvidia's systems as leaders in inference performance. The visual framed Nvidia's position in the AI market as something akin to a "token king," highlighting the company's focus on delivering the lowest cost per token as AI systems generate ever-larger volumes of output. "The agentic AI inflection point has arrived," Huang said while introducing Nvidia's next-generation Vera Rubin platform. The rise of AI agents, software systems that can perform tasks on behalf of users, is expected to significantly increase the number of tokens generated across enterprise software, digital assistants and automated workflows. Huang described the emerging AI economy using the concept of "AI factories," specialized data centers designed to generate AI outputs at massive scale. "In the age of AI, intelligence tokens are the new currency, and AI factories are the infrastructure that generates them," he said. To support that shift, Nvidia unveiled its next-generation AI computing platform called Vera Rubin. 
According to Nvidia, the system is designed to deliver up to 10 times higher inference performance per watt while reducing the cost of generating tokens by roughly 90%. Taken together, Huang said the shift toward inference-driven workloads is transforming how the technology industry thinks about computing infrastructure. Instead of building data centers primarily for periodic model training, companies are now building massive systems designed to generate tokens continuously. That shift, Huang suggested, could redefine the economics of computing itself. "The future of computing will be built around AI factories," Huang said.

[28]

Jensen Huang's Big Bet on Agentic AI, Spins a $1 Trillion Yarn for 2027

The company launches NemoClaw, an enterprise-grade AI agent platform that it created with help from OpenClaw maker Steinberger. In recent times, tech leaders have aped each other in one aspect: they've reeled off big numbers to foist their dreams on us. Having claimed Nvidia chips to be the "Swiss Army Knife of Artificial Intelligence" for three years, CEO Jensen Huang now says he is changing the AI paradigm from training to inference, and that this shift will see the company net $1 trillion in revenues through 2027. Of course, the markets knew of Nvidia's decision to launch a new chip incorporating expertise from a start-up called Groq, to which Huang paid $20 billion months ago as a license fee in a non-exclusive deal. So, when Huang referred to this agenda at Nvidia's GTC developer conference in San Jose last night, the audience was hardly surprised. But what's a Huang keynote without some shock value? The Nvidia boss told delegates that his company's order books for the newest AI chips could yield $1 trillion through 2027. Now, this is where the math gets a bit confusing. At one of last year's GTC conventions, Huang said Nvidia expected $500 billion in revenue from selling its latest AI chips, Blackwell and Rubin, between 2025 and 2026. That was quite a big number for a company that had reported $130 billion in revenues for the year ending January 2025. Analysts were left wondering whether the $500 billion was for a year or over a longer period. In fact, Morgan Stanley wrote a detailed analysis on "parsing the $500 billion" to confirm that the figure was for 2025 and 2026. If this is true, is Huang saying that Nvidia will make an additional $500 billion in just one year, that is, in 2027 alone? Sounds far-fetched? Of course, there is no reason for Huang to fear, as he can pick another number from his hat at a convenient time for the markets to mull over. 
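The puzzle in the paragraph above comes down to a single subtraction. The following is a minimal sketch of that arithmetic, not Nvidia's own accounting: it assumes both projections are cumulative, start in 2025, and cover the same products, which is this article's reading rather than anything Huang confirmed. The variable and function names are illustrative.

```python
# All figures are in billions of US dollars and come from the article's own account.
PRIOR_FORECAST_2025_2026 = 500    # earlier projection, read as covering 2025 and 2026
NEW_FORECAST_THROUGH_2027 = 1000  # "at least $1 trillion" through 2027
REPORTED_REVENUE_FY2025 = 130     # revenue for the year ending January 2025


def implied_increment(prior_total: float, new_total: float) -> float:
    """Revenue the added period must supply if both totals are cumulative
    and start from the same point (an assumption, not a confirmed fact)."""
    return new_total - prior_total


implied_2027 = implied_increment(PRIOR_FORECAST_2025_2026, NEW_FORECAST_THROUGH_2027)
multiple_of_fy2025 = implied_2027 / REPORTED_REVENUE_FY2025

print(f"Implied 2027 contribution: at least ${implied_2027} billion")
print(f"Roughly {multiple_of_fy2025:.1f}x the revenue reported for the year ending January 2025")
```

Because the new figure was stated as a floor ("at least"), the result is a lower bound under these assumptions. If the two projections cover different time windows or product lines, the subtraction does not apply.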
The real story from this year's GTC, though, is the new inference chip, one that has been making the rounds since last December. The new chip pairs Nvidia's expertise in handling AI requests with Groq's components that can speed up answers. From Huang's point of view, things are going according to plan. "I am certain computing demand will be much higher than what it is. Finally AI is able to do productive work, and therefore the inflection point of inference has arrived," he says. As an example, he points to all Nvidia software engineers using AI coding assistants such as Claude Code and its competitor Codex. When Nvidia combines its new Vera Rubin chips with storage, inference accelerator and Ethernet racks to form what Huang likes to describe as the "AI supercomputer", it will deliver a generational leap in agentic AI solutions. Seems plausible. He goes on to note that agentic AI has witnessed an upswing in recent months. Huang also praises Peter Steinberger, the engineer who created the open-source AI agent OpenClaw, describing the product as "profound" and calling it the most popular open-source project "in the history of humanity," topping Linux "in just a few weeks." The company also unveiled its own NemoClaw, which uses an agent toolkit to interact with OpenClaw and allows users to operate their agents with added security controls. Huang believes that every company needs an OpenClaw strategy. "For the CEOs, the question is, what's your OpenClaw strategy? We need it. We all have a Linux strategy. We all needed to have an HTTP HTML strategy, which started the internet. We all needed to have a Kubernetes strategy, which made it possible for mobile cloud to happen. Every company today needs to have an agentic systems strategy." Huang revealed that Nvidia worked with Steinberger to develop NemoClaw. 
Currently in an early-stage alpha release, NemoClaw will let users tap into any coding agent or open-source AI model to build and deploy AI agents. The platform is hardware-agnostic and allows users to access cloud-based models on their local devices. "OpenClaw brings people closer to AI and helps create a world where everyone has their own agents," says Peter Steinberger, creator of OpenClaw. "With NVIDIA and the broader ecosystem, we're building the claws and guardrails that let anyone create powerful, secure AI assistants."

[29]

Nvidia chief expects revenue of $1 trillion through 2027

Nvidia CEO Jensen Huang projected at least a trillion dollars in revenue through 2027, a significant increase from last year's forecast. This surge is driven by immense demand for the company's high-performance GPUs, which are crucial for AI services. Huang emphasized the "million-fold" increase in computing demand and Nvidia's expansion into "agentic" AI across various sectors. Nvidia chief Jensen Huang on Monday said he expects the artificial intelligence chip powerhouse to bring in at least a trillion dollars in revenue through next year. Huang made the ramped-up revenue forecast while outlining Nvidia's latest innovations for a packed audience at the opening of its annual developers conference in Silicon Valley. "I see, through 2027, at least a trillion dollars (in revenue)," Huang said. "I am certain that computing demand will be higher than that." A year earlier, at the same event, Huang had projected half that much revenue. The revenue is expected to be driven by demand for its premium graphics processing units (GPUs), which Huang touted as delivering high performance while reining in the cost of delivering AI services. Huang contended that demand for computing power has increased "a million-fold" in just two years and shows no sign of abating. He went on to show Nvidia's latest innovations in GPUs and platforms for building AI into nearly everything, from robots and apps to data centers orbiting the planet. Nvidia is tailoring its technology for "agentic" AI and training models, as well as inferencing -- in which AI makes deductions or generates content, demonstrations showed. The entire tech world -- from big names like OpenAI and Anthropic to young startups -- feels like they could grow revenue and their AI "if they could just get more capacity," Huang told the audience. Nvidia is aiming its AI expertise at seemingly all sectors, from automobiles to health care. 
"Every single enterprise company, every single software company in the world needs an AI agent strategy," Huang said. "This is going to become a multi-trillion-dollar industry, offering not just tools for people to use, but agents that are specialized," he added.

[30]

Nvidia bets on AI inference as chip revenue opportunity hits US$1 trillion