Meta strikes up to $100 billion AI chips deal with AMD, could acquire 10% stake in chipmaker

49 Sources

[1]

Meta could end up owning 10% of AMD in new chip deal

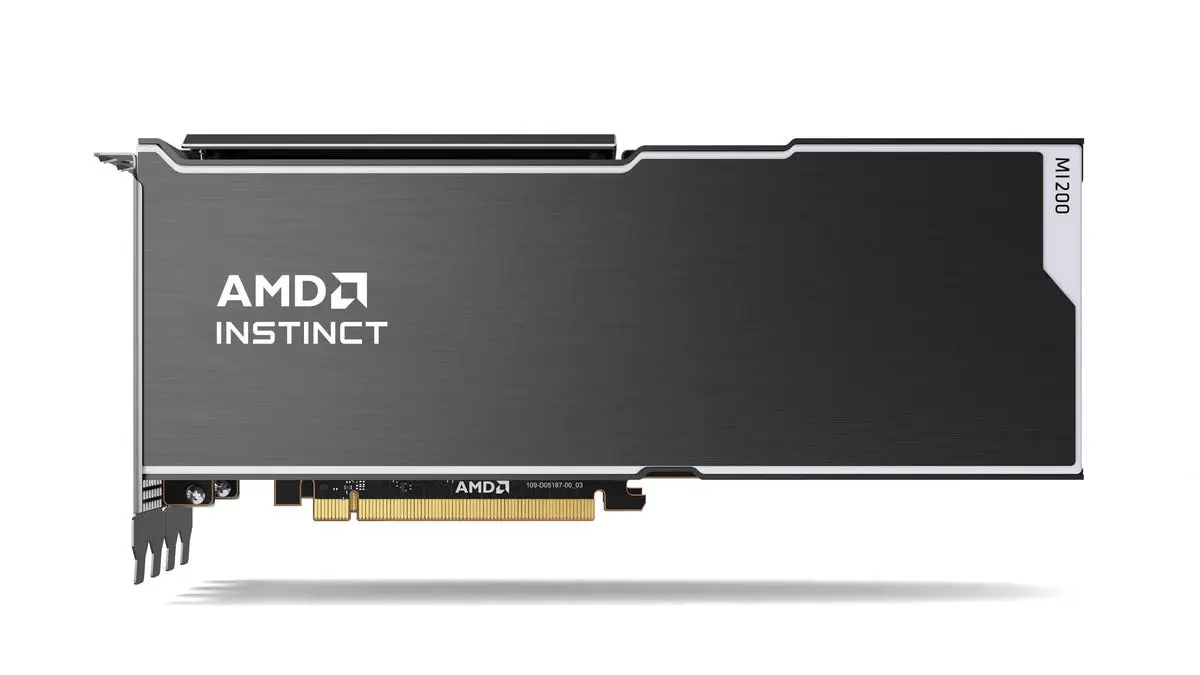

Meta has struck a multi-billion dollar chip deal with AMD that could lead to the Facebook owner taking a 10 percent stake in the group, sending shares in the US chipmaker surging on Tuesday. The social media giant said it would acquire customized chips with a total capacity of 6 gigawatts from AMD as it races to develop and deploy its AI models. AMD's chief executive Lisa Su said that "each gigawatt of compute is worth double-digit billions" under the deal. AMD also issued Meta with a performance-based warrant, giving it the option to acquire up to 160 million AMD shares in tranches at an exercise price of $0.01, as the Facebook owner buys successive orders of processors. The shares-for-chips arrangement represents the latest "circular" transaction in the industry and mirrors a deal AMD struck with OpenAI in October, in which the ChatGPT maker was offered a 10 percent stake in the chip group over time. Shares in AMD, which has a market capitalization of $320 billion, surged 14 percent in pre-market trading on Tuesday. The transaction is also the latest sign that Big Tech customers are looking to diversify supply away from market leader Nvidia, which last week announced its own multiyear deal with Meta to supply it with "millions" of its chips over the coming years. Meta will receive its first tranche of AMD shares in the second half of this year when the first gigawatt of chips is shipped, Su said. The terms of the warrant are also tied to certain share price thresholds, escalating to $600 for the final tranche. The warrant expires in February 2031. "In some sense, Meta is taking a big bet on AMD, and we are also giving Meta a chance to participate if AMD shareholders do well," Su said. "From a financial standpoint, each gigawatt of compute is worth double-digit billions." Su said the warrant structure would help "make sure that we are always a clear seat at the table when [Meta] are thinking about what they need next." 
Meta's chief executive Mark Zuckerberg said he expected AMD to be "an important partner for many years to come." Meta has said that it will almost double its AI infrastructure spending this year to as much as $135 billion, as US tech giants rush to build the data centers to train and run AI software. It is already one of AMD's biggest AI chip customers. "We don't believe that a single silicon solution will work for all of our workloads," said Santosh Janardhan, Meta's head of infrastructure. "There's a place for Nvidia, there's a place for AMD and... there's a place for our own custom silicon as well. We need all three." Under the deal, AMD will build a custom version of its MI450 AI chips for Meta. They will be used primarily for "inference" workloads, the process of running models after they have been trained. The chips need 6 gigawatts of power -- equivalent to the amount required by 5 million US households for a year. Increasingly creative funding arrangements to support massive AI infrastructure build-outs have emerged in recent years, leading to warnings about circular financing. AMD has, for example, helped data center builder Crusoe secure a $300 million loan from Goldman Sachs by offering a backstop guaranteeing the use of its chips if Crusoe is unable to find customers after installing them in an Ohio facility. Tech giants such as Meta, historically flush with cash, are meanwhile facing the prospect of tapping bond and equity markets or stemming capital returns to shareholders to help fund their unprecedented infrastructure plans. The Facebook and Instagram parent raised $30 billion in October, marking its biggest bond sale to date.

[2]

Meta strikes up to $100B AMD chip deal as it chases 'personal superintelligence'

Meta plans to purchase potentially up to $100 billion worth of AMD chips, enough to drive roughly six gigawatts of data center power demand, the companies announced Tuesday. As part of the multiyear agreement, AMD has issued Meta a performance-based warrant for up to 160 million shares of AMD common stock -- or about 10% of the company -- for $0.01 each, structured to vest alongside certain milestones. The full stock award is conditional on AMD's share price, which would need to hit $600 for Meta to receive its final tranche, per The Wall Street Journal. AMD's stock closed at $196.60 on Monday. Under the agreement, Meta will purchase AMD's MI450 series of GPUs and its latest generation of CPUs. CPUs are increasingly becoming a core pillar of the AI inference compute stack because they're efficient, easier to scale, and don't tie companies solely to Nvidia. AMD has been slowly gaining ground as AI firms look to reduce their reliance on Nvidia, which has been the longstanding leader in AI chips and has charged a premium for the title. Last October, AMD and OpenAI struck a similar deal trading equity for an agreement to buy chips. Meta CEO Mark Zuckerberg said the firm's partnership with AMD is "an important step" as it diversifies its compute and works towards "personal superintelligence." Zuckerberg has defined personal superintelligence as AI systems designed to deeply understand and empower individuals in their everyday lives. Meta has pledged to invest at least $600 billion in U.S. data centers and AI infrastructure over the next several years, including a projected capital expenditure spend of $135 billion in 2026. Meta recently unveiled plans for a $10 billion gas-powered data center campus in Indiana designed for 1 gigawatt of compute capacity. The AMD partnership comes a couple of weeks after Meta struck a multiyear deal to expand its data centers with millions of Nvidia's latest CPUs and GPUs.
The Facebook-maker is also working on its own in-house chips but has reportedly hit delays.

[3]

Nvidia's Deal With Meta Signals a New Era in Computing Power

Ask anyone what Nvidia makes, and they're likely to first say "GPUs." For decades, the chipmaker has been defined by advanced parallel computing, and the emergence of generative AI and the resulting surge in demand for GPUs has been a boon for the company. But Nvidia's recent moves signal that it's looking to lock in more customers at the less compute-intensive end of the AI market -- customers who don't necessarily need the beefiest, most powerful GPUs to train AI models, but instead are looking for the most efficient ways to run agentic AI software. Nvidia recently spent billions to license technology from a chip startup focused on low-latency AI computing, and also started selling standalone CPUs as part of its latest superchip system. And yesterday, Nvidia and Meta announced that the social media giant had agreed to buy billions of dollars worth of Nvidia chips to provide computing power for the social media giant's massive infrastructure projects -- with Nvidia's CPUs as part of the deal. The multi-year deal is an expansion of a cozy ongoing partnership between the two companies. Meta previously estimated that by the end of 2024, it would have purchased 350,000 H100 chips from Nvidia, and that by the end of 2025 the company would have access to 1.3 million GPUs in total (though it wasn't clear whether those would all be Nvidia chips). As part of the latest announcement, Nvidia said that Meta would "build hyperscale data centers optimized for both training and inference in support of the company's long-term AI infrastructure roadmap." This includes a "large-scale deployment" of Nvidia's CPUs and "millions of Nvidia Blackwell and Rubin GPUs." Notably, Meta is the first tech giant to announce it was making a large-scale purchase of Nvidia's Grace CPU as a standalone chip, something Nvidia said would be an option when it revealed the full specs of its new Vera Rubin superchip in January. 
Nvidia has also been emphasizing that it offers technology that connects various chips, as part of its "soup-to-nuts approach" to compute power, as one analyst puts it. Ben Bajarin, CEO and principal analyst at the tech market research firm Creative Strategies, says the move signaled that Nvidia recognizes that a growing range of AI software now needs to run on CPUs, much in the same way that conventional cloud applications do. "The reason why the industry is so bullish on CPUs within data centers right now is agentic AI, which puts new demands on general-purpose CPU architectures," he says. A recent report from the chip newsletter Semianalysis underscored this point. Analysts noted that CPU usage is accelerating to support AI training and inference, citing one of Microsoft's data centers for OpenAI as an example, where "tens of thousands of CPUs are now needed to process and manage the petabytes of data generated by the GPUs, a use case that wouldn't have otherwise been required without AI." Bajarin notes, though, that CPUs are still just one component of the most advanced AI hardware systems. The number of GPUs Meta is purchasing from Nvidia still outnumbers the CPUs. "If you're one of the hyperscalers, you're not going to be running all of your inference computing on CPUs," Bajarin says. "You just need whatever software you're running to be fast enough on the CPU to interact with the GPU architecture that's actually the driving force of that computing. Otherwise, the CPU becomes a bottleneck." Meta declined to comment on its expanded deal with Nvidia. During a recent earnings call, the social media giant said that it planned to dramatically increase its spending on AI infrastructure this year to between $115 billion and $135 billion, up from $72.2 billion last year.

[4]

AMD and Meta strike $100 billion AI deal that includes 10% stock deal -- 6 gigawatt agreement includes up to 160 million AMD shares

AMD's share price has to hit $600 for the stock award to be fully realized

AMD and Meta have just announced another colossal AI chip deal, believed to be worth over $100 billion. The new partnership will see AMD provide up to 6 gigawatts of AMD Instinct computing power to power Meta's AI ambitions. The deal includes a colossal performance-based share incentive that could see Meta awarded with up to 160 million AMD shares, roughly 10% of the company's total stock. In a press release, AMD confirmed Meta's partnership with a goal "to rapidly scale AI infrastructure and accelerate the development and deployment of cutting-edge AI models." To that end, AMD will provide Meta with AMD Helios rack-scale architecture, with the first gigawatt deployment expected to begin in the second half of 2026. The solution will be powered by custom AMD Instinct GPUs built on the company's MI450 architecture. The deployment will also use AMD's EPYC CPUs (Venice), and its ROCm software. AMD chair and CEO Lisa Su confirmed the partnership with Meta was a "multi-year, multi-generation collaboration," and Meta CEO Mark Zuckerberg also stated the partnership was long-term. Hinting at the company's AI ambitions, Meta's founder noted plans to deliver "personal superintelligence." AMD says that Meta will be a lead customer for its sixth-generation AMD EPYC CPUs, as well as its Verano next-generation EPYC processors. Crucially, the deal includes a generous stock incentive. AMD says it has issued Meta with "a performance-based warrant" of up to 160 million shares of AMD common stock, which is set to vest "as specific milestones associated with Instinct GPU shipments are achieved." The first such tranche of shares will vest with the first gigawatt shipments, with additional tranches scaling as Meta buys up more compute capacity. Essentially, AMD is rewarding Meta with shares in its company in exchange for buying its GPUs.
The deal is identical in scope to the OpenAI and AMD partnership announced in October, with the same 6 gigawatt chip offering and 10% shares of AMD in return. According to the Wall Street Journal, the deal is worth more than $100 billion, with each gigawatt of compute alone worth tens of billions in revenue for AMD. Regarding the stock deal, AMD has reportedly given Meta warrants to buy up to 160 million shares at $0.01 each. To reach the full stock award, AMD's share price needs to hit $600. It is trading just below $200 currently. The news follows an announcement last week that Meta will also deploy standalone Nvidia Grace CPUs in its production data centers, a move it says will deliver a sizeable leap in performance-per-watt for its facilities.

[5]

Meta signs 6 GW chips-for-stock deal with AMD

The House of Zen signed a nearly identical deal with OpenAI last fall

AMD just signed a mega chip deal with Meta that appears almost identical to the one it signed with OpenAI last fall. And just like all cross-industry agreements between AI and chip makers of late, this one comes with some circular financing, too. Meta this morning announced it had agreed to purchase 6 gigawatts worth of custom Instinct GPUs from AMD. The first 1 GW tranche of the chips will go to Meta in the second half of this year, and will consist of custom GPUs based on AMD's MI450 architecture. An AMD spokesperson told us that the initial set of chips being shipped to Meta marks the first deployment of custom AMD AI GPUs. The deal isn't ending with custom GPUs, though, and will also see Meta become the "lead customer" for the company's sixth generation EPYC CPUs, the Venice and Verano chips specifically designed for the types of AI workloads Meta wants to put them on. Meta said that the deal is part of its Meta Compute initiative to speed up the development of its planned AI datacenter fleet, and that AMD is one of several hardware partners it's working with to develop a "portfolio approach." As is often the case with these sorts of deals, there's a lot of financial back-scratching going on. AMD has issued Meta a warrant to buy up to 160 million shares of its common stock - about 10% of the total outstanding shares, which sit at 1.63 billion - in various tranches at a steeply discounted price of $0.01 per share. These shares vest only as Meta buys more computing capacity, with the first one coming available after Meta buys 1 GW of chips, which is expected in the second half of this year. The final tranche vests only if AMD's stock price hits $600. AMD shares jumped on news of the Meta deal, but they're still only valued at $211 as of writing.
We asked AMD about the circular nature of the deal, and a spokesperson explained that it's all meant to incentivize a close partnership while imposing minimal risk on AMD shareholders. "This structure ensures that Meta has the incentive to collaborate with us even more deeply than our previous work," AMD explained. "The warrants are completely performance-based, AMD must do well, and our shareholders must do well in order for these warrants to vest including our stock price being at $600/share at the last vest." The deal is practically identical to the one AMD offered OpenAI last October. That deal, which was also for 6 GW worth of GPUs, similarly included a warrant for up to 160 million AMD common stock shares structured to pay out once certain targets were met. AMD's deals with Meta and OpenAI also came shortly after the pair struck large-scale chip deals with Nvidia, too. "The two companies (OpenAI and Meta) with the most ambitious AI infrastructure plans have committed to 12 GW of AMD GPU," AMD told us. "That puts AMD in a position to gain significant market share." Alternatively, it puts AMD in a precarious position should the AI bubble eventually pop. Several valuations have been assigned to the deal, but both Meta and AMD declined to put a price tag on the transaction when asked. Nonetheless, "we expect to generate double-digit billions of revenue per gigawatt over the course of the 6 GW agreement," the AMD spox told us. As long as the AI-go-round keeps going round and round.
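
The stake and warrant figures quoted in this report can be checked with simple arithmetic. The sketch below is illustrative only. The share count, shares outstanding, exercise price, $600 threshold and $211 price are the figures reported above; the function names, the assumption that exercised warrants would be settled with newly issued shares, and the scenario in which the whole warrant is exercised at a single price are hypothetical simplifications. The real warrant vests in tranches under conditions that were not fully disclosed.

```python
# Figures as reported in the article; everything else is an illustrative assumption.
WARRANT_SHARES = 160_000_000        # maximum shares under the warrant
SHARES_OUTSTANDING = 1_630_000_000  # AMD shares outstanding, as reported
EXERCISE_PRICE = 0.01               # dollars per share
FINAL_TRANCHE_PRICE = 600.00        # share price needed for the last tranche
PRICE_AT_WRITING = 211.00           # share price quoted in the article


def stake_of_current_shares(warrant_shares: float, outstanding: float) -> float:
    """Warrant shares as a fraction of today's share count (the 'about 10%' figure)."""
    return warrant_shares / outstanding


def stake_after_new_issuance(warrant_shares: float, outstanding: float) -> float:
    """Stake if the warrant is settled with newly issued shares (an assumption)."""
    return warrant_shares / (outstanding + warrant_shares)


def intrinsic_value(warrant_shares: float, share_price: float, exercise_price: float) -> float:
    """Hypothetical value if every share were exercised at one market price."""
    return warrant_shares * max(share_price - exercise_price, 0.0)


if __name__ == "__main__":
    print(f"Stake vs. current shares: {stake_of_current_shares(WARRANT_SHARES, SHARES_OUTSTANDING):.2%}")
    print(f"Stake after new issuance: {stake_after_new_issuance(WARRANT_SHARES, SHARES_OUTSTANDING):.2%}")
    for price in (PRICE_AT_WRITING, FINAL_TRANCHE_PRICE):
        value = intrinsic_value(WARRANT_SHARES, price, EXERCISE_PRICE)
        print(f"Notional value at ${price:,.2f}: ${value / 1e9:,.1f}B")
```

Under these assumptions the warrant comes to a little under 10 percent of the current share count, and slightly less once the new shares are counted in the total.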

[6]

Meta to Spend Tens of Billions of Dollars on AMD Gear, Buy Stock

Meta Platforms Inc. will deploy 6 gigawatts' worth of data center gear based on processors from Advanced Micro Devices Inc., a blockbuster deal that marks a win for the chipmaker's attempts to catch up with Nvidia Corp. Meta will buy AMD chips and computers designed to run artificial intelligence models over a five-year stretch, beginning in the second half of 2026. The series of transactions will be worth "double-digit billions" of dollars per gigawatt, according to AMD Chief Executive Officer Lisa Su. As part of the arrangement, Meta will receive warrants to buy 160 million AMD shares in stages, the two companies said. The shares will vest when the project and AMD's stock price reach certain milestones, turning Meta into a major holder. The agreement is the latest step in a colossal spending spree by Meta, the owner of Facebook and Instagram. CEO Mark Zuckerberg has made AI the company's top priority, pledging to devote hundreds of billions of dollars to "aggressively front-load" computing capacity. AMD's shares rose as much as 15% in early trading before markets opened in New York. Meta's stock was up 0.6%. Last month, the executive announced a new initiative called Meta Compute that's focused on building "tens of gigawatts this decade and hundreds of gigawatts or more over time" to secure a strategic advantage over competitors. One gigawatt represents the output of a nuclear reactor -- enough electricity to power roughly 700,000 homes. The announcement signals that AMD is keeping pace with larger rival Nvidia, which disclosed its own tie-up with Meta last week. And it shows that broader spending on AI equipment continues to accelerate, even as some investors express fears of an investment bubble. For Meta, the AMD deal will bring components that are customized to its needs. It also will have the ability to influence how those semiconductors are designed going forward. 
"Our ambitions are pretty high," said Santosh Janardhan, Meta's head of global infrastructure, who oversees the company's data centers and their technical architecture. Meta plans to forge ahead with its own in-house custom AI chip efforts and will continue to purchase from Nvidia, with the chips being used to support different workloads. "At the scale we're talking at, there's a place for all three," Janardhan said during an interview with reporters. Janardhan, who now reports directly to Zuckerberg, added that the company hasn't yet decided which of its data centers will use the new chips delivered through this expanded partnership with AMD. The processors are expected to help with the inference stage of AI -- the phase when trained models are put into use. AMD's Su said that Meta, which has already helped influence the design of AMD's chips, will get custom versions of its forthcoming accelerator, the MI450, and successor products. That ability to define more closely what it needs was part of the reason for committing to AMD, Janardhan said. "What we're looking to do is go big and accelerate," AMD's Su said. "We were on a very good path with Meta, but this actually takes our relationship to the next level." Meta is already AMD's second-largest customer, and will now be increasingly vital to the chipmaker's growth. AMD reported $34.6 billion in sales last year and is on course to boost revenue by 34% this year, according to Wall Street estimates. The addition of even $10 billion of extra sales would accelerate its efforts to gain ground on Nvidia. Even with the growth, AMD's investors have become more skeptical of its prospects in recent weeks. They're worried that AI highfliers won't be able to expand fast enough to justify their valuations. After AMD shares surged 77% in 2025, they are down 8.2% so far this year. The Meta tie-up assumes that AMD shares are just at the start of a longer rally. 
Some of Meta's warrants would only vest if the share price reaches $600. The stock closed Monday at $196.60.
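
For a sense of scale, the gap between Monday's close and the $600 threshold can be expressed as a required price multiple. The sketch below is a rough illustration: the two prices are the ones reported above, while the function names and the five-year horizon (borrowed from the reported five-year length of the purchase agreement) are assumptions for illustration, not disclosed terms of the warrant.

```python
CLOSE_PRICE = 196.60     # Monday's close, as reported
TARGET_PRICE = 600.00    # threshold for the final tranche, as reported
HORIZON_YEARS = 5        # assumption: matches the reported five-year deal length


def required_multiple(current: float, target: float) -> float:
    """How many times the current price the target price represents."""
    return target / current


def annualized_rate(multiple: float, years: float) -> float:
    """Constant yearly growth rate that compounds to the given multiple."""
    return multiple ** (1.0 / years) - 1.0


if __name__ == "__main__":
    multiple = required_multiple(CLOSE_PRICE, TARGET_PRICE)
    rate = annualized_rate(multiple, HORIZON_YEARS)
    print(f"Required multiple: {multiple:.2f}x")
    print(f"Implied annual growth over {HORIZON_YEARS} years: {rate:.1%}")
```

On these assumptions the stock would need to roughly triple, which works out to about a quarter of compound growth per year over five years.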

[7]

AMD clinches second mega chip supply deal, this time with Meta

SAN FRANCISCO, Feb 24 (Reuters) - Advanced Micro Devices (AMD.O) said on Tuesday it has agreed to sell up to $60 billion worth of artificial intelligence chips to Meta Platforms (META.O) over five years in a deal that allows the Facebook owner to purchase as much as 10% of the chip firm. AMD shares rose more than 11% in premarket trading after closing at $196.60 in the previous session. The company had signed a similar pact with OpenAI last year, which was hailed as a vote of confidence in its chips and software, significantly boosting its stock price. A recent slew of chip supply agreements underscores huge appetite for processors from the AI industry. Meta has separately struck a deal with AMD's larger rival Nvidia (NVDA.O) to buy millions of AI chips. The partnership underscores the deepening ties among some of the AI industry's top players amid rising concerns around circular deals. Meta and OpenAI are set to own a stake in one of their most significant suppliers at a time when rival chipmaker Nvidia is eyeing investments in some of its largest customers, including the ChatGPT parent. AMD will supply six gigawatts' worth of chips to Meta, starting with one gigawatt of the company's forthcoming MI450 flagship hardware in the second half of this year, AMD CEO Lisa Su told a news briefing. Investor worries about the AI market also extend to the long wait for significant payoffs from Big Tech's relentless spending to expand data center infrastructure. Capital expenditure from Alphabet (GOOGL.O), Microsoft (MSFT.O), Amazon.com (AMZN.O) and Meta is expected to total at least $630 billion this year, according to Reuters calculations, with most of the spending focused on data centers and AI chips. In addition to AMD's flagship graphics chips, Meta also plans to buy central processors, including a variant that will be customized for the social media platform's needs.
The custom CPU will be tuned to deliver powerful performance while keeping energy consumption as low as possible, Su said. The deal will include two generations of AMD's CPUs. "So no question Mark is very, very ambitious in what he wants to accomplish, and we want to use every aspect of our technology to really help Meta to accomplish that," Su said, referring to Meta CEO Mark Zuckerberg. Meta helped contribute to the MI450 design that is optimized for a computing process known as inference, which is when a chatbot such as OpenAI's ChatGPT responds to a user's queries. Industry analysts expect the market for inference hardware to dwarf the size of the market for the equipment needed to build the large models AI runs from. As part of the agreement, AMD will issue a warrant for 160 million shares with an exercise price of one cent. The warrant will vest over the course of the deal and will do so after AMD's stock price hits rising performance targets up to $600. In addition to the stock price targets, there are "technical and commercial considerations" for each tranche of the warrant that Meta needs to fulfill. "Meta is making a big bet on AMD," Su said. Meta plans to continue to buy chips from other vendors and develop its in-house processors at the same time, Santosh Janardhan, Meta's infrastructure head, said in a call with reporters. Nvidia shares edged lower while Broadcom (AVGO.O) dropped nearly 2%. Broadcom is a provider of custom chips and though it does not identify its hyperscaler customers, it is considered by analysts to be a key supplier to Meta, helping the company design and manufacture the processors, known as ASICs. Meta has also been in talks with Google about using the company's tensor processors for AI work, sources have said. The scale at which Meta is building data centers and infrastructure requires multiple chip vendors and approaches, Janardhan said.
"All of the chip makers end up having sort of a seat at the table," Janardhan said. Reporting by Stephen Nellis and Max A. Cherney in San Francisco; Editing by Edwina Gibbs

[8]

Meta will deploy standalone Nvidia Grace CPUs in production, with Vera to follow -- company sees perf-per-watt improvements of up to 2X in some CPU workloads

Beyond selling its Vera data center CPUs as part of Vera Rubin NVL72 rack-scale systems, Nvidia has expressed ambitions to become a standalone data center CPU vendor, and a new partnership with hyperscale giant Meta represents a big step forward for that plan. As part of a new multi-year strategic partnership announced today, Meta says it'll expand its use of Nvidia tech as it continues to build out hyperscale data centers optimized for its AI training and inference efforts. Those plans include "millions" of Blackwell and Rubin GPUs, part of a massive AI spending plan from Meta that could reach as much as $135 billion in total for 2026. As part of the expanded collaboration between the two companies, Meta will also use Nvidia's Arm-powered Grace server CPUs as standalone platforms in its production data centers. The companies say their collaboration is the first "large-scale Grace-only deployment," and it's meant to deliver a sizeable performance-per-watt improvement for the company's facilities. Nvidia data center honcho Ian Buck told The Register that Meta is seeing gains of up to 2x the performance per watt on certain workloads with the Grace platform, and that it's already test-driving Nvidia's next-gen Vera CPU with "very promising" results. The companies say that large-scale Vera-only deployments could begin as soon as 2027. For reference, the Grace CPU sports 72 Arm Neoverse V2 cores and supports up to 480 GB of LPDDR5X memory in its standalone C1 config. Nvidia also offers Grace as a "CPU Superchip" that joins two dies together over the NVLink-C2C interconnect, resulting in 144 cores with up to 960 GB of LPDDR5X and up to 1024 GB/s of aggregate memory bandwidth from certain memory capacities. Vera, in turn, has 88 custom Arm cores with up to 176 threads, support for up to 1.5 TB of LPDDR5X memory with up to 1.2 TB/s of memory bandwidth, and PCIe Gen 6 and Compute Express Link 3.1 connectivity.
Importantly, Vera is also the first Nvidia CPU to support a confidential or trusted computing environment throughout the entirety of its rack-scale systems. Beyond CPUs, Meta also says it'll deploy Nvidia Spectrum-X Ethernet switches throughout its data centers. As we learned at CES, Spectrum-X switches with co-packaged optics promise to increase performance-per-watt for scale-out applications by eliminating active cabling with optical transceivers that can, when combined with the power usage of the switch itself, account for up to 10% of each rack's power consumption. Power used for data movement is power that isn't used to feed GPUs, and with demands for the absolute maximum compute density and efficiency in every rack these days, a savings of that magnitude is a huge deal, so it's no surprise that Meta is hopping on board as it continues to expand its footprint. Beyond hardware, Nvidia will offer its considerable in-house expertise in designing AI models to Meta's engineers to help the company tune and boost the performance of its own core AI applications. All of this just goes to show that as the AI revolution continues, Nvidia's reach into tech far beyond gaming graphics cards is so extensive that people are likely to use software powered or shaped by its models and accelerators, whether they realize it or not, and that'll only grow more true by the day as Meta expands its use of Nvidia's platforms and tech.

[9]

Meta strikes AI chip deal with AMD days after committing to deploy millions of Nvidia GPUs

Meta said on Tuesday that the multiyear deal with AMD involves deploying up to 6 gigawatts of the company's graphics processing units for AI data centers and includes use of AI-optimized central processing units, or CPUs. Early shipments of MI450 GPUs in AMD's Helios rack-scale servers will begin later this year. AMD CEO Lisa Su said in a statement that her company is delivering "high-performance, energy-efficient infrastructure optimized for Meta's workloads, accelerating one of the industry's largest AI deployments and placing AMD at the center of the global AI buildout." In its earnings report last month, Meta committed to up to $135 billion in capital expenditures this year as it tries to keep pace with its megacap peers as well as OpenAI and Anthropic in the global AI race. Overall, Meta has plans for 30 data centers, including 26 in the U.S. Tuesday's announcement is a critical development for AMD, which is far behind Nvidia in the AI chip market. Nvidia is now the world's largest publicly traded company, with a $4.66 trillion valuation, and controls roughly 90% of the market, while AMD is valued at $320 billion. "Meta is in a unique position to control the full stack and they can use whoever's compute they want," said chip analyst Ben Bajarin of Creative Strategies. "It's just a punctuation point on the fact that we are compute constrained, and deals will be done across the board." Terms of the agreement weren't provided, but Bajarin, who was briefed on the deal, estimates that it's worth tens of billions of dollars over at least four years, because "six gigawatts would take quite some time to deploy," he said. Bajarin said a key piece of the agreement, and how it's different from Meta's pact with Nvidia, is that the first deployment involves customized GPUs. "We don't have any indication Nvidia is doing that," Bajarin said. "It's a good way for them to win some of these deals." 
AMD's Helios represents the first large-scale competition to Nvidia's Grace Blackwell systems, which have experienced soaring demand since launch in 2024. OpenAI struck a similar deal with AMD in October, marking it as a viable second option for AI giants and hyperscalers. Nvidia reports quarterly earnings on Wednesday, and analysts expect revenue growth of 68% from a year earlier to $66 billion, according to LSEG. Earlier this month, AMD reported sales growth for the fourth quarter of 34% to $10.27 billion.

[10]

Meta may trade AI chips for shares in its latest AMD deal

Meta has struck a deal with AMD to buy up to six gigawatts worth of AI chips, both companies announced. The agreement is structured in a way that could see AMD issue Meta up to 160 million shares of its common stock provided GPU shipment milestones are achieved -- meaning Meta could own up to 10 percent of AMD if the deal fully completes. Meta plans to purchase six gigawatts of AMD's Instinct GPUs based on the MI450 architecture and optimized for Meta's workloads, with the first gigawatt deployment set to begin in the second half of 2026. AMD and Meta will also expand on their EPYC CPU partnership, with Meta deploying "millions" of AMD EPYC CPUs and becoming a launch customer for its sixth-generation EPYC CPUs. The first tranche of AMD common stock will vest with the first one gigawatt of shipments, with additional tranches vesting as Meta scales to 6 gigawatts. Vesting is tied to AMD hitting certain stock price thresholds and Meta achieving certain technical and commercial milestones. The deal is very similar to one that AMD structured with OpenAI last year, with OpenAI obtaining up to a 10 percent stake in AMD in exchange for buying six gigawatts of Instinct GPUs. Such deals are being likened to circular transactions that have created a tangle of interconnected dependencies between AI companies and chip manufacturers. Analysts have observed that such deals may magnify losses if demand for AI doesn't match the sky-high market expectations. The agreement also shows that AI companies are keen to diversify away from NVIDIA, with AMD being a key alternative. "By diversifying our partnerships and technology stack, we're building a more resilient and flexible infrastructure," Meta wrote in its news release.

[11]

Facebook owner Meta to buy AI chips from AMD in deal worth up to $100 billion

Facebook owner Meta Platforms will buy artificial intelligence chips from Advanced Micro Devices in a deal that will also give it the opportunity to buy up to a 10% stake of the chip company. Meta will buy AMD's latest chips, the MI450, to help power data centers. Under the 6-gigawatt agreement, shipments supporting the first gigawatt deployment are set to start during the second half of this year. The agreement could potentially be worth more than $100 billion. Shares of AMD jumped more than 9% before the market open on Tuesday. The companies said that AMD issued Meta a performance-based warrant for up to 160 million shares of its common stock at $0.01 apiece, structured to vest as long as certain milestones are achieved. The first tranche vests with the initial 1 gigawatt of shipments, with additional tranches vesting as Meta's purchases scale to 6 gigawatts.

[12]

Meta Announces Major Chips-for-Stock Deal With AMD

Meta said on Tuesday that it would purchase billions of dollars worth of semiconductors from Advanced Micro Devices to develop artificial intelligence technologies and power new data centers. As part of the multiyear deal, Meta is buying a volume of chips equivalent to up to six gigawatts of electricity, enough to power more than five million homes. The social media giant can also take a financial stake of up to 10 percent of the chip developer, the two companies announced. AMD's chief executive, Lisa Su, is using creative deal making to elbow her company's way into an A.I. chips market that has been dominated by Nvidia, the world's most valuable company. In October, AMD struck a similar deal with OpenAI to provide chips and a financial stake in exchange for an agreement to buy its chips. The partnership with Meta would help place AMD "at the center of the global A.I. build out," Ms. Su said in a statement. Nvidia pioneered the A.I. chip industry and claims a more than 90 percent share of the market. It has long counted OpenAI and Meta among its biggest customers. Nvidia has been charging a premium for its chips, which outperform rivals' offerings. But its high prices have made it costly for customers like Meta and OpenAI to build data centers. As a result, those businesses have begun to look for alternative chip providers. Companies like to have choices to keep costs down, said Patrick Moorhead, president of Moor Insights & Strategy. He added that AMD had overhauled its chip design and supercomputers and created a product that's more competitive with Nvidia. "Nobody thought AMD was a serious contender, but this says it's not a one-hit wonder and has a strategy," he said. AMD's stock jumped more than 10 percent in premarket trading. Nvidia's stock fell slightly. Meta said the deal was part of an effort to "diversify" the technology behind its data center infrastructure. 
AMD said that it would build chips to Meta's specifications and that shipments would begin in the second half of 2026. Meta also remains a major Nvidia customer. Last week, it agreed to spend billions of dollars on millions of Nvidia chips. The companies didn't provide how many gigawatts of power the purchase would support.

[13]

Meta signs major Nvidia deal to power AI data centers with Blackwell and Rubin GPUs

In a nutshell: Meta has signed a multi-year agreement with Nvidia to purchase millions of Blackwell and Rubin GPUs, a deal reportedly worth tens of billions of dollars. The company has also committed to buying standalone Grace CPUs from the chipmaker for use across its AI data centers in the US, India, and other global locations. According to separate press releases from Meta and Nvidia, the social media giant will use the chips to power its planned hyperscale data centers optimized for AI training and inference. The company will also deploy Nvidia's new Spectrum-X Ethernet switches as part of the Facebook Open Switching System (FBOSS) software stack used to control and manage network infrastructure. As part of the agreement, Meta will adopt Nvidia's Confidential Computing platform to deliver AI features within WhatsApp Messenger while ensuring data security and confidentiality. According to Nvidia, the technology enables data to be processed on remote edge or public cloud servers within a protected enclave of the processor, preventing unauthorized access to sensitive information. Meta said the secured platform will ensure privacy during computation, since end-to-end encryption only protects data while it is being transmitted to and from the company's servers. The new technology is also expected to help third-party software providers and AI companies safeguard their intellectual property, allowing users to collaborate securely without exposing sensitive data. The two companies plan to expand the use of the Confidential Computing platform beyond WhatsApp to "emerging use cases" across Meta's portfolio. However, neither disclosed what those use cases might be or provided a timeline for large-scale deployment. Both companies said the technology will enable supported apps and services to deliver "privacy-enhanced AI" to consumers and enterprises. 
Meta is also developing its own custom AI chips, known as the Meta Training and Inference Accelerator (MTIA). Designed specifically for inference workloads in the company's hyperscale data centers, the chips feature processor cores based on a customized version of the open-source RISC-V architecture and are manufactured by TSMC using its 5nm process. A Financial Times report claims Meta is facing "technical challenges and rollout delays" with the MTIA chips, making it unclear when they will go live. However, the new chips are intended to supplement - not replace - Nvidia's accelerators, meaning the partnership is likely to continue even after MTIA is fully deployed. Meta is reportedly planning to spend up to $135 billion this year on its AI-focused Superintelligence Labs division, as well as its core social media business. A significant portion of that budget was expected to go toward purchasing Google's Tensor chips, though it remains unclear whether those plans will proceed following the new deal with Nvidia.

[14]

Meta already deploying Nvidia's standalone CPUs at scale

CPU adoption is part of deeper partnership between the Social Network and Nvidia which will see millions of GPUs deployed over next few years

Move over Intel and AMD -- Meta is among the first hyperscalers to deploy Nvidia's standalone CPUs, the two companies revealed on Tuesday. Meta has already deployed Nvidia's Grace processors in CPU-only systems at scale and is working with the GPU slinger to field its upcoming Vera CPUs beginning next year. Apart from a few scientific institutions, Nvidia's nearly three-year-old Grace CPUs have predominantly shipped as part of so-called "Superchips" which featured integrated Hopper and Blackwell GPUs onboard. These are nothing new for Meta. The Social Network has previously deployed Nvidia's Grace-Hopper Superchips as part of its Andromeda recommender system and now plans to field "millions" of Nvidia GB300 and Vera Rubin Superchips, the companies said today. What has changed is Meta is now using Nvidia's CPU-only Grace systems to power both general purpose and agentic AI workloads that don't require a GPU. "What we found is that Grace is an excellent backend datacenter CPU. It can actually deliver 2x the performance per watt on those back end workloads," Ian Buck, Nvidia's VP and General Manager of Hyperscale and HPC, said during a press briefing ahead of Tuesday's announcement. "Meta has already had a chance to get on Vera and run some of those workloads, and the results look very promising." As a refresher, Nvidia's Grace CPUs are equipped with 72 Arm Neoverse V2 cores clocked at up to 3.35 GHz. The chip is available in a standalone config with up to 480 GB of memory or two of the processors can be combined with up to 960 GB of LPDDR5x memory to form the Grace-CPU Superchip. LPDDR5x isn't something you typically see in server platforms, but offers several advantages in terms of space and bandwidth. The Grace-CPU Superchip boasts up to 1 TB of memory bandwidth between the two dies. 
Nvidia's Vera CPU, officially unveiled at CES earlier this year, boosts core counts to 88 custom Arm cores and adds support for simultaneous multi-threading, A.K.A Hyperthreading, and confidential computing functionality. According to Nvidia, Meta will take advantage of the latter capability for private processing and AI features in its WhatsApp encrypted messaging service. Meta's adoption of Nvidia CPUs runs counter to the broader industry, which has increasingly pivoted to custom Arm CPUs like Amazon's Graviton or Google's Axion. Alongside Meta's new CPU deployments, the hyperscaler will deploy more Nvidia GPUs and Spectrum-X network, something we'd kind of figured considering the tech titan's $115 billion to $135 billion capex target for 2026. Nvidia is being rather tight-lipped about the scale of the expanded collab, but has said the deal will contribute tens of billions to its bottom line. A little back of the napkin math tells us that at north of $3.5 million per rack, a million GPUs works out to about $48 billion, which is a big number even for Nvidia. By way of comparison, the company earned $31.9 billion in net income on revenues of $57 billion in the quarter ended Oct. 26. It'll report Q4 earnings later this month. Meta isn't just an Nvidia shop these days. The company has a not insignificant fleet of AMD Instinct GPUs humming away in its datacenters and was directly involved in the design of AMD's Helios rack systems, which are due out later this year. Meta is widely expected to deploy AMD's competing rack systems, though no formal commitment has yet been made.
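For readers who want to retrace the napkin math, here is a minimal sketch in Python. The per-rack price and the GPU count come from the article; the figure of 72 GPUs per rack is an assumption (an NVL72-style rack), since the article does not say how many GPUs it put in each rack, and the function name is purely illustrative.

```python
# Rough reconstruction of the rack-cost estimate quoted in the article.
COST_PER_RACK_USD = 3_500_000   # "north of $3.5 million per rack" (from the article)
TOTAL_GPUS = 1_000_000          # "a million GPUs" (from the article)
GPUS_PER_RACK = 72              # ASSUMPTION: NVL72-style rack; not stated in the article


def estimate_total_cost(total_gpus: int, gpus_per_rack: int, cost_per_rack: float) -> float:
    """Return the estimated spend in USD for a given number of GPUs."""
    racks_needed = total_gpus / gpus_per_rack
    return racks_needed * cost_per_rack


total_usd = estimate_total_cost(TOTAL_GPUS, GPUS_PER_RACK, COST_PER_RACK_USD)
print(f"Estimated spend: ${total_usd / 1e9:.1f} billion")
```

Under that assumption the estimate lands in the same range as the article's "about $48 billion"; a different rack density would move the number proportionally.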

[15]

Meta Deepens Nvidia Ties With Pact to Use 'Millions' of Chips

Meta Platforms Inc. has agreed to deploy "millions" of Nvidia Corp. processors over the next few years, tightening an already close relationship between two of the biggest companies in the artificial intelligence industry. Meta, which accounts for about 9% of Nvidia's revenue, is committing to use more AI processors and networking equipment from the supplier, according to a statement Tuesday. For the first time, it also plans to rely on Nvidia's Grace central processing units, or CPUs, at the heart of standalone computers. The rollout will include products based on Nvidia's current Blackwell generation and the forthcoming Vera Rubin design of AI accelerators. "We're excited to expand our partnership with Nvidia to build leading-edge clusters using their Vera Rubin platform to deliver personal superintelligence to everyone in the world," Meta Chief Executive Officer Mark Zuckerberg said in the statement. The pact reaffirms Meta's loyalty to Nvidia at a time when the AI landscape is shifting. Nvidia's systems are still considered the gold standard for artificial intelligence infrastructure -- and generate hundreds of billions of dollars in revenue for the chipmaker. But rivals are now offering alternatives, and Meta is working on building its own in-house components. Shares of Nvidia and Meta both rose more than 1% in late trading after the agreement was announced. Advanced Micro Devices Inc., Nvidia's rival in AI processors, fell more than 3%. Ian Buck, Nvidia's vice president of accelerated computing, said the two companies aren't putting a dollar figure on the commitment or laying out a timeline. He said it's proper that Meta and others should test out other alternatives but argued that only Nvidia is able to offer the breadth of components, systems and software that a company wishing to be a leader in AI needs. 
Zuckerberg, meanwhile, has made AI the top priority at Meta, pledging to spend hundreds of billions of dollars to build the infrastructure needed to compete in this new era. Meta has already projected record spending for 2026, with Zuckerberg saying last year that the company would put $600 billion toward US infrastructure projects over the next three years. Meta is building several gigawatt-sized data centers around the country, including in Louisiana, Ohio and Indiana. One gigawatt is roughly the amount of energy needed to power 750,000 homes. Buck stressed that Meta will be the first large data center operator to use Nvidia's CPUs in standalone servers. Typically, Nvidia offers this technology in combination with its high-end AI accelerators -- chips that owe their lineage to graphics processors. This shift represents an encroachment into territory dominated by Intel Corp. and AMD. It also provides an alternative to some of the in-house chips that are designed by large data center operators, such as Amazon.com Inc.'s Amazon Web Services. Buck said the uses for such chips are only growing. Meta, owner of Facebook and Instagram, will use the chips itself and also rely on Nvidia-based computing capacity offered by other companies. Nvidia CPUs will be increasingly used for tasks such as data manipulation and machine learning, Buck said. "There's many different kinds of workloads for CPUs," Buck said. "What we've found is Grace is an excellent back-end data center CPU," meaning it handles the behind-the-scenes computing tasks. "It can actually deliver two times the performance per watt on those back-end workloads," he said.
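The homes-per-gigawatt rule of thumb is easy to apply to other figures in this coverage. The short Python sketch below uses the 750,000-homes figure quoted above; the 6-gigawatt input is the size of the AMD agreement reported elsewhere on this page, and the helper name is hypothetical.

```python
# Convert data center power capacity into a "homes powered" equivalent.
HOMES_PER_GIGAWATT = 750_000  # rule of thumb quoted in the article


def homes_powered(gigawatts: int) -> int:
    """Return the approximate number of homes a given capacity could power."""
    return gigawatts * HOMES_PER_GIGAWATT


# 6 GW is the capacity cited for the Meta-AMD agreement elsewhere on this page.
print(f"{homes_powered(6):,} homes")
```

The result depends entirely on the per-home consumption assumed, which is why different outlets quote somewhat different home counts for the same 6 gigawatts.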

[16]

Meta and AMD Team Up to Take on Nvidia in Massive AI Chip Deal

AMD just landed a huge win in its ongoing battle to catch up with AI giant Nvidia. On Tuesday, AMD and Meta, the parent company of Facebook, announced a multi-year, multi-generation partnership to scale Meta’s AI infrastructure and speed up the development of its advanced AI models. Under the deal, Meta plans to deploy up to 6 gigawatts worth of AMD GPUs. For context, that’s enough to power nearly 4.5 million homes. The first shipments supporting an initial one-gigawatt deployment are scheduled to begin in the second half of 2026 and will include custom chips optimized specifically for Meta’s workloads. The announcement also says that Meta will be a top customer of AMD's 6th Gen AMD EPYC CPUs. The agreement includes performance-based terms that could allow Meta to acquire up to 160 million AMD shares, about 10% of the company, if certain milestones are met. The first tranche vests once the initial gigawatt of chips ships, with additional vesting tied to additional chip shipments and AMD stock price thresholds. AMD shares jumped about 10% Tuesday morning following the announcement. AMD is quickly emerging as a viable alternative to Nvidia’s hardware. The company signed another major AI infrastructure partnership with OpenAI in October to deploy another 6 gigawatts of AI chips. “We are proud to expand our strategic partnership with Meta as they push the boundaries of AI at unprecedented scale,” said AMD CEO Lisa Su in a press release today. She added that this latest partnership would place the company “at the center of the global AI buildout.” For Meta, the deal comes as it’s running its own AI arms race against companies like Google and OpenAI in pursuit of more powerful AI systems and ultimately the holy grail of artificial general intelligence. Building those systems requires staggering amounts of compute. Nvidia remains the industry's leading supplier of AI chips, controlling an estimated 84% of the AI and data center market share. 
This has led it to become the world’s most valuable company, with a market capitalization of about $4.6 trillion. But by working with competitors like AMD, tech giants hope to avoid dependence on a single vendor. “We’re excited to form a long-term partnership with AMD to deploy efficient inference compute and deliver personal superintelligence,” said Meta CEO Mark Zuckerberg in a press release. “This is an important step for Meta as we diversify our compute.” The AMD agreement comes just days after Meta announced a separate long-term deal with Nvidia, though that announcement did not disclose how much power capacity it would support.

[17]

Nvidia to sell Meta millions of chips in multiyear deal

SAN FRANCISCO, Feb 17 (Reuters) - Nvidia (NVDA.O) on Tuesday said it has signed a multiyear deal to sell Meta Platforms (META.O) millions of its current and future artificial intelligence chips, including central processing units that compete with products from Intel (INTC.O) and Advanced Micro Devices (AMD.O). Nvidia did not disclose a value for the deal, but said it includes its current Blackwell chips as well as its forthcoming Rubin AI chips. It also includes standalone installations of its Grace and Vera central processors. Nvidia introduced those central processors, based on technology from Arm Holdings (O9Ty.F), as companions to its AI chips starting 2023. But the announcement Tuesday signaled that Nvidia aims to push those chips for emerging fields such as running AI agents as well as into markets for processors used in workaday technical tasks such as running databases. Nvidia's announcement also comes as Meta is developing its own AI chips and is in discussions with Google about using that company's Tensor Processing Unit chips, or TPUs, for AI work. Ian Buck, the general manager of Nvidia's hyperscale and high-performance computing unit, said that Nvidia's Grace central processors have shown they can use half the power for some common tasks such as running databases, with more gains expected for the next generation, Vera. "It actually continues down that path and makes it an excellent data center-only CPU for those high-intensity data processing back-end operations," Buck said. "Meta has already had a chance to get on Vera and run some of those workloads. And the results look very promising." While Nvidia has never disclosed its sales to Meta, it is widely believed to be among four customers that made up 61% of its revenue in its most recent fiscal quarter. 
Moorhead said Nvidia likely highlighted the deal to show that it has retained a large business with Meta and is gaining traction with its central processor chips. Reporting by Stephen Nellis in San Francisco; Ethan Smith

[18]

Meta expands Nvidia deal to use millions of AI chips in data center build-out, including standalone CPUs

Meta CEO Mark Zuckerberg said in a statement that the expanded partnership continues his company's push "to deliver personal superintelligence to everyone in the world," a vision he announced in July. Financial terms of the deal were not provided. In January, Meta announced plans to spend up to $135 billion on AI in 2026. "The deal is certainly in the tens of billions of dollars," said chip analyst Ben Bajarin of Creative Strategies. "We do expect a good portion of Meta's capex to go toward this Nvidia build-out." The partnership is nothing new, as Meta has been using Nvidia graphics processing units for at least a decade, but the deal marks a significantly broader technology partnership between the two Silicon Valley-based giants. Standalone CPUs are the biggest new thing in the deal, with Meta becoming the first to deploy Nvidia's Grace central processing units as standalone chips in its data centers, as opposed to incorporated alongside GPUs in a server. Nvidia said it's the first large-scale deployment of Grace CPUs on their own. "They're really designed to run those inference workloads, run those agentic workloads, as a companion to a Grace Blackwell/Vera Rubin rack," Bajarin said. "Meta doing this at scale is affirmation of the soup-to-nuts strategy that Nvidia's putting across both sets of infrastructure: CPU and GPU." The next-generation Vera CPUs are planned to be deployed by Meta in 2027. The multiyear deal is part of Meta's overall commitment to spend $600 billion in the U.S. by 2028 on data centers and the infrastructure the facilities require. Meta has plans for 30 data centers, 26 of which will be based in the U.S. Its two largest AI data centers are under construction now: the Prometheus 1-gigawatt site in New Albany, Ohio, and the 5-gigawatt Hyperion site in Richland Parish, Louisiana.

[19]

Meta will run AI in WhatsApp through NVIDIA's 'confidential computing'

Meta just announced a deal to buy "millions" of NVIDIA Blackwell and Rubin GPUs in a new long-term partnership. As part of that, the social media giant will deploy NVIDIA's Confidential Computing for WhatsApp, "enabling AI-powered capabilities across the messaging platform while ensuring user data confidentiality and integrity." That technology will let Meta secure data during computation, not just when it's being shuttled to a server. It also allows software creators like Meta or third-party AI agent providers "to preserve their intellectual property," NVIDIA wrote on a blog about the technology. Meta will also be the first to deploy NVIDIA's Grace CPUs in a standalone way, instead of incorporating them with GPUs. They're designed to run inference and agentic workloads when running in this fashion. Meta will also be using NVIDIA's Spectrum-X Ethernet switches. Meta announced earlier this year that it would spend up to $135 billion on AI in 2026, so it's not a surprise that a big chunk of that is going toward NVIDIA. However, the numbers involved, likely in the "tens of billions" according to analysts, represent a significant expansion of the partnership between the two companies. Meta plans to build up to 30 data centers, including 26 in the US, by 2028 as part of a $600 billion commitment.

[20]

Meta agrees $60bn deal with chipmaker AMD despite AI bubble fears

Facebook owner's investment described by semiconductor company as 'big bet' on artificial intelligence The owner of Facebook has agreed to buy $60bn (£44.5bn) of artificial intelligence chips from the US semiconductor company Advanced Micro Devices despite fears over the vast sums being spent on the AI industry. Meta, which also owns Instagram and WhatsApp, has clinched the five-year deal in which it will also buy 10% of the chip company. AMD signed a similar pact with OpenAI last year, which was hailed as a vote of confidence in its chips and software, significantly boosting its stock price. A recent series of chip supply agreements underscores the AI industry's appetite for processors. Meta has separately struck a deal with AMD's larger rival Nvidia to buy millions of AI chips. AMD will supply 6GW worth of chips to Meta, starting with 1GW of the company's forthcoming MI450 hardware in the second half of this year, AMD's chief executive, Lisa Su, said. In addition to AMD's flagship graphics chips (GPUs), Meta also plans to buy central processors (CPUs), including a variant that will be customised for the social media platform's needs. The custom CPU will be tuned to deliver powerful performance while keeping energy consumption as low as possible, Su said. The deal will include two generations of AMD's CPUs. "So no question Mark is very, very ambitious in what he wants to accomplish, and we want to use every aspect of our technology to really help Meta to accomplish that," Su said, referring to Meta's chief executive, Mark Zuckerberg. "Meta is making a big bet on AMD." Meta contributed to the MI450 design, which is optimised for a computing process known as inference, which is when a chatbot such as OpenAI's ChatGPT responds to a user's queries. Industry analysts expect the market for inference hardware to dwarf the size of the market for the equipment needed to train large AI models. 
Meta plans to continue to buy chips from other vendors and develop its in-house processors at the same time, Santosh Janardhan, the company's infrastructure head, said. Meta has been in discussions with Google about using the company's tensor processors (TPUs) for AI work, Reuters reported. The scale at which Meta is building datacentres and infrastructure requires multiple chip vendors and approaches, Janardhan said. "All of the chipmakers end up having sort of a seat at the table," Janardhan added.

[21]

Meta and AMD set huge AI chips pact

Why it matters: It comes just a week after Meta announced a deal to buy chips from AMD rival Nvidia and shows that the arms race in AI continues to escalate.
* It's also another example of a circular deal in which a customer or supplier takes a stake in the other.

Zoom out: The agreement mirrors one AMD struck with OpenAI last October: The AI startup agreed to buy billions of dollars worth of chips as well as a 10% stake.

What they're saying: "We are proud to expand our strategic partnership with Meta as they push the boundaries of AI at unprecedented scale," AMD CEO Dr. Lisa Su said in a statement.

Zoom in: The first chip shipments will begin the second half of this year.
* A gigawatt represents enough power to electrify a small city.
* AMD stands to gain tens of billions of dollars in revenue for each gigawatt of computing power, the Wall Street Journal notes.

Follow the money: As part of the deal, Meta has received warrants that would allow it to buy up to 160 million shares of AMD if certain performance targets are hit.
* Shares of AMD were up 11% in pre-market trading.

This is a developing story.

[22]

Meta integrates Nvidia GPUs, CPUs, and confidential computing tools

Initiative will combine Meta's production workloads with Nvidia's hardware and software ecosystem

* Meta and Nvidia launch multiyear partnership for hyperscale AI infrastructure
* Millions of Nvidia's GPUs and Arm-based CPUs will handle extreme workloads
* Unified architecture spans data centers and Nvidia cloud partner deployments

Meta has announced a multi-year partnership with Nvidia aimed at building hyperscale AI infrastructure capable of handling some of the largest workloads in the technology sector. This collaboration will deploy millions of GPUs and Arm-based CPUs, expand network capacity, and integrate advanced privacy-preserving computing techniques across the company's platforms. The initiative seeks to combine Meta's extensive production workloads with Nvidia's hardware and software ecosystem to optimize performance and efficiency.

Unified architecture across data centers

The two companies are creating a unified infrastructure architecture that spans on-premises data centers and Nvidia cloud partner deployments. This approach simplifies operations while providing scalable, high-performance computing resources for AI training and inference. "No one deploys AI at Meta's scale -- integrating frontier research with industrial-scale infrastructure to power the world's largest personalization and recommendation systems for billions of users," said Jensen Huang, founder and CEO of Nvidia. "Through deep codesign across CPUs, GPUs, networking and software, we are bringing the full Nvidia platform to Meta's researchers and engineers as they build the foundation for the next AI frontier." Nvidia's GB300-based systems will form the backbone of these deployments. They will offer a platform that integrates compute, memory, and storage to meet the demands of next-generation AI models. 
Meta is also expanding Nvidia Spectrum-X Ethernet networking throughout its footprint and aims to deliver predictable, low-latency performance while improving operational and energy efficiency for large-scale workloads. Meta has begun adopting Nvidia Confidential Computing to support AI-powered capabilities within WhatsApp, allowing machine learning models to process user data while maintaining privacy and integrity. The collaboration plans to extend this approach to other Meta services, integrating privacy-enhanced AI techniques into multiple applications. Meta and Nvidia engineering teams are working closely to codesign AI models and optimize software across the infrastructure stack. By aligning hardware, software, and workloads, the companies aim to improve performance per watt and accelerate training for state-of-the-art models. Large-scale deployment of Nvidia Grace CPUs is a core part of this effort, with the collaboration representing the first major Grace-only deployment at this scale. Software optimizations in CPU ecosystem libraries are also being implemented to improve throughput and energy efficiency for successive generations of AI workloads. "We're excited to expand our partnership with Nvidia to build leading-edge clusters using their Vera Rubin platform to deliver personal superintelligence to everyone in the world," said Mark Zuckerberg, founder and CEO of Meta.

[23]

Meta Builds AI Infrastructure With NVIDIA

Meta has adopted NVIDIA Confidential Computing, enabling AI capabilities while protecting user privacy.

NVIDIA today announced a multiyear, multigenerational strategic partnership with Meta spanning on-premises, cloud and AI infrastructure. Meta will build hyperscale data centers optimized for both training and inference in support of the company's long-term AI infrastructure roadmap. This partnership will enable the large-scale deployment of NVIDIA CPUs and millions of NVIDIA Blackwell and Rubin GPUs, as well as the integration of NVIDIA Spectrum-X™ Ethernet switches for Meta's Facebook Open Switching System platform. "No one deploys AI at Meta's scale -- integrating frontier research with industrial-scale infrastructure to power the world's largest personalization and recommendation systems for billions of users," said Jensen Huang, founder and CEO of NVIDIA. "Through deep codesign across CPUs, GPUs, networking and software, we are bringing the full NVIDIA platform to Meta's researchers and engineers as they build the foundation for the next AI frontier." "We're excited to expand our partnership with NVIDIA to build leading-edge clusters using their Vera Rubin platform to deliver personal superintelligence to everyone in the world," said Mark Zuckerberg, founder and CEO of Meta.

Expanded NVIDIA CPU Deployment for Performance Boost

Meta and NVIDIA are continuing to partner on deploying Arm-based NVIDIA Grace™ CPUs for Meta's data center production applications, delivering significant performance-per-watt improvements in its data centers as part of Meta's long-term infrastructure strategy. The collaboration represents the first large-scale NVIDIA Grace-only deployment, supported by codesign and software optimization investments in CPU ecosystem libraries to improve performance per watt with every generation. 
The companies are also collaborating on deploying NVIDIA Vera CPUs, with the potential for large-scale deployment in 2027, further extending Meta's energy-efficient AI compute footprint and advancing the broader Arm software ecosystem.

Unified Architecture Supports Meta's AI Infrastructure

Meta will deploy industry-leading NVIDIA GB300-based systems and create a unified architecture that spans on-premises data centers and NVIDIA Cloud Partner deployments to simplify operations while maximizing performance and scalability. In addition, Meta has adopted the NVIDIA Spectrum-X Ethernet networking platform across its infrastructure footprint to provide AI-scale networking, delivering predictable, low-latency performance while maximizing utilization and improving both operational and power efficiency.

Confidential Computing for WhatsApp

Meta has adopted NVIDIA Confidential Computing for WhatsApp private processing, enabling AI-powered capabilities across the messaging platform while ensuring user data confidentiality and integrity. NVIDIA and Meta are collaborating to expand NVIDIA Confidential Compute capabilities beyond WhatsApp to emerging use cases across Meta's portfolio, supporting privacy-enhanced AI at scale.

Codesigning Meta's Next-Generation AI Models

Engineering teams across NVIDIA and Meta are engaged in deep codesign to optimize and accelerate state-of-the-art AI models across Meta's core workloads. These efforts combine NVIDIA's full-stack platform with Meta's large-scale production workloads to drive higher performance and efficiency for new AI capabilities used by billions around the world.

[24]

AMD and Meta announce yet another circular megamoney GPU deal, this time for $60 billion of chips and potentially a 10% stake in AMD for Meta

Meta to buy $60 billion in GPUs but might end up owning $35 billion share in AMD. Keeping up with all the megabucks deals going on right now in AI land is pretty much a full-time job. But for what it's worth, here's another doozy that involves silly amounts of money and a whiff of just the sort of cannibalistic circularity that makes the whole situation so unnerving. AMD and Meta have announced a new strategic alliance in which Meta buys $60 billion worth of AMD GPUs, but also might end up owning 10% of AMD into the bargain, a stake currently valued at around $35 billion. So, yeah, that's seemingly $60 billion being paid out by Meta, only to effectively get $35 billion back, along with a whole hill of GPUs for making AI slop. First up, however, it's worth noting that those dollar figures are estimates. The $60 billion is a Reuters estimate of the cost of what the official announcement explicitly mentions, namely 6 gigawatts worth of AMD Instinct GPUs for AI processing over a five-year period. As for the $35 billion, that's based on details in the release and AMD's current market value. Long story short, if certain stipulations are met, including the number of AMD Instinct GPUs shipped to Meta, and Meta itself "achieving key technical and commercial milestones", then Zuckerberg's company has the right to buy up to 160 million AMD shares. The slightly more detailed version is that if AMD issues all 160 million shares to Meta, that would then represent a roughly 10% holding in the company. Right now, AMD is worth about $350 billion, and the maths is easy enough to see that 10% of $350 billion is $35 billion. Of course, much can change over the next five years in terms of AMD's overall value. Likewise, the conditions for the share issue may never be met. But the interesting bit here, arguably, is that those shares will likely be sold to Meta almost for free. 
According to reports, the price per share is likely to be $0.01, compared to the current market price of over $210 per share. The reason why AMD doesn't just give Meta the shares for free comes down to accounting practices and how shares are issued. The $1.6 million Meta would have to pay for the shares is largely incidental. The point is that if the conditions are met, Meta ends up owning about 10% of AMD in return for buying a bucket load of GPUs. At this juncture, you might wonder why AMD doesn't just sell the GPUs to Meta more cheaply. For starters, that would make AMD look less profitable. It would hurt things like AMD's profit margins and cash flow, which gets the megabucks bean counters at investment banks anxious, and it would set a real-world price precedent for AMD GPUs below that at which the company would like to sell them. And in pure PR terms, this kind of deal has better optics than selling the GPUs off cheap to secure Meta as a customer. But that is, arguably, what AMD is doing. Anywho, for us mere PC gamers, who knows what it all means. It's positive news for AMD, for sure, but at this stage, any notion of trickle-down economics in terms of AI riches translating into money being made available to invest in creating cool new gaming chips feels a bit hopeful if not downright fanciful. For sure, we'd rather AMD was doing well than badly. But we'd also rather more of its money came from gaming than AI, as utterly unrealistic as that is.
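For anyone who wants to check the sums, here is a rough back-of-envelope sketch in Python. It only uses figures quoted in the article (160 million shares, a reported $0.01 exercise price, a roughly $350 billion market value, a roughly 10% stake and the Reuters estimate of $60 billion in GPU purchases). The constant and function names are invented for illustration, and the sketch ignores the tranches, share-price thresholds and milestones that the actual warrant depends on.

```python
# Back-of-envelope check of the figures quoted in the article.
# All names below are illustrative; the inputs are estimates, not confirmed terms.

WARRANT_SHARES = 160_000_000          # maximum shares Meta could buy
EXERCISE_PRICE_CENTS = 1              # reported $0.01 per share
MARKET_CAP_USD = 350_000_000_000      # approximate AMD market value
STAKE_PERCENT = 10                    # approximate holding if fully exercised
GPU_SPEND_USD = 60_000_000_000        # Reuters estimate for 6 GW of GPUs


def exercise_cost_usd(shares: int, price_cents: int) -> int:
    """Total cash Meta would pay to exercise the whole warrant."""
    return shares * price_cents // 100


def stake_value_usd(market_cap: int, percent: int) -> int:
    """Value of a given percentage stake at the quoted market value."""
    return market_cap * percent // 100


def implied_share_price(market_cap: int, shares: int, percent: int) -> float:
    """Share price implied by the quoted market value and stake size."""
    implied_total_shares = shares * 100 // percent
    return market_cap / implied_total_shares


cost = exercise_cost_usd(WARRANT_SHARES, EXERCISE_PRICE_CENTS)
stake = stake_value_usd(MARKET_CAP_USD, STAKE_PERCENT)
price = implied_share_price(MARKET_CAP_USD, WARRANT_SHARES, STAKE_PERCENT)

print(f"Exercise cost: ${cost:,}")
print(f"Stake value: ${stake:,}")
print(f"Implied share price: ${price:,.2f}")
print(f"Stake as a share of GPU spend: {stake / GPU_SPEND_USD:.0%}")
```

The implied share price is just a consistency check: a 10% stake made up of 160 million shares at a $350 billion valuation works out to a figure in line with the "over $210" market price quoted above.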

[25]

Meta commits billions to Nvidia chips

Why it matters: Even though Meta is already one of Nvidia's biggest buyers, the deal is so big that it stands to boost both companies.
Driving the news: Meta will buy millions of chips from Nvidia, ranging from standalone Grace CPUs to next-gen Blackwell GPUs and upcoming Vera Rubin systems, to use across its U.S. data center buildout.
* This makes Meta the first Big Tech firm to commit to buying standalone central processing units from Nvidia, which are used to run AI rather than train AI.
* This signals a shift toward inference over training, with the latter typically requiring more intense and expensive graphics processing units, or GPUs.
* Financial details of the deal were not disclosed.
Between the lines: Meta is locking in scarce next-generation compute at a time when Nvidia's Blackwell GPUs are back-ordered and rivals are scrambling for supply.
Zoom out: It's a signal that there's still strong demand for compute power amid the AI buildout.
* Hyperscalers -- the companies behind the largest data center buildouts -- are on track to spend $650 billion this year.
* Chip companies like Nvidia stand to benefit from that, as their chips are seen as the best -- and most expensive -- on the market.
* Any sign of a slowdown in spending tends to hurt Nvidia shares, but a deal like this is a balm for investors who've been increasingly skittish about how long the AI spending spree can last.
Follow the money: "The question of why Meta [is] deploying Nvidia's CPUs at scale is the most interesting thing in this announcement," Ben Bajarin, chief executive and principal analyst at tech consultancy Creative Strategies, told The Financial Times.
Threat level: It's yet another example of circular funding and more spending from AI players.
* Meta is expected to spend about $135 billion on its AI ambitions this year, a number that's expected to continue going up.
The intrigue: Google, Amazon, Microsoft and even Meta have all announced new in-house chips in the past few months, which are seen as more affordable alternatives.
* Meta was reportedly considering using Google's TPUs, according to CNBC.
* Shares of AMD, a competitor to Nvidia, fell after the deal was announced.
The bottom line: This agreement makes clear that, at least for the next phase of the AI race, Nvidia is the backbone of Meta's compute strategy.

[26]

Meta inks deal to use millions of Nvidia's chips in data centre build-out

Meta plans to spend up to $135bn this year to support its Meta Superintelligence Labs efforts as well as its core business. Meta will reportedly spend billions in a multi-year partnership with Nvidia to use "millions" of its chips to support the company's data centre build-out, the two companies announced yesterday (17 February). Commenting on the deal, Nvidia founder and CEO Jensen Huang said that no other company deploys AI at Meta's scale. The announcement comes as the social media giant gears up to spend as much as $135bn this year to support its Meta Superintelligence Labs efforts as well as its core business, while competing chipmakers attempt to challenge Nvidia's global dominance in AI. Even Nvidia's Big Tech customers, including Meta and OpenAI, are building their own in-house hardware. As per the mega deal, Meta will deploy millions of Nvidia Blackwell and newer Rubin GPUs to build hyperscale data centres optimised for both AI training and inference. The company will also integrate Nvidia's recently announced Spectrum-X ethernet switches for Meta's Facebook open switching system platform, and expand its usage of Nvidia's confidential computing beyond WhatsApp and into other offerings. The two companies said they will continue their partnership to deploy Arm-based Nvidia Grace CPUs for Meta's data centre production applications, representing the first large-scale Nvidia Grace-only deployment. They are also collaborating to deploy Nvidia's Vera CPUs, with the potential for large-scale deployment next year. Meta is also tapping Nvidia's GB300-based systems to continue developing its data centres. It was reported yesterday that Nvidia sold off the last of its stake in Arm Holdings - a company it once tried to acquire. And last September, Huang announced a "giant" $100bn deal with OpenAI - that has apparently not yet transpired. 
"No one deploys AI at Meta's scale - integrating frontier research with industrial-scale infrastructure to power the world's largest personalization and recommendation systems for billions of users," said Huang. "Through deep codesign across CPUs, GPUs, networking and software, we are bringing the full Nvidia platform to Meta's researchers and engineers as they build the foundation for the next AI frontier." Meta founder and CEO Mark Zuckerberg added: "We're excited to expand our partnership with Nvidia to build leading-edge clusters using their Vera Rubin platform to deliver personal superintelligence to everyone in the world."

[27]

Meta agrees to buy millions more AI chips from Nvidia, raising doubts over its in-house hardware - SiliconANGLE

Meta Platforms Inc. has agreed a new deal with Nvidia Corp. to buy millions of its next-generation Vera Rubin graphics processing units and a similar number of Grace central processing units to fuel its artificial intelligence ambitions. The deal, announced today, is likely to be worth billions of dollars. In a statement, Meta Chief Executive Mark Zuckerberg said the expanded partnership is crucial to enabling his company's previously announced vision of "delivering personal superintelligence to everyone in the world." Meta is already one of Nvidia's biggest customers and is believed to have been using its GPUs for years, but the deal suggests a much deeper partnership between the two companies. It's notable because Meta has also committed to buying substantial volumes of Nvidia's Grace CPUs and deploying them as standalone chips, rather than using them in tandem with its GPUs. Typically, CPUs are combined with GPUs in a server to improve efficiency: the CPUs handle complex tasks and manage system operations, while the GPUs focus purely on AI processing. By offloading the management to CPUs, GPUs can run much faster. Nvidia said Meta will be the first company to deploy Grace CPUs on their own, and from next year, it will deploy the next-generation Vera CPUs too. Ben Bajarin, a chip analyst at Creative Strategies, said this is significant. "Meta doing this at scale is affirmation of the soup-to-nuts strategy that Nvidia's putting across both sets of infrastructure: CPU and GPU," he told the Financial Times. Neither company disclosed the terms of the deal, but it's likely to be worth tens of billions of dollars, analysts say. Last month, Meta told investors that it's planning to invest $135 billion in its continued AI infrastructure build-out this year, and the money spent on chips will account for a significant portion of that amount. 
Meta has committed to spending $600 billion on AI infrastructure by 2028. Its plans include building 26 data centers in the U.S. and four internationally. The two largest facilities are the 1-gigawatt Prometheus site in New Albany, Ohio, and the 5-gigawatt Hyperion site in Richland Parish, Louisiana, which are both currently under construction. Many of Nvidia's chips are destined to end up in those locations. Meta won't be buying only chips. The deal also includes Nvidia's Spectrum-X Ethernet switches and InfiniBand interconnect technologies, which are used to link massive clusters of GPUs so they can work in tandem. In addition, it will use Nvidia's security products to secure the AI features on platforms such as Facebook, Instagram and WhatsApp. While Meta is doubling down on Nvidia, it has been careful to diversify its chip strategy too. It's a major customer of Nvidia's rival Advanced Micro Devices Inc., which also makes GPUs, and in November, a report in The Information revealed that the company is also considering using Google LLC's tensor processing units for some of its AI workloads. That report made shareholders nervous at the time, with Nvidia's stock falling more than 4%, although no deal with Google has been announced so far. Perhaps the most telling aspect of today's deal is what it means for Meta's attempts to develop its own, in-house processors for AI. The company first deployed the Meta Training and Inference Accelerator or MTIA chip in 2023 and updated it in 2024, as part of an effort to reduce its reliance on external suppliers. It's primarily used for AI inference, running AI models in production to power applications such as content recommendations on its social media feeds. Last year, Meta revealed it's also working on a new version of MTIA that's specifically designed for model training, which is an area where its first chips have struggled. At the time, it said it was aiming for a broad rollout of its new chips in 2026. 
It's reportedly working with Taiwan Semiconductor Manufacturing Co. to manufacture the chips, and late last year it was said to have completed the "tape-out" phase, meaning it has apparently finalized the design ahead of testing. Zuckerberg has previously said the new MTIA training chips are designed to be more energy efficient and optimized for the company's "unique workloads," which should allow it to reduce its AI operating costs. But the deal with Nvidia suggests that its planned rollout this year might be delayed. The Financial Times reported that Meta has experienced "technical challenges" with the new chips, citing anonymous sources. While its in-house chip ambitions may have been frustrated, Meta has at least secured a plentiful supply of GPUs to make up for those delays. It said it's planning to work closely with Nvidia to optimize the processors for its AI models, which are thought to include its upcoming frontier model Avocado, the successor to its flagship LLM Llama 4. The company needs to make a good impression with Avocado, because Llama 4 was largely viewed as a disappointment, failing to match the capabilities of its rivals' most advanced models.

[28]

Facebook owner Meta to buy AI chips from AMD in deal worth up to $100 billion