Nvidia GTC 2026: Jensen Huang shifts focus from chips to AI agents with NemoClaw launch

27 Sources

[1]

How to watch Jensen Huang's Nvidia GTC 2026 keynote -- and what to expect | TechCrunch

Nvidia kicks off its annual GTC developer conference in San Jose, California, on Monday with CEO Jensen Huang's keynote scheduled for 11 a.m. PT / 2 p.m. ET. GTC -- which stands for GPU Technology Conference -- is Nvidia's flagship annual event, running from March 16 to March 19. The chipmaker typically uses the spotlight to announce new products, champion partnerships, and lay out its vision for the future of computing. Huang's keynote will focus on Nvidia's role in the future of computing and AI. You can watch the two-hour address in person at the SAP Center or livestream the talk on the event's website. The broader four-day event is focused on what's coming next for AI across industries, including healthcare, robotics, and autonomous vehicles. On the software side, it's rumored that Nvidia will release an open source platform for enterprise AI agents, dubbed NemoClaw, as originally reported by Wired. The platform would give businesses a structured way to build and deploy AI agents (software that can carry out multistep tasks autonomously) and would position Nvidia to mirror similar offerings from companies like OpenAI. On the hardware side, the company is also rumored to be releasing a new chip designed to accelerate the AI inference process -- the process by which an AI model applies what it has learned to generate responses or make decisions, as distinct from the initial training process, which requires far more computing power. Faster, cheaper inference is widely seen as one of the last bottlenecks to scaling AI applications broadly. The chip would represent Nvidia's latest bid to dominate not just the training market, where it already commands an estimated 80% share, but the inference market as well, where competition from custom chips built by Google, Amazon, and others is fast intensifying. There will also be a range of partnership announcements and demonstrations showcasing Nvidia's AI capabilities across industries. 
Kevin Cook, a senior equity strategist at Zacks Investment Research, told TechCrunch that attendees should also expect to learn what the company plans to do with its relationship with Groq, the inference company to which Nvidia reportedly paid $20 billion late last year to license its technology. There's a lot of curiosity around this tie-up, given that Jonathan Ross, Groq's founder; Sunny Madra, Groq's president; and other members of the Groq team agreed to join Nvidia to help advance and scale that licensed tech.

[2]

Nvidia GTC 2026: What to expect at AI Burning Man

From Groq-ing about tokenomics to OpenClaw and the silicon that powers it, our predictions for the hottest ticket in town

Nvidia has a bit of a problem. Popular generative AI workloads like code assistants and agentic systems generate massive quantities of tokens and need to move them at speed. But the GPU giant's chips currently struggle to deliver. That will start to change next week when Nvidia CEO Jensen Huang uses his company's GPU Technology Conference (better known as GTC) to explain how he will use the token-spewing accelerator tech he acquired with upstart Groq late last year. Market-watching firm SemiAnalysis' latest InferenceX benchmarks show how Groq's tech helps to fill the gap in Nvidia's current portfolio. While Nvidia's NVL72 rack systems scale well at lower per-user token generation rates, they become progressively less efficient as user interactivity increases. By contrast, SRAM-heavy architectures, like those championed by Groq and Cerebras, excel in latency-sensitive scenarios and can achieve token generation rates often exceeding 500 or even 1,000 tokens a second. That's many more tokens than GPU-based architectures can deliver. In fact, this capability is how Cerebras won OpenAI's business earlier this year to power its Codex model. Nvidia didn't own anything to match Cerebras until it acquired Groq's intellectual property and talent for a staggering $20 billion in December. By combining its GPU tech and CUDA software libraries with Groq's dataflow architecture, Nvidia has the opportunity to raise the Pareto curve dramatically, reducing the cost per token, while at the same time bolstering output speeds. Extending Nvidia's CUDA hardware stack to include Groq's dataflow architecture will not be easy. At GTC, Nvidia might announce it will add limited support for Groq's existing architecture relatively quickly. This GTC already feels a bit different as Nvidia spilled the beans on its Rubin GPUs back at CES in January. 
To recap, Rubin packs up to 288 GB of HBM4 memory good for 22 TB/s of bandwidth and 35-50 petaFLOPS of dense NVFP4 performance depending on the use case. The launch represents a major performance uplift over Nvidia's current Blackwell-generation parts, delivering 5x the dense floating point throughput. So far, Nvidia has announced the chips will be available in either an eight-way HGX platform or its NVL72 rack system, which, as the name suggests, crams 72 Rubin SXM modules into a single system. There's also Rubin GPX, which was announced back at Computex in June 2025 and will slot into select NVL racks to provide additional compute capacity for large context and video processing workflows. We expect to see Huang hammer on the performance optimizations and efficiency gains delivered by its growing portfolio of GPUs. But with those GPUs growing ever hotter - estimates put Rubin's thermal design power at 1.8 kW or perhaps even higher - liquid cooling isn't optional. Some buyers may balk at that requirement, which would benefit AMD and its air-cooled kit. However, given the generational gains delivered by the Rubin architecture, there's nothing stopping Nvidia from releasing a single-die, air-cooled version of the chip with five or six HBM stacks rather than eight. Such a chip would still deliver a 2.5x uplift in performance over Blackwell - without requiring liquid cooling. That's just speculation, but we have a sneaking suspicion we might see something along these lines during next week's festivities. Alongside its latest datacenter GPUs, we anticipate more details on Nvidia's standalone Vera CPU. First teased at last year's GTC, Vera features 88 custom Arm cores which add support for simultaneous multithreading and a slew of confidential computing features previously only available on x86 platforms. So far, we've only seen the CPU packaged as part of Nvidia's Vera-Rubin superchip. 
However, we've since learned Nvidia will offer the chip as a standalone processor that will compete with Intel and AMD for some mainstream applications. Previously, Nvidia had offered Grace CPU superchips, but those were primarily for use in supercomputers and other HPC applications. However, last month the GPU giant revealed Meta would be its first partner to deploy Grace at scale and that the Social Network was already evaluating Vera CPUs for use in its datacenters as well. Alongside new datacenter silicon, we also anticipate Huang will share more details about Nvidia's next-gen Kyber racks and Feynman GPUs, which should debut in 2027 and 2028. We first saw the Kyber at last year's GTC. The 600 kW behemoth is set to cram 144 GPU sockets, each with four Rubin Ultra GPU dies, into a standard rack form factor. Nvidia disclosed the existence of Kyber in part because datacenter operators were already struggling with the 120 kW NVL72 systems announced the year before. By revealing Kyber, Nvidia lit a fire under datacenter physical infrastructure providers so they could provision the power supplies and cooling kit necessary to support such a system by 2027. With a yearly release cadence, Nvidia can't wait for the rest of the industry to catch up - it must telegraph its next move years in advance. With Feynman just two years out, we suspect Huang may repeat the exercise, setting new power and cooling targets, likely exceeding a megawatt per rack. Nvidia has long been rumored to be working on an Arm-based system on chip for PCs. A part capable of doing that job arrived last year in the form of the DGX Spark and GB10 partner systems that put it to work. So far, however, OEMs have only used the chip in workstation-class mini-PCs running Linux. Recent reports indicate Nvidia is working with the likes of Lenovo and Dell to bring a similar product to the Windows PC market. 
As we previously reported, Nvidia is also working with Intel to integrate its GPU dies into Chipzilla's next-gen processors. GTC seems like as good a time as any to throw gamers a bone and give Nvidia a new market to chase beyond its side hustles in the pro-visualization markets. Integrated Nvidia graphics might not be the RTX 50 Super series cards that many had hoped to see at CES, but given the state of the memory market, it seems unlikely we'll see them make an appearance at GTC. Beyond big iron and the remote possibility of some consumer hardware, you can bet on OpenClaw being a major talking point at GTC. Jensen Huang is apparently quite fond of the agentic framework in spite of its many security vulnerabilities, reportedly describing it as the "most important software release probably ever." The company is reportedly working on its own, presumably safer, version of the platform called NemoClaw. Speaking of claws, we also expect to see a fair few more robots take the stage. Since announcing its Isaac GR00T robotics platform nearly two years ago, Nvidia has launched a steady supply of new toolkits, frameworks, and hardware development platforms aimed at giving generative AI physical form. And to teach them to function in an unpredictable world, you can count on Nvidia's Omniverse digital twin platform to make another appearance. Introduced in 2019 at a time of rising Metaverse hype, the platform aimed to create a virtual environment in which physical processes could be simulated in the digital world before real-life implementation. Developers have since integrated Omniverse in a variety of simulation platforms, including those used to design and build AI bit barns. El Reg will be on the ground in San Jose next week for GTC to bring you the latest news from what has become one of the world's most-watched tech conferences. ®

[3]

Nvidia CEO set to reveal new chips and software at AI megaconference GTC

SAN JOSE, California, March 16 (Reuters) - Nvidia (NVDA.O) CEO Jensen Huang is set to detail the company's hardware and software plans to a large crowd in San Jose, California, at the company's annual developer conference on Monday. During a keynote address at a hockey arena with a capacity of more than 18,000, Huang is expected to lay out how the top AI chipmaker plans to adapt to a rapidly changing AI landscape. Nvidia, the world's most valuable listed company, with a market capitalization of more than $4.3 trillion, is likely to detail a next-generation AI chip called Feynman, named after American physicist Richard Feynman, at the four-day conference. Huang is also likely to talk about data centers, Nvidia's chip programming software CUDA, digital assistants known as AI agents and physical AI such as robots. Another focus is likely to be Groq, a chip startup from which Nvidia licensed technology for $17 billion in December. Groq specializes in fast and cheap "inference" computing work, in which an AI model takes what it has already learned and uses it to answer a question or make a prediction in real time. After spending hundreds of billions of dollars in recent years on chips for training their AI models, companies such as OpenAI, Anthropic and Facebook owner Meta Platforms (META.O) are shifting toward serving hundreds of millions of users who are tapping those AI systems. Nvidia faces greater competition in the market for chips for inference-computing work than it does for AI-training chips, and analysts expect the company to shore up its defenses against rivals looking to regain market share they lost to Nvidia in recent years. Despite that increased competition, some of which is coming from Nvidia's own customers designing their own chips, Nvidia remains central to the global AI ecosystem. Nations such as Saudi Arabia are building custom AI systems for their own populations using its chips, and it is one of the only large U.S. companies that continues to release open-source AI software, a growing field of competition between the U.S. and China. Huang's keynote is set for 11 a.m. Pacific Time (2 p.m. Eastern Time/1800 GMT). Reporting by Stephen Nellis and Max Cherney in San Jose, California; editing by Sayantani Ghosh and Ethan Smith. Max A. Cherney is a correspondent for Reuters based in San Francisco, where he reports on the semiconductor industry and artificial intelligence. He joined Reuters in 2023 and has previously worked for Barron's magazine and its sister publication, MarketWatch. Cherney graduated from Trent University with a degree in history.

[4]

Column: Jensen Huang doesn't need a new chip. He needs a new moat.

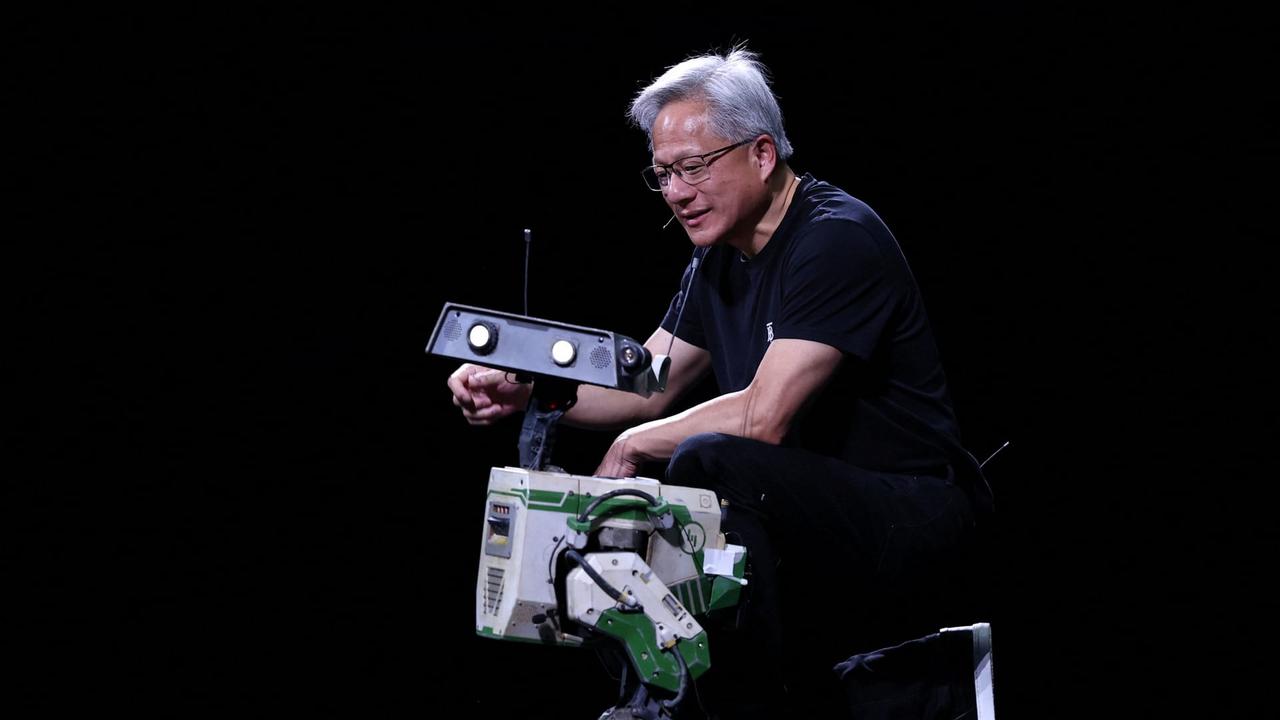

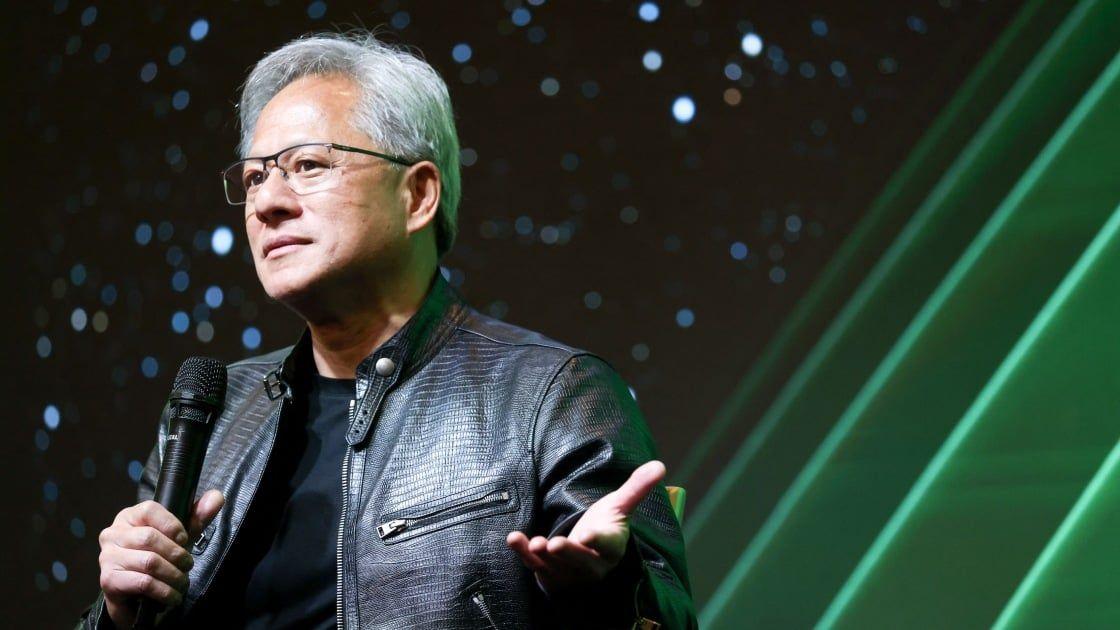

NVIDIA CEO Jensen Huang gestures during the NVIDIA GTC global AI conference in San Jose, California, U.S. March 17, 2026.

Nvidia dominated the first era of AI -- CEO Jensen Huang is making sure it owns the next one. He's turning Nvidia from a chipmaker that's helping to drive a market cycle into the operating system for the future of artificial intelligence. The shift has mostly gone unnoticed and hasn't yet been priced in by investors. But the clearest signal to date came this week. At Nvidia's annual developer conference, GTC, Huang launched NemoClaw, an open-source, chip-agnostic platform for building and deploying AI agents - autonomous software programs at the center of the latest advancements in the industry. "Every company in the world should have an agentic system strategy," Huang said. "This is the new computer now." New chip announcements got most of the attention at GTC, but the NemoClaw launch is the more important strategic shift and shows what Nvidia is actually becoming.

[5]

Nvidia Debuts New A.I. Product at GTC Developer Conference

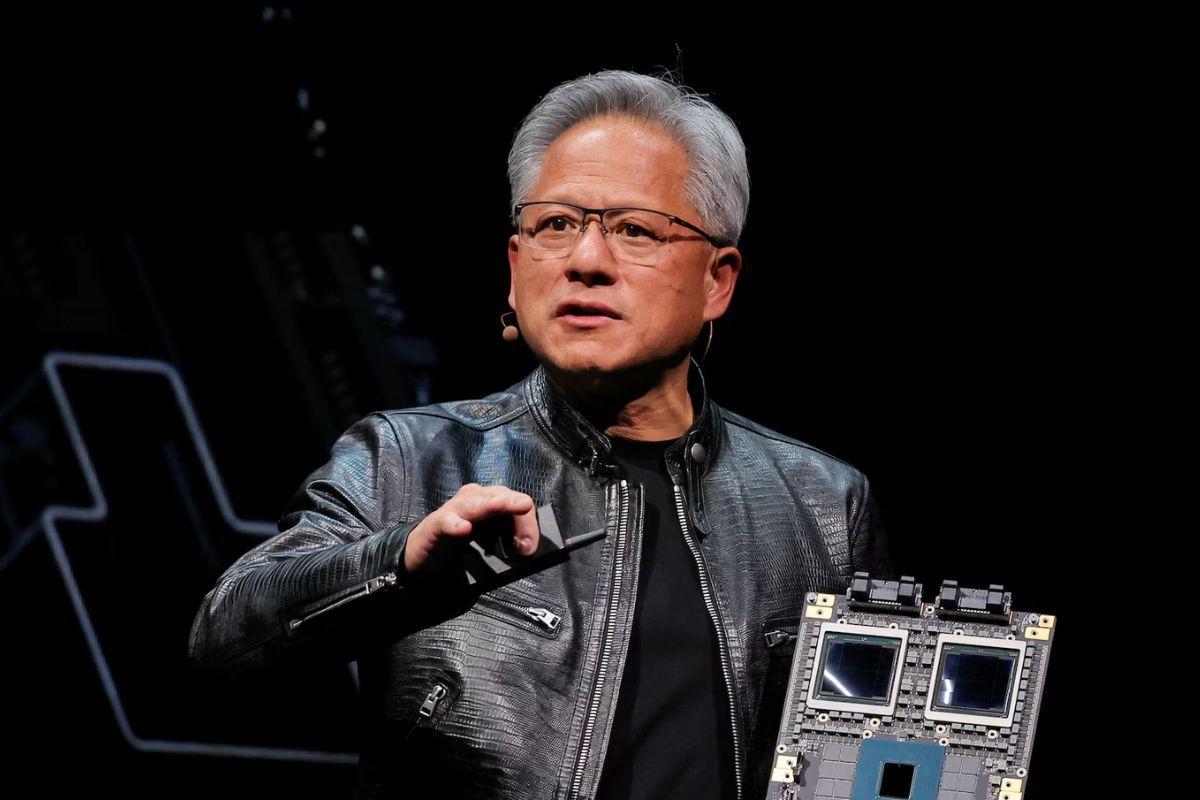

Kalley Huang reported from San Jose, Calif. Tripp Mickle reported from San Francisco. For three years, Jensen Huang, the chief executive of Nvidia, has described his company's chips as the Swiss Army knife of artificial intelligence. They were an all-purpose tool ideal for building and running A.I. But on Monday, in a packed arena for Nvidia's developer conference GTC in San Jose, Calif., Mr. Huang told the story of an industry with changing needs and how his company, the most valuable publicly traded company in the world, is trying to change with it. Mr. Huang unveiled a new product incorporating technology from a start-up called Groq. The product will pair Nvidia's chips, which excel at receiving an A.I. request, with Groq's chips, which have components that can put a charge into how Nvidia's chips operate. Over the past year, A.I. companies have shifted their work. The A.I. systems they built using Nvidia's chips have improved at creating software code, doing research and making images and videos. These capabilities, the result of a process known as inference, have put more value on chips that can generate data as inexpensively and quickly as possible. When it comes to cost and speed, Nvidia's chips have lagged behind those from Google, which makes its own chips called tensor processing units, and upstarts like Cerebras, whose chips specialize in running A.I. The edge those rivals enjoy in inference has helped them win business from some of Nvidia's longtime customers, like OpenAI and Meta. The deals caught Mr. Huang's attention. As competitors began to make a dent in his company's business last year, Nvidia in December announced a $20 billion licensing agreement with Groq, which makes chips custom-built for inference. With the combined technology, Nvidia is expected to make inference quicker and less expensive, analysts said.

[6]

NVIDIA GTC 2026 opens today

NVIDIA's GTC 2026 opens in San Jose today with 30,000 attendees, a keynote at the SAP Center, and announcements that could reshape the next two years of AI infrastructure, from Vera Rubin deep-dives to an enterprise agent platform and a gigawatt deal with Mira Murati's startup. San Jose goes green every March. The city's convention centre fills with developers, research engineers, and enterprise technology buyers who have flown in from 190 countries. The SAP Center, normally home to the San Jose Sharks, is repurposed as an arena for a two-hour keynote watched simultaneously by hundreds of thousands more online. Banks of screens, a leather jacket, and a CEO who speaks in roadmaps. NVIDIA GTC 2026 opens today, 16 March, and runs through 19 March. It is, by any objective measure, the most closely watched event in the technology industry right now: the moment when Jensen Huang, founder and chief executive of the world's most valuable chipmaker, tells an audience what the next phase of artificial intelligence will look like, and, by implication, who will profit from building it. The company came into this week carrying significant momentum. In its fourth-quarter 2025 earnings report, released in February, NVIDIA posted revenue of $68.1 billion, up 73% year on year. It has reported record revenue in each of the past several quarters. The valuation has oscillated with broader market conditions (geopolitical pressures, including the ongoing Iran conflict that is pushing oil prices higher, have weighed on tech stocks), but NVIDIA's fundamental position has not shifted: it supplies the compute on which the global AI buildout depends. GTC is where NVIDIA reminds everyone of that fact. In the press release announcing GTC 2026, Huang framed the conference around a single idea: AI is no longer an application or a model; it is infrastructure. "Every company will use it. Every nation will build it," he said. 
"From energy and chips to infrastructure, models and applications, every layer of the stack is advancing at once." What is already known, or strongly anticipated, fills in a significant portion of the picture. Beyond the keynote, GTC 2026 runs 1,000+ sessions spread across ten venues in downtown San Jose. Physical AI, covering robotics, autonomous systems, digital twins, and the use of simulation environments for training, is a dominant thread. Speakers include Ashok Elluswamy, VP of AI software at Tesla; Raquel Urtasun, CEO of Waabi; Deepak Pathak, CEO of Skild AI; and representatives from Johnson & Johnson, Disney Research Imagineering, and PhysicsX. Disney's GTC session has drawn particular attention: the company will show how it is using AI-powered physical robotics to bring animated characters into real-world environments, using NVIDIA Isaac simulation tools and reinforcement learning trained on GPU-accelerated infrastructure. On Tuesday, 17 March, Dario Gil, now Undersecretary at the US Department of Energy, joins a session on AI in climate and energy research. The inclusion of a senior government official is not incidental: the energy consumption of AI data centres has become a political question as much as a technical one, and NVIDIA is clearly interested in making the case that accelerated computing, despite its power demands, also accelerates solutions to the problems that power demand creates. Quantum computing features in a dedicated session titled "The Genesis of Accelerated Quantum Supercomputing," which outlines a vision for the convergence of AI and quantum hardware, with a claimed target of scientifically useful accelerated quantum supercomputers by 2028. On Wednesday, 18 March, Huang moderates a panel on the state of open frontier models with leaders from A16Z, AI2, Cursor, and Thinking Machines Lab. 
GTC has been called, at various points, the Super Bowl of AI, the Woodstock of technology, and the real March Madness. Bank of America analysts told investors to treat GTC as a buying opportunity. The event has become the place where the AI industry's direction is set, rather than observed. What makes 2026 different from previous years is not the scale of the announcements (GTC has been big before) but the maturity of the technology being discussed. Blackwell proved that NVIDIA could deliver on its roadmap. Vera Rubin is in production. The question the industry is now asking is not whether AI infrastructure will scale, but who controls what the infrastructure runs, and at what cost. NemoClaw, if it launches as reported, represents NVIDIA's answer to the software layer question. The Thinking Machines deal represents its answer to the frontier lab question. The Vera Rubin technical deep-dives represent its answer to the inference cost question. Taken together, they form an argument that NVIDIA is not merely supplying the picks and shovels to the AI gold rush, but is increasingly shaping the mine itself. Jensen Huang has been making versions of that argument for several years. At GTC 2026, from the floor of the SAP Center, he will have more evidence than ever to make it with.

[7]

Nvidia to focus on competition-beating AI advances at megaconference

SAN FRANCISCO, March 13 (Reuters) - When Jensen Huang strides onto the stage of a packed hockey arena to kick off Nvidia's (NVDA.O) annual developer conference on Monday, he is likely to reveal products and partnerships geared toward keeping the AI chipmaker atop a growing array of competitors. Taking over the heart of Silicon Valley for most of a week, Nvidia GTC, as the conference is known, has become CEO Huang's preferred event to show off Nvidia's AI advances in chips, data centers, its chip programming software CUDA, digital assistants known as AI agents, and physical AI such as robots. This year, the four-day event is even more crucial as investors will seek assurance that Nvidia's strategy of plowing back its profits into the AI ecosystem is paying off. "I expect Nvidia to present a full-stack roadmap update from Rubin to Feynman while emphasizing inference, agentic AI, networking, and AI factory infrastructure," said eMarketer analyst Jacob Bourne, using the names for Nvidia's current and forthcoming generations of chips. Nvidia's chips sit at the center of hundreds of billions of dollars in investments in data centers by governments and companies around the globe, but the company is facing competition from other chipmakers and even from some of its customers who are developing their own chips. Analysts told Reuters they expect the overall AI chip market to keep growing, but Nvidia's slice to shrink somewhat as the market shifts rapidly toward one in which AI agents scurry back and forth among computer applications carrying out tasks on behalf of humans. That is a shift from training, where AI labs link many Nvidia chips together into one computer to chew through huge amounts of data to perfect their AI models. 
Those agents are expected to become so numerous that the humans asking them to do work will even need a new layer of AI middle managers - what technologists call an "orchestration" layer - to sit between human users and their fleets of agents. In some ways, analysts say, that's a good thing for Nvidia, because it signals that AI is becoming more useful. But those tasks, broadly known as "inference" in the AI industry, can also run on other kinds of chips, including the ones that big Nvidia customers such as OpenAI and Meta (META.O), which recently said it plans to release new AI chips every six months, can build for themselves. "Nvidia is definitely going to see more competition compared to a year ago," said KinNgai Chan, a managing director at Summit Insights Group. "Nvidia still has close to over 90% market share in both training and inference markets today." "We think Nvidia will begin to see share loss starting in 2027, once in-house ASIC programs gain some scale especially in the inference market," he said, referring to application-specific integrated circuits, chips tailored for a single function or custom workload, offering higher efficiency than general-purpose graphics processing units.

NVIDIA IS SHORING UP DEFENSES

The company spent $17 billion in December to purchase Groq, a chip startup that specializes in fast and cheap inference computing work. Talking about Groq on the company's earnings call last month, Huang said the company would showcase at GTC how Nvidia can plug Groq's ultra-fast AI technology into its existing CUDA platform. William McGonigle, analyst at Third Bridge, said his firm expects Nvidia to roll out a new line of servers that will combine Groq's chips with Nvidia's networking technologies to create a speedy and cost-efficient product. 
Another type of chip that poses an increasing competitive threat to Nvidia is the central processing unit, or CPU, the kind of chip long championed by Intel (INTC.O) and Advanced Micro Devices (AMD.O). While those chips took a backseat to Nvidia's graphics processing units (GPUs) in recent years, McGonigle said they are "back in focus" and expects Nvidia to show off servers that use only its CPUs, which Huang talked up on a recent earnings call. "With the rise of agentic AI, the bottleneck is now at the agent orchestration level, which is carried out by the CPUs," McGonigle said. Analysts also expect Nvidia to elaborate on why it invested $2 billion each in Lumentum and Coherent, both of which make lasers for sending information between chips in the form of beams of light. Use of those lasers in what are called co-packaged optics could help speed up the connections among Nvidia's chips inside huge data centers, but they are not currently made in big enough volumes to match the number of chips Nvidia sells each year. "Nvidia will likely frame co-packaged optics as key to connecting massive AI clusters more efficiently, but the challenge is making it affordable enough to deploy at scale," said eMarketer's Bourne. Reporting by Stephen Nellis in San Francisco and Zaheer Kachwala in Bengaluru; Editing by Sayantani Ghosh and Sonali Paul

[8]

Nvidia shares are rising before its big AI conference. Here's what Wall Street expects to hear

Shares of Nvidia climbed ahead of its closely watched developer conference this week as investors bet the event will offer fresh insight into the durability of the artificial intelligence spending boom and the chipmaker's next generation of processors. The stock advanced about 2% Monday ahead of the company's annual GTC conference, where CEO Jensen Huang is scheduled to deliver a keynote address at 2 p.m. ET. The gathering has become an increasingly important venue for Nvidia to outline its technology roadmap and reinforce investor confidence in demand for AI infrastructure.

AI spending debate

Analysts say the biggest question hanging over the semiconductor sector is whether hyperscaler spending on AI hardware can remain as strong as it has been over the past two years. "We believe that NVIDIA is due to catch up to other stocks in the supply chain, and see this as a very good entry point," analysts at Morgan Stanley wrote in a preview note, reiterating the chipmaker as their top pick in semiconductors. The firm said the conference could help address investor debate around Nvidia's long-term market share as competitors such as Advanced Micro Devices and custom AI chips gain traction. Analysts at Wells Fargo said Nvidia's underperformance relative to the broader semiconductor sector this year has become a frequent topic among investors. The stock is down about 3% year to date compared with an 8% gain for the VanEck Semiconductor ETF.

Long-term targets

While GTC could act as a catalyst, Wells Fargo said expectations are already high with buy-side estimates for Nvidia's 2027 earnings hovering around $13 a share -- a level that already assumes the success of future architectures such as Vera Rubin. Instead, Wells Fargo said clearer long-term targets could help reignite the stock. 
Analysts pointed out that rivals including Broadcom, Marvell Technology and AMD have all discussed multiyear outlooks, while Nvidia has typically provided only near-term guidance. "If NVDA puts out some firm bogey for CY27, it could be the positive catalyst needed to get the stock working," Wells Fargo said. Analysts at Wolfe Research also said investors are eager for signals about the scale of future demand. The firm said Nvidia could offer new disclosures on AI-related revenue visibility for 2026 and 2027, which could be a potential catalyst if the company provides stronger long-term demand signals.

Buybacks

Another driver could be capital returns. Nvidia reported more than $60 billion in cash on its balance sheet in its latest quarterly report, while Wall Street models roughly $180 billion and $240 billion in free cash flow for 2026 and 2027, respectively. An updated buyback strategy unveiled at the conference could provide an additional boost for the shares, Wells Fargo said.

Pipeline and roadmap

Analysts at Bank of America expect the conference to spotlight Nvidia's future product pipeline and customized AI systems designed for inference. The bank said investors will likely focus on updates extending the company's roadmap through so-called Feynman-generation GPUs expected later this decade, along with potential commentary on the rollout of the Rubin architecture slated for 2027 and beyond. Bank of America maintained a buy rating on the shares, calling Nvidia "a top AI pick trading at a historical low 17 times forward earnings." Other analysts are watching for technical announcements that could hint at Nvidia's next wave of growth. Analysts at Mizuho said the conference could include details about a new Rubin rack platform expected to launch in the second half of 2026, as well as updates on networking, optical interconnects and specialized inference processors designed to dramatically boost performance. 
The firm also flagged potential discussion around quantum computing initiatives, including technology aimed at linking graphics processors with quantum processors in hybrid supercomputing systems. -- CNBC's Michael Bloom contributed reporting.

[9]

Nvidia's GTC will mark an AI chip pivot. Here's why the CPU is taking center stage

Nvidia shifted its CPU strategy in February with an announcement that standalone processors are now deployed in Meta data centers. Nvidia's graphics processing units have been the hottest-selling chips for years, but the sudden advent of agentic artificial intelligence has brought on a renaissance for its more modest host chip, the central processing unit. Now, Nvidia is poised to unveil new details about its agentic-optimized CPUs at its annual GTC conference that kicks off on Monday, with a CPU-only rack likely to appear on the showroom floor. "CPUs are becoming the bottleneck in terms of growing out this AI and agentic workflow," Dion Harris, Nvidia's head of AI infrastructure, told CNBC this week, calling it an "exciting opportunity." The chip giant announced its first data center CPU, Grace, in 2021, and the next generation, Vera, is now in production. The CPUs are typically deployed alongside Nvidia's famous Hopper, Blackwell or Rubin GPUs in full rack-scale systems. Exploding demand for GPUs has turned Nvidia into a household name and the most valuable publicly traded company in the world, with a $4.4 trillion market cap. Its broader chip strategy took a major turn in February, when Nvidia struck a multiyear deal with Meta that included the first large-scale deployment of Grace CPUs on their own, with plans to deploy Vera in 2027. Thousands of standalone Nvidia CPUs are also helping power supercomputers at the Texas Advanced Computing Center and Los Alamos National Lab, Nvidia told CNBC. Bank of America predicts the CPU market could more than double, from $27 billion in 2025 to $60 billion by 2030. In the latest quarter alone, Nvidia generated data center revenue of over $62 billion, up 75% from a year earlier. The CPU resurgence is driven by a fundamental change in compute needs, as mass AI adoption shifts from call-and-answer chatbots to task-oriented agentic apps. 
While GPUs are ideal for training and running AI models because they have thousands of tiny cores narrowly focused on performing many operations simultaneously, CPUs have a smaller number of powerful cores running sequential general-purpose tasks. Agentic AI systems require a lot of general compute power, as they move large amounts of data around for AI workflows, orchestrating across multiple agents. "These agentic systems are spawning off different agents working as a team," CEO Jensen Huang said on Nvidia's earnings call last month. "The number of tokens that are being generated has really, really gone exponential, and so we need to inference at a much higher speed." Huang mentioned agentic AI a dozen times on the call, and said "the best performance-per-watt is literally everything" as hardware needs shift. The company said in a press release that its standalone CPUs deliver significant performance-per-watt improvements in Meta's data centers. "This is new infrastructure: Greenfield expansion of racks of CPUs whose only job is to run agentic AI," said chip analyst Ben Bajarin of Creative Strategies. "Your software is going to sit elsewhere, your accelerators are just going to run tokens, but something has to sit in the middle and orchestrate that." Now, the once-sleepy central processor market is facing what The Futurum Group calls a "quiet supply crisis," predicting the CPU market growth rate could exceed GPU growth by 2028. Leading CPU providers AMD and Intel have warned customers in China of supply shortages, according to Reuters. CPU delivery lead times are up to six months, and prices have gone up more than 10%, according to the report. "Increases in demand are unprecedented over the last six to nine months," AMD's head of data center Forrest Norrod told CNBC in an interview. Norrod said he doesn't see "any prospect of this slowing down or stopping anytime soon," but that AMD anticipated the lift in demand and is "working diligently" to meet it. 
An Intel spokesperson told CNBC it expects inventory to hit its "lowest level" in the current quarter, "But we are addressing aggressively and expect supply improvement in Q2 through 2026." "Wafers don't grow on trees," Bajarin said. "It's not like we can just go harvest 10% more silicon wafers. There's a crunch across the entire industry. So unfortunately, CPU wafers are constrained." As for whether Nvidia has seen any CPU shipment delays, Harris told CNBC, "So far, so good." He said Nvidia's "robust supply chain" has been able to manage the demand, largely because many of its CPUs will be sold alongside GPUs in its rack-scale systems. Harris said Nvidia took a fundamentally different approach in design that makes its CPUs "best suited" for data processing and agentic AI workflows, compared to the more general-purpose CPUs made by industry leaders Intel and AMD. A big difference is in the number of cores in each CPU. AMD's EPYC line and Intel's Xeon high-performance server CPUs typically have 128 cores, compared to 72 cores in Nvidia's Grace CPU. "If you're a hyperscaler, you want to maximize the number of cores per CPU, and that essentially drives down the cost, the dollars per core. So that's one business model," Harris explained. Instead, Nvidia designed its CPU specifically to help its star GPUs run AI workloads. "Your single-threaded performance becomes much more important than your dollars per core because you're trying to make sure that that very expensive resource, being the GPU, isn't sitting there waiting," Harris said. Nvidia also bases its CPUs on Arm architecture, more typically used for chips in lower-power devices like smartphones, while Intel and AMD base their CPUs on traditional x86 architecture. Introduced by Intel nearly 50 years ago, x86 is the leading instruction set that has dominated PC and server processor designs since its inception. AMD's Norrod said Nvidia has, "Optimized their chips very well, I think, for feeding their GPUs. 
They're not well optimized for general-purpose applications." Indeed, Nvidia relies on more general-purpose CPUs for some of its products. For example, Nvidia pairs its GPUs with host CPUs from Intel or AMD in its HGX Rubin NVL8 platform that customers use as the building blocks for their own AI racks. Nvidia's foray into standalone CPUs comes as more of its customers are making their own Arm-based processors for their data centers. Amazon was the first major hyperscaler to launch an in-house CPU with the release of Graviton in 2018. Google's Axion processor, released in 2024, now handles some 30% of internal applications, according to the Futurum Group. Microsoft released its second-generation Cobalt processor in November. Arm is expected to launch its own in-house CPU this year, with Meta as an early customer. Mercury Research estimates the server CPU market share in the last quarter of 2025 was dominated by Intel at 60%, AMD at 24.3%, and Nvidia at 6.2%, with the remaining share split among in-house Arm-based CPUs from hyperscalers like Amazon, Microsoft and Google. In the face of insatiable need for compute, Nvidia typically takes a welcoming attitude toward competition. Keeping with that tradition, Nvidia opened up its NVLink networking technology to third-party licensing in May. The rest of 2025 saw a flurry of NVLink deals with Intel, Qualcomm, Fujitsu, and Arm, easing the path for third-party CPUs to integrate with Nvidia GPUs in AI servers. While these deals involve CPUs made on Arm or x86 architecture, Nvidia also now supports open instruction-set architecture RISC-V. Gaining traction in recent years, RISC-V allows companies to design custom processors without paying licensing fees to companies like Arm. In January, Nvidia struck a deal enabling U.S. chip company SiFive to use NVLink to connect its RISC-V chip designs with Nvidia GPUs. Harris said that no matter how the CPU demand gets filled, Nvidia's strategy remains "platform agnostic." 
"We are certainly building an Arm-based CPU, but we are so invested in the x86 community, we're so invested across the ecosystem, that we're going to have a strong position either way." Bajarin describes Nvidia's shifting strategy as "soup-to-nuts." "To compete, Nvidia's answer can't be you buy GPUs from us or nothing else," Bajarin said. Whether it's GPUs, CPUs or specialized hardware, "that's just the way the product has to expand to meet a diversity of workloads," he said.

[10]

Nvidia may soon unveil a brand-new AI chip. A closer look at the $20 billion bet to make it happen

On the day before Christmas, when few stocks were stirring, a pricey and pivotal transaction jolted the AI computing race: Nvidia was spending a reported $20 billion to license technology from chip startup Groq and hire key employees, including its CEO, who previously helped Google create what's become the leading alternative to Nvidia's AI processors. In the months since, Nvidia's offensive move has arguably flown under the radar, considering its competitive ramifications in the artificial intelligence gold rush. Perhaps it was lost in the Christmastime shuffle, or in the torrent of other deals and investments that have been flowing from the world's most valuable company over the past year. That should change next week, when Nvidia holds its annual GTC event, called the GPU Technology Conference in its early days, in San Jose, California. The four-day gathering is a big deal in AI. It takes place at the San Jose McEnery Convention Center, with Monday's keynote address from Nvidia CEO Jensen Huang held at the nearby SAP Center, where the NHL's San Jose Sharks play -- a venue befitting Jensen's leather jacket-wearing, rock star-like status. Throughout the week, Nvidia plans to share at least some of its vision for incorporating Groq's chip technology into its already-dominant AI computing ecosystem. "I've got some great ideas that I'd like to share with you at GTC," Jensen said on the chipmaker's late February earnings call. Those ideas figure to be among the notable developments at a conference that's been dubbed the "Super Bowl of AI." Nvidia is also expected to update us on its roadmap for its bread-and-butter graphics processing units (GPUs), including its next-generation Vera Rubin family. The main reason for the Groq intrigue: Nvidia is likely to harness Groq's technology to build a brand-new chip targeting the daily use of AI models, a process known as inference, according to Wall Street analysts. 
Inference is becoming a larger and more competitive part of the AI computing picture. Plus, it's the source of revenue for Nvidia's data center customers. Nvidia's GPUs are the clear-cut performance leader in the training stage of AI computing, where the models are fed vast amounts of data to be prepared for real-world usage. Nvidia's dominance in training fueled its meteoric ascent in recent years. The inference market, however, is much more crowded, as AI adoption goes mainstream and customers seek out cost-effective ways to meet the booming demand. Companies are essentially trying to get their hands on whatever kind of chips they can. Advanced Micro Devices, the distant No. 2 maker of GPUs, is finding some traction in inference, recently signing up Meta Platforms as a customer in a splashy partnership announcement. Meanwhile, the custom chips initiatives at large tech companies, including Meta, are generally seen as targeting the inference market. To be sure, Google's in-house Tensor Processing Units (TPUs) are formidable challengers in both training and inference, and the newfound success of Google's Gemini chatbot -- built on TPUs -- has elevated their reputation as Nvidia's biggest threat. Google co-designs TPUs with Broadcom. Amazon has also touted its in-house Trainium chip's capabilities in both tasks. Anthropic, the AI startup behind the Claude model, uses Trainium -- though, in a reflection of the hunt for any-and-all-kinds of computing, Anthropic is also using TPUs and inked a deal with Nvidia in the fall. Another competitor to know: Cerebras, an AI startup preparing for an initial public offering. For the first time, Oracle co-CEO Clay Magouyrk earlier this week name-dropped Cerebras on its earnings call. Nvidia is no slouch in inference. While perhaps a bit outdated, Nvidia in 2024 disclosed that about 40% of its revenue was from inference. 
At last year's GTC, Jensen told analysts that "the vast majority of the world's inference is on Nvidia today." And, on Nvidia's most recent earnings call in late February, finance chief Colette Kress highlighted that industry publication SemiAnalysis recently "declared Nvidia inference king," noting that its current generation Grace Blackwell GPUs offer massive performance improvements over its predecessor Hopper.

Where Groq fits

Nvidia evidently saw an opportunity to improve what it brings to the table on inference, otherwise it wouldn't have shelled out a reported $20 billion for Groq's technology and talent. Nvidia didn't outright acquire the entire Groq company, perhaps to avoid antitrust scrutiny. The licensing deal is billed as non-exclusive, and Groq continues to operate an inference cloud service running on its specialized chips (also, in case there was any confusion, the company has no ties to the other Grok, Elon Musk's AI chatbot). Some important people jumped to Nvidia in the deal, though. The most notable addition is Groq's founder and now-ex CEO, Jonathan Ross. Before starting Groq in 2016, Ross was part of the Google team that developed the original TPU. Ross now holds the title of chief software architect at Nvidia. Groq developed and brought to market what it called an inference-focused LPU, short for Language Processing Unit. In various podcast interviews over the years, Ross has made it clear that Groq didn't bother trying to compete with Nvidia on training. Instead, he has said, Groq saw inference computing as the place where the startup could innovate and carve out a lane. So, Groq set out to develop a chip for running AI models that prioritizes speed and efficiency at a lower cost. A main reason why Nvidia's GPUs are so good at training AI models is their ability to perform a massive amount of calculations at the same time, often called parallel processing. 
Keeping it simple, AI models work to identify patterns within a mountain of training data, and that requires doing a lot of math simultaneously -- hence why a GPU is superior for AI training to a traditional computer processor (CPU), which executes tasks sequentially rather than in parallel. Now, another important trait of GPUs is their flexibility, driven in large part by Nvidia's CUDA software program. Jensen has said that CUDA -- short for compute unified device architecture -- enables GPUs to perform across all different types of workloads, including inference. When an AI model is deployed for inference and receives a user's prompt, the model basically refers back to all those learned patterns to determine what the most appropriate response should be, piece by piece (or token by token, in AI parlance). It is making the decision based on the probabilities in its training data. But fundamentally, there is a difference between training and inference computing, and the chip attributes that are most desirable for each vary. Groq designed its chips to be really good at inference, and in particular, real-time tasks where speed is of the utmost importance. Groq's LPUs use a type of short-term memory, known as SRAM, that is located directly on the chip's engine, a driving force behind its speediness. GPUs, on the other hand, use a type of short-term memory called high-bandwidth memory or HBM, which is located right next to the GPU's engine, not directly on it. The AI boom has created a supply crunch for HBM and set memory prices soaring. "GPUs are really great at training models. When somebody wants to train a model, I'm just like, 'Just use GPUs. Don't talk to us,'" Ross said in a podcast interview with wealth advisory Lumida in late 2023. "But the big difference is, when you're running one of these models -- not training them, running them after they've already been made -- you can't produce the 100th word until you've produced the 99th," he added. 
"So, there's a sequential component to them that you just simply can't get out of a GPU. ... It's how quickly you complete the computation, not just how many computations you can complete in parallel. And we do the computations much faster." However, Ross has said he believes Nvidia's bread-and-butter GPUs and Groq's technology can complement each other. He made that clear in a separate interview on The Capital Markets podcast, dated February 2025, still many months before he left Groq for Nvidia. "We're actually so crazy fast compared to GPUs that we've actually experimented a little bit with taking some portions of the model and running it on our LPUs and letting the rest run on GPU. And it actually speeds up and makes the GPU more economical. So, since people already have a bunch of GPUs they've deployed, one use case we've contemplated is selling some of our LPUs to, sort of, nitro boost those GPUs." That comment really jumped out, as we came across this year-old interview, searching for additional insight into Groq and Ross. Hearing Ross say that long before he joined Nvidia made us even more intrigued to hear Jensen's vision next week. There are a lot of possibilities for Groq-infused Nvidia hardware. Indeed, as AI advances, it makes sense that Nvidia would branch out into more specialized chips. History suggests that the more advanced a certain technology gets, the more specialization there is. Back on Nvidia's February earnings call, Jensen indicated that he's looking at Groq in a similar vein to Mellanox, the networking equipment provider that Nvidia acquired six years ago. "What we'll do is we'll extend our architecture with Groq as an accelerator in very much the ways that we extended Nvidia's architecture with Mellanox," Jensen said. That acquisition has aged like fine wine because Nvidia's networking prowess is a crucial ingredient to its success in the AI boom, transforming it into a one-stop shop for AI computing rather than a simple chip designer. 
In its fiscal 2026 fourth quarter alone, Nvidia's networking business generated around $11 billion in revenue -- roughly the same as AMD's overall revenue. Nvidia's better-than-expected companywide revenue in Q4 surged 73% year over year to $68.13 billion. Less than three years ago, Nvidia's networking revenue was pacing for roughly $10 billion for an entire 12-month period. Now, it's $11 billion in just three months, exploding alongside its GPU revenue, too. Investors can only hope the Groq transaction ends up being anywhere near as successful as Mellanox. The journey to finding out starts next week. (Jim Cramer's Charitable Trust is long NVDA, GOOGL, META, AVGO and AMZN.)

[11]

CEO Jensen Huang Wants You to Know Nvidia Is More Than Just an AI Chipmaker

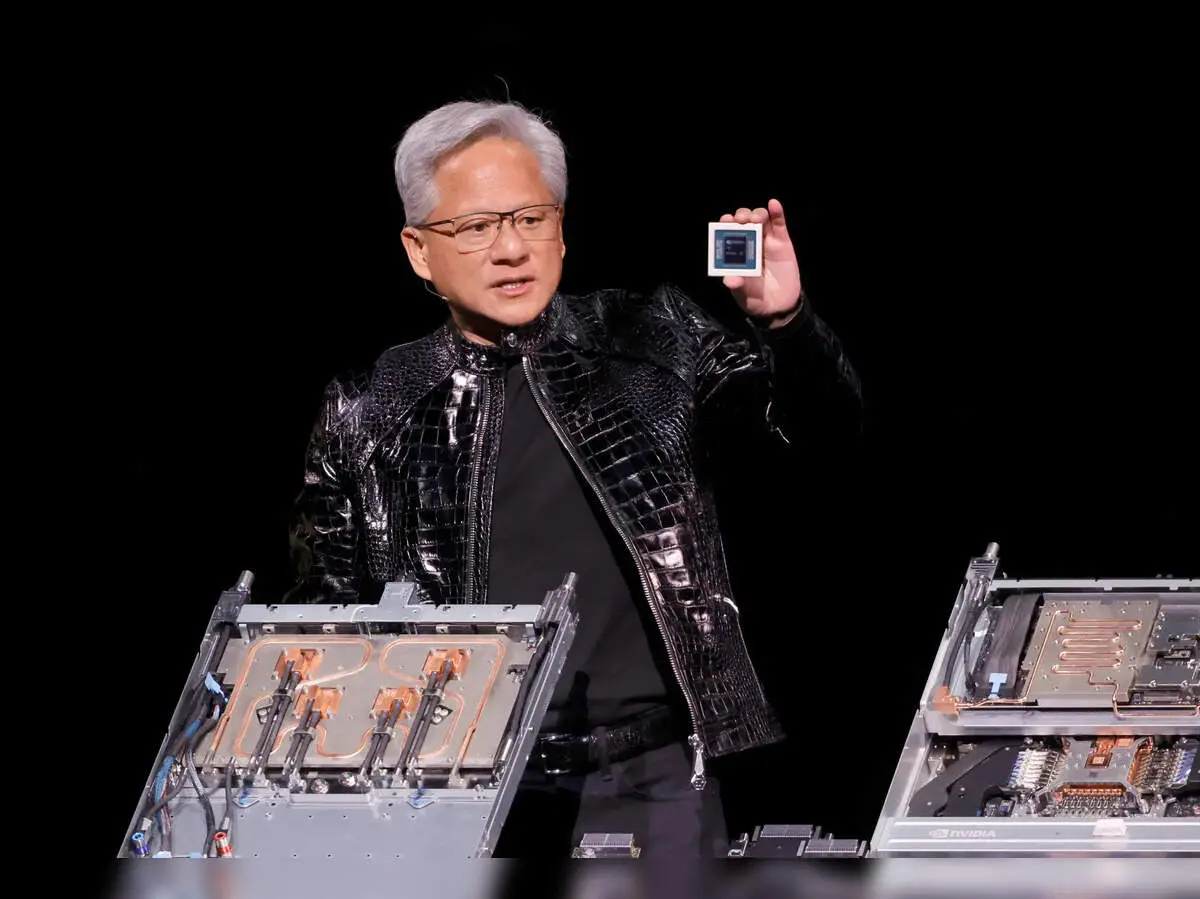

Nvidia CEO Jensen Huang wants you to know the chipmaker at the heart of the AI boom is far more than just a semiconductor company, and that its growth story is only getting started. "We're going to accelerate everybody," Huang said at the company's GPU Technology Conference keynote Monday, where he unveiled the company's latest chips, software, and more. At the event, Huang highlighted Nvidia's (NVDA) highly anticipated, next-generation Vera Rubin platform, which he called a "revolutionary" product. Together, Vera Rubin and its predecessor Blackwell could drive $1 trillion in revenues through 2027, Huang said. The chipmaker had previously projected $500 billion of combined revenue for Blackwell and Rubin through the end of 2026. The CEO unveiled the company's Feynman platform slated for 2028 as well. Huang said Feynman is set to include a new and even more powerful chip called "Rosa," named after British scientist Rosalind Franklin, perhaps best known for her contributions to the discovery of the structure of DNA. Huang also said Nvidia is rolling out new and updated coding libraries that he called the "crown jewels" of the company, and unveiled an open agent framework called NemoClaw that he said would expand the company's reach and influence well beyond its hardware. The CEO told attendees at the event that demand for Nvidia's latest offerings is "off the charts," and that every major player in the space is building on its technology. "We're the only platform in the world today that runs every single domain of AI across every single one of these AI models," Huang said, and gave shoutouts to a number of the company's major partners and customers, including Alphabet's (GOOGL) Google, Amazon (AMZN), Palantir (PLTR), Dell (DELL), IBM (IBM), Oracle (ORCL), CoreWeave (CRWV), and others. "We are now a computing platform that runs all of AI," Huang said. 
Shares of Nvidia rose close to 2% Monday as Huang gave his keynote address. The stock has lingered in negative territory for the year amid broader worries about the AI trade. Still, the shares have added more than half their value in the last 12 months.

[12]

How to Watch Jensen Huang's Keynote at the Nvidia GTC 2026

* Jensen Huang's keynote starts March 16 at 11AM PT
* Livestream will be available on Nvidia's official website
* The AI event is expected to host over 30,000 attendees

Nvidia's annual GPU Technology Conference (GTC) has become one of the most closely watched events in the artificial intelligence (AI) industry. Over the past few years, major AI announcements, including new GPUs, robotics platforms, and data centre technologies, have debuted on the GTC stage. The 2026 edition is expected to follow the same trend, with Nvidia CEO Jensen Huang set to outline the company's next wave of AI infrastructure and computing platforms. As AI chips, models, and agentic systems dominate industry conversations, Huang's keynote is likely to attract developers, investors, and tech enthusiasts worldwide.

When Is Nvidia GTC 2026?

The Nvidia GTC 2026 event will take place from March 16 to March 19 in San Jose, California, bringing together developers, researchers, and companies working with foundation models, accelerated computing, and robotics. The conference typically features hundreds of technical sessions, workshops, and product announcements. Nvidia says the event includes more than 500 sessions and over 30,000 attendees from across the AI ecosystem. The most anticipated moment, however, is the opening keynote delivered by Nvidia founder and CEO Jensen Huang.

How to Watch Jensen Huang's Nvidia GTC 2026 Keynote

Jensen Huang's keynote is scheduled for Monday, March 16, at 11AM PT at the SAP Center in San Jose. For viewers in India, the keynote will begin at 11:30PM IST on March 16. For those watching online, Nvidia will livestream the keynote on its official GTC website and YouTube channel. The stream is free and does not require registration.

What to Expect From Nvidia's GTC 2026 Keynote

Nvidia has indicated that the keynote will cover advancements across its entire AI computing stack, including chips, software platforms, robotics, and data centre infrastructure. The company typically uses GTC to outline its AI compute roadmap over the next several years. One of the most closely watched segments will likely involve new AI chips and inference hardware. There is a possibility that Nvidia might introduce or preview next-generation processors designed specifically for AI inference workloads in complex and agentic AI systems. Recently, Nvidia has been expanding its vision of AI factories, the large-scale data centre systems designed to train and run AI models. Huang is expected to discuss new infrastructure and networking solutions that enable these AI-driven computing environments. The tech giant has increasingly focused on robotics and physical AI, which could be part of the keynote announcements.

[13]

Nvidia CEO Huang starts keynote at AI megaconference GTC

Nvidia CEO Jensen Huang has started his keynote at the chipmaker's annual developer conference in San Jose, California, where he is set to detail its hardware and software plans. Shares of Nvidia - the world's most valuable listed company with a market capitalization of more than $4.3 trillion - were up 2.4% on Monday as investors awaited the announcements. At a hockey arena with a capacity of more than 18,000, Huang is expected to lay out how the top AI chipmaker plans to adapt to a rapidly changing AI landscape at the four-day conference. He started the keynote by making the argument that part of Nvidia's competitive advantage was its CUDA chip programming software, which some analysts regard as its strongest shield. "The installed base is what attracts developers who then create (the) new algorithms that achieve the breakthrough" technologies, Huang said. "We are in every cloud. We're in every computer company. We serve just about every single industry." The keynote is also likely to include details on a next-generation AI chip called Feynman, named after late American physicist Richard Feynman. Huang is also likely to talk about data centers, Nvidia's chip programming software CUDA, digital assistants known as AI agents and physical AI such as robots. This year's event is even more crucial as investors will seek assurance that Nvidia's strategy of plowing back its profits into the AI ecosystem is paying off. Another focus is likely to be Groq, a chip startup from which Nvidia licensed technology for $17 billion in December. Groq specializes in fast and cheap "inference" computing work, in which an AI model takes what it has already learned and uses it to answer a question or make a prediction in real time. 
After spending hundreds of billions of dollars in recent years on chips for training their AI models, companies such as OpenAI, Anthropic and Facebook owner Meta Platforms are shifting toward serving hundreds of millions of users who are tapping those AI systems. Nvidia faces greater competition in the market for chips for inference-computing work than it does for AI-training chips, and analysts expect the company to shore up its defenses against rivals looking to regain market share they lost to Nvidia in recent years. Analysts also expect Nvidia to elaborate on why it invested $2 billion each in Lumentum and Coherent, both of which make lasers for sending information between chips in the form of beams of light. Despite that increased competition, some of which is coming from Nvidia's own customers designing their own chips, Nvidia remains central to the global AI ecosystem. Nations such as Saudi Arabia are building custom AI systems for their own populations using its chips, and it is one of the only large U.S. companies that continues to release open-source AI software, a growing field of competition between the U.S. and China.

[14]

NVIDIA May Finally Abandon Its "One GPU Does Everything" Mantra at GTC 2026, and Here's What to Expect

We are heading towards GTC 2026, one of the most important events within the AI world, and this year, we are expecting a massive shift in how computing is perceived. The race for AI infrastructure has evolved significantly over the past few years, as evolving compute requirements have forced companies like NVIDIA and AMD to innovate in what they offer. Since 2022, we have seen training workloads gain massive popularity, which Hopper and Blackwell capitalized on. Now, moving into 2026, agentic workloads are the next area to focus on for compute providers, which is why the upcoming GTC announcements from NVIDIA will be around them. You will see 'agentic performance' discussed a lot, and Team Green has strategically positioned itself. We have maintained a lead in covering NVIDIA's Groq acquisition, being among the first to discuss how important the agreement is for the world of compute. Heading into GTC 2026, NVIDIA is expected to materialize its collaboration with Groq into an actual end-product, and one of the prospects we are looking to witness is a blend of Groq's LPU units with NVIDIA's Vera Rubin systems. It is expected that, with Vera Rubin, Team Green will offer a hybrid compute tray configuration featuring LPU units, allowing NVIDIA to capitalize on disaggregated inference. There are various speculations about how LPUs would be integrated into Rubin systems, but we did discuss the possibility of LPU units available in 64, 128, and 256-unit configurations within an individual compute tray, linked to Rubin GPUs via NVLink Fusion. Jensen has already said the Groq agreement will play a similar role to Mellanox, indicating that LPUs will help NVIDIA complement workload stages, such as decode. Rubin CPX has already allowed NVIDIA to cover prefill workloads, meaning the company has covered two major stages of a traditional inference request. 
There are a lot of specifics to discuss about how LPUs will play a role in Rubin's architecture, but essentially, you are looking at a 'platform' shift with the upcoming architecture, where NVIDIA offers various configurations targeting specific workloads. This is why we say the company's "GPU-only" approach has become a bit outdated, especially given how AI workloads are evolving. Since we have already seen Vera Rubin under full production, it is expected that NVIDIA will provide us with a deep dive into Feynman, the next generation of the AI architecture. Feynman was already discussed a bit with GTC 2025, but based on what we know about the lineup, one of the more significant aspects is that NVIDIA will actually depend on Moore's Law this time to help them scale compute capabilities. It is claimed that Feynman will feature TSMC's A16 process, and NVIDIA is expected to be an exclusive customer for the node, given how limited its use case would be for other customer segments. Jensen has already talked about showcasing chips at GTC 2026 that were "never seen before", and with Feynman, it is expected to be a major revamp in terms of the architecture design. The chip lineup is reported to feature TSMC's hybrid bonding technology, likely using SoIC or EMIB, and one report also suggested that Feynman would use Groq's LPUs in its true nature. We could see talks of LPUs being stacked onto the Feynman compute die, since it makes sense given how A16 provides space for front-side LPU connections. There were rumors that NVIDIA is also considering Intel's 14A process for its Feynman chips, but this hasn't been confirmed yet. The above information makes one thing clear: Feynman will also mark a major shift in how NVIDIA approaches microarchitecture, which is why rack-scale solutions across generations will evolve at a similar pace. This confirms our narrative about GTC 2026 marking a 'major' shift in how computing is done. 
NVIDIA isn't exactly done with Vera Rubin yet, as there is a lot to discuss as well. Back at CES 2026, Team Green showcased the NVL72 rack, featuring the 72-chip configuration, but it's important to note that this is, for now, a baseline offering. NVIDIA is also looking to scale up to NVL144 and NVL576, but reports suggest we might not see the former, given the compute requirements NVIDIA has seen from its customers. We also saw NVIDIA unveil Rubin CPX, a context-focused rack-scale option for prefill, but there haven't been many discussions about customer deployments. Among all, the information around NVL576 is going to be one of the most interesting to watch, given that NVIDIA will shift to the new "Kyber" generation. NVIDIA will switch to stacking compute trays, mounted vertically, similar to books, called vertical blades with Kyber, along with an 800 VDC facility-to-rack power-delivery model. Based on what NVIDIA has told us in the past, NVL576 will be part of Rubin Ultra GPUs, where we are looking at an overhaul of chiplet configurations, which you can see in our previous coverage here. NVL576 also opens a new front in how interconnects are viewed, which is why we might see a shift away from copper as well. With NVIDIA's CPO (Co-Packaged Optics) switches, the idea is to overcome the thermal constraints of a 576-GPU configuration using copper. At the same time, massive upgrades in throughput, switching capacity, and latency are expected as we switch to CPO. We'll discuss this approach in detail once NVIDIA provides more details at GTC, but for now, expect the company to offer the world a mega-rack solution based on optics. I wouldn't be surprised if there's an NVL1,152 showcase at GTC 2026, but we'll have to wait and see how the racks evolve. There's a lot of spotlight to be taken by Rubin and Rubin Ultra, before Feynman comes, which is why Jensen will talk a lot about it. 
We are also looking at major CPU-focused announcements, including a collaboration with Intel. NVIDIA's GTC 2026 starts March 16, with Jensen's keynote commencing at 11:00 AM PT. As always, we'll be the first to talk about what's being unveiled at the event, so make sure to keep a close eye on the website.

[15]

Nvidia's GTC 2026 Begins Monday -- AI Factories, Next-Gen Chips And What Analysts Expect From Jensen Huang - NVIDIA (NASDAQ:NVDA)

The annual developer conference of NVIDIA Corporation (NASDAQ:NVDA), known as NVIDIA GTC (GPU Technology Conference), is set to commence on Monday. The conference, which will be held between March 16-19, has become a significant event for AI industry enthusiasts and will take place at the SAP Center, the home ground of the San Jose Sharks. The event is expected to draw a whopping 30,000 attendees from 190 countries. The conference will span across ten venues in downtown and will also be streamed free on nvidia.com for virtual attendees. This year's GTC will cover a wide range of topics, including physical AI, AI factories, agentic AI, and inference. NVIDIA's founder and CEO, Jensen Huang's keynote is expected to touch upon the full stack: chips, software, models, and applications. With over 700 sessions planned, the conference promises to provide comprehensive details on the latest developments in the AI industry.
Here's What To Expect
Pregame Show: The pregame show will feature the CEOs of Perplexity AI, LangChain, Mistral AI, Skild AI, and OpenEvidence three hours before Jensen Huang takes the stage.
Open Vs Closed Models: Harrison Chase and leaders from Andreessen Horowitz, Allen Institute for AI, Cursor, and Thinking Machines Lab, discuss with Huang how open models compare with frontier closed models and what it means for developers building on them.
Physical AI Systems: Experts to demonstrate practical, end-to-end workflows for physical AI development using NVIDIA Isaac and NVIDIA Omniverse technologies.
AI Factories: Discussion on designing and scaling enterprise AI factories for LLMs, agentic AI, physical AI, and HPC, highlighting the infrastructure, software stack, and NVIDIA's NV-Certified systems and reference architectures that help partners deploy AI systems efficiently and consistently.
What Do Analysts Say?
Economic Framing. Rubin Power Demand. Beyond GPU And Racks.
New Chip Tied To Groq: A Truist Financial analyst, William Stein, said the GTC could modestly boost the stock, though not in a "forceful" way, with investors already optimistic about its roadmap from Blackwell Ultra to the Feynman architecture, and a potential new chip tied to its Groq acquisition expected to be unveiled.
Huang Outlines Five-Layer AI Stack: Huang wrote that AI development relies on five "layers" -- energy, chips, infrastructure, models, and applications -- all of which must scale together for widespread adoption, with Nvidia positioned at the center linking much of this ecosystem.
These developments underscore NVIDIA's commitment to advancing AI technology and its strategic investments in the sector. The GTC conference is expected to further highlight the company's vision and future plans for AI.
Disclaimer: This content was partially produced with the help of AI tools and was reviewed and published by a Benzinga editor.

[16]

What To Expect From Nvidia's GTC -- the So-Called 'Woodstock of AI'

The week ahead could be a big one for AI chip leader Nvidia -- and the AI trade. Nvidia (NVDA), the world's most valuable company, is set to kick off its weeklong GPU Technology Conference Monday. The company is widely expected to unveil new products and give more details on its roadmap at the event, and CEO Jensen Huang is due to give a keynote address on Monday at 2 p.m. ET. That could mean more details about Nvidia's Rubin Ultra line of chips, anticipated next year, or the Feynman GPU expected for 2028. Nvidia is also seen unveiling a platform for AI agents called "NemoClaw," according to a Wired report. And analysts have said they'll be on the lookout for developments in Nvidia's software related to robotics and physical-world applications of AI. Last year, Nvidia announced its Blackwell Ultra chip and Vera Rubin AI computing platform at the event, which has grown in popularity in recent years along with Nvidia's rise to prominence, winning it the monikers the "Woodstock of AI" and "Super Bowl of the Tech Industry." However, some analysts are warning the chipmaker could face a particularly high bar to impress investors this year given weak sentiment around many previously high-flying AI stocks and uncertainty around the trajectory of the technology. "It is hard to see NVDA being able to provide thesis-altering commentary that creates a breakout for the stock," UBS analysts said in a note to clients earlier this week. Wall Street analysts remain overwhelmingly bullish on Nvidia stock. Of the 13 analysts with current ratings tracked by Visible Alpha, 12 have said they consider it a "buy" compared to just one neutral rating. Their mean target around $264 implies 45% upside from Friday's close. Shares of Nvidia were little changed Friday, leaving them down about 3% for 2026 so far. They've added about half their value from the same time a year ago.

[17]

Nvidia CEO to showcase next-gen AI chip Feynman at developer megaconference GTC

Nvidia CEO Jensen Huang will unveil the new AI chip Feynman at the company's developer conference. The event will also focus on Groq technology for inference computing. Nvidia faces growing competition in the AI chip market. Investors will look for assurance that Nvidia's investments are paying off. The company is also expected to discuss data centers and AI agents.
Nvidia CEO Jensen Huang is set to detail the company's hardware and software plans to a large crowd in San Jose, California, at its annual developer conference on Monday. Shares of the company were up more than 2% in morning trading. During a keynote address at a hockey arena with a capacity of more than 18,000, Huang is expected to lay out how the top AI chipmaker plans to adapt to a rapidly changing AI landscape. Nvidia, the world's most valuable listed company, with a market capitalization of more than $4.3 trillion, is likely to detail a next-generation AI chip called Feynman, named after American physicist Richard Feynman, at the four-day conference. Huang is also likely to talk about data centers, Nvidia's chip programming software CUDA, digital assistants known as AI agents and physical AI such as robots. This year's event is even more crucial as investors will seek assurance that Nvidia's strategy of plowing back its profits into the AI ecosystem is paying off. Another focus is likely to be Groq, a chip startup from which Nvidia licensed technology for $17 billion in December. Groq specializes in fast and cheap "inference" computing work, in which an AI model takes what it has already learned and uses it to answer a question or make a prediction in real time. After spending hundreds of billions of dollars in recent years on chips for training their AI models, companies such as OpenAI, Anthropic and Facebook owner Meta Platforms are shifting toward serving hundreds of millions of users who are tapping those AI systems.
Nvidia faces greater competition in the market for chips for inference-computing work than it does for AI-training chips, and analysts expect the company to shore up its defenses against rivals looking to regain market share they lost to Nvidia in recent years. Analysts also expect Nvidia to elaborate on why it invested $2 billion each in Lumentum and Coherent, both of which make lasers for sending information between chips in the form of beams of light. Despite that increased competition, some of which is coming from Nvidia's own customers designing their own chips, Nvidia remains central to the global AI ecosystem. Nations such as Saudi Arabia are building custom AI systems for their own populations using its chips, and it is one of the only large U.S. companies that continues to release open-source AI software, a growing field of competition between the U.S. and China. Huang's keynote is set for 11 a.m. Pacific Time (2 p.m. Eastern Time/1800 GMT).

[18]

Intel To Show Up at NVIDIA's GTC at the Perfect Time, as Agentic AI Turns CPUs Into the New Bottleneck

Intel is coming to NVIDIA's GTC mega-event, and this time not just as a guest: the company will play an important role in shaping the future of NVIDIA's compute capabilities. For those unaware, this year's GTC is expected to feature several major announcements that will influence NVIDIA and its supply chain partners, particularly Intel, which will also get the spotlight. NVIDIA and Intel entered into a $5 billion agreement a few months ago, under which the two companies agreed to work together on x86 CPUs for consumer and enterprise products. According to Intel's recent announcement, GTC will give a rundown of how the partnership with NVIDIA will pan out, and it is expected that enterprise CPUs will be a special focus. While Intel hasn't specifically said what it will unveil at GTC, the broader idea is to help NVIDIA and its customers overcome the CPU bottleneck, which the rise of agentic workloads has recently exacerbated. Hyperscalers like Meta, alongside AI labs like OpenAI, have recently started to enter into "CPU-only" agreements with NVIDIA, an indicator that the importance of CPUs within rack infrastructure has grown tremendously, which is why the NVIDIA-Intel collaboration is more important than ever. We anticipate Intel will unveil an arrangement in which Xeon CPUs will be part of NVIDIA's AI racks, and we already know this is happening, given that Intel is part of NVIDIA's "NVLink Fusion" ecosystem. The more interesting question here is which generation of Xeon processors will be part of this collaboration. If it is the latest generation, the 6th-generation CPUs in the Sierra Forest and Granite Rapids lineup would be a part of NVIDIA's AI racks, but for now, this isn't certain.
NVIDIA and Intel are also working on a joint x86 laptop-focused SoC featuring RTX GPU chiplets, but we don't expect it to be showcased at GTC, given that the event is more inclined toward enterprise launches. Since NVIDIA is also working on consumer-focused ARM laptop chips, such as the N1/N1X, the joint SoC with Intel would come after them, so it's quite a few years away. NVIDIA's GTC event commences on March 16, where we will see Jensen unveil everything around the future of the AI race.

[19]

Jensen Huang May Announce New Nvidia-Groq Chip at the GTC Conference