Nvidia claims 1 million times better path tracing is coming as AI reshapes gaming GPU performance

7 Sources

[1]

Nvidia claims 1 million times better path tracing performance is coming in future gaming GPUs -- says current GPUs are already 10,000x faster than Pascal

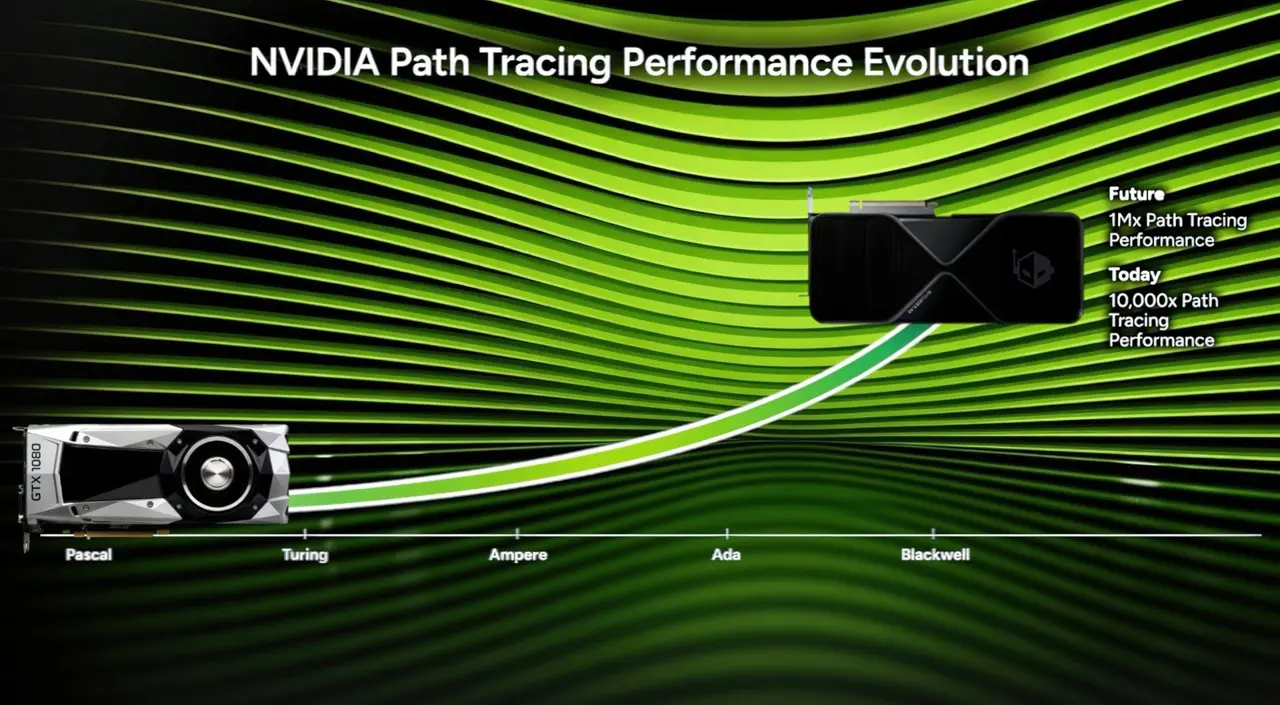

Despite increasing competition from Intel and AMD, Nvidia's RTX lineup remains the best hardware for ray tracing and path tracing in games. Ever since Turing (the RTX 20 series), the company has made significant strides -- mostly by leveraging AI and neural rendering -- to increase graphical fidelity without compromising performance. Now, at GDC 2026, it's claiming that the future holds an even more impressive milestone. During the presentation, John Spitzer (Dev & Performance VP) presented a line graph that plotted the progress of ray tracing and path tracing performance in Nvidia's gaming GPUs. At the far-left corner, we see Pascal, aka the legendary GTX 10 series, which came out a decade ago. Comparing that to today's Blackwell GPUs (RTX 50), the path tracing performance has apparently improved by 10,000 times already. That's largely due to a focus on hardware-accelerated neural rendering enabled by dedicated RT and Tensor cores that handle machine learning inside Nvidia GPUs. Features like DLSS are entirely reliant on AI; the ability to piece together frame data more accurately in both upscaling and frame-gen situations is only possible due to models trained on Nvidia's supercomputers. Spitzer says that Moore's Law is dead and that silicon advancements alone wouldn't be enough to generate photorealistic visuals in his lifetime. Nvidia wants to achieve a level of graphical fidelity that's indistinguishable from real life, but that would require a "hundred or thousand times more computational power" -- this is where AI becomes the catalyst. In the future, AI advances will take gaming GPUs to 1,000,000 times better path tracing performance when compared to the GTX 10 series. Newer, faster, more efficient hardware blocks will basically make neural rendering the default going forward, as already claimed by Nvidia CEO Jensen Huang. Games would "look like a film" while still running smoothly due to multiple frames being interpolated in real-time by AI.
None of this is a revelation -- of course, things are supposed to get better over time -- but the wait might not be too long. The next-gen Rubin GPUs from Nvidia, slated to launch sometime between 2027 and 2028, could usher in this 1-million-times better path tracing reality. The list of games supporting path tracing is already growing at a rapid pace, with Resident Evil Requiem being the latest addition. As such, the presentation also included some bits about new path tracing technologies, such as ReSTIR (Reservoir-based Spatiotemporal Importance Resampling) and RTX Mega Geometry. To showcase this, Nvidia brought a tech demo for Witcher 4 with over two trillion triangles in the scene, depicting realistic foliage and lighting simultaneously.

[2]

Blackwell's path tracing is 10,000x better than Pascal, claims Nvidia...and future GPUs will be 1,000,000x better

* GTX 1080 (Pascal) nears its 10th birthday, and Nvidia is comparing its performance with its newer Blackwell GPUs.
* Nvidia says its Blackwell GPUs achieve 10,000x path tracing vs Pascal, and wants to achieve 1,000,000x using AI.
* Nvidia believes that using AI to dodge Moore's Law will enable film-like visuals in the future.

I don't mean to make you feel old, but the Nvidia GTX 1080 based on Pascal is approaching its tenth birthday. Back in May 2016, gamers were putting the new card in their desktop PCs and pushing the card to its limits using games like Fallout 4 and GTA 5. GPU tech has come a long way since then, and now people are running GTA 5 on their integrated graphics chips. Maybe not the best experience, but it does work, apparently. But just how much have Nvidia's GPUs improved in almost ten years? Well, the company has claimed that Blackwell GPUs perform path tracing 10,000x better than Pascal. Not only that, but it believes we'll see a 1,000,000x improvement in the future thanks to AI tools.

Nvidia wants to achieve 1,000,000x better path tracing performance in future GPUs

It claims it has already come a long way

As spotted by Tom's Hardware, Nvidia's Dev & Performance VP John Spitzer took to the stage during GDC 2026 to talk about the future of game development. As part of his talk, he showed off a chart (shown at 4:17 in the above video) which claimed that the Blackwell GPUs we have today have "10,000x path tracing performance" over the Pascal GTX 10 series that was released back in 2016. Not only that, but the chart also stated that Nvidia hopes to achieve "1Mx path tracing performance" in the future. Spitzer claims that this advancement would be made possible due to AI.
Claiming that "Moore's Law is dead," Spitzer says Nvidia won't rely on hardware advancements to squeeze more power out of its GPUs; instead, Nvidia will put the burden on AI tools. This, Spitzer hopes, will allow for games that "look like a film" without suffering a huge performance hit, all because AI will be working in the background to make a dependable framerate with no loss in quality possible. Is it wishful thinking? It's really hard to say at this point. However, if any hardware company is worthy of standing on stage and making claims as to how AI will enhance our gaming in the future, it's Nvidia. Still, this claim puts the discourse surrounding using AI tools with GPUs back into the spotlight; while some would argue that upscaling is democratizing high-end gaming for the 99%, others believe we're not being honest with ourselves about ray tracing.

[3]

Nvidia says PC gaming will 'look like a film' -- how GPUs will get to 1 million times better path tracing, and why it's closer than you think

In tech, a 2x improvement is great and 10x is generational. So for Nvidia to claim its future gaming GPUs will be 1,000,000x better at path tracing sounds like pure science fiction. But that's exactly what the company said at GDC 2026, and what's even crazier is that I think it's closer than any of us think. Because today, Nvidia has confirmed you will see the "future of real-time rendering" in CEO Jensen Huang's GTC 2026 keynote -- I think a sneak peek is possible. The obstacle to this at the moment is that current GPU hardware is hitting a physical wall where we can't just throw more electricity at the problem. And the fix? Well, it's Nvidia's bread and butter: AI.

What is Path Tracing?

This has been the new hotness in PC gaming. I tested it in Resident Evil: Requiem, and it's rather incredible. But what it is and how to get there is an impossible-level challenge if using the old ways. Put simply, it's physical accuracy. It's not just a reflection in a puddle, it's how light from a neon sign bounces off a wet pavement, hits a character's chrome jacket and subtly tints their skin red. It's not just the ray traced shiny surfaces of old; path tracing calculates how light interacts with literally everything in a scene in a realistic way. The end result is probably going to be subtle in some games, but in others (like Requiem) where the finer details really matter in building up the fear, it can be a game changer.

The death of brute force

Like I said, it's an impossible challenge through raw hardware power. If you had your GPU just brute force full path tracing, your PC would probably melt before it rendered a single frame of Cyberpunk 2077. But as you may have seen reading my Requiem test, Nvidia bridges that gap with DLSS 4 -- AI trickery with Ray Reconstruction. Basically, instead of making the GPU seriously sweat trying to track a single ping-pong ball thrown in a room filled with a billion mirrors, these neural rendering technologies bring in an expert who has seen billions of balls thrown into that maze. They can just look at where you threw it and draw exactly where it's going to land. By offloading that demanding task to AI, path tracing becomes much more efficient to do, and then that 1-million-times jump starts to sound more possible.

Neural rendering is the whole thing

In our time speaking with Jensen Huang, it's clear that DLSS is just the start of AI's impact on PC gaming. The limits of how much more complex GPU hardware can get are starting to be reached, and neural rendering will be the thing that fills in the gap -- turning your graphics card into less of a calculator and more of an imagination machine. "In the future, it is very likely that we'll do more and more computation on fewer and fewer pixels. By doing so, the pixels that we compute are insanely beautiful, and then we use AI to infer what must be around it," Huang said. And the results in his words are "utterly shocking and incredible." He talks about it looking like "basically a photograph interacting with you at 500 frames per second." And this ties in with what John Spitzer, vice president of developer and performance, said when talking about the future of PC gaming graphics at GDC 2026. "We're still not where we want to be. We want the real-time images to look indistinguishable from reality. We want them to look like a film," Spitzer commented.

GTC 2026 is the starting pistol

And as these stars align around everything Nvidia is saying, that brings me back around to this "future of real-time rendering" promise for Huang's keynote today. To be clear, this is not going to mean new gaming GPUs.
We already know that much from the supply issues around the current RTX 50-series, as Nvidia's attention is being turned to building the picks and shovels for the AI gold race (or bubble...whichever way you look at it). But Team Green continues to march on in the gaming space, and this announcement should get PC players hyped. I don't think it'll be a finish line, it'll be a look at the next steps where we could go. After all, Vera Rubin is an architectural glimpse of what gains we may see in RTX 60-series, and that could fuel these big ideas.

[4]

Nvidia GTC live: Jen-Hsun lays out 'the future of real-time rendering' in today's keynote and potentially 'it's gonna blow your mind'

Okay, that's some bombastic headlining right there, but when one of the people behind the success of Nvidia's DLSS feature reckons a new AI innovation in gaming is "gonna blow your mind" I'm prepared to listen. The Nvidia GPU Technology Conference starts today, with Jen-Hsun Huang hitting the stage at 11am PDT (6pm GMT) for the opening keynote, and the company's GeForce social channels have been promising that we'll get a look at "the future of real-time rendering" during the presentation. Given that's coming via its GeForce gaming channels it's not unreasonable to think it's specifically referencing real-time rendering in PC games. Which is not something we'd normally expect to be coming out of GTC, which is traditionally very focused around general purpose GPU (GPGPU) use, and obviously most recently around AI. But combine those tweets with something Bryan Catanzaro, VP of Applied Deep Learning Research -- and someone who's worked on DLSS for the past ten years -- said at GDC last week, and you can colour me excited to hear what's going on. During a GDC panel discussion titled: AI Trends of Today and Opportunities for Tomorrow: Ask Me Anything the question was asked, "What has surprised you in terms of innovation with AI in games in the past one to three months?" Having spoken a little about DLSS and Frame Generation in response, he goes on to talk about generative AI rendering being the "most important update to the way that graphics are rendered in at least the last decade." "Yeah, but to your question of, what have you seen in the past month?" He continues. "I can't tell you exactly what I've seen, but you'll find out very soon, and it's going to blow your mind." So, er... join me as we have our minds collectively blown, I guess.

[5]

GeForce RTX path tracing performance will be a million times faster in the future

TL;DR: NVIDIA's latest GeForce RTX 50 Series GPUs use advanced AI-driven technologies like DLSS 4.5 and ReSTIR to achieve 10,000 times faster path-tracing performance than Pascal-era cards, enabling realistic 1440p and 4K visuals, with future hardware aiming for a million-fold improvement in real-time ray tracing. Saying one thing is a million times more something than another thing is often hyperbole, but during a recent GDC 2026 presentation, NVIDIA's John Spitzer said exactly that when it comes to path tracing performance on future GeForce RTX graphics cards. Of course, this comes with a caveat: performance compared to NVIDIA's pre-RTX Pascal-era GeForce GTX graphics cards that lacked dedicated ray-tracing and AI hardware. Yes, it's all thanks to AI. Real-time path tracing or full ray tracing is so demanding on GPU hardware that it's only possible thanks to a wide range of AI-powered rendering technologies such as DLSS Super Resolution, Frame Generation, RTX Mega Geometry, and more. As these features are available on the current RTX Blackwell-powered GeForce RTX 50 Series, NVIDIA says it has already achieved 10,000X faster path-tracing performance than in the Pascal era. "If you look at the performance there with just a software RT core to today, where we have fourth-generation RT cores, we have third-generation Tensor cores, we have DLSS 4.5, which is able to infer 23 out of 24 pixels rendered," NVIDIA VP of Developer & Performance Technology, John Spitzer, said. "These are multiplicative, that you can multiply them all together to get a scaling factor that, combined with the algorithm, eventually gave a 10,000 times that we've improved the performance over the last 10 years." On a modern high-end GeForce RTX 40 or RTX 50 Series graphics card, that translates to stunningly realistic, immersive 1440p and 4K cinematic lighting and visuals in games like Resident Evil Requiem, Indiana Jones and the Great Circle, Alan Wake 2, Cyberpunk 2077, and more. 
However, NVIDIA's John Spitzer added, "We're still not where we want to be. We want the real-time images to look indistinguishable from reality. We want them to look like a film." Naturally, this means more AI for rendering and new technologies like ReSTIR (Reservoir-based Spatiotemporal Importance Resampling), along with other advances that will ultimately lead to a 1,000,000X improvement in path-tracing performance on future GeForce RTX hardware compared to the non-AI Pascal generation.
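For readers curious what the "resampling" in ReSTIR refers to, the sketch below shows plain weighted reservoir sampling, the streaming building block the technique is named after. It is an illustration under stated assumptions, not NVIDIA's implementation: the `Reservoir` class, the `pick_light` helper, and the light names and weights are all hypothetical, and the full algorithm adds target-function weighting, temporal reuse, and bias correction that are omitted here.

```python
import random


class Reservoir:
    """Single-sample weighted reservoir (streaming weighted sampling)."""

    def __init__(self):
        self.sample = None   # currently selected candidate
        self.w_sum = 0.0     # running sum of all candidate weights seen
        self.count = 0       # number of candidates seen

    def update(self, candidate, weight, rng):
        """Offer one candidate; keep it with probability weight / w_sum."""
        self.w_sum += weight
        self.count += 1
        if weight > 0.0 and rng.random() * self.w_sum < weight:
            self.sample = candidate

    def merge(self, other, rng):
        """Fold in another reservoir as if its candidates were streamed here."""
        seen = self.count + other.count
        self.update(other.sample, other.w_sum, rng)
        self.count = seen


def pick_light(lights, rng):
    """Pick one (name, weight) light with probability proportional to weight."""
    reservoir = Reservoir()
    for name, weight in lights:
        reservoir.update(name, weight, rng)
    return reservoir


# Hypothetical candidate lights with made-up contribution weights.
lights = [("lamp", 1.0), ("neon_sign", 3.0), ("window", 0.0)]
rng = random.Random(0)
pixel_a = pick_light(lights, rng)
pixel_b = pick_light(lights, rng)
pixel_a.merge(pixel_b, rng)  # spatial reuse: borrow a neighbouring pixel's result
print(pixel_a.sample, pixel_a.w_sum, pixel_a.count)
```

The point of the merge step is that a pixel can benefit from candidates its neighbours already examined without storing or revisiting them, which is one way a renderer can get more out of each traced ray.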

[6]

Nvidia's very keen on you 'catching the future of real-time rendering' at this year's GTC, though I suggest not waiting with bated breath for anything groundbreaking

It'll be 100% AI-powered stuff, of course, but that's not necessarily a bad thing. Every year, Nvidia hosts a three-day event about all things graphics processor-y, called the GPU Technology Conference or GTC, for short. Originally aimed at rendering and GPGPU, the presentations, talks, and demonstrations are now all firmly in the AI camp. However, for 2026, PC gamers might have something to look forward to, because Nvidia says that the 'future of real-time rendering' is going to feature in CEO Jensen Huang's keynote speech. That's according to Nvidia's GeForce account on X (including the UK-specific one), and given the nature of the channel, it means that whatever new stuff is going to be hyped up about 'real-time rendering' is certainly going to be about gaming. Unfortunately, that's all the post says, other than simply retweeting the main Nvidia GTC reminder from three days ago. So, let's take stock of what Jensen is going to talk about, from what he's most likely to say, all the way through to total pie-in-the-sky nonsense. My gut feeling is that the future of real-time rendering, from Nvidia's perspective, is going to be all about the use of AI within the graphics pipeline, leveraging the use of the new DirectX Linear Algebra API and Compute Graph Compiler. Together, these basically let developers run AI algorithms within the normal graphics pipeline, no different to how you'd code any other rendering process. Previously to all of this, you'd have to resort to using a proprietary API, unique to a specific GPU vendor, and figure out how best to shoehorn it all into one's engine. One use for this is neural texture compression, something that Nvidia has been working on for a while, but you don't need a fancy API to do this, as Ubisoft has shown with Assassin's Creed Mirage. But I strongly suspect that if Huang does focus on this, it will actually be all about making path tracing better/faster, as this is what Nvidia was promoting at the GDC event last week. 
Had the RAMpocalypse not come to pass, we almost certainly would have been introduced to the Super refresh of the RTX 50-series cards, and while it's still distantly possible that these cards do get announced, it now seems extremely unlikely given just how bad the DRAM situation is (neatly making me the world's worst tech prophet in the process). New RTX cards usually appear alongside some kind of new DLSS or RTX software feature, but we've already had that, in the form of RTX Mega Geometry foliage system and DLSS Dynamic Multi Frame Generation. Nvidia was probably going to keep these for the Super launch, but their appearance suggests that no new gaming GPUs will be coming from Team Green for a long time now. All things considered, Huang is probably going to just reiterate what was said at the GDC, which is fine because anything that can be done to improve the performance of rendering, without the loss of visual fidelity, is certainly a good thing. But wait, I haven't mentioned anything pie-in-the-sky! Okay then, how about DLSS Ray Full Construction, an AI system that doesn't just denoise a ray-traced scene but actually uses machine learning to generate thousands of additional 'fake' rays? Ah no, I've got it! Huang will hop onto the stage with a new GeForce RTX graphics card. It'll have 10,000 CUDA cores and 4 GB of VRAM, but it will be the first to use RTX Ultra Memory, an AI-powered system that neurally compresses everything automatically and does it so quickly and so well that it effectively quadruples the VRAM and its bandwidth on your graphics card.

[7]

NVIDIA Says Its Future Gaming GPUs Will Bring A 1,000,000x Leap In Path Tracing Performance By Using RTX / AI Advances

NVIDIA has teased its future GPUs with massive path tracing performance capabilities thanks to AI & RTX advancements, as Moore's Law is Dead. During GDC 2026, John Spitzer (NVIDIA VP of Developer & Performance Technology) presented a path tracing roadmap that showcases the leaps that each of its GPU architectures brought.

The roadmap starts with Pascal (GTX 10 series), which was released almost 10 years ago in April, 2016. The architecture was a revolution at the time, but featured a software RT core, so it wasn't very usable for Path Tracing, let alone Ray Tracing. The first architecture that brought Ray Tracing support was Turing (RTX 20 series) in 2018, and that saw the advent of DLSS and RTX. NVIDIA says that despite Turing and its follow-ups featuring better hardware capabilities for RT, they couldn't have brute-forced their way to a performance jump large enough for decent ray tracing performance. This is because Moore's Law doesn't scale as well as it used to. So the company had to come up with advanced techniques, and DLSS, along with RTX advancements, slowly but steadily paved the way for NVIDIA.

Today, Blackwell's latest RT, Tensor Core, DLSS 4.5, & SDK innovations deliver a 10,000x path tracing performance bump over Pascal, but NVIDIA says that it still is not where they want to be. The company states that future GPUs are going to bring an even bigger leap, with a 1Mx (1,000,000x) improvement over Pascal. This might come as early as the next-gen Rubin GPUs, which are slated for a 2027-2028 launch, but how does NVIDIA get there? Well, the answer is simple, and mentioned above: by the same RTX advancements and by leveraging the AI that has pushed them to where they are today.

"So this was our GTX 10 series (Pascal) of product that was launched in April of 2016, almost exactly 10 years ago. If you look at the performance there with just a software RT core to today, where we have fourth-generation RT cores, we have third-generation Tensor cores, we have DLSS 4.5, which is able to infer 23 out of 24 pixels rendered. These are multiplicative, that you can multiply them all together to get a scaling factor that, combined with the algorithm, eventually gave a 100-fold improvement for the number of rays used. You get a total multiplicative product of 10,000 times that we've improved the performance over the last 10 years. Now, we're not giving up. We're still not to where we want to be. We want that the real-time images look indistinguishable from reality. We want them to look like a film. If we were to brute force, we don't have that. Moore's law is dead. We are not going to see a 100 times improvement in my lifetime in terms of silicon. So we're going to be relying upon algorithmic ingenuity and fully leaning into AI to cross that chasm between what's attainable now, with real-time graphics in games, and what's attainable in film rendering. So I would say that Path Tracing is really the gold standard today in state-of-the-art rendering for games."

John Spitzer - NVIDIA VP of Developer & Performance Technology

And the list of Path Tracing titles keeps on growing, with more PT-enabled games coming this year.

NVIDIA is also announcing two technologies related to Path Tracing, the first of which is ReSTIR (Reservoir-based Spatiotemporal Importance Resampling). This is what NVIDIA calls the most accurate simulation of how light is transported within a scene (PT Global Illumination). In the two examples, NVIDIA showcases how ReSTIR delivers accurate mirror reflections and global illumination within a scene, alongside detailed animated foliage.

"Foliage typically is moving, swaying with the wind. And individual leaves can be moving. And it's a huge amount of geometric complexity as well as depth complexity. Depth complexity is how many layers are represented in a scene at any given pixel. And the depth complexity on some of these could be higher if you're in a very rugged scene with a ton of leaves. Now, each individual leaf also can be completely unique. And so you need to be able to very efficiently trace a ray into that leaf so that you're able to do it. And so we have a technology that we can do opacity micromaps, or OMMs. And this is essentially a cookie cut. And it allows you to then [tell whether] it's hitting the leaf, or it's actually passing [through]."

John Spitzer - NVIDIA VP of Developer & Performance Technology

The other technology is RTX Mega Geometry, which is going to get an updated version in The Witcher IV.

NVIDIA also talked about DLSS and how it went from a shaky start to over 800 supported games to date. It is stated that 90% of gamers now enable DLSS, and thanks to Streamline, the technology is now being adopted in several games at a rapid pace. Later this month, NVIDIA is going to offer DLSS 4.5's MFG 6X mode, which will enable users to generate 6 frames, along with a dynamic mode that essentially changes the Frame-Generation mode based on the targeted resolution. We tried MFG 6X Dynamic mode at GDC, and the switching between modes was instantaneous, with no stutters or frame pacing issues encountered during the change.

So there is a lot of cool stuff to expect from NVIDIA in the future, such as faster Path Tracing performance, advanced visuals, advanced upscaling and neural rendering techniques & more.
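Spitzer's "multiplicative" framing can be sanity-checked with a few lines of arithmetic. The sketch below is only a back-of-the-envelope illustration: it assumes (this is not stated in the talk) that "23 out of 24 pixels" comes from 4x upscaling by pixel count combined with one rendered frame out of every six displayed, and it simply divides the claimed 10,000x by that factor to see how much is left for everything else. The variable names are hypothetical.

```python
from fractions import Fraction

# Assumptions (not from the talk): 4x upscaling by pixel count, and one
# rendered frame out of every six displayed with 6X frame generation.
UPSCALE_PIXEL_RATIO = 4
FRAMES_PER_RENDERED = 6

rendered = Fraction(1, UPSCALE_PIXEL_RATIO * FRAMES_PER_RENDERED)
inferred = 1 - rendered
pixel_factor = 1 / rendered  # speed-up from rendering fewer pixels

CLAIMED_TODAY = 10_000       # Blackwell vs Pascal, as claimed in the talk
CLAIMED_FUTURE = 1_000_000   # future GPUs vs Pascal, as claimed in the talk

# Whatever the pixel factor does not explain must come from the other
# multiplied terms (RT cores, Tensor cores, algorithms such as ReSTIR).
other_factors = Fraction(CLAIMED_TODAY) / pixel_factor
still_needed = Fraction(CLAIMED_FUTURE, CLAIMED_TODAY)

print(f"rendered {rendered}, inferred {inferred}")
print(f"pixel inference alone: {pixel_factor}x")
print(f"left for hardware and algorithms: ~{float(other_factors):.0f}x")
print(f"further gain needed to reach 1,000,000x: {still_needed}x")
```

Under these assumptions, pixel inference accounts for a 24x factor, leaving roughly 417x to hardware and algorithmic gains, and the 1,000,000x target is a further 100x beyond today's claimed figure. How NVIDIA actually apportions the 10,000x is not spelled out in the quoted remarks.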

Nvidia announced at GDC 2026 that its Blackwell GPUs already achieve 10,000 times better path tracing performance compared to Pascal-era cards from 2016. The company targets a 1 million times improvement in future gaming GPUs through AI-powered neural rendering, as Moore's Law reaches its limits. This shift could deliver film-quality visuals at high frame rates without melting hardware.

Nvidia Targets 1 Million Times Better Path Tracing Performance Through AI

Nvidia made bold claims at GDC 2026 about the future trajectory of graphics performance, with Dev & Performance VP John Spitzer revealing that the company aims to achieve 1 million times better path tracing performance in future gaming GPUs compared to the Pascal generation [1]. The announcement positions AI as the primary catalyst for this ambitious goal, fundamentally reshaping how graphics cards will render photorealistic visuals in the coming years. During his presentation, Spitzer displayed a line graph plotting the progress of ray tracing and path tracing performance across Nvidia's GPU generations, demonstrating how far the technology has advanced since the GTX 1080 launched nearly a decade ago [2].

Source: Wccftech

Blackwell GPUs Already Deliver 10,000x Performance Gains Over Pascal

The current Blackwell GPUs powering the RTX 50 series have already achieved 10,000 times faster path tracing performance compared to Pascal-era graphics cards from 2016 [5]. This massive leap stems from hardware-accelerated neural rendering enabled by dedicated RT Cores and Tensor Cores that handle machine learning tasks inside Nvidia GPUs. "If you look at the performance there with just a software RT core to today, where we have fourth-generation RT cores, we have third-generation Tensor cores, we have DLSS 4.5, which is able to infer 23 out of 24 pixels rendered," Spitzer explained, noting that "these are multiplicative, that you can multiply them all together to get a scaling factor" [5]. Features like DLSS rely entirely on AI models trained on Nvidia's supercomputers to piece together frame data more accurately in both upscaling and Frame Generation situations.

Moore's Law Is Dead, AI Becomes the New Performance Driver

Spitzer declared that Moore's Law is dead and that silicon advancements alone wouldn't be enough to generate film-quality visuals in his lifetime [1]. Current GPU hardware is hitting a physical wall where simply throwing more electricity at the problem no longer works [3]. Nvidia wants to achieve a level of graphical fidelity indistinguishable from real life, which would require "a hundred or thousand times more computational power", and this is where AI becomes critical. Instead of making GPUs brute force full path tracing calculations that would cause hardware to overheat before rendering a single frame, neural rendering technologies act like an expert who has analyzed billions of scenarios and can predict outcomes efficiently [3].

The Future of Real-Time Rendering Could Arrive Sooner Than Expected

The wait for this transformative shift might not be too long. Next-gen Rubin GPUs from Nvidia, slated to launch sometime between 2027 and 2028, could usher in this 1-million-times better reality [1]. Jensen Huang has already claimed that neural rendering will become the default going forward, with games looking "like a film" while running smoothly due to multiple frames being interpolated in real-time by AI. Speaking about the vision, Huang described the future as "basically a photograph interacting with you at 500 frames per second" [3]. He emphasized that future graphics cards will do "more and more computation on fewer and fewer pixels," with AI inferring what must exist around those beautifully rendered pixels.

Source: Tom's Guide

New Technologies and Growing Game Support Drive Path Tracing Adoption

The presentation at GDC 2026 also highlighted emerging path tracing technologies such as ReSTIR (Reservoir-based Spatiotemporal Importance Resampling) and RTX Mega Geometry [1]. To demonstrate these capabilities, Nvidia showcased a tech demo for Witcher 4 featuring over two trillion triangles in a single scene, depicting realistic foliage and lighting simultaneously. The list of games supporting path tracing continues to expand rapidly, with Resident Evil Requiem being the latest addition [1]. On modern high-end RTX 40 or RTX 50 series cards, this translates to stunningly realistic 1440p and 4K visuals in titles like Indiana Jones and the Great Circle, Alan Wake 2, and Cyberpunk 2077 [5]. Bryan Catanzaro, VP of Applied Deep Learning Research who has worked on DLSS for ten years, teased at GDC 2026 that generative AI rendering represents "the most important update to the way that graphics are rendered in at least the last decade" [4]. Nvidia confirmed that more details about the future of real-time rendering would be revealed during Jensen Huang's GTC 2026 keynote [3], signaling that these advances are closer to reality than many expect.

Source: TweakTown
