Nvidia Unveils Revolutionary Silicon Photonics Switches for AI Data Centers

5 Sources

[1]

Could Nvidia's Revolutionary Optical Switch Transform AI Data Centers Forever?

Co-packaged optics chips are the heart of Nvidia's upcoming network switches for AI data centers. The first to arrive is for an InfiniBand switch [background], which will be followed by an Ethernet switch [foreground].

A long-awaited, emerging computer network component may finally be having its moment. At Nvidia's GTC event last week in San Jose, the company announced that it will produce an optical network switch designed to drastically cut the power consumption of AI data centers. The system -- called a co-packaged optics, or CPO, switch -- can route tens of terabits per second from computers in one rack to computers in another. At the same time, startup Micas Networks announced that it is in volume production with a CPO switch based on Broadcom's technology.

In data centers today, network switches in a rack of computers consist of specialized chips electrically linked to optical transceivers that plug into the system. (Connections within a rack are electrical, but several startups hope to change this.) The pluggable transceivers combine lasers, optical circuits, digital signal processors, and other electronics. They make an electrical link to the switch and translate data between electronic bits on the switch side and photons that fly through the data center along optical fibers.

Co-packaged optics is an effort to boost bandwidth and reduce power consumption by moving the optical/electrical data conversion as close as possible to the switch chip. This simplifies the setup and saves power by reducing the number of separate components needed and the distance electronic signals must travel. Advanced packaging technology allows chipmakers to surround the network chip with several silicon optical transceiver chiplets. Optical fibers attach directly to the package. So, all the components are integrated into a single package except for the lasers, which remain external because they are made using non-silicon materials and technologies.
(Even so, CPOs require only one laser for every eight data links in Nvidia's hardware.)

As attractive as that technology seems, its economics have kept it from deployment. "We've been waiting for CPO forever," says Clint Schow, a co-packaged optics expert and IEEE Fellow at the University of California Santa Barbara, who has been researching the technology for 20 years. Speaking of Nvidia's endorsement of the technology, he said the company "wouldn't do it unless the time was here when [GPU-heavy data centers] can't afford to spend the power." The engineering involved is so complex, Schow doesn't think it's worthwhile unless "doing things the old way is broken."

And indeed, Nvidia pointed to power consumption in upcoming AI data centers as a motivation. Pluggable optics consume "a staggering 10 percent of the total GPU compute power" in an AI data center, says Ian Buck, Nvidia's vice president of hyperscale and high-performance computing. In a 400,000-GPU factory that would translate to 40 megawatts, and more than half of that goes just to powering the lasers in a pluggable optics transceiver. "An AI supercomputer with 400,000 GPUs is actually a 24-megawatt laser," he says.

One fundamental difference between Broadcom's scheme and Nvidia's is the optical modulator technology that encodes electronic bits onto beams of light. In silicon photonics there are two main types of modulators -- Mach-Zehnder, which Broadcom uses and which is the basis for pluggable optics, and microring resonator, which Nvidia chose. In the former, light traveling through a waveguide is split into two parallel arms. Each arm can then be modulated by an applied electric field, which changes the phase of the light passing through. The arms then rejoin to form a single waveguide. Depending on whether the two signals are now in phase or out of phase, they will combine or cancel each other out. And so electronic bits can be encoded onto the light. Microring modulators are far more compact.
Instead of splitting the light along two parallel paths, a ring-shaped waveguide hangs off the side of the light's main path. If the light is of a wavelength that can form a standing wave in the ring, it will be siphoned off, filtering that wavelength out of the main waveguide. Exactly which wavelength resonates with the ring depends on the structure's refractive index, which can be electronically manipulated.

However, the microring's compactness comes with a cost. Microring modulators are sensitive to temperature, so each one requires a built-in heating circuit, which must be carefully controlled and consumes power. On the other hand, Mach-Zehnder devices are considerably larger, leading to more lost light and some design issues, says Schow. That Nvidia managed to commercialize a microring-based silicon photonics engine is "an amazing engineering feat," says Schow.

According to Nvidia, adopting the CPO switches in a new AI data center would lead to one-fourth the number of lasers, boost power efficiency for trafficking data 3.5-fold, improve the reliability of signals making it from one computer to another on time 63-fold, make networks 10-fold more resilient to disruptions, and allow customers to deploy new data center hardware 30 percent faster. "By integrating silicon photonics directly into switches, Nvidia is shattering the old limitations of hyperscale and enterprise networks and opening the gate to million-GPU AI factories," said Nvidia CEO Jensen Huang.

The company plans two classes of switch, Spectrum-X and Quantum-X. Quantum-X, which the company says will be available later this year, is based on InfiniBand network technology, a network scheme more oriented to high-performance computing. It delivers 800 Gb/s from each of 144 ports, and its two CPO chips are liquid-cooled instead of air-cooled, as is an increasing fraction of new AI data centers.
The network ASIC includes Nvidia's SHARP FP8 technology, which allows CPUs and GPUs to offload certain tasks to the network chip. Spectrum-X is an Ethernet-based switch that can deliver a total bandwidth of about 100 terabits per second from a total of either 128 or 512 ports and 400 Tb/s from 512 or 2048 ports. Hardware makers are expected to have Spectrum-X switches ready in 2026.

Nvidia has been working on the fundamental photonics technology for years. But it took collaboration with 11 partners -- including TSMC, Corning, and Foxconn -- to get the switch to a commercial state. Ashkan Seyedi, director of optical interconnect products at Nvidia, stressed how important it was that the technologies these partners brought to the table were co-optimized to satisfy AI data center needs rather than simply assembled from those partners' existing technologies.

"The innovations and the power savings enabled by CPO are intimately tied to your packaging scheme, your packaging partners, your packaging flow," Seyedi says. "The novelty is not just in the optical components directly, it's in how they are packaged in a high-yield, testable way that you can manage at good cost."

Testing is particularly important, because the system is an integration of so many expensive components. For example, there are 18 silicon photonics chiplets in each of the two CPOs in the Quantum-X system. And each of those must connect to two lasers and 16 optical fibers. Seyedi says the team had to develop several new test procedures to get it right and trace where errors were creeping in.

Broadcom chose the more established Mach-Zehnder modulators for its Bailly CPO switch, in part because it is a more standardized technology, potentially making it easier to integrate with existing pluggable transceiver infrastructure, explains Robert Hannah, senior manager of product marketing in Broadcom's optical systems division.
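
As an aside on the Mach-Zehnder scheme discussed in this article, the way two arms "cancel each other out or combine" can be sketched numerically. The snippet below is a minimal illustration that assumes an ideal, lossless modulator with a perfectly balanced 50/50 split, which real devices only approximate. The function names and the simple on-off keying mapping are hypothetical, chosen for illustration, and do not describe Broadcom's or Nvidia's actual designs.

```python
import math


def mzm_output_fraction(phase_difference_rad: float) -> float:
    """Fraction of input optical power leaving an ideal Mach-Zehnder modulator.

    Assumption: lossless device with a perfect 50/50 split, so the two arms
    interfere as cos^2(delta_phi / 2).
    """
    return math.cos(phase_difference_rad / 2.0) ** 2


def encode_bits(bits):
    """Map bits to arm phase differences for simple on-off keying.

    Illustrative assumption: 1 -> arms in phase (light passes),
    0 -> arms half a cycle apart (light cancels).
    """
    return [0.0 if bit else math.pi for bit in bits]


if __name__ == "__main__":
    bits = [1, 0, 1, 1]
    for bit, phase in zip(bits, encode_bits(bits)):
        # Print each bit next to the fraction of light that gets through.
        print(bit, round(mzm_output_fraction(phase), 6))
```

Under these assumptions a zero phase difference passes all of the light and a phase difference of pi passes none, which is the in-phase versus out-of-phase behavior the article describes.
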
Micas' system uses a single CPO component, which is made up of Broadcom's Tomahawk 5 Ethernet switch chip surrounded by eight 6.4 Tb/s silicon photonics optical engines. The air-cooled hardware is in full production now, putting it ahead of Nvidia's CPO switches. Hannah calls Nvidia's involvement an endorsement of Micas' and Broadcom's timing.

"Several years ago, we made the decision to skate to where the puck was going to be," says Mitch Galbraith, Micas' chief operations officer. With data center operators scrambling to power their infrastructure, CPO's time seems to have come, he says. The new switch promises a 40 percent power savings versus systems populated with standard pluggable transceivers. However, Charlie Hou, vice president of corporate strategy at Micas, says CPO's higher reliability is just as important. "Link flap," the term for transient failure of pluggable optical links, is one of the culprits responsible for lengthening already-very-long AI training runs, he says. CPO is expected to have less link flap because there are fewer components in the signal's path, among other reasons.

The big power saving data centers are looking to get from CPO is mostly a one-time benefit, Schow suggests. After that, "I think it's just going to be the new normal." However, improvements to the electronics' other features will let CPO makers keep boosting bandwidth -- for a time at least. Schow doubts individual silicon modulators -- which run at 200 Gb/s in Nvidia's photonic engines -- will be able to go much past 400 Gb/s. However, other materials, such as lithium niobate and indium phosphide, should be able to exceed that. The trick will be affordably integrating them with silicon components, something Santa Barbara-based OpenLight is working on, among other groups.

In the meantime, pluggable optics are not standing still.
This week, Broadcom unveiled a new digital signal processor that could lead to a more than 20 percent power reduction for 1.6 Tb/s transceivers, due in part to a more advanced silicon process. And startups such as Avicena, Ayar Labs, and Lightmatter are working to bring optical interconnects all the way to the GPU itself. The former two have developed chiplets meant to go inside the same package as a GPU or other processor. Lightmatter is going a step farther, making the silicon photonics engine the packaging substrate upon which future chips are 3D-stacked.
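
Several of the figures quoted in the article above can be cross-checked against one another. The sketch below is only a back-of-the-envelope calculation using numbers taken from the text (144 ports at 800 Gb/s, 200 Gb/s modulators, 18 chiplets per CPO, two lasers per chiplet, and Ian Buck's 40 MW and 24 MW figures). Treating each 200 Gb/s modulator as one "data link" is an assumption of this sketch, not something the article states, and the function names are hypothetical.

```python
# Figures quoted in the article; the lane-to-link mapping is an assumption.
PORTS = 144
PORT_RATE_GBPS = 800
MODULATOR_RATE_GBPS = 200      # per silicon modulator
CPO_PACKAGES = 2
CHIPLETS_PER_CPO = 18
LASERS_PER_CHIPLET = 2

GPUS = 400_000
OPTICS_POWER_MW = 40.0         # pluggable optics in a 400,000-GPU data center
LASER_POWER_MW = 24.0          # portion attributed to lasers
OPTICS_SHARE_OF_GPU_POWER = 0.10


def quantum_x_link_budget():
    """Count lanes, chiplets, and lasers in the Quantum-X switch."""
    total_gbps = PORTS * PORT_RATE_GBPS
    # Assumption: one modulator carries one 200 Gb/s lane ("data link").
    lanes = total_gbps // MODULATOR_RATE_GBPS
    chiplets = CPO_PACKAGES * CHIPLETS_PER_CPO
    lasers = chiplets * LASERS_PER_CHIPLET
    return {
        "total_tbps": total_gbps / 1000,
        "lanes": lanes,
        "chiplets": chiplets,
        "lasers": lasers,
        "lanes_per_laser": lanes / lasers,
    }


def pluggable_power_per_gpu():
    """Convert the data-center-wide optics power into per-GPU terms."""
    optics_watts = OPTICS_POWER_MW * 1e6 / GPUS
    return {
        "optics_watts_per_gpu": optics_watts,
        "laser_share": LASER_POWER_MW / OPTICS_POWER_MW,
        "implied_gpu_watts": optics_watts / OPTICS_SHARE_OF_GPU_POWER,
    }


if __name__ == "__main__":
    print(quantum_x_link_budget())
    print(pluggable_power_per_gpu())
```

With these inputs the switch works out to 576 lanes fed by 72 lasers, or eight lanes per laser, which agrees with the article's "one laser for every eight data links." The power figures imply roughly 100 W of pluggable optics per GPU, with lasers accounting for 60 percent of it.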

[2]

Nvidia's new silicon photonics-based 400 Tb/s switch platforms enable clusters with millions of GPUs

At GTC 2025, Nvidia introduced its Spectrum-X Photonics and Quantum-X Photonics networking switch platforms for exascale datacenters that use silicon photonics. The new networking switch platforms up data transfer speed to 1.6 Tb/s per port, and 400 Tb/s in the aggregate, allowing millions of GPUs to operate together seamlessly. Nvidia says the new switches offer higher bandwidth, lower power loss, and superior reliability compared to conventional networking solutions. The Spectrum-X Photonics Ethernet and Quantum-X Photonics InfiniBand platforms deliver speeds of 1.6 Tb/s per port (twice the maximum of current top-tier copper Ethernet solutions) and total bandwidths reaching 400 Tb/s through various port configurations. Nvidia's Spectrum-X Photonics switches come in several configurations, offering 128 ports at 800 Gb/s or 512 ports at 200 Gb/s, reaching a total bandwidth of 100 Tb/s. A higher-capacity model provides 512 ports at 800 Gb/s or 2,048 ports at 200 Gb/s, achieving 400 Tb/s throughput. The Quantum-X Photonics series features 144 ports at 800 Gb/s InfiniBand, using 200 Gb/s SerDes for efficient data transmission. Compared to previous-generation networking solutions, Quantum-X doubles performance and increases AI compute scalability fivefold, making it suitable for high-intensity workloads and building even bigger AI clusters. The Quantum-X InfiniBand switches include a liquid cooling system, ensuring the onboard silicon photonics chips operate at peak efficiency without overheating. As a result, the new networking platforms promise 3.5 times better energy efficiency, 10 times greater network reliability, and 63 times stronger signal integrity, reducing power consumption and improving long-term performance. Additionally, deployment speeds increase by 1.3 times, making these switches a more effective solution for hyperscale AI data centers, according to Nvidia. 
Nvidia's Spectrum-X Photonics Ethernet and Quantum-X Photonics InfiniBand platforms use TSMC's silicon photonics platform, called Compact Universal Photonic Engine (COUPE), which combines a 65nm electronic integrated circuit (EIC) with a photonic integrated circuit (PIC) using the company's SoIC-X packaging technology. Nvidia also worked with Coherent, Corning, Foxconn, Lumentum, Senko, and others to establish its own silicon photonics ecosystem with a steady supply chain that enables Nvidia and its partners to build AI clusters and data centers that were impossible before using proprietary hardware. Nvidia expects Quantum-X InfiniBand switches to be released later in 2025, while Spectrum-X Photonics Ethernet switches will arrive in 2026.

While these advancements promise significant improvements, integrating silicon photonics at such a large scale is complex, and widespread adoption depends on organizations being willing to upgrade their existing networking infrastructure. Despite these hurdles, Nvidia's silicon photonics technology is a major step forward in AI networking.

While Nvidia has yet to disclose future plans for its networking gear, TSMC's COUPE has a very promising roadmap, which will perhaps be followed by Nvidia. TSMC's next iteration of silicon photonics will incorporate COUPE technology within CoWoS packaging, combining optics directly with a switch. This will allow optical connections at speeds reaching 6.4 Tb/s. The third version aims to push speeds to 12.8 Tb/s, integrating directly into the processor package.
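
The Spectrum-X port configurations listed in this article can be tallied to show how the rounded 100 Tb/s and 400 Tb/s totals arise. The following is a small sketch using only the port counts and per-port rates quoted above; the `PortConfig` class and the configuration labels are hypothetical names introduced for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PortConfig:
    """One switch configuration: number of ports and rate per port."""
    ports: int
    gbps_per_port: int

    @property
    def aggregate_tbps(self) -> float:
        # Aggregate bandwidth is simply ports multiplied by per-port rate.
        return self.ports * self.gbps_per_port / 1000


# Configurations as quoted in the article (labels are illustrative).
SPECTRUM_X_CONFIGS = {
    "smaller model, 800G ports": PortConfig(128, 800),
    "smaller model, 200G ports": PortConfig(512, 200),
    "larger model, 800G ports": PortConfig(512, 800),
    "larger model, 200G ports": PortConfig(2048, 200),
}

if __name__ == "__main__":
    for label, config in SPECTRUM_X_CONFIGS.items():
        print(f"{label}: {config.aggregate_tbps} Tb/s")
```

Both configurations of each model multiply out to the same aggregate, 102.4 Tb/s and 409.6 Tb/s respectively, so the "100 Tb/s" and "400 Tb/s" figures appear to be rounded values.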

[3]

Nvidia punts silicon photonic switches to keep GPUs fed

Power sipping bandwidth bottleneck busters - or that's the hope, anyway

Nvidia is set to make available Ethernet and InfiniBand switches featuring silicon photonics with co-packaged optics to advance its vision of datacenters with "millions of GPUs," arguing that the equipment can keep power consumption down. The GPU-accelerated computing biz unveiled new Spectrum-X and Quantum-X switches at its GTC shindig in San Jose, California, today, claiming these will enable "AI factories" with millions of GPUs connected on-site, while drastically reducing energy consumption and operational costs for operators.

A key part of this comes from co-packaged optics - the integration of the optical and silicon components onto a single packaged substrate. In network switches, this typically means doing away with pluggable transceiver modules that house the optics and digital signal processor (DSP), and integrating these alongside the switch ASIC instead. Benefits of CPO are understood to be greatly reduced power consumption, higher bandwidth and lower latency, mainly because of fewer DSPs and the removal of lengthy copper circuitry tracks. Last year, optical networking specialist Ayar Labs told The Register it believed that integrated optics would be the only way forward to address the bandwidth bottlenecks in scaling beyond a single rack of GPU servers to support ever larger AI models.

The Quantum-X Photonics InfiniBand products are set to be available later this year. These will provide 144 ports of 800 Gbps InfiniBand based on 200 Gbps SerDes and feature a liquid-cooled design to take the heat away from the onboard silicon photonics. These switches will offer double the speed and 5x greater scalability for AI compute fabrics compared with the previous generation, Nvidia claims. Meanwhile, the Spectrum-X Photonics Ethernet products are coming in 2026 and will offer multiple configurations.
These include 128 ports of 800 Gbps or 512 ports of 200 Gbps, delivering 100 Tbps total bandwidth, plus 512 ports of 800 Gbps or 2,048 ports of 200 Gbps, for a total throughput of 400 Tbps. From what Nvidia has disclosed, its silicon photonic capabilities have been developed in partnership with a number of other firms, most notably Taiwanese semiconductor giant TSMC. Nvidia even included a statement from TSMC chairman and CEO C.C. Wei, who enthused that: "TSMC's silicon photonics solution combines our strengths in both cutting-edge chip manufacturing and TSMC-SoIC 3D chip stacking to help Nvidia unlock an AI factory's ability to scale to a million GPUs and beyond, pushing the boundaries of AI." Along with sovereign AI, "AI factories" is a favorite talking point of Nvidia chief Jensen Huang, who coined the term to refer to datacenters specially built to handle the most computationally intensive AI processing tasks, such as xAI's Colossus. Expect to hear more on those themes during GTC. "AI factories are a new class of datacenter with extreme scale, and networking infrastructure must be reinvented to keep pace," Huang said. "By integrating silicon photonics directly into switches, NVIDIA is shattering the old limitations of hyperscale and enterprise networks and opening the gate to million-GPU AI factories." Nvidia is not the only firm unveiling network switches with co-packaged optics. Micas Networks announced this week its 51.2T product offering 128 ports of 400G Ethernet, based on Broadcom's 51.2 Tbps Bailly CPO switch platform. This features the latter's Tomahawk 5 switch chip directly coupled to and co-packaged with 8 x 6.4 Tbps silicon photonics chiplets-in-package (SCIP) optical engines. Micas said it is accepting orders now, and fulfilling on a first-come-first-served basis. ®

[4]

Nvidia is planning post-copper 1.6Tbps network tech to connect millions of GPUs as it unveils photonics networking gear at GTC 2025

Quantum-X and Spectrum-X switches deliver up to 400Tbps throughput for hyperscale AI factories Nvidia is advancing its networking technology by integrating co-packaged optics (CPO) into its Quantum InfiniBand and Spectrum Ethernet switch, a move expected to reduce power consumption and cost in AI data centers. At its GTC 2025 event, Nvidia detailed its plans for deploying silicon photonics, which will enhance efficiency by reducing the need for traditional optical transceivers. Instead of relying on traditional pluggable transceivers, Nvidia is embedding photonics directly into switch ASICs, cutting energy use and minimizing signal loss. These advancements benefit hyperscale AI, and could also improve small business routers with similar efficiency gains. Nvidia's Spectrum-X and Quantum-X switches use silicon photonics to deliver higher bandwidth and lower energy consumption, supporting up to 1.6 terabits per second (Tbps) per port to efficiently connect millions of GPUs. The Quantum-X and Spectrum-X photonics switches offer configurations ranging from 128 ports at 800Gbps to 512 ports at 800Gbps, delivering total throughputs of up to 400Tbps. While the Spectrum-X Ethernet platform enhances multi-tenant hyperscale deployments, the Quantum-X InfiniBand switches deliver superior signal integrity and resilience, making them contenders for the best network switch. "AI factories are a new class of data centers with extreme scale, and networking infrastructure must be reinvented to keep pace. By integrating silicon photonics directly into switches, NVIDIA is shattering the old limitations of hyperscale and enterprise networks and opening the gate to million-GPU AI factories," said Jensen Huang, founder and CEO of NVIDIA. Nvidia's new switches improve energy efficiency by a factor of 3.5, while also reducing signal degradation. 
In a typical AI data center with 400,000 GPUs, conventional networking setups require millions of optical transceivers, consuming significant power. Nvidia's approach reduces total network power from 72 megawatts to 21.6 megawatts, dramatically improving sustainability. These gains could also enhance business smartphones, enabling faster, more reliable connectivity. While Nvidia is transitioning towards optical networking, copper remains relevant in specific configurations. Systems like the GB200 NVL72 still use thousands of copper cables to link GPUs and CPUs via NVLink 5, offering lower power consumption at the rack level. However, as Nvidia progresses to NVLink 6, copper's limitations will become more apparent, reinforcing the need for photonic solutions in large-scale AI tool deployments.

Nvidia's new switches are set for release in late 2025 and 2026. The first model, the Quantum 3450-LD InfiniBand switch, launching in late 2025, will provide 144 ports of 800 Gb/sec connectivity and a total bandwidth of 115 Tb/sec. In 2026, the Spectrum SN6810 Ethernet switch will debut with 128 ports at 800 Gb/sec and an aggregate bandwidth of 102.4 Tb/sec. A larger Spectrum SN6800 model will also arrive in 2026, featuring 512 ports of 800 Gb/sec and a total throughput of 409.6 Tb/sec.
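
The network power claim in this article (72 megawatts falling to 21.6 megawatts across 400,000 GPUs) can be restated in other terms. The snippet below is a rough sketch using only those three quoted numbers; the function name and the per-GPU framing are illustrative assumptions rather than figures Nvidia has published.

```python
BASELINE_NETWORK_MW = 72.0   # conventional pluggable-transceiver network
CPO_NETWORK_MW = 21.6        # Nvidia's claimed figure with CPO switches
GPUS = 400_000


def power_savings(baseline_mw: float, new_mw: float, gpus: int) -> dict:
    """Express a before/after network power claim in several equivalent forms."""
    saved_mw = baseline_mw - new_mw
    return {
        "saved_mw": saved_mw,
        "reduction_pct": 100.0 * saved_mw / baseline_mw,
        "efficiency_ratio": baseline_mw / new_mw,
        "watts_per_gpu_before": baseline_mw * 1e6 / gpus,
        "watts_per_gpu_after": new_mw * 1e6 / gpus,
    }


if __name__ == "__main__":
    for name, value in power_savings(BASELINE_NETWORK_MW, CPO_NETWORK_MW, GPUS).items():
        print(f"{name}: {value:.2f}")
```

On these numbers the saving is 50.4 MW, a 70 percent reduction, or about 180 W of network power per GPU falling to about 54 W. The resulting ratio is roughly 3.3, close to but not identical to the 3.5-fold efficiency figure quoted elsewhere; the article does not explain the difference.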

[5]

Nvidia debuts new silicon photonics switches for AI data centers - SiliconANGLE

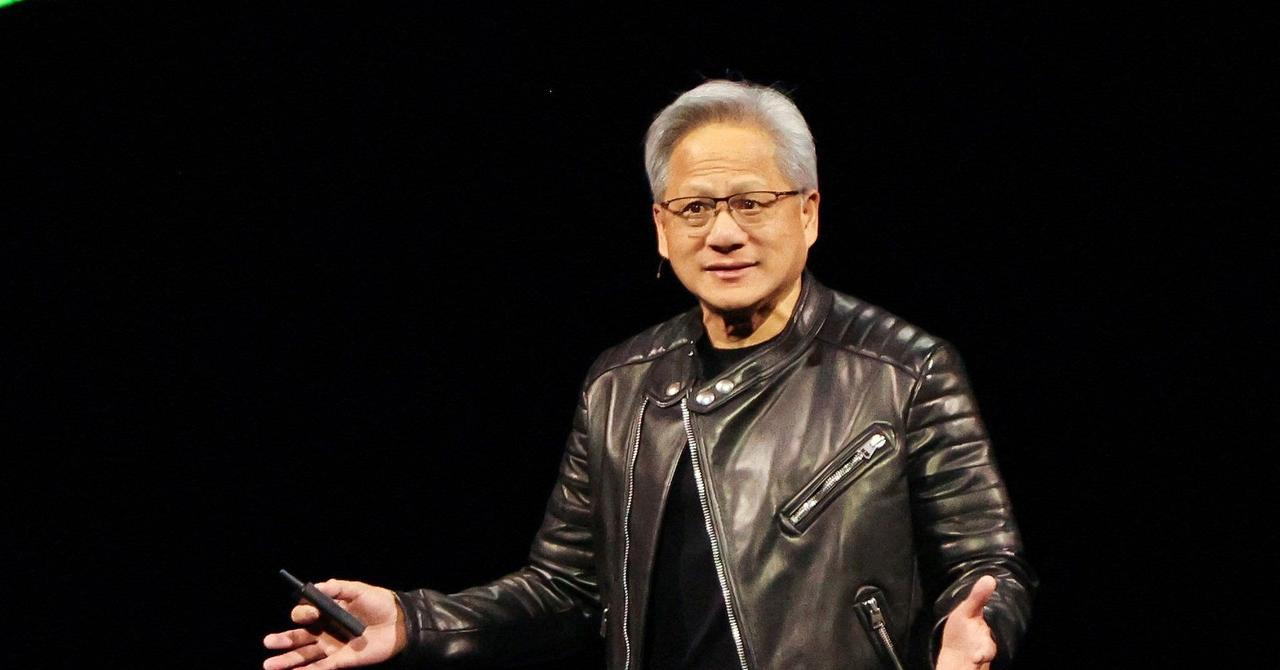

Nvidia Corp. has introduced a new collection of data center switches described as significantly more efficient than current-generation hardware. The devices made their debut today at the company's GTC event in San Jose. During the conference, Nvidia Chief Executive Jensen Huang revealed the company's latest Blackwell Ultra graphics card. He also detailed that Nvidia's next generation of artificial intelligence chips, the Vera Rubin series, will launch in the second half of 2026.

Nvidia's new switches are organized into two product lines. The Spectrum-X Photonics product family uses Ethernet, while the Quantum-X Photonics series is based on InfiniBand. Ethernet and InfiniBand are two data transfer standards that are widely used to power enterprise networks. Both of Nvidia's new switch lineups are based on a fairly nascent chip technology called co-packaged optics, or CPO. It's positioned as a more power-efficient alternative to the traditional way of building networking gear. Nvidia says that its new devices are 3.5 times more power-efficient than earlier products.

Switches are responsible for moving data between servers or from servers to storage equipment and vice versa. Historically, information was transmitted over copper wires in the form of electrical signals. To boost network speeds, data center operators are increasingly switching to fiber-optic network designs that transmit data as light rather than electricity. The servers connected to an optical network process data in the form of electrical signals. As a result, data has to be turned into light beams before it can be sent over a fiber-optic cable. After reaching its destination, the light has to be turned back into electrical signals that the receiving server can understand.

The process of turning data from light to electricity and vice versa is usually done with compact devices called pluggable transceivers. They attach to the switches that power a data center's network.
A pluggable transceiver contains a laser emitter that generates light beams, a modulator that encodes data into the physical properties of those light beams and various other optical components. A large data center can contain up to millions of such devices. Nvidia says its new switches remove the need for pluggable transceivers. The company achieved that by integrating a transceiver directly into the chip that powers the switches. This arrangement will reduce the need for customers to purchase standalone transceivers, which could significantly lower hardware costs.

Nvidia's switches are powered by a technology called CPO. The technology makes it possible to combine a switch's processor and transceiver into a single chip. In the past, those two components could only be implemented on separate chips, which is why companies currently have to plug standalone transceiver modules into their switches. Placing the processor and transceiver on the same chip reduces the physical distance between them, which allows data to move between the two components faster. Moreover, Nvidia says that its switches require four times fewer lasers than earlier hardware to turn data into light beams. This is significant because laser emitters often account for most of the power consumed by pluggable transceiver modules.

"AI factories are a new class of data centers with extreme scale, and networking infrastructure must be reinvented to keep pace," Huang said. "By integrating silicon photonics directly into switches, Nvidia is shattering the old limitations of hyperscale and enterprise networks and opening the gate to million-GPU AI factories."

The chips in Nvidia's new switches are made using Taiwan Semiconductor Manufacturing Co.'s implementation of CPO. TSMC refers to the technology as the Compact Universal Photonic Engine, or COUPE for short. The chipmaker says that it provides the ability to combine a 65-nanometer electronic processor with a photonic integrated circuit.
"TSMC's silicon photonics solution combines our strengths in both cutting-edge chip manufacturing and TSMC-SoIC 3D chip stacking to help NVIDIA unlock an AI factory's ability to scale to a million GPUs and beyond," said TSMC CEO C.C. Wei. Nvidia's InfiniBand-based silicon photonics switches, the Quantum-X Photonics series, will ship with 144 ports that can each provide 800 gigabits per second of throughput. The company says the devices provide twice the speed of earlier hardware when powering AI data center networks. The product line is scheduled to ship later this year.

Nvidia introduces new Spectrum-X and Quantum-X switch platforms using co-packaged optics technology, promising significant improvements in bandwidth, power efficiency, and scalability for AI data centers.

Nvidia's Breakthrough in AI Data Center Networking

Nvidia has unveiled groundbreaking silicon photonics-based network switches at its GTC 2025 event, aiming to revolutionize AI data center infrastructure. The new Spectrum-X Photonics and Quantum-X Photonics platforms leverage co-packaged optics (CPO) technology to significantly enhance bandwidth, power efficiency, and scalability [1][2].

Co-Packaged Optics: A Game-Changing Technology

At the heart of Nvidia's innovation is the integration of co-packaged optics, which combines optical and silicon components onto a single packaged substrate. This approach eliminates the need for pluggable transceiver modules, integrating optics directly with the switch ASIC [3]. The benefits include:

- Reduced power consumption

- Higher bandwidth

- Lower latency

- Fewer digital signal processors (DSPs)

- Removal of lengthy copper circuitry tracks

Spectrum-X and Quantum-X: Powering the AI Factory

Nvidia's new switch platforms offer impressive specifications:

Spectrum-X Photonics (Ethernet) [2][4]:

- Up to 512 ports at 800 Gbps

- Total throughput of 400 Tbps

- Available in 2026

Quantum-X Photonics (InfiniBand) [2][3]:

- 144 ports at 800 Gbps

- Liquid-cooled design

- Available later in 2025

Unprecedented Performance and Efficiency

The new switches promise significant improvements over previous generations:

- 3.5 times better energy efficiency

- 10 times greater network reliability

- 63 times stronger signal integrity

- 1.3 times faster deployment [2]

In a typical AI data center with 400,000 GPUs, Nvidia claims its approach can reduce total network power consumption from 72 megawatts to 21.6 megawatts [4].

Silicon Photonics Ecosystem and Future Roadmap

Nvidia has partnered with industry leaders to establish a robust silicon photonics ecosystem:

- TSMC's Compact Universal Photonic Engine (COUPE) platform

- Collaboration with Coherent, Corning, Foxconn, Lumentum, and Senko [2]

TSMC's roadmap for silicon photonics includes:

- Incorporating COUPE technology within CoWoS packaging

- Achieving speeds up to 6.4 Tbps

- Future iterations aiming for 12.8 Tbps, integrating directly into processor packages [2]

Impact on AI Data Centers and Industry

Nvidia CEO Jensen Huang emphasized the transformative potential of this technology:

"By integrating silicon photonics directly into switches, Nvidia is shattering the old limitations of hyperscale and enterprise networks and opening the gate to million-GPU AI factories." [3][4]

The new switches are expected to enable the creation of "AI factories" – data centers specially built to handle the most computationally intensive AI processing tasks [3].

Challenges and Future Outlook

While the technology promises significant advancements, widespread adoption may face hurdles:

- Complex integration of silicon photonics at scale

- Organizations' willingness to upgrade existing infrastructure [2]

As Nvidia continues to push the boundaries of AI networking, the industry eagerly anticipates the impact of these innovations on future AI development and deployment.

References

Summarized by

Navi

[2]

[3]
