Palantir defends AI in warfare as Maven Smart System faces scrutiny over Iran strikes

2 Sources

[1]

Palantir UK boss says it's up to militaries to decide how AI targeting is used in war

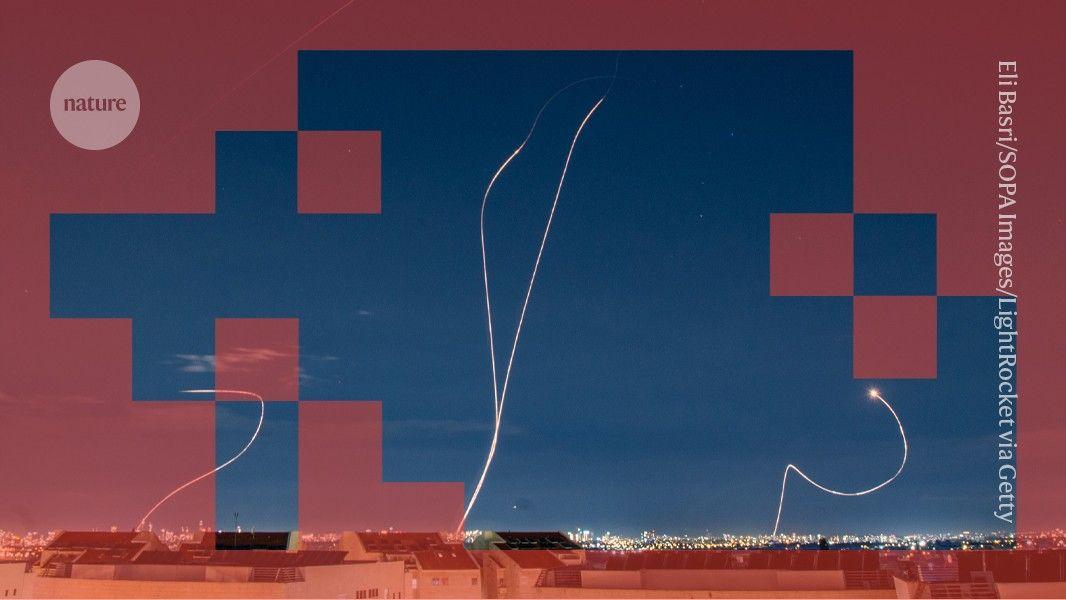

Tech giant Palantir has pushed back against concerns that military use of its AI platforms could lead to unforeseen risks, in an exclusive interview with the BBC, insisting that the way the technology is used is the responsibility of its military customers.

It comes as experts have expressed concern over the use of Palantir's AI-powered defence platform - Maven Smart System - during wartime and its reported use in US attacks on Iran. Analysts have warned that the military's use of the platform, which helps personnel plan attacks, leaves little time for "meaningful verification" of its output and could lead to incorrect targets being hit.

But the company's UK and Europe head, Louis Mosley, told the BBC in a wide-ranging interview that while AI platforms like Maven have been "instrumental" to the US management of the Iran war, responsibility for how their output is used must always remain "with the military organisation".

"There's always a human in the loop, so there is always a human that makes the ultimate decision. That's the current set-up."

The Maven Smart System was launched by the Pentagon in 2017 and is designed to speed up military targeting decisions by bringing together masses of data, including a range of intelligence, satellite and drone images. The system analyses this data and can then provide recommendations for targeting. It can also suggest the level of force to use based on the availability of personnel and military hardware, such as aircraft.

But scrutiny has grown over the use of such tools in warfare. In February, the Pentagon announced that it would be phasing out Anthropic's Claude AI system - which helps to power Maven - after the company refused to allow use of its AI in autonomous weapons and surveillance. Palantir says alternatives can replace it.

Since the war with Iran began in February, the US has reportedly used Maven to plan strikes across the country.
Pushed by the BBC on the risk that Maven might suggest incorrect targets - which could include civilians - Mosley insisted that the platform is only meant to serve as a guide to speed up the decision-making process for military personnel and that it should not be seen as an automated targeting system.

"You could think of it as a support tool," Mosley said. "It's allowing them to synthesise vast amounts of information that previously they would have had to do manually one by one."

However, Mosley deferred to individual militaries when challenged by the BBC on the risk of time-pressured commanders ordering their officers to rubber-stamp Maven's output.

"That's really a question for our military customers. They're the ones that decide the policy framework that determines who gets to make what decision," he said. "That's not our role."

Since 28 February, the US has launched more than 11,000 strikes against Iran, many reportedly identified by Maven. Adm Brad Cooper, head of the US military in the Middle East, has hailed AI systems for helping officers "sift through vast amounts of data in seconds, so our leaders can cut through the noise and make smarter decisions faster than the enemy can react".

But some worry AI's involvement in mission planning creates significant risks. "This prioritisation of speed and scale and the use of force then leaves very little time for meaningful verification of targets to make sure that they don't include civilian targets accidentally," Prof Elke Schwarz of Queen Mary University of London said.

"If there's a risk of killing and you co-opt a lot of your critical thinking to software that will take care of these things for you, then you just become reliant on the software," she added. "It's a race to the bottom."

In recent weeks, Pentagon officials have faced questions as to whether AI tools such as Maven were used to identify targets in the deadly strike on a school in the Iranian town of Minab.
Iranian officials said the strike killed 168 people, including around 110 children, on the opening day of the war.

In Congress, a number of senior Democrats have called for increased scrutiny of AI platforms like Maven. Rep Sara Jacobs - a member of the House Armed Services Committee - called for clearly enforced rules and regulations about how and when AI systems are used.

"AI tools aren't 100% reliable -- they can fail in subtle ways and yet operators continue to over-trust them," she told NBC News last month. "We have a responsibility to enforce strict guardrails on the military's use of AI and guarantee a human is in the loop in every decision to use lethal force, because the cost of getting it wrong could be devastating for civilians and the service members carrying out these missions."

But Mosley pushed back against suggestions that the speed of his company's platform is rushing decision-making at the Pentagon and potentially creating dangerous situations. He instead argued that the speed at which commanders are now taking action is a "consequence of the increased efficiency" that Maven has enabled.

Citing "operational security", the Pentagon declined to comment when approached by the BBC on how AI systems like Maven will be used in future or who would be held responsible should something go wrong.

But US officials appear to be moving forward with plans to further integrate Maven into the military's systems. Last week, the Reuters news agency reported that the Pentagon had designated Maven as "an official program of record" - establishing it as a technology to be integrated long-term across the US military.

In a letter obtained by Reuters, Deputy Defence Secretary Steve Feinberg said the platform would provide commanders "with the latest tools necessary to detect, deter, and dominate our adversaries in all domains".

Additional reporting by Jemimah Herd

[2]

The US is waging AI-assisted war on Iran. Here's how

While AI tools used by the military are very advanced, they're not yet at the level where human judgement is unnecessary.

* Experts and former officials say the military's artificial intelligence systems are central to "Operation Epic Fury"
* As the war drags on, AI could play an increasing role
* More than a hundred lawmakers in the House and Senate signed letters sent to Pentagon chief Pete Hegseth in mid-March asking whether the Maven Smart System was involved in the strike on the school

Hundreds of Iranian civilian deaths in the war have put the U.S. military's new AI systems in the spotlight and raised concerns from lawmakers over whether these systems are making deadly mistakes.

Experts and former officials say the military's artificial intelligence systems are central to "Operation Epic Fury," which is seeing AI deployed on the battlefield to a new degree.

"For somebody who spent years talking about how we're moving too slow, I'm now concerned about how fast we're moving," said Jack Shanahan, a retired lieutenant general who led efforts to develop and integrate AI into the military. "At some point it may become increasingly difficult to define what an advanced AI system must not do, as opposed to humans defining what they want it to do."

At a closed-door House Armed Services Committee briefing on March 25, Pentagon officials told lawmakers AI was used in data management, but not final target selection, according to a person with knowledge of the briefing.

U.S. soldiers are "leveraging a variety of advanced AI tools," Adm. Brad Cooper, the commander of U.S. Central Command, said in a March 11 video update on the war. "Humans will always make final decisions on what to shoot and what not to shoot and when to shoot but advanced AI tools can turn processes that used to take hours, and sometimes even days, into seconds."
The military has hit more than 12,000 targets in the monthlong Iran war, including more than 1,000 in the first 24 hours after the war launched on Feb. 28. One of the sites bombed that day was an Iranian school, where the strike caused at least 175 deaths, most of them children.

In the early days of the war, the U.S. military fired more long-range, expensive missiles to hit Iran from far away, but has since shifted to using more short-range gravity bombs that can be dropped from aircraft, now that Iran's air defenses are degraded, according to Chairman of the Joint Chiefs of Staff Gen. Dan Caine and other officials.

The first targets struck likely came from longstanding Pentagon plans for an Iran attack, said Emelia Probasco, a senior fellow at Georgetown University's Center for Security and Emerging Technology who studies military uses of AI. But as the war drags on, AI could play an increasing role, Probasco said, including in "prioritization" of targets - telling soldiers where to hit first.

"We are now entering the phase where those targets have been attacked and now you could potentially start to see an even greater impact of AI," she said. "You're looking for time critical targets, targets that move, targets that we didn't know about before."

20 soldiers with AI match the work of 2,000

For nearly a decade, the military has been integrating an AI tool known as the Maven Smart System into its computer systems. The system, often shortened to "Maven," fuses the military's many disparate channels of data, intelligence, satellite imagery and asset movements into a single software platform. Military leaders say the system makes decision-making in the heat of battle faster and more effective. The system has already drastically increased the number of targets that a given number of operators can hit.
According to Probasco's 2024 study of Army exercises using the system, roughly 20 people using it could match the work of more than 2,000 soldiers in Iraq war-era targeting cells then considered the most efficient in U.S. military history. And its development in the two years since her study has been "dramatic," she added.

In a demo of the Maven Smart System at a March 12 conference, Cameron Stanley, the Pentagon's chief digital and artificial intelligence officer, showed the ease with which a user could turn a structure into a ball of flame with a "left click, right click, left click."

On the screen behind Stanley, a cursor hovered over an overhead image of lined-up cars, showing numbers representing their measurements, locational coordinates and other data. With a few clicks, the "detection" of an object could be moved into a "targeting workflow," Stanley said. The system offered a choice of "which metrics AI should prioritize," including "time to target," "distance," or "munitions."

A sleek graphic then appeared on a map, showing the circular blast radius that the strike would create and the arc that the weapons would travel. After a couple of clicks on a blue "approve" bar and a green "task executed" bar, the dark cloud of an explosion filled the screen.

"When we started this, it literally took hours to do what you just saw there," Stanley said.

Iran school strike raises AI questions

In spite of officials' claims that AI improves the military's accuracy, the civilian death toll in Iran has raised concerns over whether it has contributed to faulty targeting. Lawmakers have asked whether AI played a role in the school strike.

Investigations by the New York Times and other outlets found that the United States was likely behind the strike, which used a U.S.-made Tomahawk missile. The school may have been on an outdated list of targets that the military failed to recheck, according to those reports. The Pentagon has said its own investigation into the strike is ongoing.
More than a hundred lawmakers in the House and Senate signed letters sent to Pentagon chief Pete Hegseth in mid-March asking whether the Maven Smart System was involved in the strike on the school, and for more details on how the military is checking the work of AI.

Shanahan said he saw "no indications" that AI was involved in the strike, "but we need to acknowledge that while future AI will be capable of finding more targets than ever before, humans must remain responsible and accountable for the decisions to hit those targets."

In past military exercises, AI has demonstrated far lower accuracy than humans. In the Army exercises that Probasco studied, the Maven Smart System could correctly identify a tank around 60% of the time, compared with a human soldier's 84% accuracy, and the system's rate dropped to just 30% in snowy weather. An AI targeting system tested by the Air Force in 2021 reached just 25% accuracy when it was tested in imperfect conditions.

The Pentagon in 2023 issued a directive that soldiers and commanders using AI systems must be able to "exercise appropriate levels of human judgment over the use of force."

"Our military operates in full compliance with all U.S. laws and established policies, such as ensuring a human is always in the loop for critical operational decisions," the Pentagon said in a statement to USA TODAY. "The responsibility for the lawful use of any AI tool rests with the human operator and the chain of command, not within the software itself."

Pentagon goes after company behind its AI chatbot

The Trump administration as a whole has moved to remove regulations around AI in the name of innovation and cutting bureaucracy, and the Pentagon has followed suit. In a Jan. 9 memo laying out the military's AI strategy, Hegseth directed the Pentagon to work towards "unleashing experimentation" with AI models and "aggressively identifying and eliminating bureaucratic barriers to deeper integration" of AI.
"We must accept that the risks of not moving fast enough outweigh the risks of imperfect alignment," the memo read.

In recent months, that approach has put the Pentagon at odds with Anthropic, the Silicon Valley company behind Claude, the only AI chatbot that is currently configured to operate on the Maven Smart System. Anthropic sought an agreement from the Pentagon that its technology would not be used for mass surveillance, or to hit targets without human signoff. The Pentagon refused to accept those terms, saying Claude must be available to the military for "all lawful uses," as its officials publicly blasted the company on social media.

The Pentagon moved to declare the company a "supply chain risk" - a designation meant to restrict companies vulnerable to sabotage or subversion by U.S. adversaries - but was blocked from doing so by a federal judge's ruling on March 26.

"The military will not allow a vendor to insert itself into the chain of command by restricting the lawful use of a critical capability," the Pentagon said in a statement. "It is the military's sole responsibility to ensure our warfighters have the tools they need to win in a crisis, without interference from corporate policies."

Anthropic has said in statements that it does not believe the Pentagon has yet used Claude in a way that broke its conditions. But the dispute reportedly arose after Anthropic learned that the military used Claude in its operation to capture Venezuelan President Nicolas Maduro. "Anthropic currently does not have confidence," the company maintained in court documents, "that Claude would function reliably or safely if used to support lethal autonomous warfare."

AI built for military purposes "already has a lot of accuracy issues," but large language models like Claude "are actually even more inaccurate," said Heidy Khlaaf, chief AI scientist at the AI Now Institute.
"They're not very good at solving for tasks outside of what they've been trained on, and that's ok if you're using it in a non critical environment, like writing an email, but that's very different when you're dealing with novel scenarios like a fog of war."

The dispute with Anthropic is not the first time that the growing business partnerships between Silicon Valley and the Pentagon to create high-tech weapons and military tools have drawn criticism from within the companies building them.

Google was originally contracted to work on the Maven Smart System in its early developmental stages, but dropped the contract in 2018 in response to a protest movement from its workers. In recent years, Google and Amazon workers have also protested the companies' AI contract with the Israeli military and Google's work with immigration and border enforcement.

"If any tech company caves to the Pentagon's demands," Hegseth "will have the power to build and deploy A.I.-powered drones that kill people without the approval of any human," a group of organizations representing Amazon, Google, and Microsoft workers wrote in a statement on the Anthropic dispute.

Shanahan said human control of AI for military uses is a "nonnegotiable starting point," but it could eventually be confined to the design and development of systems that increasingly operate on their own. "You're going to be operating under the assumption that at some point an autonomous weapon is released, and no human will have the ability to bring it back."

Palantir's UK boss says militaries must decide how AI targeting is used, as the Maven Smart System faces growing scrutiny over its role in US strikes on Iran. Experts warn the AI-powered platform leaves little time for meaningful verification, while the Pentagon insists humans make final decisions. More than 11,000 strikes have been launched since February, many reportedly identified by Maven.

Palantir Pushes Back on AI in Warfare Concerns

Palantir has defended its AI-powered defense platform amid mounting concerns over military targeting decisions, with the company's UK and Europe head Louis Mosley telling the BBC that responsibility for how AI output is used must remain "with the military organisation." The Maven Smart System, launched by the Pentagon in 2017, has become central to US military operations, particularly during the ongoing conflict with Iran that began in February [1]. Since February 28, the US has launched more than 11,000 strikes against Iran, many reportedly identified by the AI-powered targeting platform [1].

Source: BBC

The system analyzes masses of data, including intelligence, satellite imagery, and drone footage, to provide recommendations for military targeting and suggest force levels based on available personnel and hardware. Mosley insisted there is "always a human in the loop" and characterized Maven as a "support tool" designed to help military personnel "synthesise vast amounts of information that previously they would have had to do manually one by one" [1].

US Military AI Use Transforms Decision-Making Process

AI is being deployed on the battlefield to a new degree during Operation Epic Fury [2], with Adm. Brad Cooper, head of US Central Command, crediting AI systems for helping officers "sift through vast amounts of data in seconds, so our leaders can cut through the noise and make smarter decisions faster than the enemy can react" [1]. According to Probasco's 2024 study of Army exercises, roughly 20 people using the Maven Smart System could match the work of more than 2,000 soldiers in Iraq war-era targeting cells [2].

Source: USA Today

At a March 12 conference demonstration, Pentagon chief digital and artificial intelligence officer Cameron Stanley showed how users could execute strikes with a few clicks ("left click, right click, left click"), with the system offering choices of "which metrics AI should prioritize," including "time to target," "distance," or "munitions" [2]. At a closed-door House Armed Services Committee briefing on March 25, Pentagon officials told lawmakers AI was used in data management but not final target selection [2].

Risks of AI-Driven Targeting and Civilian Casualties

The ethical implications of AI have come under intense scrutiny following civilian casualties in Iran, particularly a deadly strike on a school in Minab on the opening day of the war that Iranian officials said killed 168 people, including around 110 children [1]. More than a hundred lawmakers signed letters to Pentagon chief Pete Hegseth in mid-March asking whether Maven was involved in the school strike [2].

Prof. Elke Schwarz of Queen Mary University of London warned that "this prioritisation of speed and scale and the use of force then leaves very little time for meaningful verification of targets to make sure that they don't include civilian targets accidentally" [1]. She added that handing critical thinking in warfare over to software represents "a race to the bottom" [1].

Growing Calls for Regulations on AI Use

Concerns about AI autonomy in warfare have prompted calls for stricter oversight. Rep. Sara Jacobs, a member of the House Armed Services Committee, warned that "AI tools aren't 100% reliable -- they can fail in subtle ways and yet operators continue to over-trust them," and called for clearly enforced rules on how and when AI systems are used, along with a guarantee that a human is in the loop in every decision to use lethal force [1]. In February, the Pentagon announced it would phase out Anthropic's Claude AI system, which helps power Maven, after the company refused to allow use of its AI in autonomous weapons and surveillance, though Palantir says alternatives can replace it [1]. Retired Lt. Gen. Jack Shanahan, who led efforts to develop and integrate AI into the military, expressed concern about the pace of deployment: "For somebody who spent years talking about how we're moving too slow, I'm now concerned about how fast we're moving" [2].

Target Prioritization and Future Implications

As the war continues, experts predict AI could play an increasing role in target prioritization. Emelia Probasco, a senior fellow at Georgetown University's Center for Security and Emerging Technology, noted that while early strikes likely came from longstanding Pentagon plans, "we are now entering the phase where those targets have been attacked and now you could potentially start to see an even greater impact of AI" in identifying time-critical targets that move or weren't previously known [2]. The military has shifted from firing expensive long-range missiles to using more short-range gravity bombs dropped from aircraft as Iran's air defenses have degraded [2].

Summarized by Navi
