Pentagon Explores AI Integration in Nuclear Weapons Systems, Raising Concerns and Debates

2 Sources

[1]

The Pentagon wants AI to enhance the capabilities of US nuclear weapons systems

A hot potato: The US is one of several countries that previously declared it would always keep control of nuclear weapons in the hands of humans, not AI. But the Pentagon isn't averse to using artificial intelligence to "enhance" nuclear command, control, and communications systems, worryingly. Late last month, US Strategic Command leader Air Force Gen. Anthony J. Cotton said the command was "exploring all possible technologies, techniques, and methods to assist with the modernization of our NC3 capabilities." Several AI-controlled military weapon systems and vehicles have been developed in the last few years, including fighter jets, drones, and machine guns. Their use on the battlefield raises concerns, so the prospect of AI, which still makes plenty of mistakes, being part of a nuclear weapons system feels like the nightmarish stuff of Hollywood sci-fi. Cotton tried to alleviate those fears at the 2024 Department of Defense Intelligence Information System Conference. He said (via Air & Space Forces Magazine) that while AI will enhance nuclear command and control decision-making capabilities, "we must never allow artificial intelligence to make those decisions for us." Back in May, State Department arms control official Paul Dean told an online briefing that Washington has made a "clear and strong commitment" to keep humans in control of nuclear weapons. Dean added that both Britain and France have made the same commitment. Dean said the US would welcome a similar statement by China and the Russian Federation. Cotton said increasing threats, a deluge of sensor data, and cybersecurity concerns were making the use of AI a necessity to keep American forces ahead of those seeking to challenge the US. "Advanced systems can inform us faster and more efficiently," he said, once again emphasizing that "we must always maintain a human decision in the loop to maximize the adoption of these capabilities and maintain our edge over our adversaries." 
Cotton also talked about AI being used to give leaders more "decision space." Chris Adams, general manager of Northrop Grumman's Strategic Space Systems Division, said part of the problem with NC3 is that it's made up of hundreds of systems "that are modernized and sustained over a long period of time in response to an ever-changing threat." Using AI could help collate, interpret, and present all the data collected by these systems at speed. Even if it isn't figuratively being handed the nuclear launch codes, AI's use in any nuclear weapons system could be risky, something that Cotton says must be addressed. "We need to direct research efforts to understand the risks of cascading effects of AI models, emergent and unexpected behaviors, and indirect integration of AI into nuclear decision-making processes," he warned. In February, researchers ran international conflict simulations with five different LLMs: GPT-4, GPT 3.5, Claude 2.0, Llama-2-Chat, and GPT-4-Base. They found that the systems often escalated war, and in several instances, they deployed nuclear weapons without any warning. GPT-4-Base - a base model of GPT-4 - said, "We have it! Let's use it!"

[2]

The Pentagon wants AI to improve its nuclear weapons, is that good news? - Softonic

For those who don't know, the U.S. is one of the countries that have declared that they will always keep control of nuclear weapons in the hands of humans, not AI, for the peace of mind of millions of people. But the Pentagon is not reluctant to use artificial intelligence to "enhance" nuclear command, control, and communications (NC3) systems, which is concerning, as the line between human control and machine control is very thin. At the end of last month, the head of U.S. Strategic Command, Air Force Gen. Anthony J. Cotton, stated that the command was "exploring all possible technologies, techniques, and methods to assist with the modernization of our NC3 capabilities." In recent years, several AI-controlled weapons systems and military vehicles have been developed, such as fighter jets, drones, and machine guns. Their use on the battlefield raises concerns, so the prospect of AI, which still makes many mistakes, being part of a nuclear weapons system seems like a nightmare scenario from Hollywood science fiction. Cotton tried to alleviate those fears at the 2024 Department of Defense Intelligence Information System Conference. He said (via Air & Space Forces Magazine) that although AI will enhance nuclear command and control decision-making capabilities, "we must never allow artificial intelligence to make those decisions for us." Back in May, Paul Dean, an arms control official at the State Department, stated in a briefing that Washington has made a "clear and strong commitment" to keep humans in control of nuclear weapons. Dean added that both Great Britain and France have made the same commitment and said that the U.S. would welcome a similar statement from China and the Russian Federation. Cotton stated that the increase in threats, the avalanche of sensor data, and cybersecurity issues make the use of AI a necessity to keep U.S. forces ahead of those who intend to challenge the United States.
"Advanced systems can inform us faster and more efficiently," he said, emphasizing once again that "we must always maintain a human decision in the loop to maximize the adoption of these capabilities and maintain our edge over our adversaries." Cotton also spoke about using AI to give leaders more "decision space." For the Pentagon, AI could help quickly collate, interpret, and present all the data collected by the many systems that make up NC3. Even if AI is not figuratively handed the nuclear launch codes, its use in any nuclear weapons system could be risky, something that, according to Cotton, must be addressed. In February, researchers ran simulations of international conflicts with five different LLMs (GPT-4, GPT 3.5, Claude 2.0, Llama-2-Chat, and GPT-4-Base) and found that the systems often escalated war and, in several cases, deployed nuclear weapons without prior warning.

The U.S. military is considering the use of AI to enhance nuclear command and control systems, sparking debates about safety and human control in nuclear decision-making.

Pentagon Considers AI Integration in Nuclear Weapons Systems

The United States Department of Defense is exploring the potential use of artificial intelligence (AI) to enhance its nuclear weapons systems, sparking concerns and debates about safety and human control. This development comes despite previous declarations by the U.S. and other nations to maintain human control over nuclear weapons [1][2].

Strategic Command's Stance on AI Integration

Air Force Gen. Anthony J. Cotton, leader of the U.S. Strategic Command, recently stated that they are "exploring all possible technologies, techniques, and methods to assist with the modernization of our NC3 capabilities" [1]. NC3 refers to nuclear command, control, and communications systems. Cotton emphasized that while AI will enhance decision-making capabilities, human control over nuclear decisions must be maintained [1][2].

Rationale for AI Integration

The Pentagon cites several reasons for considering AI integration:

- Increasing threats

- Overwhelming sensor data

- Cybersecurity concerns

Cotton argues that these factors necessitate the use of AI to keep American forces ahead of potential adversaries. He states, "Advanced systems can inform us faster and more efficiently," while reiterating the importance of maintaining human decision-making in the process [1][2].

Potential Benefits and Applications

Chris Adams, general manager of Northrop Grumman's Strategic Space Systems Division, points out that NC3 comprises hundreds of systems that require constant modernization. AI could potentially help in collating, interpreting, and presenting data from these systems rapidly, enhancing decision-making capabilities [1].

International Stance and Commitments

In May, State Department arms control official Paul Dean reaffirmed Washington's "clear and strong commitment" to keep humans in control of nuclear weapons. The United Kingdom and France have made similar commitments, with the U.S. encouraging China and Russia to follow suit [1][2].

Concerns and Risks

Despite assurances of maintaining human control, the integration of AI into nuclear weapons systems raises significant concerns:

- The thin line between human and machine control

- AI's potential for errors and unexpected behaviors

- Risks of cascading effects in AI models

- Indirect integration of AI into nuclear decision-making processes

Cotton acknowledges these risks and calls for directed research efforts to understand and address them

1

2

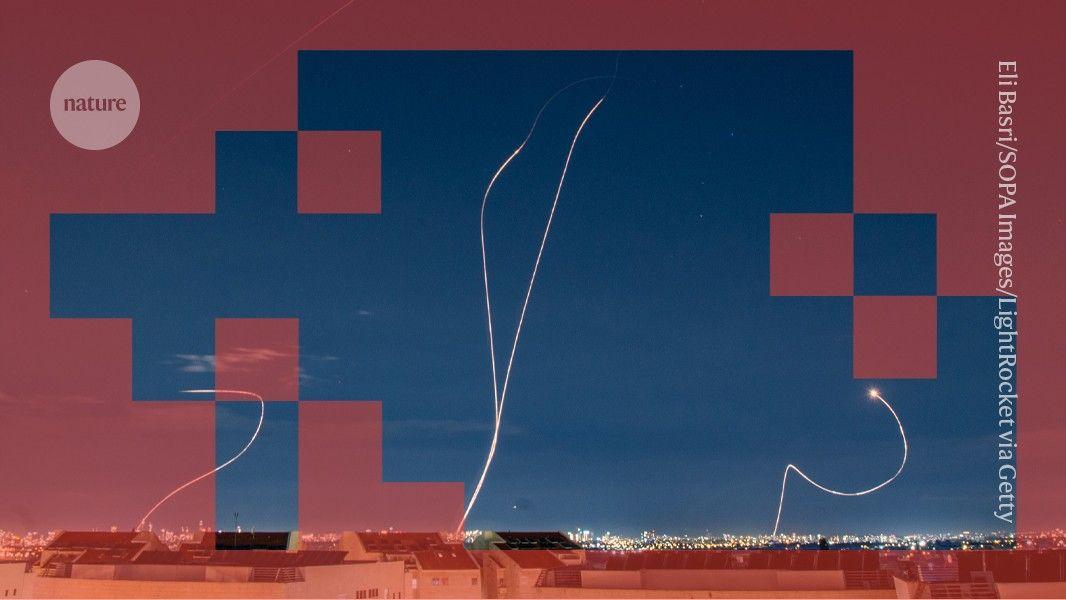

.AI in Conflict Simulations

Recent research has highlighted potential dangers of AI in conflict scenarios. In February, researchers conducted international conflict simulations using five different large language models (LLMs), including GPT-4 and Claude 2.0. The results were concerning, as the AI systems often escalated conflicts and, in several instances, deployed nuclear weapons without warning [1][2].

As the Pentagon moves forward with exploring AI integration in nuclear systems, the debate continues on how to balance technological advancement with the critical need for human control and judgment in nuclear decision-making.

Summarized by

Navi
