RunSybil raises $40M to automate penetration testing with AI agents that hack like humans

2 Sources

[1]

Exclusive: RunSybil, a startup using AI to automate penetration testing, raises $40M in VC funding in round led by Khosla Ventures | Fortune

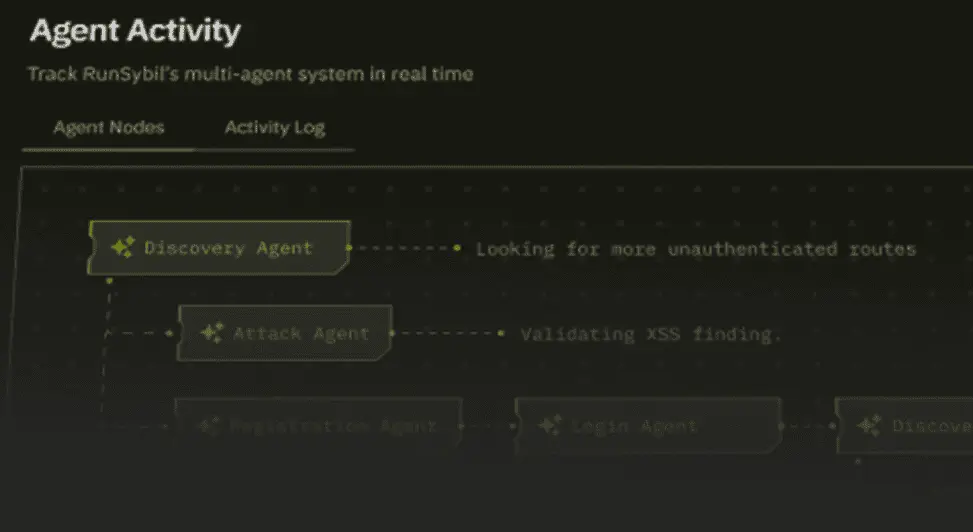

RunSybil, an AI cybersecurity startup that uses AI agents to automatically hack company software to find security weaknesses, has secured $40 million in venture capital funding. The round was led by Khosla Ventures, with participation from S32, the Anthology Fund from Anthropic and Menlo Ventures, Conviction and Elad Gil, along with angel investors including Nikesh Arora, Amit Agarwal, Jeff Dean, and other founders and leaders from companies including OpenAI, Palo Alto Networks, Stripe and Google. The company did not disclose the valuation it achieved in the new funding round. The company's AI agent, Sybil, conducts continuous autonomous penetration tests against live applications -- finding, exploiting and documenting real security vulnerabilities without humans in the loop. That's different from other security tools currently making headlines, such as Claude Code Security, which analyzes source code in applications for known vulnerabilities before it is deployed. RunSybil instead tests software that is already running, probing live systems the way a hacker would -- by exploring systems, chaining vulnerabilities together and testing authentication boundaries to find paths to sensitive data. Companies have long relied on a mix of penetration tests -- where outside security experts, or "ethical hackers," try to break into their systems; bug bounty programs that reward independent hackers for reporting flaws; and internal "red teams" that simulate real cyberattacks. RunSybil says its AI system can automate much of that work, continuously probing applications for vulnerabilities as new code is deployed. RunSybil argues this kind of automation is becoming necessary as AI reshapes how companies operate. Procurement, legal, finance, engineering and operations are all being rebuilt with AI -- including the growing use of AI agents. Yet security testing is still often treated as a discrete, scheduled event managed by a separate team on its own timeline. 
That mismatch can be especially challenging for highly regulated industries such as finance, insurance and health care, which face strict legal and audit requirements around cybersecurity. RunSybil was co-founded in 2023 by Ari Herbert-Voss, who joined OpenAI as its first security research hire in 2019, and Vlad Ionescu, who previously led offensive security red teams at Meta. Together, they say they represent a rare intersection: people who understand how to build frontier AI systems and how to hack into complex software. "We check every box that needs to be checked -- for auditors, regulators and compliance teams," Herbert-Voss said. But the real work, he said, is transforming where, when and how customers discover and fix security issues: "Not as a project, but as a permanent capability embedded in how they build." Vinod Khosla, who made an early bet on OpenAI in 2019 and often invests in companies he considers to be on the technological frontier, told Fortune that "what it takes to add security and penetration testing to the AI world is definitely frontier -- RunSybil is on the edge." There is currently little competition in this part of the offensive security market, he said, though security incumbents such as Palo Alto Networks may eventually move into the space. For now, "nobody's really knowledgeable about it except individuals like [Herbert-Voss]," he said, adding that he has long been concerned about AI's cyber capabilities falling into the hands of adversaries such as China. "We invest in founders who tackle large, unsolved problems with technically ambitious solutions," he added. "[Herbert-Voss and Ionescu] are building exactly the kind of platform security teams will need as software complexity and AI-driven development accelerate." Herbert-Voss has long been steeped in both hacking and AI. 
Growing up in a mostly Mormon community in Utah, he said he was drawn to the online hacker scene in middle and high school but pivoted away after friends "started getting arrested." While pursuing a Ph.D. at Harvard University studying machine learning and ways to make algorithms more efficient, he first heard about OpenAI. He dropped out of Harvard, he said, after becoming convinced that the rapid scaling of AI models -- training larger systems with more data and computing power -- would unlock powerful new capabilities. "Once OpenAI dropped GPT-2, I said wow, this changes everything about the economics of what it would take to run a cyber campaign," he explained. He sent a couple of hacker demos to OpenAI CEO Sam Altman and Jack Clark, then-head of policy at OpenAI who went on to co-found Anthropic. Both of them expressed their concerns about the potential misuse of LLMs and asked Herbert-Voss to come on to do security research. But by 2022, Herbert-Voss said he also began to see how quickly offensive cyber capabilities could evolve once powerful language models became widely available, including to malicious actors. Those same advances, he said, could dramatically expand cyber threats. That led to Herbert-Voss's decision to leave OpenAI and start RunSybil as a research project. RunSybil currently works with startups including Cursor, Turbopuffer, Notion, Baseten, and Thinking Machines Lab, as well as what the company says are major financial institutions and Fortune 500 companies. (The company declined to name any of those Fortune 500 or financial customers.) Herbert-Voss said that customers have already reported finding critical vulnerabilities that had gone undetected using traditional methods.

[2]

RunSybil raises $40M to automate offensive security with AI agents - SiliconANGLE

Offensive security startup RunSybil Inc. said today it has closed on a $40 million round of funding to help enterprises find and fix critical vulnerabilities in their software before attackers get to them first. The round was led by Khosla Ventures and saw the participation of several venture capital heavy hitters, including Menlo Ventures, Anthropic PBC's Anthology Fund, S32 and Conviction. Notable angel investors in the round include Elad Gil, Palo Alto Networks Chief Executive Nikesh Arora, Datadog Inc. President Amit Agarwal and Google DeepMind Chief Scientist Jeff Dean. The investors RunSybil has managed to attract offer a big hint to the nature of its offensive security platform. It leverages autonomous artificial intelligence agents to simulate the behavior of sophisticated human hackers. Unlike traditional cybersecurity tools that require access to a company's source code, RunSybil's agents interact with systems via their standard interfaces, searching for forgotten endpoints and probing authentication boundaries. They use reasoning to try to replicate an attacker's intuition, then chain together multiple minor vulnerabilities to try to uncover paths to sensitive data that are usually overlooked by legacy scanning tools. It can be thought of as an AI-powered red team, looking to exploit systems and validate security gaps in real time. RunSybil argues that traditional scanning tools leave a persistent visibility gap in cybersecurity due to their reliance on static code analysis and infrequent manual testing. While existing tools excel at identifying known patterns, they're unable to properly replicate how attackers explore and manipulate live systems. Enterprises also have the option to pay for traditional penetration testing, which offers a more thorough examination of their security, but this is done by human experts, meaning it's slow and comes at a steep cost. 
As such, most companies only do penetration testing once or twice a year. Some companies rely on bug bounty programs to entice third parties to explore their systems looking for weaknesses, but the results of these can be inconsistent. Independent researchers are motivated by the rewards on offer, and so they try to cherry-pick the easy-to-find bugs and secure a quick payout, rather than conducting more comprehensive assessments of a system's architecture. In other words, there are problems with every kind of cybersecurity testing method, said RunSybil co-founder and CEO Ari Herbert-Voss. "Both approaches miss huge chunks of your actual attack surface," he explained. "We're the first to provide comprehensive black-box testing using AI to reason like a security researcher and find critical vulnerabilities without ever seeing a line of code." The startup says its novel approach has already delivered results for AI startups such as Cursor and Notion Labs Inc., as well as a number of unnamed Fortune 500 companies. Those companies report detecting critical flaws that were repeatedly missed by traditional bug bounty hunters and penetration tests. It also claims to have reduced the number of false positives by 90% compared to standard security scanners. One advantage of RunSybil's approach is that its AI agents learn from each previous interaction with a system, which means they become more capable over time. According to Herbert-Voss, it's like having access to an elite, highly paid team of security researchers. Khosla Ventures founder Vinod Khosla said RunSybil's approach to security testing will lead to a fundamental shift in how modern software is protected. "We invest in founders who tackle large, unsolved problems with technically ambitious solutions," he explained. "Ari and Vlad are building exactly the kind of platform security teams will need as software complexity and AI-driven development accelerate." 
With its war chest now stuffed with cash, RunSybil plans to accelerate its research and development and expand its agentic security testing capabilities further. At the same time, it will scale its go-to-market teams and hire more researchers.

RunSybil, an AI cybersecurity startup founded by OpenAI's first security hire, has raised $40 million in funding led by Khosla Ventures. The company's AI agent continuously tests live applications by probing systems like a hacker would, finding software vulnerabilities that traditional security tools miss. This approach aims to transform security testing from a scheduled event into a permanent capability embedded in how companies build software.

RunSybil Secures $40 Million in Funding for AI-Powered Security Platform

RunSybil has closed a $40 million funding round led by Khosla Ventures, marking a significant investment in AI cybersecurity [1]. The round attracted participation from S32, Anthropic's Anthology Fund, Menlo Ventures, and Conviction, alongside notable angel investors including Nikesh Arora, Amit Agarwal, Jeff Dean, and Elad Gil [2]. The funding backs the startup's approach to offensive security, which uses autonomous AI agents to simulate human hackers and identify software vulnerabilities before malicious actors can exploit them.

How RunSybil's AI Agent Transforms Penetration Testing

The company's core technology, an AI agent called Sybil, conducts continuous autonomous penetration tests against live applications without requiring access to source code [1]. Unlike tools such as Claude Code Security, which analyze source code for known vulnerabilities before deployment, RunSybil tests software that is already running. The platform performs black-box testing by exploring systems through their standard interfaces, searching for forgotten endpoints, and probing authentication boundaries to uncover paths to sensitive data [2]. This method enables the AI to chain together multiple minor vulnerabilities that legacy scanning tools typically overlook, replicating the reasoning and intuition of sophisticated attackers.

Addressing Critical Gaps in Traditional Security Testing

Companies traditionally rely on a combination of manual penetration testing, bug bounty programs, and internal red team exercises to identify security gaps [1]. However, these approaches face significant limitations. Manual penetration testing conducted by human experts is expensive and slow, leading most organizations to conduct assessments only once or twice annually. Bug bounty programs produce inconsistent results, as independent researchers often cherry-pick easy-to-find bugs for quick payouts rather than conducting comprehensive evaluations of a system's attack surface [2]. Co-founder and CEO Ari Herbert-Voss explained that "both approaches miss huge chunks of your actual attack surface," positioning RunSybil as the first to provide comprehensive black-box testing using AI to reason like a security researcher without ever seeing a line of code [2].

Proven Results and Growing Adoption

RunSybil says its security testing has already delivered results for early customers including AI startups Cursor and Notion Labs, along with several unnamed Fortune 500 companies [2]. These organizations report detecting critical flaws that were repeatedly missed by traditional bug bounty hunters and penetration tests. The company also claims to have reduced false positives by 90% compared to standard security scanners, and says its AI agents improve over time by learning from each interaction with a system. RunSybil argues this matters as companies increasingly deploy AI across procurement, legal, finance, engineering, and operations while security testing is still treated as a discrete, scheduled event on a separate timeline [1].

Founders Bring Rare Expertise to Automate Penetration Testing

RunSybil was co-founded in 2023 by Ari Herbert-Voss, who joined OpenAI as its first security research hire in 2019, and Vlad Ionescu, who previously led offensive security red teams at Meta [1]. Herbert-Voss dropped out of his Harvard Ph.D. program studying machine learning after becoming convinced that rapid AI scaling would unlock powerful new capabilities. After seeing GPT-2, he realized "this changes everything about the economics of what it would take to run a cyber campaign" and joined OpenAI after sending hacker demos to CEO Sam Altman and then-head of policy Jack Clark [1]. By 2022, he recognized that offensive cyber capabilities could evolve rapidly once powerful language models became widely available, including to malicious actors.

Investor Confidence in Frontier Security Technology

Vinod Khosla, who made an early bet on OpenAI in 2019, told Fortune that "what it takes to add security and penetration testing to the AI world is definitely frontier -- RunSybil is on the edge" [1]. He noted there is currently little competition in this part of the offensive security market, though incumbents such as Palo Alto Networks may eventually enter the space. Khosla emphasized that "we invest in founders who tackle large, unsolved problems with technically ambitious solutions," adding that Herbert-Voss and Ionescu "are building exactly the kind of platform security teams will need as software complexity and AI-driven development accelerate" [2].

What This Means for Enterprise Security

Herbert-Voss emphasized that RunSybil aims to transform where, when, and how customers discover and fix security issues: "Not as a project, but as a permanent capability embedded in how they build" [1]. This shift is especially relevant for highly regulated industries such as finance, insurance, and healthcare, which face strict legal and audit requirements around cybersecurity. The company plans to use its funding to accelerate research and development, expand its agentic security testing capabilities, scale go-to-market teams, and hire additional researchers [2]. As AI reshapes enterprise operations and development cycles accelerate, continuous automated testing may become essential for organizations seeking to protect their systems against increasingly sophisticated threats while meeting compliance requirements.

Summarized by Navi
