AI Chatbots May Hinder Deep Learning Compared to Traditional Web Search, Study Finds

4 Sources

[1]

Chatbots may make learning feel easy -- but it's superficial

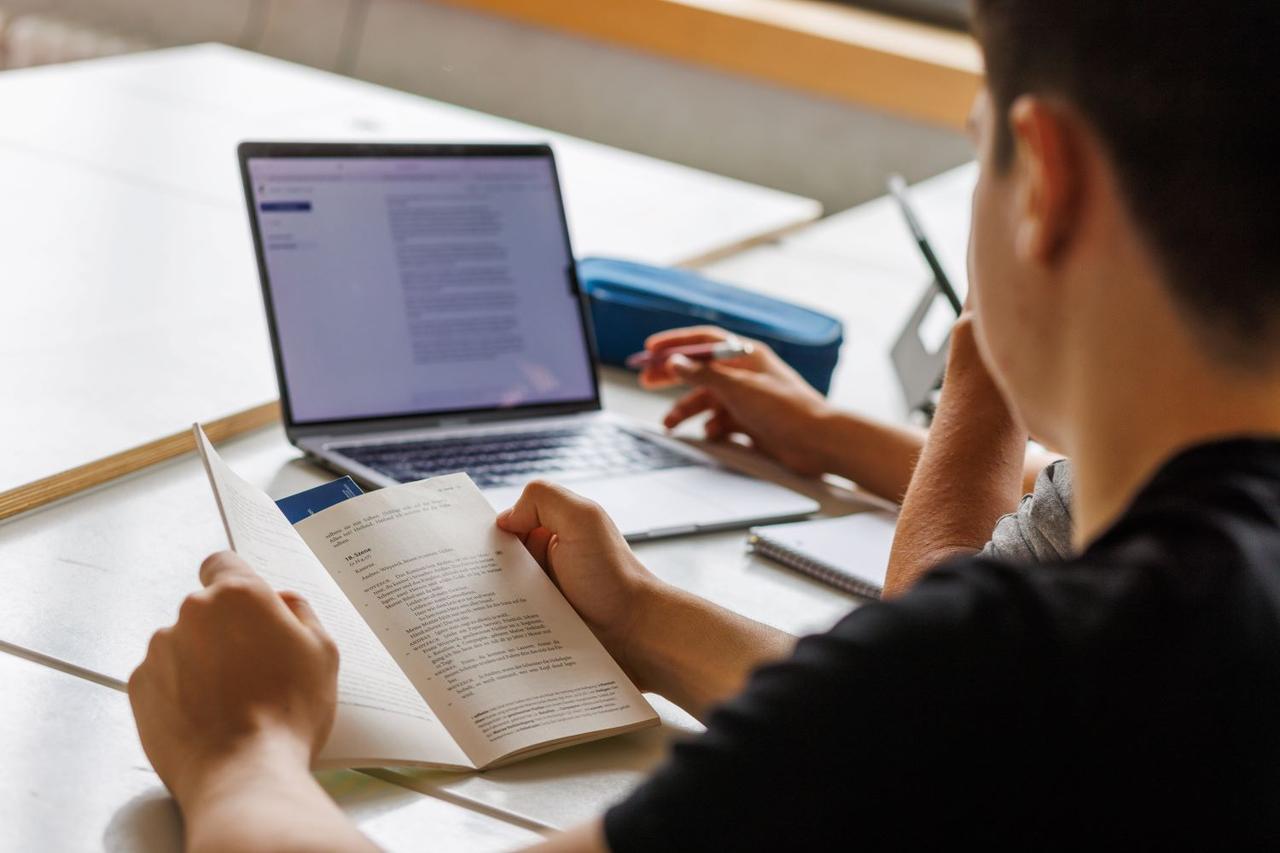

Googling the old-fashioned way leads to deeper learning than using AI tools, a study finds

When it comes to learning something new, old-fashioned Googling might be the smarter move compared with asking ChatGPT. Large language models, or LLMs -- the artificial intelligence systems that power chatbots like ChatGPT -- are increasingly being used as sources of quick answers. But in a new study, people who used a traditional search engine to look up information developed deeper knowledge than those who relied on an AI chatbot, researchers report in the October PNAS Nexus.

"LLMs are fundamentally changing not just how we acquire information but how we develop knowledge," says Shiri Melumad, a consumer psychology researcher at the University of Pennsylvania. "The more we learn about their effects -- both their benefits and risks -- the more effectively people can use them, and the better they can be designed."

Melumad and Jin Ho Yun, a neuroscientist at the University of Pennsylvania, ran a series of experiments comparing what people learn through LLMs versus traditional web searches. Over 10,000 participants across seven experiments were randomly assigned to research different topics -- such as how to grow a vegetable garden or how to lead a healthier lifestyle -- using either Google or ChatGPT, then write advice for a friend based on what they'd learned. The researchers evaluated how much participants learned from the task and how invested they were in their advice.

Even controlling for the information available -- for instance, by using identical sets of facts in simulated interfaces -- the pattern held: Knowledge gained from chatbot summaries was shallower compared with knowledge gained from web links. Indicators for "shallow" versus "deep" knowledge were based on participant self-reporting, natural language processing tools and evaluations by independent human judges.

The analysis also found that those who learned via LLMs were less invested in the advice they gave, produced less informative content and were less likely to adopt the advice for themselves compared with those who used web searches.

"The same results arose even when participants used a version of ChatGPT that provided optional web links to original sources," Melumad says. Only about a quarter of the roughly 800 participants in that "ChatGPT with links" experiment were even motivated to click on at least one link.

"While LLMs can reduce the load of having to synthesize information for oneself, this ease comes at the cost of developing deeper knowledge on a topic," she says. She also adds that more could be done to design search tools that actively encourage users to dig deeper.

Psychologist Daniel Oppenheimer of Carnegie Mellon University in Pittsburgh says that while this is a good project, he would frame it differently. He thinks it's more accurate to say that "LLMs reduce motivation for people to do their own thinking," rather than claiming that people who synthesize information for themselves gain a deeper understanding than those who receive a synthesis from another entity, such as an LLM.

However, he adds that he would hate for people to abandon a useful tool because they think it will universally lead to shallower learning. "Like all learning," he says, "the effectiveness of the tool depends on how you use it. What this finding is showing is that people don't naturally use it as well as they might."

[2]

Learning with AI falls short compared to old-fashioned web search

Shiri Melumad does not work for, consult, own shares in or receive funding from any company or organisation that would benefit from this article, and has disclosed no relevant affiliations beyond her academic appointment.

Since the release of ChatGPT in late 2022, millions of people have started using large language models to access knowledge. And it's easy to understand their appeal: Ask a question, get a polished synthesis and move on - it feels like effortless learning.

However, a new paper I co-authored offers experimental evidence that this ease may come at a cost: When people rely on large language models to summarize information on a topic for them, they tend to develop shallower knowledge about it compared to learning through a standard Google search.

Co-author Jin Ho Yun and I, both professors of marketing, reported this finding in a paper based on seven studies with more than 10,000 participants. Most of the studies used the same basic paradigm: Participants were asked to learn about a topic - such as how to grow a vegetable garden - and were randomly assigned to do so by using either an LLM like ChatGPT or the "old-fashioned way," by navigating links using a standard Google search.

No restrictions were put on how they used the tools; they could search on Google as long as they wanted and could continue to prompt ChatGPT if they felt they wanted more information. Once they completed their research, they were then asked to write advice to a friend on the topic based on what they learned.

The data revealed a consistent pattern: People who learned about a topic through an LLM versus web search felt that they learned less, invested less effort in subsequently writing their advice, and ultimately wrote advice that was shorter, less factual and more generic.

In turn, when this advice was presented to an independent sample of readers, who were unaware of which tool had been used to learn about the topic, they found the advice to be less informative, less helpful, and they were less likely to adopt it.

We found these differences to be robust across a variety of contexts. For example, one possible reason LLM users wrote briefer and more generic advice is simply that the LLM results exposed users to less eclectic information than the Google results. To control for this possibility, we conducted an experiment where participants were exposed to an identical set of facts in the results of their Google and ChatGPT searches. Likewise, in another experiment we held constant the search platform - Google - and varied whether participants learned from standard Google results or Google's AI Overview feature.

The findings confirmed that, even when holding the facts and platform constant, learning from synthesized LLM responses led to shallower knowledge compared to gathering, interpreting and synthesizing information for oneself via standard web links.

Why it matters

Why did the use of LLMs appear to diminish learning? One of the most fundamental principles of skill development is that people learn best when they are actively engaged with the material they are trying to learn.

When we learn about a topic through Google search, we face much more "friction": We must navigate different web links, read informational sources, and interpret and synthesize them ourselves.

While more challenging, this friction leads to the development of a deeper, more original mental representation of the topic at hand. But with LLMs, this entire process is done on the user's behalf, transforming learning from a more active to passive process.

What's next?

To be clear, we do not believe the solution to these issues is to avoid using LLMs, especially given the undeniable benefits they offer in many contexts. Rather, our message is that people simply need to become smarter or more strategic users of LLMs - which starts by understanding the domains wherein LLMs are beneficial versus harmful to their goals.

Need a quick, factual answer to a question? Feel free to use your favorite AI co-pilot. But if your aim is to develop deep and generalizable knowledge in an area, relying on LLM syntheses alone will be less helpful.

As part of my research on the psychology of new technology and new media, I am also interested in whether it's possible to make LLM learning a more active process. In another experiment we tested this by having participants engage with a specialized GPT model that offered real-time web links alongside its synthesized responses. There, however, we found that once participants received an LLM summary, they weren't motivated to dig deeper into the original sources. The result was that the participants still developed shallower knowledge compared to those who used standard Google.

Building on this, in my future research I plan to study generative AI tools that impose healthy frictions for learning tasks - specifically, examining which types of guardrails or speed bumps most successfully motivate users to actively learn more beyond easy, synthesized answers. Such tools would seem particularly critical in secondary education, where a major challenge for educators is how best to equip students to develop foundational reading, writing and math skills while also preparing for a real world where LLMs are likely to be an integral part of their daily lives.

The Research Brief is a short take on interesting academic work.

[3]

Learning With AI Falls Short Compared to Old-Fashioned Web Search

Since the release of ChatGPT in late 2022, millions of people have started using large language models to access knowledge. And it’s easy to understand their appeal: Ask a question, get a polished synthesis and move on - it feels like effortless learning.

However, a new paper I co-authored offers experimental evidence that this ease may come at a cost: When people rely on large language models to summarize information on a topic for them, they tend to develop shallower knowledge about it compared to learning through a standard Google search.

Co-author Jin Ho Yun and I, both professors of marketing, reported this finding in a paper based on seven studies with more than 10,000 participants. Most of the studies used the same basic paradigm: Participants were asked to learn about a topic - such as how to grow a vegetable garden - and were randomly assigned to do so by using either an LLM like ChatGPT or the “old-fashioned way,” by navigating links using a standard Google search.

No restrictions were put on how they used the tools; they could search on Google as long as they wanted and could continue to prompt ChatGPT if they felt they wanted more information. Once they completed their research, they were then asked to write advice to a friend on the topic based on what they learned.

The data revealed a consistent pattern: People who learned about a topic through an LLM versus web search felt that they learned less, invested less effort in subsequently writing their advice, and ultimately wrote advice that was shorter, less factual and more generic.

In turn, when this advice was presented to an independent sample of readers, who were unaware of which tool had been used to learn about the topic, they found the advice to be less informative, less helpful, and they were less likely to adopt it.

We found these differences to be robust across a variety of contexts. For example, one possible reason LLM users wrote briefer and more generic advice is simply that the LLM results exposed users to less eclectic information than the Google results. To control for this possibility, we conducted an experiment where participants were exposed to an identical set of facts in the results of their Google and ChatGPT searches. Likewise, in another experiment we held constant the search platform - Google - and varied whether participants learned from standard Google results or Google’s AI Overview feature.

The findings confirmed that, even when holding the facts and platform constant, learning from synthesized LLM responses led to shallower knowledge compared to gathering, interpreting and synthesizing information for oneself via standard web links.

Why did the use of LLMs appear to diminish learning? One of the most fundamental principles of skill development is that people learn best when they are actively engaged with the material they are trying to learn.

When we learn about a topic through Google search, we face much more “friction”: We must navigate different web links, read informational sources, and interpret and synthesize them ourselves.

While more challenging, this friction leads to the development of a deeper, more original mental representation of the topic at hand. But with LLMs, this entire process is done on the user’s behalf, transforming learning from a more active to passive process.

To be clear, we do not believe the solution to these issues is to avoid using LLMs, especially given the undeniable benefits they offer in many contexts. Rather, our message is that people simply need to become smarter or more strategic users of LLMs - which starts by understanding the domains wherein LLMs are beneficial versus harmful to their goals.

Need a quick, factual answer to a question? Feel free to use your favorite AI co-pilot. But if your aim is to develop deep and generalizable knowledge in an area, relying on LLM syntheses alone will be less helpful.

As part of my research on the psychology of new technology and new media, I am also interested in whether it’s possible to make LLM learning a more active process. In another experiment we tested this by having participants engage with a specialized GPT model that offered real-time web links alongside its synthesized responses. There, however, we found that once participants received an LLM summary, they weren’t motivated to dig deeper into the original sources. The result was that the participants still developed shallower knowledge compared to those who used standard Google.

Building on this, in my future research I plan to study generative AI tools that impose healthy frictions for learning tasks - specifically, examining which types of guardrails or speed bumps most successfully motivate users to actively learn more beyond easy, synthesized answers. Such tools would seem particularly critical in secondary education, where a major challenge for educators is how best to equip students to develop foundational reading, writing and math skills while also preparing for a real world where LLMs are likely to be an integral part of their daily lives.

[4]

Something Disturbing Happens When You "Learn" Something With ChatGPT

ChatGPT and other AI chatbots are replacing the search engine. Instead of letting you suffer the laborious task of looking up sources of information, these powerful large language models will simply concoct an answer for you, with the minor risk that it might be totally made up.

It turns out there's another hazard: while getting your answers this way may be quick, it isn't great for actually learning, according to a new study published in the journal PNAS Nexus.

"When people rely on large language models to summarize information on a topic for them, they tend to develop shallower knowledge about it compared to learning through a standard Google search," study co-lead author Shiri Melumad, a professor at the Wharton School of the University of Pennsylvania, wrote in an essay for The Conversation about her work.

The findings are based on an analysis of seven studies with more than 10,000 participants. The gist of their experiments went like this: the participants were told to learn about a topic, and were randomly assigned to use either only an AI chatbot like ChatGPT or a standard search engine like Google to do their research. At the end, the participants were asked to write advice to a friend about what they learned.

A clear pattern emerged. The participants who used AI to do their research wrote shorter advice, with generic tips and less factual information, while the people who used a Google search produced more detailed and thoughtful tips. The pattern held even after controlling for factors like the tools participants used and the information they saw during their research, the latter by showing each group the same facts.

"The findings confirmed that, even when holding the facts and platform constant, learning from synthesized LLM responses led to shallower knowledge compared to gathering, interpreting and synthesizing information for oneself via standard web links," Melumad wrote.

Scientists are still only beginning to grasp the long-term effects of AI usage on the brain, but the body of evidence so far suggests alarming risks. One major study that turned heads was conducted by researchers from Carnegie Mellon and Microsoft, and found that people who trusted the accuracy of AI tools saw their critical thinking skills atrophy. Another study linked students who heavily relied on ChatGPT to memory loss and tanking grades.

"One of the most fundamental principles of skill development is that people learn best when they are actively engaged with the material they are trying to learn," Melumad explained. "When we learn about a topic through Google search, we face much more 'friction': We must navigate different web links, read informational sources, and interpret and synthesize them ourselves."

"But with LLMs," Melumad added, "this entire process is done on the user's behalf, transforming learning from a more active to passive process."

While scientists continue to explore AI's risks, the tech is making rapid inroads into education, where it's a popular tool for cheating on assignments. Leaders like OpenAI, Microsoft, and Anthropic are spending millions of dollars to provide teachers training on how to use their AI products, while universities partner with these same firms to create their own tailor-made chatbots to foist on their students, like Duke University's creatively named collaboration with OpenAI, "DukeGPT."

A comprehensive study involving over 10,000 participants reveals that using AI chatbots like ChatGPT for learning leads to shallower knowledge acquisition compared to traditional Google searches, raising concerns about the educational impact of AI tools.

Study Reveals Learning Limitations of AI Chatbots

A groundbreaking study published in PNAS Nexus has found that while AI chatbots like ChatGPT offer convenient access to information, they may significantly impair deep learning compared to traditional web search methods. The research, conducted by Shiri Melumad from the University of Pennsylvania's Wharton School and neuroscientist Jin Ho Yun, involved over 10,000 participants across seven comprehensive experiments [1].

The study's methodology was straightforward yet revealing. Participants were randomly assigned to research various topics, such as vegetable gardening or healthy lifestyle practices, using either large language models like ChatGPT or traditional Google searches. After completing their research, participants wrote advice for friends based on their newly acquired knowledge [2].

Source: Science News

Consistent Pattern of Shallow Learning

The results demonstrated a consistent and concerning pattern across all experiments. Participants who relied on AI chatbots developed notably shallower knowledge compared to those using traditional search engines. This manifested in several measurable ways: chatbot users felt they learned less, invested less effort in writing advice, and produced content that was shorter, less factual, and more generic [3].

When independent evaluators, unaware of which research method participants had used, assessed the advice, they consistently rated content from traditional search users as more informative, helpful, and actionable. The evaluators were also more likely to adopt advice generated through conventional web research methods [1].

Source: Futurism

Controlled Experiments Confirm Findings

To ensure their findings weren't simply due to different information exposure, researchers conducted controlled experiments where participants accessed identical sets of facts through both Google and ChatGPT interfaces. Even under these controlled conditions, the pattern persisted: synthesized AI responses led to shallower knowledge acquisition compared to gathering and interpreting information independently [2].

Additionally, researchers tested Google's AI Overview feature against standard Google results while keeping the platform constant. The findings remained consistent, confirming that the issue lies in the synthesized nature of AI responses rather than platform differences [3].

The Science Behind Active Learning

The research team attributes these findings to fundamental principles of skill development and cognitive psychology. "One of the most fundamental principles of skill development is that people learn best when they are actively engaged with the material they are trying to learn," Melumad explained [4].

Traditional web searches require users to navigate multiple sources, read various informational materials, and synthesize information independently. This "friction," while more challenging, leads to deeper and more original mental representations of topics. In contrast, AI chatbots perform this entire cognitive process on behalf of users, transforming learning from an active to a passive experience [2].

Source: Gizmodo

Broader Implications for Education

These findings raise significant concerns about AI's rapid integration into educational settings. The technology is increasingly being adopted in schools and universities, with major companies like OpenAI, Microsoft, and Anthropic investing millions in teacher training programs. Universities are partnering with AI firms to create custom chatbots for students, such as Duke University's "DukeGPT" collaboration with OpenAI [4].

The research adds to growing evidence about AI's potential cognitive impacts. Previous studies have linked heavy AI reliance to deteriorating critical thinking skills and, among students, memory loss and declining academic performance [4].

Strategic AI Usage Recommendations

Rather than advocating complete avoidance of AI tools, researchers emphasize the need for strategic usage. "Need a quick, factual answer to a question? Feel free to use your favorite AI co-pilot. But if your aim is to develop deep and generalizable knowledge in an area, relying on LLM syntheses alone will be less helpful," Melumad noted [2].

The research team tested whether providing web links alongside AI summaries might encourage deeper engagement. However, they found that once participants received AI-generated summaries, only about 25% were motivated to explore additional sources, suggesting that the convenience of synthesized information reduces motivation for further investigation [1].

Summarized by

Navi
