Taalas raises $169 million to build custom AI chips that hardwire models into silicon

4 Sources

[1]

Chip startup Taalas raises $169 million to help build AI chips to take on Nvidia

SAN FRANCISCO, Feb 19 (Reuters) - Toronto-based chip startup Taalas said on Thursday it had raised $169 million and has developed a chip capable of running artificial intelligence applications faster and more cheaply than conventional approaches. Taalas has raised a total of $219 million from investors such as Quiet Capital, Fidelity and Pierre Lamond, a chip industry venture capitalist.

Taalas' announcement arrives weeks after Nvidia's (NVDA.O) deal to license intellectual property from chip startup Groq for $20 billion, which reignited interest in a crop of startups and technologies used to perform specific elements of AI inference, the process where an AI model, such as the one powering OpenAI's ChatGPT, responds to user queries.

Taalas' approach to chip design involves printing portions of an AI model onto a piece of silicon, effectively producing a custom chip suited for specific models such as a small version of Meta's Llama model. The customized silicon is paired with large amounts of speedy but costly on-chip memory called static random-access memory (SRAM), which is similar to Groq's design.

It is the bespoke design for each model that gives the Taalas chip its advantage, CEO Ljubisa Bajic told Reuters in an interview. "This hardwiring is partly what gives us the speed," he said.

The startup assembles a nearly complete chip, which has roughly 100 layers, and then performs the final customization on two of the metal layers, Bajic said. It takes TSMC (2330.TW), which Taalas uses for manufacturing, about two months to complete fabrication of a chip customized for a particular model, he said. It takes roughly six months to fabricate an AI processor such as Nvidia's Blackwell.

Taalas said it can produce chips capable of running less sophisticated models now and has plans to build a processor capable of deploying a cutting-edge model, such as GPT-5.2, by the end of this year.

Groq's first generation of processor used an SRAM-heavy approach to its chip design, as does another startup, Cerebras, which signed a cloud computing deal with OpenAI in January. Startup d-Matrix also uses a similar design.

Reporting by Max A. Cherney in San Francisco; Editing by Edward Tobin and Lisa Shumaker

Max A. Cherney is a correspondent for Reuters based in San Francisco, where he reports on the semiconductor industry and artificial intelligence. He joined Reuters in 2023 and has previously worked for Barron's magazine and its sister publication, MarketWatch. Cherney graduated from Trent University with a degree in history.

[2]

Taalas raises $169M in funding to develop model-specific AI chips - SiliconANGLE

Taalas Inc., a startup that develops chips optimized to run specific artificial intelligence models, has raised $169 million in funding. Reuters reported today that the company's backers include Quiet Capital, Fidelity and prominent semiconductor investor Pierre Lamond. The round brings Taalas' total outside funding to more than $200 million.

The company's first product is a chip optimized to run the open-source Llama 3.1 8B language model. Taalas says that it can generate 17,000 output tokens per second, or 73 times more than Nvidia Corp.'s H200 graphics card. Moreover, the processor provides that performance while using one tenth the power.

Tailoring a chip to a specific AI model improves efficiency partly because it allows engineers to do away with redundant components. An off-the-shelf graphics card, for example, might include more DRAM than an AI model can use. A custom chip can swap the unused RAM for extra transistors that enable the model to run faster.

Developing a fully custom processor is prohibitively expensive for most AI projects. According to Taalas, its engineers lower costs by customizing only 2 of the more than 100 layers that make up its chips. In most processors, only a handful of layers contain transistors. The others host supporting components such as the wires that move data between different chip sections. Usually, the layers that contain the wiring are located above the transistors.

The custom layers in Taalas' chips feature what it describes as a mask ROM recall fabric. ROM is a type of memory to which users can only write data once. From there, they can read the data but not edit it. According to Next Platform, each mask ROM recall fabric module can store four bits. Taalas' architecture uses only a single transistor to process that data. The transistor carries out matrix multiplications, the mathematical calculations that AI models use to make decisions.

One benefit of Taalas' design is that it removes the need for HBM modules. Those are integrated memory chips that a graphics card uses to store AI models' data. Moving information to and from HBM takes a relatively long time, which slows down processing. According to Taalas, its approach avoids that delay while also doing away with the various auxiliary components needed to support a chip's HBM modules.

The company is reportedly developing a new chip that can run a Llama model with 20 billion parameters. It's expected to be ready this summer. Taalas plans to follow up the chip with an even more advanced processor, HC2, that will be capable of running a frontier model.

[3]

This New AI Chipmaker, Taalas, Hard-Wires AI Models Into Silicon to Make Them Faster and Cheaper; Early Results Crush Modern Solutions

Well, it appears that the chip startup Taalas has found a solution to LLM response latency and performance by creating dedicated hardware that 'hardwires' AI models. When you look at today's world of AI compute, latency is emerging as a massive constraint for modern-day compute providers, mainly because, in an agentic environment, the primary moat lies in token-per-second (TPS) figures and how quickly you can get a task done. One solution the industry sees is integrating SRAM into their offerings, and companies like Cerebras and Groq are already exploring it. However, the startup Taalas has apparently explored a rather intriguing route: pivot away from general-purpose computing towards ASICs for LLMs.

"Founded 2.5 years ago, Taalas developed a platform for transforming any AI model into custom silicon. From the moment a previously unseen model is received, it can be realized in hardware in only two months. The resulting Hardcore Models are an order of magnitude faster, cheaper, and lower power than software-based implementations." - Taalas

According to the company, its approach focuses on two different fundamentals. The first is the specialization of AI workloads at the hardware level. And when we say hardware-focused, it literally means mapping specific neural networks of LLMs onto the silicon itself, to optimize infrastructure for each model. The second target area is what the company calls "merging storage and computation", and here, the focus is on overcoming memory walls and the overhead in data communications within a general-purpose system. With their solution, all computation happens at "DRAM-level" density to ensure faster intercommunication, which is one of the reasons Taalas has managed to solve the latency problem with LLMs. Their solution doesn't include advanced cooling, HBM, packaging, and complex integration; instead, all the innovation happens within the engineering dynamics of silicon.
Taalas has also showcased its first product, called HC1, which integrates Meta's Llama 3.1 8B LLM. The performance results are 'shocking' to say the least. Taalas delivers 10x the TPS of today's "high-end" infrastructure while achieving 20x lower production costs. Well, you might think that latency and performance constraints are solved here, but let's look at the HC1 chip from a technical angle. It features TSMC's 6nm node and a chip size up to 815 mm², which is almost the size of NVIDIA's H100 chip. The HC1 hosts an eight-billion-parameter model, while today's frontier LLMs scale up to one trillion parameters. And, if you have guessed it by now, Taalas would need to rework its silicon strategy. And the only way to scale up performance is to offer a cluster-based approach, and according to Taalas, they have already done this with DeepSeek's R1, achieving a 12,000 TPS/user figure in a 30-chip configuration. So, the primary constraints now lie in market adoption and the business model. Given this hardwired approach, hardware would indeed be specific to certain LLMs, without the option to change model weights, but given the startup's speed figures, it isn't a bad bet.

[4]

Chip startup Taalas raises $169 million to help build AI chips to take on Nvidia

SAN FRANCISCO, Feb 19 (Reuters) - Toronto-based chip startup Taalas said on Thursday it had raised $169 million and said it has developed a chip capable of running artificial intelligence applications faster and more cheaply than conventional approaches.

Taalas' announcement arrives weeks after Nvidia's Christmas Eve deal to license intellectual property from chip startup Groq for $20 billion, which reignited interest in a crop of startups and technologies used to perform specific elements of AI inference, the process where an AI model, such as the one that powers OpenAI's ChatGPT, responds to user queries.

Taalas' approach to chip design involves printing portions of an AI model onto a piece of silicon, effectively producing a custom chip suited for specific models such as a small version of Meta's model known as Llama. The customized silicon is paired with large amounts of speedy but costly on-chip memory called SRAM, which is similar to Groq's design.

But it's the bespoke design for each model that gives the Taalas chip its advantage. "This hard wiring is partly what gives us the speed," CEO Ljubisa Bajic told Reuters in an interview.

The startup assembles a nearly complete chip, which has roughly 100 layers, and then performs the final customization on two of the metal layers, Bajic said. It takes TSMC, which Taalas uses for manufacturing, about two months to complete fabrication of a chip customized for a particular model, he said. It takes roughly six months to fabricate an AI processor such as Nvidia's Blackwell.

Taalas said it can produce chips capable of running less sophisticated models now and has plans to build a processor capable of deploying a cutting-edge model, such as GPT-5.2, by the end of this year.

Groq's first generation of processor used an SRAM-heavy approach to its chip design, as do two other startups: Cerebras, which signed a January cloud computing deal with OpenAI, and D-Matrix.

(Reporting by Max A. Cherney in San Francisco; editing by Edward Tobin)

Toronto-based chip startup Taalas secured $169 million in funding to develop AI chips that embed neural networks directly into silicon. The company claims its HC1 chip runs Meta's Llama 3.1 8B model 73 times faster than Nvidia's H200 while using one-tenth the power, challenging conventional approaches to AI inference.

Taalas Secures Major Funding to Challenge Nvidia in AI Chip Market

Toronto-based chip startup Taalas announced it raised $169 million in a funding round that brings its total capital to $219 million [1]. Investors include Quiet Capital, Fidelity, and prominent semiconductor venture capitalist Pierre Lamond [1]. The startup's approach centers on hardwiring AI models into silicon to deliver faster and cheaper AI applications, positioning itself to take on Nvidia in the rapidly evolving AI inference market.

Revolutionary Approach to Model-Specific AI Chips

The chip startup Taalas has developed a distinctive method for custom-designing chips that involves printing portions of an AI model directly onto silicon [1]. This creates bespoke processors tailored for specific models such as Meta's Llama 3.1 8B language model [2]. CEO Ljubisa Bajic explained that "this hardwiring is partly what gives us the speed" [4]. The customized silicon pairs with large amounts of on-chip SRAM, similar to designs from Groq and Cerebras [1].

Source: Wccftech

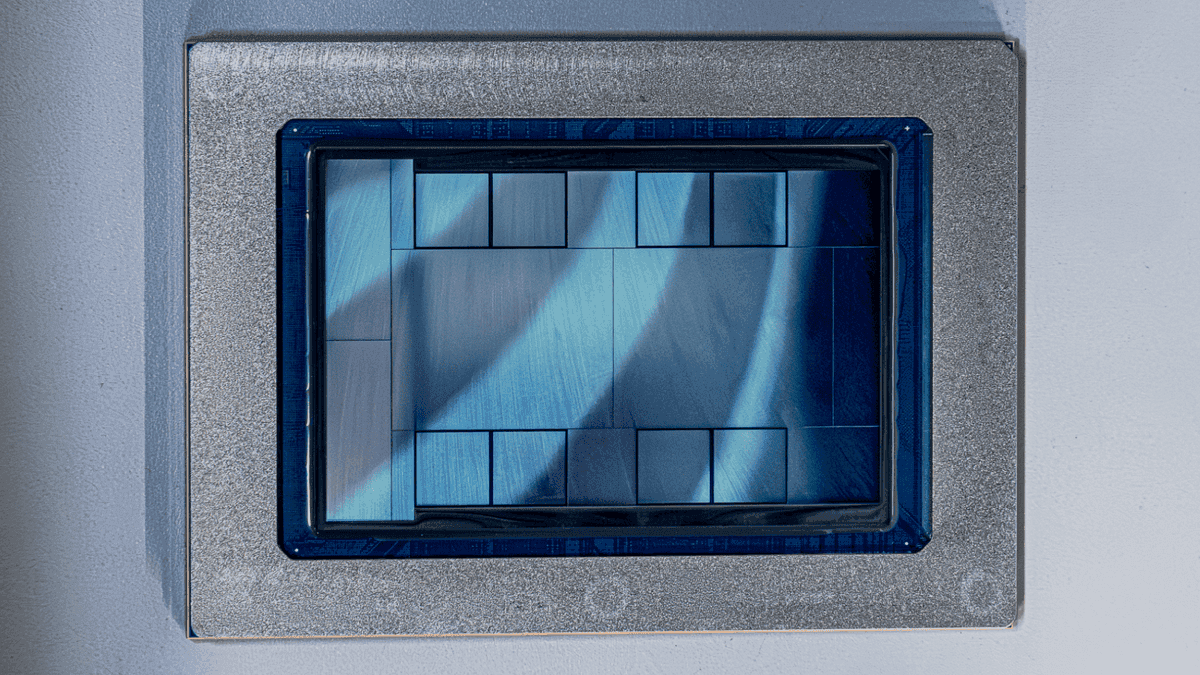

Technical Innovation Through Mask ROM Recall Fabric

Taalas assembles a nearly complete chip with roughly 100 layers, then performs final customization on just two metal layers [1]. These custom layers feature what the company describes as a mask ROM recall fabric, in which each module can store four bits and a single transistor processes that data, carrying out the matrix multiplications that AI models use to make decisions [2]. This design eliminates the need for HBM modules, avoiding the delays caused by moving information to and from external memory while removing the auxiliary components needed to support them [2].
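As a rough illustration of what fixed weights mean in practice, the following toy Python sketch freezes a layer's weights at construction time and then only ever reads them. It is not Taalas's design: the signed 4-bit quantization, the `scale` parameter, and the names `fabricate_layer` and `forward` are all assumptions made for illustration, loosely inspired by the four-bit figure above.

```python
def fabricate_layer(weights, scale):
    """Return a matrix-vector function whose weights can no longer be changed.

    Assumption for illustration: each weight is rounded to a signed 4-bit
    integer in [-8, 7] and stored in an immutable tuple, which stands in
    for a write-once mask ROM.
    """
    rom = tuple(
        tuple(max(-8, min(7, round(w / scale))) for w in row)
        for row in weights
    )

    def forward(x):
        # One multiply-accumulate per stored weight; the weights are read
        # in place rather than fetched from a separate external memory.
        return [scale * sum(q * xi for q, xi in zip(row, x)) for row in rom]

    return forward


# "Fabricate" a 2x2 layer once, then run inputs through it.
layer = fabricate_layer([[0.5, -1.0], [1.5, 0.25]], scale=0.25)
print(layer([1.0, 2.0]))
```

Swapping in a different model means calling `fabricate_layer` again, which mirrors the trade-off described in the article: speed from fixed weights, at the cost of flexibility.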

Dramatic Performance Gains Over Current Solutions

The company's first product, called HC1, targets AI inference. Taalas claims the chip can generate 17,000 output tokens per second (TPS) when running the Llama 3.1 8B model, 73 times more than Nvidia's H200 graphics card, while consuming one-tenth the power [2]. Wccftech separately reports that Taalas delivers 10 times the TPS of today's "high-end" infrastructure at 20 times lower production cost [3]. The HC1 chip uses TSMC's 6nm node and has a die size of up to 815 mm², nearly matching Nvidia's H100 [3].
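The headline figures can be sanity-checked with simple arithmetic. The sketch below works out the H200 throughput implied by the 17,000 TPS and 73x claims, and the combined throughput-per-watt gain if the 73x and one-tenth-power figures describe the same comparison, which is an assumption rather than something the sources state. The function names are hypothetical.

```python
def implied_baseline_tps(claimed_tps: float, speedup: float) -> float:
    """Throughput the baseline must have for the claimed speedup to hold."""
    return claimed_tps / speedup


def tokens_per_watt_gain(speedup: int, power_reduction: int) -> int:
    """Combined gain, assuming both ratios refer to the same baseline run."""
    return speedup * power_reduction


h200_tps = implied_baseline_tps(17_000, 73)
print(f"Implied H200 throughput: about {h200_tps:.0f} tokens per second")
print(f"Implied tokens-per-watt gain: {tokens_per_watt_gain(73, 10)}x")
```

The 10x figure reported by Wccftech is measured against an unspecified "high-end" baseline, so it should not be compared directly with the 73x figure.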

Faster Manufacturing Timeline Creates Competitive Edge

Using TSMC for manufacturing, Taalas can complete fabrication of a chip customized for a particular model in approximately two months [1]. This contrasts sharply with the roughly six-month timeline required to fabricate an AI processor such as Nvidia's Blackwell [1]. The company says its platform can transform any AI model into custom silicon, creating what it calls "Hardcore Models" that are an order of magnitude faster, cheaper, and lower power than software-based implementations [3].

Addressing Response Latency in Agentic AI Environments

Founded 2.5 years ago, Taalas built its approach around reducing response latency, which has emerged as a massive constraint for modern compute providers [3]. In agentic environments, the primary competitive advantage lies in tokens-per-second figures and task completion speed. The company's solution focuses on specializing AI workloads at the hardware level and merging storage and computation to overcome memory walls [3]. All computation happens at "DRAM-level" density to ensure faster intercommunication, and the design does without the advanced cooling, HBM, packaging, and complex integration that general-purpose systems rely on [3].

Ambitious Roadmap Targets Frontier Models

Taalas can currently produce chips capable of running less sophisticated models [1] and is developing a new chip that can run a Llama model with 20 billion parameters, expected to be ready this summer [2]. The company plans to follow it with a more advanced processor, HC2, intended to run a frontier model [2]; Reuters reports that Taalas aims to deploy a cutting-edge model such as GPT-5.2 by the end of this year [1]. To address scaling challenges, Taalas has adopted a cluster-based approach, reporting 12,000 TPS per user with DeepSeek's R1 in a 30-chip configuration [3].
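To give a sense of the scaling gap, the sketch below estimates how many HC1-class dies a larger model would need if capacity scaled linearly with parameter count. That linear rule is a deliberately naive assumption, not a Taalas figure; the inputs are the 8-billion-parameter HC1, the "up to 815 mm²" die size, and the one-trillion-parameter frontier scale cited by Wccftech [3]. The function names are hypothetical.

```python
HC1_PARAMS = 8_000_000_000   # Llama 3.1 8B on a single HC1, per [3]
HC1_MAX_DIE_MM2 = 815        # "up to 815 mm²", per [3]


def hc1_equivalents(model_params: int, params_per_chip: int = HC1_PARAMS) -> int:
    """Dies needed under the naive assumption of linear capacity scaling."""
    return -(-model_params // params_per_chip)  # integer ceiling division


def max_silicon_mm2(chips: int, die_mm2: int = HC1_MAX_DIE_MM2) -> int:
    """Upper bound on total die area for the given number of chips."""
    return chips * die_mm2


for name, params in [("20B Llama", 20_000_000_000),
                     ("1T frontier model", 1_000_000_000_000)]:
    chips = hc1_equivalents(params)
    print(f"{name}: ~{chips} HC1-class dies, "
          f"up to {max_silicon_mm2(chips):,} mm² of silicon")
```

Real configurations, such as the reported 30-chip DeepSeek R1 cluster, will depend on quantization, chip generation, and interconnect, none of which this sketch models.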

Market Context and Competitive Landscape

The announcement arrives weeks after Nvidia's deal to license intellectual property from chip startup Groq for $20 billion, which reignited interest in startups focused on AI inference, the process where an AI model responds to user queries [1]. Groq's first-generation processor used an SRAM-heavy approach to chip design, as do Cerebras, which signed a cloud computing deal with OpenAI in January, and d-Matrix [1]. However, Taalas differentiates itself by pivoting away from general-purpose computing entirely toward model-specific implementations, though this means hardware remains specific to certain LLMs without the option to change model weights [3].

Summarized by

Navi
