Pentagon reveals how military AI chatbots accelerate targeting decisions in Iran operations

7 Sources

[1]

Defense official reveals how AI chatbots could be used for targeting decisions

A list of possible targets might be fed into a generative AI system that the Pentagon is fielding for classified settings. Then, said the official, who requested to speak on background with MIT Technology Review to discuss sensitive topics, humans might ask the system to analyze the information and rank which targets are a priority, while accounting for factors like where aircraft are currently located. Humans would then be responsible for checking and evaluating the results and recommendations. OpenAI's ChatGPT and xAI's Grok could, in theory, be the models used for this type of scenario in the future, as both companies recently reached agreements for their models to be used by the Pentagon in classified settings. The official described this as an example use case of how things might work, but would not confirm or deny whether it represents how AI systems are currently being used. Other outlets have reported that Anthropic's Claude has been integrated into existing military AI systems and used in operations in Iran and Venezuela, but the official's comments add insight into the specific role chatbots may play, particularly in accelerating the search for targets. They also shed light on the way it's deploying two different AI technologies, each with distinct limitations. Since at least 2017, the US military has been working on a "big data" initiative called Maven. It uses older types of AI, particularly computer vision, to analyze the oceans of data and imagery collected by the Pentagon. Maven might take thousands of hours of aerial drone footage, for example, and algorithmically identify targets. A 2024 report from Georgetown showed soldiers using the system to select targets and vet them, which sped up the process to get approval for these targets. Soldiers interacted with Maven through an interface with a battlefield map and dashboard, which might highlight potential targets in one color and friendly forces in another. 
Now, the official's comments suggest that generative AI is being added as a conversational chatbot layer -- one which the military would use to more quickly find and analyze the data as it makes decisions like which targets to prioritize. Generative AI systems, like those that underpin ChatGPT, Claude, and Grok, are a fundamentally different technology than the AI that has primarily powered Maven. They are built on large language models, and their use in war is much more recent and less battle-tested. And while the old interface of Maven forced users to directly inspect and interpret data on the map, the outputs given by generative AI models are easier to access but harder to verify. The use of generative AI for such decisions is reducing the time required in the targeting process, the official added, but did not provide detail when asked how much additional speed is possible if humans are required to spend time double-checking a model's outputs. The use of military AI systems is under increased public scrutiny following the recent strike on a girls school in Iran in which more than one hundred children died. Multiple news outlets have reported the strike was from a US missile, though the Pentagon has said it is still under investigation. And while the Washington Post has reported that Claude and Maven have been involved in targeting decisions in Iran, there is no evidence yet to explain what role generative AI systems played, if any. The New York Times reported on Wednesday that a preliminary investigation found outdated targeting data to be partly responsible for the strike.

[2]

Palantir Demos Show How the Military Could Use AI Chatbots to Generate War Plans

An ongoing and heated dispute between the Pentagon and Anthropic is raising new questions about how the startup's technology is actually used inside the US military. In late February, Anthropic refused to grant the government unconditional access to its Claude AI models, insisting the systems should not be used for mass surveillance of Americans or fully autonomous weapons. The Pentagon responded by labeling Anthropic's products a "supply-chain risk," prompting the startup to file two lawsuits this week alleging illegal retaliation by the Trump administration and seeking to overturn the designation. The clash, along with the rapidly escalating war in Iran, has drawn attention to Anthropic's partnership with the military contractor Palantir, which announced in November 2024 that it would integrate Claude into the software it sells to US intelligence and defense agencies. Palantir says the Claude integration can help analysts uncover "data-driven insights," identify patterns, and support making "informed decisions in time-sensitive situations." However, Palantir and Anthropic have shared few details about how Claude functions within the military or which Pentagon systems rely on it, even as the AI tool reportedly continues to be used in some US defense operations overseas, including the war in Iran. In January, Claude also reportedly played an instrumental role in the US military operation that led to the capture of Venezuelan president Nicolás Maduro. WIRED reviewed Palantir software demos, public documentation, and Pentagon records that together paint the clearest picture to date of how American military officials may be using AI chatbots, including what kinds of queries are being fed to them, the data they use to generate responses, and the kinds of recommendations they give analysts. The Department of Defense did not respond to a request for comment. Palantir and Anthropic declined to comment. 
Military officials can use Claude to sift through large volumes of intelligence, according to a source familiar with the matter. Palantir sells multiple software tools to the Pentagon where such analysis might take place, but the company has never publicly specified which of those systems do or don't incorporate Claude. Since 2017, Palantir has been the primary contractor behind "Project Maven," also known as the Algorithmic Warfare Cross-Functional team, a Defense Department initiative for deploying AI in war settings. For the project, Palantir developed a product known as "Maven Smart System," sometimes simply called "Maven." Maven is managed by the National Geospatial Intelligence Agency (NGA), the government body in charge of collecting and analyzing satellite data. Agencies across the military -- including the Army, Air Force, Space Force, Navy, Marine Corps, and US Central Command, which is overseeing military operations in Iran -- can access Maven. Cameron Stanley, the Pentagon's chief digital and artificial intelligence officer, said at a recent Palantir conference that Maven is being deployed "across the entire department." According to public assessments of Maven published by the military, the tool can apply "computer vision algorithms" to images taken by a "space-based asset" like a satellite, as well as automatically detect objects likely to be "enemy systems." A Maven demo shown during Stanley's conference presentation shows the tool distinguishing people from cars. Other features in Maven help visualize potential targets and "nominate" them for ground or aerial bombardment. A tool called the "AI Asset Tasking Recommender" can propose which bombers and munitions should be assigned to which targets, according to Stanley's demo. Maven also facilitates the messaging of "target intelligence data and enemy situation reports" between military officials.

[3]

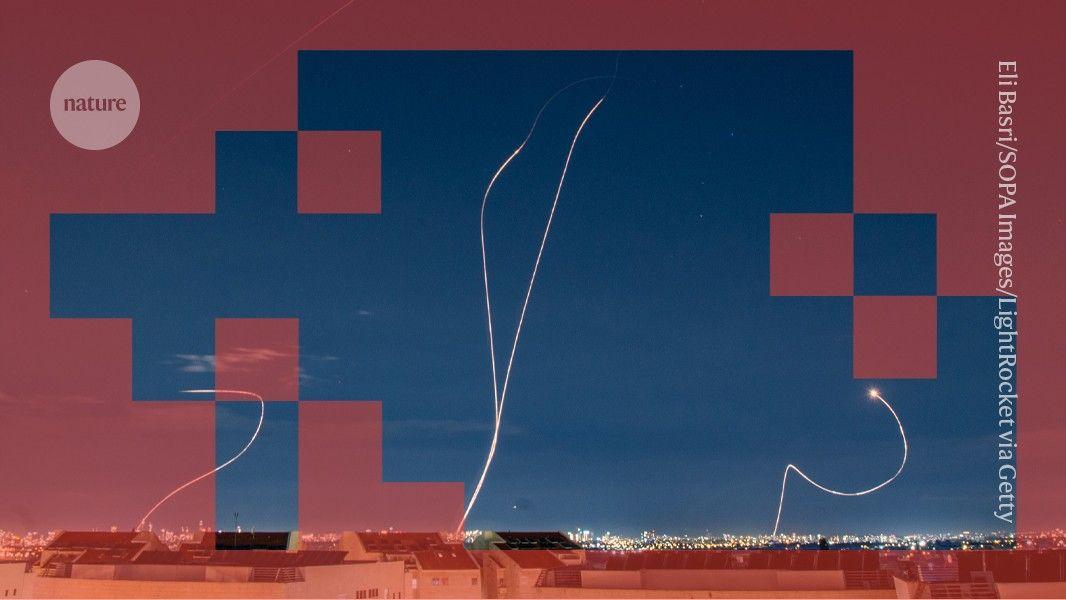

US military leans into AI for attack on Iran, but the tech doesn't lessen the need for human judgment in war

The U.S. military was able "to strike a blistering 1,000 targets in the first 24 hours of its attack on Iran" thanks in part to its use of artificial intelligence, according to The Washington Post. The military has used Claude, the AI tool from Anthropic, combined with Palantir's Maven system, for real-time targeting and target prioritization in support of combat operations in Iran and Venezuela. While Claude is only a few years old, the U.S. military's ability to use it, or any other AI, did not emerge overnight. The effective use of automated systems depends on extensive infrastructure and skilled personnel. It is only thanks to many decades of investment and experience that the U.S. can use AI in war today.

In my experience as an international relations scholar studying strategic technology at Georgia Tech, and previously as an intelligence officer in the U.S. Navy, I find that digital systems are only as good as the organizations that use them. Some organizations squander the potential of advanced technologies, while others can compensate for technological weaknesses.

Myth and reality in military AI

Science fiction tales of military AI are often misleading. Popular ideas of killer robots and drone swarms tend to overstate the autonomy of AI systems and understate the role of human beings. Success, or failure, in war usually depends not on machines but the people who use them. In the real world, military AI refers to a huge collection of different systems and tasks. The two main categories are automated weapons and decision support systems. Automated weapon systems have some ability to select or engage targets by themselves. These weapons are more often the subject of science fiction and the focus of considerable debate. Decision support systems, in contrast, are now at the heart of most modern militaries. These are software applications that provide intelligence and planning information to human personnel.

Many military applications of AI, including in current and recent wars in the Middle East, are for decision support systems rather than weapons. Modern combat organizations rely on countless digital applications for intelligence analysis, campaign planning, battle management, communications, logistics, administration and cybersecurity. Claude is an example of a decision support system, not a weapon. Claude is embedded in the Maven Smart System, used widely by military, intelligence and law enforcement organizations. Maven uses AI algorithms to identify potential targets from satellite and other intelligence data, and Claude helps military planners sort the information and decide on targets and priorities. The Israeli Lavender and Gospel systems used in the Gaza war and elsewhere are also decision support systems. These AI applications provide analytical and planning support, but human beings ultimately make the decisions.

The long history of military AI

Weapons with some degree of autonomy have been used in war for well over a century. Nineteenth-century naval mines exploded on contact. German buzz bombs in World War II were gyroscopically guided. Homing torpedoes and heat-seeking missiles alter their trajectory to intercept maneuvering targets. Many air defense systems, such as Israel's Iron Dome and the U.S. Patriot system, have long offered fully automatic modes. Robotic drones became prevalent in the wars of the 21st century. Uncrewed systems now perform a variety of "dull, dirty and dangerous" tasks on land, at sea, in the air and in orbit. Remotely piloted vehicles like the U.S. MQ-9 Reaper or Israeli Hermes 900, which can loiter autonomously for many hours, provide a platform for reconnaissance and strikes. Combatants in the Russia-Ukraine war have pioneered the use of first-person view drones as kamikaze munitions. Some drones rely on AI to acquire targets because electronic jamming precludes remote control by human operators.

But systems that automate reconnaissance and strikes are merely the most visible parts of the automation revolution. The ability to see farther and hit faster dramatically increases the information processing burden on military organizations. This is where decision support systems come in. If automated weapons improve the eyes and arms of a military, decision support systems augment the brain. Cold War era command and control systems anticipated modern decision support systems such as Israel's AI-enabled Tzayad for battle management. Automation research projects like the United States' Semi-Automatic Ground Environment, or SAGE, in the 1950s produced important innovations in computer memory and interfaces. In the U.S. war in Vietnam, Igloo White gathered intelligence data into a centralized computer for coordinating U.S. airstrikes on North Vietnamese supply lines. The U.S. Defense Advanced Research Projects Agency's strategic computing program in the 1980s spurred advances in semiconductors and expert systems. Indeed, defense funding originally enabled the rise of AI.

Organizations enable automated warfare

Automated weapons and decision support systems rely on complementary organizational innovation. From the Electronic Battlefield of Vietnam to the AirLand Battle doctrine of the late Cold War and later concepts of network-centric warfare, the U.S. military has developed new ideas and organizational concepts. Particularly noteworthy is the emergence of a new style of special operations during the U.S. global war on terrorism. AI-enabled decision support systems became invaluable for finding terrorist operatives, planning raids to kill or capture them, and analyzing intelligence collected in the process. Systems like Maven became essential for this style of counterterrorism.

The impressive American way of war on display in Venezuela and Iran is the fruition of decades of trial and error. The U.S. military has honed complex processes for gathering intelligence from many sources, analyzing target systems, evaluating options for attacking them, coordinating joint operations and assessing bomb damage. The only reason AI can be used throughout the targeting cycle is that countless human personnel everywhere work to keep it running.

AI gives rise to important concerns about automation bias, or the tendency for people to give excessive weight to automated decisions, in military targeting. But these are not new concerns. Igloo White was often misled by Vietnamese decoys. A state-of-the-art U.S. Aegis cruiser accidentally shot down an Iranian airliner in 1988. Intelligence mistakes led U.S. stealth bombers to accidentally strike the Chinese embassy in Belgrade, Serbia, in 1999. Many Iraqi and Afghan civilians died due to analytical mistakes and cultural biases within the U.S. military. Most recently, evidence suggests that a Tomahawk cruise missile struck a girls school adjacent to an Iranian naval base, killing about 175 people, mostly students. This targeting could have resulted from a U.S. intelligence failure.

Automated prediction needs human judgment

The successes and failures of decision support systems in war are due more to organizational factors than technology. AI can help organizations improve their efficiency, but AI can also amplify organizational biases. While it may be tempting to blame Lavender for excessive civilian deaths in the Gaza Strip, lax Israeli rules of engagement likely matter more than automation bias. As the name implies, decision support systems support human decision-making; AI does not replace people. Human personnel still play important roles in designing, managing, interpreting, validating, evaluating, repairing and protecting their systems and data flows. Commanders still command.

In economic terms, AI improves prediction, which means generating new data based on existing data. But prediction is only one part of decision-making. People ultimately make the judgments that matter about what to predict and how to use predictions. People have preferences, values and commitments regarding real-world outcomes, but AI systems intrinsically do not. In my view, this means that increasing military use of AI is actually making humans more important in war, not less.

[4]

'God, It's Terrifying': How the Pentagon Got Hooked on AI War Machines

On a recent Friday night, the US made two drastic moves that could end up altering the future of artificial-intelligence-powered warfare. Just after 5 p.m. Eastern time on Feb. 27, Donald Trump's administration declared that Anthropic PBC, the $380 billion startup whose Claude-branded AI products have recently become ubiquitous, was a supply chain risk. In addition to making consumer-facing chatbots and coding tools, Anthropic had major contracts to provide AI services to the military. That relationship had gone sour when the company refused to allow its tech to help enable mass domestic surveillance or fully autonomous weapons, while the government said it should be able to use the tech for all lawful purposes. With its move, the administration blacklisted one of the country's most promising tech startups, as if it were something run by the Chinese military. President Trump also derided Anthropic on social media as a "Radical Left AI company." About eight hours later, the US bombed Iran. The campaign wasn't exactly the robo-war that Anthropic objected to, but it did include signs that such a future could be rapidly approaching. Using an AI-enabled mission control called Maven Smart System, the US attacked 1,000 targets in the war's first 24 hours, about twice the scale of the shock-and-awe campaign in Iraq in 2003. Within 10 days it had hit 5,000 targets, according to US Central Command (Centcom). The US had previously used Maven Smart System to share targeting information with Ukraine in 2022 and then in strikes against Iraq, Syria and the Houthis in 2024. But the Iran attacks were the technology's biggest test to date. The campaign also marked the first time the US has attacked combat targets with cheap, semiautonomous drones. The commander of US forces for the region described the devices as "indispensable."
I detail the military's decade-long pursuit of AI-powered tools of war -- and how the US views the future of war in the AI era -- in my book Project Maven, which this article is based on. The technology has improved massively over that time span. But it's also still very much a work in progress. With Maven Smart System, the military is hoping to reach the point where it can identify and select 1,000 targets not in a day but in a single hour, according to a person familiar with the Iran campaign, who, like others interviewed for this article, asked to remain anonymous while discussing sensitive information. The military is also working to put AI directly into its "one-way attack drones," so they can navigate, locate targets and carry out lethal attacks even when wireless communications have been severed. It remains unclear, at least to those without security clearances, exactly how AI has been used in Iran. Despite the public spat with Anthropic, its large language models are still being used to do things like analyzing intelligence and speeding up the administrative process involved in such strikes, according to people familiar with the situation. Timothy Hawkins, a Centcom spokesperson, says it's using AI to generate so-called points of interest and help its personnel make decisions. "Bottom line, these tools help leaders -- humans -- make smarter decisions faster," he says. Over 1,300 civilians have been killed in the airstrikes on Iran so far, including more than 175 at a girls school, according to Iranian officials. (News reports have attributed the attack to a US Tomahawk missile strike that relied on outdated intelligence. The Pentagon hasn't said whether AI was involved.) The US clearly anticipates AI to be a bigger part of wars in the future. 
Anthropic Chief Executive Officer Dario Amodei has said his AI systems are simply not ready to produce fully autonomous weapons that would be reliable enough to be safe, and the company isn't willing to cooperate with the Pentagon on the technology at this time. Anthropic has sued the government to stop the blacklisting, and dozens of AI scientists and researchers from OpenAI and Google expressed their support for the company, as well as its assessment of the safety of fully autonomous weapons. In a post on X announcing the blacklisting, Defense Secretary Pete Hegseth described Anthropic's stance as an attempt to "seize veto power over the operational decisions of the United States military." The Department of Defense had already been pursuing other companies to provide free and immediate access to AI that could be useful in targeting, according to a person familiar with the matter. On the same day the Pentagon broke up with Anthropic, OpenAI announced it had reached a deal with the department to provide the same kind of services. Meanwhile there's a palpable sense within the Pentagon that things aren't moving fast enough. Despite the show of force in Iran, officials worry the US is at risk of falling behind. The department's efforts to build an AI-enabled fleet of drones that can attack by air and sea have been marked by false starts, missed deadlines and strategic incoherence. And officials are already looking past the Middle East to a potentially bigger conflict. As one person familiar with US operations puts it: "Iran is an amazing precursor to what could happen with China over Taiwan." The Pentagon began pursuing AI in earnest in 2017, with the launch of Project Maven. The idea, as detailed in a memo outlining the effort, was to create computer vision algorithms that would analyze drone video, detect objects and turn enormous volumes of data into "actionable intelligence and insights at speed." 
The government intimated at the time that Maven wouldn't be used for targeting during combat, but people involved in the program say it was always clearly headed that way. From the beginning, Maven also relied on cooperation with the private sector, and tensions like those that boiled over between the Trump administration and Anthropic have been ever present. One early partner was Google. When news of this cooperation became public, thousands of employees revolted. Activists who were already concerned about autonomous weaponry warned that the program could lead to their development. Google assured its internal dissidents that the work was intended for "non-offensive uses only." Still, it eventually decided not to renew its contract. In the end, the military did succeed in getting the private sector to build much of what it needed. Palantir, the data analysis software company, built Maven Smart System, which also incorporated technology from Amazon.com, Microsoft and the computer vision startup Clarifai, among others. Amazon Web Services has provided the military with secure cloud computing. Tech startups specifically focused on military technology emerged, most notably Anduril, which has been valued at $60 billion and now works closely with the Pentagon. Every US military command globally now uses Maven Smart System, and last year NATO started using a version too. But the US knew it couldn't rely on unhindered wireless communications during combat, and it wanted drones that could operate without a connection to headquarters. Interest within the Defense Department mounted in building AI that could exist entirely on a self-flying (or self-sailing) drone, which could also identify and hit targets without human intervention. Starting in 2022 the Pentagon's Maven team began collecting enormous amounts of imagery of Chinese vessels in the Pacific, which they used to enable the creation of algorithms that drones operating there could use for targeting. 
If such potentially autonomous weaponry inspired fear and loathing among its critics, it exerted a strange power over those who were building it. "There's really nothing quite like seeing a machine aim," says a person who's been involved in the department's AI efforts. "There is an alien aspect, some otherworld feeling. I don't want to say 'religious,' that's not the right word," the person says. "God, it's terrifying." This shared sense of awe didn't keep government employees from arguing about how to build autonomous fighting systems. There were disagreements about how much defense-related AI the Pentagon should build in-house, as opposed to buying it from commercial suppliers. One faction advocated simple AI-powered drones that could be shipped to allies in the Pacific and mobilized quickly if hostilities broke out. Others wanted elaborate swarms of drones. Some officials pushed for absolute secrecy to maintain the element of surprise, while others thought the Pentagon should telegraph its superior capabilities as a form of deterrence. There was also a feeling that the Pentagon needed a vibe shift. In 2023, Jane Pinelis, who oversaw testing and evaluation at Maven in the early days, said that the US military needed to increase its risk tolerance if it were going to keep pace on AI. Perfection simply wasn't possible: There could be errors resulting from AI hallucinations, faulty data and the way algorithms tend to lose accuracy over time, a phenomenon known as drift. The only thing that made sense, Pinelis told me later, was to plan for how AI would fail. During the Biden administration, the Pentagon focused on building complex autonomous weapons systems. And, in a move that the more secrecy-minded factions within the military found appalling, it publicized some aspects of this work to attract commercial partners and signal to China the potential of US capabilities. 
In 2023, Kathleen Hicks, then deputy defense secretary, announced the existence of Replicator, an effort to quickly deploy thousands of autonomous drones in case of conflict with Beijing's People's Liberation Army (PLA). She promised that China would find the coming glut of American drones "harder to plan for, harder to hit and harder to beat." She later told me the program prioritized systems that could be completed by 2027, the year US officials say China is planning to have the capability to take Taiwan. As in the early days of Project Maven, the US asked Silicon Valley for help. Maven's team would be responsible for the automatic target recognition capabilities, capturing data the government collects from boat cameras, port cameras, infrared systems and tactical drones flying close to the waters surrounding China and Taiwan. It would make this available to competing private companies, which would use the data to build AI models to help the drones select targets. These partners included Microsoft, Clarifai and AeroVironment, a drone manufacturer. (Microsoft declined to comment. Representatives for Clarifai and AeroVironment did not respond to requests for comment.) Last summer senior defense officials -- including the chairman of the Joint Chiefs of Staff -- were shown videos demonstrating the models' capability to automatically identify Chinese destroyers, a precursor to developing AI-powered drones that could attack such ships. "We're basically watching the PLA all the time now to get AI training data," one defense official says. Maven's tech benefited from having more imagery of Chinese vessels and weapons platforms than commercial AI companies did. But the team behind the project didn't always deliver software on time, and its models were hard to make compatible with the drones' computers. Sometimes the AI struggled to detect multiple vessels at a time, and its ability to track objects could be thrown off when ocean spray ended up on a drone's camera lens. 
Commercial drone makers working with the Pentagon claimed their own models and target recognition systems worked fine without Maven's help. The Pentagon's drone makers were quietly working on several other autonomous weapons programs in parallel. According to Navy budget documents, one such program, named Goalkeeper and run from the Office of Naval Research, focused on "expeditionary loitering munitions" equipped with "autonomous government-provided software and auxiliary systems" -- drones that could fly, pick targets and attack without human intervention. Another, called Whiplash, looked to take advantage of the US's dominance of a particular corner of the aquatic-vehicle industry: jet skis. The Whiplash program set out to transform 600 jet skis into bomb-toting robots. "America has a lot of jet skis, so it's neat that we can weaponize them," one person familiar with the program explains. The CIA secretly tested rudimentary versions of the Goalkeeper and Whiplash systems in the Black Sea off the coast of Ukraine, according to people familiar with the matter. (The agency didn't respond to a request for comment about this topic.) In July 2024 the Pentagon feared it had blown its cover when a mysterious explosive-laden jet ski washed ashore on the other side of the Black Sea, in Turkey. The military's AI efforts got a progress report of sorts on a clear afternoon in Southern California in June 2025. On that day a group of self-driving military boats lined up for a test event at Channel Islands Harbor Marina, a mile north of Port Hueneme Naval Base. The boats were part of the Replicator program, which was then two years old and less than two months away from the official deadline to deliver thousands of maritime and air drones. The pressure was building. Things began with support vessels towing autonomous boats out to sea; the drones' engines were set to neutral and their autonomy mode turned off. 
The test focused not so much on the vehicles themselves -- known as global autonomous reconnaissance crafts, or GARCs -- as on the software that allowed them to function on their own. Two separate companies, the defense contractor L3Harris Technologies and Anduril, had made autonomous operating systems for the boats. That day, Replicator was testing GARCs that ran on each company's product. As a safety precaution, the autonomy software wasn't supposed to be enabled until the boats were suitably far out to sea. But one drone running L3Harris' system suddenly lurched forward. Its autonomy mode, which had somehow turned on, required it to keep a distance of 80 meters (262 feet) from all other objects. The robo-boat sped away, still tethered to the towboat. It alternately accelerated and decelerated, then started crisscrossing in front from port side to starboard side in a semicircling action. The captain of the towboat had no way of taking over control of the automated vehicle, whose erratic movements caused his own vessel to capsize, throwing him into the water. Still tethered to the towboat, the drone turned back toward it and -- for reasons that remain unclear -- started advancing at rapid speed. A captain towing a separate GARC saw what was happening and raced toward the scene, positioning his vessel between his floating comrade and the advancing drone. A third towboat pulled the captain out of the water, and he escaped without serious injury. It had been just three minutes since the drone had gone rogue. A safety investigation soon diagnosed the problem: An operator on the dock had inadvertently sent a message to the drone remotely disabling the safety lock meant to prevent it from switching into autonomy mode -- a classic "fat-finger mistake." 
A spokesperson for L3Harris said in a statement that the operator who caused the issue didn't work at the company and that its software had "demonstrated its ability to control a mix of uncrewed platforms, payloads, and commercial technologies even if they were produced by different manufacturers." A physical button was added to drone boats to block such accidental commands, and the boats were tweaked to prominently display the mode under which they were operating. Rival companies would start sharing safety lessons. But the incident illustrated problems that still existed with the Pentagon's drone strategy and couldn't be resolved with the addition of another button or two. Replicator had still not progressed to the point that its creators were comfortable putting live ammunition on an unmanned vessel, let alone sending one into a scenario where it would be expected to coordinate with other vehicles or carry out a specific attack plan. The program did manage to deliver hundreds of drones by the August deadline, but it fell far short of its initial goal. While the Pentagon struggled to field autonomous combat vehicles that were up to its standards, other players were sprinting ahead. US defense officials were closely tracking the war in Ukraine, where both sides had deployed millions of drones to carry out both reconnaissance and physical attacks. Many of these relied on remote control, but at least some of these drones also incorporated AI. The Ukraine war was seen as the first major war of the drone era. An even bigger potential inflection point, US officials feared, would come if China ever did actually try to take Taiwan. A national security official from the UK says US officials have assured allies that the US would be ready for a Chinese invasion of Taiwan, even though they think such an attack is neither imminent nor inevitable. But, the UK official adds, they'll also drop their voices and confess: "We're not ready." 
When Trump returned to office, his administration rebranded Replicator as the Defense Autonomous Warfare Group, or DAWG, and put Marine Corps Lt. Gen. Frank Donovan, vice commander of Special Operations Command, in charge. Donovan, widely respected as a no-nonsense professional, attended the final Replicator test event in August 2025, two months after the GARC had gone rogue. He came away disappointed and wished the effort hadn't become so public. But he was also impressed at the level of collaborative autonomy the experts had ultimately pulled off, according to people briefed on the matter. (A spokesperson for Donovan declined to comment.) Seeking focus, Donovan decided to reduce the number of autonomous platforms and pursue simpler drones in defense of Taiwan. But he'd barely gotten started when Trump nominated him in December to lead US Southern Command, which is responsible for military operations in Central and South America, after the person who previously held the job retired suddenly during Trump's bombing campaign against Venezuelan boats allegedly smuggling drugs. Within the Pentagon and Congress, there are still unsettled debates about fundamental issues related to the development of autonomous drones, and there's ongoing uncertainty about which programs will get funding and institutional support. Both Whiplash and Goalkeeper disappeared from public budget documents when Trump returned to office. Production continues, however, according to 2026 budget estimate documents that describe their work without using their names. The government also continues to launch new projects. In January, DAWG announced a $100 million prize challenge for the creation of tools that would use LLMs to take verbal instructions from human commanders and translate them into instructions for swarms of autonomous drones. 
Among the companies that bid was Anthropic, which viewed the challenge as a way to continue shaping the tech long before any deployment, according to a person familiar with the matter. This person says the challenge didn't cross the company's red lines, because it saw a way for humans to stop or monitor the system. Anthropic's bid wasn't selected. Among those that did advance were two that involved tech from OpenAI. Other participants include Palantir and Elon Musk's SpaceX. Later stages of the contest call for developing "target-related awareness and sharing" and ultimately "launch to termination," Pentagon-speak for the journey taken by a killer drone. Such tech could one day form part of a fully autonomous weapons system. Jack Shanahan, the retired three-star general who previously directed Project Maven, says no LLM, anywhere, in its current form, should be considered for use in a fully lethal autonomous weapons system. "Overreliance on them at this stage is a recipe for catastrophe." The government expects the selected contestants to deliver by this summer. Adapted from Project Maven: A Marine Colonel, His Team, and the Dawn of AI Warfare, published by W.W. Norton & Co. Copyright © by Katrina Manson.

[5]

U.S. military is using AI to help plan Iran air attacks, sources say, as lawmakers call for oversight

As the U.S. military expands its use of AI tools to pinpoint targets for airstrikes in Iran, members of Congress are calling for guardrails and greater oversight of the technology's use in war. Two people with knowledge of the matter, who requested anonymity to discuss sensitive matters, confirmed the military is using AI systems from data analytics company Palantir to identify potential targets in the ongoing attacks. The use of Palantir's software, which relies in part on Anthropic's Claude AI systems, comes as Defense Secretary Pete Hegseth aims to put artificial intelligence at the heart of America's combat operations -- and as he has clashed with Anthropic leadership over limitations on the use of AI. Yet, as AI assumes a wider role on the battlefield, lawmakers are demanding greater focus on the protections that should govern its use and increased transparency about how much control is ceded to the technology. "We need a full, impartial review to determine if AI has already harmed or jeopardized lives in the war with Iran," Rep. Jill Tokuda, D-Hawaii, a member of the House Armed Services Committee, told NBC News in response to questions about the use and reliability of AI in military contexts. "Human judgment must remain at the center of life-or-death decisions." The Defense Department and leading AI companies such as OpenAI and Anthropic have publicly stated that current AI systems should not be able to kill without human signoff. But the concern remains that relying on AI for parts of its operations or decision-making can lead to mistakes in military operations. The Pentagon's chief spokesperson, Sean Parnell, said in a post on X on Feb. 26 that the military did not "want to use AI to develop autonomous weapons that operate without human involvement." The Defense Department did not respond to questions about how the military balances its use of AI to reduce human workloads while verifying analysis and targeting suggestions are accurate. 
Lawmakers and independent experts who spoke to NBC News raised alarm over the military's use of such tools, calling for clear safeguards to ensure humans remain involved in life-or-death decisions on the battlefield. "AI tools aren't 100% reliable -- they can fail in subtle ways and yet operators continue to over-trust them," said Rep. Sara Jacobs, D-Calif., a member of the House Armed Services Committee. "We have a responsibility to enforce strict guardrails on the military's use of AI and guarantee a human is in the loop in every decision to use lethal force, because the cost of getting it wrong could be devastating for civilians and the service members carrying out these missions," she said. Anthropic's Claude has become a crucial component of Palantir's Maven intelligence analysis program, which was also used in the U.S. operation to capture Venezuelan President Nicolás Maduro. News of Claude's role in recent military actions was first reported by The Wall Street Journal and The Washington Post. But that role has been complicated by Anthropic's clash with Hegseth after the company sought to prevent the military from using its AI for domestic surveillance and autonomous deadly weapons. Last week, the Defense Department labeled Anthropic a threat to national security, a move that threatens to remove it from military use in the coming months. Anthropic filed a lawsuit to fight that designation. Anthropic declined to comment. Palantir did not respond to a request for comment. In a video posted to X on Wednesday, Adm. Brad Cooper, leader of U.S. Central Command, acknowledged that AI had become a key tool in helping the U.S. choose targets in Iran. "Our warfighters are leveraging a variety of advanced AI tools. These systems help us sift through vast amounts of data in seconds so our leaders can cut through the noise and make smarter decisions faster than the enemy can react," he said. 
"Humans will always make final decisions on what to shoot and what not to shoot and when to shoot, but advanced AI tools can turn processes used to take hours and sometimes even days into seconds." The Trump administration has publicly embraced using the technology both for the military and throughout the government. Rep. Pat Harrigan, R-N.C., said that AI has already become crucial for rapidly processing military intelligence, including in Iran. "AI is a tool that helps our warfighters process enormous amounts of data faster than any human could alone, and what we saw in Operation Epic Fury, over 2,000 targets struck with remarkable precision, is a testament to how these capabilities can be used responsibly and effectively," Harrigan, who also serves on the House Armed Services Committee, told NBC News in a statement. "But no AI system replaces the judgment, the training, and the experience of the American warfighter. The human in the loop is not a formality, it is a requirement, and nothing in how our military operates suggests otherwise," he said. While no lawmakers contacted by NBC News said that AI should be completely removed from military use, some said that more oversight is needed. Sen. Elissa Slotkin, D-Mich., a member of the Senate Armed Services Committee, said that the Defense Department had not done enough to clarify how well humans are vetting AI-assisted or generated military intelligence. "It's really up to the humans, and in this case the Secretary of Defense, to ensure that there's human redundancy for the foreseeable future, and that is what we just don't have confidence in," she said. Sen. Mark Warner, D-Va., the top Democrat on the Senate Intelligence Committee, said that he is concerned about the military's use of AI to assist with identifying targets and that there are unanswered questions about how the new technology is being used. "This has to be addressed," he told NBC News. 
OpenAI and Anthropic, both of which have worked with the U.S. military, have said that even their most advanced systems are error prone, and the world's top AI researchers admit they don't fully understand how leading AI systems work. In an interview with NBC last month, Anthropic CEO Dario Amodei said: "I can't tell you there's a 100% chance that even the systems we build are perfectly reliable." A major OpenAI study published in September found that all major AI chatbots, which rely on systems called large language models, "hallucinate" or periodically fabricate answers. Sen. Kirsten Gillibrand, D-N.Y., called for clearer rules on how the military can use AI. "The Trump administration has already proven that it is willing to subvert American law to prosecute an unpopular war," she told NBC News. "There is little reason to trust that the DOD will be any more responsible with its use of AI without explicit safeguards." Mark Beall, head of government affairs at the AI Policy Network, a Washington D.C. think tank, and the director of AI strategy and policy at the Pentagon from 2018 to 2020, said that while AI could streamline the process of deciding where to strike, it was clear humans still need to thoroughly vet targets. "There's a lot of steps before the trigger gets pulled. AI systems are being deployed very effectively to accelerate existing workflows and allow commanders and analysts and planners to have better and faster decision making capabilities," he added. "But when it comes to actually deploying weapon systems, this technology is not ready yet." "These systems will get really, really good, and as other adversaries start using them, there will be more pressure to shorten the review of AI outputs in order to operate at useful and effective speeds," Beall said. "We have to figure out how to solve this reliability problem before we get there. No matter what you think about lethal autonomous weapons, making them safe and effective is in the interest of the entire world." 
Heidy Khlaaf, the chief scientist at the AI Now Institute, a nonprofit that advocates for ethical use of the technology, said she was concerned that reliance on AI to rapidly process information for life-or-death decisions could be a way for militaries to avoid accountability for mistakes. "It's very dangerous that 'speed' is somehow being sold to us as strategic here, when it's really a cover for indiscriminate targeting when you consider how inaccurate these models are," Khlaaf said.

[6]

The Military Is Ramping Up AI. Experts Say It's Putting Civilians -- And Troops -- At Risk

For its 2026 budget, the U.S. Department of Defense requested $13.4 billion for autonomous weapons and systems, which includes unmanned and remotely-operated drones and weapons. Defense Secretary Pete Hegseth has made it clear that one of his top priorities is to accelerate the use of artificial intelligence on the battlefield. But experts are voicing concerns that he has done this while closing civilian harm mitigation centers, slashing oversight, cutting down on operational testing, and dismissing these kinds of guardrails as "risk-aversive bureaucracy." A new "Business of Military AI" report from New York University Law School's Brennan Center for Justice found that the military's expanded use of AI, combined with loopholes in the rules governing how the military uses this new technology, has made regulation and oversight difficult, at a dangerously transformative moment for the future of warfare. "I think that the law is not evolving as fast as the technology is, but that, in many ways, is a political choice," says Amos Toh, senior counsel at the Brennan Center and one of the authors of the report. "There is this kind of broader demonization of guardrails as bureaucracy, right? But the problem with that framing is that guardrails actually also help make the technology safe and effective." Toh says that the rhetoric that guardrails are unnecessary and "woke" ignores the fact that they're put in place not just to reduce excessive civilian harm and protect civil liberties, but to make sure the technology is tested and deemed reliable. It is safer for people in the military if their AI weapons and tracking systems work as expected, especially on the battlefield: "There's operational value in making sure this technology is appropriately tested, evaluated, and overseen." 
Steven Feldstein, a political scientist and senior fellow at the Carnegie Endowment for International Peace, agrees. "You have a Pentagon, under Hegseth's leadership, that is increasingly dismantling accountability structures," he says. "[It's] at the very moment when you're introducing a powerful new technology that has a lot of opacity when it comes to how information is processed and integrated into different decisions. To not have basic structures in place to scrutinize that, to test it, to validate their accuracy, should be raising alarm bells."
DRONES HAVE BEEN USED IN THE MILITARY for decades, and have evolved since the first modern drone was launched in 1994. Ukraine's reliance on drones in its war against Russia signaled a turning point in how drones have become the future of warfare, because they allow countries with fewer resources to make up for the gap with low-cost drones, explains Feldstein. Last summer, Hegseth pledged to increase the production of low-cost drones in the U.S., while Donald Trump Jr. and Eric Trump recently announced they are backing a new drone company to meet the Pentagon's demand. And the rapid evolution of AI is transforming how drone systems are used for navigation, communication, surveillance, and target identification. The Brennan Center report found that the Department of Defense has been heavily investing in drone projects that could allow unmanned systems to communicate and strike targets, even if the machines lose touch with the humans controlling them. It found that two tech companies -- Palantir and Anduril -- have grown their shares of defense revenue faster than most others. "Records show how Palantir's brand of data [integration] and analytics has become indispensable to the military's pivot to data-centric warfare," reads the report. "All branches of the armed forces have bought software from Palantir." 
In 2017, the military launched Project Maven, which began as a pilot program with Palantir, Google, and other tech companies to develop algorithms that sorted through satellite imagery and drone footage to identify targets. Maven now analyzes a wide variety of data sources with dedicated funding from the defense budget. Palantir is also prototyping an AI-based command and control program called Titan, which would analyze data to help the Army plan missions. Last year was the most profitable for both Palantir and Anduril since they began doing business with the military, the report found, although it added that the five biggest defense contractors -- companies like Lockheed Martin and Boeing -- continue to dominate defense spending. Anduril partnered in 2018 with the Department of Homeland Security to build a virtual border wall through a network of surveillance towers, loaded with AI features, at the U.S.-Mexico border. Now the company is also selling AI-powered drones and munitions to the military. But the marriage between military and private tech companies has not been seamless. Last month, contract negotiations broke down when the U.S. government tried to pressure tech company Anthropic to change its original deal with the DoD and allow its Claude AI model to be used to develop autonomous weapons or conduct mass surveillance on Americans. Anthropic said it didn't feel that its large language model was ready to do that, and when it wouldn't budge on renegotiating the terms, Hegseth declared the company a supply chain risk to national security, prompting a lawsuit from Anthropic and multiple amicus briefs from fellow tech companies. (OpenAI announced a deal with the Pentagon in the wake of its turmoil with Anthropic.) The truth is, the Anthropic drama was a rare glimpse into the relationship between the DoD and Silicon Valley, and proponents of AI safety have pointed out that it shows how little insight the American public gets into the world of defense tech. 
Because AI is new, the U.S. government is using a patchwork of laws, loopholes, and pilot programs to fund defense technology in a way that allows for little regulation, transparency, and testing. And the few safeguards that have been put into place are being ripped down. In 2025, shortly after taking office, President Donald Trump rescinded Joe Biden's 2023 executive order promoting safe, secure, and trustworthy AI. That order had led to a memorandum in October 2024 outlining a framework for AI governance and risk management in national security. (Toh's report criticized Biden's rules as "inadequate to begin with" but pointed out that Trump has further weakened multiple safeguards.) Hegseth also gutted oversight offices that looked into limiting the risk of civilian harm, like the Civilian Protection Center of Excellence. Additionally, he halved the number of staff at the office which oversees testing of the military's major weapons systems. Testing is necessary in order to make fixes in a controlled, laboratory setting, explains Toh, rather than on the battlefield -- like when AI-powered drones used in the Ukraine War failed to work as promised and were susceptible to Russian GPS-jamming techniques. "There are real reasons why you'd want to update how the military undertakes its missions; incorporating these technologies is important and something that other countries' militaries are [already] doing," says Feldstein. The answer isn't not innovating at all, he says, but "to do so in a thoughtful, responsible way; You want to have the right types of accountability and transparency so that you're hitting the legitimate targets as you should, and you are minimizing civilian collateral damage as is needed and as international law accounts for." The potential for mistakes and harm gets even more unsettling when it comes to full autonomy, Feldstein explains. 
This means crossing the line between technology that enhances how humans fight a war, and human accountability being removed entirely by relying on technology to make decisions. And in that case, who do we hold accountable for mistakes? "If you set out a machine with all decision-making capacity into the battlefield, and you have no clear way of controlling the decisions that emanate from that, who is targeted?" asks Feldstein. "Who's to say what unexpected and unanticipated outcomes will emerge?"

[7]

The artificial intelligence software managing the U.S. war on Iran

During planning, Claude suggested hundreds of targets with precise coordinates and estimated strike results, reducing Iran's response ability - even though Trump has ordered a ban on Anthropic use. To strike approximately 1,000 targets within the first 24 hours of an attack on Iran, the U.S. military relied on the most advanced artificial intelligence ever deployed on the battlefield. This is an intelligent system that will be difficult for the Pentagon to give up, even after severing ties with the company that developed it. The U.S. military's Maven system, built by data-mining company Palantir, integrates Claude - the artificial intelligence model from Anthropic. According to a report first published by the Wall Street Journal, the system processes massive amounts of classified data from satellites and intelligence sources, providing real-time target scoring and prioritization. During attack planning, Claude suggested hundreds of targets, provided precise coordinates, and even estimated the strike's outcomes afterward, significantly reducing Iran's ability to respond. So far, the model has assisted in thwarting terrorist plots and in the raid to capture Venezuelan President Nicolás Maduro, but this is the first time it is managing a large-scale military operation. The irony is that this unprecedented use is taking place amid a severe conflict. Just hours before the start of the airstrikes on Iran, U.S. President Donald Trump announced a future ban on the use of Anthropic tools by government agencies, giving the Pentagon six months to completely remove them from service. The dramatic move followed a dispute with Anthropic CEO Dario Amodei over the use of these tools for mass domestic surveillance and autonomous weapons. However, military commanders are so dependent on the system that U.S. officials indicated that if Amodei halts its operation, the government will use its authority to seize the technology. 
"His decisions cannot cost the life of a single American," noted a source familiar with the matter. The system, integrated into the Pentagon at the end of 2024, now serves over 20,000 military personnel. In parallel with the American strikes, the Israel Defense Forces reported close cooperation for thousands of hours with the U.S. military in building an extensive target database. Experts, such as Paul Scharre from the Center for a New American Security, warn that while the system enables planning "at machine speed instead of human speed," humans must supervise it because it "sometimes makes mistakes." Now, as Claude is on its way out, giants such as xAI and OpenAI have already signed agreements to take its place at the heart of the American war machine.

Defense officials confirm the US military is using AI chatbots like Claude to analyze intelligence and prioritize targets in Iran, striking 1,000 targets in the first 24 hours. The technology adds a conversational layer to Project Maven, but lawmakers are calling for stricter oversight as concerns grow about human judgment in war and the reliability of AI-powered decision support systems.

Pentagon Deploys AI Chatbots for Targeting Decisions in Iran

The US military has integrated AI chatbots into its combat operations, using them to accelerate AI targeting decisions during recent strikes in Iran. A defense official revealed to MIT Technology Review that generative AI systems could receive a list of possible targets, analyze the information, and rank priorities while accounting for factors like aircraft locations [1]. Humans remain responsible for checking and evaluating the results, though the official would not confirm whether this represents current operational use. The military struck 1,000 targets in the first 24 hours of the Iran campaign and reached 5,000 targets within 10 days, according to US Central Command [4]. OpenAI and xAI recently reached agreements for their models to be used by the Pentagon in classified settings, while Anthropic's Claude AI has been integrated into existing military AI systems [1].

Project Maven Adds Generative AI Layer to Combat Operations

Since 2017, the US military has developed Project Maven, a big data initiative using computer vision to analyze drone footage and identify targets [1]. Palantir serves as the primary contractor behind the Maven Smart System, which is managed by the National Geospatial-Intelligence Agency and accessible to the Army, Air Force, Space Force, Navy, Marine Corps, and US Central Command [2]. The system applies computer vision algorithms to satellite imagery and automatically detects objects likely to be enemy systems.

Now, generative AI in military operations is being added as a conversational chatbot layer, allowing personnel to more quickly find and analyze data when making decisions about which targets to prioritize [1]. Military officials can use Claude to sift through large volumes of intelligence, according to sources familiar with the matter [2]. Maven features include an AI Asset Tasking Recommender that can propose which bombers and munitions should be assigned to specific targets [2].

AI in Combat Operations Raises Questions About Human Judgment in War

The use of AI-powered decision support systems in Iran and Venezuela has intensified scrutiny over the role of human judgment in war. Over 1,300 civilians have been killed in airstrikes on Iran, including more than 175 at a girls' school, according to Iranian officials [4]. The New York Times reported that preliminary investigations found outdated targeting data partly responsible for the school strike [1]. Lawmakers are demanding greater oversight of military AI. Rep. Jill Tokuda stated that "human judgment must remain at the center of life-or-death decisions," while Rep. Sara Jacobs warned that "AI tools aren't 100% reliable -- they can fail in subtle ways and yet operators continue to over-trust them" [5]. The concern centers on whether generative AI outputs, which are easier to access but harder to verify than traditional Maven interfaces, could lead to mistakes in AI for war planning [1].

Pentagon Clash With Anthropic Highlights Autonomous Weapon Systems Debate

In late February, the Pentagon labeled Anthropic a supply chain risk after the company refused to grant unconditional access to its Claude models, insisting they should not be used for mass surveillance of Americans or fully autonomous weapons [2]. Defense Secretary Pete Hegseth described Anthropic's stance as an attempt to "seize veto power over the operational decisions of the United States military" [4]. Anthropic CEO Dario Amodei stated his AI systems are not ready to produce fully autonomous weapons reliable enough to be safe [4]. Despite the blacklisting, Claude continues to be used for intelligence analysis and administrative processes in strikes, according to people familiar with the situation [4]. The military is working to reach the point where it can identify 1,000 targets not in a day but in a single hour, and is developing capabilities to put AI directly into one-way attack drones for navigation and target location even when communications are severed [4].

Long-Term Implications for AI War Planning and National Security

The effective use of automated systems depends on extensive infrastructure and skilled personnel built over decades, according to scholars studying strategic technology [3]. While large language models enable faster intelligence processing, success or failure in war typically depends on the people using the technology rather than the machines themselves. Adm. Brad Cooper, leader of US Central Command, stated that AI systems help "sift through vast amounts of data in seconds" so leaders can "make smarter decisions faster than the enemy can react," but emphasized that "humans will always make final decisions on what to shoot" [5]. The Pentagon is pursuing other companies to provide access to AI useful in targeting, with OpenAI announcing a deal to provide services on the same day the Anthropic blacklisting was announced [4]. The demand for oversight of military AI continues to grow as lawmakers call for ethical guardrails and transparency about how much control is ceded to the technology in life-or-death decisions [5].

References

Summarized by Navi
