Vast Data expands AI Operating System with global control plane and zero-trust framework

6 Sources

[1]

Unified data platform is essential for AI at scale, says Vast - SiliconANGLE

AI at scale is demanding a fundamentally different data architecture Organizations adopting artificial intelligence soon discover the twin challenges of AI-scale data performance and global data orchestration. At that level, only a unified data platform can control capacity and policy across distributed environments. Lack of centralized control forces organizations to rely on reactive capacity planning and siloed monitoring tools -- introducing risk and escalating operational cost. What's needed instead is both a high-performance data engine, as well as a unified operating and intelligence layer that delivers manageability on a global scale, according to Jonsi Stefansson (pictured), general manager of cloud at Vast Data Inc. "There is [a] certain level of supply chain crisis in the world today," Stefansson noted. "You have to have the flexibility to actually figure out: Where do I have compute availability? Where do I have GPU availability? Where does my data reside? [At Vast] we want to solve exactly that." Stefansson spoke with theCUBE's Dave Vellante and Rebecca Knight at Vast Forward 2026, during an exclusive broadcast on theCUBE, SiliconANGLE Media's livestreaming studio. They discussed how to address the performance and scale problems associated with raw data storage for AI through a unified data platform, as well as solving the fragmentation problem that emerges as infrastructure becomes distributed. (* Disclosure below.) Disconnected storage models were never built for AI scale. A range of enterprises have refocused their efforts on file and object storage to reflect the move away from siloed platforms. But what organizations really need is a unified platform uniting storage and the operating system, Stefansson explained. "Storage is the key, but ultimately it is the AI operating system capabilities of unifying or consolidating your entire AI pipeline into a single platform that is right next to the storage [that's needed]," Stefansson said. 
"You can basically start contextualizing all your unstructured data within the same platform without having to migrate it." But the shift isn't just about reducing copies or chasing lower costs. Instead, it's about giving enterprises the ability to dynamically place data and compute wherever opportunity appears -- without losing control of context or blowing up storage footprints, according to Stefansson. But the focus is squarely on efficiency, not total reinvention. "We are not breaching the laws of physics," he noted, in regards to the realities of moving massive AI data sets across clouds and regions. "We're just making it more efficient." Here's the complete video interview, part of SiliconANGLE's and theCUBE's coverage of Vast Forward:

[2]

Vast AI Operating System expands with strategic partners - SiliconANGLE

Vast bets big on unified AI and cybersecurity with expanding partner ecosystem Enterprises pushing artificial intelligence from trial to production are learning that infrastructure and cybersecurity are no longer distinct, isolated disciplines. Now, Vast Data Inc., a provider of disaggregated, high-performance data solutions, is addressing this reality through an expanding partner ecosystem built around expanding its proprietary AI Operating System. That AI Operating System platform -- the company's bid to become the backbone of enterprise AI infrastructure -- is built around two staple pillars, according to John Mao (pictured), vice president of business development and alliances at Vast. The first is tight integration with hardware vendors across compute, storage, and networking, and the second is a burgeoning layer of application and model partnerships, he explained. Both are expanding through the newly announced Cosmos partnership program, a global community of developers, builders and AI experts. "You can't go to production as an enterprise and have an accident when things like this leak out or people access the wrong information. Or maybe even basic things ... like supply chain risk -- at the hardware level you're familiar [with it], but at the software level it's the same. Now it's within models; it's also the same," Mao explained. "It's a bigger surface area for the enterprise to have to wrestle with on top of all the new technologies. I think they will start to fuse and become one -- not one and the same -- but they have to be thought of together as a single system." Mao spoke with theCUBE's Dave Vellante and Rebecca Knight at Vast Forward 2026, during an exclusive broadcast on theCUBE, SiliconANGLE Media's livestreaming studio. They explored Vast's role in building a unified AI operating system through strategic hardware, software and security partnerships. (* Disclosure below.) At its first dedicated user conference this week, Vast Data Inc. 
unveiled a series of ecosystem expansions aimed at strengthening enterprise AI deployments. Highlights included the announcement of native integration with CrowdStrike's Falcon program, focusing on two of the most critical security risks in enterprise AI adoption: data access control and data exfiltration, according to Mao. "There's CrowdStrike agents that can come into our platform. We can also integrate them into our pipeline by invoking specific services that may even be hosted remotely," he said. "There's lots of different paths in which customers could eventually consume this, but it is about inserting different capabilities. We're not naive to think we can do everything. We're not a security expert. We're not doing all of the things that those companies do in terms of tracking, bad actors and things that are out there from a software perspective, so we need to lean on them." Rather than attempting to build a monolithic stack, Vast is clearly positioning its AI Operating System as an open foundation that integrates specialized capabilities from strategic partners. The company also announced that Twelve Labs Inc.'s technology will be brought onto the AI Operating System to serve the likes of regulated industries and financial institutions, as well as enterprises in sectors such as fraud prevention and retail analytics, Mao noted. But the company views these additions as only the start of a much broader expansion. "We launched with about 60 partners this week," Mao said. "I want to see that number become 600, and then, eventually, 6,000."

[3]

Production-ready AI infrastructure in focus - SiliconANGLE

Inside Cisco and Vast's play to simplify -- and secure -- production-ready AI infrastructure As enterprises race to deploy artificial intelligence, the focus is shifting from isolated tools to production-ready AI infrastructure that can deliver results at scale. In the process, companies are rethinking not just their technology architecture, but also the operating models needed to support AI in the real world. For Cisco Systems Inc. and Vast Data Inc., which builds scalable storage for AI and analytics, this new landscape brings an opportunity to collaborate on enterprise deployments. With the rise of AI, a new infrastructure model so fragile and complex has emerged that even the world's most sophisticated information technology teams are struggling to keep it all standing, according to Danny McGinniss (pictured, right), vice president of product management for the Compute Business Unit at Cisco. "The [AI factory question] was: 'How do we provide not just an infrastructure stack to get the workload up and running, but [also] provide a security posture and all the observability tools around it to really get it ready for production?'" McGinniss said. "That's the concept. When we say secure AI factory, that's really what we mean." McGinniss and John Mao (left), vice president of business development and alliances at Vast, spoke with theCUBE's Dave Vellante and Rebecca Knight at Vast Forward 2026, during an exclusive broadcast on theCUBE, SiliconANGLE Media's livestreaming studio. They discussed production-ready AI infrastructure and the enterprise shift toward AI-driven operations. (* Disclosure below.) Reference architectures are increasingly centered on networking, which has become the lifeblood of modern AI infrastructure projects. The broader goal is to create more integrated infrastructure that simplifies adoption as enterprises navigate new data center demands, according to Mao. 
"You think about all [that the enterprises] have to deal with when they think about AI infrastructure and AI solutions, from new data center considerations, liquid cooling, going to like a hundred kilowatt racks, to new types of infrastructure, new speeds and networking," Mao said. "All of this is complex, and many enterprises don't have the skill sets and ... the experience there in the industry." And the environment is changing so rapidly that even a single driver update or container-layer change can force teams to reset and reevaluate the entire stack, according to McGinniss. Many customers simply do not have the time -- or the resources -- to keep pace. "At the same time, they're trying to focus on how to transform a business. They're thinking about, how do we actually use AI to give us a competitive advantage?" he said. "There's a whole bunch of thought and resources being spent there." Past technology waves such as virtualization, cloud and containers were largely about making IT more efficient, lowering costs and introducing new consumption models, McGinniss explained. AI is different because organizations now see it as a source of competitive advantage. "You're rewriting your entire operational model around the technology," he said. "Before it was [about using] technology to be more efficient. Now we're rewriting the way the company works around the technology available to us." Of course, different enterprises are at very different stages of the AI journey, meaning conversations vary depending on where each organization is starting. Overwhelming as the considerations may be, Cisco and Vast are working to give customers a stable foundation for AI in production -- not just tools, but an operating model. This comes at a time where boardroom conversations have clearly moved away from seeing AI as anything less than inevitable, according to Mao. "Most of the senior leadership that we speak with -- it's not a question of if they will do this," Mao noted. 
"It's a question of when and how." Stay tuned for the complete video interview, part of SiliconANGLE's and theCUBE's coverage of Vast Forward 2026.

[4]

Vast Data expands AI Operating System with global control plane, zero-trust agent framework and deeper Nvidia integration - SiliconANGLE

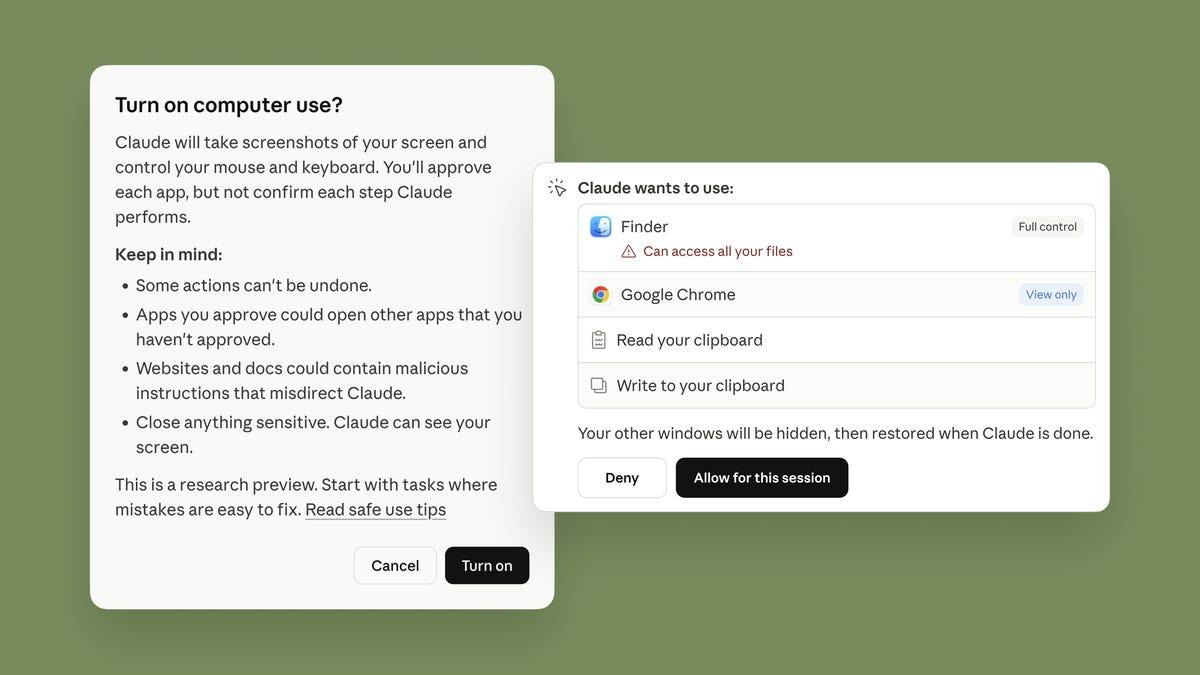

Vast Data expands AI Operating System with global control plane, zero-trust agent framework and deeper Nvidia integration Vast Data Inc. continues its evolution from a storage provider to an artificial intelligence infrastructure platform today with a series of announcements at its Forward 2026 conference, highlighted by the broadest expansion yet of what it calls its AI Operating System. They include the introduction of a global control plane for hybrid and multicloud deployments, a zero-trust framework for agentic AI systems, deeper integration with Nvidia Corp.'s accelerated computing stack, and new ecosystem partnerships spanning video intelligence, cybersecurity and cloud services. The company is betting that enterprises building mission-critical AI systems will prioritize tightly integrated data, compute, governance and orchestration under a single operating model. "Data in particular is critical to AI strategies," said Vast co-founder Jeff Denworth, "especially as enterprises expand inference pipelines and bring regulated and enterprise data into AI-driven workflows." At the center of the infrastructure announcements is Polaris, a Kubernetes-based global control plane designed to orchestrate Vast clusters across public cloud, neocloud and on-premises environments. As AI training, inference and data collection increasingly take place in different geographies and under varying compliance regimes, enterprises are wrestling with operational sprawl, Vast said. Polaris introduces a centralized management layer that provisions, upgrades and governs distributed Vast environments while maintaining local data paths. Polaris evolved from a cloud lifecycle manager into a broader orchestration framework capable of connecting hybrid deployments through lightweight agents rather than full-stack installations everywhere, said Jonsi Stefansson, Vast's general manager of cloud. 
The architecture centralizes intelligence while preserving distributed execution, enabling global policy management, fleet visibility and nondisruptive upgrades without forcing data to be centralized. Polaris integrates with major hyperscalers, including Microsoft Corp., Amazon Web Services Inc., Google LLC and Oracle Corp. It's positioned as complementary to its DataSpace global namespace, which abstracts data location. Polaris abstracts infrastructure location, allowing AI pipelines to operate against what appears to be a single logical environment. Vast is also introducing two new services - PolicyEngine and TuningEngine -- to address what executives described as the trust barrier to large-scale enterprise AI adoption. PolicyEngine acts as an inline policy enforcement point across the AI Operating System. It governs agent access to shared memory, tools, knowledge bases and other agents using fine-grained permissions and AI-derived context. Enforcement occurs before actions are executed, and the system generates tamper-proof audit logs to support replay, explainability and regulatory compliance. Denworth described the approach as mediating every type of input and output within the system, enabling redaction or transformation of sensitive data before exposure to models or agents. The goal is to maintain a zero-trust posture across AI workflows while preserving operational flexibility. TuningEngine complements the control plane by managing model evolution. It collects telemetry and feedback from agentic workflows, processes data through extract-transform-load pipelines, and feeds curated outputs into fine-tuning frameworks such as LoRA, supervised fine-tuning and reinforcement learning. The result is a closed-loop system in which candidate models are trained, benchmarked and redeployed within the same platform. 
By embedding fine-tuning inside the enterprise boundary, Vast aims to support customers that cannot rely on hyperscaler-hosted AI labs but still require continuous model improvement. "If we don't handle fine-tuning, then that's going to be a security gap," Denworth said. Both engines will roll out over the course of the year. Denworth said the announcements are being made in advance so customer input can be incorporated. Vast also deepened its collaboration with Nvidia, introducing CNode-X, a new graphics processing unit-accelerated server configuration that runs the Vast AI Operating System directly on Nvidia-powered infrastructure. The servers will be offered through partners including Cisco Systems Inc. and Supermicro Inc. The architecture embeds Nvidia Compute Unified Device Architecture libraries into core Vast services, accelerating real-time SQL analytics, vector search, retrieval-augmented generation pipelines and inference workloads. The system integrates Nvidia cuDF DataFrame library for GPU-accelerated SQL execution via the Sirius open-source query engine, Nvidia cuVS for vector search acceleration and Nvidia Inference Microservices for scalable inference pipelines. Vast said early benchmarks of the Sirius integration showed up to 44% reduction in query time and 80% reduction in query cost. The platform also supports Nvidia Context Memory Storage and BlueField-4 data processing units to accelerate shared key-value cache access in long-context inference scenarios. Denworth characterized the work with Nvidia as extensive, noting that the companies are collaborating on more than two dozen joint engineering initiatives. In the application ecosystem, Vast announced a partnership with TwelveLabs Inc., a developer of multimodal video foundation models including Marengo for embeddings and Pegasus for deep video understanding. The companies will provide a customer-managed deployment path for TwelveLabs' models on the Vast AI Operating System. 
Historically delivered primarily through public cloud services, the models will now be deployable in on-premises and sovereign environments where data residency, governance and cost constraints limit cloud-only architectures. Vast also announced a strategic partnership with CrowdStrike Holdings Inc. to integrate enterprise threat detection and response into the AI lifecycle. The integration connects telemetry from the Vast AI Operating System to the CrowdStrike Falcon platform, enabling coordinated detection across data ingestion, model training and runtime inference environments. The companies said the joint solution helps mitigate risks such as data poisoning, unauthorized access and malware injection into AI workflows. Executives described the partnership as extending across infrastructure, workloads and data layers, and as complementary to both companies' existing collaborations with Nvidia. Finally, Vast expanded its Cosmos Community for third-party partners into a unified global program encompassing channel, cloud, technology alliance and systems integration partners. The new framework formalizes routes to market and provides centralized training, enablement and governance resources through a partner portal. Executives said the goal is to make the AI Operating System extensible in both "northbound" and "southbound" directions, meaning that it covers the gamut from hardware components to AI frameworks, MLOps platforms, analytics engines and industry-specific applications. Vast is scaling up rapidly. Denworth said the 10-year-old firm has now surpassed $4 billion in cumulative software bookings and exceeded $500 million in contracted annual recurring revenue. The company said it tripled total sales in its most recent fiscal year and reached operating profitability while remaining free-cash-flow-positive.

[5]

VAST Data unveils 'thinking machine' AI upgrades

With the introduction of PolicyEngine and TuningEngine, VAST Data said its AI OS now enables a closed operational loop that observes, reasons, acts, evaluates and improves Jeff Denworth, co-founder at VAST Data/Image: Supplied At VAST Forward 2026, VAST Data unveiled two new computing services -- VAST Data PolicyEngine and VAST Data TuningEngine -- designed to advance the capabilities of its AI Operating System and support organisations scaling mission-critical AI deployments. The two services are engineered to work in tandem, enabling AI systems that are governed, explainable and continuously learning. PolicyEngine is focused on governing agentic activity, while TuningEngine manages model tuning and reinforcement learning workflows. Together, they create automated learning loops designed to remain aligned with organisational policies and expectations. "Just as people are always learning, so should tomorrow's applications," said Jeff Denworth, co-founder at VAST Data. "With the introduction of PolicyEngine and TuningEngine, the VAST AI Operating System has become a thinking machine that customers can deploy wherever they compute - a machine that safeguards every interaction and learns from every outcome, bringing the power of AI within reach of every organization." Strengthening governance in agentic AI As AI agents increasingly access enterprise data and generate new information -- from model outputs to agent-to-agent communications -- governance has become a critical requirement. Without granular controls and auditability, risks such as data leakage and policy violations increase. VAST's PolicyEngine addresses this challenge through inline policy enforcement that governs agent access to shared memory, tools, knowledge bases and other agents. The system applies fine-grained, explicit permissions and AI-derived contextual controls before actions are executed. 
It also maintains tamper-proof logs and traceability, reinforcing a zero-trust operating model designed to ensure that agent decisions remain observable, explainable and auditable. Enabling continuous model improvement Complementing PolicyEngine, the TuningEngine extends VAST's AgentEngine -- the AI OS's serverless agentic runtime -- by introducing structured learning loops. While AgentEngine supports multi-agent orchestration and model deployment, TuningEngine captures performance data from agent workflows and uses curated feedback to continuously improve models. Using techniques such as LoRA fine tuning, supervised fine tuning and reinforcement learning, TuningEngine automates data ingestion, candidate model generation and benchmarking within the VAST AI OS. Approved models can then be deployed manually or automatically, initiating new cycles of improvement based on future interactions. The TuningEngine will also integrate with NVIDIA's NeMo Data Designer to support training and fine-tuning of NVIDIA Nemotron open models, expanding VAST's collaboration with NVIDIA. With the introduction of PolicyEngine and TuningEngine, VAST Data said its AI OS now enables a closed operational loop that observes, reasons, acts, evaluates and improves -- while embedding governance and security at every stage. The new services are expected to be available by the end of 2026.

[6]

VAST Data Unveils A Platform For Secure, Trusted, And Self-Learning Agentic AI S...

Today at VAST Forward 2026, VAST Data, the AI Operating System company, announced the VAST Data PolicyEngine and VAST Data TuningEngine, two new computing services that will allow the next generation of the VAST AI Operating System to deliver key requirements for organisations looking to scale their mission-critical AI initiatives. Specifically, PolicyEngine and TuningEngine work in tandem within the VAST DataEngine to create AI systems and interactions that are trusted, explainable, and continuously learning. PolicyEngine governs agentic activity and TuningEngine manages model tuning, working in conjunction to power automatic learning loops that remain aligned with organisational expectations. "Just as people are always learning, so should tomorrow's applications," said Jeff Denworth, Co-Founder at VAST Data. "With the introduction of PolicyEngine and TuningEngine, the VAST AI Operating System has become a thinking machine that customers can deploy wherever they compute - a machine that safeguards every interaction and learns from every outcome, bringing the power of AI within reach of every organisation." AI workflows and agents are increasingly accessing organisational data, using it to produce more information in the form of generated responses, agent-to-agent communications, event logs, and more. Without fine-grained controls on what agents can access and how they communicate with other agents, tools, and remote data products, the chance for data spillage and leakage rises greatly. Without strict controls on how data is accessed and how services communicate, and without tools to log every aspect of an agentic workflow, AI cannot be fully trusted. The VAST PolicyEngine resolves these concerns via an inline policy enforcement engine to safeguard every aspect of agentic interaction and communication. 
PolicyEngine governs agents' access to shared memory, external tools, knowledge bases, or other agents by permitting access, actions, and communications according to fine-grained, explicit permissions, as well as AI-derived context. Because enforcement occurs before actions execute, and because the system maintains extensive, tamper-proof traces and logs, the system maintains a zero-trust operating posture to ensure that decisions and actions remain observable, explainable, and auditable. VAST AgentEngine is the agentic runtime of the AI OS. This serverless computing environment is simple to program and coordinates multi-agent workflows, model invocation, and agentic tool usage within the VAST AI OS. While AgentEngine has been suitable for the deployment of static models, the completeness of the AI OS stack allows the platform to also support 'learning loops' that use all of the system's telemetry, as well as agent and model feedback, to support fine tuning and reinforcement learning pipelines. The VAST TuningEngine captures outcomes from agentic pipelines and utilises curated feedback to enhance model performance over time. Using popular methods such as LoRA fine tuning, supervised fine tuning, and reinforcement learning, TuningEngine pipelines automatically ingest that data, process it, and suggest new candidate models. Each new candidate can be evaluated and benchmarked within the VAST AI OS, and then manually or automatically deployed into the platform. This will kick off a new learning loop that uses future interactions to improve on the newly deployed, updated model. These new capabilities represent a massive step toward building systems that automatically evolve as they interact with data from the natural world. VAST Data has been working on building such a system since 2016, and unveiled the full extent of its vision in 2023. 
With today's announcement, VAST AI OS finally creates a closed operational computing loop that observes, reasons, acts, evaluates, and improves - all while fortifying security and explainability by unifying and safeguarding all activities in one unified system. The VAST PolicyEngine and TuningEngine are slated for release by the end of 2026.

Vast Data unveiled major expansions to its AI Operating System at Forward 2026, introducing Polaris global control plane, PolicyEngine for zero-trust governance, and TuningEngine for continuous model improvement. The company launched 60 partnerships including Cisco, Nvidia, and CrowdStrike to address enterprise AI infrastructure challenges.

Vast Data Transforms AI Infrastructure with Unified Platform

Vast Data is positioning itself as more than a storage provider, evolving into a comprehensive AI infrastructure platform with announcements at its Forward 2026 conference that address the growing complexity enterprises face when deploying AI at scale [4]. The company's AI Operating System now encompasses a unified data platform designed to control capacity and policy across distributed environments, tackling what executives describe as twin challenges: AI-scale data performance and global data orchestration [1].

Organizations adopting artificial intelligence quickly discover that disconnected storage models were never built for AI at scale. According to Jonsi Stefansson, general manager of cloud at Vast Data, enterprises need both a high-performance data engine and a unified operating layer that delivers manageability on a global scale [1]. The shift addresses a supply chain crisis where organizations must determine compute availability, GPU availability, and data residency simultaneously.

Polaris Control Plane Orchestrates Hybrid and Multi-Cloud AI Deployments

At the center of Vast Data's infrastructure expansion is Polaris, a Kubernetes-based global control plane designed to orchestrate Vast clusters across public cloud, neocloud, and on-premises environments [4]. As AI training, inference, and data collection increasingly occur across different geographies under varying compliance regimes, enterprises struggle with operational sprawl. Polaris introduces centralized management that provisions, upgrades, and governs distributed Vast environments while maintaining local data paths.

The architecture evolved from a cloud lifecycle manager into a broader orchestration framework capable of connecting hybrid deployments through lightweight agents rather than full-stack installations. This approach centralizes intelligence while preserving distributed execution, enabling global policy management and fleet visibility without forcing data centralization [4]. Polaris integrates with major hyperscalers including Microsoft, Amazon Web Services, Google, and Oracle, positioning itself as complementary to Vast's DataSpace global namespace.

Zero-Trust Agent Framework Addresses Enterprise AI Security

Vast Data introduced PolicyEngine and TuningEngine to address what executives describe as the trust barrier preventing large-scale enterprise AI adoption [5]. PolicyEngine acts as an inline policy enforcement point across the AI Operating System, governing agent access to shared memory, tools, knowledge bases, and other agents using fine-grained permissions and AI-derived context [4].

Enforcement occurs before actions execute, and the system generates tamper-proof audit logs to support replay, explainability, and regulatory compliance. Jeff Denworth, co-founder at Vast Data, described the approach as mediating every input and output within the system, enabling redaction or transformation of sensitive data before exposure to models or agents [4].

This zero-trust operating model ensures agent decisions remain observable, explainable, and auditable.
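The enforce-before-execute pattern described above can be illustrated with a minimal Python sketch. It is not Vast's API: `Request`, `AuditLog`, and `PolicyGate` are hypothetical names invented for this example, the policy is a plain set of explicit grants (the AI-derived context is not modeled), and the hash-chained log is tamper-evident rather than tamper-proof, meaning later edits are detectable but not prevented.

```python
import hashlib
import json
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Request:
    """Hypothetical description of what an agent wants to do."""
    agent: str
    action: str
    resource: str


@dataclass
class AuditLog:
    """Append-only log in which each entry commits to the previous entry's hash."""
    entries: list = field(default_factory=list)

    def append(self, record: dict) -> None:
        prev_hash = self.entries[-1]["hash"] if self.entries else "0" * 64
        payload = json.dumps(record, sort_keys=True)
        digest = hashlib.sha256((prev_hash + payload).encode()).hexdigest()
        self.entries.append({"record": record, "prev": prev_hash, "hash": digest})

    def verify(self) -> bool:
        """Recompute the chain; any edited record breaks every later hash."""
        prev_hash = "0" * 64
        for entry in self.entries:
            payload = json.dumps(entry["record"], sort_keys=True)
            expected = hashlib.sha256((prev_hash + payload).encode()).hexdigest()
            if entry["prev"] != prev_hash or entry["hash"] != expected:
                return False
            prev_hash = entry["hash"]
        return True


class PolicyGate:
    """Checks an explicit grant and logs the decision before running anything."""

    def __init__(self, allowed: set, log: AuditLog):
        self.allowed = allowed  # set of (agent, action, resource) tuples
        self.log = log

    def execute(self, request: Request, operation):
        key = (request.agent, request.action, request.resource)
        permitted = key in self.allowed
        # The decision is recorded whether or not the action is allowed.
        self.log.append({
            "agent": request.agent,
            "action": request.action,
            "resource": request.resource,
            "permitted": permitted,
        })
        if not permitted:
            raise PermissionError(f"{request.agent} may not {request.action} {request.resource}")
        return operation()  # runs only after the check has passed


log = AuditLog()
gate = PolicyGate({("summarizer", "read", "kb:public")}, log)
result = gate.execute(Request("summarizer", "read", "kb:public"), lambda: "public doc")
```

Routing every call through `execute` is what makes the check inline: the wrapped operation cannot run unless the grant exists, and denied attempts still leave a record for later replay.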

Continuous AI Model Improvement Through Automated Learning Loops

TuningEngine complements PolicyEngine's governance by managing model evolution and enabling continuous model improvement [5]. The service collects telemetry and feedback from agentic workflows, processes data through extract-transform-load pipelines, and feeds curated outputs into fine-tuning frameworks including LoRA, supervised fine-tuning, and reinforcement learning [4].

This creates a closed-loop system where candidate models are trained, benchmarked, and redeployed within the same platform. By embedding fine-tuning inside the enterprise boundary, Vast Data supports customers that cannot rely on hyperscaler-hosted AI labs but still require continuous model improvement. With PolicyEngine and TuningEngine working in tandem, the AI Operating System now enables a closed operational loop that observes, reasons, acts, evaluates, and improves [5].
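The collect, curate, train, benchmark, and redeploy cycle can be sketched in a few lines of Python. This is a toy under stated assumptions, not Vast's implementation: every function name here is hypothetical, a "model" is just a function from prompt to answer, and a lookup table stands in for the real fine-tuning step (LoRA, supervised fine-tuning, or reinforcement learning).

```python
from typing import Callable, List, Tuple

Model = Callable[[str], str]
Feedback = Tuple[str, str, float]  # (prompt, response, feedback score in [0, 1])


def curate(feedback: List[Feedback], min_score: float) -> List[Tuple[str, str]]:
    """Transform step: keep only interactions rated at or above min_score."""
    return [(prompt, response) for prompt, response, score in feedback if score >= min_score]


def train_candidate(base: Model, data: List[Tuple[str, str]]) -> Model:
    """Stand-in for fine-tuning: memorize curated answers, otherwise defer to base."""
    table = dict(data)
    return lambda prompt: table.get(prompt, base(prompt))


def benchmark(model: Model, eval_set: List[Tuple[str, str]]) -> float:
    """Fraction of evaluation prompts answered exactly as expected."""
    return sum(model(prompt) == expected for prompt, expected in eval_set) / len(eval_set)


def tuning_cycle(deployed: Model, feedback: List[Feedback],
                 eval_set: List[Tuple[str, str]], min_score: float = 0.8) -> Model:
    """One loop iteration: promote the candidate only if it beats the deployed model."""
    candidate = train_candidate(deployed, curate(feedback, min_score))
    if benchmark(candidate, eval_set) > benchmark(deployed, eval_set):
        return candidate
    return deployed


base: Model = lambda prompt: "unknown"
feedback = [("capital of France?", "Paris", 1.0), ("capital of Peru?", "Quito", 0.1)]
eval_set = [("capital of France?", "Paris"), ("capital of Peru?", "Lima")]
deployed = tuning_cycle(base, feedback, eval_set)
```

Gating promotion on a benchmark is the step that keeps the loop from drifting: the low-rated "Quito" answer is filtered out during curation, and a candidate that fails to improve on the evaluation set never replaces the deployed model.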

Strategic Partnerships Expand Production-Ready AI Infrastructure

Vast Data launched the Cosmos partnership program, a global community of developers, builders, and AI experts, starting with approximately 60 partners [2]. John Mao, vice president of business development and alliances at Vast Data, stated the goal is to grow this number to 600, then eventually 6,000 partners. The company announced native integration with CrowdStrike's Falcon program, focusing on critical cybersecurity risks in enterprise AI deployments: data access control and data exfiltration [2].

Vast Data also deepened collaboration with Nvidia, introducing CNode-X, a GPU-accelerated server configuration that runs the AI Operating System directly on Nvidia-powered infrastructure [4]. The servers will be offered through partners including Cisco and Supermicro. The architecture embeds Nvidia CUDA libraries into core Vast services, accelerating real-time SQL analytics, vector search, retrieval-augmented generation pipelines, and inference workloads.

Cisco Collaboration Simplifies Enterprise AI Deployments

Cisco and Vast Data are collaborating on production-ready AI infrastructure that addresses the fragile complexity emerging in modern deployments [3]. Danny McGinniss, vice president of product management for the Compute Business Unit at Cisco, described the aim of a secure AI factory as providing not just an infrastructure stack but also the security posture and observability tools needed to make a workload production-ready. Reference architectures increasingly center on networking, which has become the lifeblood of modern AI infrastructure projects. According to Mao, the goal is to create integrated infrastructure that simplifies adoption as enterprises navigate new data center demands, from liquid cooling to hundred-kilowatt racks and new networking speeds [3].

The environment changes so rapidly that even a single driver update or container-layer change can force teams to reset the entire stack. Many customers lack the time or resources to keep pace while simultaneously trying to transform their business and gain competitive advantage through AI. According to Mao, most senior leadership no longer questions if they will pursue AI, but when and how [3]. Both PolicyEngine and TuningEngine are expected to be available by the end of 2026 [5].

Summarized by

Navi
