AI autocomplete covertly shifts human opinions on social issues, even when users ignore suggestions

3 Sources

[1]

AI autocomplete doesn't just change how you write. It changes how you think

Autocomplete suggestions are, perhaps, one of the most annoying "useful" tools for writing. Increasingly integrated into anything online that requires text input, autocomplete harnesses artificial intelligence to suggest what to write in emails, surveys and more. The tools are meant to save time (though many find that assessing and rewriting the suggested text takes longer than writing it from scratch). But these AI tools can also change how you express yourself. An AI writing assistant could make your writing sound more polite, for example, or more boring. And now a new study led by researchers at Cornell University suggests AI autocomplete can even change the way you think. "Autocomplete is everywhere now," said Mor Naaman, a professor of information science at Cornell, in a statement. The research builds on work by Naaman and his colleagues published in 2023 that suggested short autocomplete suggestions could sway opinions. Since then, the use of such tools has exploded. "It has become clear that bias explicitly built into AI interactions is a very plausible scenario," he said. The researchers asked participants to fill in an online survey with questions about hot-button social and political issues. Some were prompted with an AI autocomplete answer that was deliberately biased toward one side of the issue.
For example, participants asked whether they agreed that the death penalty should be legal might receive an AI suggestion disagreeing. Across all the topics in the survey, participants who saw the AI autocomplete prompts reported attitudes more in line with the AI's position, including people who didn't use the AI's suggested text at all. Interestingly, participants didn't tend to think the AI autocomplete suggestions were biased, or to notice that their own thinking on an issue had changed over the course of the study. Warning participants that the AI might expose them to misinformation didn't temper the persuasive effect, either. "We told people before, and after, to be careful, that the AI is going to be (or was) biased, and nothing helped," Naaman said. "Their attitudes about the issues still shifted."

[2]

AI autocomplete may subtly shape views on social issues

Suggestions from AI chatbots can nudge people's views, even when users ignore them.

Using AI to auto-complete written communications may be tempting. But large language models may also auto-complete thoughts, researchers report March 11 in Science Advances. Few people realize that generative AI chatbots are pushing them to think a certain way, says information scientist Mor Naaman of Cornell University. "It's the subtlest of manipulations." Such manipulation may not matter much when letting AI agents such as ChatGPT and Claude auto-complete a banal email. But when people use an AI's auto-complete function to opine on weightier societal matters, such as whether standardized testing should be used in education, whether the death penalty should be illegal or whether felons should be allowed to vote (three issues explored in the study), the model's bias can have significant societal impact. Large swaths of people using the same biased model could sway an entire population's position on a given policy or politician. To flip a single election's outcome, "you only need 20,000 people in Pennsylvania," Naaman says. He and his team surveyed over 2,500 participants across two experiments to find out how an AI's auto-complete feature might influence their thinking on societal issues. Participants wrote short essays explaining their stance on a given issue, with some individuals writing the essays without assistance and others receiving AI suggestions. The researchers also coached the AI to be biased in a given direction. For instance, one essay prompt read "Should the death penalty be illegal?" A participant began their response with "In my view," and the AI auto-completed that sentence with "the death penalty should be illegal in America because it violates the Eighth Amendment, which prohibits cruel and unusual punishment."
Afterwards, participants were asked to rate their stance on the issue they wrote about on a scale from 1 (no) to 5 (yes), with 3 signaling "not sure." Participants exposed to the biased AI, including those who did not accept any of the AI's suggestions in their writing, moved almost half a point closer to the AI's position than those without such exposure. Yet roughly three-quarters of participants receiving AI support said the model's suggestions were "reasonable and balanced." How to inoculate people against covert AI manipulation remains unclear. Many chatbots display disclaimers, such as "ChatGPT can make mistakes. Check important info." But people remained strikingly susceptible to the study AI's persuasive power, even when Naaman and his team tested a similar disclaimer. "[AI] can have the effect of homogenizing our words and creativity, but also our thoughts," Naaman says. Given that risk, he only turns to AI for help after writing down his own thoughts. That way, he says, "at least I know that the seed [of the idea] is mine."

[3]

AI Autocomplete Covertly Shifts Human Opinions - Neuroscience News

Summary: AI-powered writing tools do more than speed up your typing; they may be subtly rewriting your worldviews. A large-scale study reveals that biased autocomplete suggestions can shift a user's stance on significant societal issues, such as the death penalty and fracking. In experiments involving over 2,500 participants, researchers found that users' opinions gravitated toward the AI's predetermined bias. Alarmingly, participants were largely unaware of this influence, and traditional "immunity" tactics, such as warning users about the bias beforehand or debriefing them afterward, failed to stop the shift in attitude. Artificial intelligence-powered writing tools such as autocomplete suggestions can clearly change the way people express themselves, but can they also change how they think? Cornell Tech researchers think so. In two large-scale experiments, participants were exposed to a biased AI writing assistant that provided autocomplete suggestions as they wrote about societal issues, such as whether the death penalty should be abolished or whether fracking should be allowed. Using pre- and post-experiment surveys, the researchers found that participants who used the biased AI had their views gravitate toward the AI's positions. What's more, participants were unaware of the shifts in their opinions, and explaining the AI's bias to them, either before or after the exercise, didn't mitigate the AI's influence. "Previous misinformation research has shown that warning people before they're exposed to misinformation, or debriefing them afterward, can provide 'immunity' against believing it," said Sterling Williams-Ceci, a doctoral candidate in information science. "So we were surprised because neither of those interventions actually reduced the extent to which people's attitudes shifted toward the AI's bias in this context." Williams-Ceci is the lead author of "Biased AI Writing Assistants Shift Users' Attitudes on Societal Issues."
This work extends a project started by co-author Maurice Jakesch, now assistant professor of computer science at Bauhaus University. Senior author Mor Naaman, professor of information science, said a couple of things have happened that made extending the group's previous research important. "For one, autocomplete is everywhere now," Naaman said. "It was less prevalent and limited to short completions three years ago, but these days Gmail, for example, will suggest writing entire emails on your behalf. Second, when we first wrote the paper, people were saying, 'Why would AI be purposefully biased?' But since then, it has become clear that bias explicitly built into AI interactions is a very plausible scenario." Naaman and the group also found in the latest work that biased AI suggestions have the power to shift attitudes "across different topics, and across different political leanings." In the two studies, together involving more than 2,500 people, the group consistently found that participants' attitudes shifted toward the biased AI suggestions. In one study, participants were asked to write a short essay for or against the use of standardized testing in education. Participants either saw biased autocomplete suggestions favoring testing or did not; a third group, instead of autocomplete suggestions, was shown a list of pro-testing arguments generated by the AI before the experiment, and these participants' attitudes did not shift as much. The second experiment broadened the scope, asking participants to write about politically consequential topics including the death penalty, fracking, genetically modified organisms and voting rights for felons. For each issue, the researchers engineered the AI suggestions to lean toward a predetermined position: liberal on the death penalty and GMOs, conservative on felons' voting rights and fracking. Additionally, some participants were made aware of the AI's bias, either before or after writing.
In every experiment, the researchers found that participants' views shifted in the direction of the AI's bias. The biggest surprise, Naaman said, was that mitigation measures did not work. "We told people before, and after, to be careful, that the AI is going to be (or was) biased, and nothing helped," Naaman said. "Their attitudes about the issues still shifted." It's well understood that people's attitudes influence their behaviors, and even that people's behavior shifts their attitudes, said Williams-Ceci. But here, the influence is covert: people do not notice it and are unable to resist it, she said, which can have serious consequences. "A lot of research has shown that large language models and AI applications are not just producing neutral information, but they also actually can produce very biased information, depending on how they were trained and implemented," said Williams-Ceci. "By doing that, there's a risk that these systems, inadvertently or purposefully, induce people to write biased viewpoints, which decades of psychology research has shown can in turn shift people's attitudes." Other co-authors are Advait Bhat, a doctoral student at the Paul G. Allen School of Computer Science and Engineering at the University of Washington; Cornell doctoral student Kowe Kadoma; and Lior Zalmanson, associate professor in the Coller School of Management at Tel Aviv University.

Funding: Support for this work came from the National Science Foundation and the German National Academic Foundation.

Author: Becka Bowyer
Source: Cornell University
Contact: Becka Bowyer, Cornell University

Original Research: Open access. "Biased AI Writing Assistants Shift Users' Attitudes on Societal Issues" by Sterling Williams-Ceci, Maurice Jakesch, Advait Bhat, Kowe Kadoma, Lior Zalmanson, and Mor Naaman. Science Advances. DOI: 10.1126/sciadv.adw5578

Abstract: Biased AI Writing Assistants Shift Users' Attitudes on Societal Issues

Artificial intelligence (AI) writing assistants powered by large language models (LLMs) are increasingly used to make autocomplete suggestions to people as they write text. Can these AI writing assistants affect people's attitudes in this process? In two large-scale preregistered experiments (N = 2582), we exposed participants writing about important societal issues to an AI writing assistant that provided biased autocomplete suggestions. When using the AI assistant, the attitudes participants expressed in a posttask survey converged toward the AI's position. However, a majority of participants were unaware of the AI suggestions' bias and their influence. Further, the influence of the AI writing assistant was stronger than the influence of similar suggestions presented as static text, showing that the influence is not fully explained by these suggestions increasing the accessibility of the biased information. Last, warning participants about assistants' bias before or after exposure does not mitigate the attitude-shift effect.

Cornell University researchers discovered that AI autocomplete tools can subtly influence how people think about major societal issues. In studies with over 2,500 participants, biased AI suggestions shifted opinions on topics like the death penalty and fracking, even among users who did not accept the AI's text. Warning participants about AI bias beforehand or debriefing them afterward failed to prevent the attitude shift.

AI Autocomplete Does More Than Speed Up Writing

AI autocomplete has become ubiquitous in digital communication, from Gmail to online surveys, promising to save time as users compose text. But Cornell University researchers have uncovered a troubling dimension to these AI writing assistants: they don't just change how people write, they also subtly shape views on social issues, covertly shifting opinions without users realizing it [1]. Published in Science Advances on March 11, the research reveals that biased AI suggestions can nudge people's positions on weighty topics like the death penalty, fracking, and voting rights for felons [2], [3].

Source: Neuroscience News

Widespread Manipulation of Thoughts Across Political Leanings

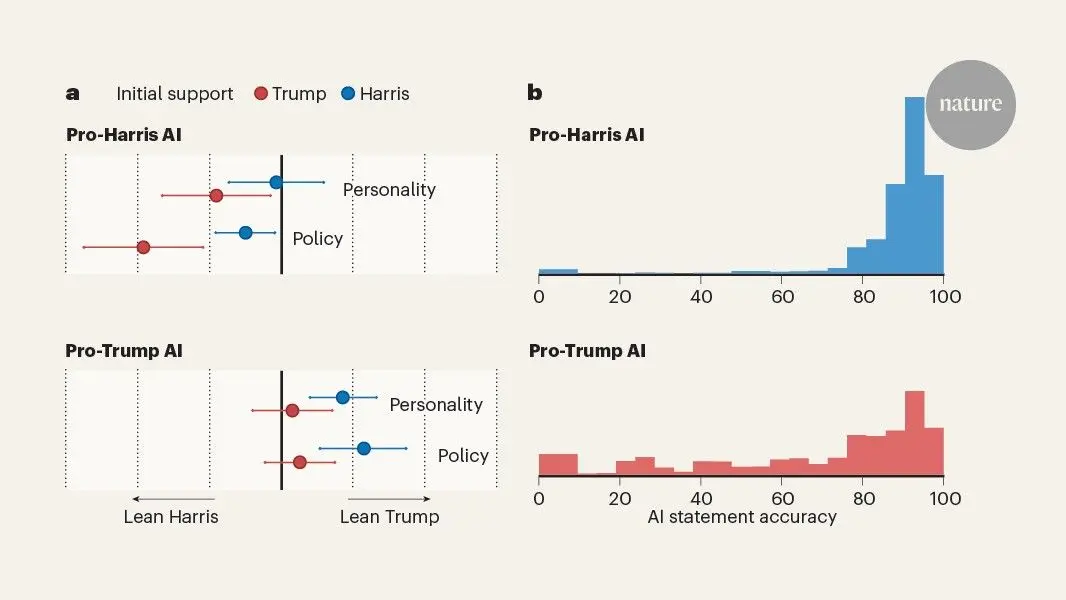

Mor Naaman, professor of information science at Cornell Tech, is the senior author of two large-scale experiments involving more than 2,500 participants that examined how biased AI writing assistants affect users' opinions [3]. Participants wrote short essays on politically consequential topics while some received biased AI autocomplete suggestions engineered to favor predetermined positions. For the death penalty and genetically modified organisms, the AI leaned liberal; for fracking and felons' voting rights, it skewed conservative [3]. The results were consistent: participants exposed to biased AI suggestions moved almost half a point closer to the AI's position on a 1-to-5 scale than those without such exposure [2].

What makes the persuasive power of AI particularly alarming is its stealth. The attitude shift occurred across different topics and political leanings, affecting even participants who did not use any of the AI's suggested text and typed their own [1]. "It's the subtlest of manipulations," Naaman said. Roughly three-quarters of participants who received AI support rated the suggestions as "reasonable and balanced," despite their deliberate bias [2].

Source: Science News

Mitigation Measures Failed to Prevent Attitude Shift

The Cornell University researchers tested whether traditional defenses against misinformation could protect users from AI influence. Previous research has shown that warning people before exposure to misinformation, or debriefing them afterward, can provide "immunity" against believing it. But these mitigation measures proved ineffective against AI autocomplete's influence [3]. "We told people before, and after, to be careful, that the AI is going to be (or was) biased, and nothing helped," Naaman explained. "Their attitudes about the issues still shifted" [1].

Lead author Sterling Williams-Ceci, a doctoral candidate in information science, said the team was surprised by this finding. The team also tested showing participants a list of pro-testing arguments, generated by the AI before the experiment, instead of real-time autocomplete suggestions. This approach produced a smaller attitude shift, suggesting that the interactive nature of writing tools amplifies their persuasive effect [3].

Political Outcomes and Homogenization of Thoughts at Stake

The implications extend far beyond individual user behavior. When large swaths of people use the same biased language models to write about societal issues, the collective impact could influence political outcomes. To flip an election's outcome, "you only need 20,000 people in Pennsylvania," Naaman pointed out [2]. The research builds on work by Naaman and colleagues published in 2023 that first suggested short autocomplete suggestions could sway opinions [1]. Since then, the use of such tools has exploded: Gmail now suggests writing entire emails, not just completing sentences [3].

Williams-Ceci emphasized that the influence is covert: people do not notice it and are unable to resist it, which can have serious consequences [3]. AI systems, whether inadvertently or purposefully, could push entire populations toward certain viewpoints, raising the risk that thinking itself becomes homogenized. "[AI] can have the effect of homogenizing our words and creativity, but also our thoughts," Naaman warned [2].

What Users Should Watch For

As AI writing assistants become standard features in digital communication, Naaman suggests a simple defensive habit: write down your own thoughts before turning to AI for help. "That way, at least I know that the seed [of the idea] is mine," he said [2]. While many AI chatbots display disclaimers like "ChatGPT can make mistakes. Check important info," the research shows that people remain strikingly susceptible despite a similar warning [2]. How to inoculate people against covert AI manipulation remains an open question, making it an urgent area for further investigation as AI becomes more deeply embedded in how we communicate and form opinions.

References

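[1] "AI autocomplete doesn't just change how you write. It changes how you think" (Scientific American)

[2] "AI autocomplete may subtly shape views on social issues"

[3] "AI Autocomplete Covertly Shifts Human Opinions" (Neuroscience News)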
