AI Face Models Apply for Jobs Running Deepfake Video Scams, Making 100 Calls Per Day

2 Sources

[1]

'100 Video Calls Per Day': Models Are Applying to Be the Face of AI Scams

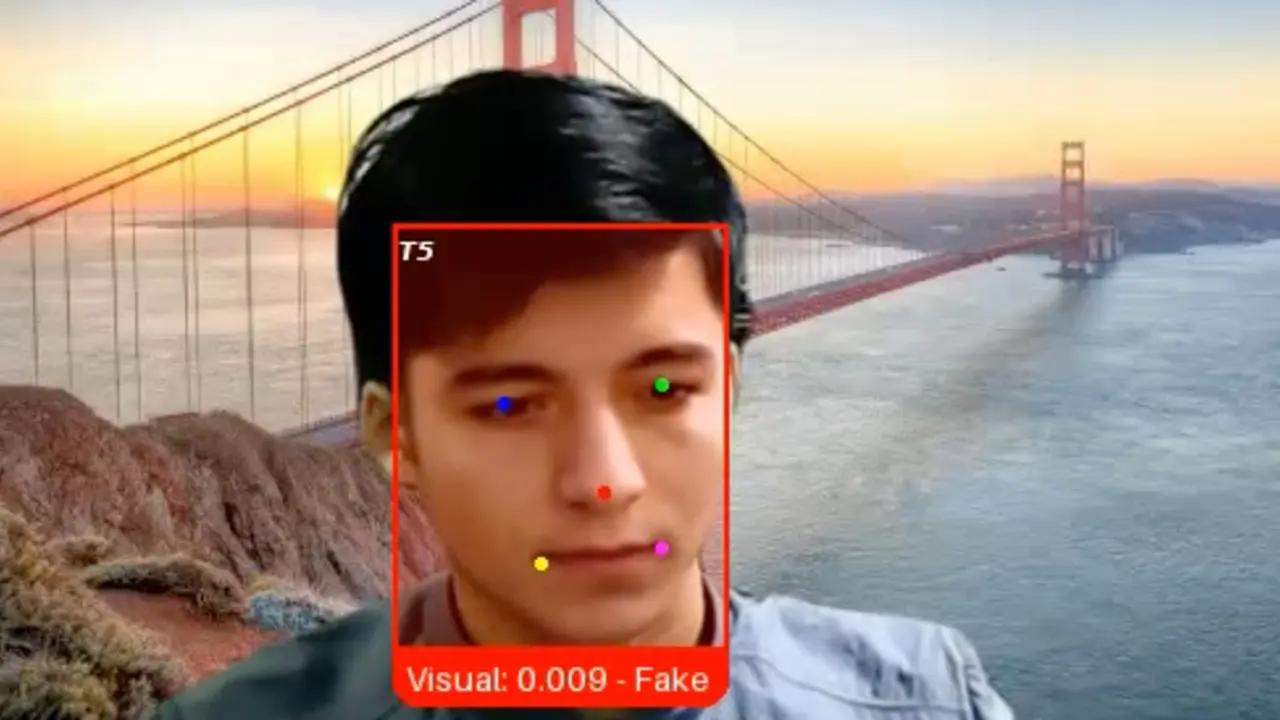

When applying for jobs, Angel talks up her language skills. "I can speak fluent English, I can speak good Chinese, I also speak Russian and Turkish," the glamorous, 24-year-old Uzbekistani woman explains in a selfie-style video made for recruiters. Angel had arrived in the Cambodian city of Sihanoukville that day, she said, and was ready to start work immediately. Those impressive language skills, however, have likely been put to use as part of elaborate "pig-butchering" scams targeting Americans. That's because, instead of applying for a conventional corporate job, Angel was putting herself forward to work as an "AI face model" -- sitting in front of a computer all day and making deepfake video calls to manipulate potential scam victims. Her application, which also required her height and weight, says she has already clocked up "1 year as an AI model." Angel is far from alone in this pursuit. A WIRED review of dozens of recruitment videos and job ads posted to Telegram shows people from around the world -- including Turkey, Russia, Ukraine, Belarus, and multiple Asian countries -- applying to be AI models or "real face" models in Cambodia and Southeast Asia. The region has become home to vast, industrialized scamming operations that hold thousands of human trafficking victims captive and force them to run online cryptocurrency investment and romance scams. As well as tricking people into working in scam compounds, these high-tech, multibillion-dollar criminal enterprises can also attract people into seeking "work" as part of the operations. "In the past year until today, they are also hiring people doing AI modeling," says Hieu Minh Ngo, a cybercrime investigator at the Vietnamese scam-fighting nonprofit ChongLuaDao. "They will give you the software so they can swap their face by using AI and they can do romance scams," he says.
Ngo, a reformed criminal hacker who now tracks scam compound activity and supports victims, identified around two dozen channels on Telegram that have some job postings for AI models in the region. Humanity Research Consultancy, an anti-human-trafficking organization, has also tracked people applying on Telegram for jobs in "known scam hub cities" as "models" and "AI models," including Angel's application. The rise of AI models comes as cybercriminals are broadly adopting AI and using face-swapping as part of their online scamming. Typically, fraudsters will use fake personas to contact potential victims on social media or messaging platforms. They will often use stolen images of celebrities or attractive men or women to entice a person into talking to them. Once they make contact, they will then bombard them with attention to help build up a relationship, before trying to get them to part with their cash. In some instances, multiple people may control the scammers' account and message the victim under a single fake persona. But if a potential victim asks for a video call during these interactions -- to check if the person they are speaking to is real, for instance -- that's when deepfake video calls and models who have their faces swapped can be used. Some Southeast Asian scam centers have dedicated "AI rooms" where the calls are made from. Job advertisements for AI models or "real models" reviewed by WIRED demand excessive working hours, offer little free time, and require a relentless schedule. The ads are usually posted by a channel administrator and don't include contact details or list who someone would specifically be working for. One recruitment post for an alleged six-month contract says the person will need to send photos daily, make video and voice calls, and create audio and video messages. "Approximately 100 video calls per day," the post says.

[2]

Another Dark Side of AI Deepfakes: The Rise of Video 'Model' Jobs Powering Online Scams

There's a new category of AI job that's gaining popularity among criminal recruiters. Recruitment listings for AI face "models" have surged within the past year, and seek applicants who can carry out financial scams via video chat. The models conducting the video chats typically appear as other people thanks to deepfake technology, so their real faces may never be seen. A Wired investigation found that within the past year alone, dozens of online recruitment channels have popped up on secure messaging app Telegram. These channels seek people -- many of them young women -- for "AI face model" jobs. The jobs vary, but typically entail making contact with a mark, then messaging them repeatedly to build up a rapport that leads to financial requests, in a practice known as "pig-butchering." Based on applications for the model roles, reviewed by Wired, some of the individuals know the kind of work they are applying for requires scamming others, and may even have past experience in it. Hotspots for recruiting include countries like Turkey, Russia, Ukraine, Belarus, Cambodia and multiple others in Asia and Southeast Asia. The scams are often multi-fold: targeting vulnerable workers into exploitative roles that help criminal enterprises extract billions from unsuspecting victims. The job listings in some cases contain veiled if not explicit references to crypto schemes, gold trading, and romance scams. They may offer applicants contracts of varying lengths, and require workers to send messages, share photos, and make audio and video calls. Ideal candidates speak multiple languages.

Dozens of recruitment channels on Telegram are seeking AI face models to conduct pig-butchering scams via deepfake video calls. Workers from Turkey, Russia, Ukraine, and Asia apply for roles requiring up to 100 video calls daily, using face-swapping technology to manipulate victims into cryptocurrency and romance scams across Southeast Asia.

AI Face Models Emerge as New Frontier in Online Financial Scams

A disturbing trend has emerged in the world of cybercrime: people actively applying to become AI face models for sophisticated scam operations. Angel, a 24-year-old woman from Uzbekistan, arrived in the Cambodian city of Sihanoukville ready to leverage her fluency in English, Chinese, Russian, and Turkish. But her linguistic talents weren't destined for conventional corporate work. Instead, she was applying to sit in front of a computer all day, making deepfake video calls to manipulate Americans in elaborate pig-butchering schemes [1].

Source: Inc.

Angel's application video reveals she already has one year of experience as an "AI model," highlighting how this exploitative industry has matured over recent months. A WIRED investigation uncovered dozens of recruitment videos and job advertisements posted to Telegram, showing applicants from Turkey, Russia, Ukraine, Belarus, and multiple Asian countries seeking these roles in Cambodia and Southeast Asia [1]. The region has become notorious for vast, industrialized scam compounds that hold thousands of human trafficking victims captive while forcing them to run cryptocurrency investment scams and romance scams.

How Recruitment for AI Models Operates on Telegram

Hieu Minh Ngo, a cybercrime investigator at the Vietnamese scam-fighting nonprofit ChongLuaDao, identified around two dozen channels on Telegram dedicated to these job postings. "In the past year until today, they are also hiring people doing AI modeling," Ngo explains. "They will give you the software so they can swap their face by using AI and they can do romance scams" [1]. Humanity Research Consultancy, an anti-human-trafficking organization, has independently tracked people applying on Telegram for jobs in known scam hub cities as models and AI face models.

The job advertisements paint a grim picture of working conditions. Recruiters demand excessive hours with little free time and relentless schedules. One posting for an alleged six-month contract specifies that workers must send photos daily, make video and voice calls, and create audio and video messages, with approximately 100 video calls per day [1]. These listings often contain veiled or explicit references to crypto schemes, gold trading, and romance scams, with ideal candidates speaking multiple languages [2].

Face-Swapping Technology Powers Deepfake Video Calls

The mechanics of these AI scams reveal how criminal organizations in Southeast Asia have industrialized deception. Fraudsters typically use stolen images of celebrities or attractive individuals to create fake personas on social media and messaging platforms. Once contact is established, they bombard potential victims with attention to build relationships before requesting money. When suspicious victims request video calls to verify authenticity, that's when AI deepfake technology and face-swapping technology come into play [1].

Source: Wired

Some Southeast Asian scam centers have established dedicated "AI rooms" specifically for conducting these deepfake video calls. Multiple people may control a single scammer account, messaging victims under one fake persona. The AI face models provide the human element needed to sustain the illusion during video interactions, with their real faces swapped in real-time to match the fake profile images victims have been shown [2].

The Double Exploitation of AI Scams

What makes this phenomenon particularly troubling is the multi-layered exploitation involved. Based on applications reviewed, some individuals applying for these roles understand they'll be scamming others and may even have past experience in it [2]. Yet the scams are often multi-fold: they lure vulnerable workers into exploitative roles that help criminal enterprises extract billions from unsuspecting victims.

While some people actively seek out this work, the broader context involves human trafficking and forced labor in scam compounds across the region. These high-tech, multibillion-dollar criminal enterprises both trick people into working in scam facilities and attract willing applicants seeking employment [1]. The rise of recruitment channels within the past year alone signals how quickly this industry is expanding, with applications requiring details like height and weight alongside language skills and prior AI modeling experience.

Summarized by

Navi
