Alibaba launches Qwen 3.5 small AI models for edge devices with offline capabilities

3 Sources

[1]

Alibaba launches Qwen 3.5 AI models for edge devices

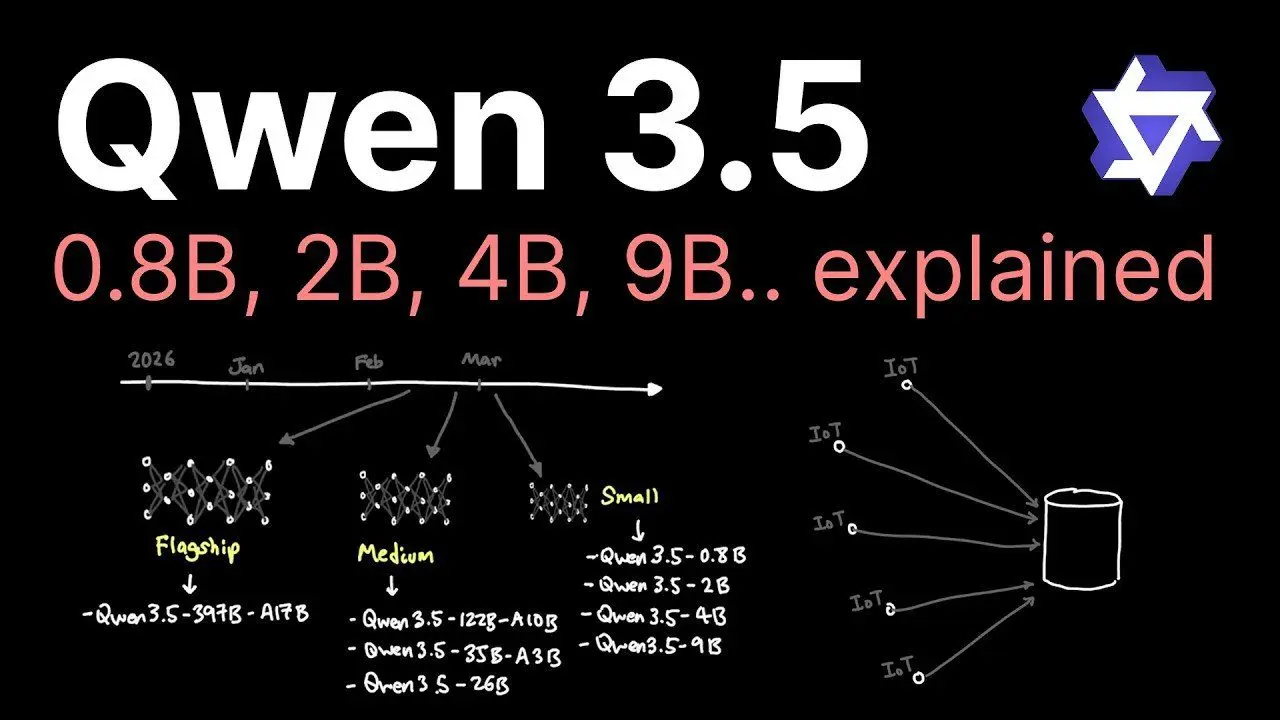

Alibaba launched the Qwen 3.5 series of artificial intelligence models optimized for edge devices. The new series focuses on smaller, efficient designs ranging from 800 million to 9 billion parameters, challenging the industry trend of massive centralized systems. This strategy contrasts with many AI labs prioritizing large-scale models for cloud deployment. The Qwen 3.5 series enables local computation on consumer-grade hardware, enhancing privacy by processing data locally and supporting offline functionality. The 800 million parameter model is optimized for lightweight applications, making it ideal for resource-constrained environments such as IoT devices. The 9 billion parameter model delivers high performance comparable to larger counterparts, excelling in benchmarks like MMLU for complex tasks. Innovations such as enhanced architecture, refined training techniques, and high-quality datasets allow the smaller models to achieve high performance. These advancements reduce hardware demands and increase accessibility for devices with limited capabilities, including smartphones and IoT systems. The series is particularly suited for IoT ecosystems, allowing tasks such as real-time data analysis, anomaly detection, and image recognition. By processing data directly on devices, these models reduce latency and improve responsiveness for time-sensitive tasks. Alibaba's focus on compact, versatile AI models positions it as a leader in privacy-focused and hardware-compatible solutions. This approach ensures that AI technology is accessible to a wider audience, including industries and consumers with limited computational resources. The Qwen 3.5 series builds on predecessors like Qwen 2 and Qwen 3, with advancements in training data quality and architectural design. Future developments may include even smaller models with enhanced multimodal capabilities and broader integration into consumer electronics.

[2]

Alibaba Qwen 3.5 Small Models: 0.8B & 2B Benchmarks and Edge Tests

Alibaba's Qwen 3.5 small models are compact AI systems designed to operate efficiently on edge devices, including older laptops and smartphones. According to Better Stack, these models feature parameter sizes of 0.8 billion and 2 billion, paired with a 262,000-token context window. This allows them to process extensive datasets, such as lengthy documents or complex codebases, while maintaining coherence. Additionally, their offline functionality supports users in environments with limited or no internet access, making them practical for resource-constrained scenarios.

You'll learn how these models perform on tasks like text summarization, object recognition and coding, as well as their results on benchmarks such as MMLU and OCR. The analysis also examines their compatibility with older hardware and identifies areas where they face challenges, such as advanced reasoning or handling nuanced vision tasks. This breakdown provides a detailed look at their strengths and limitations for edge-compatible AI applications.

Multimodal Capabilities in a Compact Design

Qwen 3.5's standout feature is its ability to handle multiple modalities (text, vision and coding) within a compact framework. Unlike traditional large-scale models that require significant computational resources, Qwen 3.5's 0.8B and 2B parameter models are optimized for offline use, making them ideal for environments with limited or no internet access.

The models' 262,000-token context window is a critical advantage, allowing them to process extensive datasets, such as lengthy documents or intricate codebases, in a single session. This capability is particularly useful for tasks like summarizing detailed overviews, analyzing large datasets, or debugging complex code. By maintaining a broad context, the models ensure that outputs remain coherent and relevant, even when dealing with substantial input sizes.
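As a rough illustration of what a 262,000-token window means in practice, the sketch below estimates whether a document would fit in a single session. The window size is the figure quoted above; the four-characters-per-token ratio, the reserved output budget, and the function names are assumptions made for illustration only, not properties of the Qwen tokenizer.

```python
# Rough check of whether a document fits in a 262,000-token context window.
# CHARS_PER_TOKEN is a crude assumed heuristic, not the model's tokenizer.

CONTEXT_WINDOW_TOKENS = 262_000  # figure quoted in the article
CHARS_PER_TOKEN = 4              # assumption; varies by tokenizer and language


def estimate_tokens(text: str) -> int:
    """Estimate token count from character length (ceiling division)."""
    return -(-len(text) // CHARS_PER_TOKEN)


def fits_in_context(text: str, reserved_for_output: int = 2_000) -> bool:
    """True if the estimated prompt leaves room for the reserved output tokens."""
    return estimate_tokens(text) + reserved_for_output <= CONTEXT_WINDOW_TOKENS


if __name__ == "__main__":
    document = "lorem ipsum " * 50_000  # 600,000 characters
    print(estimate_tokens(document), fits_in_context(document))
```

For anything beyond a back-of-the-envelope estimate, the count would need to come from the model's actual tokenizer, since token density differs considerably between prose, code and non-English text.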
Performance Benchmarks: Compact Models Delivering Big Results

Despite their small size, Qwen 3.5 models deliver competitive results across various benchmarks, demonstrating their efficiency and capability:

* Language Understanding: The 2B model achieved a score of 66.5 on the MMLU (Massive Multitask Language Understanding) benchmark, while the 0.8B model scored 42.3. These results rival larger models like Llama 2 (7B), highlighting the effectiveness of Qwen 3.5's design in handling complex language tasks.
* Vision Tasks: On OCR (Optical Character Recognition) benchmarks, the 2B model scored 85.4 and the 0.8B model achieved 79.1. These scores reflect their ability to recognize text and objects with reasonable accuracy, although performance varied depending on task complexity.

These benchmarks underscore the models' ability to compete with larger counterparts, particularly in tasks requiring moderate computational power. Their efficiency and compactness make them a practical choice for users seeking advanced AI capabilities without the need for high-end hardware.

Optimized for Edge Devices

One of the most compelling aspects of Qwen 3.5 is its compatibility with edge devices. Testing demonstrated that both the 0.8B and 2B models ran efficiently on devices such as an M2 MacBook Pro and an iPhone 14 Pro, delivering fast response times for tasks like text summarization, object recognition and basic coding. Even older devices, including legacy laptops and smartphones with limited processing power, were able to handle the models effectively.

This adaptability significantly broadens access to advanced AI technologies, allowing users with older or less powerful hardware to benefit from innovative capabilities. By broadening access to AI, Qwen 3.5 offers practical solutions for a wide range of applications, from personal productivity to educational tools.
Coding and Vision: Strengths and Areas for Improvement

Qwen 3.5's coding capabilities were evaluated on a variety of programming tasks. The 0.8B model produced functional but limited outputs, often encountering logical errors or design constraints. In contrast, the 2B model demonstrated greater accuracy and versatility, generating more reliable code snippets. However, challenges such as infinite loops and slower task completion occasionally arose, indicating areas where further refinement is needed.

In vision-related tasks, the models excelled at recognizing common objects and extracting text from images. For example, they successfully identified everyday items and read text from photos with high accuracy. However, their performance was less consistent in more nuanced scenarios, such as distinguishing between visually similar objects or interpreting multilingual text. These limitations highlight the trade-offs inherent in compact AI design, particularly when balancing size with functionality.

Challenges and Limitations

While Qwen 3.5 models excel in many areas, they are not without challenges. Key limitations include:

* Complex Reasoning: The models struggled with tasks requiring advanced reasoning, abstract thinking, or specialized domain knowledge.
* Technical Issues: Problems such as hallucinations, logical inconsistencies and infinite loops were observed, particularly with the 2B model during more demanding tasks.
* Design Trade-offs: The compact design, while efficient, limits the models' ability to handle highly complex or resource-intensive scenarios, making them less suitable for certain advanced applications.

These challenges underscore the inherent trade-offs in designing compact AI systems. While the models perform admirably in many areas, their limitations highlight the need for continued innovation to address these shortcomings and expand their applicability.

Future Potential and Development

The future of Qwen 3.5 and its successors remains uncertain. Reports of organizational restructuring within Alibaba's Qwen team suggest that this release may mark the final major development from the group for the foreseeable future. Despite this uncertainty, Qwen 3.5 represents a significant achievement in compact AI design, showcasing the potential of small models to deliver high performance across a range of applications.

For now, Qwen 3.5 stands as a valuable tool for users seeking advanced AI capabilities in a compact, efficient package. Its ability to operate offline on edge devices, combined with its multimodal functionality, makes it a practical choice for diverse use cases. However, addressing its current limitations will require ongoing research and refinement, particularly in areas like complex reasoning and technical reliability.

As the field of artificial intelligence continues to evolve, Qwen 3.5 serves as both a milestone and a reminder of the challenges that remain in creating versatile, reliable and compact AI systems. Its development highlights the potential for innovation in compact AI, paving the way for future advancements that could further broaden access to innovative technology.

Media Credit: Better Stack

Disclosure: Some of our articles include affiliate links. If you buy something through one of these links, Geeky Gadgets may earn an affiliate commission. Learn about our Disclosure Policy.

[3]

Qwen 3.5 Small Expands On-Device AI to Phones and IoT with Offline Support

Alibaba's Qwen 3.5 series introduces a compelling shift in AI development by focusing on smaller, efficient models optimized for edge devices. As highlighted by Caleb Writes Code, these models range from 800 million to 9 billion parameters, offering a balance between compactness and performance. For instance, the 800 million parameter variant is tailored for lightweight applications like IoT devices, while the 9 billion parameter model excels in complex tasks, rivaling larger systems in benchmarks such as MMLU. This approach prioritizes local computation, enhancing privacy and allowing offline functionality, which is particularly advantageous for consumer-grade hardware and resource-constrained environments.

In this overview, you'll explore how Qwen 3.5 achieves high performance through innovations like refined training techniques and enhanced architecture. Key takeaways include the practical benefits of deploying these models in IoT ecosystems, such as real-time data analysis and anomaly detection, and their adaptability to diverse use cases, from smartphones to industrial applications. Whether you're interested in privacy-focused AI or scalable solutions for edge computing, this breakdown offers insights into how smaller models can meet the growing demands of modern AI deployment.

The Qwen 3.5 series offers a diverse range of model sizes, catering to various computational needs without compromising on performance. Unlike many AI labs that prioritize developing massive models, Alibaba adopts an inclusive strategy, making sure that smaller models remain powerful and versatile. This flexibility allows developers to select models that align with specific use cases, from high-performance computing to basic AI tasks on low-power devices. By addressing a broad spectrum of needs, Qwen 3.5 ensures that AI technology is accessible to a wider audience, including industries and consumers with limited computational resources.
The efficiency of Qwen 3.5 stems from several key advancements that enable smaller models to achieve results traditionally associated with larger systems, including enhanced architecture, refined training techniques and high-quality datasets. For instance, the 9 billion parameter model excels in natural language understanding and multimodal tasks, rivaling larger predecessors while requiring fewer computational resources. These innovations not only reduce hardware demands but also make AI more accessible to devices with limited capabilities, such as smartphones and IoT systems.

A defining feature of Qwen 3.5 is its optimization for edge devices, allowing local computation on consumer-grade hardware. This approach offers several distinct advantages, including enhanced privacy through local data processing and support for offline functionality. For example, the 9 billion parameter model can power advanced AI features on a smartphone, while the 800 million parameter model is well-suited for basic AI tasks on IoT devices. This adaptability ensures that Qwen 3.5 can meet the needs of a wide range of users, from individual consumers to industrial applications. By allowing real-time, localized AI processing, these models enhance responsiveness and reduce latency, making them particularly valuable for time-sensitive tasks.

The Qwen 3.5 series highlights the growing role of AI in IoT and edge computing, where smaller, efficient models are essential for on-device computation. The 800 million parameter variant, in particular, is well-suited for IoT ecosystems, allowing tasks such as real-time data analysis, anomaly detection and image recognition. By processing data directly on devices, these models reduce latency and improve responsiveness, making them ideal for applications that require immediate action. Additionally, the inclusion of multimodal capabilities, such as handling both text and images, broadens the scope of potential use cases. For instance, a smart home system powered by Qwen 3.5 could seamlessly integrate voice commands with camera feeds, creating a more intuitive and efficient user experience.
Alibaba's focus on smaller, efficient models positions it to address the growing demand for AI in edge computing and privacy-focused applications. This strategy contrasts with the approach of many AI labs, which prioritize large-scale models designed for centralized cloud deployment. The Qwen 3.5 series demonstrates that smaller models can deliver comparable performance while offering unique advantages in privacy, latency and offline use. As the AI landscape evolves, these models could redefine industry standards, balancing efficiency with capability. By prioritizing accessibility and versatility, Qwen 3.5 sets a new benchmark for AI deployment, particularly in environments where hardware limitations and privacy concerns are paramount.

The Qwen 3.5 series builds on the foundation established by its predecessors, such as Qwen 2 and Qwen 3. These earlier models laid the groundwork for Alibaba's commitment to creating versatile and accessible AI solutions. Over time, advancements in training data quality, stabilization techniques and architectural design have enabled significant performance improvements. For example, the intelligence density of Qwen 3.5 surpasses that of its predecessors, allowing smaller models to achieve results that were previously unattainable. This progression underscores Alibaba's dedication to pushing the boundaries of what compact AI models can accomplish, making sure that they remain competitive in an increasingly demanding market.

The Qwen 3.5 series sets the stage for future advancements in AI, as competition intensifies with upcoming releases from other labs, such as OpenAI's GPT-5.3. Alibaba's focus on quantization and compact models positions it to address a wide range of use cases, from consumer devices to industrial IoT systems. Potential future developments may include even smaller models with enhanced multimodal capabilities and broader integration into consumer electronics. These innovations could further expand the possibilities for AI at the edge, making it more accessible, versatile and impactful than ever before. By continuing to prioritize efficiency and adaptability, Alibaba is poised to play a leading role in shaping the future of AI deployment.

Alibaba unveiled its Qwen 3.5 series featuring compact AI models ranging from 800 million to 9 billion parameters, optimized for edge devices like smartphones and IoT systems. The models enable local computation with enhanced privacy and offline functionality, challenging the industry trend of massive cloud-based systems while delivering competitive performance on benchmarks like MMLU.

Alibaba Shifts Focus to Compact AI Models for Edge Computing

Alibaba launched the Qwen 3.5 series of artificial intelligence models, introducing a strategic pivot toward smaller, efficient designs optimized for edge devices [1]. The new series features Qwen 3.5 small AI models ranging from 800 million to 9 billion parameters, contrasting sharply with the industry trend of developing massive centralized systems for cloud-based AI deployment [3]. This approach enables local computation on consumer-grade hardware, addressing growing concerns about data privacy while supporting offline functionality in resource-constrained environments [1].

Source: Geeky Gadgets

The 800 million parameter model targets lightweight applications, making it ideal for IoT devices with limited processing power. Meanwhile, the 9 billion parameter model delivers high performance comparable to larger counterparts, excelling in AI benchmarks like MMLU for complex language understanding tasks [1]. Testing demonstrated that both the 0.8B and 2B models ran efficiently on devices including an M2 MacBook Pro and an iPhone 14 Pro, with even older legacy laptops and smartphones handling the models effectively [2].
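To give a rough sense of why these parameter counts matter for consumer hardware, the sketch below estimates the memory needed just to hold the model weights at a few numeric precisions. The parameter counts come from the article; the precisions (16-bit, 8-bit, 4-bit) and the function name are illustrative assumptions, and the figures ignore activations, cached context and runtime overhead, so real usage would be higher.

```python
# Rough weight-memory estimate: parameters x bytes per parameter.
# Parameter counts are from the article; precisions are illustrative assumptions.

PARAM_COUNTS = {"0.8B": 0.8e9, "2B": 2e9, "9B": 9e9}
BYTES_PER_PARAM = {"fp16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_memory_gib(num_params: float, bytes_per_param: float) -> float:
    """Return the approximate size of the weights alone, in GiB."""
    return num_params * bytes_per_param / 2**30


if __name__ == "__main__":
    for name, params in PARAM_COUNTS.items():
        row = ", ".join(
            f"{precision}: {weight_memory_gib(params, width):.2f} GiB"
            for precision, width in BYTES_PER_PARAM.items()
        )
        print(f"{name} -> {row}")
```

Under these assumptions the 0.8B model's weights come to roughly 1.5 GiB at 16-bit precision, which is consistent with the article's claim that the smallest variant suits devices with limited memory.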

Impressive Performance on AI Benchmarks Despite Compact Size

Despite their compact design, Qwen 3.5 models deliver competitive results across various performance metrics. The 2B model achieved a score of 66.5 on the MMLU benchmark, while the 0.8B model scored 42.3, rivaling larger models like Llama 2 with 7 billion parameters [2]. On OCR tasks, the 2B model scored 85.4 and the 0.8B model achieved 79.1, demonstrating reasonable accuracy in text and image recognition [2].

A standout feature is the 262,000-token context window, which allows the models to process extensive datasets such as lengthy documents or complex codebases while maintaining coherence [2]. This capability proves particularly valuable for tasks like summarizing detailed overviews, analyzing large datasets, or debugging complex code in a single session. Innovations such as enhanced architecture, refined training techniques, and high-quality datasets enable these smaller models to achieve performance traditionally associated with larger systems [1].

Multimodal Capabilities Enable Diverse Applications

The Qwen 3.5 series showcases multimodal capabilities, handling text, vision, and coding tasks within a compact framework [2]. The models excelled at recognizing common objects and extracting text from images with high accuracy, though performance varied in more nuanced scenarios such as distinguishing visually similar objects or interpreting multilingual text [2]. In coding evaluations, the 2B model demonstrated greater accuracy and versatility than the 0.8B variant, generating more reliable code snippets, though challenges like infinite loops occasionally arose [2].

Enhanced Privacy Through Local Data Processing

By processing data directly on edge devices, Qwen 3.5 addresses critical privacy concerns that plague cloud-based AI systems. Local data processing means sensitive information never leaves the device, providing enhanced privacy for users and organizations handling confidential data [3]. This approach also reduces latency and improves responsiveness for time-sensitive tasks, making the models particularly valuable for real-time applications [1].

Strategic Positioning for IoT and Consumer Electronics

The series proves particularly suited for IoT ecosystems, allowing tasks such as real-time data analysis, anomaly detection, and image recognition directly on devices [1]. The 800 million parameter variant integrates seamlessly into smart home systems, wearables, and industrial sensors, while the larger models power advanced AI features on smartphones and consumer electronics [3]. This adaptability ensures AI technology becomes accessible to a wider audience, including industries and consumers with limited computational resources [1].

Alibaba's focus on compact, versatile AI models positions it as a leader in privacy-focused and hardware-compatible solutions, contrasting with competitors prioritizing large-scale models for centralized deployment. The Qwen 3.5 series builds on predecessors like Qwen 2 and Qwen 3, with advancements in training data quality and architectural design [1]. Future developments may include even smaller models with enhanced multimodal capabilities and broader integration into consumer electronics, potentially redefining industry standards for on-device AI deployment [1].

As demand grows for AI solutions that balance performance with accessibility, Qwen 3.5 demonstrates that efficient on-device deployment can deliver competitive results without requiring high-end hardware or constant internet connectivity.

Summarized by Navi
