AMD Ryzen AI Embedded P100 Series Targets Edge AI and Industrial Applications with Zen 5

2 Sources

[1]

AMD Ryzen AI Embedded P100 Series Debuts: Zen 5, RDNA 3.5 and XDNA 2

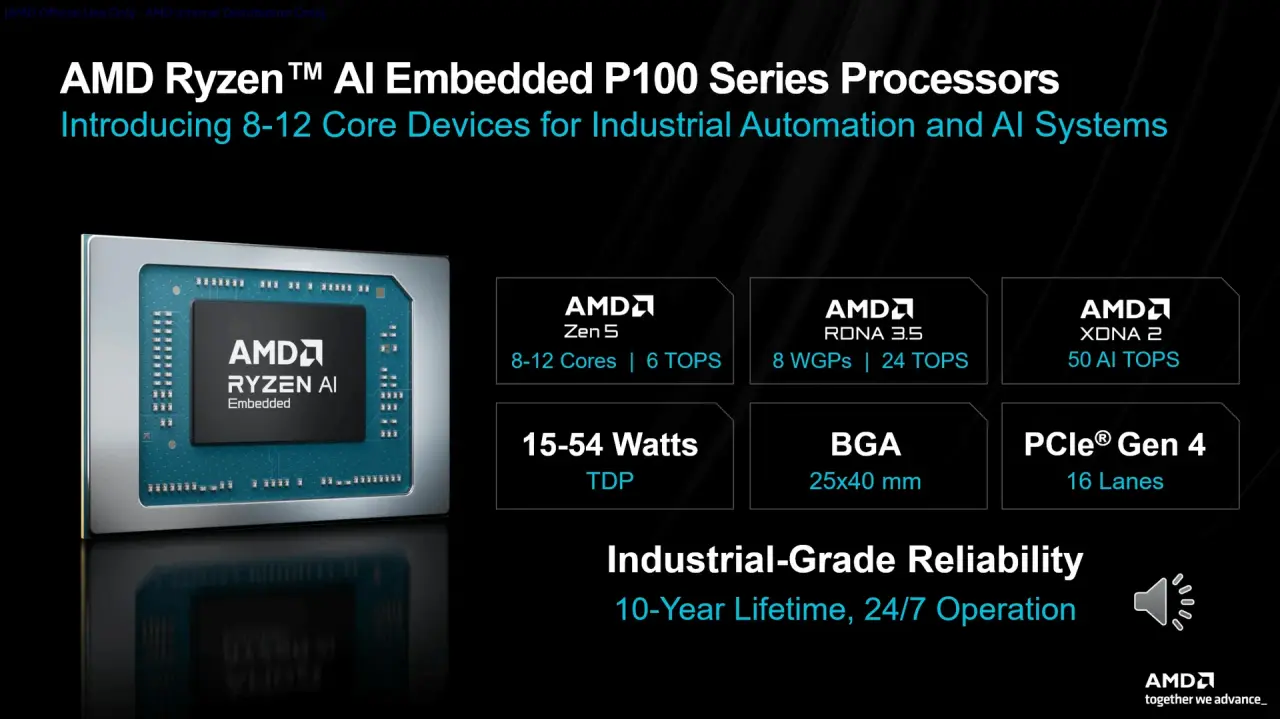

AMD is widening its embedded roadmap in a way that feels very deliberate, very timely, and frankly rather aggressive. The Ryzen AI Embedded P100 series is no longer just about compact edge compute with modest core counts and respectable graphics. With the arrival of the new 8-core through 12-core parts, AMD is taking the platform deeper into industrial automation, machine vision, smart edge inference, digital signage, HMI, robotics, and systems that need to balance CPU throughput, graphics acceleration, and low-latency AI in one compact footprint.

This is not merely another embedded processor family with long-life support and ruggedized branding wrapped around it. The P100 series now looks like a properly scalable embedded platform, stretching from smaller 4-core and 6-core designs all the way up to 12 Zen 5 cores, while keeping the same general package concept, configurable power envelope, and a strong emphasis on pin and package compatibility across the family. For system integrators and industrial OEMs, that matters far more than glossy marketing slogans. It means a single board design can potentially address several performance tiers, reducing validation work, simplifying deployment, and shortening product cycles.

Architecturally, AMD is leaning into a monolithic heterogeneous design. In plain English, that means the CPU, graphics engine, and NPU are all sitting together in one tightly integrated chip rather than being bolted together as an awkward compromise. The CPU side is based on Zen 5, which immediately gives the platform a serious uplift in scalar and multi-threaded performance. AMD is claiming up to 39 percent greater multi-threaded performance versus the previous-generation Ryzen Embedded 8000 class part in its internal comparison material, and even the single-threaded delta looks healthy. For embedded markets, where platform longevity often matters more than benchmark chest-thumping, that is still a meaningful jump.
More performance per watt means more flexibility in passive systems, smaller enclosures, or simply more headroom for concurrent workloads.

The graphics portion is based on RDNA 3.5, scaling up to eight work group processors. That gives the higher-end chips genuine multimedia and parallel compute usefulness rather than basic display duty. These parts are clearly intended to do more than just light desktop rendering. AMD positions the iGPU for burst and event-driven AI workloads, computer vision acceleration, graphics-heavy HMIs, and high-resolution multi-display deployments. Support for up to four 4K120 displays or dual 8K120 output underlines that this is not an afterthought. In industrial control rooms, medical imaging terminals, smart retail, transportation hubs, and advanced signage, display capability remains a big selling point.

Then there is the NPU, based on XDNA 2, offering up to 50 AI TOPS. That is the part of the equation that makes this family especially relevant in 2026. AMD is clearly pitching a hybrid AI execution model where always-on, low-power inference can sit on the NPU, while heavier or more burst-oriented work can be passed to the graphics engine. This split is actually sensible. Voice trigger models, always-on object detection, and persistent sensor analysis are ideal candidates for the NPU, while more demanding visual reasoning, larger model handling, or transient inference bursts can land on the iGPU. The result is a more efficient allocation of silicon resources and, in theory, better responsiveness for edge workloads where time-to-first-token or low-latency action matters.

At the top end, the P185 stands out as the headline part. It brings 12 Zen 5 cores, a peak frequency of 5.1 GHz, 24 MB of L3 cache, eight RDNA 3.5 work group processors running up to 2.9 GHz, and the full 50 TOPS NPU capability. That is an impressive specification set for an embedded BGA processor in a 25 x 40 mm package.
The P174 and P164 sit just below it with 10-core and 8-core configurations, while the P132 and P121 continue to serve lower-power or more cost-sensitive deployments with 6-core and 4-core options. The scalability across the stack is one of the strongest arguments in favor of this platform.

Memory and I/O support also look well judged for embedded deployments. AMD lists DDR5 support at 5600 MT/s with ECC, along with LPDDR5X support up to 8533 MT/s in certain configurations. Connectivity includes up to 16 lanes of PCIe Gen 4, which should be enough for NVMe storage, frame grabbers, AI accelerators, high-speed networking, or specialized co-processors without feeling overly constrained. USB 4 is present on much of the lineup, accompanied by USB 3.x and USB 2.0 connectivity. On select models there is also integrated 10GbE with TSN support, which is exactly the kind of feature industrial networking and deterministic control environments like to see.

Power is another strong point. The series spans from 15 watts up to 54 watts depending on configuration, with nominal values around 28 watts for much of the lineup and a 45-watt nominal target for the P132a automotive-grade model. That gives board vendors room to tune for fanless edge boxes, compact DIN-rail systems, panel PCs, or more performance-oriented embedded controllers. Crucially, AMD is not sacrificing rugged credentials in the process. Industrial-temperature variants run from minus 40 to 105 degrees Celsius junction temperature, and AMD is advertising industrial-grade reliability with extended availability and 24/7 operation in mind. Automotive-grade options are also part of the wider family, which broadens the platform's appeal into transportation, autonomy, and machine-based vision systems.

| Specification | P121 | P132 | P164 | P174 | P185 |
| --- | --- | --- | --- | --- | --- |
| CPU Architecture | AMD Zen 5 | AMD Zen 5 | AMD Zen 5 | AMD Zen 5 | AMD Zen 5 |
| CPU Cores / Threads | 4 / 8 | 6 / 12 | 8 / 16 | 10 / 20 | 12 / 24 |
| Max CPU Frequency | 4.4 GHz | 4.5 GHz | 5.0 GHz | 5.0 GHz | 5.1 GHz |
| L3 Cache | 8 MB | 8 MB | 16 MB | 24 MB | 24 MB |
| GPU Architecture | AMD RDNA 3.5 | AMD RDNA 3.5 | AMD RDNA 3.5 | AMD RDNA 3.5 | AMD RDNA 3.5 |
| GPU Compute Units / WGPs | 1 WGP | 2 WGPs | 6 WGPs | 6 WGPs | 8 WGPs |
| GPU Max Frequency | 2.7 GHz | 2.8 GHz | 2.8 GHz | 2.8 GHz | 2.9 GHz |
| NPU Architecture | AMD XDNA 2 | AMD XDNA 2 | AMD XDNA 2 | AMD XDNA 2 | AMD XDNA 2 |
| NPU Performance | 30 TOPS | 50 TOPS | 50 TOPS | 50 TOPS | 50 TOPS |
| Total System AI Performance | Up to 60 TOPS | Up to 80 TOPS | Up to 80 TOPS | Up to 80 TOPS | Up to 80 TOPS |
| Memory Support | DDR5-5600, LPDDR5X-7500 | DDR5-5600, LPDDR5X-8000 | DDR5-5600, LPDDR5X-8533 | DDR5-5600, LPDDR5X-8533 | DDR5-5600, LPDDR5X-8533 |
| ECC Support | Yes | Yes | Yes | Yes | Yes |
| PCIe Support | PCIe Gen 4, up to 16 lanes | PCIe Gen 4, up to 16 lanes | PCIe Gen 4, up to 16 lanes | PCIe Gen 4, up to 16 lanes | PCIe Gen 4, up to 16 lanes |
| Display Support | Up to 4x 4K120 / 2x 8K120 | Up to 4x 4K120 / 2x 8K120 | Up to 4x 4K120 / 2x 8K120 | Up to 4x 4K120 / 2x 8K120 | Up to 4x 4K120 / 2x 8K120 |
| USB | 2x USB4 + additional USB 3.x / USB 2.0 | 2x USB4 + additional USB 3.x / USB 2.0 | 2x USB4 + additional USB 3.x / USB 2.0 | 2x USB4 + additional USB 3.x / USB 2.0 | 2x USB4 + additional USB 3.x / USB 2.0 |
| 10GbE with TSN | 2 cores | 2 cores | N/A | N/A | N/A |
| TDP Range | 15-54 W | 15-54 W | 15-54 W | 15-54 W | 15-54 W |
| Package | 25 x 40 mm BGA | 25 x 40 mm BGA | 25 x 40 mm BGA | 25 x 40 mm BGA | 25 x 40 mm BGA |
| Industrial Reliability | 24/7 operation, up to 10-year lifecycle | 24/7 operation, up to 10-year lifecycle | 24/7 operation, up to 10-year lifecycle | 24/7 operation, up to 10-year lifecycle | 24/7 operation, up to 10-year lifecycle |

The other important angle is product timing. Silicon samples and tools are available now, production is slated for the third quarter of 2026, and customer reference boards are expected in the second half of 2026.
That suggests AMD is not merely seeding a future roadmap slide. It is actively trying to get design wins lined up for the next wave of intelligent industrial hardware. From a market perspective, the Ryzen AI Embedded P100 series feels like AMD moving its embedded strategy beyond incremental refreshes. The company is combining modern Zen 5 compute, competent RDNA graphics, and meaningful NPU acceleration in a single embedded platform that can scale from relatively compact devices to far more ambitious industrial systems. That matters because the edge is changing. Customers no longer want a simple controller with a display output. They want local inference, real-time analytics, richer visual interfaces, sensor fusion, and long deployment lifecycles without throwing discrete components at every problem.

[2]

AMD Introduces Scalable Ryzen AI Embedded Chips for Edge and Industrial Applications

Compared with the prior-generation AMD Ryzen™ Embedded 8000 Series, the P100 Series is expected to provide up to 39% higher multithreaded performance and up to 2.1x higher total system TOPS. The new processors deliver exceptional AI performance-per-watt and support almost twice the number of virtual machines and larger large language models, like Llama3.2-Vision 11B, than the existing P100 Series to enable more advanced AI and mixed workloads.

ROCm Software Support and Virtualized Reference Stack

Support for the AMD ROCm open software ecosystem brings a proven, open-source AI software stack to embedded applications. Developers can run standard AI frameworks while relying on open-source compilers, runtimes, and libraries, all while having immediate access to embedded-ready models without rewriting code. At the programming level, ROCm software uses the open-source Heterogeneous-computing Interface for Portability (HIP), decoupling GPU programming from the hardware and eliminating vendor lock-in between the software stack and the hardware.

The tightly integrated CPU, GPU, and NPU architecture enables efficient workload partitioning and predictable latency under mixed workloads, while the use of familiar frameworks and software stacks helps simplify and streamline development and deployment across broad use cases. This level of integration enables advanced compute and graphics capabilities without the need for additional external components, making it easier for OEMs and system integrators to design scalable platforms.
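
To make the "standard AI frameworks without rewriting code" point concrete, here is a minimal Python sketch of how a script might detect a ROCm build of PyTorch. It assumes PyTorch is the framework in use and that a ROCm build exists for the target board, neither of which the source confirms; the helper name `describe_torch_backend` is hypothetical. ROCm builds of PyTorch expose the HIP device through the usual `torch.cuda` API, which is why existing GPU code typically runs unchanged.

```python
def describe_torch_backend() -> str:
    """Report which GPU backend the installed PyTorch build targets.

    Hypothetical helper for illustration; the article does not name
    PyTorch or any other specific framework.
    """
    try:
        import torch
    except ImportError:
        return "pytorch-not-installed"

    # ROCm builds set torch.version.hip to a version string;
    # other builds leave it as None.
    if getattr(torch.version, "hip", None):
        # On ROCm builds the HIP device is reached through torch.cuda,
        # so code written against torch.cuda needs no changes.
        return "rocm-gpu" if torch.cuda.is_available() else "rocm-no-device"
    if torch.cuda.is_available():
        return "cuda-gpu"
    return "cpu-only"


print(describe_torch_backend())
```

A deployment script could branch on the returned string to decide whether to load a GPU-targeted model or fall back to the CPU.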

AMD expands its Ryzen AI Embedded P100 series with processors featuring up to 12 Zen 5 cores, RDNA 3.5 graphics, and an NPU rated at up to 50 AI TOPS for edge AI and industrial applications. The new chips deliver up to 39% higher multithreaded performance than the Ryzen Embedded 8000 Series and support advanced workloads including Llama3.2-Vision 11B, while support for the open-source ROCm AI software stack is intended to simplify deployment.

AMD Scales Ryzen AI Embedded Platform for Edge AI and Industrial Deployments

AMD is expanding its Ryzen AI Embedded P100 series with new processors designed for edge AI and industrial applications that demand balanced CPU performance, graphics acceleration, and low-latency AI inference [1]. The lineup now spans 4-core to 12-core configurations, all built on a monolithic heterogeneous design that integrates the CPU, graphics, and NPU in a compact 25 x 40 mm BGA package [1]. This scalability matters for system integrators and OEMs working on machine vision, robotics, smart edge inference, digital signage, and HMI systems, because a single board design can potentially address multiple performance tiers with less revalidation [1].

Source: DT

Performance Gains Through Zen 5 Architecture

The Ryzen AI Embedded P100 series uses Zen 5 cores to deliver up to 39% higher multithreaded performance than the previous Ryzen Embedded 8000 Series [2]. The flagship P185 has 12 Zen 5 cores running at up to 5.1 GHz with 24 MB of L3 cache, while the P174 and P164 offer 10-core and 8-core configurations respectively [1]. In embedded applications, where platform longevity typically outweighs benchmark performance, the performance-per-watt improvement still allows more flexibility in passively cooled systems, smaller enclosures, and greater headroom for concurrent workloads [1].

Hybrid AI Execution with XDNA 2 NPU and RDNA 3.5 Graphics

AMD's approach to compact edge compute centers on a hybrid AI execution model that allocates workloads between the NPU and iGPU based on power and latency requirements [1]. The XDNA 2-based NPU delivers up to 50 AI TOPS for always-on, low-power inference tasks such as voice triggers, persistent object detection, and continuous sensor analysis, while the RDNA 3.5 graphics engine, with up to eight work group processors running at up to 2.9 GHz, handles burst-oriented AI workloads and visual reasoning [1]. AMD expects up to 2.1x higher total system AI TOPS than the prior-generation Ryzen Embedded 8000 Series [2]. The iGPU also supports up to four 4K120 displays or dual 8K120 output, addressing requirements for industrial control rooms, medical imaging terminals, and transportation hubs [1].
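
The NPU/iGPU split described above can be sketched as a simple placement policy. This is an illustrative sketch only: the `Workload` fields, the `pick_engine` function, and the decision rules are assumptions chosen to mirror the article's description, not AMD's scheduler or any real API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Workload:
    """Hypothetical description of an edge AI task (not an AMD data type)."""
    name: str
    always_on: bool  # runs continuously, e.g. a voice trigger
    bursty: bool     # short, event-driven spikes of inference
    heavy: bool      # larger models or demanding visual reasoning


def pick_engine(w: Workload) -> str:
    """Return "npu" or "igpu" following the split the article describes."""
    # Always-on, low-power inference stays on the NPU unless it is heavy.
    if w.always_on and not w.heavy:
        return "npu"
    # Heavier or burst-oriented work is passed to the graphics engine.
    if w.heavy or w.bursty:
        return "igpu"
    # Assumption: anything else defaults to the low-power NPU.
    return "npu"


tasks = [
    Workload("voice-trigger", always_on=True, bursty=False, heavy=False),
    Workload("object-detection", always_on=True, bursty=False, heavy=False),
    Workload("visual-reasoning", always_on=False, bursty=True, heavy=True),
]
for task in tasks:
    print(f"{task.name} -> {pick_engine(task)}")
```

A real deployment would base the decision on measured power and latency rather than three boolean flags, but the structure of the decision is the same.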

Source: Guru3D

ROCm Support Brings Open-Source AI Software Stack to Embedded Applications

AMD is bringing its ROCm open-source AI software stack to the Ryzen AI Embedded platform, allowing developers to run standard AI frameworks and use embedded-ready models without rewriting code [2]. At the programming level, ROCm uses the open-source Heterogeneous-computing Interface for Portability (HIP), which AMD says decouples GPU programming from the hardware and avoids vendor lock-in [2]. The new processors support nearly twice as many virtual machines as the existing P100 Series chips and can run larger language models, such as Llama3.2-Vision 11B [2]. The tightly integrated CPU, GPU, and NPU architecture enables efficient workload partitioning and predictable latency under mixed workloads, simplifying development and deployment for system integrators [2].

Industrial-Grade Connectivity and Memory Support

The P100 series supports DDR5 at 5600 MT/s with ECC and LPDDR5X at up to 8533 MT/s in certain configurations [1]. Connectivity options include up to 16 lanes of PCIe Gen 4 for NVMe storage, frame grabbers, AI accelerators, and high-speed networking, along with USB 4 support across much of the lineup [1]. Select models feature integrated 10GbE with Time-Sensitive Networking (TSN) support, addressing requirements for industrial networking and deterministic control environments [1]. Configurable power envelopes span 15 watts to 54 watts, giving OEMs flexibility in thermal design [1]. Watch for adoption in automation systems requiring real-time inference at the edge, as the combination of deterministic networking, ECC memory, and hybrid AI execution positions these chips for safety-critical and time-sensitive industrial applications where reliability matters as much as raw performance.
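
For a sense of what "up to 16 lanes of PCIe Gen 4" provides, the sketch below computes raw per-direction link bandwidth. The 16 GT/s signalling rate and 128b/130b line encoding are standard PCIe 4.0 figures. The lane split across devices is a hypothetical example, not a configuration documented for these parts, and the numbers exclude packet and protocol overhead.

```python
PCIE_GEN4_GT_PER_S = 16.0        # PCIe 4.0 signalling rate per lane, in GT/s
ENCODING_EFFICIENCY = 128 / 130  # 128b/130b line encoding used by Gen 3 and later


def pcie_gen4_gb_per_s(lanes: int) -> float:
    """Raw one-direction bandwidth in GB/s for a PCIe Gen 4 link."""
    if lanes < 1:
        raise ValueError("lanes must be a positive integer")
    # GT/s * encoding efficiency gives usable Gbit/s per lane; /8 converts to GB/s.
    return lanes * PCIE_GEN4_GT_PER_S * ENCODING_EFFICIENCY / 8


# Hypothetical lane budget for an edge box; actual bifurcation options
# depend on the specific processor and board design.
lane_plan = {"nvme_ssd": 4, "frame_grabber": 4, "network_or_accelerator": 8}
assert sum(lane_plan.values()) <= 16

for device, lanes in lane_plan.items():
    print(f"{device}: x{lanes}, about {pcie_gen4_gb_per_s(lanes):.1f} GB/s per direction")
print(f"all 16 lanes: about {pcie_gen4_gb_per_s(16):.1f} GB/s per direction")
```

Roughly 2 GB/s per lane in each direction is the usual rule of thumb, so a x4 NVMe drive and a x4 frame grabber still leave half of the lane budget free in this example.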

Summarized by

Navi
