Brain Implants Decode Inner Speech: Medical Breakthrough Raises Ethical Concerns

2 Sources

[1]

Brain implants that read minds: a medical miracle raises new ethical questions

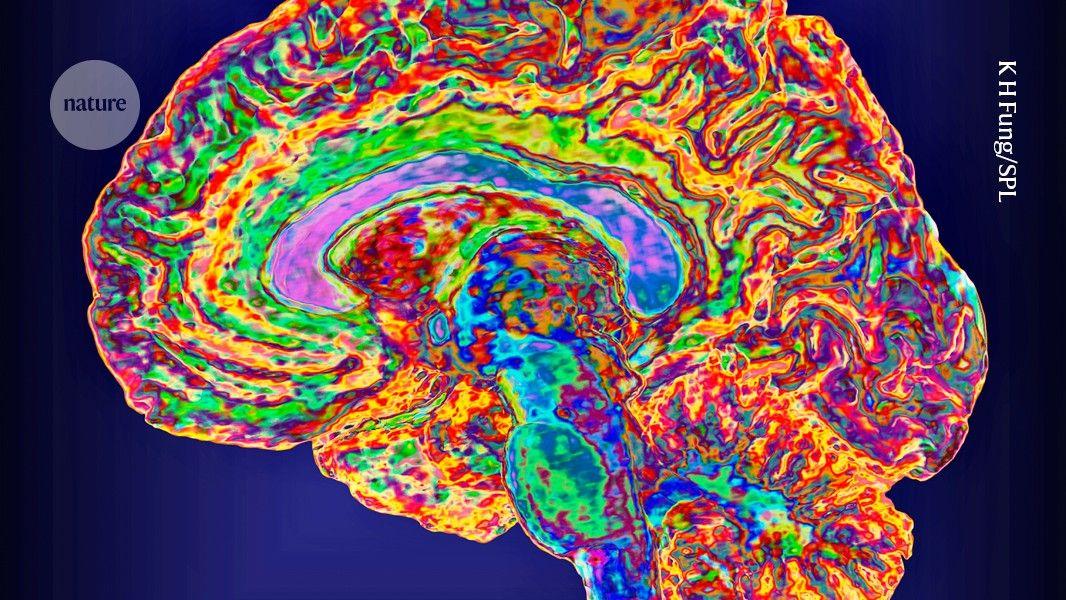

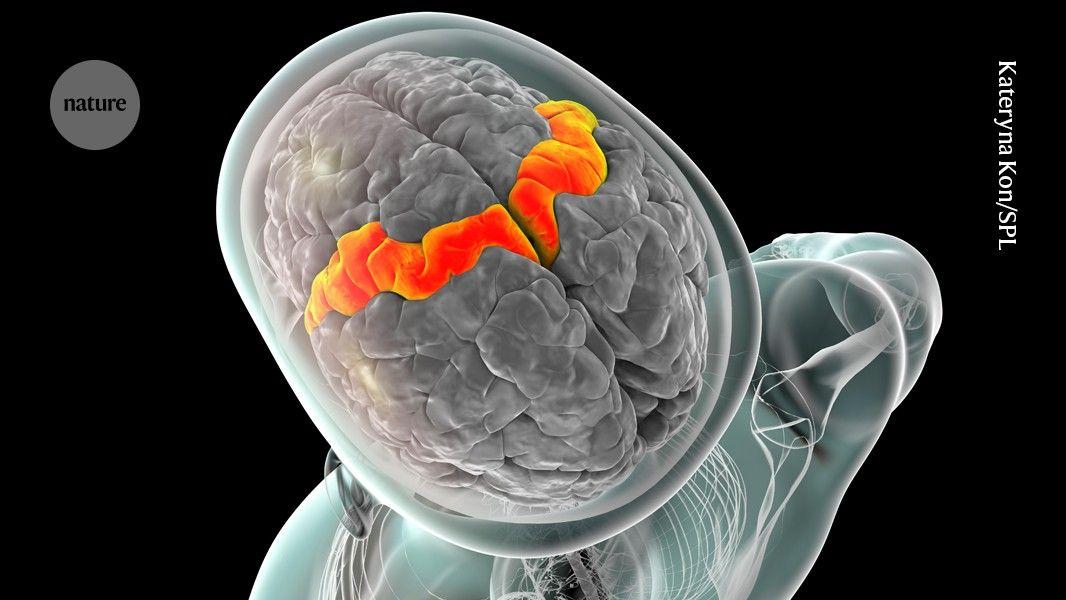

For the first time, researchers have succeeded in translating silent thoughts in real time using a brain implant coupled with artificial intelligence. This technology promises to offer a new form of communication to paralysed people. But it also raises consent and privacy concerns.

It's a power associated with fictional superheroes, not the stuff of real life. But the capacity to read minds via direct neural interfaces, called brain-computer interfaces (BCIs), has advanced by leaps and bounds in recent years. A recent Stanford University study has made it possible to directly decode inner speech, or what a person thinks they are saying, without gestures or sound.

BCIs work by connecting a person's nervous system to implanted electrodes capable of interpreting brain activity, allowing them to perform actions - such as using a computer or moving a prosthetic hand - using only their thoughts. The technology could offer people with disabilities a renewed sense of autonomy.

Researchers were previously able to give a voice to people unable to speak by capturing signals in the motor cortex of the brain as they attempted to move their mouths, tongues, lips and vocal cords. But the new Stanford University study has managed to bypass physical speech.

"If we could decode that [inner speech], then that could bypass the physical effort," Stanford neuroscientist and lead author of the new study Erin Kunz told The New York Times. "It would be less tiring, so they could use the system for longer."

Published August 21 in the scientific journal Cell, the study's findings could make it even easier for people who cannot speak to communicate: The system showed a 74% accuracy rate in real time, an unprecedented performance for this type of technology.

But decoding our inner voice is not without risks. During trials, the implant sometimes picked up unexpected signals, requiring the implementation of a mental password to protect certain thoughts.

Decoded in real time

"This is the first time we've managed to understand what brain activity looks like when you just think about speaking," Kunz told the Financial Times.

Using multi-unit recordings from four participants, researchers found that inner speech is strongly represented in the motor cortex, a part of the brain responsible for speech, and that imagined sentences can be decoded in real time.

To achieve this, the team implanted microelectrodes in the motor cortex to record neural signals. The participants in this study were severely paralysed, either by amyotrophic lateral sclerosis (ALS) or due to a stroke.

The researchers asked them to try to speak or imagine saying a series of words. Both actions activated overlapping areas of the brain and elicited similar types of brain activity.

Artificial intelligence (AI) models were trained to recognise phonemes (basic units of language), translate these signals into words and then into sentences that the participants were thinking but not saying aloud. In a demonstration, the brain chip was able to translate the imagined sentences with an accuracy rate of up to 74%.

Frank Willett, assistant professor of neurosurgery at Stanford, told the Financial Times that the decoding was reliable enough to demonstrate that, with improvements in implant hardware and recognition software, "future systems could restore fluent, rapid and comfortable speech via inner speech alone".

A password to protect private thoughts

But these exciting advances come with privacy concerns. The study found that BCIs could also capture internal speech that participants had not been asked to imagine saying, raising the spectre of private thoughts being leaked against the user's will. Technology's new ability to blur the line between voluntary and intimate thoughts has sparked fears of non-consensual mind reading.

"This means that the line between private and public thought may be more blurred than we assume," warned Nita Farahany, a professor of law and philosophy at Duke University and author of the book, "The Battle for Your Brain: Defending the Right to Think Freely in the Age of Neurotechnology".

"The more we push this research forward, the more transparent our brains become, and we have to recognise that this era of brain transparency really is an entirely new frontier for us," Farahany noted in an interview with National Public Radio (NPR).

The question of how to ensure that an individual's mind remains an inviolable sanctuary is an ethical issue that now confronts researchers working in neurotechnology.

Stanford researchers devised a password-protection system that prevents the technology from decoding someone's inner speech unless they first think of the password. They chose "Chitty Chitty Bang Bang," the name of a 1964 children's book by Ian Fleming as well as a 1968 movie starring Dick Van Dyke. The password was long enough, and unusual enough, to prevent the unintentional decoding of private thoughts with a 98% success rate.

Given the development of this password-protection system, Cohen Marcus Lionel Brown, a bioethicist at the University of Wollongong in Australia, told The New York Times that the Stanford study "represents a step in the right direction, ethically speaking". He added that, "If implemented faithfully, it would give patients even greater power to decide what information they share and when."

In a March 2023 interview with NPR, Farahany noted that the brain was "the most sensitive organ we have. Opening that up to the rest of the world profoundly changes what it means to be human and how we relate to one another."

For her part, Evelina Fedorenko, a cognitive neuroscientist at the Massachusetts Institute of Technology (MIT), who was not involved in the new study, noted that much of human thought is nonverbal. She noted that BCI studies showed high success rates when decoding words that patients consciously imagined saying, but the success rate fell when people responded to open-ended commands. When participants were asked to think about their favourite childhood hobby, Fedorenko noted that what was recorded was "mostly garbage". A lot of spontaneous thoughts, she told The New York Times, are "just not well-formed linguistic sentences".

Kunz, the Stanford study's lead author, acknowledges that the computers decoding inner speech are still not good enough to enable people to hold conversations. "The results are an initial proof of concept more than anything," she said.

Despite the advances in the field, expectations must be tempered. At this stage, the vocabulary remains limited, accuracy is far from perfect, and the implants are invasive. The device also requires lengthy training and constant adjustments. For a large-scale clinical deployment, improvements will need to be made to the algorithms, hardware interfaces and implantation conditions, which, according to the researchers, will take several more years.

However, these advances have sparked a heated debate on "neurorights", an emerging field aimed at protecting mental life from intrusion. In other words, will our "mental security" need to be defined and protected in the future?

While promising to give a voice to those who have been deprived of it, this breakthrough also outlines the contours of an unprecedented future where, even when unwanted, silences can speak.

[2]

Brain implants that read minds: a medical miracle raises new ethical questions - VnExpress International


Stanford researchers have developed a brain-computer interface that can translate silent thoughts in real time, offering hope for paralyzed individuals but raising privacy concerns.

Breakthrough in Brain-Computer Interface Technology

In a groundbreaking study published in the scientific journal Cell, Stanford University researchers have achieved a significant milestone in brain-computer interface (BCI) technology. For the first time, scientists have translated silent thoughts in real time using a brain implant coupled with artificial intelligence, reaching an accuracy rate of up to 74% [1].

Source: VnExpress

The Science Behind Inner Speech Decoding

The study, led by Stanford neuroscientist Erin Kunz, focused on decoding inner speech, or what a person thinks they are saying, without the need for physical gestures or sound. The research team implanted microelectrodes in the motor cortex of four severely paralyzed participants to record neural signals [2].

Artificial intelligence models were trained to recognize phonemes, translate these signals into words, and then into sentences that participants were thinking but not saying aloud. This breakthrough could potentially offer a new form of communication for people with disabilities, allowing for more effortless and prolonged use of the system.
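The phoneme-to-word-to-sentence chain described above can be pictured with a toy sketch. This is not the study's decoder: the phoneme labels, the tiny lexicon, the silence marker and the per-frame scores are all invented for illustration, and a real system would use trained neural networks and a language model rather than a lookup table.

```python
# Toy sketch only: the phoneme set, lexicon, and scores below are invented
# for illustration and are not taken from the Stanford study.

SILENCE = "_"  # hypothetical marker for "no phoneme" between words

# Hypothetical pronunciation lexicon: phoneme sequence -> word.
LEXICON = {
    ("HH", "AY"): "hi",
    ("DH", "EH", "R"): "there",
}


def most_likely_phonemes(frames):
    """Pick the highest-scoring phoneme label for each time frame."""
    return [max(frame, key=frame.get) for frame in frames]


def collapse_repeats(labels):
    """Merge consecutive identical labels, since one phoneme spans many frames."""
    collapsed = []
    for label in labels:
        if not collapsed or collapsed[-1] != label:
            collapsed.append(label)
    return collapsed


def phonemes_to_words(labels):
    """Split on silence and look each phoneme group up in the lexicon."""
    words, current = [], []
    for label in labels + [SILENCE]:  # trailing silence flushes the last word
        if label == SILENCE:
            if current:
                words.append(LEXICON.get(tuple(current), "<unknown>"))
                current = []
        else:
            current.append(label)
    return words


def decode_sentence(frames):
    """Frames of phoneme scores -> phonemes -> words -> sentence."""
    labels = collapse_repeats(most_likely_phonemes(frames))
    return " ".join(phonemes_to_words(labels))


# Made-up per-frame phoneme scores standing in for a trained model's output.
frames = [
    {"HH": 0.8, "AY": 0.1, "_": 0.1},
    {"HH": 0.6, "AY": 0.3, "_": 0.1},
    {"AY": 0.9, "HH": 0.05, "_": 0.05},
    {"_": 0.9, "DH": 0.1},
    {"DH": 0.7, "EH": 0.2, "_": 0.1},
    {"EH": 0.8, "R": 0.1, "_": 0.1},
    {"R": 0.9, "EH": 0.1},
]

print(decode_sentence(frames))  # prints: hi there
```

The sketch shows why errors compound: a single misread phoneme sends the lexicon lookup to `<unknown>`, which is one reason the reported accuracy remains well short of perfect.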

Implications for Medical Applications

Frank Willett, assistant professor of neurosurgery at Stanford, highlighted the potential of this technology, stating that "future systems could restore fluent, rapid and comfortable speech via inner speech alone" [1]. This advancement holds promise for individuals with conditions such as amyotrophic lateral sclerosis (ALS) or those who have suffered strokes, potentially offering them a renewed sense of autonomy.

Ethical Concerns and Privacy Issues

While the medical applications are promising, the study has also raised significant ethical questions. The BCI was found to occasionally capture internal speech that participants had not been asked to imagine saying, sparking concerns about privacy and the potential for non-consensual mind reading [1].

Nita Farahany, a professor of law and philosophy at Duke University, warned that "the line between private and public thought may be more blurred than we assume" [2]. This development has brought to the forefront the need to address how an individual's mind can remain an inviolable sanctuary in the age of advancing neurotechnology.

Source: France 24

Safeguarding Private Thoughts

To address these privacy concerns, Stanford researchers have developed a password-protection system. This innovative approach prevents the technology from decoding someone's inner speech unless they first think of a specific password [1]. The chosen password, "Chitty Chitty Bang Bang," proved effective in preventing unintentional decoding of private thoughts with a 98% success rate.

Cohen Marcus Lionel Brown, a bioethicist at the University of Wollongong in Australia, views this password system as "a step in the right direction, ethically speaking" [1]. He believes that if implemented faithfully, it would give patients greater control over what information they share and when.

The Future of Neurotechnology

As research in this field progresses, the ethical implications of brain transparency are becoming increasingly apparent. Evelina Fedorenko, a cognitive neuroscientist at MIT, noted that much of human thought is nonverbal, adding another layer of complexity to the decoding process [1].

The development of BCIs that can read minds represents a new frontier in neurotechnology, blurring the lines between private and public thought. As we continue to push the boundaries of what's possible, it's crucial to balance the potential medical benefits with the need to protect individual privacy and autonomy in this brave new world of brain-computer interfaces.

Summarized by Navi
