Cursor admits Composer 2 was built on Chinese AI model, exposing Western open-source gaps

10 Sources

[1]

Cursor admits its new coding model was built on top of Moonshot AI's Kimi | TechCrunch

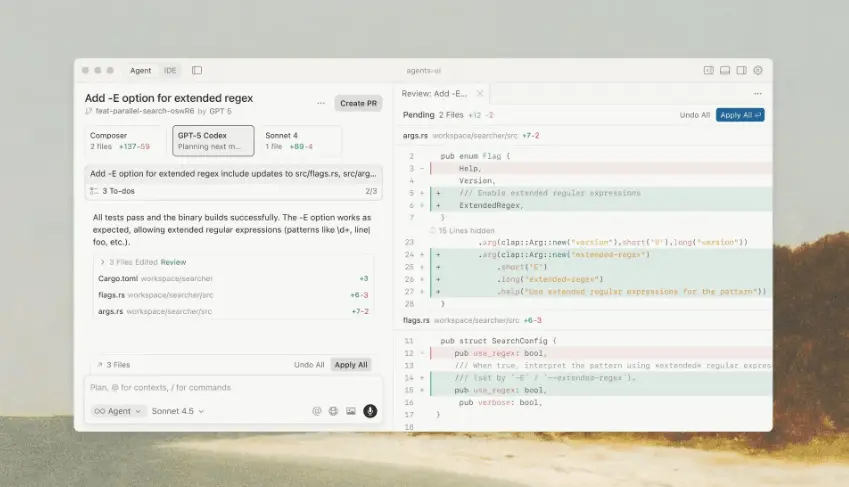

AI coding company Cursor launched a new model this week called Composer 2, which it promoted as offering "frontier-level coding intelligence." However, an X user posting under the name Fynn soon claimed that Composer 2 was "just Kimi 2.5" with additional reinforcement learning. Kimi K2.5 is an open-source model recently released by Moonshot AI, a Chinese company backed by Alibaba and HongShan (formerly Sequoia China). As evidence, Fynn pointed to code that appeared to identify Kimi as the model. "[A]t least rename the model ID," they scoffed.
It was a surprising revelation, since Cursor is a well-funded U.S. startup that raised a $2.3 billion round last fall at a $29.3 billion valuation and is reportedly exceeding $2 billion in annualized revenue. The company also didn't mention Moonshot AI or Kimi anywhere in its announcement.
Cursor's vice president of developer education, Lee Robinson, soon acknowledged, "Yep, Composer 2 started from an open-source base!" But he added, "Only ~1/4 of the compute spent on the final model came from the base, the rest is from our training." As a result, he said, Composer 2's performance on various benchmarks is "very different" from Kimi's.
Robinson also insisted that Cursor's use of Kimi was consistent with the terms of its license. The Kimi account on X repeated that point in a subsequent post congratulating Cursor, saying Cursor used Kimi "as part of an authorized commercial partnership" with Fireworks AI. "We are proud to see Kimi-k2.5 provide the foundation," the Kimi account said. "Seeing our model integrated effectively through Cursor's continued pretraining & high-compute RL training is the open model ecosystem we love to support."
So why not acknowledge Kimi upfront? Beyond any potential embarrassment in not creating a model from scratch, building on top of a Chinese model might feel particularly fraught right now, with the so-called AI "arms race" often framed as an existential battle between the United States and China. (See, for example, Silicon Valley's apparent panic after Chinese company DeepSeek released a competitive model early last year.) Cursor co-founder Aman Sanger acknowledged, "It was a miss to not mention the Kimi base in our blog from the start. We'll fix that for the next model."

[2]

AI Coding Startup Cursor Plans New Model to Rival Anthropic, OpenAI

Cursor, a leading artificial intelligence startup for coding, is set to release a more efficient AI model for software development in a bid to keep pace with larger firms like Anthropic PBC and OpenAI. The company on Thursday plans to unveil Composer 2, which is meant to work as an AI agent that carries out lengthy coding tasks on a user's behalf. Anthropic and OpenAI have also introduced more powerful AI models that they say can take on increasingly complicated and time-consuming software work.
San Francisco-based Cursor launched its first AI coding assistant in 2023 and quickly caught on with professional software developers, helping to popularize a new style of programming known as vibe coding. The company now has more than 1 million daily users, including 50,000 businesses such as payment processing firm Stripe Inc. and creative software maker Figma Inc. Cursor has also been in talks to raise a new round of financing at a roughly $50 billion valuation, Bloomberg News reported this month.
However, the company faces heated competition from OpenAI, Anthropic and a number of newer startups that offer AI coding assistants designed to handle more complex tasks on the user's behalf. Cursor supports a wide range of models, including those from OpenAI and Anthropic, and counts the ChatGPT maker as an investor.
Cursor co-founder Aman Sanger, who leads its research team, said the startup trained Composer 2 solely on coding-related data, an approach that let it build a smaller model meant to be less expensive to use. Unlike other leading AI developers whose tools are used for a wide range of tasks, Cursor designed its model purely for coding. "It won't help you do your taxes," Sanger said. "It won't be able to write poems."

[3]

Cursor's Composer 2 was secretly built on a Chinese AI model -- and it exposes a deeper problem with Western open-source AI

The $29.3 billion AI coding tool just got caught with its provenance showing. When Cursor launched Composer 2 last week, calling it "frontier-level coding intelligence," it presented the model as evidence that the company is a serious AI research lab, not just a forked integrated development environment (IDE) wrapping someone else's foundation model. What the announcement omitted was that Composer 2 was built on top of Kimi K2.5, an open-source model from Moonshot AI, a Chinese startup backed by Alibaba, Tencent and HongShan (the firm formerly known as Sequoia China).
A developer named Fynn (@fynnso) on X figured it out within hours. By setting up a local debug proxy server and routing Cursor's API traffic through it, Fynn intercepted the outbound request and found the model ID in plain sight: accounts/anysphere/models/kimi-k2p5-rl-0317-s515-fast. "So composer 2 is just Kimi K2.5 with RL," Fynn wrote. "At least rename the model ID." The post racked up 2.6 million views. In a follow-up, Fynn noted that Cursor's previous model, Composer 1.5, blocked this kind of request interception whereas Composer 2 did not, calling it "probably an oversight." Cursor quickly patched the gap, but by then the news was out.
Cursor's VP of Developer Education, Lee Robinson, confirmed the Kimi connection within hours, and co-founder Aman Sanger acknowledged it was a mistake not to disclose the base model from the start. But the story that matters here is not one company's disclosure failure. It is why Cursor, and likely many other AI product companies, turned to a Chinese open model in the first place.
The open-model vacuum: Why Western companies keep reaching for Chinese foundations
Cursor's decision to build on Kimi K2.5 was not random.
The model is a 1-trillion-parameter mixture-of-experts architecture with 32 billion active parameters, a 256,000-token context window, native image and video support, and an Agent Swarm capability that runs up to 100 sub-agents in parallel. Released under a modified MIT license that permits commercial use, Kimi K2.5 is competitive with the best models in the world on agentic benchmarks and ranked first among all models on MathVista at release.
When an AI product company needs a strong open model for continued pretraining and reinforcement learning (the kind of deep customization that turns a foundation into a differentiated product), the options from Western labs have been surprisingly thin. Meta's Llama 4 Scout and Maverick shipped in April 2025 but fell well short of expectations, and the much-anticipated Llama 4 Behemoth has been indefinitely delayed. As of March 2026, Behemoth still has no public release date, with reports suggesting Meta's internal teams are not convinced the 2-trillion-parameter model delivers a large enough performance leap to justify shipping it.
Google's Gemma 3 family topped out at 27 billion parameters: excellent for edge and single-accelerator deployment, but not a frontier-class foundation for building production coding agents. Gemma 4 has not been announced, although there is speculation that a release may be imminent.
And then there's OpenAI, which released arguably the most conspicuous American open-source contender, the gpt-oss family (in 20-billion- and 120-billion-parameter variants), in August 2025. Why wouldn't Cursor build atop this model if it needed a base model to fine-tune? The answer lies in the "intelligence density" required for frontier-class coding.
While gpt-oss-120b is a monumental achievement for Western open source, offering reasoning capabilities that rival proprietary models like o4-mini, it is fundamentally a sparse mixture-of-experts (MoE) model that activates only 5.1 billion parameters per token. For a general-purpose reasoning assistant, that is an efficiency masterstroke; for a tool like Composer 2, which must maintain structural coherence across a context window of up to 200,000 tokens, it is arguably too "thin." By contrast, Kimi K2.5 is a 1-trillion-parameter titan that activates 32 billion parameters per token. In the high-stakes world of agentic coding, sheer cognitive mass still dictates performance, and Cursor apparently judged that Kimi's roughly 6x advantage in active parameter count was essential for handling the "context explosion" that occurs during complex, multi-step autonomous programming tasks.
Beyond raw scale, there is the matter of structural resilience. OpenAI's open-weight models have gained a quiet reputation among some developers for being "post-training brittle": brilliant out of the box but prone to catastrophic forgetting when subjected to the kind of aggressive, high-compute reinforcement learning Cursor required. Cursor didn't just apply a light fine-tune; it executed a "4x scale-up" in training compute to bake in its proprietary self-summarization logic. Kimi K2.5, built specifically for agentic stability and long-horizon tasks, provided a more durable "chassis" for these deep architectural renovations. It allowed Cursor to build a specialized agent that could solve competition-level problems, like compiling the original Doom for a MIPS architecture, without the model's core logic collapsing under the weight of its own specialized training.
That leaves a gap. And Chinese labs, including Moonshot, DeepSeek, Qwen and others, have filled it aggressively.
DeepSeek's V3 and R1 models caused a panic in Silicon Valley in early 2025 by matching frontier performance at a fraction of the cost. Alibaba's Qwen3.5 family has shipped models at nearly every size from 600 million to 397 billion total parameters. Kimi K2.5 sits squarely in the sweet spot for companies that want a powerful, open, customizable base.
Cursor is not the only product company in this position. Any enterprise building specialized AI applications on top of open models today confronts the same calculus: the most capable, most permissively licensed open foundations disproportionately come from Chinese labs.
What Cursor actually built, and why the base model matters less than you think
To its credit, Cursor did not just slap a UI on Kimi. Lee Robinson stated that roughly a quarter of the total compute used to build Composer 2 came from the Kimi base, with the remaining three quarters coming from Cursor's own continued training. The company's technical blog post describes a technique called self-summarization that addresses one of the hardest problems in agentic coding: context overflow during long-running tasks.
When an AI coding agent works on complex, multi-step problems, it generates far more context than fits in the model's context window at once. The typical workarounds, truncating old context or using a separate model to summarize it, cause the agent to lose critical information and make cascading errors. Cursor's approach trains the model itself to compress its own working memory in the middle of a task, as part of the reinforcement learning process. When Composer 2 nears its context limit, it pauses, compresses everything down to roughly 1,000 tokens, and continues. Those summaries are rewarded or penalized based on whether they helped complete the overall task, so over thousands of training runs the model learns what to retain and what to discard. The results are meaningful.
Cursor reports that self-summarization cuts compaction errors by 50 percent compared with heavily engineered prompt-based baselines, while using one-fifth the tokens. As a demonstration, Composer 2 solved a Terminal-Bench problem, compiling the original Doom game for a MIPS processor architecture, in 170 turns, repeatedly self-summarizing more than 100,000 tokens of context along the way. Several frontier models cannot complete it. On CursorBench, Composer 2 scores 61.3 compared with 44.2 for Composer 1.5, and it reaches 61.7 on Terminal-Bench 2.0 and 73.7 on SWE-bench Multilingual.
Moonshot AI itself responded supportively after the story broke, posting on X that it was proud to see Kimi provide the foundation and confirming that Cursor accessed the model through an authorized commercial partnership with Fireworks AI, a model hosting company. Nothing was stolen. The use was commercially licensed.
Beyond attribution: The silence raises licensing and governance questions
Cursor co-founder Aman Sanger acknowledged the omission, saying it was a miss not to mention the Kimi base in the original blog post. The reasons for that silence are not hard to infer. Cursor is valued at nearly $30 billion on the premise that it is an AI research company, not an integration layer. And Kimi K2.5 was built by a Chinese company backed by Alibaba, a sensitive provenance at a moment when the US-China AI relationship is strained and government and enterprise customers increasingly care about supply chain origins.
The real lesson is broader. The whole industry builds on other people's foundations. OpenAI's models are trained on decades of academic research and internet-scale data. Meta's Llama is trained on data it does not always fully disclose. Every model sits atop layers of prior work. The question is what companies say about it, and right now the incentive structure rewards obscuring the connection, especially when the foundation comes from China.
For IT decision-makers evaluating AI coding tools and agent platforms, this episode surfaces practical questions: Do you know what's under the hood of your AI vendor's product? Does it matter for your compliance, security, and supply chain requirements? And is your vendor meeting the license obligations of its own foundation model?
The Western open-model gap is starting to close, but slowly
The good news for enterprises concerned about model provenance is that Western open models appear poised to become significantly more competitive. NVIDIA has been on an aggressive release cadence. Nemotron 3 Super, released on March 11, is a 120-billion-parameter hybrid Mamba-Transformer model with 12 billion active parameters, a 1-million-token context window, and up to 5x higher throughput than its predecessor. It uses a novel latent mixture-of-experts architecture and was pre-trained in NVIDIA's NVFP4 format on the Blackwell architecture. Companies including Perplexity, CodeRabbit, Factory, and Greptile are already integrating it into their AI agents.
Days later, NVIDIA followed with Nemotron-Cascade 2, a 30-billion-parameter MoE model with just 3 billion active parameters that outperforms both Qwen 3.5-35B and the larger Nemotron 3 Super across mathematics, code reasoning, alignment, and instruction-following benchmarks. Cascade 2 achieved gold-medal-level performance on the 2025 International Mathematical Olympiad, the International Olympiad in Informatics, and the ICPC World Finals, making it only the second open-weight model, after DeepSeek-V3.2-Speciale, to accomplish that. Both models ship with fully open weights, training datasets, and reinforcement learning recipes under permissive licenses: exactly the kind of transparency that Cursor's Kimi episode highlighted as missing.
What IT leaders should watch: The provenance question is not going away
The Cursor-Kimi episode is a preview of a recurring pattern.
As AI product companies increasingly build differentiated applications on top of open foundation models through continued pretraining, reinforcement learning, and novel techniques like self-summarization, the question of which foundation sits at the bottom of the stack becomes a matter of enterprise governance, not just technical preference. NVIDIA's Nemotron family and the anticipated Gemma 4 represent the strongest near-term candidates for closing the Western open-model gap. Nemotron 3 Super's hybrid architecture and million-token context window make it directly relevant for the same agentic coding use cases that Cursor addressed with Kimi. Cascade 2's extraordinary intelligence density (gold-medal competition performance at just 3 billion active parameters) suggests that smaller, highly optimized models trained with advanced RL techniques can increasingly substitute for the massive Chinese foundations that have dominated the open-model landscape.
But for now, the line between American AI products and Chinese model foundations is not as clean as the geopolitical narrative suggests. One of the most-used coding tools in the world runs on a model from an Alibaba-backed lab, and it may not originally have been meeting the attribution requirements of the license that enabled it. Cursor says it will disclose the base model next time. The more interesting question is whether, next time, it will have a credible Western alternative to disclose.
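The active-parameter comparison running through this piece is simple arithmetic. The minimal Python sketch below tabulates the total and active parameter counts quoted above (in billions), computes the share of weights each mixture-of-experts model uses per token, and checks the Kimi K2.5 versus gpt-oss-120b ratio behind the "6x" figure. It assumes gpt-oss-120b's nominal 120 billion total, as the article uses; the dictionary and helper names are illustrative, and active parameter count is only one input to model quality, not a performance predictor.

```python
# Parameter counts in billions, as quoted in the coverage above.
# gpt-oss-120b is listed at its nominal 120B size (assumption).
MODELS: dict[str, tuple[float, float]] = {
    # name: (total parameters, active parameters per token)
    "Kimi K2.5": (1000.0, 32.0),
    "gpt-oss-120b": (120.0, 5.1),
    "Nemotron 3 Super": (120.0, 12.0),
    "Nemotron-Cascade 2": (30.0, 3.0),
}


def active_fraction(name: str) -> float:
    """Share of a mixture-of-experts model's weights used for a single token."""
    total, active = MODELS[name]
    return active / total


def active_ratio(a: str, b: str) -> float:
    """How many times more parameters model `a` activates per token than model `b`."""
    return MODELS[a][1] / MODELS[b][1]


if __name__ == "__main__":
    for name, (total, active) in MODELS.items():
        print(f"{name:<20} {active:>5.1f}B of {total:>6.0f}B active ({active_fraction(name):.1%})")
    print(f"Kimi K2.5 vs gpt-oss-120b: {active_ratio('Kimi K2.5', 'gpt-oss-120b'):.1f}x")
```

Run as a script, the last line reports a ratio of about 6.3, consistent with the article's "6x" shorthand. Nemotron-Cascade 2 activates the fewest parameters in absolute terms (3 billion), while its 10% active share matches Nemotron 3 Super's.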

[4]

Cursor's new coding model Composer 2 is here: It beats Claude Opus 4.6 but still trails GPT-5.4

Cursor, a San Francisco AI coding platform from startup Anysphere valued at $29.3 billion, has launched Composer 2, a new in-house coding model now available inside its agentic AI coding environment, with substantially better benchmark scores than its prior in-house model. It is also launching Composer 2 Fast, a faster but higher-priced variant, and making it the default for users.
Here's the cost breakdown:
Composer 2: $0.50 per million input tokens, $2.50 per million output tokens
Composer 2 Fast: $1.50 per million input tokens, $7.50 per million output tokens
That's a big drop from Cursor's predecessor in-house model, Composer 1.5, released in February, which cost $3.50 per million input tokens and $17.50 per million output tokens; Composer 2 is about 86% cheaper on both counts. Composer 2 Fast is also roughly 57% cheaper than Composer 1.5. There are also discounted cache-read prices, which apply when a prompt resends tokens the model has already processed: $0.20 per million tokens for Composer 2 and $0.35 per million for Composer 2 Fast, versus $0.35 per million for Composer 1.5.
It also matters that this appears to be a Cursor-native release, not a broadly distributed standalone model. In the company's announcement and model documentation, Composer 2 is described as available in Cursor, tuned for Cursor's agent workflow and integrated with the product's tool stack. The materials provided do not indicate separate availability through external model platforms or as a general-purpose API outside the Cursor environment.
The deeper technical claim in this release is not merely that Composer 2 scores higher than Composer 1.5. It is that Cursor says the model is better suited to long-horizon agentic coding. In its blog, Cursor says the quality gains come from its first continued pretraining run, which gave it a stronger base for scaled reinforcement learning. From there, the company says it trained Composer 2 on long-horizon coding tasks and that the model can solve problems requiring hundreds of actions. That framing is important because it addresses one of the biggest unresolved issues in coding AI.
Many models are good at isolated code generation. Far fewer remain reliable across a longer workflow that includes reading a repository, deciding what to change, editing multiple files, running commands, interpreting failures and continuing toward a goal. Cursor's documentation reinforces that this is the use case it cares about. It describes Composer 2 as an agentic model with a 200,000-token context window, tuned for tool use, file edits and terminal operations inside Cursor. It also notes training techniques such as self-summarization for long-running tasks. For developers already using Cursor as their main environment, that tighter tuning may matter more than a generic leaderboard claim.
Cursor's published results show a clear improvement over prior Composer models. The company lists Composer 2 at 61.3 on CursorBench, 61.7 on Terminal-Bench 2.0, and 73.7 on SWE-bench Multilingual. That compares with Composer 1.5 at 44.2, 47.9 and 65.9, and Composer 1 at 38.0, 40.0 and 56.9.
The release is more measured than some model launches because Cursor is not claiming universal leadership. On Terminal-Bench 2.0, which measures how well an AI agent performs tasks in a command-line terminal, GPT-5.4 still leads at 75.1, while Composer 2 scores 61.7, ahead of Opus 4.6 at 58.0, Opus 4.5 at 52.1 and Composer 1.5 at 47.9. That makes Cursor's pitch more pragmatic and arguably more useful for buyers. The company is not saying Composer 2 is the single best model at everything. It is saying the model has moved into a more competitive quality tier while offering more attractive economics and stronger integration with the product developers are already using.
Cursor also included a performance-versus-cost chart on its CursorBench benchmarking suite that appears designed to make a Pareto-style argument for Composer 2.
In that graphic, Composer 2 sits at a stronger cost-to-performance point than Composer 1.5 and compares favorably with higher-cost GPT-5.4 and Opus 4.6 settings shown by Cursor. The company's message is not simply that Composer 2 scores higher than its predecessor, but that it may offer a more efficient cost-to-intelligence tradeoff for everyday coding work inside Cursor. For readers deciding whether to use Composer 2, the most important question may not be benchmark performance alone. It may be whether they want a model optimized for Cursor's own product experience. That can be a strength. According to the documentation, Composer 2 can access Cursor's agent tool stack, including semantic code search, file and folder search, file reads, file edits, shell commands, browser control and web access. That kind of integration can be more valuable than raw model quality if the goal is to complete real software tasks rather than produce impressive one-shot answers. But it also narrows the addressable audience. Teams looking for a model they can deploy broadly across multiple external tools and platforms should recognize that Cursor is presenting Composer 2 as a model for Cursor users, not as a generally available standalone foundation model. The significance of Composer 2 is not that Cursor has suddenly taken the top spot on every coding benchmark. It has not. The more important point is that Cursor is making an operational argument: its model is getting better, its pricing is low enough to encourage broader use, and its faster tier is responsive enough that the company is comfortable making it the default despite the higher cost. That combination could resonate with engineering teams that increasingly care less about abstract model prestige and more about whether an assistant can stay useful across long coding sessions without becoming prohibitively expensive. Cursor's broader pricing structure helps frame the competitive pressure around this launch. 
On its current pricing page, Cursor offers a free Hobby tier, a Pro plan at $20 per month, Pro+ at $60 per month, and Ultra at $200 per month for individual users, with higher tiers offering more usage across models from OpenAI, Anthropic and Google. On the business side, Teams costs $40 per user per month, while Enterprise is custom-priced and adds pooled usage, centralized billing, usage analytics, privacy controls, SSO, audit logs and granular admin controls. In other words, Cursor is not just charging for access to a coding model. It is charging for a managed application layer that sits on top of multiple model providers while adding team features, governance and workflow tooling.
That business model is increasingly under pressure as first-party AI companies push deeper into coding themselves. OpenAI and Anthropic are no longer just selling models through third-party products; they are also shipping their own coding interfaces, agents and evaluation frameworks, such as Codex and Claude Code, raising the question of how much room remains for an intermediary platform. Commenters on X, particularly power users drawn to terminal-first workflows, longer-running agent behavior and lower perceived overhead, have increasingly described moving from Cursor to Anthropic's Claude Code; these posts are unverified and not necessarily representative of the broader market. Some of them describe frustration with Cursor's pricing, context loss or editor-centric experience, while praising Claude Code as a more direct and fully agentic way to work. Even treated cautiously, that kind of social chatter points to the strategic problem Cursor faces: it has to prove that its integrated platform, team controls and now its own in-house models add enough value to justify sitting between developers and the model makers' increasingly capable coding products. That makes Composer 2 strategically important for Cursor.
By offering a much cheaper in-house model than Composer 1.5, tuning it tightly to Cursor's own tool stack and making a faster version the default, the company is trying to show that it provides more than a wrapper around outside systems. The challenge is that as first-party coding products improve, developers and enterprise buyers may increasingly ask whether they want a separate AI coding platform at all, or whether the model makers' own tools are becoming sufficient on their own.
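The benchmark figures Cursor published can be compared directly. This short Python sketch, using only the scores quoted in this article, computes Composer 2's point gains over earlier Composer models and its Terminal-Bench 2.0 margin against GPT-5.4 and the Opus models. The names are illustrative, and because these are Cursor-reported numbers under Cursor's own evaluation setup, the differences inherit whatever caveats apply to that setup.

```python
BENCHMARKS = ("CursorBench", "Terminal-Bench 2.0", "SWE-bench Multilingual")

# Scores as reported by Cursor, in the order of BENCHMARKS.
COMPOSER_SCORES = {
    "Composer 1": (38.0, 40.0, 56.9),
    "Composer 1.5": (44.2, 47.9, 65.9),
    "Composer 2": (61.3, 61.7, 73.7),
}

# Terminal-Bench 2.0 scores for other models, as listed in Cursor's comparison.
TERMINAL_BENCH_OTHERS = {"GPT-5.4": 75.1, "Opus 4.6": 58.0, "Opus 4.5": 52.1}


def point_gains(new: str, old: str) -> dict[str, float]:
    """Per-benchmark score difference (new minus old), rounded to one decimal."""
    return {
        bench: round(n - o, 1)
        for bench, n, o in zip(BENCHMARKS, COMPOSER_SCORES[new], COMPOSER_SCORES[old])
    }


def terminal_bench_margin(model: str, other: str) -> float:
    """Positive if Composer `model` beats `other` on Terminal-Bench 2.0, negative if it trails."""
    composer_score = COMPOSER_SCORES[model][BENCHMARKS.index("Terminal-Bench 2.0")]
    return round(composer_score - TERMINAL_BENCH_OTHERS[other], 1)


if __name__ == "__main__":
    print(point_gains("Composer 2", "Composer 1.5"))
    for other in TERMINAL_BENCH_OTHERS:
        print(other, terminal_bench_margin("Composer 2", other))
```

By these numbers Composer 2 gains 17.1 points on CursorBench over Composer 1.5 yet trails GPT-5.4 by 13.4 points on Terminal-Bench 2.0, which fits the article's reading that the release moves Composer into a more competitive tier rather than to the top.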

[5]

Vibe coding startup Cursor launches programming-optimized Composer 2 model - SiliconANGLE

Cursor today introduced an artificial intelligence model called Composer 2 that it says can outperform Claude Opus 4.6 across many programming tasks. The model is accessible through the company's popular AI code editor. Cursor, which is incorporated as Anysphere Inc., says that the software has more than 1 million daily active users. That large install base helped the company secure a $29.3 billion valuation last November.
Composer 2 supports prompts with up to 200,000 tokens. It can generate code, fix bugs in existing software and interact with a computer's command line interface. Developers can optionally extend the model's capabilities by giving it access to a browser, an image generator and other tools.
Cursor evaluated Composer 2 using an internal benchmark called CursorBench. The programming challenges it contains are based on tasks completed by the company's engineering team. The average CursorBench challenge includes 352 lines of code spread across 8 files. Composer 2 achieved a score of more than 60%, which put it in third place behind GPT-5.4's high and medium configurations. Those are modes in which OpenAI Group PBC's flagship model spends more compute to increase output quality. According to Cursor, Composer 2 outperformed GPT-5.4's low configuration and Claude Opus 4.6. Composer 2 also bested Anthropic PBC's model on the Terminal-Bench 2.0 benchmark, which measures AI models' ability to perform tasks in a command line interface.
Cursor says that Composer 2 is more cost-efficient than many competing frontier models. The standard edition of the model is priced at $0.50 per million input tokens and $2.50 per million output tokens. A second, more expensive version offers the same output quality but responds to developers' prompts considerably faster. It's available for $1.50 per million input tokens and $7.50 per million output tokens.
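The percentage savings cited for this release follow directly from the list prices. The Python sketch below encodes the per-million-token prices for Composer 2 and Composer 2 Fast, plus Composer 1.5's reported $3.50/$17.50, and checks the roughly 86% and 57% reductions. The `job_cost` helper and the 2-million-input, 200,000-output token workload are hypothetical, and the sketch ignores cache-read discounts and plan-level pricing.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Price:
    input_per_m: float   # USD per million input tokens
    output_per_m: float  # USD per million output tokens


PRICES = {
    "Composer 1.5": Price(3.50, 17.50),
    "Composer 2": Price(0.50, 2.50),
    "Composer 2 Fast": Price(1.50, 7.50),
}


def savings(new: str, old: str) -> tuple[float, float]:
    """Fractional price reduction of `new` relative to `old`, as (input, output)."""
    n, o = PRICES[new], PRICES[old]
    return 1 - n.input_per_m / o.input_per_m, 1 - n.output_per_m / o.output_per_m


def job_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Hypothetical helper: USD cost of one job at list prices (no cache discounts)."""
    p = PRICES[model]
    return (input_tokens * p.input_per_m + output_tokens * p.output_per_m) / 1_000_000


if __name__ == "__main__":
    for model in ("Composer 2", "Composer 2 Fast"):
        inp, out = savings(model, "Composer 1.5")
        print(f"{model}: {inp:.0%} cheaper input, {out:.0%} cheaper output than Composer 1.5")
    for model in PRICES:
        print(f"{model}: ${job_cost(model, 2_000_000, 200_000):.2f} for the hypothetical job")
```

For that hypothetical workload, the cost works out to $10.50 on Composer 1.5, $4.50 on Composer 2 Fast and $1.50 on Composer 2.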
According to Bloomberg, the model's cost-efficiency partly stems from the fact that it was trained solely on coding datasets. Frontier models are usually trained to automate a wider range of tasks, which increases their hardware footprint.
Cursor used a machine learning method called self-summarization to cope with long-running tasks. The coding tasks that an AI model receives during training require it to process a significant amount of data, and in some cases the data volume exceeds the model's context window. Self-summarization has the model compress that information into a summary that fits within the context window.
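A toy Python sketch can make the control flow of self-summarization concrete. It assumes a hypothetical context budget (`CONTEXT_LIMIT`), a trigger at 90% of that budget, word counting as a stand-in for a real tokenizer, and a placeholder `naive_summarize` that simply keeps the most recent words. In the approach Cursor describes, the model writes the roughly 1,000-token summary itself and reinforcement learning shapes what it keeps; this sketch illustrates only the trigger, compress and continue loop and the idea that each summary shares the episode's task reward.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Tuple

CONTEXT_LIMIT = 8_000    # hypothetical context budget, in "tokens"
TRIGGER_FRACTION = 0.9   # assumption: compress once the context is 90% full
SUMMARY_BUDGET = 1_000   # roughly the summary size reported for Composer 2

Summarizer = Callable[[List[str], int], str]


def count_tokens(text: str) -> int:
    # Crude stand-in for a real tokenizer: one token per whitespace-separated word.
    return len(text.split())


def naive_summarize(turns: List[str], budget: int) -> str:
    # Placeholder: keep only the most recent `budget` words. In the approach Cursor
    # describes, the model writes this summary itself and RL shapes what it keeps.
    words = " ".join(turns).split()
    return " ".join(words[-budget:])


@dataclass
class AgentContext:
    turns: List[str] = field(default_factory=list)
    summaries: List[str] = field(default_factory=list)  # kept for credit assignment

    def size(self) -> int:
        return sum(count_tokens(t) for t in self.turns)

    def add(self, turn: str, summarize: Summarizer = naive_summarize) -> None:
        self.turns.append(turn)
        if self.size() >= TRIGGER_FRACTION * CONTEXT_LIMIT:
            summary = summarize(self.turns, SUMMARY_BUDGET)
            self.summaries.append(summary)
            self.turns = [summary]  # the summary replaces the old working memory


def credit_summaries(ctx: AgentContext, task_reward: float) -> List[Tuple[str, float]]:
    # Every summary written during the episode receives the episode's final task
    # reward, so summaries that preceded a successful outcome get reinforced.
    return [(summary, task_reward) for summary in ctx.summaries]


if __name__ == "__main__":
    ctx = AgentContext()
    for step in range(20):
        ctx.add(" ".join(f"step{step}_tok{i}" for i in range(600)))  # a 600-"token" turn
    print(len(ctx.summaries), ctx.size())
```

With twenty 600-word turns, the context crosses the 7,200-word trigger once, is compressed to 1,000 words, and ends at 5,800 words, below the hypothetical limit.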

[6]

Cursor admits Composer 2 based on Moonshot AI's Kimi 2.5

Coding artificial intelligence company Cursor acknowledged that its new Composer 2 model is based on Moonshot AI's Kimi K2.5 after an X user spotted a Kimi model ID in the product. The revelation raised questions about Cursor's transparency, since the company had promoted Composer 2 as offering "frontier-level coding intelligence" without mentioning a third-party base model in its announcement. Cursor's Vice President of Developer Education Lee Robinson stated that Composer 2 "started from an open-source base," but said only approximately "1/4 of the compute spent on the final model came from the base." Robinson also stated that Composer 2's performance on benchmarks is "very different" from Kimi's due to Cursor's additional training. The Kimi account on X confirmed the arrangement, stating Cursor used Kimi "as part of an authorized commercial partnership" with Fireworks AI. The Kimi account added it was "proud to see Kimi-k2.5 provide the foundation." Cursor co-founder Aman Sanger acknowledged, "It was a miss to not mention the Kimi base in our blog from the start." Sanger added that the company would address this for future models. Cursor is a U.S. startup that raised $2.3 billion in fall 2025 at a $29.3 billion valuation. The company is reportedly exceeding $2 billion in annualized revenue. Moonshot AI is a Chinese company backed by Alibaba and HongShan.

[7]

Cursor founder clears air on Kimi model use in Composer 2: Here's all you need to know

Artificial intelligence (AI) coding startup Cursor is facing scrutiny over its newly launched Composer 2 model after users speculated that the system was built on an external base model that was not disclosed at launch. Cursor unveiled Composer 2, a new model designed to improve efficiency in software development workflows, on March 19. Chinese AI startup Moonshot AI, which develops and owns the Kimi family of models including Kimi K2.5, publicly endorsed Composer 2 on Saturday.
In a post on X, Cursor cofounder Aman Sanger confirmed that the company selected Kimi K2.5 after evaluating multiple base models. "We've evaluated a lot of base models on perplexity-based evals, and Kimi K2.5 proved to be the strongest," Sanger said. He added that Composer 2 is built on top of the base model with further training, fine-tuning using reinforcement learning, and supporting systems that help it run efficiently. Sanger acknowledged that Cursor did not initially disclose its use of the Kimi base model in its launch blog. "It was a miss to not mention the Kimi base in our blog from the start," he said, adding that the company plans to correct this in future releases.
Cursor operates in a competitive landscape alongside established players such as OpenAI and Anthropic, as well as a growing number of specialised startups building coding-focussed AI tools. Sanger, who also leads the company's research efforts, had said earlier that Composer 2 is trained specifically on coding-related data. The approach focusses on building a smaller, more specialised model optimised for software engineering tasks.
Composer 2 features and pricing
Composer 2 is priced at $0.50 per million input tokens and $2.50 per million output tokens, positioning it competitively among coding-focussed AI models. The model is designed for long-horizon coding tasks, enabling it to handle multi-step software problems such as debugging, testing and implementation across larger codebases, the company said in a blog post. Cursor said Composer 2 shows measurable gains over earlier versions, reporting 61.3 on CursorBench, 61.7 on Terminal Bench 2.0, and 73.7 on SWE-bench Multilingual. These benchmarks evaluate performance across areas such as coding accuracy, instruction following, and the AI model's ability to perform real-world software engineering tasks.
How it stacks up against OpenAI, Anthropic
On Terminal Bench 2.0, Composer 2 outperformed several competing models, though not OpenAI's latest. Cursor said OpenAI's GPT-5.4 scored 75.1%, ahead of Composer 2, while Anthropic's Opus 4.6 recorded 58.0%, placing it below Composer 2 on that benchmark. However, comparisons across models may vary depending on evaluation setup, datasets, and tokenisation methods. Cursor also noted that tokens used by Anthropic's models are approximately 15% smaller than those used by Composer and GPT models, which can affect cost and performance comparisons.
Composer is built as a mixture-of-experts model trained with reinforcement learning in real development environments. This training approach differs from that of many competing systems, which are typically trained on broader datasets and later adapted for coding tasks.
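Cursor's note that Anthropic's tokens are about 15% smaller matters because per-token prices and token counts only compare cleanly when tokens cover the same amount of text. The Python sketch below assumes "15% smaller" means each token covers about 85% as much text on average, so the same text needs about 1/0.85 ≈ 1.18 times as many tokens; that reading is an assumption, and the $10-per-million price in the usage is a hypothetical placeholder, not any vendor's actual rate.

```python
def tokens_needed(reference_tokens: float, relative_token_size: float) -> float:
    """Tokens required for text that takes `reference_tokens` on a reference tokenizer.

    relative_token_size is the average amount of text covered per token relative to
    the reference tokenizer (0.85 means tokens are about 15% smaller).
    """
    if relative_token_size <= 0:
        raise ValueError("relative_token_size must be positive")
    return reference_tokens / relative_token_size


def effective_price_per_m(price_per_m: float, relative_token_size: float) -> float:
    """Cost of one million reference tokens' worth of text at a given per-token price."""
    return price_per_m * tokens_needed(1_000_000, relative_token_size) / 1_000_000


if __name__ == "__main__":
    SMALLER_TOKEN_SIZE = 0.85  # assumption: "approximately 15% smaller"
    print(f"{tokens_needed(1_000_000, SMALLER_TOKEN_SIZE):,.0f} tokens")
    hypothetical_price = 10.0  # hypothetical $ per million tokens, for illustration only
    print(f"${effective_price_per_m(hypothetical_price, SMALLER_TOKEN_SIZE):.2f}")
```

Under that reading, text measured at 1 million tokens on the reference tokenizer takes about 1.18 million tokens on the smaller-token model, so that model's per-token price should be scaled up by the same factor before comparing costs. If Cursor meant something else by "smaller," the factor changes, but the normalization step is the same.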

[8]

Cursor Faces Backlash After Revealing Its Coding Model Was Built On Moonshot AI's Kimi K2.5

Artificial intelligence coding startup Cursor faced backlash after revealing that its latest Composer 2 model was built on Kimi K2.5, an open-source model from China's Moonshot AI. "I'm a big believer in open source, especially as AI improves. It was a miss to not mention the Kimi base in our blog from the start. We'll fix that for the next model. Their team clarified our usage was licensed in the tweet below," Robinson wrote on X. Moonshot AI publicly backed Cursor's use of its model, saying the integration aligns with its strategy of supporting open-model ecosystems. The Kimi account also stated that Cursor's use of Kimi was part of an authorized commercial agreement with Fireworks AI.

Transparency and Integrity of Open-Source Models Questioned

This incident has reignited discussions in Silicon Valley regarding the transparency and integrity of using open-source or foreign-developed models in commercial AI systems. Policymakers have warned that China's growing dominance in open-source AI could challenge U.S. leadership and complicate oversight of widely adopted systems, Reuters reported. Industry leaders also voiced unease, with executives cautioning that self-regulation might be insufficient as AI capabilities rapidly expand. China has gone all-in on an open-source approach that has reshaped the competitive landscape, the U.S.-China Economic and Security Review Commission wrote in a report. "Permissive licensing, aggressive pricing and an ecosystem that encourages collaboration are accelerating global uptake of Chinese AI and faster iteration among Chinese labs. While top U.S. models maintain a narrow lead in capabilities, they risk losing not only the race to a global user base but also the ability to set the technical standards and norms that will govern AI development for years to come," the report continued.

[9]

AI coding startup Cursor plans new model to rival Anthropic, OpenAI - The Economic Times

Cursor, a leading artificial intelligence startup for coding, is set to release a more efficient AI model for software development in a bid to keep pace with larger firms like Anthropic PBC and OpenAI. The company on Thursday plans to unveil Composer 2, which is meant to work as an AI agent that carries out lengthy coding tasks on a user's behalf. Anthropic and OpenAI have also introduced more powerful AI models that they say can take on increasingly complicated and time-consuming work writing software. San Francisco-based Cursor launched its first AI coding assistant in 2023 and quickly caught on with professional software developers, leading to a new style of programming known as vibe coding. The company now has more than 1 million daily users, including 50,000 businesses such as payment processing firm Stripe and creative software maker Figma. Cursor has also been in talks to raise a new round of financing at a roughly $50 billion valuation, Bloomberg News reported this month. However, the company faces heated competition from OpenAI, Anthropic and a number of newer startups that offer AI coding assistants designed to field more complex tasks on behalf of the user. Cursor supports a wide range of models, including those from OpenAI and Anthropic, and counts the ChatGPT maker as an investor. Cursor cofounder Aman Sanger, who leads its research team, said the startup focused on training Composer 2 solely on coding-related data, an effort that let it build a smaller model that's meant to be less expensive to use. Unlike other leading AI developers whose tools are used for a wide range of tasks, Cursor's model is designed purely for coding.
"It won't help you do your taxes," Sanger said. "It won't be able to write poems."

[10]

Cursor Launches Composer 2 To Rival OpenAI, Anthropic

Cursor is launching a new AI model for software development that will reportedly rival OpenAI and Anthropic PBC. Composer 2, a third-generation coding model, is expected to exceed Anthropic's Opus 4.6 on several major coding benchmarks, Bloomberg reports. The coding model is designed as an AI agent that can autonomously handle complex, time-consuming coding tasks for users. Sualeh Asif, Aman Sanger, Arvid Lunnemark, and Michael Truell founded Anysphere in 2022. Cursor introduced its AI assistant in 2023, designed to streamline coding and debugging for developers, making their workflow faster and more precise. Anysphere saw extremely fast growth, reaching $100 million in annual recurring revenue (ARR) in January 2025. The company hit a major funding milestone in June 2025, raising $900 million. This round, led by Thrive Capital and with participation from Andreessen Horowitz, Accel, and DST Global, valued the company at approximately $9.9 billion, Bloomberg reported. Cursor is also seeking to raise a new funding round at a valuation of roughly $50 billion, sources told Bloomberg earlier this month. Sources said that plans are still in the early stages and could change. Cursor now serves over 1 million daily users, including 50,000 businesses, such as the payment processing company Stripe Inc. and the creative software platform Figma Inc.


AI coding startup Cursor launched Composer 2, promoting it as frontier-level coding intelligence, but failed to disclose that it was built on Moonshot AI's Kimi K2.5 model. A developer exposed the connection within hours by intercepting API traffic. The incident highlights why Western companies increasingly turn to Chinese open models when competing with larger AI firms.

Cursor Launches Composer 2 Without Disclosing Its Base Model

Cursor, the AI coding startup valued at $29.3 billion, launched Composer 2 this week, promoting it as offering frontier-level coding intelligence for AI-powered software development [1]. The San Francisco-based company, which now serves more than 1 million daily users including major clients like Stripe and Figma, positioned the new AI coding model as evidence that it can compete with larger AI firms like Anthropic and OpenAI [2]. However, the announcement omitted a crucial detail: Composer 2 was built on top of Kimi K2.5, an open-source model from Moonshot AI, a Chinese startup backed by Alibaba, Tencent and HongShan, formerly known as Sequoia China [3].


Developer Exposes Kimi Connection Through API Traffic

A developer named Fynn figured out the connection within hours of the launch. By setting up a local debug proxy server and routing Cursor's API traffic through it, Fynn intercepted the outbound request and found the model ID in plain sight: accounts/anysphere/models/kimi-k2p5-rl-0317-s515-fast [3]. "So composer 2 is just Kimi K2.5 with RL," Fynn wrote on X. "At least rename the model ID." The post accumulated 2.6 million views, and Cursor soon acknowledged the connection it had not disclosed at launch [1].

Cursor's vice president of developer education Lee Robinson confirmed the connection, stating "Yep, Composer 2 started from an open-source base!" He emphasized that only approximately one-fourth of the compute spent on the final model came from the base, with the rest coming from Cursor's own training [1]. Co-founder Aman Sanger, who leads the research team, acknowledged the oversight: "It was a miss to not mention the Kimi base in our blog from the start. We'll fix that for the next model" [1].
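The detail that gave Composer 2 away is mechanical enough to sketch. The Python below assumes the intercepted request body is JSON with a top-level "model" field. That shape is hypothetical, since Cursor's actual request format was not published. The code then splits the value along the accounts/<account>/models/<model> pattern that the reported ID follows, a pattern that appears to resemble Fireworks AI's model resource names. The function names are illustrative.

```python
import json
from typing import Dict, Optional


def extract_model_id(request_body: str) -> Optional[str]:
    """Return the top-level "model" field of a captured JSON request body.

    ASSUMPTION: the intercepted body is JSON with a "model" key; Cursor's
    actual request format was not published.
    """
    try:
        payload = json.loads(request_body)
    except json.JSONDecodeError:
        return None  # not JSON, nothing to extract
    if not isinstance(payload, dict):
        return None
    model = payload.get("model")
    return model if isinstance(model, str) else None


def split_model_path(path: str) -> Dict[str, str]:
    """Split an 'accounts/<account>/models/<model>' path into its parts."""
    parts = path.split("/")
    if len(parts) != 4 or parts[0] != "accounts" or parts[2] != "models":
        raise ValueError(f"unexpected model path: {path!r}")
    return {"account": parts[1], "model": parts[3]}


if __name__ == "__main__":
    # Hypothetical captured body containing the model ID reported in the article.
    body = json.dumps({
        "model": "accounts/anysphere/models/kimi-k2p5-rl-0317-s515-fast",
        "messages": [],
    })
    model_id = extract_model_id(body)
    if model_id is not None:
        info = split_model_path(model_id)
        print(info["account"])           # account segment of the path
        print(info["model"].split("-"))  # hyphen-separated name segments
```

Splitting the model name on hyphens yields segments such as "kimi", "k2p5" and "rl". Fynn read these as Kimi K2.5 plus reinforcement learning; the meaning of the remaining segments was not explained publicly.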


Why Cursor Built on Moonshot AI's Kimi Over Western Alternatives

The decision to build on Kimi K2.5 reveals significant gaps in Western open-source AI options. Kimi K2.5 is a 1-trillion-parameter Mixture-of-Experts model with 32 billion active parameters, a 256,000-token context window, and native image and video support [3]. It is released under a modified MIT license that permits commercial use and has proved competitive on agentic benchmarks; according to Moonshot, Cursor's use came through an authorized commercial partnership with Fireworks AI [1].

When AI coding startups need strong open models for continued pretraining and reinforcement learning on agentic coding tasks, Western options have been surprisingly limited. Meta's Llama 4 Behemoth has been indefinitely delayed, with no public release date as of March 2026. Google's Gemma 3 family tops out at 27 billion parameters, which is insufficient for frontier-class coding agents. OpenAI released the gpt-oss family in August 2025, but its largest, 120-billion-parameter variant activates only 5.1 billion parameters per token, compared with Kimi K2.5's 32 billion active parameters [3]. For programming-optimized models handling complex, multi-step autonomous programming tasks across a large context window, that difference in per-token capacity matters significantly.
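The active-parameter comparison comes down to simple ratios. The sketch below uses only the figures quoted above and treats 120 billion as the nominal size of the gpt-oss variant. It also applies a common approximation, about 2 FLOPs per active parameter per token for a forward pass, which is a rule of thumb rather than a vendor-published figure. The dictionary and function names are illustrative.

```python
# Parameter counts as quoted in the text; "120B" is the nominal size of
# OpenAI's largest gpt-oss variant. FLOPs use a common rule of thumb
# (about 2 FLOPs per active parameter per token for a forward pass),
# which is an approximation, not a vendor-published figure.

MODELS = {
    "Kimi K2.5": {"total": 1e12, "active": 32e9},
    "gpt-oss (120B variant)": {"total": 120e9, "active": 5.1e9},
}


def active_fraction(total: float, active: float) -> float:
    """Share of parameters used for each token in a mixture-of-experts model."""
    return active / total


def forward_flops_per_token(active: float) -> float:
    """Approximate forward-pass FLOPs per token (~2 x active parameters)."""
    return 2 * active


if __name__ == "__main__":
    for name, p in MODELS.items():
        frac = active_fraction(p["total"], p["active"])
        gflops = forward_flops_per_token(p["active"]) / 1e9
        print(f"{name}: {frac:.1%} active, ~{gflops:.0f} GFLOPs/token")
    ratio = MODELS["Kimi K2.5"]["active"] / MODELS["gpt-oss (120B variant)"]["active"]
    print(f"active-parameter ratio: {ratio:.1f}x")
```

Both models activate only a small share of their weights per token, roughly 3% for Kimi K2.5 and 4% for the gpt-oss variant. The gap the text points to is therefore in absolute active parameters, where Kimi K2.5 uses about 6.3 times as many.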

Composer 2 Performance and Pricing for Agentic Coding Tasks

Despite the controversy, Composer 2 shows substantial improvements over its predecessor on the benchmarks Cursor reported. The AI coding model scored 61.3 on CursorBench, 61.7 on Terminal-Bench 2.0, and 73.7 on SWE-bench Multilingual, compared with Composer 1.5's scores of 44.2, 47.9, and 65.9 respectively [4]. On Terminal-Bench 2.0, Composer 2 outperformed Claude Opus 4.6's score of 58.0, though it still trails GPT-5.4's leading score of 75.1 [4].

The standard edition costs $0.50 per million input tokens and $2.50 per million output tokens, an 86% reduction from Composer 1.5's pricing of $3.50 and $17.50 [4]. Aman Sanger explained that training Composer 2 solely on coding-related data enabled the company to build a smaller, more cost-efficient model [2].
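The 86% figure can be checked directly from the quoted prices. The sketch below uses the per-million-token prices given above. The example job size of 2 million input tokens and 200,000 output tokens is a hypothetical workload, not a Cursor figure.

```python
# Prices in US dollars per million tokens, as quoted in the text.
COMPOSER_1_5 = {"input": 3.50, "output": 17.50}
COMPOSER_2 = {"input": 0.50, "output": 2.50}


def percent_reduction(old: float, new: float) -> float:
    """Percentage drop from an old price to a new one."""
    return (old - new) / old * 100


def job_cost(prices: dict, input_tokens: int, output_tokens: int) -> float:
    """Dollar cost of a job with the given input/output token counts."""
    return (prices["input"] * input_tokens
            + prices["output"] * output_tokens) / 1_000_000


if __name__ == "__main__":
    for kind in ("input", "output"):
        drop = percent_reduction(COMPOSER_1_5[kind], COMPOSER_2[kind])
        print(f"{kind}: {drop:.1f}% cheaper")
    # Hypothetical agentic job: 2M input tokens, 200k output tokens.
    old = job_cost(COMPOSER_1_5, 2_000_000, 200_000)
    new = job_cost(COMPOSER_2, 2_000_000, 200_000)
    print(f"Composer 1.5: ${old:.2f}, Composer 2: ${new:.2f}")
```

Both prices fall by the same factor, since 0.50/3.50 and 2.50/17.50 each equal exactly 1/7. The roughly 86% saving therefore holds for any mix of input and output tokens, and the hypothetical job drops from $10.50 to $1.50.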

The model supports a 200,000-token context window and uses self-summarization techniques to compress information during long-horizon coding tasks.

Implications for Silicon Valley and the AI Arms Race

The revelation carries particular weight given the current geopolitical climate surrounding AI development. Building on a Chinese model may feel especially fraught at a time when the AI arms race is often framed as an existential battle between the United States and China [1]. This follows the industry's apparent panic after DeepSeek released a competitive model in early 2025. For a company that raised a $2.3 billion round at a $29.3 billion valuation and reportedly exceeds $2 billion in annualized revenue, the lack of upfront disclosure raises questions about transparency in an industry increasingly shaped by national security concerns [1].

The incident suggests that Western AI companies may continue reaching for Chinese foundation models until domestic alternatives match their capabilities for specialized applications. Cursor is now in talks to raise financing at a roughly $50 billion valuation, making its technology choices and disclosure practices increasingly significant for investors and enterprise customers evaluating long-term partnerships [2]. As the company integrates Composer 2 into its agentic AI coding environment, with access to semantic code search, file operations, shell commands, and browser control, developers must weigh performance gains against the model's origins and what they signal about the state of Western open-source AI infrastructure [4].


References

Summarized by Navi

[1]

[3]

[4]
