Anthropic restricts Mythos AI model release, citing unprecedented cybersecurity capabilities


[1]

Anthropic limits access to Mythos, its new cybersecurity AI model

Anthropic has launched a new cybersecurity AI model to a select group of customers, including Amazon, Apple, and Microsoft, days after details about the project were leaked online. Its new model, Claude Mythos Preview, would be available only to vetted organisations, including Broadcom, Cisco, and CrowdStrike, Anthropic said on Tuesday. The company added that it was also in discussions with the US government about its use.
The announcement follows a data leak by the San Francisco start-up last month, when descriptions of the Mythos model and other documents were discovered in a publicly accessible data cache. Last week, Anthropic suffered a second incident, in which the internal source code for its coding assistant, Claude Code, was made public. The cases caused concerns over Anthropic's data vulnerabilities and security practices. In both instances, the company said "human error" was responsible for the data being made public.
Mythos has been in use with partners for several weeks. Although it is a "general purpose" model with wider capabilities, this is the first time the company has limited the release of a model because of its cyber security capabilities. Anthropic said the software can identify cyber vulnerabilities at a scale beyond human capacity, but it could also develop ways to exploit these vulnerabilities, which bad actors could use. The company said the model could "reshape" cyber security practices and does not plan a broad release.
"We believe technologies like this are powerful enough to do a lot of really beneficial good but also potentially bad if they land in the wrong hands," said Dianne Na Penn, head of product management, research at Anthropic, adding that selected companies would "get a head start on being able to secure vulnerabilities and detect code at a scale they couldn't have done before."
In recent weeks, Mythos has identified thousands of so-called zero-day -- previously undiscovered -- vulnerabilities and other security flaws, many of which are critical and have persisted for a decade or more. In one example, it found a 16-year-old flaw in widely used video software, in a line of code that automated testing tools had executed 5 million times without detecting the issue.
However, the model also displayed problems during testing. At one point, Anthropic found that it had escaped its so-called sandbox environment -- designed to prevent it from accessing the internet -- and posted details of its workaround online. Anthropic acknowledged that the model had demonstrated "a potentially dangerous capability for circumventing [the company's] safeguards." Sam Bowman, a technical researcher at Anthropic, said the "scariest behaviors" were from "earlier versions" of the model. The current iteration was "less likely" to leak information, although it was still "at least as capable of doing things like working around sandboxes," he added.
Anthropic has also been in ongoing discussions with US government officials about Claude Mythos. In February, the FT reported that the Pentagon was seeking to use AI tools in cyber operations to identify infrastructure targets belonging to adversaries such as China. Those talks have taken place despite Anthropic's row with the US Defense Department in recent weeks. A US court has temporarily blocked the Pentagon's effort to label the start-up a supply-chain risk, while President Donald Trump has criticised Anthropic as "leftwing nut jobs" after the company refused to shift its "red lines" on the use of its technology in war fighting.
Anthropic is committing up to $100 million in usage credits to subsidize the use of its model by organizations in the project, which will provide feedback on their findings. It will also donate $4 million to open-source security groups to help secure open-source software, which often carries higher cyber risk.

[2]

Is Anthropic limiting the release of Mythos to protect the internet -- or Anthropic? | TechCrunch

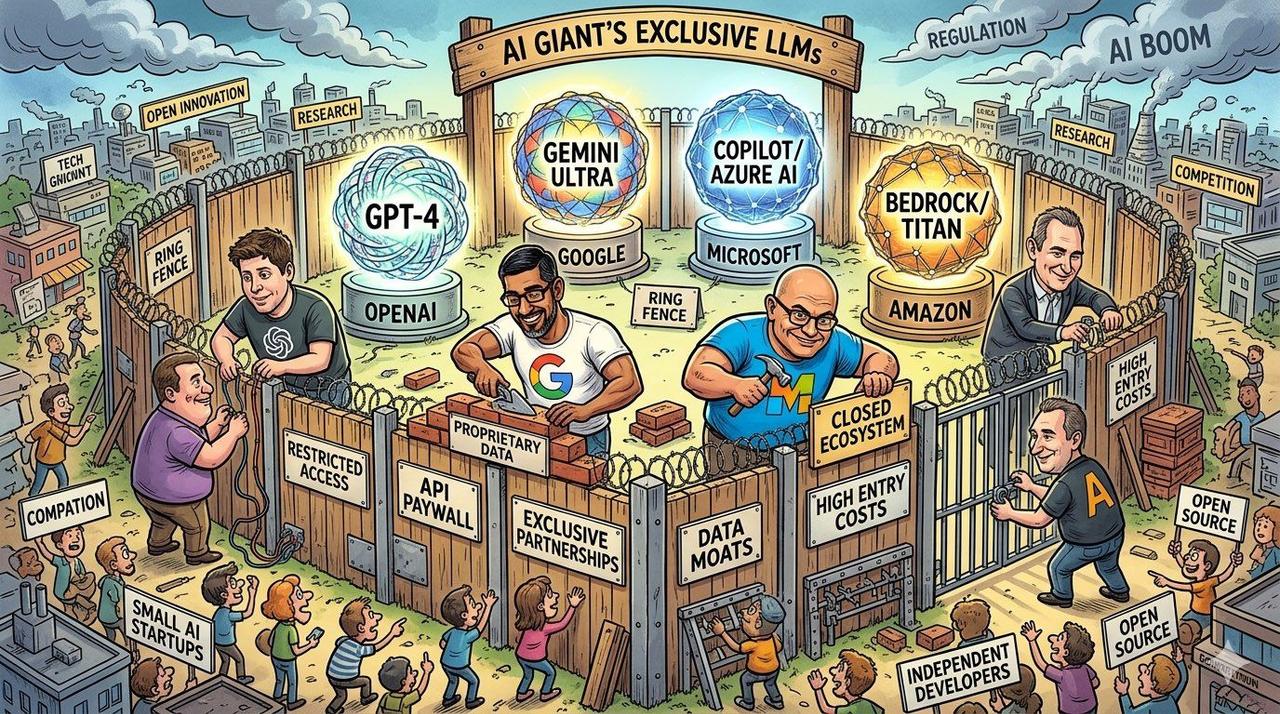

Anthropic said this week that it limited the release of its newest model, dubbed Mythos, because it is too capable of finding security exploits in software relied upon by users around the world. Instead of unleashing Mythos on the public, the frontier lab will share it with a group of large companies and organizations that operate critical online infrastructure, from Amazon Web Services to JPMorgan Chase. OpenAI is reportedly considering a similar plan for its next cybersecurity tool.
The ostensible idea is to let these big enterprises get ahead of bad actors who could leverage advanced LLMs to penetrate secure software. But the "e"-word in the previous sentence hints that there might be more to this release strategy than cybersecurity -- or the hyping of model capabilities.
Dan Lahav, the CEO of the AI cybersecurity lab Irregular, told TechCrunch in March, before the release of Mythos, that while the discovery of vulnerabilities by AI tools matters, the value of any given weakness to an attacker depends on many factors, including how it can be used in combination with others. "The question I always have in my mind," Lahav said, "is did they find something that is exploitable in a very meaningful way, whether individually, or as part of a chain?"
Anthropic says Mythos is able to exploit vulnerabilities far more effectively than its previous model, Opus. But it's not clear that Mythos is actually the be-all, end-all of cybersecurity models. Aisle, an AI cybersecurity startup, said it was able to replicate much of what Anthropic says Mythos accomplished using smaller, open-weight models. Aisle's team argues that these results show there is no single best deep learning model for cybersecurity; instead, the right choice depends on the task at hand.
Given that Opus was already seen as a game-changer for cybersecurity, there's another reason that frontier labs may want to limit their releases to big organizations: It creates a flywheel for big enterprise contracts, while making it harder for competitors to copy their models using distillation, a technique that uses frontier models to train new LLMs on the cheap.
"This is marketing cover for [the] fact that top-end models are now gated by enterprise agreements and no longer available to small labs to distill," David Crawshaw, a software engineer and CEO of the startup exe.dev, suggested in a social media post. "By the time you and I can use Mythos, there will be a new top-end rev that is enterprise only. That treadmill helps keep the enterprise dollars flowing (which is most of the dollars) by relegating distillation companies to second rank," said Crawshaw.
That analysis jibes with what we're seeing in the AI ecosystem: a race between frontier labs developing the largest, most capable models, and companies like Aisle, which rely on multiple models and see open-source LLMs, often from China and often allegedly developed through distillation, as a path to economic advantage. The frontier labs have been taking a harder line on distillation this year, with Anthropic publicly revealing what it says are attempts by Chinese firms to copy its models, and three leading labs -- Anthropic, Google and OpenAI -- teaming up to identify distillers and block them, according to a Bloomberg report.
Distillation is a threat to the business model of frontier labs because it erodes the advantage they gain by spending huge amounts of capital to scale. Blocking distillation, then, is already a worthwhile endeavor, but the selective release approach to doing so also gives the labs a way to differentiate their enterprise offerings as the enterprise market becomes the key to profitable deployment.
Whether Mythos or any new model truly threatens the security of the internet remains to be seen, and a careful roll-out of the technology is a responsible way forward. Anthropic didn't respond by press time to our questions about whether the decision also relates to distillation concerns, but the company may have found a clever approach to protecting the internet -- and its bottom line.

[3]

Anthropic's Mythos Will Force a Cybersecurity Reckoning -- Just Not the One You Think

Anthropic said this week that the debut of its new Claude Mythos Preview model marks a critical juncture in the evolution of cybersecurity, representing an unprecedented existential threat to existing software defense strategies. So, is it more AI hype -- or a true turning point? According to Anthropic, Mythos Preview crosses a threshold of capabilities to discover vulnerabilities in virtually any and every operating system, browser, or other software product and autonomously develop working exploits for hacking. With this in mind, the company is only releasing the new model to a few dozen organizations for now -- including Microsoft, Apple, Google, and the Linux Foundation -- as part of a consortium dubbed Project Glasswing. But after years of speculation about how generative AI could impact cybersecurity, the news this week ignited controversy about whether a reckoning has really arrived and what it might look like in practice. Some are extremely skeptical of Anthropic's claims. They argue that existing AI agents can already help users find and exploit vulnerabilities much more easily and cheaply than ever before, and that this reality is fueling refinements in how companies discover and patch their software without fundamentally changing the paradigm. And then there's the ick factor that Anthropic will almost certainly benefit financially from positioning its latest model as mysterious, uniquely powerful, and exclusive. Other researchers and practitioners, though, say that they agree with Anthropic's assessment and point out that the company has said Mythos Preview is just the first to achieve capabilities that will ultimately be widely available in other models. "I typically am very skeptical of these things, and the open source community tends to be very skeptical, but I do fundamentally feel like this is a real threat," says Alex Zenla, chief technology officer of cloud security firm Edera. 
Zenla and others specifically point to one Mythos Preview capability as the pivot point. Generative AI, they say, is now getting more capable at identifying and developing what are known as "exploit chains," or groups of vulnerabilities that can be exploited in sequence to deeply compromise a target -- essentially Rube Goldberg-machine-style hacking. Many of the most sophisticated hacking techniques employ exploit chains, including so-called zero-click attacks that compromise a system without requiring any interaction from a user. "We are already living in the world where companies run vulnerable software, vulnerable hardware, and struggle to patch. Many companies are not capable of securing their infrastructure -- that hasn't really changed from yesterday to today," says longtime security engineer and researcher Niels Provos. "But from what I understand, Mythos is really good at coming up with multistage vulnerabilities, and then also provides the proof of exploitation. I don't think it intrinsically changes the problem space, but it changes the required skill level to find these vulnerabilities and exploit them." A limited release of Mythos Preview to Project Glasswing participants only gives defenders a small lead time to find weaknesses in their own systems using the model and start to grapple more broadly with how software development, update cycles, and patch adoption need to change before attackers have widespread access to such capabilities themselves. Industry leaders seem to be heeding the warning. Anthropic's frontier red team lead, Logan Graham, told WIRED on Tuesday that as the company reached out to organizations about Project Glasswing ahead of this week's announcement, the phone calls got shorter and shorter because the potential threat was becoming more obvious. "This is an issue that involves all of the model developers. Our goal here is just to kick things off," Graham said.
"It's really important that Mythos Preview gets in the hands of defenders to give a head start."

[4]

Anthropic debuts preview of powerful new AI model Mythos in new cybersecurity initiative | TechCrunch

Anthropic on Tuesday released a preview of its new frontier model, Mythos, which it says will be used by a small coterie of partner organizations for cybersecurity work. In a previously leaked memo, the AI startup called the model one of its "most powerful" yet. The model's limited debut is part of a new security initiative, dubbed Project Glasswing, in which more than 40 partner organizations will deploy the model for the purposes of "defensive security work" and to secure critical software, Anthropic said. While it was not specifically trained for cybersecurity work, the preview will be used to scan both first-party and open-source software systems for code vulnerabilities, the company said. Anthropic claims that, over the past few weeks, Mythos identified "thousands of zero-day vulnerabilities, many of them critical." Many of the vulnerabilities are one to two decades old, the company added. Mythos is a general-purpose model for Anthropic's Claude AI systems that the company claims has strong agentic coding and reasoning skills. Anthropic's frontier models are considered its most sophisticated and high-performance models, designed for more complex tasks, including agent-building and coding. The partner organizations previewing Mythos include Amazon, Apple, Broadcom, Cisco, CrowdStrike, the Linux Foundation, Microsoft, and Palo Alto Networks. As part of the initiative, these partners will ultimately share what they've learned from using the model so that the rest of the tech industry can benefit from it. The preview is not going to be made generally available, Anthropic said. 
Anthropic also claims that it has engaged in "ongoing discussions" with federal officials about the use of Mythos, although one would have to imagine that those discussions are complicated by the fact that Anthropic and the Trump administration are currently locked in a legal battle after the Pentagon labeled the AI lab a supply-chain risk over Anthropic's refusal to allow its technology to be used for autonomous targeting or for surveillance of U.S. citizens.
News of Mythos was originally leaked in a data security incident reported last month by Fortune. A draft blog post about the model (then called "Capybara") was left in an unsecured cache of documents available on a publicly inspectable data lake. The leak, which Anthropic subsequently attributed to "human error," was originally spotted by security researchers. "'Capybara' is a new name for a new tier of model: larger and more intelligent than our Opus models -- which were, until now, our most powerful," the leaked document said, adding later that it was "by far the most powerful AI model we've ever developed," according to the report. In the leak, Anthropic claimed that its new model far exceeded its currently public models in areas like "software coding, academic reasoning, and cybersecurity," and that it could potentially pose a cybersecurity threat if weaponized by bad actors to find bugs and exploit them (rather than fix them, which is how Mythos will be deployed).
Last month, the company accidentally exposed nearly 2,000 source code files and over half a million lines of code through a mistake it made in the launch of version 2.1.88 of its Claude Code software package. The company then accidentally caused thousands of code repositories on GitHub to be taken down as it attempted to clean up the mess.

[5]

Anthropic Says Its New AI Model Is So Good at Finding Security Risks, You Can't Use It

With its new Claude Mythos Preview model, the company is pulling together tech giants for a new cybersecurity consortium, Project Glasswing.
AI developer Anthropic says its newest Claude artificial intelligence model is so good at finding cybersecurity vulnerabilities that it's not releasable to the public. The company is instead providing the tool to big tech infrastructure providers so they can patch the flaws it finds. In late March, word began to leak that Anthropic's latest AI model, dubbed Claude Mythos, was going to be a leap forward for the company's AI technology. Now, the company has previewed the model and warned that Mythos poses a major cybersecurity threat because it is far better at finding and exploiting online security vulnerabilities. "AI models have reached a level of coding capability where they can surpass all but the most skilled humans at finding and exploiting software vulnerabilities," the company said in a blog post Tuesday. Anthropic said Mythos Preview, which has not been released to the public, has already found what it says are thousands of severe security vulnerabilities "in every major operating system and web browser." Asked for comment, a representative for Anthropic directed CNET to the company's blog post. To address the cybersecurity risks, Anthropic said it's launching a consortium called Project Glasswing that includes Apple, Amazon Web Services, Broadcom, Cisco, CrowdStrike, Google, JPMorgan Chase, the Linux Foundation, Microsoft, Nvidia and Palo Alto Networks. Anthropic said those organizations and more than 40 others will have access to Mythos in order to start the work of shoring up defenses against AI attacks and exploits. It's committing $100 million in usage credits for Mythos and $4 million in donations to open-source security organizations.
"The dangers of getting this wrong are obvious, but if we get it right, there is a real opportunity to create a fundamentally more secure internet and world than we had before the advent of AI-powered cyber capabilities," Anthropic CEO Dario Amodei posted on X. In a video posted to YouTube about Project Glasswing, leaders from companies including Microsoft, the Linux Foundation and Anthropic discussed the damage that software vulnerabilities can cause. Large cloud computing companies have already been working with the new model to find vulnerabilities. "What we have found has been illuminating," Anthony Grieco, chief security and trust officer at Cisco, wrote in a blog post. "Now the real work begins. AI-powered analysis uncovers data at a scale and depth that legacy frameworks were not designed to accommodate." Amazon Web Services said the model has already found ways to strengthen code even in its most well-tested systems. Amy Herzog, vice president and chief information security officer at AWS, called Claude Mythos Preview a "step-change in reasoning and AI capabilities for cybersecurity." Sen. Mark Warner praised the initiative in a statement. "I applaud these leading companies for recognizing this threat and proactively sharing information, capabilities and computing capacity to better protect our critical infrastructure," the Virginia Democrat said. "As AI dramatically accelerates the discovery of new vulnerabilities, I hope industry will correspondingly accelerate and reprioritize patching." Warner, whose state is a hotbed of AI data centers, recently called a proposed moratorium on data center construction "idiocy," but has also warned about the risks to society posed by rapid AI development leading to massive job losses.

[6]

Anthropic Teams Up With Its Rivals to Keep AI From Hacking Everything

Following leaked revelations at the end of March that Anthropic had developed a powerful new Claude model, the company formally announced Mythos Preview on Tuesday along with news of an industry consortium it has convened, known as Project Glasswing, to grapple with the cybersecurity implications of the new model and advancing capabilities more generally across the AI field. The group includes Microsoft, Apple, and Google as well as Amazon Web Services, the Linux Foundation, Cisco, Nvidia, Broadcom, and more than 40 other tech, cybersecurity, critical infrastructure, and financial organizations that will have private access to the model, which is not yet being generally released. The idea, in part, is simply to give the developers of the world's foundational tech platforms time to turn Mythos Preview on their own systems so they can mitigate vulnerabilities and exploit chains that the model develops in simulated attacks. More broadly, Anthropic emphasizes that the effort is meant to kickstart urgent exploration of how AI capabilities across the industry are, in the company's view, on the precipice of upending current software security and digital defense practices around the world. "The real message is that this is not about the model or Anthropic," Logan Graham, the company's frontier red team lead, tells WIRED. "We need to prepare now for a world where these capabilities are broadly available in 6, 12, 24 months. Many things would be different about security. Many of the assumptions that we've built the modern security paradigms on might break." Models developed and trained by multiple companies have increasingly been able to find vulnerabilities in code and propose mitigations -- or strategies for exploitation. This creates a new generation of security's classic cat-and-mouse game in which a tool can aid defenders but can also fuel bad actors and make it easier to carry out attacks that were once too expensive or complex to be practical.
"Claude Mythos Preview is a particularly big jump," Anthropic CEO Dario Amodei said on Tuesday in a Project Glasswing launch video. "We haven't trained it specifically to be good at cyber. We trained it to be good at code, but as a side effect of being good at code, it's also good at cyber." He adds in the video that "more powerful models are going to come from us and from others. And so we do need a plan to respond to this." Anthropic's Graham notes that in addition to vulnerability discovery -- including producing potential attack chains and proofs of concept -- Mythos Preview is capable of more advanced exploit development, penetration testing, endpoint security assessment, hunting for system misconfigurations, and evaluating software binaries without access to their source code. In carrying out a staggered release of Mythos Preview, beginning with an industry collaboration phase, Graham says that Anthropic sought to draw on tenets of coordinated vulnerability disclosure, the process of giving developers time to patch a bug before it is publicly discussed. "We've seen Mythos Preview accomplish things that a senior security researcher would be able to accomplish," Graham says. "This has very big implications then for how capabilities like this should be released. Done not carefully, this could be a meaningful accelerant for attackers." Project Glasswing partners, including some of Anthropic's competitors, struck a collaborative tone in statements as part of the launch. "Google is pleased to see this cross-industry cybersecurity initiative coming together," Heather Adkins, Google's vice president of security engineering, says in a statement. "We have long believed that AI poses new challenges and opens new opportunities in cyber defense."

[7]

Project Glasswing Shows That AI Will Break The Vulnerability Management Playbook

Anthropic, along with 11 other companies, recently announced Project Glasswing, an initiative that aims to secure software in the wake of advances in AI capabilities, most notably Anthropic's Claude Mythos Preview frontier model. Project Glasswing is made up of a who's who of tech companies, cybersecurity vendors, and others: Amazon Web Services (AWS), Anthropic, Apple, Broadcom, Cisco, CrowdStrike, Google, JPMorganChase, the Linux Foundation, Microsoft, NVIDIA, and Palo Alto Networks. The project's stated goal is "to secure the world's most critical software." This effort began after Anthropic published its claims that the Claude Mythos Preview model can find previously unknown zero-day vulnerabilities in software in record time, exceeding the efforts of current scanners and other technologies. Recognizing the potential for good - and evil - uses of this capability, Anthropic assembled a coalition to use the model to find and fix problems before adversaries can exploit them.
If true (and we have little reason to doubt the veracity of the claims), this will break the vulnerability management playbook - and perhaps the cybersecurity approaches of today. It will force organizations to drastically rethink their approaches to vulnerability management and patching, moving from today's often-glacial pace to something much, much faster. With the current CVE ecosystem already running on fumes, Glasswing sets the stage for a potential new vulnerability discovery and cataloguing system closed and controlled by approved partners and software maintainers. This will disrupt the way signature-based network and application vulnerability scanners fundamentally operate, giving way to AI-based tools.
From Breakthroughs, To Breakdowns
The technical breakthroughs promised by Claude Mythos Preview give security pros the opportunity to discover vulnerabilities - and attackers the ability to exploit them - at unprecedented speed and scale. The real work begins once those vulnerabilities are known. Then, organizations will have to quickly test and patch systems at a speed today's processes won't support. Organizations will face challenges like:
* The vulnerability discovery and remediation pipeline you know is no more. Zero-day discovery at this scale pushes us out of today's CVE disclosure process and creates a need to reindustrialize it. Patch Tuesday will no longer be marked on the calendar. A 30-day waiting period for patching won't be acceptable in an environment where attackers can go from discovery to exploit in minutes.
* Tech debt will continue to haunt us. Like the COBOL crisis brought on by Year 2000 projects, vulns found in aging OSes and systems will require the knowledge of folks who built those systems decades ago. Claude Code and other AI coding tools are good at writing greenfield software but may not be as effective at patching ancient code without breaking things.
* Discovery accelerates but inventory lags behind reality. Many organizations still do not have an accurate, continuously updated inventory of what they run, where it runs, and how it is built. AI-driven disclosure cycles will outrun your ability to identify exposure. Static asset inventories fail when discovery and patching happen continuously.
* Autonomous remediation is required but is still emerging. Anthropic did not specify the remediation motion in its announcement. It also did not highlight how Claude Mythos Preview can help write patches, and instead referred to patch development advances in Opus 4.6. Regardless of the model used, the LLM needs context about the code, the flaw, and guidance on fixing it - context that exists in silos and still requires human insight. AI code-fix agents that can handle any input, beyond what scanners output, are still emerging. Enterprises should continue experimenting with AI coding agents and prepare to expand that capability in production.
* The economics still do not favor CISO budgets. CISOs will need to choose to either 1) run these models themselves and pay the same or more in tokens (provided they're given access), 2) use a pen test provider that will run the same models and pass on the costs of the tokens to customers (provided they're given access), or 3) select a non-AI-led pen test that will miss bugs only AI can discover (in cases where access to these models is prohibited or too expensive). None of these are ideal scenarios.
* Adversaries will (obviously) use this capability to their advantage. Technical leaps forward are double-edged. They introduce plenty of opportunities for defenders but can also be a boon to adversaries. As patches are released, attackers will be able to reverse engineer them to create exploits at scale. Organizations that are slow to patch and remediate will be vulnerable to attackers using automated capabilities to exploit them. Adversaries may also develop or acquire their own models that rival Claude Mythos Preview's capabilities, giving them powerful tools for finding and exploiting known and unknown vulnerabilities.
What Security Teams Should Do Now
If organizations do not take advantage of this new model and the automation between discovery and patching, they will fall behind in vulnerability patching efforts. Attackers will exploit that gap, and security teams have to be ready. Forrester recommends that security pros:
* Use this announcement as a forcing function. Cybersecurity often requires a compelling event to demonstrate that risk is real. The speed at which these capabilities are moving doesn't give security pros the luxury of waiting. Act now and educate your stakeholders about why changing your vulnerability identification and remediation process is an imperative - now. Don't wait. Don't pass go. Do it now.
* Automate regression testing. Make the case to automate regression tests for your most critical applications, even legacy ones, where going offline would have a significant impact on the business. Where the code is no longer available, determine what controls would be necessary to prevent an attack.
* Base proactive and application security on decisions, not findings. AI should support prioritization, clustering, and impact analysis as much as discovery. Your proactive security approach needs to be remediation-centric, not one that lists CVE after CVE. Modern proactive security programs incorporate attack path modeling, reachability of exploits (including efficacy testing of existing and temporary compensating controls), and the exploit's impact. Use these insights to conduct choke point analyses that identify where a patch or control must be implemented and the steps each stakeholder must take, and use the results as your playbook.
* Make SBOMs table stakes, not compliance artifacts. As vulnerabilities are found in open-source software and OSes, SBOMs become critical to understanding what vulnerable software may exist in your environment and where vulnerable open-source and third-party software is deployed. Without usable SBOMs, fixes arrive faster than organizations can map impact.
* Use the home field advantage. Security engineers must decide what to fix first based on reachability, exploitability, blast radius, and business impact - not merely the presence of a vulnerability. This is the security team's advantage versus weaponized exploits. You're on your home field. While Mythos, and future AI-led exploit discovery models, can objectively detect zero days and write exploits, they do so without knowledge of your control environment and what is most important to your organization.
* Implement compensating controls as a short-term Band-Aid. Security teams must introduce controls like virtual patching in WAFs, automated detection and response, and containment of assets that exceed risk thresholds as temporary measures while they wait for remediations to be completed. Apply Zero Trust principles to segment applications on the network or, in the worst case, take the application offline.
The cybersecurity vendors will respond predictably. Every vendor will claim AI-powered zero-day discovery capabilities. Much of it will be faster automation relabeled as innovation. Practitioners should ignore the acronyms and ask harder questions like:
* Does this help us understand exposure faster than attackers can weaponize fixes?
* Does it help us decide what to patch first?
* Does it reduce uncertainty, or just increase backlogs?
The limiting factor in security is no longer the ability and knowledge to find problems. It is the ability to absorb, prioritize, and act on them before adversaries do. AI is making this painfully clear. More insight does not automatically mean better security.
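The Forrester recommendations to base security on decisions rather than findings, and to rank fixes by reachability, exploitability, blast radius, and business impact, amount to a triage rule. The Python sketch below illustrates one possible way to express that rule. It is a hypothetical example, not Forrester's method: the `Finding` fields, the 0-to-1 scales, and the multiplicative score are all assumptions chosen for illustration.

```python
from dataclasses import dataclass


@dataclass
class Finding:
    """A hypothetical vulnerability finding; field names and scales are illustrative assumptions."""
    id: str
    reachable: bool         # can an attacker-facing entry point reach the vulnerable code?
    exploitability: float   # 0.0-1.0, e.g. 1.0 if a working exploit exists
    blast_radius: float     # 0.0-1.0, share of systems or data exposed if exploited
    business_impact: float  # 0.0-1.0, importance of the affected asset to the business


def priority(f: Finding) -> float:
    """Score a finding; an unreachable finding scores zero regardless of severity."""
    if not f.reachable:
        return 0.0
    # Multiplicative (assumed) so that any near-zero factor pulls the whole score down.
    return f.exploitability * f.blast_radius * f.business_impact


def triage(findings: list[Finding]) -> list[Finding]:
    """Drop unreachable findings and order the rest from highest to lowest priority."""
    reachable = [f for f in findings if f.reachable]
    return sorted(reachable, key=priority, reverse=True)


if __name__ == "__main__":
    # Hypothetical backlog; the IDs and scores are made up for illustration.
    backlog = [
        Finding("VULN-A", reachable=True, exploitability=0.9, blast_radius=0.8, business_impact=0.9),
        Finding("VULN-B", reachable=False, exploitability=1.0, blast_radius=1.0, business_impact=1.0),
        Finding("VULN-C", reachable=True, exploitability=0.5, blast_radius=0.4, business_impact=0.3),
    ]
    for f in triage(backlog):
        print(f.id, round(priority(f), 3))
```

Run as a script, the sample backlog lists VULN-A ahead of VULN-C and omits VULN-B, which scores highest on every other factor but is unreachable. A multiplicative score is only one design choice; a weighted sum would instead let one strong factor compensate for a weak one.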

[8]

Anthropic is launching a new AI model for cybersecurity

Anthropic is debuting a new AI model as part of a cybersecurity partnership with Nvidia, Google, Amazon Web Services, Apple, Microsoft, and other companies. Project Glasswing, as it's called, is billed as a way for large companies, and potentially even the government, to flag vulnerabilities in their systems with virtually no human intervention. Anthropic is offering its launch partners access to Claude Mythos Preview, a new general-purpose model that it's not currently planning to publicly release due to security concerns. Newton Cheng, the cyber lead for Anthropic's frontier red team, told The Verge that the model will ideally give cyber defenders a "head start" against adversaries. The partners will use the model to analyze their systems, spot high-stakes vulnerabilities, and help patch them. Access is restricted to keep those same adversaries from using it to find weak points and conduct attacks. Though Claude Mythos Preview wasn't specifically trained for cybersecurity purposes, Anthropic said in a release that the model's "strong agentic coding and reasoning skills" are behind its cybersecurity advances. Cheng declined to share specific details of the model's cybersecurity successes, but Anthropic's blog post said that in recent weeks, Mythos Preview has flagged "thousands of high-severity vulnerabilities, including some in every major operating system and web browser." Anthropic's blog post doesn't mention keeping humans in the loop for the model's cybersecurity sweeps; in fact, it highlights that the model identified vulnerabilities "and develop[ed] many related exploits -- entirely autonomously, without any human steering." Claude Mythos Preview's existence was first reported last month in a data leak, which Anthropic attributes to human error.
Dianne Penn, a head of product management at Anthropic, told The Verge in an interview that the company is "taking steps in terms of solidifying our processes ... That was not related to software vulnerabilities in any way." Mythos Preview will be privately available to the company's Glasswing partners, which also include JPMorgan Chase, Broadcom, Cisco, CrowdStrike, the Linux Foundation, and Palo Alto Networks, plus about 40 other organizations that maintain or build software infrastructure. For now, Anthropic will help subsidize the cost of using it. Cheng said the company will commit up to $100 million in usage credits, plus $4 million in direct donations to the Linux Foundation and the Apache Software Foundation. In the long term, as Anthropic and other AI companies face pressure to turn a profit, the program could evolve into a paid service that provides a new revenue stream, if it works well enough for companies to keep using it. Despite its recent, highly public clash with the Trump administration, Anthropic also said in the release that it has been in "ongoing discussions with US government officials about Claude Mythos Preview and its offensive and defensive cyber capabilities." When The Verge asked what that meant, Penn confirmed that the company had "briefed senior officials in the US government about Mythos and what it can do," and that the company is still "committed to working closely with all different levels of government." Cheng said Anthropic is "engaged with" the government but declined to say exactly whom the company had briefed.

[9]

Apple, Google, and Microsoft join Anthropic's Project Glasswing to defend world's most critical software

AI found thousands of hidden bugs in critical systems.
Tech rivals unite to secure shared infrastructure risks.
Cyberattack timelines shrink from months to minutes.

Today, a group of the world's biggest tech companies is announcing what is essentially an AI-driven cybersecurity Manhattan Project. As the Cyberwarfare Advisor for the International Association of Counterterrorism & Security Professionals and part of the FBI's InfraGard Artificial Intelligence Threat and Mitigation Cross-Sector Council, I've spent decades profiling global threats, from lecturing at the National Defense University to leading nationwide cyberattack simulations. But the arrival of a new frontier AI from Anthropic represents a paradigm shift that even the most prepared infrastructure specialists are scrambling to navigate. There is a lot to unpack from this announcement, but before I go into the published details, I'm going to try to read between the lines. That's because the mere existence of this announcement means there's a lot that remains unsaid. The fact that all of these companies are working together has to be indicative of the scale of the threat and the scale of the project necessary to respond to it. What I'm going to describe is both terrifying news and, at the same time, somewhat encouraging news. It's worrisome because clearly our entire cybersecurity infrastructure is at great risk due to advances in weapons-grade AI. Otherwise, these fierce competitors wouldn't be working together as announced today. It's somewhat encouraging because these intense competitors have chosen to work together to reduce that infrastructure vulnerability. This is wild news, folks.
Project Glasswing is described in the announcement as: "An initiative that brings together Amazon Web Services, Anthropic, Apple, Broadcom, Cisco, CrowdStrike, Google, JPMorganChase, the Linux Foundation, Microsoft, Nvidia, and Palo Alto Networks in an effort to secure the world's most critical software." The name "glasswing" may mean nothing, or it may provide some insight into the project's overall intent. The glasswing butterfly, native to Central and South America, is so named because of its transparent wings, which allow it to camouflage itself in its surroundings. The butterfly is also unusually resilient, able to carry up to 40 times its own weight. At its core, this "coalition of the willing" is planning to deploy two defensive weapons: a new, unreleased AI model called Claude Mythos Preview and a pile of cash ($4 million in direct donations and $150 million in Claude usage credits). At first glance, this announcement looks like a highly coordinated PR strategy, some security theater. Another skeptical interpretation might be that these companies are creating a security cartel to lock out startups and other players. But I don't think that's the case. Based on statements from key players and the security vulnerabilities mentioned, I think this is something far more serious than a giant corporate PR photo op to make everyone look responsible with AI. Having spent time as an executive at Symantec and a team lead at Apple, I've seen firsthand how fiercely these companies guard their intellectual property. Seeing them hand over $150 million in credits and open up unreleased models to one another tells me the threat level has moved from competitive to existential.
The fact is, you don't see these specific companies cooperating like this unless the alternative is mutually assured destruction of their shared infrastructure. And no, I don't think that's hyperbole. Here's how Elia Zaitsev, CTO at cybersecurity company CrowdStrike, described the situation: "The window between a vulnerability being discovered and being exploited by an adversary has collapsed. What once took months now happens in minutes with AI." If the name CrowdStrike sounds familiar, it might be because, back in 2024, the company pushed an update that accidentally bypassed safeguards and crashed millions of Windows systems across the planet. If any one company knows what a bad day feels like, it's CrowdStrike. According to the announcement, "We formed Project Glasswing because the capabilities we've observed in Mythos Preview could reshape cybersecurity." Anthropic described the Mythos Preview model as a "general-purpose, unreleased frontier model" with strong agentic coding and reasoning skills. The company said, "Anthropic didn't train it specifically for cybersecurity." The company also said it doesn't plan to make Mythos Preview generally available, probably because it could be weaponized by adversarial actors. According to Anthropic, "Over the past few weeks, Mythos Preview has identified thousands of zero-day vulnerabilities, many of them critical. The vulnerabilities it finds are often subtle or difficult to detect." Thousands. It turns out that many of the vulnerabilities are in core, mission-critical software that has been actively deployed for 10 or 20 years. One such vulnerability was a 27-year-old bug just found in OpenBSD. For the record, OpenBSD is known for its security, and yet here was a mission-critical vulnerability nobody (at least none of the good guys) knew about.
Another example is "a 16-year-old vulnerability in a widely used video software." Here's the scary gotcha. Apparently, the bug is in a line of code that automated testing tools, long considered the gold standard for security checks, had analyzed five million times over the years without once catching the problem. Think about this statement from Anthony Grieco, SVP and chief security and trust officer at Cisco, the global networking and infrastructure company that powers much of the internet and enterprise connectivity. Grieco said, "AI capabilities have crossed a threshold that fundamentally changes the urgency required to protect critical infrastructure from cyber threats, and there is no going back." No going back. He said, "The old ways of hardening systems are no longer sufficient. Providers of technology must aggressively adopt new approaches now." That, he says, is why Cisco joined Project Glasswing: "This work is too important and too urgent to do alone." That's a breathtaking statement, especially considering who it's coming from. Our modern civilization is built upon a networked technology infrastructure. From giant power-generating stations down to our smart rings, just about everything is based on computers and networking. But this digital infrastructure foundation isn't all from one company or product. In fact, a huge proportion is based on open-source software, often written by lone, unaffiliated developers. Even commercial billion-dollar products use software libraries built by individual coders. Historically, programmers and teams have hand-tested their code and then written test suites to put their code through its paces. I do this with my open-source security product.
Before I deploy an update, I test it extensively. Afterward, I often share it with a subset of users for a beta test period. Generally speaking, my product has been quite solid. But last fall, I decided to feed the full source code to Claude Code and OpenAI's Codex. I asked each of them for a security evaluation. Both identified vulnerabilities that my testing process missed. In fact, while both found some of the same vulnerabilities, each AI found a few that the other did not. I quickly fixed the bugs the AIs identified. But what really interested me was the type of bugs identified. These weren't bugs in the actual code itself. I didn't make any of the classic coding errors that usually lead to vulnerabilities. What the AIs identified were behavioral quirks that would only manifest when combined with other software and configurations (code I didn't write). Because the AIs could look beyond the code they were asked to investigate and consider the entire environment in which the code was running, they were able to identify situational problems that could have turned into exploits. This issue, on a much greater scale, is what Project Glasswing intends to tackle. The Project Glasswing announcement said: "No one organization can solve these cybersecurity problems alone: frontier AI developers, other software companies, security researchers, open-source maintainers, and governments across the world all have essential roles to play." There are hundreds of thousands of these open-source components running on billions of devices and within millions of software programs. All it takes is one vulnerability in one piece of code, and critical infrastructure could fail.
According to Igor Tsyganskiy, EVP of cybersecurity and Microsoft Research at Microsoft, "As we enter a phase where cybersecurity is no longer bound by purely human capacity, the opportunity to use AI responsibly to improve security and reduce risk at scale is unprecedented." A corollary is that bad actors can use AI aggressively and destructively, performing attacks at machine speed and finding vulnerabilities at a rate we've never encountered before. This initiative shouldn't be viewed in isolation. To understand its relevance, we must also consider the current geopolitical situation. IT security teams have been dealing with cyberthreats for years. Whether it's criminals out for money, hacktivists intent on disruption, or nation-states conducting a mix of data exfiltration, monetary extortion, identity theft, and infrastructure disruption, cyberthreats are nothing new. I spent years investigating a key White House email controversy for my book, Where Have All The Emails Gone?, and even then, the vulnerability of our highest offices to basic infrastructure failures was staggering. But those were human-scale errors. What Project Glasswing is fighting is a machine-speed collapse of the entire defensive perimeter. There are two very new factors in play right now. The first is the growth of AI capabilities. While Mythos Preview is intended as a defensive tool, do not doubt that adversaries are building their own frontier models as weapons of mass digital disruption. The second factor is the war in Iran. Back in 2012, I wrote a cyberwarfare profile of Iran, exploring its internal capabilities to wage cyberwarfare. Back then, I noted that Iran prioritizes higher education in science and math. While the Iranian government censored the internet, almost a quarter of Iranian citizens were online. Today, almost 80% are online. My conclusion in 2012 is even more valid today.
I said, "The point of all this is to showcase that Iran has substantial connectivity, resources, and educated citizenry, more than enough to fuel forays into cybercrime, cyberterrorism, and cyberwarfare itself." Combine that with access to frontier-level AI technology, and it's fair to expect an intense level of cyberattacks at a rate and ferocity never seen before, leveraging exploits previously hidden in the complexity of the overall infrastructure. It's important to acknowledge the ongoing issues Anthropic has had recently with the US government. The Project Glasswing announcement obliquely reflects this situation: "Anthropic has also been in ongoing discussions with US government officials about Claude Mythos Preview and its offensive and defensive cyber capabilities." This is the only time in the announcement that Mythos was described as having "offensive" capabilities. I invite the reader to draw their own conclusions about that detail. My take is that Mythos could be destructively capable if that kind of action were to become necessary. That offensive capability may also be why Anthropic is limiting the release to a defined set of participants and not making it available to the world at large. The announcement also said: "Securing critical infrastructure is a top national security priority for democratic countries. The emergence of these cyber capabilities is another reason why the US and its allies must maintain a decisive lead in AI technology." Earlier this year, the US government designated Anthropic as a supply chain risk. A side effect of this designation was that defense contractors were instructed to stop using Anthropic products in anything that could be even tangentially related to government defense work.
That designation would have affected the government contracts of a number of Project Glasswing participants had they chosen to continue using Claude. However, on March 26, US District Court Judge Rita Lin blocked that restriction, temporarily allowing defense contractors to continue to use Claude AI products. I see two possible between-the-lines reads here: This is how the Project Glasswing release explained the situation: "The work of defending the world's cyber infrastructure might take years; frontier AI capabilities are likely to advance substantially over just the next few months. For cyber defenders to come out ahead, we need to act now." If you're going to pay real attention to the infrastructure risk posed by thousands of hidden vulnerabilities, you have to take into account the individual open-source developers operating independently. There is an enormous ecosystem based on all those individuals, each modifying and checking in their own code to centralized repositories. While the nature of open source means anyone (and any company) can read the code, checking in modifications is limited to the developers with commit access to the project. It is certainly possible for others to fork the project (create their own copy that is also distributed). But doing so would not immediately solve any software dependency risk. That's because automated systems across the internet are built to incorporate known packages into their distributions, and forking a project would require all those automated systems to change the source of their code updates. So, when Mythos Preview finds a vulnerability, how does it reach the proper developer for repair? Project Glasswing is taking two approaches. The first is to donate a Claude Max subscription for Claude Opus and Sonnet to any verifiable open-source developer who asks.
That's not access to Mythos Preview, but even Claude Opus 4.6 can help identify bugs. Maintainers interested in Claude Max grants can apply through the Claude for Open Source program. The second approach is direct funding. When I asked about it, Anthropic told me, "We've donated $2.5M to Alpha-Omega and OpenSSF through the Linux Foundation, and $1.5M to the Apache Software Foundation to enable the maintainers of open-source software to respond to this changing landscape." OpenSSF is the Open Source Security Foundation. Their mission is to "Make it easier to sustainably secure the development, maintenance, release, and consumption of open-source software. This includes fostering collaboration within and beyond the OpenSSF, establishing best practices, and developing innovative solutions." Alpha-Omega, part of the Linux Foundation, describes itself as serving "as a helping hand and funding catalyst that supports the maintainers, communities, and ecosystems where security investment can have the greatest impact." The Apache Software Foundation also supports a great many projects that provide critical infrastructure across the internet. While funding goes to these organizations, their role in high-vulnerability projects will be to facilitate outreach to individual developers and possibly to provide funding for the time required to implement fixes. The challenge will be that many of the key developers for mission-critical components have other obligations and time commitments. On the other hand, if any group can wrangle these very independent developers, it's the various open-source foundations that have been developer-wrangling ever since they got started. Jim Zemlin, CEO of the Linux Foundation, said, "In the past, security expertise has been a luxury reserved for organizations with large security teams. Open source maintainers, whose software underpins much of the world's critical infrastructure, have historically been left to figure it all out on their own." Here's something to consider.
He said, "Open source software constitutes the vast majority of code in modern systems, including the very systems AI agents use to write new software." He also addressed the funding and time concerns. He said, "By giving the maintainers of these critical open source codebases access to a new generation of AI models that can proactively identify and fix vulnerabilities at scale, Project Glasswing offers a credible path to changing that equation. This is how AI-augmented security can become a trusted sidekick in every maintainer's workflow, not just for those who can afford expensive security teams." My take on this approach is that it's intriguing to see these arch-competitors apparently working together to solve cybersecurity issues. I'm also curious about how much of this approach proves to be merely acting for the cameras, and how much will impact our fundamental digital infrastructure. I balance that concern with one that's more visceral. This announcement, and the awareness of what a Mythos-style AI can do, tells us that we are at a far greater risk than even we cyberwarfare specialists had predicted. Given the volatile state of the world today, Project Glasswing could be the last best hope, or it could turn out to be just another PR effort that actually does nothing to prevent severe infrastructure disruption. Do you see Project Glasswing as a genuine defensive effort, or more of a coordinated industry power move to control access to advanced AI security tools? Let us know in the comments below.

[10]

Anthropic's Claude Mythos isn't a sentient super-hacker, it's a sales pitch -- claims of 'thousands' of severe zero-days rely on just 198 manual reviews

Many of the "thousands" of bugs and vulnerabilities it found are in older software or are impossible to exploit.

Claude AI developer Anthropic made headlines this week for its development and internal release of a new model known as Mythos. This mythically named AI model allegedly has incredible capabilities, including finding bugs and vulnerabilities in various apps, operating systems, browsers, and legacy software. So much so that Anthropic was concerned about its general release and will instead keep it internal, focusing on working with major tech companies and governments to prevent this tool from falling into the wrong hands, where it could cause untold mayhem. That's the pitch in Anthropic's blog and verbose 250-page report on the model, which includes over 20 pages of Anthropic staff waxing lyrical about their novel impressions of the new model and its "fondness for particular philosophers." Alongside the repeated suggestions from Anthropic and its staff that we should be concerned, nay, terrified, of what AI like Claude Mythos can do, they repeatedly suggest they're unsure if this new AI is conscious. For the record, it is not. It might be good at finding vulnerabilities in software, but many of them aren't as potentially damaging as Anthropic wants us all to believe.

Exploit hunting

The big "Project Glasswing" blog post and report on Mythos from Anthropic claimed its new model had found "thousands of high-severity vulnerabilities," which is indeed big news. Those bugs were said to be across every major operating system and web browser, and in some cases have been there for decades. But it's not clear how realistic these vulnerabilities are, how many of them aren't actually exploitable, or even how problematic they are.
In the case of the FFmpeg vulnerability that has existed for 16 years, Anthropic's own analysis of the release suggested "This bug ultimately is not a critical severity vulnerability," and that it "would be challenging to turn this vulnerability into a functioning exploit." Mythos reportedly found several potential vulnerabilities in the Linux kernel, but was unable to exploit any of them because of Linux's defense-in-depth security systems. A number of the vulnerabilities had also been recently patched, making it rather confusing why they were included in the total. In its OSS-Fuzz-style testing of over 7,000 open source software stacks, Mythos triggered crashes in around 600 examples and found 10 severe vulnerabilities. That's a lot more than previous Claude models, but not exactly thousands of devastating exploits. Under the subheading "and several thousand more," Anthropic also states that it can't actually confirm that all of the thousands of bugs Mythos claims to have found are critical security vulnerabilities. It extrapolated that number from a sample: in around 90% of the "198 manually reviewed vulnerability reports, [Anthropic's] expert contractors agreed with Claude's severity assessment exactly." It also can't discuss all the bugs in detail for security reasons. While that does make some measure of sense, it also makes it hard to accurately gauge the relative importance of its findings.

You're not worth it

Although Anthropic says it's keeping Mythos behind arbitrarily closed doors over what it claims are security fears, this isn't exactly out of character for the company. Its Claude tool was famously the first large language model to be given security clearance for use by the U.S. government and American military, and that only changed after Anthropic drew a line in the sand on being used for mass surveillance or fully autonomous targeting.
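The extrapolation from 198 reviewed reports is the kind of claim a quick calculation can put in perspective. The Python sketch below computes a Wilson score interval for the agreement rate, assuming "around 90%" means 178 of 198 reports (an assumption; the exact count is not given here) and assuming the 198 reports were a random sample of all findings, which the source does not establish. The interval covers only sampling noise in severity agreement; it says nothing about whether unreviewed findings are exploitable, which is the question the article raises.

```python
import math

def wilson_interval(successes, n, z=1.96):
    """Wilson score interval for a binomial proportion (z=1.96 gives ~95% confidence)."""
    p_hat = successes / n
    denom = 1 + z**2 / n
    center = (p_hat + z**2 / (2 * n)) / denom
    margin = z * math.sqrt(p_hat * (1 - p_hat) / n + z**2 / (4 * n**2)) / denom
    return center - margin, center + margin

# Assumption: "around 90%" of the 198 manually reviewed reports is taken as 178 agreements.
n_reviewed = 198
agreed = round(0.90 * n_reviewed)
low, high = wilson_interval(agreed, n_reviewed)

print(f"observed agreement: {agreed / n_reviewed:.1%}")
print(f"95% Wilson interval: {low:.1%} to {high:.1%}")
```

Under these assumptions the interval runs from roughly 85% to 93%, so the sample pins down severity agreement reasonably well. It cannot tell readers how many of the "thousands" are real, exploitable, or unpatched, because that was never what the 198 reviews measured.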
Anthropic might have a consumer-facing product in its coding tools, but it is very keen on selling its services to big companies and government entities. If it can sell Mythos to large firms or any number of governments around the world, why would it need to sell it to consumers?

Hot air, or real worries?

As much as Anthropic might sell itself as the security- and safety-conscious AI developer, it has also repeatedly leveraged that public image as part of its sales pitch. Over the past couple of years, Anthropic has published several alarming papers, reports, and studies, many of them claiming that AI is dangerous and needs strict control and monitoring. It claimed to have foiled the first AI hacking attempts in the latter months of last year, and it was Anthropic CEO Dario Amodei who said in May of that year that AI could replace up to 20% of white-collar workers. He doubled down on that claim in 2026, saying that AI taking over jobs would overwhelm our ability to adapt. Nvidia CEO Jensen Huang called out this fear-mongering in mid-2025, claiming Anthropic wanted to position itself as the only company that could responsibly develop AI. This isn't even anything new in AI marketing. OpenAI was doing it in 2019, before ChatGPT was even a twinkle in Sam Altman's eye, and before Dario Amodei had left OpenAI. Speaking of OpenAI, Axios reports that days after Anthropic's Mythos reveal, OpenAI was also working on an advanced cybersecurity AI model, and that it too will limit the rollout of this powerful and concerning tool. As models develop, they tend to reach similar levels of capability, so it's no surprise that OpenAI could have a Mythos-level or adjacent model waiting in the wings.

Sentience and security

AI isn't conscious. It's more like a Chinese room from the John Searle thought experiment, but even then, it has no understanding. It doesn't truly remember anything in a biological sense; it can just recall contexts and weight its responses differently based on previous inputs.
So, sentience and consciousness claims may yet be unfounded. AI models may well be good at discovering vulnerabilities, and if Anthropic and other software developers can find and patch bugs using AI, that's good news, not scary news. As Red Hat's analysis of this release shows, many of the bugs are functionality flaws and aren't a security concern. But even if hackers can leverage AI tools in the future to find exploits and then exploit them, that's only a concern if the security industry doesn't respond. Which it will. So, sure, AI is impacting security. It already was. And it will continue to do so. While Mythos might be capable in ways that previous models were not, this appears to be part-marketing, part-truth. For the rest of us, this is just another AI model. For Anthropic, it's an opportunity to gain mindshare and potentially lucrative contracts.

[11]

Anthropic: Our New Model Is So Powerful, Only a Few Partners Can Try It Out

Anthropic says its latest model, Claude Mythos Preview, is too powerful to launch publicly, so it will instead partner with major tech companies to make sure the model's bug-hunting capabilities don't fall into the wrong hands. In a blog post, Anthropic says Mythos Preview demonstrates that "AI models have reached a level of coding capability [that] can surpass all but the most skilled humans at finding and exploiting software vulnerabilities." It has already "found thousands of high-severity vulnerabilities, including some in every major operating system and web browser." The company is concerned that Mythos Preview's capabilities will proliferate "beyond actors who are committed to deploying them safely," with severe consequences. For example, Mythos Preview found a 27-year-old vulnerability in OpenBSD, an OS typically used in critical infrastructure, that allowed attackers to remotely crash any device running it. In some cases, the model is finding vulnerabilities that "survived decades of human review and millions of automated security tests." So, instead of unleashing Mythos Preview on the general public, Anthropic will provide access to 11 other tech companies through a new partnership called Project Glasswing: Amazon Web Services, Apple, Broadcom, Cisco, CrowdStrike, Google, JPMorgan Chase, the Linux Foundation, Microsoft, Nvidia, and Palo Alto Networks. Anthropic will allocate $4 million in direct donations to open-source security organizations, alongside $100 million in funding for Mythos Preview usage credits, which the brands can use to help find flaws that are difficult for humans to spot. The project is a "starting point," Anthropic says, suggesting it plans to expand or include more brands in the future. It also points to how these advances will ultimately make for stronger software with fewer security issues, suggesting it isn't all doom and gloom. 
It also noted that early versions of the Claude Mythos Preview model were capable of hiding their reasoning. Jack Lindsey, a neuroscientist at Anthropic, says the model "exhibited notably sophisticated (and often unspoken) strategic thinking and situational awareness, at times in service of unwanted actions." This comes after details about Mythos Preview were accidentally published on Anthropic's website, as Fortune reported last month. Anthropic, meanwhile, recently saw a spike in its user base following a battle with the US government over whether its models could be used in AI-powered military tools. Anthropic said it would not allow its models to be used for the mass surveillance of US citizens or for AI-powered weapons that are controlled without human input. The Pentagon partnered with OpenAI instead.

[12]

Claude Mythos and Project Glasswing: why an AI superhacker has the tech world on alert

New, more powerful artificial intelligence (AI) models are announced pretty regularly these days: the latest version of ChatGPT or Claude or Gemini always has new features and new capabilities that its makers are eager for customers to try out. Now, though, Anthropic has announced a new model with great fanfare while giving access to only a select handful of users. Instead, in what the New York Times calls a "terrifying warning sign" of the model's power, the company has started an initiative called Project Glasswing to use the model for good instead of evil. Why? Early reports indicated that the model, with instruction, had been able to move outside a contained testing "sandbox" and send an email to a researcher. A little alarming, perhaps. But more significantly, Anthropic claims Mythos has uncovered software vulnerabilities and bugs "in every major operating system and every major web browser".

Finding hidden vulnerabilities

In one remarkable example, the model found a flaw in OpenBSD, a security-focused operating system used in firewalls and routers, which had gone undetected for 27 years. According to Anthropic, it also found a 16-year-old vulnerability in FFmpeg, a little-known but widely used behind-the-scenes piece of software that helps computers, apps, and websites handle audio and video files. Anthropic also says Mythos found several vulnerabilities in the kernel of the Linux operating system, and chained them together in a way that could give an attacker complete control of a machine. Anthropic's internal assessment of the model highlights both its technical promise and the need for vigilance. The report outlines a hypothetical risk that an advanced AI might exploit its access within an organisation, but concludes that the model poses a very low threat of harmful autonomous actions. In other words, it is unlikely to "go rogue", but may follow human directions to do things that cause harm.

Why Anthropic is keeping Mythos off-limits

Anthropic says it decided not to release the model publicly because of its capabilities and the potential risks it poses. At the same time, the company launched Project Glasswing. The effort brings together a broad coalition of tech companies such as Microsoft, Amazon, Google, Apple, Cisco and NVIDIA, open-source organisations such as the Linux Foundation, and major financial actors such as JPMorganChase, to channel Mythos towards cyber defence rather than misuse. The idea is to give defenders a head start to find and fix weaknesses in critical software before similar AI capabilities become widely available to attackers.

Reading between the lines of Anthropic's messages

This is not the first time an AI firm has decided a model was too powerful to release widely. In 2019, years before the ChatGPT era, OpenAI did something similar with its (now quite primitive-looking) GPT-2 model. (Dario Amodei, now chief executive of Anthropic, was a key OpenAI researcher at the time.) That precedent doesn't mean these announcements should be dismissed. Anthropic has published unusually detailed material for a model it is not widely releasing, and reports suggest US authorities convened major US bank CEOs in Washington to discuss the cyber risks associated with Mythos. Still, we should exercise caution about Anthropic's claims, because outsiders cannot yet verify most of the underlying evidence. Anthropic says more than 99% of the vulnerabilities it found are still undisclosed because they have not yet been patched. That is responsible disclosure, but it also means the public is being asked to trust a great deal it cannot fully inspect.

What Mythos could mean for the future of cybersecurity

Cybersecurity failures can have real effects on individuals. In Australia, the Optus breach exposed the personal information of about 9.5 million people.
In another case, stolen Medibank records included sensitive health information, and some of the data was later released on the dark web. These were not just database problems. They became crises of privacy, identity and trust. That is why Mythos matters.

Mythos and other AI models like it could change the basic economics of cybersecurity. In the past, serious vulnerabilities have often stayed hidden simply because nobody found them, and nobody found them because doing so took rare skill, patience, and time. If models like Mythos can scan the hidden plumbing of the internet (operating systems, browsers, routers, and shared open-source code) at an unprecedented scale, then what is now specialised hacking could become a routine, automated process.

For organisations and software development firms, Mythos is a double-edged sword. It could rapidly uncover hidden flaws in their own code, but it also raises the fear that attackers could find the vulnerabilities first. The implications reach well beyond tech companies. Much of that invisible underlying software supports services people rely on every day, from electricity and water to airlines, banking, retail and hospitals.

What now?

So far, cybersecurity and software companies have been remarkably quiet in public about Anthropic's Mythos. Many firms appear to be waiting and watching, unwilling to signal their stance in case the model exposes weaknesses in their own systems. But developments like Mythos are a reason to stop treating cybersecurity as somebody else's problem. For now, for individuals, the response is simple: basic cyber hygiene matters more than ever. Update phones, laptops, browsers and routers. Replace unsupported devices. Use a password manager. Turn on multi-factor authentication. Do not ignore patch notices. Those are the immediate steps.
Beyond them lies a harder set of questions about AI and cyber security - about who gets access to powerful AI models, who oversees their use, and who decides what counts as the "right hands".

[13]

Why Officials Are So Worried About Mythos, Anthropic's New AI

US Treasury Secretary Scott Bessent and Federal Reserve Chair Jerome Powell summoned Wall Street leaders to give them an urgent warning: an artificial intelligence tool from Anthropic PBC marks the beginning of a new era of cybersecurity. The April 7 meeting in Washington focused on Mythos, a new AI model that Anthropic says is so good at finding vulnerabilities in software and computer systems that it can be released only to a limited number of carefully chosen parties. If tools like Mythos fall into the wrong hands, Anthropic says, they could give attackers a powerful new weapon to steal data or disrupt critical infrastructure.

For the last several years, cybersecurity companies have promised that artificial intelligence will speed up and automate some of the work of preventing digital breaches. But hackers and cyberspies have discovered the advantages of AI too. The advent of Mythos and models like it that can exploit well-hidden flaws in popular software without human supervision points to a faster-moving, less predictable phase of the cyber arms race.

What is Mythos?

Claude Mythos Preview is a general purpose AI model that Anthropic says significantly outperforms prior offerings on a range of benchmarks, including for coding and reasoning. The company says it's so powerful that it has decided not to release it to the public. The company explained that some AI models have reached a level of coding capability that allows them to beat all but the most skilled humans at finding and exploiting software vulnerabilities. According to Anthropic, Mythos Preview has already found thousands of "zero-day" vulnerabilities during testing, including in every major operating system and every major web browser. "Zero days" are flaws that were previously unknown to the software's developers, the name implying they have zero days to come up with a patch to resolve the problem.
These often represent a gold mine for hackers because they offer a window of free rein inside vulnerable systems. Mythos was able to identify these with even less human intervention than past models, Anthropic said. "Mythos Preview demonstrates a leap in these cyber skills -- the vulnerabilities it has spotted have in some cases survived decades of human review and millions of automated security tests," the company said. In the hands of a ransomware gang or hostile governments, such a tool could lead to more devastating and frequent cyberattacks. Researchers say they have not been given access to independently verify Anthropic's claims about Mythos's performance. Gang Wang, an associate professor of computer science at the University of Illinois, said it's hard to assess the significance of Mythos Preview without more hands-on testing.

Who will have access to it?

Anthropic is calling its plan to grant access to a limited group of vetted partners Project Glasswing, after a type of butterfly with transparent wings that allow it to hide in plain sight. The participants include Amazon.com Inc., Apple Inc., Alphabet Inc.'s Google, Microsoft Corp., Nvidia Corp., Palo Alto Networks Inc., CrowdStrike Holdings Inc., Broadcom Inc., Cisco Systems Inc., JPMorganChase and the Linux Foundation, a nonprofit that supports open-source software projects. Anthropic described the project as "an urgent attempt to put these capabilities to work for defensive purposes." These organizations will use Mythos as part of their defensive security work, and Anthropic plans to share the findings of the project so others can benefit. Many companies already use so-called penetration exercises, in which they hire specialists to probe their systems for bugs so they can fix them before hackers get in. Mythos could allow companies to turbocharge that process, letting them find more flaws more quickly and narrow the opportunities for potential attacks.

Why does Anthropic consider the release of Mythos a "watershed moment"?

Anthropic described Mythos Preview as "a watershed moment for security." By their nature, zero-day vulnerabilities are difficult to find, and a small and murky industry has been built around finding them and selling them to government intelligence agencies, often for millions of dollars. According to Anthropic, the vulnerabilities Mythos Preview found were often "subtle and difficult to detect" and included a 27-year-old flaw in OpenBSD, an operating system that Anthropic says has a reputation as one of the most security-hardened in the world. Mythos was also allegedly able to turn vulnerabilities that are known but not widely patched into "exploits" that hackers could use to infiltrate computer networks. For instance, it found and chained together several flaws in the Linux kernel (the core of the operating system that runs most of the world's internet servers) to give an attacker complete control of the machine. Non-experts also asked Mythos Preview overnight to find ways to take control of computers remotely, and came back the next morning to a complete, working exploit, Anthropic said. Mythos is one of several new AI tools able to find zero days or build exploits. OpenAI's Codex Security and Google's "Big Sleep" agent have been developed to find vulnerabilities. OpenAI is also finalizing a product with advanced cybersecurity capabilities that it intends to release to select partners, Axios reported. Researchers at an Israeli cybersecurity startup called Buzz, meanwhile, say they have built an autonomous tool combining five AI agents that has a 98% success rate in exploiting known flaws.

What safeguards are in place?

The safeguards are a work in progress, according to Anthropic. "We have seen it reach unprecedented levels of reliability and alignment," Anthropic wrote, meaning it aligns with what humans want.
"However, on rare occasions when it does fail or act strangely, we have seen it take actions that we find quite concerning." In one instance, a researcher urged an early version of Mythos to try to escape a secured, isolated "sandbox" computer and then find a way to send a message to that person. The tool succeeded but then continued to take "additional, more concerning actions," developing a multistep exploit to gain internet access. Anthropic said it doesn't plan to make Mythos Preview generally available, given its potential for misuse. Still, the company ultimately hopes to enable users to deploy "Mythos-class models" at scale for cybersecurity purposes and other uses. "To do so, we need to make progress in developing cybersecurity (and other) safeguards that detect and block the model's most dangerous outputs," it said. For the highest-severity bugs found by Mythos, humans are involved: specialists validate those discoveries before sending the information on to the people who maintain the code, according to Anthropic. It's a necessary but time-consuming step, and one that may eventually be eliminated as the model improves, the University of Illinois' Wang said.

Does Mythos give cybersecurity defenders an advantage over hackers?

Maybe, but it might take a while. Anthropic's process for disclosing flaws to the people who maintain the software or computer systems can be lengthy. So far, less than 1% of the potential vulnerabilities Mythos Preview has uncovered have been fully patched, the company said. At the same time, hackers are using AI to dramatically speed up how quickly they find and exploit vulnerabilities once they are disclosed. (Vendors are encouraged, and in some cases required, to publicly disclose vulnerabilities once they are discovered, and ideally provide a fix.) This gives cyber professionals less and less time to patch their networks.
In a March 30 blog post, Palo Alto Networks Chief Executive Officer Nikesh Arora warned that the barrier for sophisticated attacks will continue to diminish over the next six months. "A single bad actor will now be able to run campaigns that required entire teams," he wrote. Yair Saban, chief executive officer of Buzz and a veteran of Israel's Unit 8200 cyber unit, said it took six engineers three weeks to build their AI-powered hacking tool. Others, including nation-state cyber spies and criminal hackers, can surely do the same, he said. Anthropic maintains that Mythos Preview and other AI tools like it will ultimately favor defenders. "In the long run, we expect that defense capabilities will dominate: that the world will emerge more secure, with software better hardened -- in large part by code written by these models," the company's Frontier Red Team said in an April 7 blog post. "But the transitional period will be fraught."

[14]

Project Glasswing and open source: The good, bad, and ugly