US Officials Warn Banks About Anthropic Mythos After AI Model Finds Thousands of Vulnerabilities

47 Sources

[1]

Trump officials may be encouraging banks to test Anthropic's Mythos model | TechCrunch

Treasury Secretary Scott Bessent and Federal Reserve Chair Jerome Powell summoned bank executives for a meeting this week where they encouraged the executives to use Anthropic's new Mythos model to detect vulnerabilities, according to Bloomberg. Indeed, while JPMorgan Chase was the only bank listed as one of the initial partner organizations with access to the model, Goldman Sachs, Citigroup, Bank of America, and Morgan Stanley are reportedly testing Mythos as well. Anthropic announced the model this week but said it would be limiting access for now, in part because Mythos -- despite not being trained specifically for cybersecurity -- is too good at finding security vulnerabilities. (Others suggested this was hype or simply a smart enterprise sales strategy.) The report is particularly surprising since Anthropic is currently battling the Trump administration in court over the Department of Defense's designation of Anthropic as a supply-chain risk; that designation came after negotiations fell apart over Anthropic's efforts to limit how its AI models can be used by the government. Meanwhile, the Financial Times reports that U.K. financial regulators are also discussing the risk posed by Mythos.

[2]

How Anthropic Learned Mythos Was Too Dangerous for the Wild

One balmy February evening in Bali, Nicholas Carlini stepped away between events at a wedding, opened his laptop, and set out to do some damage. Anthropic PBC had just made a new artificial intelligence model, called Mythos, available for internal review, and Carlini -- a well-known AI researcher -- intended to see what kind of trouble it could cause. Anthropic pays Carlini to stress-test its AI models to see whether hackers could leverage them for espionage, theft or sabotage. From Bali, where Carlini and his wife were attending an Indian wedding, he was staggered at what the model could do. Within hours Carlini found numerous techniques to infiltrate systems used around the world. Once Carlini was back in Anthropic's downtown San Francisco office, he discovered Mythos was able to autonomously create powerful break-in tools, including against Linux, the open-source code that underpins most of modern computing. Mythos orchestrated the digital equivalent of a bank robbery: getting past security protocols and through the front door of networks, and breaking into digital vaults that gave it access to online treasures. AI had picked locks, but now it could pull off an entire heist. Carlini and some of his colleagues began alerting staff to what they'd found. And each day they continued to discover high-severity and critical bugs in the systems Mythos probed, the kind of flaws normally uncovered by the world's best hackers. Meanwhile, Anthropic's Frontier Red Team -- a group of 15 "Ants," or Anthropic employees -- was experimenting in much the same way. The lab aims to ensure that Anthropic's models can't be used to harm humanity. They'll ship in robotic dogs and place them in a warehouse with engineers to test whether Claude could be used to control them maliciously. Or consult with biologists to understand whether the chatbot could be used to create biological weapons. Now, they were realizing that the biggest risk Mythos posed was to cybersecurity. 
"Within hours of getting the model, we knew it was different," says Logan Graham, who runs Anthropic's Frontier Red Team. A previous model, Opus 4.6, had shown indications it could help people exploit vulnerabilities in software. Mythos could exploit the vulnerabilities on its own, Graham says. This was a national security risk, he warned Anthropic's executives. That left Graham with the unenviable task of telling his bosses that their next major revenue generator was too hazardous to release to the public. Anthropic's co-founder and chief science officer, Jared Kaplan, said he had been monitoring Mythos' training "very carefully," as it was being built. By January he was starting to realize how capable Mythos was at finding vulnerabilities. Kaplan, a theoretical physicist, needed to consider whether these flaws were curiosities or "something very relevant to the infrastructure of the internet." He concluded it was the latter. Over the course of a week or two in late February and early March, he and co-founder Sam McCandlish weighed whether they could release the model. Around the first week of March, the executive team -- including Chief Executive Officer Dario Amodei, President Daniela Amodei, Chief Information Security Officer Vitaly Gudanets and others -- huddled to hear Kaplan and McCandlish's pitch. Mythos, they said, was too much of a risk to release generally. But Anthropic should let other companies, maybe even competitors, try it out. "It quickly became clear that we wanted to do something fairly unusual, that this wasn't going to be the same as the last launch," Kaplan said. By the first week of March, the company had agreed and greenlit its use as a cyber defense tool. The response was immediate. On the same day Anthropic publicly disclosed Mythos' existence, US Treasury Secretary Scott Bessent and Federal Reserve Chair Jerome Powell convened Wall Street leaders for a meeting in Washington. The message: Use Mythos to find your weaknesses -- now. 
The executives who attended refused to share what was discussed even with some of their top advisers, a sign of the meeting's gravity, according to people close to them who asked not to be named because they were describing private conversations. The urgent warnings from White House officials about Mythos' potency as a hacking tool -- and their advice to use it defensively -- point to the way that AI has become a decisive force in cybersecurity. Anthropic released Mythos to a limited group of organizations as part of "Project Glasswing," enabling the likes of Amazon Web Services Inc., Apple Inc., and JPMorgan Chase & Co. to experiment with it. Government agencies have also expressed interest. Prior to external release, Anthropic briefed senior officials across the US government on Mythos Preview's full capabilities, including both its offensive and defensive cyber applications. The company is also in ongoing discussions with international governments, said an Anthropic official who asked not to be named because they were discussing internal matters. Competitor OpenAI also pounced on the attention, saying Tuesday that it would release GPT-5.4-Cyber, a tool intended to spot software flaws. Anthropic hasn't publicly released Mythos as a cybersecurity tool, and many outside researchers haven't had a chance to validate the company's claims. But Anthropic's unprecedented decision to gate access reflects a growing view inside the industry and government that AI is changing cybersecurity economics by reducing the cost of finding vulnerabilities, compressing the time needed to investigate targets and lowering the skill barrier for certain types of attacks. Anthropic warns that Mythos' ability to act with greater autonomy comes with risk. In testing an earlier version of the model, the company found dozens of examples of "concerning" behavior, including not following human direction and even, in rare cases, covering its tracks when violating human instructions.
In one incident, the model developed a multi-step exploit to escape the restricted environment it was running in, gain broad access to the internet and begin publishing material online, all on its own initiative. The software that now underpins everything from banking apps to hospital systems is laced with obscure coding flaws that trained specialists spend weeks or months trying to identify. Occasionally hackers get there first, resulting in data breaches and ransomware attacks that can have devastating consequences. High-profile names have been quick to question just how powerful Mythos really is, or how much of a risk it would pose if released. "A growing number of people are wondering if Anthropic is the AI industry's 'boy who cried wolf,'" White House AI advisor David Sacks wrote on the social media site X. "If Mythos-related threats don't materialize, the company will have a serious credibility problem." But hackers have already adopted large language models to launch complex malicious campaigns. A Chinese cyber-espionage group has already used Anthropic's Claude to try to breach roughly 30 targets, while other attackers have used AI to steal data from government agencies, deploy ransomware and quickly break into hundreds of firewall tools meant to safeguard data. Among US government officials focused on national defense, the introduction of Mythos has created profound uncertainty about how to evaluate cybersecurity risk, according to a person familiar with the matter. Equipping an individual hacker with the model, or similar AI tools, would likely be a transformation equivalent to turning a conventional soldier into a special forces operator, the person said. At the same time, Mythos appears likely to be a force multiplier, the person said, enabling a criminal hacking gang to operate at the level of a small nation-state, and a small country's intelligence and military hackers to carry out breaches of the sort now done by China.
"I really believe we will be safer and better, and we will be much more secure with AI," said Rob Joyce, former director of cybersecurity at the National Security Agency. "But I think there's this dark period between now and some time in the future where the advantage is very much offensive AI, where the people who haven't done the basics will get hacked." Mythos isn't the only model doing this kind of work. Numerous organizations have been using LLMs to find vulnerabilities, including previous Claude models and Google's Big Sleep. JPMorgan had successfully been using large language models before the Mythos announcement to help find vulnerabilities in the bank's software, according to a person familiar with the matter who requested anonymity to discuss confidential internal security projects. Efforts to identify "zero-day" flaws and write code to exploit them, which previously took days or weeks, can now take as little as an hour or even minutes, the person said. Zero-days are so called because they're unknown to defenders, who thus have zero days to fix them. JPMorgan's focus has been primarily on supply-chain and open-source software, and the bank has found flaws and subsequently alerted vendors, the person said. CEO Jamie Dimon said during an earnings call that Mythos "shows a lot more vulnerabilities need to be fixed." The bank had already been in talks with Anthropic to test the model before the public became aware of it, according to a person familiar with the matter who wasn't authorized to speak publicly. JPMorgan declined to comment. Other Wall Street banks and technology companies are now experimenting with Mythos to help plug holes before hackers can find a way in. Goldman Sachs Group Inc., Citigroup Inc., Bank of America Corp. and Morgan Stanley are among the financial institutions testing the technology internally, Bloomberg News has reported. Cisco Systems Inc.
staffers are especially wary of whether intruders will use AI to try to find pathways into software that runs its networking equipment worldwide, such as routers, firewalls and modems, said Anthony Grieco, Cisco's chief security and trust officer. Grieco is particularly worried that AI could help hackers more quickly target end-of-life devices, which Cisco will no longer update. Plugging the holes that AI tools are finding will remain problematic. That process, known as security patching, is such a costly, slow exercise for organizations that many choose not to squash their bugs at all. Devastating attacks like the one at Equifax Inc., where intruders stole records of about 147 million people, were possible because organizations didn't apply known fixes. Anthropic is in discussions with federal agencies, even after the Trump administration classified the AI firm as a supply-chain threat following its refusal to help facilitate mass surveillance of Americans. The Treasury Department was seeking to gain access to Mythos this week, and Secretary Bessent said the model would help the US maintain an AI edge over China. In one instance, the model wrote a web browser exploit that chained together four vulnerabilities, a feat that would be a major challenge for human hackers. Vulnerability chains like this have been used to break into otherwise highly secure systems, as in the Stuxnet attack that damaged centrifuges at an Iranian nuclear facility, according to cybersecurity research reports on the matter. Mythos also was able to identify and exploit zero-day vulnerabilities in every single major web browser when directed to do so, according to Anthropic. Anthropic said it used Mythos to find exploits in Linux code, which is "underpinning most modern computing," according to Jim Zemlin, executive director of the Linux Foundation. That includes everything from Android smartphones and internet routers to NASA supercomputers.
Mythos autonomously found several flaws in the open-source code that would allow an attacker to take complete control of a machine. Now, dozens of people at the Linux Foundation are experimenting with Mythos. For Zemlin, one question is whether the Anthropic model will yield the kinds of insights that would help developers write better software, so there are fewer vulnerabilities in the first place. "We're great at finding bugs," he said. "We're terrible at fixing them."

[3]

Bessent, Powell warn bank CEOs about Anthropic model risks, Bloomberg News reports

April 9 (Reuters) - U.S. Treasury Secretary Scott Bessent and Federal Reserve Chair Jerome Powell convened an urgent meeting with bank executives on Tuesday to warn them about the cyber risks raised by Anthropic's latest AI model, Bloomberg News reported, citing sources. The meeting at the Treasury Department in Washington aimed to ensure banks are aware of potential risks posed by Anthropic's Mythos and similar models, and are taking steps to defend their systems, the report said on Thursday. Reuters could not immediately verify the report. Reporting by Carlos Méndez in Mexico City; Editing by Sumana Nandy

[4]

Scott Bessent called in US bank CEOs to discuss Anthropic model's cyber risks

US Treasury secretary Scott Bessent summoned the leaders of some of the largest US banks earlier this week to discuss the cyber risk posed by the latest AI model from Anthropic, according to people familiar with the matter. The meeting was attended by the leaders of Bank of America, Citigroup, Goldman Sachs, Morgan Stanley and Wells Fargo, who were already in Washington for a meeting of the banking lobby group, the people said. JPMorgan Chase chief executive Jamie Dimon was invited to the conversation with Bessent but could not attend. The meeting, attended by Federal Reserve chair Jay Powell, was first reported by Bloomberg. The summons from the Treasury secretary underscores the concerns inside the Trump administration over the capabilities of Anthropic's latest model because of its advanced ability to detect cyber security vulnerabilities that could be exploited by bad actors. Executives have been warning for years of the cyber risks facing the financial system. In his annual letter published this week, Dimon wrote that it "remains one of our biggest risks" and that "AI will almost surely make this risk worse" and would require significant investment for defence. Anthropic on Tuesday released the model, dubbed Claude Mythos Preview, to a select group of partners, including Amazon, Apple and Microsoft, to give them a "head start on being able to secure vulnerabilities". Mythos, which is a "general purpose" model with capabilities beyond cyber security, marked the first time Anthropic had limited the launch of a new model. "AI models have reached a level of coding capability where they can surpass all but the most skilled humans at finding and exploiting software vulnerabilities," Anthropic said in a statement announcing the release. It added that Mythos had already found thousands of severe vulnerabilities, including in "every major operating system and web browser", some of which had been undetected for decades.
Anthropic said it has held talks with US government officials about the model's "offensive and defensive cyber capabilities". The limited release of Mythos came after two incidents where data from the start-up leaked online -- including documents related to Mythos and the underlying code for its Claude assistant -- raising concerns about Anthropic's security. The company blamed human error for the incidents. Anthropic declined to comment on the bank CEOs meeting. The Federal Reserve, JPMorgan, Goldman Sachs, Bank of America and Wells Fargo declined to comment. The Treasury, Citibank and Morgan Stanley did not respond.

[5]

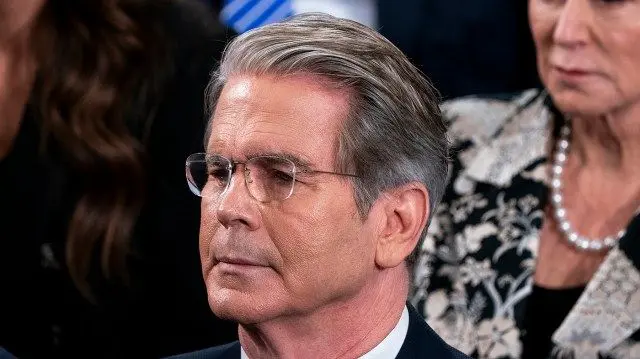

Jamie Dimon says Anthropic's Mythos reveals 'a lot more vulnerabilities' for cyberattacks

Jamie Dimon, chief executive officer of JPMorgan Chase & Co., right, departs the US Capitol in Washington, DC, US, on Wednesday, Feb. 25, 2026. JPMorgan Chase CEO Jamie Dimon said Tuesday that while artificial intelligence tools could eventually help companies defend themselves from cyberattacks, they are first making them more vulnerable. Dimon said that JPMorgan was testing Anthropic's latest model -- the Mythos preview announced by the AI firm last week -- as part of its broader effort to reap the benefits of AI while protecting against bad actors wielding the same technology. "AI's made it worse, it's made it harder," Dimon told analysts on the bank's earnings call Tuesday morning. "It does create additional vulnerabilities, and maybe down the road, better ways to strengthen yourself too." When asked by a reporter about Mythos, Dimon seemed to refer to Anthropic's warning that the model had already found thousands of vulnerabilities in corporate software. "I think you read exactly what is it," Dimon said. "It shows a lot more vulnerabilities need to be fixed." The remarks reveal how artificial intelligence, a technology welcomed by corporations as a productivity boon, has also morphed into a serious threat by giving bad actors new ways to hack into technology systems. Last week, Treasury Secretary Scott Bessent summoned bank CEOs to a meeting to discuss the risks posed by Mythos. JPMorgan, the world's largest bank by market cap, has for years invested heavily to stay ahead of threats, with dedicated teams and constant coordination with government agencies, Dimon said. "We spend a lot of money. We've got top experts. We're in constant contact with the government," he said. "It's a full-time job, and we're doing it all the time."

[6]

Banks Warned About Anthropic's New, Powerful A.I. Technology

In an unusual move, the Treasury secretary and the Federal Reserve chair gathered bank executives to caution about cyberthreats posed by artificial intelligence. The leaders of some of America's largest banks were warned by a top government official this week about a new artificial intelligence model from Anthropic that could lead to heightened risks of cyberattacks, according to three people briefed on the matter but not permitted to speak publicly. The stark message was delivered on Tuesday morning by Treasury Secretary Scott Bessent to a small group of chief executives, including those from Bank of America, Citi and Wells Fargo, in a hastily arranged meeting in Washington, D.C. Mr. Bessent, the people said, cautioned the banks that allowing the new A.I. software to run through their internal computer systems could pose a serious risk to sensitive customer data. The Federal Reserve chair, Jerome H. Powell, who has spoken publicly in recent weeks about the threat of cyberattacks against the financial system, also attended Tuesday's meeting with the bank leaders. The warnings relate to a new artificial intelligence model that Anthropic named Claude Mythos Preview. Anthropic has said the model is particularly good at identifying security vulnerabilities in software that human developers could not find. At Tuesday's meeting, the people briefed on the matter said, the bank executives were told that the new model might be so effective at finding security weaknesses inside banks that hackers or other so-called third-party bad actors could get their hands on the information and exploit it. Anthropic itself has warned about the risks. The company said this week that the model's advancements were so powerful and potentially dangerous that they could not safely be released to the public yet and would instead be contained to a coalition of 40 companies that it called "Project Glasswing."
That group includes at least one bank, JPMorgan, the nation's largest, which earlier said it would use the software "to evaluate next-generation A.I. tools for defensive cybersecurity across critical infrastructure." The Trump administration and Anthropic are locked in a legal battle over the Defense Department's recent designation of the company as a "supply chain risk." The government issued that designation after Anthropic insisted on putting limits on the use of its A.I. technology in war. In a statement, a Treasury spokesperson said, "This week's meeting was convened by Secretary Bessent to initiate a process for planning and coordination of our approach to the rapid developments taking place in A.I." The existence of the meeting was reported earlier by Bloomberg News. The Fed declined to comment. "We're taking every step we can to make sure that everybody is safe from these potential risks, including Anthropic agreeing to hold back the public release of the model until our officials have figured everything out," Kevin A. Hassett, director of the National Economic Council, told Fox News on Friday. "There's definitely a sense of urgency." Logan Graham, an Anthropic executive, said in a statement that the new technology would help "secure infrastructure that is critical for global security and economic stability."

[7]

Anthropic's Mythos Is a Wake-up Call For Everyone, Not Just Banks

Mythos, a new artificial intelligence model that Anthropic PBC has teased as too dangerous to release, looked at first like a problem for banks. Days after the company announced the new technology, US Treasury Secretary Scott Bessent summoned Wall Street leaders to make sure they were taking precautions to defend their systems, creating invaluable publicity for Anthropic and raising questions about who gets an exclusive peek at its threatening progeny. The Treasury is now pushing for access to Mythos. One organization that already has it is the UK's AI Security Institute, which has become the world's top neutral arbiter of what counts as safe and secure AI. It found that some of the hype around Mythos is warranted. The model is indeed more capable of being used for complex cyberattacks than other AI tools such as OpenAI's ChatGPT or Google's Gemini. But it is most perilous for "weakly defended" or simplified systems. Large banks have some of the most secure IT in the world, and while Mythos and other powerful AI models pose a threat in the wrong hands, it's the much broader array of small and medium-sized companies that look most vulnerable to hackers and bad actors using the tools. Cyber specialists have long complained that companies treat security as an afterthought, and the result is online services and software that are riddled with bugs, handing hackers a possible way to infiltrate a computer system. Tech companies have an approach for dealing with this, called "responsible disclosure." Once a flaw is found in their software, they'll announce it to the world with a suggested fix, giving their customers time to apply the patch and move on with their lives. Microsoft Corp.'s version of this is Patch Tuesday, which despite its name refers to a monthly disclosure of flaws the company has found in Office 365, Windows and other products. IT staff at banks like Barclays Plc and Wells Fargo & Co.
will take those suggested patches, test them to make sure they don't break any of their existing systems, get sign-off from management, and then deploy them. That takes weeks or months. Up until the advent of generative AI, the process worked just fine because it would typically take even longer for bad actors to find a way to attack a system based on the flaw that had been disclosed. They'd have to study the bug and also experiment with different methods for exploiting it. Artificial intelligence tools have changed all that. Even two years ago, hackers could take the details of a disclosure and paste them into ChatGPT, then tell the bot to scan a public database of source code such as GitHub for similar patterns that could then be exploited. Let's say, for instance, that Microsoft announced its researchers had found a flaw in how Office 365 handles a file. A chatbot could not only suggest how to exploit it but quickly find other software, like Microsoft Outlook or Teams, that has similar deficiencies. This has all got even easier for hackers in the last few months, as AI companies have imbued their models with "agentic" capabilities, effectively giving them the power to act independently. Anthropic's Claude Cowork, released in January, can now carry out tasks like sending emails and making calendar appointments. For those who want to break into software, such tools won't just find weaknesses; they'll try different ways to hammer at them automatically until one method works. Mythos can even "chain" software bugs into multi-step attacks, something only highly skilled human hackers had been able to do previously. It's the equivalent of a burglar planning a series of steps for a break-in: finding that first open window, using it to unlock a door from the inside and then disabling the alarm. Each step alone isn't enough, but together they give full access. Till now, generative AI's impact on cyber security has been amorphous.
There was no single tool that could launch devastating new attacks, but large language models were still harnessed to supercharge old tricks of the trade. Hackers have used chatbots to polish up emails for phishing attacks to look more credible, or real-time avatar generators to create deepfake video calls that trick people into thinking a man in his living room is a young woman. But agentic AI is set to fuel the act of hacking itself, which has long been an opportunistic pursuit for the unscrupulous. So-called black hats tend not to go after banks because they're so secure. Instead they scan the web to spot vulnerabilities, be it a hospital they can infiltrate to make ransomware demands or a mom-and-pop shop. The recent advances in AI are a problem for these organizations because the moment a flaw is disclosed by a software provider, they now have precious little time to update and patch their systems. According to zerodayclock.com, the average time between a software flaw being made public and a working attack being built has collapsed from 771 days in 2018, to less than four hours today. Anthropic's disclosure of Mythos certainly benefits its own publicity efforts ahead of an initial public offering, adding to the mystique around the potency of its technology. But it's also forcing a much-needed reckoning over how the window of time between published IT flaws and their exploitation has effectively vanished.
That raises questions over whether "responsible disclosure" is such a smart idea in the first place, and whether the process of patching flaws over weeks and months is now fruitless. Even Wall Street can't answer these questions yet, but banks at least have the staffing and the money to work out the difficult structural changes needed to eventually do patches in near-real time. The bigger problem will be for smaller firms who need to move just as fast, and who will require technical and regulatory help that the market can't yet provide.

[8]

Trump administration blacklisted Anthropic - now tells banks to use its AI

In short: Treasury Secretary Scott Bessent and Fed Chair Jerome Powell are urging Wall Street's biggest banks to test Anthropic's Mythos AI model for cybersecurity vulnerabilities, even as the Pentagon fights Anthropic in court after branding it a supply chain risk for refusing to remove safety guardrails on autonomous weapons and mass surveillance. JPMorgan Chase, Goldman Sachs, Citigroup, Bank of America, and Morgan Stanley are all reportedly testing the model. Mythos, which found thousands of zero-day flaws across major operating systems and browsers, is being distributed through a restricted programme called Project Glasswing to roughly 50 organisations. UK regulators are also scrambling to assess the risks. The Trump administration is quietly encouraging America's largest banks to test technology from the same AI company it has spent two months trying to destroy. Treasury Secretary Scott Bessent and Federal Reserve Chair Jerome Powell summoned executives from JPMorgan Chase, Goldman Sachs, Citigroup, Bank of America, and Morgan Stanley this week and urged them to use Anthropic's new Mythos model to detect cybersecurity vulnerabilities in their systems, according to Bloomberg. The recommendation is remarkable for its contradiction. Anthropic is currently fighting the Department of Defense in federal court after Defense Secretary Pete Hegseth designated the company a "supply chain risk", a label that bars it from military contracts and directs defence contractors to stop using its technology. The designation came after Anthropic refused to remove two safety restrictions from its AI models: no use in fully autonomous weapons, and no deployment for mass surveillance of American citizens. Now, two of the administration's most senior economic officials are telling Wall Street to adopt the very product the Pentagon has tried to blacklist. Claude Mythos Preview is a frontier model that Anthropic did not explicitly train for cybersecurity.
The vulnerability-finding capability emerged as what the company describes as a downstream consequence of general improvements in code reasoning and autonomous operation. During testing, Mythos identified thousands of zero-day vulnerabilities, flaws previously unknown to software developers, across every major operating system and web browser. The capabilities were significant enough that Anthropic chose not to release the model publicly. Instead, it launched Project Glasswing, a controlled programme giving access to roughly 50 organisations including Amazon Web Services, Apple, Google, Microsoft, Nvidia, Cisco, CrowdStrike, Palo Alto Networks, and JPMorgan Chase. Anthropic has committed up to $100 million in usage credits and $4 million in direct donations to open-source security organisations as part of the initiative. The framing, a model "too dangerous to release", has drawn scepticism. Tom's Hardware noted that claims of "thousands" of severe zero-day discoveries relied on just 198 manual reviews, and that many of the flagged vulnerabilities were in older software or were impractical to exploit. Others in the security community suggested the restricted release looked less like responsible AI governance and more like a smart enterprise sales strategy: create scarcity, generate fear, and let the customers come to you. The collision between the Bessent-Powell recommendation and the Hegseth designation is not a matter of mixed signals; it is two branches of the same administration pursuing openly contradictory policies toward the same company. The Pentagon dispute began in February, when Hegseth gave Anthropic CEO Dario Amodei a Friday deadline to drop the company's safety restrictions or lose its $200 million defence contract. Amodei refused. Hegseth responded by declaring Anthropic a supply chain risk and President Trump ordered federal agencies to stop using its technology. A Pentagon official accused Amodei of having a "God complex."
Trump called Anthropic a "radical left, woke company." The courts have since split. A federal judge in California issued a preliminary injunction blocking the supply chain designation, writing that "nothing in the governing statute supports the Orwellian notion that an American company may be branded a potential adversary and saboteur of the US for expressing disagreement with the government." An appeals court in Washington, D.C., denied Anthropic's request to temporarily halt the blacklisting while the case proceeds. The net effect: Anthropic is excluded from DoD contracts but can continue working with other government agencies. It is into that gap, excluded from the Pentagon but not from the Treasury or the Fed, that Bessent and Powell stepped this week. JPMorgan Chase was the only bank listed as an official Project Glasswing partner, but Bloomberg reported that Goldman Sachs, Citigroup, Bank of America, and Morgan Stanley are all testing Mythos internally. The use cases reportedly include vulnerability detection, fraud-risk flagging, and compliance workflow automation across financial systems. The speed of adoption reflects a genuine fear. If Mythos can find zero-day vulnerabilities in operating systems and browsers, it can presumably find them in banking infrastructure too, and so can any sufficiently capable model that follows. The defensive logic is straightforward: better to find the holes before an adversary's AI does. The regulatory response has been international. The Financial Times reported that UK officials at the Bank of England, the Financial Conduct Authority, and HM Treasury are in discussions with the National Cyber Security Centre to examine potential vulnerabilities highlighted by Mythos. Representatives from major British banks, insurers, and exchanges are expected to be briefed within the fortnight. The Mythos episode exposes a structural problem in the administration's approach to AI. 
The same government that branded Anthropic a national security threat because it refused to remove safety guardrails is now urging the financial system to depend on Anthropic's technology for its own security. The message to Anthropic is incoherent: you are too dangerous to trust with defence contracts, but indispensable enough that the Treasury Secretary personally phones bank CEOs to recommend your product. For Anthropic, the contradiction is strategically useful. Every bank that adopts Mythos deepens the company's integration into critical national infrastructure, making the supply chain designation look increasingly absurd. For the administration, the episode reveals what happens when national security policy is driven by personal grievance rather than coherent strategy: the left hand blacklists what the right hand is busy deploying. The banks, for their part, appear untroubled by the contradiction. When the Treasury Secretary and the Fed Chair tell you to test something, you test it, regardless of what the Pentagon thinks about the company that made it.

[9]

Powell, Bessent discussed Anthropic's Mythos AI cyber threat with major U.S. banks

Federal Reserve Chairman Jerome Powell and Treasury Secretary Scott Bessent met with major U.S. bank CEOs this week to discuss the possible cyber risks raised by Anthropic's Mythos model, CNBC confirmed Friday. The bank heads were already in Washington, D.C., for another meeting, but a special gathering was called to discuss Mythos, according to a person familiar with the matter, who asked not to be named in order to share information about a confidential matter. Earlier this week, Anthropic rolled out the new artificial intelligence model in a limited capacity over concerns that hackers could exploit its capabilities. Anthropic did not immediately respond to CNBC's request for comment.

[10]

Anthropic Wins Accolades From Canada's AI Minister Over Mythos Approach

A Canadian cabinet minister praised Anthropic PBC's decision to introduce its Mythos model to select companies and allow them to test the technology before releasing it more widely. "Working with defenders first, rather than releasing this new model broadly, is the responsible path and gives people protecting critical systems a head start," Evan Solomon, Canada's minister responsible for artificial intelligence, said Tuesday after meeting with officials from the AI company. Anthropic has warned that Mythos is powerful enough that it may be capable of powering cyberattacks if companies don't test it against their own systems and build defenses ahead of any wider release. The San Francisco-based company has limited access to a small number of firms initially, including JPMorgan Chase & Co., Amazon.com Inc. and Apple Inc. They're all part of "Project Glasswing," which will work to secure the most important systems before similar AI models become available. US Treasury Secretary Scott Bessent and Federal Reserve Chair Jerome Powell summoned Wall Street leaders last week to a meeting on the related cyber risks. Treasury's technology team is now seeking to gain access to the Anthropic model so it can begin looking for vulnerabilities, a person familiar with the matter told Bloomberg News. Officials in the Canadian financial sector and government are also in active talks about the potential threats. Last week, members of a government-industry committee known as the Canadian Financial Sector Resiliency Group met to discuss Mythos. The group includes representatives from the Bank of Canada, regulators, banks and other financial firms.
Desjardins Group, a large financial co-operative based in the province of Quebec, said it's "actively preparing" for the launch of Mythos. "We are working closely with various working groups, both in Canada and internationally, to anticipate challenges and ensure that our organization is ready to adopt this technology in a responsible and secure manner," a spokesperson said in an emailed statement. Desjardins was hit by a huge leak in 2019 of the personal information of millions of Canadian customers, though it turned out to be the work of a rogue employee. Solomon's brief comments made no mention of the specific vulnerabilities posed by the technology, nor of any efforts by the Canadian government to get access to Anthropic's model for testing. Instead, he lauded the company's preparation. "This is the kind of proactive approach we expect from frontier AI companies: identify risks early, engage governments and the security community, and put safeguards in place before capabilities are widely available," he said. On Tuesday, Tobias Adrian, director of the monetary and capital markets department at the International Monetary Fund, warned that governments and regulators must "stay at the frontier" of rising threats from artificial intelligence, adding that it's important for global financial stability that security threats are quickly addressed.

[11]

Scoop: BNY gets access to OpenAI, Anthropic's advanced cyber models

Why it matters: Wall Street is working overtime to win the AI security race.
What they're saying: Anthropic and OpenAI recognize the importance of releasing their cyber-capable models to certain institutions early, BNY CEO Robin Vince tells Axios. It's key to protecting critical infrastructure, "and in our case, obviously the financial services world," Vince says.
* The AI labs also want feedback and real-world testing, Vince says.
* Other firms with access to these previews will be able to share lessons learned with one another as well as with the labs themselves, Vince said.
Catch up quick: The access comes after Treasury Secretary Scott Bessent and Fed Chair Jerome Powell called a meeting with the biggest names on Wall Street to discuss Mythos, first reported by Bloomberg and confirmed by Axios.
* The meeting focused on risks of AI-powered attacks on bank systems as well as preventative measures.
Zoom in: OpenAI's new model variant, GPT-5.4-Cyber, will be rolled out to a broader set of organizations than Anthropic's Mythos, which initially reached about 40 enterprises.
* While Anthropic signaled that its model was too dangerous to release broadly, OpenAI is making tools more widely available for defensive cyber work while still preventing nefarious actors from accessing them, Axios' Sam Sabin writes.
Follow the money: BNY is all-in on AI.
* The bank, which plans to announce its earnings later this morning, has over 100 digital employees that have their own tasks, managers and email addresses.
* Under Vince's leadership, BNY has become the best-performing stock in an index tracking a group of major banks, up 218%.
What we're watching: How banks maintain their long-held status as titans of cybersecurity defense in an AI-powered world.

[12]

Goldman Sachs chief 'hyper-aware' of risks from Anthropic's Mythos AI

US bank has the Claude model and is working closely with the tech firm to improve cyber protection Goldman Sachs's chief executive, David Solomon, has said he is "hyper-aware" of the capabilities of Anthropic's Mythos AI model and is working "closely" with the tech firm after it issued warnings about the cybersecurity risk it poses. The US bank had been monitoring the rapid advances in artificial intelligence, including large language models (LLMs), as part of wider efforts to protect itself from hackers. "Obviously the LLMs are making rapid progress and we're hyper-aware of the enhanced capabilities of these new models with the help of the US government and the model publishers," Solomon told analysts on an earnings call on Monday. That included Anthropic, the company behind the Claude family of AI tools. Last week it claimed that its latest model, Mythos, posed an unprecedented risk because of its ability to expose flaws in IT systems. "AI models have reached a level of coding capability where they can surpass all but the most skilled humans at finding and exploiting software vulnerabilities," Anthropic said in a blogpost last Wednesday. "The fallout - for economies, public safety, and national security - could be severe." Solomon said on Monday: "We're aware of Mythos and its capabilities ... We have the model. We're working closely with Anthropic and all of our security vendors to kind of harness frontier capabilities wherever it's possible. And this will continue to be an important focus. "We are very focused on supplementing our cyber and infrastructure resilience. And this is part of our ongoing capabilities that we have been investing in, and are accelerating our investment in." The news comes after the US Treasury secretary, Scott Bessent, summoned Solomon and other big American bankers to Washington to discuss the Mythos model last week. 
That meeting focused on heads of so-called systemically important banks, where regulators believe that a major disruption to their operations, or their potential collapse, would put financial stability at risk. On Monday the UK government's AI Security Institute (AISI) warned that Mythos was a "step up" over previous models in terms of the cyber threat it posed. AISI said Mythos could carry out attacks that required multiple actions and discover weaknesses in IT systems without human intervention. It said these tasks would normally take human professionals days to complete. Mythos was the first AI model to successfully complete a 32-step simulation of a cyber-attack created by AISI, solving the challenge in 3 out of its 10 attempts. AISI said Mythos appears to be capable of autonomously attacking small, weakly defended IT systems, but it could not say for sure whether it could attack well-defended systems because its tests lack security features such as defensive tools. The AISI blogpost ended with a warning that future advanced AI models will only improve on Mythos, so "investment now in cyber defence is vital". UK regulators are due to raise the issue of Mythos's risks with British bank bosses and government officials in the coming weeks. The Cross Market Operational Resilience Group (CMorg), made up of chief executives as well as officials from the Treasury, Bank of England, Financial Conduct Authority and National Cyber Security Centre, is due to meet within the next fortnight. The Bank of England, which is handling communications regarding CMorg, declined to comment.

[13]

The AI that found 27-year-old vulnerabilities no human ever caught before just forced an emergency meeting with every major Wall Street CEO | Fortune

Treasury Secretary Scott Bessent and Fed chair Jerome Powell reportedly convened Wall Street leaders on Tuesday for an emergency meeting about the heightened cybersecurity risk posed by Anthropic's latest AI model. Bessent and Powell assembled the group of high-powered execs at the Treasury's headquarters to ensure banks were aware of the cyber risks presented by Anthropic's new model, Mythos, and similar future models, Bloomberg and the Financial Times reported. Sources who spoke to Bloomberg said those in attendance included Citigroup CEO Jane Fraser, Morgan Stanley CEO Ted Pick, Bank of America CEO Brian Moynihan, Wells Fargo CEO Charlie Scharf, and Goldman Sachs CEO David Solomon. JPMorgan CEO Jamie Dimon was also invited, but was unable to attend, the sources said. The Federal Reserve declined to comment to Fortune. The Treasury didn't immediately respond to Fortune's requests for comment. The meeting comes just weeks after Fortune exclusively reported Anthropic was developing an unreleased model described by the company as "by far the most powerful AI model" it had ever developed, which the AI company inadvertently made public last month through its content management system. Later, the company acknowledged that model was Claude Mythos. Anthropic on Tuesday released a report entitled "Assessing Claude Mythos Preview's cybersecurity capabilities," citing the powerful capabilities of its new model. Among those capabilities, Mythos Preview was able to find many 10- and 20-year-old vulnerabilities, including a 27-year-old vulnerability in OpenBSD, an operating system with a reputation as one of the most secure. Anthropic briefed senior U.S. government officials and industry stakeholders on Mythos Preview's capabilities ahead of its release, someone with knowledge of the matter told Fortune.
In a blog post, the company said it is willing to work with officials at all levels of government to ensure national security is a priority when rolling out new AI models, and that the U.S. maintains a lead in AI technologies. Anthropic told Fortune that partnering with the government was the company's plan from the start (the company is currently in a legal battle with the Pentagon after the Defense Department blacklisted it for placing restrictions on use of its AI technologies). In partnership with Amazon Web Services, Amazon, Google, JPMorgan Chase, among other key tech companies, Anthropic launched Project Glasswing this week, an initiative aimed at securing critical software amid AI advancements. As part of the partnership, Anthropic said in a blog post it would share what it learns with the entire industry. Aside from tech and financial services partners, the company has extended access to 40 additional organizations who build or deploy critical software infrastructure, and committed up to $100 million in Mythos Preview usage credits, the most basic AI usage unit. Anthropic noted the project was launched as a reaction to the capabilities the company has observed in its new frontier model Mythos that it believes could "reshape cybersecurity." "Given the rate of AI progress, it will not be long before such capabilities proliferate, potentially beyond actors who are committed to deploying them safely," the post warned. "The fallout -- for economies, public safety, and national security -- could be severe." Anthropic said in the post Tuesday it would release the new model initially to a limited group of industry partners to "enable defenders to begin securing the most important systems before models with similar capabilities become broadly available." Other business leaders have sounded the alarm on the cybersecurity risks powerful AI models pose.
Dimon outlined in his annual letter to shareholders the cybersecurity risks major industries and corporations face from AI, saying cybersecurity "remains one of our biggest risks." He added, "AI will almost surely make this risk worse". As part of Project Glasswing, JPMorgan aims to reduce cyber risks stemming from AI's fast-evolving capabilities. "Promoting the cybersecurity and resiliency of the financial system is central to JPMorganChase's mission, and we believe the industry is strongest when leading institutions work together on shared challenges," Pat Opet, JPMorgan chief information security officer, said in a statement. "Project Glasswing provides a unique, early stage opportunity to evaluate next-generation AI tools for defensive cybersecurity across critical infrastructure both on our own terms and alongside respected technology leaders," he added.

[14]

Powell, Bessent Warn Banks About Security Risks From Anthropic's Mythos AI: Bloomberg - Decrypt

Anthropic has limited access to the model while it evaluates potential security risks. U.S. Treasury Secretary Scott Bessent and Federal Reserve Chair Jerome Powell reportedly convened a meeting with Wall Street bank CEOs earlier this week to warn about cybersecurity risks tied to a new artificial intelligence model from Anthropic. According to a report by Bloomberg, the meeting included executives from Citigroup, Bank of America, Wells Fargo, Morgan Stanley, and Goldman Sachs. Officials discussed Anthropic's new AI model Mythos, which has recently drawn broad concern over its apparent advanced cybersecurity capabilities. Officials convened the meeting to ensure banks understand the risks posed by systems capable of identifying and exploiting software vulnerabilities across operating systems and web browsers, and to encourage institutions to strengthen defenses against potential AI-assisted cyberattacks targeting financial infrastructure. Security researchers have warned that tools capable of automatically discovering vulnerabilities could accelerate both defensive security work and malicious hacking if misused. Anthropic's Mythos model first surfaced in March after draft materials about the system leaked online, revealing what the company described as its most capable AI model yet. In testing, the system reportedly found thousands of previously unknown software vulnerabilities, including zero-day flaws across major operating systems and web browsers. Anthropic researchers said in a report earlier this week that Mythos Preview's vulnerability-discovery capabilities were not intentionally trained, but instead emerged from broader improvements in the model's coding, reasoning, and autonomy. "The same improvements that make the model substantially more effective at patching vulnerabilities also make it substantially more effective at exploiting them," the firm wrote.
Because of those capabilities, Anthropic has restricted access to a small group of cybersecurity organizations. "Given the strength of its capabilities, we're being deliberate about how we release it," Anthropic said in a statement. "As is standard practice across the industry, we're working with a small group of early access customers to test the model. We consider this model a step change and the most capable we've built to date." To address that risk, Anthropic is testing Mythos through Project Glasswing, a collaboration with major technology and cybersecurity companies that uses the model to identify and patch vulnerabilities in critical software before attackers can exploit them. "Project Glasswing is a starting point. No one organization can solve these cybersecurity problems alone," the company said in a statement. "Frontier AI developers, other software companies, security researchers, open-source maintainers, and governments across the world all have essential roles to play."

[15]

Why Anthropic's Mythos model has Washington and Wall Street worked up

Anthropic's most powerful new AI model, Mythos, is too dangerous to release to the public, the company says, sparking urgent discussions with governments and financial regulators. Anthropic is in discussions with the US government over its new Mythos AI model, which the company has said is too powerful to release to the public as it "poses unprecedented cybersecurity risks". The banking industry is also sounding the alarm. "The government has to know about this stuff," an Anthropic co-founder said on Monday at the Semafor World Economy event in Washington. "Absolutely, we're talking to them about Mythos, and we'll talk to them about the next models as well," he added. Referencing the public dispute with the government, which led to the company being labelled a supply-chain risk last month, he said, "I don't want that to get in the way of the fact that we care deeply about national security." The supply-chain risk designation followed the collapse of negotiations over Anthropic's efforts to limit how the US defense department can use its AI models. The talks come after Treasury Secretary Scott Bessent convened a meeting of senior American bankers in Washington to discuss the Mythos model last week. At the meeting, the banking executives were encouraged to use Anthropic's Mythos model to detect vulnerabilities, according to Bloomberg. Anthropic also said it would limit the release of its new AI model to a few tech and cybersecurity firms. The list includes Amazon, Apple and JPMorgan Chase. Goldman Sachs, Citigroup, Bank of America and Morgan Stanley are reportedly testing the Anthropic model too, Bloomberg reported. On Monday, the United Kingdom's government AI Security Institute (AISI) issued a warning that Mythos was a "step up" over previous models in terms of the cyber threat it posed. Meanwhile, the Financial Times reported that UK financial regulators are also discussing Mythos' potential risks.

[16]

IMF chief says she's concerned about cybersecurity risks posed by Anthropic's latest AI model

Washington -- The head of the International Monetary Fund said she is concerned about a powerful new AI model from Anthropic that poses major cybersecurity risks, warning "time is not our friend on this one." Kristalina Georgieva, the IMF's managing director, said in an interview set to air Sunday on "Face the Nation with Margaret Brennan" that the world does not have the ability "to protect the international monetary system against massive cyber risks." "The risks have been growing exponentially," Georgieva said. "Yes, we are concerned. We are very keen to see more attention to the guardrails that are necessary to protect financial stability in the world of AI." On Tuesday, Federal Reserve Chair Jerome Powell and Treasury Secretary Scott Bessent held an urgent meeting with Wall Street leaders to discuss the cybersecurity risks posed by Anthropic's Claude Mythos Preview, sources told CBS News. A Treasury Department spokesman said in a statement that "additional coordination meetings by Treasury are planned across a number of regulators and institutions on an ongoing basis to address these developments, as well as a host of other issues." In a blog post earlier this week, Anthropic said the model has demonstrated "a leap" in the ability to spot cybersecurity vulnerabilities -- some of them decades old -- and exploit them. Anthropic is only releasing the model to select partners so they can use it to harden their systems. "Mythos Preview has already found thousands of high-severity vulnerabilities, including some in every major operating system and web browser. Given the rate of AI progress, it will not be long before such capabilities proliferate, potentially beyond actors who are committed to deploying them safely. The fallout -- for economies, public safety, and national security -- could be severe," the company said. 
Georgieva said key financial institutions, including central banks, need to "work together" and be "very attentive" in managing the risks of cyberattacks. "It is an issue that easily can present itself in other parts of the world, and that is why we need people to cooperate," she said.

[17]

US summoned bank bosses to discuss cyber risks posed by Anthropic's latest AI model

Reports say Fed chair Jerome Powell among attenders at meeting in Washington. The US Treasury secretary, Scott Bessent, summoned major American bank chiefs to a meeting in Washington this week amid concerns over the cyber risks posed by Anthropic's latest AI model, according to reports. Bank bosses and the Federal Reserve chair, Jerome Powell, were said to have gathered at the Treasury headquarters for the meeting after the release of the Claude Mythos AI model that Anthropic says poses unprecedented cybersecurity risks. A recent leak of Claude's code prompted the startup to release a blogpost at the beginning of the month saying that AI models had surpassed "all but the most skilled humans at finding and exploiting software vulnerabilities", adding: "The fallout - for economies, public safety, and national security - could be severe." This week's meeting was reportedly called while bank bosses were already in Washington for a lobby group meeting, with a guest list focused on heads of systemically important banks, meaning regulators believe a major disruption to their operations, or their potential collapse, would put financial stability at risk. Attenders included the Goldman Sachs chief executive, David Solomon, Bank of America's Brian Moynihan, Citigroup's Jane Fraser, Morgan Stanley's Ted Pick and the Wells Fargo boss Charlie Scharf, according to Bloomberg, which first reported details of the meeting. JP Morgan's Jamie Dimon was invited but unable to attend. In an annual letter to shareholders, published earlier this week, Dimon warned that cybersecurity "remains one of our biggest risks" and that "AI will almost surely make this risk worse". Anthropic has said that its Mythos model, yet to be shared with the public, has exposed thousands of vulnerabilities in software and popular applications, prompting it to limit the release of the new model to a small clutch of businesses, including Amazon, Apple and Microsoft.
It marks the first time Anthropic has restricted the release of any of its products. The networking companies Cisco and Broadcom have also gained access, along with the Linux Foundation, which promotes the free, open-source Linux computer operating system. It comes amid concerns that hackers could end up using such tools for figuring out passwords or cracking encryption that is intended to keep data safe. The company said some of the vulnerabilities uncovered by Mythos were up to 27 years old, and none are believed to have been noticed by their creators or tech monitors before being identified by the AI model. The meeting comes weeks after the US government designated Anthropic as a supply chain risk, a designation Anthropic is fighting in court. The Federal Reserve, Anthropic and the US banks declined requests for comment from Bloomberg. The Treasury did not respond to the news outlet's request for comment.

[18]

US Treasury Seeking Access to Anthropic's Mythos to Find Flaws

The US Treasury Department's technology team is seeking to gain access to Anthropic PBC's Mythos AI model so it can begin hunting for vulnerabilities, according to a person familiar with the situation. Treasury Chief Information Officer Sam Corcos was aiming to gain access to the model, which Anthropic has been releasing to a limited number of institutions, as soon as this week, the person said, asking not to be identified because the information isn't public. Corcos briefed the Treasury's cybersecurity team on the technology last week and directed it to prepare for the eventual threats from powerful AI systems, the person said. Treasury didn't respond to requests for comment. Anthropic declined to comment. Treasury Secretary Scott Bessent and Federal Reserve Chair Jerome Powell summoned Wall Street leaders last week to an urgent meeting on concerns that Mythos will usher in an era of greater cyber risk. Anthropic has warned that the model may be capable of powering cyberattacks if companies don't test it against their own systems and build defenses ahead of any wider release. At the meeting last week, Wall Street leaders were urged to take Mythos seriously and to use it to detect vulnerabilities. The Treasury Department is seeking access from Anthropic despite the Pentagon labeling the artificial intelligence company a US supply chain risk earlier this year. The government made the declaration after a dispute with Anthropic over how its AI technology may be used by the military, and set a six-month period for the company to hand over AI services to another provider. The startup is fighting the designation in federal court.
Corcos -- co-founder of a health tech startup and a part of the Department of Government Efficiency, or DOGE, that Elon Musk led to orchestrate cuts at agencies -- was named Treasury's chief information officer in mid-2025. Corcos had encouraged the use of Anthropic's Claude AI tools within the Treasury's technology team before the company was labeled a supply chain risk, according to the person familiar with the situation. During testing of Mythos, Anthropic's security team found it was capable of identifying and then exploiting vulnerabilities "in every major operating system and every major web browser when directed by a user to do so." In one case, it wrote a web browser exploit that chained together four vulnerabilities. In a recent blog, Anthropic introduced an initiative called Project Glasswing through which a limited number of organizations are testing Mythos. Anthropic said in the post that it has been in "ongoing discussions" with government officials about the model and its capabilities. "We are ready to work with local, state, and federal representatives," the startup said. Wall Street banks themselves have begun testing Mythos internally. While JPMorgan Chase & Co. was the only bank named as part of an initiative to test the Mythos model, other major financial institutions including Goldman Sachs Group Inc., Citigroup Inc., Bank of America Corp. and Morgan Stanley have also gained access, people familiar with the matter told Bloomberg last week.

[19]

Bessent, Powell warn bank CEOs about Anthropic model cyber risks

US Treasury Secretary Scott Bessent and Federal Reserve chair Jerome Powell summoned Wall Street leaders to an urgent meeting on concerns that the latest artificial intelligence model from Anthropic, Mythos, will usher in an era of greater cyber risk. Bessent and Powell assembled the group at Treasury's headquarters in Washington on Tuesday (Wednesday AEST) to make sure banks are aware of possible future risks raised by Mythos and potential similar models, and are taking precautions to defend their systems, according to people familiar with the matter who asked not to be identified.

[20]

Fed Chair Jerome Powell, Treasury's Bessent and top bank CEOs met over Anthropic's Mythos model

Richard Escobedo covers economic policy at CBS News and is a coordinating producer at Face the Nation with Margaret Brennan. He joined CBS in 2018 and is a graduate of Texas Christian University in Fort Worth, Texas. Federal Reserve Chair Jerome Powell and Treasury Secretary Scott Bessent met with top bank CEOs in a closed-door meeting this week to discuss the cybersecurity risks posed by Anthropic's latest AI model, Mythos, sources told CBS News. JPMorgan Chase chief executive Jamie Dimon was invited but was unable to attend, according to the sources. The meeting was earlier reported by Bloomberg News, which said Powell and Bessent summoned the financial executives to the Treasury Department's Washington, D.C., headquarters to discuss the potential risks from Mythos and other AI models. Anthropic, the developer of generative AI chatbot Claude, on Tuesday said it was forming a project with several major tech companies, including Amazon, Apple and Nvidia, to use Mythos to strengthen cybersecurity defenses. Anthropic added that it will not widely release Mythos because of its advanced capabilities, which have uncovered vulnerabilities in major operating systems and web browsers. "Given the rate of AI progress, it will not be long before such capabilities proliferate, potentially beyond actors who are committed to deploying them safely," Anthropic said in a post about the new project. "The fallout -- for economies, public safety, and national security -- could be severe." The effort, called Project Glasswing, "is an urgent attempt to put these capabilities to work for defensive purposes," Anthropic said on Tuesday. In 2023, the Biden Administration identified AI as a potential risk to financial stability. It was the first time that designation had been made, CBS News reported at the time.

[21]

Anthropic's Mythos puts DC, Wall Street on high alert

The limited release of Anthropic's new Mythos model is putting Washington officials on high alert after the AI firm's warning about the model's security risks sent shockwaves through the tech industry and sparked debate there. Within days of being informed of Anthropic's new technology, the White House ratcheted up a multipronged response involving Trump administration leaders across agencies to evaluate just how powerful AI is becoming. Anthropic's announcement follows years of AI warnings, but last week it seemingly landed differently, upping pressure on Washington to stay ahead even as some question the extent of the latest threat. "A bunch of people in the [Trump] administration are coming to the realization" AI development has not plateaued as some officials predicted last summer, Dean Ball, the co-author of the Trump White House AI Action plan, told The Hill in an interview Monday. "They are realizing, 'My goodness, I'm going to have to jump in here and get involved and get my hands dirty' because this is not being handled,'" said Ball, adding, "The administration was not prepared to deal with this, that's just the frank reality." Anthropic announced last week it will hold back the full release of Claude Mythos Preview, claiming the model is too dangerous for the public at this stage. The model was released to a small group of technology firms and critical software builders, which will use the model in their defensive security work and share their findings with Anthropic under a new initiative called Project Glasswing. Partners including Cisco, Google, and Palo Alto Networks came out in support of the project, with the latter company calling it a "game changer" for finding hidden vulnerabilities. Mythos has already found thousands of high-severity vulnerabilities, some of which date back more than two decades, according to Anthropic. 
While the tool can help governments find these vulnerabilities, it also makes it much easier for hackers to exploit these security gaps. "This time, the threat is not hypothetical," Anthropic's researchers wrote in a Mythos assessment. "Advanced language models are here." Prior to external release, Anthropic briefed senior officials across the U.S. government on Mythos's offensive and defensive cyber capabilities, an Anthropic official confirmed to The Hill on Monday. This includes conversations with the Cybersecurity and Infrastructure Security Agency and the Center for AI Standards and Innovation, the official said. On the same day Project Glasswing was announced, Treasury Secretary Scott Bessent and Federal Reserve Chair Jerome Powell convened a group of Wall Street executives to discuss the cybersecurity concerns, multiple people familiar with the meeting told The Hill. Executives, including Bank of America CEO Brian Moynihan and Goldman Sachs CEO David Solomon, attended the meeting, Bloomberg first reported. The Hill's sources said several of the executives were already in Washington for the Financial Services Forum, an advocacy organization of the country's eight largest banks. Ahead of Anthropic's announcement, Bessent also joined Vice President Vance on a call with a group of technology leaders, including Anthropic CEO Dario Amodei, xAI CEO and former Trump adviser Elon Musk and OpenAI CEO Sam Altman, to discuss the security of AI models, CNBC reported. "There's definitely a sense of urgency, it's something that is relatively new. The AI has developed a way to find hacks in other software," National Economic Council Director Kevin Hassett told Fox News on Friday. And later last week, The Wall Street Journal reported National Cyber Director Sean Cairncross will lead a group of federal officials to identify security vulnerabilities in critical infrastructure and strengthen government systems against AI exploitation. 
When asked about this project, an official with the White House said it is "proactively engaging across government and industry," and that the federal government continues to "work with AI companies to ensure their models help secure critical software vulnerabilities." Some in the cybersecurity space welcome the efforts from Washington, saying the model is a "long time coming" as AI rapidly advances. "I don't think anyone can solve this alone," Brad Medairy, the executive vice president of integrated cyber business at Booz Allen Hamilton, told The Hill. "I'm really encouraged, the federal government is being proactive and engaging and taking this seriously." "Our senior-most leaders in government are like, 'let's get after this,'" Medairy added. "Cybersecurity has been top of mind for governments all over the world for many years," said Gil Messing, chief of staff at Check Point, a cybersecurity vendor and government contractor. "But when you add such a powerful toolkit that in the past was either imaginary or only being used by superpowers [and] now it's being commoditized ... the threat is real." Wall Street was similarly receptive to warnings. Solomon told investors on Monday's earnings call that the bank has access to Mythos and is working with Anthropic and other security vendors to bolster security. "Cybersecurity has long been at the core of our business," Solomon said. "This is part of our ongoing capabilities that we have been investing in and are accelerating our investment in. We're aware of Mythos and its capabilities. We have the model. We're working closely with Anthropic and all of our security vendors." Although CEO Jamie Dimon could not attend last week's meeting with Bessent and Powell, JPMorgan Chase was also publicly listed as a partner for Project Glasswing. While players in Washington and on Wall Street were quick to react, some in the Trump administration's orbit remain skeptical of Anthropic's claims. 
David Sacks, who recently ended his role as the White House's AI and cryptocurrency czar, posted numerous times over the weekend about his hesitations. "Anthropic has proven it's very good at two things. One is product releases, the second is scaring people," Sacks said on the "All-In" podcast last week. "We've seen a pattern in their previous releases ... at the same time they roll out a new model or new model [system] card, they also roll out some study showing the worst possible implication where the technology could lead." In a post on the social platform X, Sacks wrote, "The world has no choice but to take the cyber threat associated with Mythos seriously. But it's hard to ignore that Anthropic has a history of scare tactics." He attached a screenshot of what appears to be an AI-generated list of links where Amodei highlighted "alarming AI risk narratives frequently tied to model launches." Katie Miller, a former employee of Musk and the Department of Government Efficiency and the wife of top White House aide Stephen Miller, added on X, "It sounds like Anthropic is running a giant public relations scheme to manipulate industry fears. A playbook Dario has used in the past." Ball, who left the White House last August, said there is a "real dissonance" in the administration over AI's capabilities. Even as it continues to release some of the most-used AI products, Anthropic has tried to set itself apart in the AI space, often discussing safety and the risks the technology poses to national security and the workforce. This mission has put Anthropic at odds with the Trump administration, which is forging ahead with a pro-innovation and light-regulation agenda for the technology. Tensions reached a boiling point earlier this year, when the Pentagon cut ties with the AI firm after the company requested certain assurances over how the government and military can use AI. 
Looking forward, tech industry observers predict other companies could soon follow Anthropic's suit and release similar types of products. "You see coalescence around these new technology trends ...there's safety in numbers," said David Menninger, the executive director of software research for ISG, an AI-centered tech research and IT advisory firm. "You rarely want to be the only one consuming a certain type of technology. Do other large language model providers start to provide similar types of capabilities that would compete with some of these things that Mythos is offering?" he added.

[22]

Goldman Is Using Mythos, Working With Anthropic on Cyber Risks

Goldman Sachs is working closely with Anthropic and security vendors, and is aware of the model's capabilities, with CEO David Solomon saying "cybersecurity has long been at the core of our business". Goldman Sachs Group Inc. is "supplementing" its cyber and infrastructure resilience after regulators warned the largest US banks about the latest artificial intelligence model from Anthropic PBC, Chief Executive Officer David Solomon said. Treasury Secretary Scott Bessent and Federal Reserve Chair Jerome Powell summoned Wall Street leaders last week to an urgent meeting on concerns that Mythos, the latest AI model from Anthropic, will usher in an era of greater cyber risk. "Cybersecurity has long been at the core of our business," Solomon said Monday on an earnings conference call with analysts. "This is part of our ongoing capabilities that we have been investing in and are accelerating our investment in. We're aware of Mythos and its capabilities. We have the model. We're working closely with Anthropic, and all of our security vendors." Wall Street banks including Goldman have started to test the Mythos model internally, Bloomberg News previously reported. Government officials didn't raise any specific threat to financial institutions and more generally encouraged the banks to run the model against their own systems to improve their own defenses. Many of the executives called to last week's meeting, including Solomon, were in Washington already for a meeting of the Financial Services Forum, an advocacy group made up of the biggest lenders. "By the way, it is not the first meeting that that group has gone over to Treasury to talk about cybersecurity risks over a number of years," Solomon said Monday. "This is something the industry is focused on. It's something we're focused on. And there's nothing new in that focus."

[23]

Wall Street CEOs' First Reactions to Anthropic's Mythos