Google's Project Astra: AI-Powered Smart Glasses Inch Closer to Reality

11 Sources

[1]

Google thinks it finally has smart glasses that are actually smart thanks to Gemini AI

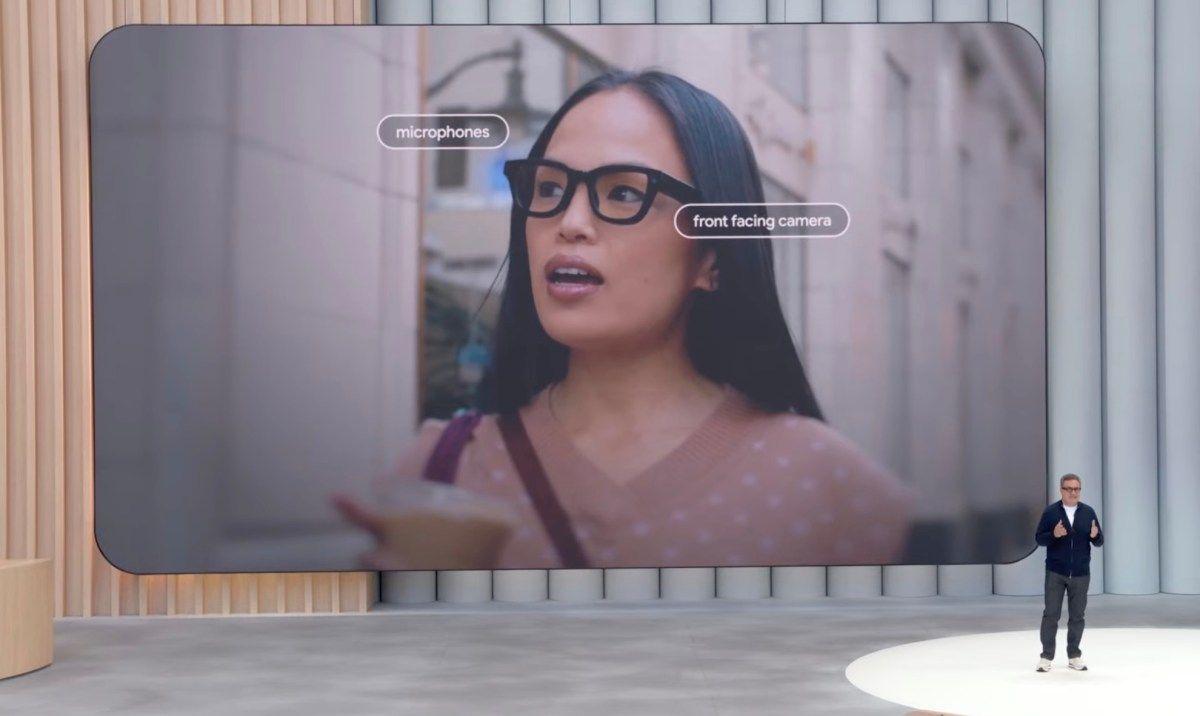

Google Glass may not have taken off like the tech giant hoped, but it seems that Google remains convinced that we're going to want wearable mixed-reality tech at some point. Advances from Meta and Apple seem to have only encouraged it, and now Google's putting two of its favorite techs together: smart glasses and AI. We first got a glimpse of Google's Project Astra always-on AI agent back in May. Now the company's shared a new demo of its prototype for a multimodal virtual assistant that would live in your phone - and your glasses - and would be able to see everything you do. At the briefing for the launch of Gemini 2.0 this week, Google revealed that a small group is to begin testing Project Astra on prototype Gemini AI glasses as part of its Trusted Tester programme. A group of testers will also test Project Astra on Android phones. The idea is that having an AI agent in glasses form will help with things like providing directions and language translations in situations where we want to have our hands free. Compared to where things were in May, Google says Astra can now understand more languages, mix languages and understand accents and "uncommon words". It can also understand language "at about the latency of human conversation" with new native audio understanding and streaming capabilities. Meanwhile, Astra can now access Google Maps, Lens and Search to answer questions, and it now has 10 minutes of in-session memory and can remember previous conversations. While Project Astra remains a prototype, Google's also announced the launch of a new operating system, Android XR, for both smart glasses and headsets. Available as a preview for developers for now, it supports tools like ARCore, Android Studio, Jetpack Compose, Unity, and OpenXR to allow developers to start building apps and games for upcoming devices. The operating system, which will power a Samsung headset known internally as Project Moohan, signals Google's intention to take on rivals Apple and Meta in the AR and mixed-reality space.
Apple's expensive Vision Pro was hardly destined for mainstream success, but it introduced the concept of spatial computing, which Google appears to have taken pointers from. Meanwhile, Meta has been aiming its Quest headsets and Ray-Ban smart glasses at a more mainstream market. While the former remain mainly popular among gamers, the collaboration with Ray-Ban does seem to have helped make smart glasses more appealing to the public by promoting them as fashion accessories that don't obviously look like smart glasses. Google's prototype looks much more powerful thanks to its integration of Gemini with various apps and its ability to remember, but we don't know what form it will take if it ever reaches the market. We're told that Android XR is a collaboration with Samsung and Qualcomm, and we've also seen a glimpse of what looks to be a Samsung mixed-reality headset. This could mean that it will be Samsung rather than Google itself that launches the first Gemini AI glasses. That makes me wonder if they would be as appealing to the fashion-conscious consumer as the Meta-Ray-Ban offering. I'm also wondering how Google's going to make its Project Astra capabilities practical given the current limitations on batteries and potential privacy obstacles if the AI agent needs to be recording all the time (I don't see how it will know where I left my keys if it isn't). Microsoft delayed the rollout of its controversial Recall tool for Copilot+ PCs partly due to such concerns. For an overview of what the headset market looks like at the moment, see our pick of the best VR headsets. Just last week, we saw the launch of the world's first Cinematic AR glasses with the XREAL One and XREAL One Pro Series, described as "the most advanced consumer AR glasses on the market" by XREAL's co-founder and CEO, Chi Xu.

[2]

Google smart glasses just got closer to real with huge Project Astra update

While OpenAI is meting out its big AI announcements over 12 Days, Google has decided to drop the whole kit and caboodle on us by announcing Gemini 2.0. And we just got another sneak peek at Google's new prototype smart glasses. Google claims that it is entering the "agent era" with Gemini 2, revealing a plethora of AI agents and the ability to create your own AI agents. One of the more interesting agentic announcements revolves around Project Astra, which was first teased during Google I/O back in May where it stole the show. Project Astra is meant to be a "universal AI agent" that works on tasks in everyday life via your phone's camera and voice recognition software. This will morph into smart glasses a la Meta's Ray-Bans. Google CEO Sundar Pichai teased Astra in a video on X and Google posted a YouTube video showing Astra in action. During I/O the company showed off potential Astra capabilities working via smart glasses. While the AI agent is supposed to come to phones first, the smart glasses teaser really showed how it might work on other platforms. Google claimed that Astra could understand and respond to the world "like people do." As with many AI reveals this year, Astra was shown responding to and with conversational language. The model appeared to have impressive spatial understanding and video processing during the May demo. Initially Astra was promised to be released before the end of the year. However, in October, during an earnings call, Google CEO Sundar Pichai revealed that Astra had been delayed into 2025. So, we weren't expecting much Astra news before the year was out. As part of today's announcement, Google revealed several updates to the model. Google did not provide more of a timeline for when people might get to see Astra in the real world. However, in Google's announcement post, the company revealed that its tester program is starting to evaluate Project Astra on prototype glasses.
We wonder if those glasses are the new smart glasses from Samsung we expect to be announced in January considering the partnership with Google and Qualcomm for a new XR platform. Those glasses aren't supposed to feature a camera, yet. The glasses featured in the above video appear to be something else. Meta may be in the lead with smart glasses, but other companies, like Xreal with its new X1-powered AR glasses, are catching up. Google added that it is working on bringing Astra to the Gemini app, and other form factors, hinting at more than just phones and glasses.

[3]

Google teases smart glasses with amazing Project Astra update

Google has been hard at work improving Project Astra since it was first shown during Google I/O this year, with the AI bot that understands the world around you being one of the major updates to arrive with Gemini 2.0. Even more excitingly, Google says it's "working to bring the capabilities to Google products like the Gemini app, our AI assistant, and to other form factors like glasses." What's new in Project Astra? Google says language has been given a big performance bump, and Astra can now better understand accents and less commonly used words, plus it can speak in multiple languages, and in combinations of languages too. It means Astra is more conversational, and speaks more like we do every day. Astra "sees" the world around it and now uses Google Lens, Google Maps, and Google Search to inform it. A big part of Project Astra's appeal is its ability to remember things, and Google has given it a 10-minute in-conversation memory, ensuring conversation flows and questions don't need to be phrased in a certain way to be understood. Its long-term memory has also been improved. The final update is around latency, which Google says now matches the flow of human conversation. Take a look at the video above to see how Project Astra is progressing, as it's looking very exciting indeed. We were impressed with Project Astra when we saw it demonstrated at Google I/O but frustrated that Google didn't effectively even tease, let alone announce, a pair of smart glasses that would work with it in the future. It has now teased such a product with the announcement of Gemini 2.0 and the Project Astra updates. The wearable is being tested in the real world, as you can see in the Project Astra video, where it's obvious how well Astra suits hands-free use. Such a product can't come soon enough as brands like Meta, with the help of Ray-Ban, and newcomers like Solos are already ahead of the game.
Even Samsung is expected to launch some kind of smart eyewear in 2025. What about Gemini 2.0? Google's CEO Sundar Pichai calls it the company's most capable model yet and said, "If Gemini 1.0 was about organizing and understanding information, Gemini 2.0 is about making it much more useful." While the updates and new features are mostly of interest to developers at the moment, we will see Gemini 2.0 in action on our phones and in our searches. A chat-optimized, experimental version of Gemini 2.0 Flash -- the first model in the Gemini 2.0 family -- will be available as an option in the mobile and desktop Gemini app for you to try, while Gemini 2.0 will enable AI Overviews in your Google searches to answer more complex questions, including advanced math equations and coding queries. This feature begins testing this week and will be more widely available in early 2025. There's no information on when Project Astra or the prototype smart glasses will be more widely available.

[4]

Google wants to sell those Project Astra AR glasses some day, but it won't be today

Google is slowly peeling back the curtain on its vision to, one day, sell you glasses with augmented reality and multimodal AI capabilities. The company's plans for those glasses, however, are still blurry. At this point, we've seen multiple demos of Project Astra -- DeepMind's effort to build real-time, multimodal apps and agents with AI -- running on a mysterious pair of prototype glasses. On Wednesday, Google said it would release those prototype glasses, armed with AI and AR capabilities, to a small set of selected users for real-world testing. On Thursday, Google said the Project Astra prototype glasses would run on Android XR, Google's new operating system for vision-based computing. It's now starting to let hardware makers and developers build different kinds of glasses, headsets, and experiences around this operating system. The glasses seem cool, but it's important to remember that they're essentially vaporware -- Google still has nothing concrete to share about the actual product or when it will be released. However, it certainly seems like the company wants to launch these at some point, calling smart glasses and headsets the "next generation of computing" in a press release. Today, Google is building out Project Astra and Android XR so that these glasses can one day be an actual product. Google also shared a new demo showing how its prototype glasses can use Project Astra and AR technology to do things like translate posters in front of you, remember where you left things around the house, or let you read texts without taking out your phone. "Glasses are one of the most powerful form factors because of being hands-free; because it is an easily accessible wearable. Everywhere you go, it sees what you see," said DeepMind product lead Bibo Xu in an interview with TechCrunch at Google's Mountain View headquarters. "It's perfect for Astra." 
A Google spokesperson told TechCrunch they have no timeline for a consumer launch of this prototype, and the company isn't sharing many details about the AR technology in the glasses, how much they cost, or how all of this really works. But Google did at least share its vision for AR and AI glasses in a press release on Thursday: Android XR will also support glasses for all-day help in the future. We want there to be lots of choices of stylish, comfortable glasses you'll love to wear every day and that work seamlessly with your other Android devices. Glasses with Android XR will put the power of Gemini one tap away, providing helpful information right when you need it -- like directions, translations or message summaries without reaching for your phone. It's all within your line of sight, or directly in your ear. Many tech companies have shared similar lofty visions for AR glasses in recent months. Meta recently showed off its prototype Orion AR glasses, which also have no consumer launch date. Snap's Spectacles are available for purchase to developers, but they're still not a consumer product either. An edge that Google seems to have on all of its competitors, however, is Project Astra, which it is launching as an app to a few beta testers soon. I got a chance to try out the multimodal AI agent -- albeit, as a phone app and not a pair of glasses -- earlier this week, and while it's not available for consumer use today, I can confirm that it works pretty well. I walked around a library on Google's campus, pointing a phone camera at different objects while talking to Astra. The agent was able to process my voice and the video simultaneously, letting me ask questions about what I was seeing and get answers in real time. I pinged from book cover to book cover and Astra quickly gave me summaries of the authors and books I was looking at. Project Astra works by streaming pictures of your surroundings, one frame per second, into an AI model for real-time processing. 
While that's happening, it also processes your voice as you speak. Google DeepMind says it's not training its models on any of this user data it collects, but the AI model will remember your surroundings and conversations for 10 minutes. That allows the AI to refer back to something you saw or said earlier. Some members of Google DeepMind also showed me how Astra could read your phone screen, similar to how it understands the view through a phone camera. The AI could quickly summarize an Airbnb listing, use Google Maps to show nearby destinations, and execute Google Searches based on things it was seeing on the phone screen. Using Project Astra on your phone is impressive, and it's likely a signal of what's coming for AI apps. OpenAI has also demoed GPT-4o's vision capabilities, which are similar to Project Astra and have also been teased for release soon. These apps could make AI assistants far more useful by giving them capabilities far beyond the realm of text chatting. When you're using Project Astra on a phone, it's apparent that the AI model would really be perfect on a pair of glasses. It seems Google has had the same idea, but it might take them a while to make that a reality.

[5]

Google Hints at a Return to the Smart Glasses Game

It's been over 10 years now since the ill-fated launch of Google Glass, its augmented reality goggles -- a project that blossomed briefly then withered, not least because the tech wasn't quite mature and the general public wasn't ready for this sort of innovation. But we live in a time where Apple's launched a $3,500 augmented reality device, the Vision Pro, and Meta's smart glasses made in partnership with Ray-Ban are reportedly selling like hotcakes. Google may be ready to try to enter this market again. Google DeepMind's Bibo Xu explained that embedding Astra in a glasses-like device is "one of the most powerful and intuitive form factors to experience this kind of AI." Google shared a brief video demonstrating how it thinks this sort of wearable tech would work -- all the while guided by visual and audio AI-generated cues. Though it was just a concept, Xu admitted when asked that there would be "more news coming shortly" about the "glasses product." Those words have triggered plenty of intrigue online. Meanwhile, when Google showed off what Gemini 2.0 could do, it placed a lot of emphasis on "agentic" AI, meaning advanced systems that can actually perform online actions, not just chat back to you or generate images or video. As news site CoinTelegraph reports, Google executives said these AI agents will be able to understand "complex instructions, plan, reason, take action across websites and even assist with video game strategy." It also hinted at agent-centric research projects that can perform tasks like taking control of a user's Chrome browser, moving a PC's cursor and typing in text to help with things like filling in online forms. It may also have agents that can help with coding, or help researchers find data on a specific niche topic.

[6]

Google renews push into mixed reality headgear

Google is ramping up its push into smart glasses and augmented reality headgear, taking on rivals Apple and Meta with help from its sophisticated Gemini artificial intelligence. The internet titan on Thursday unveiled an Android XR operating system created in a collaboration with Samsung, which will use it in a device being built in what is called internally "Project Moohan," according to Google. The software is designed to power augmented and virtual reality experiences enhanced with artificial intelligence, XR vice president Shahram Izadi said in a blog post. "With headsets, you can effortlessly switch between being fully immersed in a virtual environment and staying present in the real world," Izadi said. "You can fill the space around you with apps and content, and with Gemini, our AI assistant, you can even have conversations about what you're seeing or control your device." Google this week announced the launch of Gemini 2.0, its most advanced artificial intelligence model to date, as the world's tech giants race to take the lead in the fast-developing technology. CEO Sundar Pichai said the new model would mark what the company calls "a new agentic era" in AI development, with AI models designed to understand and make decisions about the world around you. Android XR infused with Gemini promises to put digital assistants into eyewear, tapping into what users are seeing and hearing. An AI "agent," the latest Silicon Valley trend, is a digital helper that is supposed to sense surroundings, make decisions, and take actions to achieve specific goals. "Gemini can understand your intent, helping you plan, research topics and guide you through tasks," Izadi said. "Android XR will first launch on headsets that transform how you watch, work and explore." The Android XR release was a preview for developers so they can start building games and other apps for headgear, ideally fun or useful enough to get people to buy the hardware. 
This is not Google's first foray into smart eyewear. Its first offering, Google Glass, debuted in 2013 only to be treated as an unflattering tech status symbol and met with privacy concerns due to camera capabilities. The market has evolved since then, with Meta investing heavily in a Quest virtual reality headgear line priced for mainstream adoption and Apple hitting the market with pricey Vision Pro "spacial reality" gear. Google plans to soon begin testing prototype Android XR-powered glasses with a small group of users. Google will also adapt popular apps such as YouTube, Photos, Maps, and Google TV for immersive experiences using Android XR, according to Izadi. Gemini AI in glasses will enable tasks like directions and language translations, he added. "It's all within your line of sight, or directly in your ear," Izadi said.

[7]

Google is hinting that new AI-powered smart glasses are coming soon

TL;DR: Gemini 2.0 has been announced alongside a teaser for AI assistant-powered smart glasses. Google is testing Project Astra on prototype glasses with its Trusted Tester program. The glasses feature AI capabilities to identify surroundings, and more information is expected soon. Gemini 2.0 has arrived, and alongside the announcement of the highly anticipated update of Google's AI companion is a teaser for new AI assistant-powered smart glasses. Google may very well be preparing to make a smart glasses-related announcement, as the company has posted "Project Astra," a video showcasing the future capabilities of an AI assistant, to its official YouTube channel. Currently in the research prototype phase, Project Astra was detailed by Google DeepMind product manager Bibo Xu, who revealed that a small group of people within Google's Trusted Tester program would be testing Project Astra on the prototype glasses. Notably, Google's Trusted Tester program consists of members who get to play with Google prototype products, most of which never make it to market, which may put a dampener on the idea of Google releasing the glasses showcased in the above video. However, when asked about the glasses, Xu said, "for the glasses product itself, we'll have more news coming shortly." The above video depicts the glasses being worn by an individual exploring a local neighborhood, with the user querying the AI assistant with simple yet impressive requests such as "What is this park on my left?". The smart glasses use sensors within the lenses to "see" what the user is seeing. From here a larger AI model calculates the answer, which is then heard by the user.

[8]

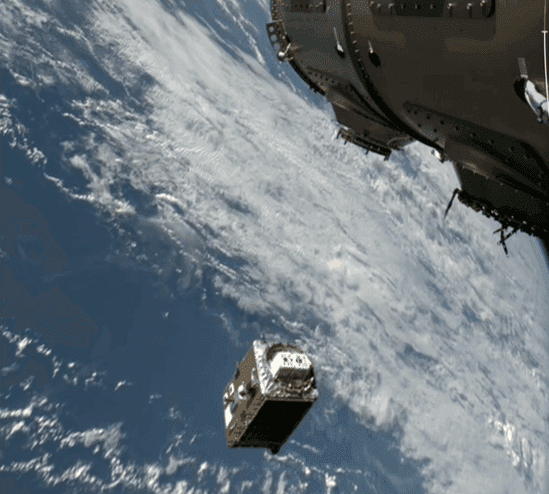

See what Google's Project Astra AR glasses can do (for a select few beta testers) | TechCrunch

Google has released a prototype of Project Astra's AR glasses for testing in the real world. The glasses are part of Google's long-term plan to one day have hardware with augmented reality and multimodal AI capabilities. In the meantime, the company will be releasing demos to get the attention of consumers, developers, and its competition. Along with the glasses, Google also announced its new operating system, Android XR, which will allow developers to build experiences for XR hardware like glasses and headsets. The OS will power the Project Astra glasses along with the newly announced Samsung-built headset, Project Moohan, which will go on sale next year.

[9]

It sure sounds like Google is planning to actually launch some smart glasses

Google is working on a lot of AI stuff -- like, a lot of AI stuff -- but if you want to really understand the company's vision for virtual assistants, take a look at Project Astra. Google first showed a demo of its all-encompassing, multimodal virtual assistant at Google I/O this spring and clearly imagines Astra as an always-on helper in your life. In reality, the tech is somewhere between "neat concept video" and "early prototype," but it represents the most ambitious version of Google's AI work.

[10]

Google and Samsung's first AI face computer to arrive next year

For years, Google pushed the idea of wearing screens on our faces. Now it's trying again. Google Glass. Google Cardboard. Daydream. For years, the search giant has tried -- and largely failed -- to get consumers wearing screens in front of their faces. Now it's trying again. The company on Thursday revealed a new version of its Android software -- called Android XR -- developed in tandem with Samsung to power a variety of wearable devices, from virtual reality headsets to compact glasses with screens embedded in their lenses. "We, like many others, have made some attempts here before," said Sameer Samat, Google's Android ecosystem president, in a briefing. "I think the vision was correct, but the technology wasn't quite ready." While it's unclear whether Google plans to develop its own XR, or "extended reality" devices for sale to consumers, the first products built on top of this new software will arrive sometime next year, Samat said. And leading the pack is a self-contained headset built by Samsung code-named "Project Moohan," a reference to the Korean word for "infinity." Some facets of the Android XR experience might feel surprisingly familiar to anyone who has tried on one of Apple's $3,499 Vision Pro headsets. Users of Android XR headsets like Project Moohan will be able to flick through photo slideshows hanging in the air in front of them, settle in with movies displayed in an immersive virtual theater, or run Android apps that can be controlled with eye movements and hand gestures. Google never really stopped working on XR, a blanket term that refers to related technologies like virtual reality, augmented reality and mixed reality, Samat said. This time, however, the company is leaning on AI to make this new generation of face computers more helpful. Head-worn Android XR devices will have Google's Gemini chatbot built into their core, allowing users to control their apps with conversational commands as well as physical gestures. 
"The reality is that keyboards are rarely going to be an interface for XR, and Gemini is crucial in enabling a simple and quality experience," said Moor Insights principal analyst Anshel Sag, who tried a preproduction version of the Moohan headset. Shahram Izadi, Google's vice president of XR, says Gemini will also be able to see what the wearer sees -- whether they're real-world objects or digital elements hovering in space -- and can react to them accordingly. In one sample video the company shared with reporters, a pair of smart glasses with in-lens displays could see a specific tool in a user's field of view and remind them where it was. Other preview videos showed those prototype glasses displaying walking directions from Google Maps as wearers traipse through a city and offering to translate menus written in foreign languages. "It's notable that Apple is yet to promise any Intelligence features for Vision Pro, and Meta has said little about AI for its Horizon OS," said Leo Gebbie, principal analyst at CCS Insight. "Google seems to have grabbed a jump-start on its rivals in terms of surfacing this approach." Despite the optimism, there's no guarantee Google's AI-forward approach to headsets and smart glasses will immediately win over skeptical consumers. Demand for AR and VR has deflated so far this year according to shipment data from research firm IDC, though it does expect the industry to grow in the coming years.

[11]

Google plans new smart glasses and VR headsets in Samsung partnership

Google is teaming up with Samsung to take on Meta and Apple in the resurgent market for smart glasses and virtual-reality headsets, almost a decade after suspending consumer sales of its controversial Google Glass device. Next year, Samsung will release the first device based on a new version of Google's Android smartphone operating system that has been customised for headsets and glasses, which the Alphabet-owned internet group described as the "next generation of computing". The collaboration on the device -- codenamed "Project Moohan" -- between Google and Samsung, whose alliance in Android smartphones 15 years ago created the first serious competitor to the iPhone, comes 10 months after Apple launched its Vision Pro headset. However, despite being Apple's boldest launch into a new product category since the iPhone, the high-priced Vision Pro has struggled to attract consumers with sales estimated to be in the hundreds of thousands. Meanwhile Meta's Ray-Ban-branded smart glasses have become a surprise hit. The device, produced in partnership with eyewear giant EssilorLuxottica, combines cameras with a virtual audio assistant in a lightweight frame. Google said it planned to make its new Android XR system available for makers of smart glasses as well as virtual- and augmented-reality headsets. "Advancements in AI are making interacting with computers more natural and conversational," Google said in a blog post on Thursday. "This inflection point enables new extended reality (XR) devices, like headsets and glasses, to understand your intent and the world around you, helping you get things done in entirely new ways." According to one person familiar with Project Moohan, the Samsung headset offers similar high-fidelity displays and user experience as the Vision Pro, but will be launched at a "significantly" lower price point than Apple's $3,500 product. 
Android XR represents Google's latest attempt to reboot Google Glass, the futuristic eyewear first announced by its "moonshot" lab X in 2011. However, Glass's unusual style, including a camera and tiny display, suffered from technical limitations such as short battery life and clashes over privacy. It was pulled from sale to consumers in 2015, although efforts to sell the device to businesses continued for several years. Google has also tried to break into the virtual reality market before, with its Cardboard and Daydream devices that used a smartphone for their display. However, support for Daydream was discontinued in 2019. The Silicon Valley-based company is hoping the latest wave of AI innovations, which has brought breakthroughs in image recognition and the ability to hold conversations with virtual assistants, will help it to fare better this time around. Google this week showed an updated version of its Project Astra prototype, a system for smartphones and smart glasses that allows users to ask questions about what its cameras are seeing. The system is powered by a new version of its Gemini AI platform.

Google unveils updates to Project Astra, its AI agent prototype now running on smart glasses, as part of the Gemini 2.0 launch. The company is testing the technology with a small group of users, signaling a potential return to the smart glasses market.

Google Revives Smart Glasses Ambitions with Project Astra

Google is making a renewed push into the smart glasses market with Project Astra, an AI-powered prototype that integrates the company's Gemini 2.0 AI technology. This move comes as part of Google's broader strategy to enter what it calls the "agent era" of artificial intelligence [1].

Project Astra: A Universal AI Agent

Project Astra, first teased during Google I/O in May, is designed to be a "universal AI agent" that works on tasks in everyday life. The system uses a device's camera and voice recognition software to understand and respond to the world "like people do" [2].

Key features of Project Astra include:

- Improved language understanding, including accents and uncommon words

- Multilingual capabilities

- Integration with Google Lens, Maps, and Search

- 10-minute in-conversation memory and improved long-term memory

- Latency matching human conversation flow [3]

Prototype Testing and Android XR

Google has announced that a small group of testers will begin evaluating Project Astra on prototype glasses as part of its Trusted Tester program [4]. The company has also introduced Android XR, a new operating system for smart glasses and headsets, available as a preview for developers [4].

Potential Applications and Challenges

The AI-powered glasses aim to assist with tasks such as:

- Providing hands-free directions

- Real-time language translations

- Remembering where items are placed around the house

- Reading texts without taking out a phone [5]

However, challenges remain, including battery limitations and potential privacy concerns related to continuous recording [4].

Market Context and Competition

Google's renewed interest in smart glasses comes as Apple and Meta make strides in the AR and mixed-reality space and as Samsung, Google's Android XR partner, readies its own hardware:

- Apple recently launched its $3,500 Vision Pro device

- Meta's collaboration with Ray-Ban has made smart glasses more appealing as fashion accessories

- Samsung is building a mixed-reality headset, code-named Project Moohan, that runs Android XR [5][4]

Future Outlook

While Google has not provided a specific timeline for a consumer launch of Project Astra glasses, the company's vision statement suggests a commitment to developing "stylish, comfortable glasses" that work seamlessly with other Android devices [5]. As the technology matures and public acceptance grows, Google's return to the smart glasses market could reshape the landscape of wearable AI and augmented reality devices.

Summarized by

Navi
