Intel Xeon 6 selected as host CPU for Nvidia DGX Rubin NVL8 systems at GTC 2026

4 Sources

[1]

Intel Xeon 6 selected as host CPU for Nvidia DGX Rubin NVL8 systems -- Intel wins a contract as Nvidia enters data center processor market with Vera CPUs

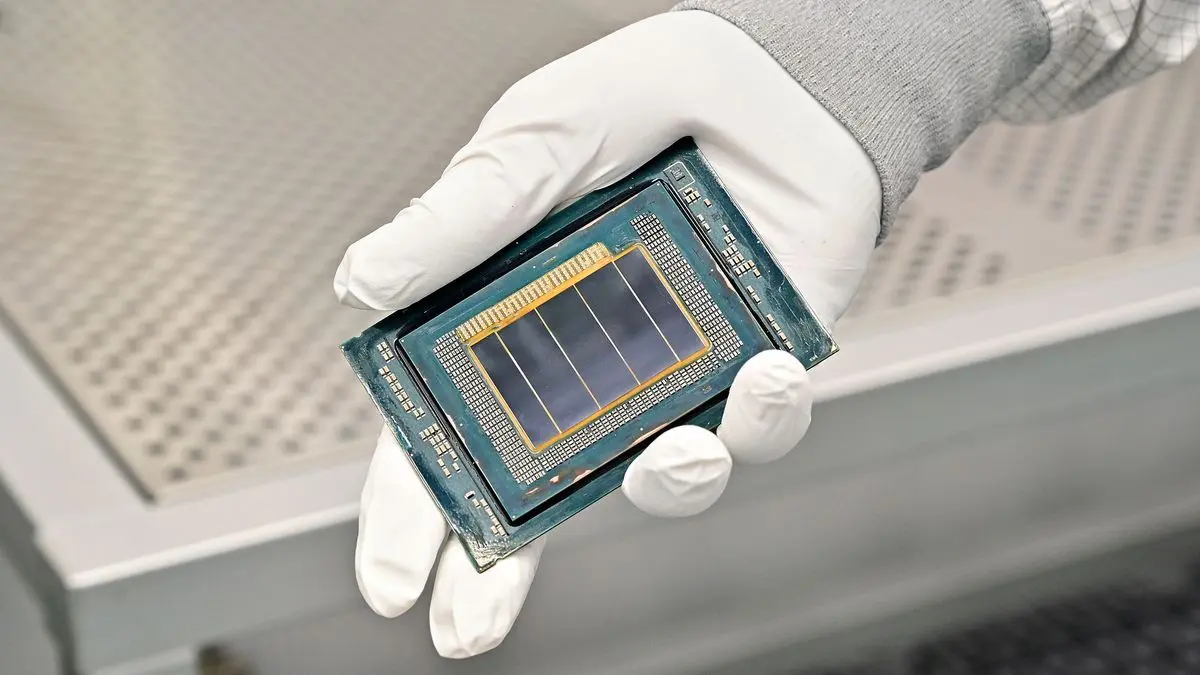

Xeon 6 brings a 2.3x memory bandwidth improvement over its predecessor.

Intel announced today at Nvidia GTC 2026 in San Jose that its Xeon 6 processor will serve as the host CPU in Nvidia's DGX Rubin NVL8 systems, extending the x86 pairing the two companies established with the Xeon 6776P in current DGX B300 Blackwell-based platforms. The DGX Rubin NVL8 is Nvidia's next-generation flagship AI server system.

In that configuration, the host CPU is responsible for task orchestration, memory management, scheduling, and data movement to the GPU accelerators. With inference workloads shifting toward agentic AI and reasoning systems, those functions place increasingly heavy demands on per-core performance and memory bandwidth.

Intel said Xeon 6 addresses those demands through a combination of memory capacity, bandwidth, and I/O capabilities. The platform supports up to 8TB of system memory, which Intel cited as key for supporting large language models with growing key-value caches. Meanwhile, memory bandwidth has improved 2.3 times generation-on-generation via MRDIMM technology, raising the rate at which data reaches the GPU accelerators. PCIe 5.0 lanes handle high-bandwidth accelerator connectivity, and a feature Intel calls Priority Core Turbo dedicates strong single-thread performance to orchestration, scheduling, and data movement tasks, keeping GPU utilization high as workload complexity increases.

Security coverage extends across the CPU-to-GPU data path through Intel Trust Domain Extensions (TDX), which adds hardware-rooted isolation and attestation via an Encrypted Bounce Buffer. Intel said end-to-end confidential computing is increasingly required as AI inference scales across data center, cloud, and edge deployments. Xeon 6 also now supports Nvidia Dynamo, an inference orchestration framework that enables heterogeneous scheduling across CPU and GPU resources within the same cluster.

"In this new era, the host CPU is mission-critical," said Jeff McVeigh, corporate vice president and general manager of Data Center Strategic Programs at Intel. "It governs orchestration, memory access, model security, and throughput across GPU-accelerated systems."

Intel also cited Xeon's x86 software ecosystem and enterprise deployment history as factors in the selection, noting compatibility with existing AI software stacks. The DGX Rubin NVL8 configuration builds on the same architectural foundation as DGX B300, giving operators platform continuity between Blackwell and Rubin generations.

[2]

Intel says its Xeon 6 Chips are set to coordinate Nvidia's giant AI servers

* Nvidia's DGX Rubin NVL8 uses Intel Xeon 6 host CPUs to orchestrate GPU AI servers.
* Intel positions Xeon 6 as the "mission control" for orchestration, memory, security, and throughput.
* Intel is showing off its new tech at Nvidia GTC 2026 at booth #3100.

It's hard to talk about AI hardware without also bringing up Nvidia. The company has banked on the AI boom since the beginning, and began reaping the rewards as companies worldwide scrambled to set up infrastructure to run their LLM-based dreams. It's easy to assume that, because Nvidia was the company doing the heavy lifting, its AI servers would feature only Nvidia-branded hardware.

Well, it turns out that the truth is a little more complex than that. While Nvidia is prepping to release its DGX Rubin NVL8 servers to help companies with their agentic AI goals, it needs a host chip to make sure all the hardware is properly orchestrated. And to achieve that, Nvidia has teamed up with Intel to bring its Xeon 6 technology into the fray.

Intel's Xeon 6 host chips will help manage Nvidia's AI servers

It's a match made in heaven

Over at Nvidia GTC 2026, Intel gave everyone a sneak peek into what it's planning to bring to the AI table. Intel argues that, while GPUs are essential for chewing through AI-related tasks, CPUs are needed to help keep everything organized. While the GPUs act as the number-crunchers, CPUs act as the 'mission control' to help distribute the work, keep everything in sync, and ensure all the hardware works harmoniously.

To help Nvidia with its own AI servers, Intel is bringing its decades of CPU expertise into the fray with its Xeon 6 host chips. As the name suggests, these will sit in a 'managerial' position inside the DGX Rubin NVL8 servers to ensure everything runs smoothly.

Jeff McVeigh, corporate vice president and general manager for Data Center Strategic Programs at Intel, is keen to remind us not to underestimate the power of a good CPU in an AI processing server:

"In this new era, the host CPU is mission-critical. It governs orchestration, memory access, model security, and throughput across GPU-accelerated systems. Intel Xeon 6 delivers leadership performance, efficiency, and compatibility with the extensive x86 software ecosystem that customers rely on to scale inference workloads."

Intel will be showing off this new tech at booth #3100 on the show floor at Nvidia GTC 2026, so if you're there, be sure to check out what the company has been working on in this new era of AI.

[3]

Intel Xeon 6 Used as Host CPUs in NVIDIA DGX Rubin NVL8 Systems

Today at NVIDIA GTC 2026, Intel announced that Intel Xeon 6 is being used as the processor for NVIDIA DGX Rubin NVL8 systems. This highlights Xeon's role in providing architectural continuity and scalability for GPU-accelerated AI systems as workloads shift toward massive, real-time inference.

"AI is shifting from large-scale training to real-time, everywhere inference, driven by agentic AI and reasoning systems," said Jeff McVeigh, corporate vice president and general manager, Data Center Strategic Programs at Intel. "In this new era, the host CPU is mission-critical. It governs orchestration, memory access, model security, and throughput across GPU-accelerated systems. Intel Xeon 6 delivers leadership performance, efficiency, and compatibility with the extensive x86 software ecosystem that customers rely on to scale inference workloads."

As organizations continue to deploy AI systems, inference is increasingly defined not only by GPU throughput but also by CPU-led system performance, with the host CPU shaping overall cluster efficiency and total cost of ownership. It is also responsible for critical functions such as memory management, task orchestration, and workload distribution, while ensuring the security, reliability, and operational continuity essential to modern AI infrastructure.

Building on these system-level requirements, Intel Xeon processors are used as the host CPU for DGX Rubin NVL8 systems due to their capability to support fast memory speeds, balanced performance across a range of workloads, lower long-term total cost of ownership (TCO), and their mature, enterprise-proven software ecosystem. Additionally, Intel's robust PCIe and I/O capabilities further strengthen Xeon's role as a high-bandwidth, low-latency platform across diverse workloads.

* Efficient performance per watt
* Optimized support throughout the ecosystem AI software stack, including new support for NVIDIA Dynamo to enable heterogeneous inference across CPU and forthcoming GPUs
* Proven reliability across mission-critical environments
* Superior orchestration of GPU-accelerated, heterogeneous systems

This selection reinforces Intel Xeon as a cornerstone of modern AI infrastructure, enabling scalable deployment across modern data centers, the cloud, and edge use cases.

As AI inference scales, end-to-end confidential computing becomes essential, from CPU to GPU data paths. Intel Trust Domain Extensions (TDX) adds hardware-based isolation and attestation, further reinforcing the selection of Xeon as the secure foundation for modern AI clusters.

About the new Intel and NVIDIA collaboration: NVIDIA DGX Rubin NVL8 systems integrate Intel Xeon 6 processors, building on the architectural foundation established with Intel Xeon 6776P in current NVIDIA Blackwell-based platforms, including DGX B300 systems. By building on this proven foundation, Intel is helping to carry forward the performance, experience, and system-level expertise into the new DGX Rubin NVL8 systems.

Intel engineered Xeon to help these systems get the most out of their GPUs, using features like Priority Core Turbo to keep data flowing to GPUs. With strong single-thread performance handling orchestration, scheduling, and data movement, Xeon helps ensure everything runs smoothly and efficiently even as inference workloads grow more complex.
Key features of Intel Xeon 6 include:

* Up to 8 TB system memory to support large models and growing KV caches
* 2.3X higher memory bandwidth gen-on-gen with MRDIMM technology, improving data feed rates to GPUs
* Industry-leading PCIe 5.0 lanes to support AI accelerators and other devices
* Confidential computing across CPU-GPU data paths with Encrypted Bounce Buffer
* Hardware-rooted isolation that safeguards AI data and models while in use
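To give a rough sense of why memory capacity matters for "growing KV caches", the sketch below estimates key-value cache size with the standard transformer sizing formula (keys plus values, per layer, per KV head, per token). The model shape (80 layers, 8 KV heads, head dimension 128, 16-bit values) is a hypothetical example, not a figure from Intel or Nvidia, and the calculation assumes for illustration that the full 8 TB (treated here as 8 TiB) is available for the cache, which a real system would not allow.

```python
def kv_cache_bytes(num_layers, num_kv_heads, head_dim, num_tokens, bytes_per_value=2):
    """Bytes needed to cache keys and values for num_tokens tokens."""
    # Factor of 2: every layer stores one key vector and one value vector
    # per token for each KV head.
    return 2 * num_layers * num_kv_heads * head_dim * num_tokens * bytes_per_value


# Hypothetical model shape, chosen only for illustration.
NUM_LAYERS = 80
NUM_KV_HEADS = 8
HEAD_DIM = 128

# "Up to 8 TB" from the feature list, treated as 8 TiB (an assumption).
HOST_MEMORY_BYTES = 8 * 2**40

bytes_per_token = kv_cache_bytes(NUM_LAYERS, NUM_KV_HEADS, HEAD_DIM, num_tokens=1)
# Upper bound only: ignores model weights, the OS, and every other consumer of memory.
max_cached_tokens = HOST_MEMORY_BYTES // bytes_per_token

print(f"KV cache per token: {bytes_per_token / 1024:.0f} KiB")
print(f"Upper bound on cached tokens in 8 TiB: {max_cached_tokens:,}")
```

Because the cache grows linearly with the number of tokens held across all active sessions, long-context and multi-turn agentic workloads can push it well beyond what GPU memory alone holds, which is the motivation the feature list gives for large host memory.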

[4]

Nvidia To Use Intel Xeon 6 CPUs For DGX Rubin NVL8 Systems

While the move marks another win by Intel against AMD, Nvidia plans to make a bigger push into the CPU market this year with its custom, Arm-compatible Vera CPU, which will go into the company's flagship Vera Rubin NVL72 platform as well as a stand-alone CPU offering.

Nvidia plans to use Intel's Xeon 6 chips as the host CPU for the AI infrastructure giant's upcoming DGX Rubin NVL8 systems as it makes a bigger push into the CPU market. The DGX Rubin NVL8 systems were among the announcements Nvidia was expected to make during Nvidia CEO Jensen Huang's keynote Monday at its GTC 2026 event in San Jose, Calif.

The DGX Rubin NVL8 systems will feature eight Rubin GPUs connected using Nvidia's high-speed NVLink interconnect as part of the broader Vera Rubin platform the company plans to debut later this year. Other specs were not immediately available.

Announced by Intel at the start of Huang's keynote, the plan to use Xeon 6 processors for Nvidia's DGX Rubin NVL8 marks another win by the chipmaker against AMD, with all but one generation of the x86-based platform using its server chips as the host CPU. AMD only won the socket once with Nvidia's DGX A100 system in 2020.

The announcement was made after Nvidia and Intel last September revealed an expanded partnership that will see the two companies develop "multiple generations" of products together for the PC and data center markets. An Intel spokesperson told CRN that the DGX Rubin NVL8 engagement is separate from the company's strategic partnership with Nvidia.

In a statement, Intel executive Jeff McVeigh said that Nvidia's host CPU selection reflects the reality that the processor is "mission-critical" for agentic AI and reasoning systems. "It governs orchestration, memory access, model security, and throughput across GPU accelerated systems," said McVeigh, who is corporate vice president and general manager of data center strategic programs.

The company said that Nvidia chose its Xeon 6 processors "due to their capability to support fast memory speeds, balanced performance across a range of workloads, lower long-term total cost of ownership and their mature, enterprise-proven software ecosystem." The integration of Xeon 6 chips into the DGX Rubin NVL8 systems is "building on the architectural foundation established" with the custom Xeon 6776P processor it designed for Nvidia's Blackwell-based platforms, including the DGX B300 systems, according to Intel.

Meanwhile, Nvidia plans to make a bigger push into the CPU market this year with its custom, Arm-compatible Vera CPU, which will go into the company's flagship Vera Rubin NVL72 platform as well as a stand-alone CPU offering. According to research firm IDC, revenue generated from non-x86 servers, which mainly consists of Arm-based designs, increased 146.4 percent year over year to $55.5 billion in the fourth quarter of last year, representing 44.2 percent of total server revenue. Sales from x86-based servers, on the other hand, grew 16.9 percent to $69.8 billion for the same period.

Intel secured a critical win at Nvidia GTC 2026 as its Xeon 6 processor was selected as the host CPU for Nvidia's next-generation DGX Rubin NVL8 AI systems. The partnership extends Intel's x86 dominance in Nvidia's flagship AI servers, with Xeon 6 delivering 2.3x memory bandwidth improvements and up to 8TB system memory to handle increasingly complex inference workloads and agentic AI systems.

Intel Xeon 6 Secures Host CPU Role in Nvidia's Next-Generation AI Platform

Intel announced at Nvidia GTC 2026 in San Jose that its Intel Xeon 6 processor will serve as the host CPU in Nvidia's DGX Rubin NVL8 systems, marking another significant win against AMD in the competitive data center processor market [1]. The Nvidia DGX Rubin NVL8 represents Nvidia's next-generation flagship AI server system, featuring eight Rubin GPUs connected using the company's high-speed NVLink interconnect [4]. This selection extends the x86 pairing established with the Xeon 6776P in current DGX B300 Blackwell-based platforms, providing architectural continuity between generations [1].

The partnership comes as AI workloads shift dramatically from large-scale training toward real-time inference driven by agentic AI and reasoning systems. In this evolving landscape, the host CPU plays a mission-critical role in governing task orchestration, memory access, model security, and throughput across GPU-accelerated AI systems [3]. Jeff McVeigh, corporate vice president and general manager of Data Center Strategic Programs at Intel, emphasized this shift: "In this new era, the host CPU is mission-critical. It governs orchestration, memory access, model security, and throughput across GPU-accelerated systems" [1].

Technical Capabilities Addressing Next-Generation AI Workloads

Intel Xeon 6 brings substantial technical improvements designed specifically for next-generation AI workloads. The platform supports up to 8TB of system memory, which Intel identified as essential for supporting large language models with growing key-value caches [1]. Memory bandwidth has improved 2.3 times generation-on-generation through MRDIMM technology, raising the rate at which data reaches GPU accelerators [1]. Industry-leading PCIe 5.0 lanes handle high-bandwidth accelerator connectivity, while a feature called Priority Core Turbo dedicates strong single-thread performance to orchestration, scheduling, and data movement tasks [1].

Security coverage extends across the CPU-to-GPU data path through Intel Trust Domain Extensions (TDX), which adds hardware-rooted isolation and attestation via an Encrypted Bounce Buffer [1]. As AI inference scales across data center, cloud, and edge deployments, end-to-end confidential computing becomes increasingly essential [3]. Intel Xeon 6 now supports Nvidia Dynamo, an inference orchestration framework that enables heterogeneous scheduling across CPU and GPU resources within the same cluster [1].

Strategic Positioning and Competitive Landscape

Nvidia selected Intel Xeon 6 processors due to their capability to support fast memory speeds, balanced performance across a range of workloads, lower long-term total cost of ownership, and their mature, enterprise-proven x86 software ecosystem [4]. This marks another win by Intel against AMD, with all but one generation of Nvidia's x86-based platform using Intel server chips as the host CPU. AMD only won the socket once with Nvidia's DGX A100 system in 2020 [4].

However, the competitive dynamics are shifting. While Intel celebrates this win, Nvidia plans to make a bigger push into the CPU market this year with its custom, Arm-compatible Vera CPU, which will go into the company's flagship Vera Rubin NVL72 platform as well as a stand-alone CPU offering [4]. According to research firm IDC, revenue generated from non-x86 servers, which mainly consists of Arm-based designs, increased 146.4 percent year over year to $55.5 billion in the fourth quarter of last year, representing 44.2 percent of total server revenue [4].
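The IDC figures reported in source [4] can be cross-checked with simple arithmetic. The sketch below uses only the numbers quoted there (non-x86 revenue of $55.5 billion, up 146.4 percent; x86 revenue of $69.8 billion, up 16.9 percent; a 44.2 percent non-x86 share). It assumes the two categories together make up total server revenue, which the article implies but does not state, so small differences from the quoted share are likely rounding.

```python
# IDC figures as quoted in source [4], fourth quarter, in billions of USD.
NON_X86_REVENUE = 55.5
X86_REVENUE = 69.8
NON_X86_GROWTH_PCT = 146.4    # year over year
X86_GROWTH_PCT = 16.9         # year over year
NON_X86_SHARE_QUOTED = 44.2   # percent of total server revenue


def share_percent(part, total):
    """Return part as a percentage of total."""
    return 100.0 * part / total


def prior_year(current, growth_pct):
    """Back out the year-ago value from the current value and growth rate."""
    return current / (1.0 + growth_pct / 100.0)


# Assumption: non-x86 plus x86 covers the whole server market.
total = NON_X86_REVENUE + X86_REVENUE
non_x86_share = share_percent(NON_X86_REVENUE, total)
x86_share = share_percent(X86_REVENUE, total)

prior_non_x86 = prior_year(NON_X86_REVENUE, NON_X86_GROWTH_PCT)
prior_x86 = prior_year(X86_REVENUE, X86_GROWTH_PCT)

print(f"Implied total: ${total:.1f}B")
print(f"Non-x86 share: {non_x86_share:.1f}% (quoted: {NON_X86_SHARE_QUOTED}%)")
print(f"x86 share: {x86_share:.1f}%")
print(f"Implied year-ago revenue: non-x86 ${prior_non_x86:.1f}B, x86 ${prior_x86:.1f}B")
```

The computed share lands within a fraction of a percentage point of the quoted 44.2 percent, so the reported figures are internally consistent under that assumption.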

Implications for Scalable and Efficient AI Infrastructure

As organizations continue to deploy AI systems, AI inference workloads are increasingly defined not only by GPU throughput but also by CPU-led system performance, with the host CPU shaping overall cluster efficiency and total cost of ownership [3]. Intel positions Xeon 6 as "mission control" for orchestration, memory, security, and throughput in GPU-accelerated AI systems [2]. While GPUs act as number-crunchers, CPUs help distribute work, keep everything in sync, and ensure all hardware operates harmoniously [2].

The DGX Rubin NVL8 configuration builds on the same architectural foundation as DGX B300, giving operators platform continuity between Blackwell and Rubin generations [1]. This selection reinforces Intel Xeon as a cornerstone of modern AI infrastructure, enabling scalable and efficient AI infrastructure deployment across modern data centers, cloud, and edge use cases [3]. Intel was showcasing this technology at booth #3100 on the show floor at GTC 2026 [2].

Summarized by Navi
