Nvidia CEO Jensen Huang Envisions Future of 'Reasoning' AI Powered by Cheaper Computing

3 Sources

[1]

Nvidia CEO Says 'Reasoning' AI Will Depend on Cheaper Computing

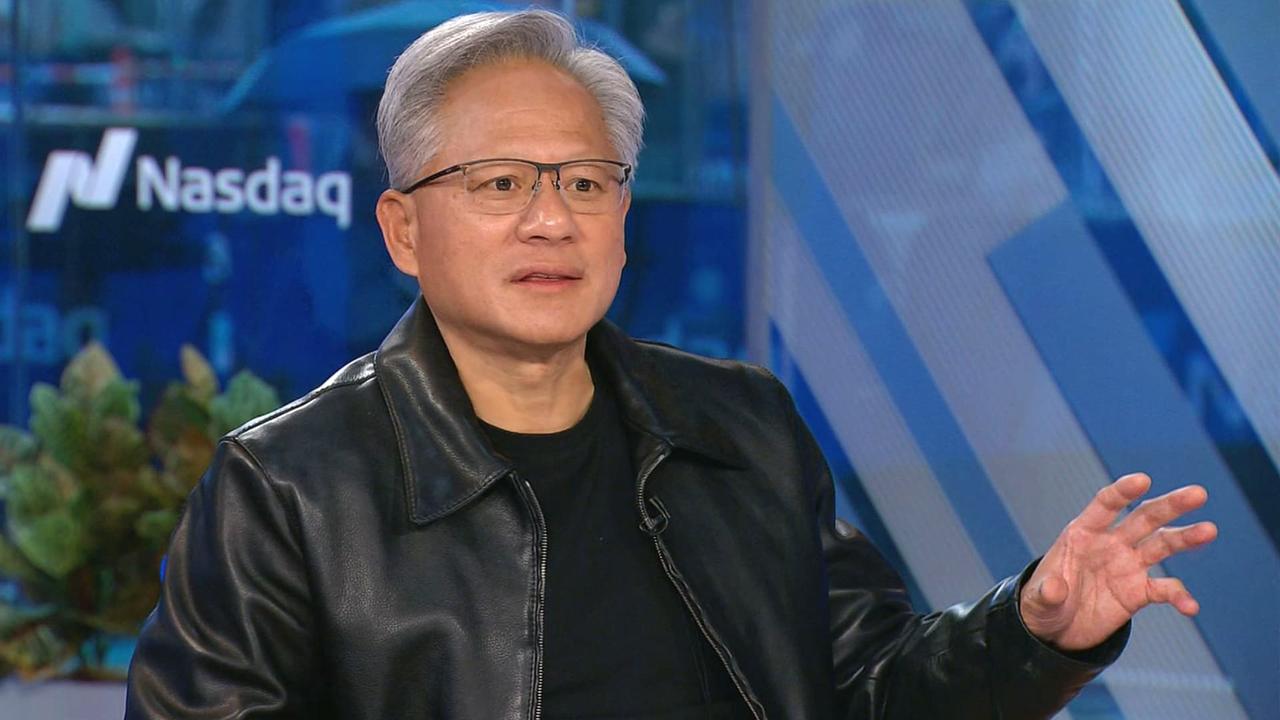

- Nvidia CEO bets big on AI services that can reason
- Jensen Huang said he uses ChatGPT every day
- Nvidia is planning to boost its chip performance every year

Nvidia Chief Executive Officer Jensen Huang said that the future of Artificial Intelligence (AI) will be services that can "reason," but such a stage requires the cost of computing to come down first.

Next-generation tools will be able to respond to queries by going through hundreds or thousands of steps and reflecting on their own conclusions, he said during a podcast hosted by Arm Holdings Plc CEO Rene Haas. That will give this future software the ability to reason and set it apart from current systems such as OpenAI's ChatGPT, which Huang said he uses every day.

Nvidia will set the stage for these advances by boosting its chip performance every year by two to three times, at the same level of cost and energy consumption, Huang said. This will transform the way AI systems handle inference -- the ability to spot patterns and draw conclusions.

"We're able to drive incredible cost reduction for intelligence," he said. "We all realize the value of this. If we can drive down the cost tremendously, we could do things at inference time like reasoning."

The Santa Clara, California-based company has more than 90 percent of the market for so-called accelerator chips -- processors that speed up AI work. It has also branched out to selling computers, software, AI models, networking and other services -- part of a push to get more companies to embrace artificial intelligence.

Nvidia is facing attempts to loosen its grip on the market. Data center operators such as Amazon.com Inc.'s AWS and Microsoft are developing in-house alternatives. And Advanced Micro Devices, already an Nvidia rival in gaming chips, has emerged as an AI contender. AMD plans to share the latest on its AI products at an event Thursday.

© 2024 Bloomberg LP

[2]

Nvidia CEO Says 'Reasoning' AI Will Depend on Cheaper Computing

Nvidia Corp. Chief Executive Officer Jensen Huang said that the future of artificial intelligence will be services that can "reason," but such a stage requires the cost of computing to come down first. Next-generation tools will be able to respond to queries by going through hundreds or thousands of steps and reflecting on their own conclusions, he said during a podcast hosted by Arm Holdings Plc CEO Rene Haas. That will give this future software the ability to reason and set it apart from current systems such as OpenAI's ChatGPT, which Huang said he uses every day.

[3]

Nvidia wants to drive AI costs down amid the rise of 'reasoning' models

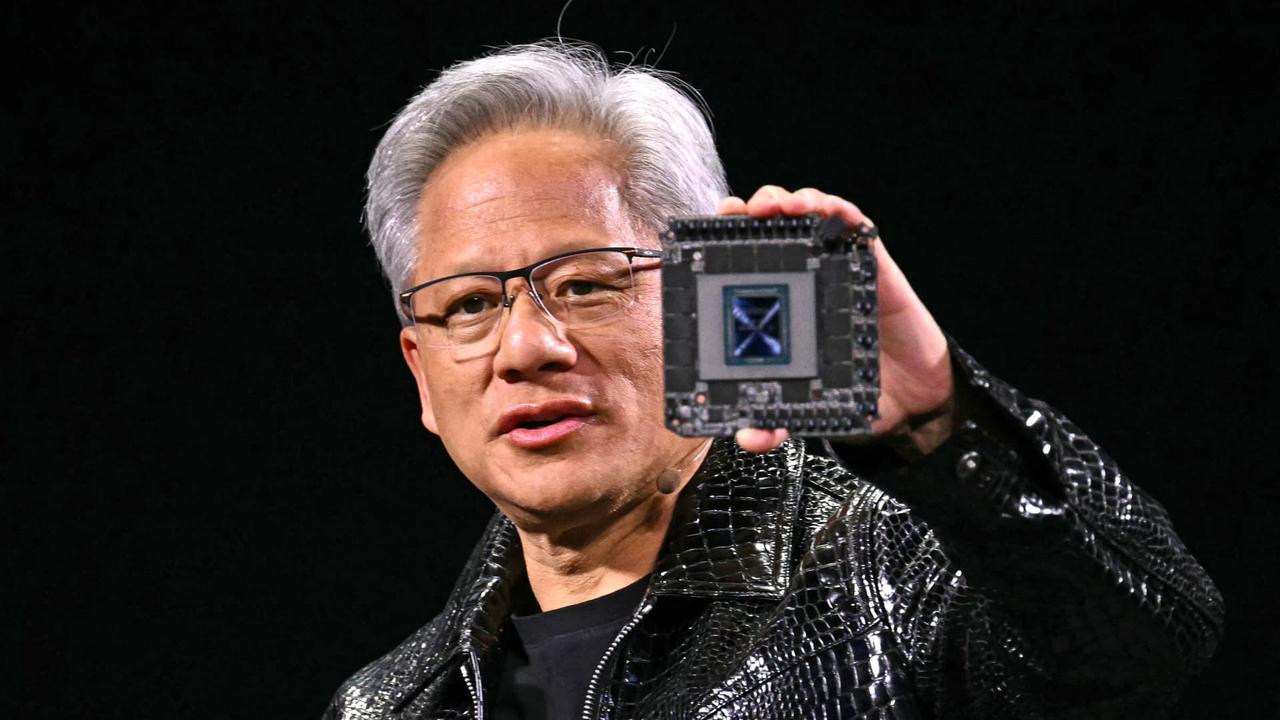

Nvidia's (NVDA) chips have been a driver of the current artificial intelligence boom -- and the chipmaker only wants to make it move faster, chief executive Jensen Huang said.

During an appearance on the Tech Unheard podcast, Huang was asked by host Rene Haas, chief executive of British semiconductor company Arm (ARM), if the pace of AI innovation is moving "faster than you imagined." Huang replied, "No," and said Nvidia is "trying to make it go faster."

"We've gone to a one-year cycle," Huang said about the company's production of new chips. "And the reason for that is because the technology has the opportunity to move fast."

Nvidia is designing "six or seven new chips per system," Huang said, then using "co-design to reinvent the entire system" and inventing other technologies that allow it to improve performance by two or three times while using the same amount of energy and cost each year.

"That's another way of, essentially, reducing the cost of AI by two or three times per year," Huang said. "That is way faster than Moore's Law."

Over the next few years, Huang said, Nvidia wants to drive down the cost of AI amid the rise of even more complex models. This evolution is already underway. In September, OpenAI released a new series of "reasoning" AI models called o1, which are "designed to spend more time thinking before they respond," the way humans do.

In the future, AI services such as OpenAI's ChatGPT, which Huang said he uses every day, will "iteratively reason about the answer" and could go through hundreds or thousands of inferences before producing an output. This level of complex processing would require significantly more computational power than current models.

Despite the increased demands, Huang believes the trade-off is worthwhile. "[T]he quality of the answer is so much better," Huang said. "We want to drive the cost down so that we could deliver this new type of reasoning inference with the same level of cost and responsiveness as the past."

On Wednesday, Nvidia's stock was climbing back toward its record high $135 close in June. The chipmaker's shares were down 0.27% during midday trading, but opened up almost 1% at around $134 per share.

Nvidia's CEO Jensen Huang discusses the future of AI, emphasizing the need for cheaper computing to enable 'reasoning' AI services. He outlines Nvidia's strategy to boost chip performance and reduce AI costs.

Nvidia's Vision for 'Reasoning' AI

Jensen Huang, CEO of Nvidia, has outlined a vision for the future of artificial intelligence that depends on cheaper computing to enable more advanced 'reasoning' AI services. In a recent podcast hosted by Arm Holdings CEO Rene Haas, Huang shared insights into Nvidia's strategy and the evolving landscape of AI technology [1][2].

The Promise of 'Reasoning' AI

Huang envisions next-generation AI tools that can "reason" by processing queries through hundreds or thousands of steps and reflecting on their own conclusions. This capability would set future AI systems apart from current models like OpenAI's ChatGPT, which Huang said he uses every day [1][2].

The Nvidia CEO believes that these advanced AI services will offer superior quality in their responses:

"[T]he quality of the answer is so much better," Huang stated, highlighting the potential of reasoning AI [3].

Driving Down Costs for AI Innovation

A key focus for Nvidia is reducing the cost of AI computing to make these advanced systems feasible. Huang explained:

"We're able to drive incredible cost reduction for intelligence. We all realize the value of this. If we can drive down the cost tremendously, we could do things at inference time like reasoning." [1]
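As a rough illustration of why cost matters for inference-time reasoning, the sketch below estimates how many years of compounding cost reduction it would take before a multi-step reasoning query costs what a single-pass response costs today. It rests on simplifying assumptions that are not from the article: each reasoning step is taken to cost the same as one of today's responses, and the annual improvement is taken to be constant. The function name `years_to_parity` is hypothetical.

```python
import math


def years_to_parity(steps: int, annual_gain: float) -> float:
    """Years until a query with `steps` inference passes costs the same as
    one pass costs today, if cost per pass falls by `annual_gain` times
    each year.

    Assumption (not from the article): every pass costs the same, so a
    reasoning query is simply `steps` times more expensive than one pass.
    """
    if steps < 1:
        raise ValueError("steps must be at least 1")
    if annual_gain <= 1.0:
        raise ValueError("annual_gain must be greater than 1")
    # Solve steps / annual_gain**years == 1 for years.
    return math.log(steps) / math.log(annual_gain)


if __name__ == "__main__":
    # "Hundreds or thousands" of steps, at two to three times per year.
    for steps in (100, 1000):
        for gain in (2.0, 3.0):
            years = years_to_parity(steps, gain)
            print(f"{steps} steps at {gain:.0f}x/year: {years:.1f} years")
```

Under these assumptions the answer grows only logarithmically with the number of steps, which is why a steady yearly multiple can absorb a large increase in per-query work.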

Nvidia's Strategy for Performance Improvement

To achieve this goal, Nvidia has implemented an aggressive strategy:

- Annual performance boost: Nvidia aims to increase chip performance by two to three times every year while maintaining the same cost and energy consumption levels [1][3].
- Accelerated development cycle: The company has shifted to a one-year cycle for producing new chips, designing "six or seven new chips per system" [3].
- System-wide innovation: Nvidia is reinventing entire systems through co-design and developing new technologies to improve performance [3].

Huang emphasized that this approach allows Nvidia to reduce AI costs by two to three times annually, outpacing Moore's Law [3].
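The comparison with Moore's Law can be made concrete with a small compounding calculation. The sketch below is illustrative only: it reads Moore's Law as a doubling every two years (a common formulation, assumed here and not stated in the article), and it treats a performance gain at constant cost as an equal reduction in cost, following Huang's framing. The names `relative_cost` and `MOORE_ANNUAL_GAIN` are hypothetical.

```python
def relative_cost(years: float, annual_gain: float) -> float:
    """Cost of a fixed AI workload after `years`, relative to 1.0 today,
    if performance per unit of cost improves by `annual_gain` times a year."""
    return 1.0 / (annual_gain ** years)


# Assumption: Moore's Law read as a doubling every two years,
# i.e. roughly 1.41x per year. This figure is not from the article.
MOORE_ANNUAL_GAIN = 2 ** 0.5

if __name__ == "__main__":
    for years in (1, 2, 5):
        low = relative_cost(years, 2.0)    # lower end of Huang's range
        high = relative_cost(years, 3.0)   # upper end of Huang's range
        moore = relative_cost(years, MOORE_ANNUAL_GAIN)
        print(f"after {years} year(s): 2x/yr -> {low:.4f}, "
              f"3x/yr -> {high:.4f}, Moore-style -> {moore:.4f}")
```

Under these assumptions, five years of 2x annual gains cut cost to 1/32 of today's level and 3x annual gains to 1/243, while the Moore-style pace reaches only about 1/5.7.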

Market Position and Competition

Nvidia currently dominates the market for accelerator chips, holding over 90% market share. These specialized processors are crucial for speeding up AI workloads [1].

However, the company faces increasing competition:

- In-house alternatives: Major cloud providers like Amazon's AWS and Microsoft are developing their own AI chips [1].
- Rival chipmakers: Advanced Micro Devices (AMD) is emerging as a significant contender in the AI chip market [1].

The Road Ahead

As AI models become more complex, exemplified by OpenAI's recent release of "reasoning" AI models called o1, the demand for computational power is set to increase significantly [3]. Nvidia's strategy of driving down costs while boosting performance aims to meet this growing demand, potentially reshaping the landscape of AI technology and its applications across various industries.

Huang's vision underscores the transformative potential of AI and highlights Nvidia's commitment to pushing the boundaries of what's possible in artificial intelligence.

Summarized by

Navi
