OpenAI releases GPT-5.4 mini and nano, built for speed over size in AI software engineering

5 Sources

[1]

OpenAI's Latest AI Models Are Built for Speed

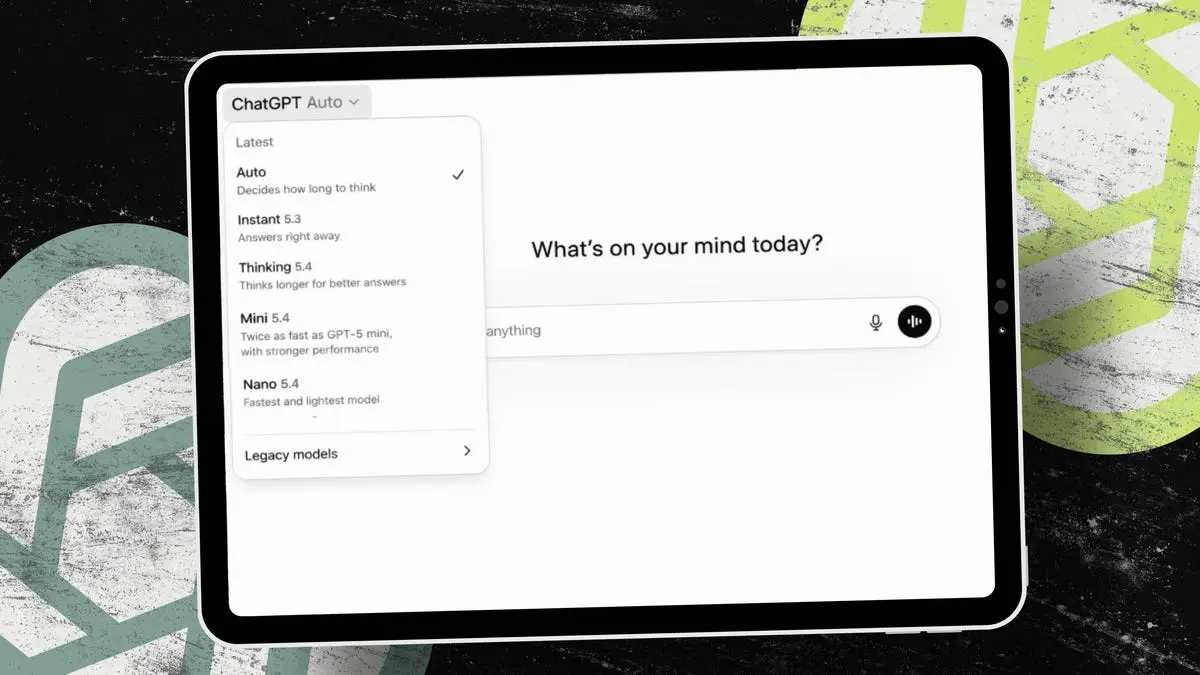

The latest models for ChatGPT users and developers using OpenAI's API are designed to be workhorses, built for tasks like vibe coding, where big, powerful AI models are expensive overkill. GPT-5.4 mini and nano, which OpenAI released Tuesday, are the smallest and fastest versions of GPT-5.4, which debuted earlier this month. These new models are part of an effort by OpenAI to lean into coding as it battles with rival Anthropic for the AI software engineering market. OpenAI's latest models for its Codex coding software have directly challenged Anthropic's Claude Code, which went viral at the end of 2025 for its ability to create apps from scratch. Disclosure: Ziff Davis, CNET's parent company, in 2025 filed a lawsuit against OpenAI, alleging it infringed Ziff Davis copyrights in training and operating its AI systems. OpenAI says GPT-5.4 mini is more than twice as fast as its predecessor, GPT-5 mini, at tasks like coding, reasoning and tool use. On some benchmarks, it's close to the capabilities of the standard GPT-5.4 model. The company suggested GPT-5.4 mini is best for things like editing and debugging code. It could be used as a subagent in Codex, with a larger model like GPT-5.4 delegating certain tasks to the faster, cheaper model. GPT-5.4 nano is even smaller, and OpenAI suggested it for grunt work like classifying and extracting data. GPT-5.4 mini will be available for developers through the API and through Codex and ChatGPT. ChatGPT Free and Go users can access it through the "Thinking" feature. Others will find it as the fallback model when they hit the rate limit for GPT-5.4 Thinking. GPT-5.4 nano is only available in the API.

[2]

OpenAI's GPT-5.4 mini and nano launch - with near flagship performance at much lower cost

Developers can mix large planning models with cheaper subagents. Over the past few weeks, we have seen the generation of OpenAI's flagship large language models iterate from GPT-5.3 to GPT-5.4. Think of the model as the engine that powers AI computation. Each generational jump usually results in increased performance and accuracy. The actual releases can be a bit difficult to track without a scorecard. On March 5, OpenAI released GPT-5.4 Thinking, a high-performance, in-depth thinking model. Two days earlier, it released GPT-5.3 (not 5.4) Instant, a model that "makes everyday conversations more consistently helpful and fluid," but not necessarily more accurate. This week, OpenAI is releasing the GPT-5.4 mini and GPT-5.4 nano models. These models are designed for fast, efficient, high-volume AI workloads. These are basically the budget language model offerings. For many AI workflows, the most effective model is one that balances strong performance with fast responses and reliable tool use. According to OpenAI, "These models are built for the kinds of workloads where latency directly shapes the product experience: coding assistants that need to feel responsive, subagents that quickly complete supporting tasks, computer-using systems that capture and interpret screenshots, and multimodal applications that can reason over images in real-time." The company said, "In these settings, the best model is often not the largest one -- it's the one that can respond quickly, use tools reliably, and still perform well on complex professional tasks." Compared to GPT-5 mini, GPT-5.4 mini improves across coding, reasoning, multimodal understanding, and tool use. The model runs more than twice as fast as GPT-5 mini.
GPT-5.4 nano is the smallest and fastest model, aimed at classification, extraction, ranking, and simpler coding-support tasks. When looking at the smaller, less expensive models, performance is the distinguishing factor. Buyers want to know just how much bang for the buck they're getting. To illustrate this performance, OpenAI is showing substantial benefits over models released just months earlier: GPT-5.4 mini approaches GPT-5.4-level pass rates while delivering faster execution. In other words, the smaller, lighter GPT-5.4 mini model performs almost as well as the full GPT-5.4 model on benchmark tests (the "pass rates") that measure if the model solves problems correctly. GPT-5.4 nano splits the difference. For example, it scores 52.39% on SWE-bench Pro and 46.30% on Terminal Bench 2.0, not as high as GPT-5.4 mini but still considerably better than GPT-5 mini. Technology specialist Hebbia builds tools that help professionals dig through enormous collections of documents using natural language. Their offerings appeal to users in sectors such as finance, law, and research, where the ability to analyze and derive insights from many documents at once is particularly helpful. According to Aabhas Sharma, CTO at Hebbia: "GPT-5.4 mini delivers strong end-to-end performance for a model in this class. In our evaluations, it matched or exceeded competitive models on several output tasks and citation recall at a much lower cost. It also achieved higher end-to-end pass rates and stronger source attribution than the larger GPT-5.4 model." Digital workspace Notion is the darling of internet-based productivity wonks. I'm writing this article in my Notion workspace. The technology provides a home for both structured and unstructured data. You can also use Notion to build no-code mini applications for information management.
I use Notion to track my article production, internal projects, video plans, development projects, and more. Abhisek Modi, AI engineering lead at Notion, said: "GPT-5.4 mini handles focused, well-defined tasks with impressive precision. For editing pages specifically, it matched and often exceeded GPT-5.2 on handling complex formatting at a fraction of the compute." Modi continued: "Until recently, only the most expensive models could reliably navigate agentic tool calling. Today, smaller models like GPT-5.4 mini and nano can easily handle it, which will let our users build Custom Agents on Notion pick exactly the amount of intelligence they need." I haven't been super-impressed by Notion's AI. Hopefully, by incorporating these new models, Notion AI's performance will improve considerably. When you start to look at how agents fit into the overall ecosystem, it becomes apparent that AI can be structured to mirror real-world human operations. For example, you can combine a more powerful AI model (like GPT-5.4 Thinking) with faster, cheaper models like GPT-5.4 mini in the same way you might have a senior engineer managing a team of junior engineers. Agentic systems can combine models of different sizes, with larger models planning tasks and smaller models executing subtasks. In this context, GPT-5.4 mini can handle subagent work, such as searching codebases, reviewing files, and processing documents. OpenAI said: "GPT-5.4 mini is also strong on multimodal tasks, particularly those related to computer use. The model can quickly interpret screenshots of dense user interfaces to complete computer use tasks with speed." GPT-5.4 mini is available in API, Codex, and ChatGPT versions. For Free and Go tier users, GPT-5.4 mini is accessible via the "Thinking" option in the plus menu.
OpenAI said: "For all other users, GPT-5.4 mini is available as a rate limit fallback for GPT-5.4 Thinking." The company said that for programmers, GPT-5.4 mini is available across the Codex app, CLI, IDE extension, and web. OpenAI said that the mini model "Uses only 30% of the GPT-5.4 quota, letting developers quickly handle simpler coding tasks in Codex for about one-third the cost." Codex can also delegate to GPT-5.4 mini subagents so that less reasoning-intensive work runs on the less costly model. You can see how costs compare when you look at them side by side: GPT-5.4 mini is priced at $0.75 per million input tokens and $4.50 per million output tokens, and GPT-5.4 nano at $0.20 per million input tokens and $1.25 per million output tokens. By comparison, GPT-5.4 is priced at $2.50 per million input tokens and $15.00 per million output tokens. That's a lot more expensive. It makes sense that if you're trying to keep costs down and don't need the extra processing power, it's better to use the mini and nano models.
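
One way to read OpenAI's 30% quota figure is as simple arithmetic. The sketch below assumes (this is an interpretation, not something OpenAI specifies) that a task run on GPT-5.4 mini consumes 0.30 of the quota the same task would consume on GPT-5.4. The function names and the "flagship-task" unit are hypothetical.

```python
# Fraction of the GPT-5.4 quota that GPT-5.4 mini uses, per OpenAI's statement.
MINI_QUOTA_FRACTION = 0.30


def quota_used(flagship_tasks: int, mini_tasks: int,
               fraction: float = MINI_QUOTA_FRACTION) -> float:
    """Quota consumed, in units of one flagship-sized task (an assumed unit)."""
    return flagship_tasks + mini_tasks * fraction


def mini_tasks_per_flagship_task(fraction: float = MINI_QUOTA_FRACTION) -> float:
    """How many mini tasks fit in the quota of a single flagship task."""
    return 1.0 / fraction


if __name__ == "__main__":
    print(f"10 tasks, all on the flagship: {quota_used(10, 0):.1f} units")
    print(f"4 on the flagship, 6 on mini:  {quota_used(4, 6):.1f} units")
    print(f"Mini tasks per flagship task:  {mini_tasks_per_flagship_task():.2f}")
```

Under that assumption, moving six of ten equally sized tasks to mini cuts quota use from 10 units to 5.8.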

[3]

GPT-5.4 mini brings some of the smarts of OpenAI's latest model to ChatGPT Free and Go users

When OpenAI released GPT-5.4 at the start of March, the company said the new model was designed primarily for professional work like programming and data analysis. Now OpenAI is launching GPT-5.4 mini and nano, and while it is once again highlighting the usefulness of these new systems for tasks like coding, one of the new models is available to Free and Go users. What's more, that model, GPT-5.4 mini, even offers performance that approaches GPT-5.4 in a handful of areas. As a Free or Go user, you can access 5.4 mini by selecting "Thinking" from ChatGPT's plus menu. For paid users, the model is the new fallback for when you've hit your rate limit with 5.4 proper. OpenAI says 5.4 mini offers better performance than GPT-5 mini in several key areas, including reasoning, multimodal understanding and tool use. That means 5.4 mini is better at parsing non-text inputs such as images and audio, and has a more nuanced understanding of how to do things like search the web. It does all of this while running more than twice as fast as its predecessor. As for GPT-5.4 nano, OpenAI says it's ideal for tasks such as data classification and extraction where speed and cost-efficiency are top of mind. If you're a ChatGPT user, you won't find the new model in the chatbot. Instead, OpenAI is making it only available through its API service. The company envisions developers using more advanced models to delegate tasks to AI agents running GPT-5.4 nano, and that's reflected in the cost of the new model, which OpenAI has priced starting at $0.20 per million input tokens.

[4]

OpenAI Releases GPT-5.4 Mini and Nano, Which Could Be More Useful Than the Big Model

Developers can now run hybrid AI systems where a flagship model plans tasks while smaller models handle the bulk of the work. OpenAI isn't slowing down. Less than two weeks after launching GPT-5.4 -- itself released just two days after GPT-5.3 -- the company dropped two more models on Tuesday: GPT-5.4 Mini and GPT-5.4 Nano. These aren't stripped-down versions of the flagship model -- they're purpose-built machines designed for the kind of work where waiting half a minute for an answer is not an option. OpenAI calls them its "most capable small models yet," saying that GPT-5.4 Mini is more than two times faster than GPT-5 Mini. If you've ever watched a coding assistant think for 45 seconds before editing three lines of code, then you understand the appeal of a fast model. So why would anyone release a less accurate model on purpose? The short answer: because accuracy isn't always the bottleneck. If you're running a customer service chatbot that answers the same 200 questions all day, then you don't need the model that scored best on PhD-level chemistry exams. You need the one that responds in under a second and costs a fraction of a cent per reply. That's the space these models are built for. But it doesn't mean these models are dumb or unreliable. On coding benchmarks, GPT-5.4 Mini scored 54.4% on SWE-Bench Pro -- a test that measures a model's ability to fix real GitHub issues -- compared to 45.7% for the old GPT-5 Mini and 57.7% for the full GPT-5.4. On OSWorld-Verified, which tests how well a model can actually operate a desktop computer by reading screenshots, Mini hit 72.1%, just shy of the flagship's 75.0% -- though only the flagship clears the human baseline of 72.4%, with Mini falling 0.3 points short. GPT-5.4 Nano, meanwhile, scores 52.4% on SWE-Bench Pro and 39.0% on OSWorld -- lower than Mini, but still a major leap over previous Nano-class models. "GPT-5.4 marks a step forward for both Mini and Nano models in our internal evaluations," Perplexity Deputy CTO Jerry Ma said after testing both. 
"Mini delivers strong reasoning, while Nano is responsive and efficient for live conversational workflows." Instead of routing every single task through an expensive flagship model, you can now build systems where the big model plans and coordinates while smaller models handle the actual grunt work in parallel -- searching a codebase here, reading a document there, or processing a form somewhere else. As we saw in our GPT-5.4 vs. Grok 4.20 comparison, where the model sits in the workflow matters as much as which model you pick. GPT-5.4 Mini runs at a rate of $0.75 per million input tokens and $4.50 per million output tokens via the API. GPT-5.4 Nano is even cheaper: $0.20 per million input tokens and $1.25 per million output tokens -- a price point that makes running a huge amount of queries per day financially realistic for startups. For context, Nano is roughly four times cheaper than Mini on inputs. For regular ChatGPT users, GPT-5.4 Mini is available today to Free and Go users via the "Thinking" option in the plus menu. Paid subscribers who hit their GPT-5.4 rate limits will automatically fall back to Mini. GPT-5.4 Nano, however, is API-only for now -- OpenAI is clearly positioning it as a developer tool, not a consumer one.

[5]

OpenAI Just Revealed Cheaper Versions of Its Flagship Model. Here's How to Use Them

OpenAI has introduced GPT-5.4 mini and GPT-5.4 nano, two small, cost-efficient versions of its flagship AI model. In a press release, OpenAI said that 5.4 nano and mini are "our most capable small models yet," coming close to matching the default GPT-5.4 model's abilities at coding and agentically operating software. But they come at a much cheaper price. The new models are also significantly faster than the flagship model and previous small models, making them particularly useful for collaborative vibe coding work. OpenAI typically releases models in four sizes: a large pro version, a middle-of-the-road standard version, a small mini version, and an even smaller nano version. Smaller models are faster and cheaper, but not as capable as their larger siblings. Both models excel in "workloads where latency directly shapes the product experience," according to OpenAI, such as coding assistants that can operate and make changes in real time and computer-use agents that can handle data entry.

OpenAI launched GPT-5.4 mini and GPT-5.4 nano on Tuesday, marking a strategic shift toward smaller and faster AI models designed for high-volume workloads. GPT-5.4 mini runs more than twice as fast as its predecessor, GPT-5 mini, while approaching flagship performance on coding tasks. GPT-5.4 mini is now available to ChatGPT Free and Go users, while nano targets developers building cost-efficient AI systems.

OpenAI Launches Smaller and Faster AI Models for High-Volume Workloads

OpenAI unveiled GPT-5.4 mini and GPT-5.4 nano on Tuesday, less than two weeks after releasing its flagship AI model GPT-5.4 [1][4]. These cost-efficient AI models represent a strategic pivot toward speed and efficiency rather than raw computational power, targeting workloads where latency directly shapes user experience [2]. The company describes them as "our most capable small models yet," designed specifically for AI software engineering tasks where waiting 45 seconds for a response isn't practical [4][5].

GPT-5.4 mini runs more than twice as fast as GPT-5 mini while delivering performance that approaches the flagship AI model on key benchmarks [1][3]. On SWE-Bench Pro, a test measuring a model's ability to fix real GitHub issues, GPT-5.4 mini scored 54.4% compared to 45.7% for GPT-5 mini and 57.7% for the full GPT-5.4 [4]. The model also achieved 72.1% on OSWorld-Verified, which tests how well AI can operate a desktop computer by reading screenshots. That is just below both the flagship's 75.0% and the human baseline of 72.4% [4].

Developers Gain Access to Budget Models with Near-Flagship Performance

The release enables developers to build hybrid AI systems where a flagship model plans and coordinates while smaller models handle execution tasks in parallel [4]. GPT-5.4 mini is priced at $0.75 per million input tokens and $4.50 per million output tokens via the API, while GPT-5.4 nano costs just $0.20 per million input tokens and $1.25 per million output tokens, roughly four times cheaper than mini [4]. This pricing makes running high-volume AI workloads financially realistic for startups and enterprises alike.

OpenAI suggests GPT-5.4 mini excels at coding tasks like editing and debugging code, with improved reasoning and tool use capabilities [1][3]. The model also demonstrates better multimodal understanding, meaning it can parse non-text inputs such as images and audio more effectively than previous versions [3]. GPT-5.4 nano, meanwhile, targets grunt work like data classification and extraction, ranking, and simpler coding-support tasks [1][2]. It scored 52.39% on SWE-Bench Pro and 46.30% on Terminal Bench 2.0, not as high as mini but still considerably better than GPT-5 mini [2].
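
The per-token prices quoted above can be turned into a rough cost estimate. The following is a minimal sketch, not an official calculator: the mini and nano prices come from [4], the flagship price from [2], and the dictionary keys, function names, and sample workload are illustrative assumptions rather than confirmed API identifiers.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Price:
    input_per_million: float   # USD per 1M input tokens
    output_per_million: float  # USD per 1M output tokens


# Prices as reported in this roundup; keys are illustrative labels only.
PRICES = {
    "gpt-5.4": Price(2.50, 15.00),
    "gpt-5.4-mini": Price(0.75, 4.50),
    "gpt-5.4-nano": Price(0.20, 1.25),
}


def cost_usd(model: str, input_tokens: int, output_tokens: int) -> float:
    """Estimated cost in USD for a given number of input and output tokens."""
    p = PRICES[model]
    total = input_tokens * p.input_per_million + output_tokens * p.output_per_million
    return total / 1_000_000


def price_ratio(expensive: str, cheap: str) -> tuple[float, float]:
    """(input ratio, output ratio) of one model's prices to another's."""
    a, b = PRICES[expensive], PRICES[cheap]
    return (a.input_per_million / b.input_per_million,
            a.output_per_million / b.output_per_million)


if __name__ == "__main__":
    # Hypothetical workload: 10M input tokens and 2M output tokens.
    for model in PRICES:
        print(f"{model}: ${cost_usd(model, 10_000_000, 2_000_000):.2f}")
    print("mini vs nano (input, output):", price_ratio("gpt-5.4-mini", "gpt-5.4-nano"))
```

For that hypothetical workload the estimate is $55.00 on GPT-5.4, $16.50 on mini, and $4.50 on nano. Mini costs 3.75 times as much as nano on input and 3.6 times as much on output, which is where the "roughly four times" figure comes from.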

ChatGPT Users Get Access to Advanced Capabilities at Lower Tiers

For ChatGPT Free and Go users, GPT-5.4 mini is now accessible through the "Thinking" feature in the plus menu [1][3]. Paid subscribers will find it as the fallback model when they hit rate limits for GPT-5.4 Thinking [1]. GPT-5.4 nano remains API-only, positioning it as a developer tool rather than a consumer-facing option [3][4].

The launch intensifies OpenAI's competition with Anthropic in the AI software engineering market, particularly as the company battles for dominance in coding assistants [1]. Early feedback from technology companies suggests strong performance. Aabhas Sharma, CTO at document analysis platform Hebbia, noted that "GPT-5.4 mini delivers strong end-to-end performance for a model in this class" while achieving "higher end-to-end pass rates and stronger source attribution than the larger GPT-5.4 model" [2]. Abhisek Modi, AI engineering lead at Notion, said the model "matched and often exceeded GPT-5.2 on handling complex formatting at a fraction of the compute" [2].

Cost-Efficiency Enables New Agentic Workflows and Real-Time Applications

The models are built for scenarios where cost-efficiency and low latency matter more than maximum capability: coding assistants that need to feel responsive, subagents that quickly complete supporting tasks, computer-using systems that capture and interpret screenshots, and applications requiring real-time reasoning [2]. According to OpenAI, "the best model is often not the largest one -- it's the one that can respond quickly, use tools reliably, and still perform well on complex professional tasks" [2].

This architecture mirrors real-world human operations, where managers delegate specific tasks to specialists rather than handling everything themselves [2]. Developers can now route tasks intelligently: a flagship model might plan the overall approach while mini handles code editing and nano processes data extraction, all running in parallel [4]. The pricing structure makes this practical even at scale, with nano's $0.20 per million input tokens enabling massive query volumes without breaking budgets [4].
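
The delegation pattern described above can be sketched in a few lines. This is an illustration under stated assumptions, not OpenAI's or Codex's implementation: the tier labels, the routing table, and `run_subtask` (a stand-in that formats a string instead of calling a model) are all hypothetical.

```python
from concurrent.futures import ThreadPoolExecutor

# Hypothetical tier labels for illustration; not confirmed API model identifiers.
FLAGSHIP, MINI, NANO = "flagship", "mini", "nano"

# Task kinds taken from the article's examples, mapped to the tier suggested for them.
ROUTES = {
    "plan": FLAGSHIP,
    "edit_code": MINI,
    "search_codebase": MINI,
    "extract_data": NANO,
    "classify": NANO,
}


def route(task_kind: str) -> str:
    """Pick a tier for a task kind; unknown kinds fall back to the flagship (an assumption)."""
    return ROUTES.get(task_kind, FLAGSHIP)


def run_subtask(task: tuple[str, str]) -> str:
    """Stand-in for a model call; a real system would send the payload to the chosen tier."""
    kind, payload = task
    return f"[{route(kind)}] {kind}: {payload}"


def run_in_parallel(subtasks: list[tuple[str, str]], max_workers: int = 4) -> list[str]:
    """Run subtasks concurrently; results come back in submission order."""
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        return list(pool.map(run_subtask, subtasks))


if __name__ == "__main__":
    subtasks = [
        ("edit_code", "rename a function in utils.py"),
        ("extract_data", "pull invoice totals from 200 PDFs"),
        ("classify", "label support tickets by topic"),
    ]
    for line in run_in_parallel(subtasks):
        print(line)
```

Falling back to the largest tier for unrecognized task kinds is a conservative design choice here; a cost-sensitive system might instead default to a smaller tier and escalate only on failure.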
