Nvidia's DLSS 5 faces fierce backlash as gamers and developers reject generative AI overhaul

110 Sources

[1]

Nvidia CEO tries to explain why DLSS 5 isn't just "AI slop"

Last week, Nvidia's public reveal of DLSS 5 -- and its "generative AI" enhanced glow-ups of gaming scenes -- drew widespread condemnation from the gaming community. In a podcast published Monday, though, Nvidia CEO Jensen Huang tried to differentiate the technology's optional, artist-guided graphical enhancements from the "AI slop" that Huang says he's not a fan of. As part of a nearly two-hour-long interview with the Lex Fridman Podcast, Huang was asked to explain the "drama" around DLSS 5 and "the gamers online [that] were concerned that it makes games look like AI slop." Huang responded that he "could see where they're coming from, because I don't love AI slop myself... all of the AI-generated content increasingly looks similar and they're all beautiful, so... I'm empathetic towards what they're thinking." At the same time, Huang said DLSS 5 is decidedly separate from that kind of "slop," because it "is 3D conditioned, 3D guided." The artists behind a game are still the ones creating the in-game structural geometry and textures that form the "ground truth structure" that DLSS 5 works from, Huang said. "And so every single frame, it enhances but it doesn't change anything," he said. For the most part, though, gamers haven't been worried about DLSS 5 creating trippy new content from the ground up like some generative AI world models. Instead, the worry is that DLSS 5's visual "enhancements" could end up smoothing out many disparate games toward a single, flattened, homogenized photo-realism standard. That's a misunderstanding of how DLSS 5 works, Huang said. It's not a technology where a game ships in one state and "then we're gonna post-process it," he said. Instead, DLSS 5 "is integrated with the artist, and so it's about giving the artist the tool of AI, the tool of generative AI." Because DLSS 5 is "open," Huang said artists can train the model for the specific kind of look they want. 
In the future, Huang said artists will also be able to prompt DLSS 5 with examples or a description of a desired look -- "I want it to be a toon shader," for instance. And if visual artists want to use DLSS 5's models "to generate the opposite of photoreal, yeah, it'll do that too," he said. The interview follows similar comments Huang gave in an interview with Tom's Hardware last week, when he said "it's not post-processing at the frame level, it's generative control at the geometry level." But any "confusion" on this point from gamers is entirely understandable, since previous versions of DLSS were explicitly sold as relatively turnkey post-processing to improve resolutions and/or frame rates by generating new frames that look like the ones rendered by the game itself. If Nvidia wanted to introduce a new artist-facing tool for using generative AI to create customizable shader-style effects, it could have done so without overturning the existing and well-known meaning and branding of DLSS. Elsewhere in the new podcast, Huang threw in an aside that if artists don't like the look of DLSS 5's enhancements, "they could decide not to use it, you know?" Whether or not individual artists want to use DLSS 5, though, Nvidia's announced partnerships with major publishers including Bethesda, Capcom, NetEase, NCSoft, Tencent, Ubisoft, and Warner Bros. Games suggest that the companies behind many big-budget games will be pushing the technology's use in their projects, at least for the time being. And while gamers will also be able to disable DLSS 5's "enhancements" if they don't like the way they look, that's not exactly the affirmative defense of the technology that generative AI boosters may have been hoping for from Nvidia. We still have months to go until Nvidia's planned debut of DLSS 5 in any actual games. We expect Huang and others at Nvidia will be spending a good deal of that time trying to explain and justify the new technology to a skeptical gaming public.

[2]

Gamers react with overwhelming disgust to DLSS 5's generative AI glow-ups

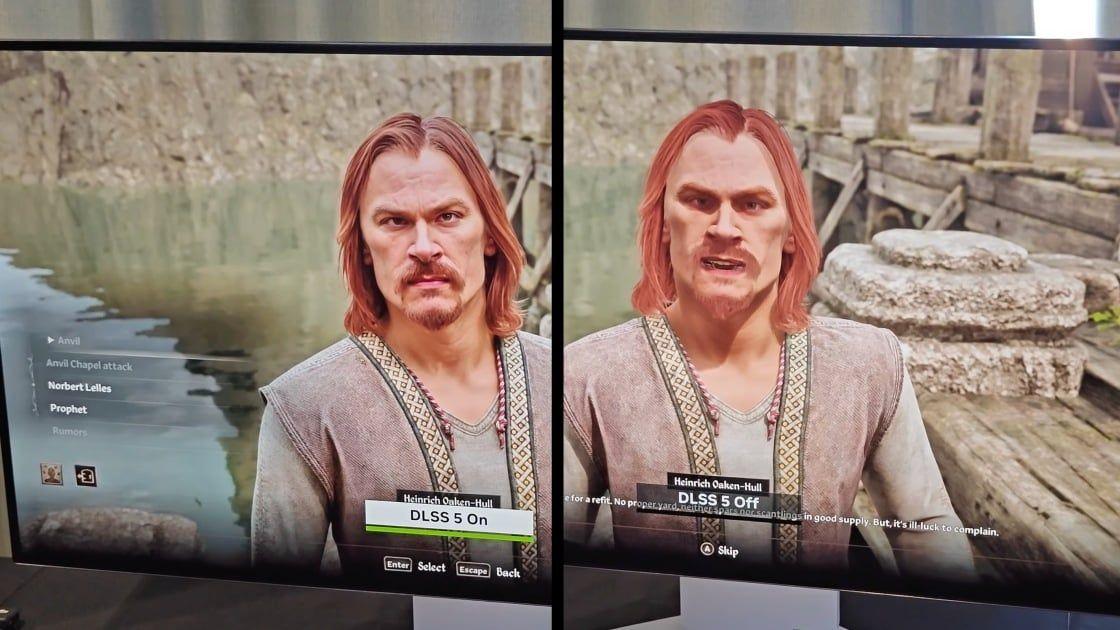

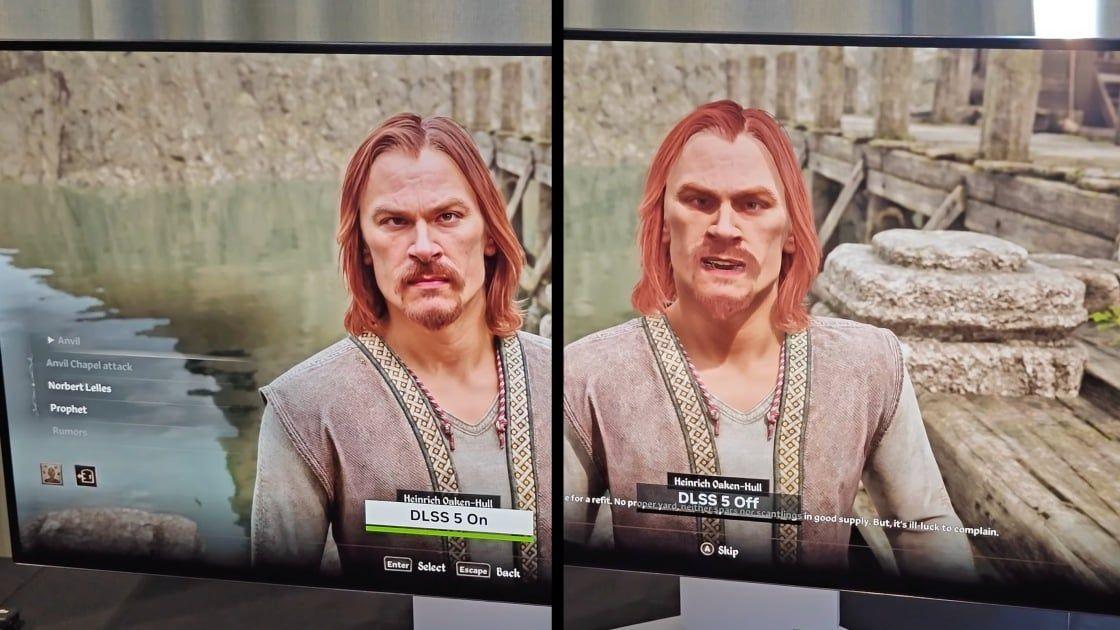

Since deep-learning super-sampling (DLSS) launched on 2018's RTX 2080 cards, gamers have been generally bullish on the technology as a way to effectively use machine learning upscaling techniques to increase resolutions or juice frame rates in games. With yesterday's tease of the upcoming DLSS 5, though, Nvidia has crossed a line from mere upscaling into complete lighting and texture overhauls influenced by "generative AI." The result is a bland, uncanny gloss that has received an instant and overwhelmingly negative reaction from large swaths of gamers and the industry at large. While previous DLSS releases rendered upscaled frames or created entirely new ones to smooth out gaps, Nvidia calls DLSS 5 -- which it plans to launch this autumn -- "a real-time neural rendering model" that can "deliver a new level of photoreal computer graphics previously only achieved in Hollywood visual effects." Nvidia CEO Jensen Huang said explicitly that the technology melds "generative AI" with "handcrafted rendering" for "a dramatic leap in visual realism while preserving the control artists need for creative expression." Unlike existing generative video models, which Nvidia notes are "difficult to precisely control and often lack predictability," DLSS 5 uses a game's internal color and motion vectors "to infuse the scene with photoreal lighting and materials that are anchored to source 3D content and consistent from frame to frame." That underlying game data helps the system "understand complex scene semantics such as characters, hair, fabric and translucent skin, along with environmental lighting conditions like front-lit, back-lit or overcast," the company says. You can see how DLSS 5 reinterprets that frame data for yourself in both Nvidia's announcement video and in a detailed Digital Foundry breakdown (which notes that the demo currently makes use of two RTX 5090s, with one completely dedicated to DLSS 5). 
But while Digital Foundry described the "transformational lighting" effects as "astonishing" numerous times in its write-up, the reaction from the rest of the gaming world has been overwhelmingly negative so far. Many of the reactions have focused on how DLSS 5 turns in-game faces into overly detailed, uncanny valley versions of the original models. Reactions have compared the effect to air-brushed pornography, "yassified, looks-maxed freaks", or those uncanny, unavoidable Evony ads. Others have noted how DLSS 5 seems to mangle the intended art direction by dampening shadows in favor of a homogenized look. Some game developers have leapt on the "artistic intent" angle, too. Thomas Was Alone developer Mike Bithell said the technology seems designed "for when you absolutely, positively, don't want any art direction in your gaming experience." And Gunfire Games Senior Concept Artist Jeff Talbot said, "in every shot the art direction was taken away for the senseless addition of 'details.' Each DLSS 5 shot looked worse and had less character than the original. This is just a garbage AI Filter." New Blood Interactive founder and CEO Dave Oshry added that DLSS 5's "AI dogshit is actually depressing" and lamented that future generations "won't even know this looks 'bad' or 'wrong' because to them it'll be normal." By way of damage control, Nvidia took to the comments on its YouTube reveal trailer (which are filled with thousands of negative takes) to stress that DLSS 5 "is not a filter" and that "game developers have full, detailed artistic control over DLSS 5's effects to ensure they maintain their game's unique aesthetic." That includes the ability to tweak intensity and color grading, or to use masking to switch the effect off entirely in "places where the effect shouldn't be applied." 
DLSS 5 OFF // DLSS 5 ON -- Tuba Zef (@tubazef.com), March 16, 2026, at 3:59 PM

Bethesda, one of the many major publishers Nvidia named as an early DLSS 5 partner, chimed in on social media to say that these videos are "a very early look, and our art teams will be further adjusting the lighting and final effect to look the way we think works best for each game. This will all be under our artists' control, and totally optional for players." From a public image perspective, though, the damage may already be done. DLSS 5 has quickly become a meme format in its own right, with Internet commenters using "DLSS 5 On" as visual shorthand for "overly cleaned up" or "mangled beyond recognition." We're not sure how you come back from that kind of instant infamy, but Nvidia will have until the fall to find out.

[3]

Gamers Hate Nvidia's DLSS 5. Developers Aren't Crazy About It Either

Nvidia's new AI upscaling technology for games struck gamers as uncanny and off-putting. Developers don't seem to like it either, but it could be "the default" in a few years. Nvidia announced a new version of its DLSS AI upscaling technology for its graphics cards earlier this week at its GPU Technology Conference (GTC), which it calls the Super Bowl of AI. But unlike previous versions of DLSS that used AI to improve frame rates in video games, DLSS 5 has a much more ambitious calling: using generative AI to make character faces in games look more realistic and detailed. The demonstration received sharp blowback on social media, with many finding the effect off-putting, reacting with outright disgust, and calling it yet another example of AI slop. DLSS, or deep-learning super-sampling, is a feature Nvidia introduced on its graphics cards in 2018. Its primary use has been to improve frame rates in video games by rendering games at a lower resolution, then using AI to upscale the image. More recent versions of DLSS insert AI-generated frames in between actual rendered frames. These techniques use less computing power than rendering every full frame natively, allowing for better gaming performance while maintaining visual fidelity and without overtaxing your PC's hardware. The feature can be turned on or off. "From a technical standpoint, it's quite an achievement," Kevin Bates, CEO and creator of the open source retro gaming handheld Arduboy, wrote in a message to WIRED. "I would have expected a cloud-based rendering service to provide it. The fact they expect to distill it down to what can run on a single [graphics] card later this year is insane." But DLSS 5 has crossed a generative-AI Rubicon. Instead of just being a tool Nvidia provides developers, it manifests as actual visual changes without their consent. While you can still turn it on or off in your video games, the technology has some developers -- not just gamers -- worried. 
Nvidia showed off a demo of the tech on games like Capcom's Resident Evil Requiem, Ubisoft's Assassin's Creed, and Bethesda's Starfield. The company says it's meant to improve the graphics and generate photorealistic details and lighting. The demo seemed to largely improve the lighting, which detractors compared to the glow of a ring light just out of frame. Faces became far more detailed, even introducing new facial features. It was also criticized on social media for oversexualizing characters, with people calling the look "yassified" or "porn faces" and comparing the effect to Instagram or Snapchat's glamour filters, which smooth out imperfections on a person's image. Gamers did not approve. The Verge called it motion smoothing, but worse. The tech also has other issues, like introducing unexpected artifacts in real time. You can see some of those problems in the official demo video itself. In a scene in a FIFA game where a soccer ball is being kicked into a net, the ball has weird artifacts on it with DLSS 5 on, making it look like a piece of the net is in front of the ball before it has even gone into the goal. (Pause the video at 59 seconds.) Characters' faces change too: the female character in Resident Evil Requiem has slightly but noticeably different features, with larger eyes, fuller lips, and a completely different nose. "It devalues an artist's creativity and intent on a basic level," says James Brady, a video game artist and designer who has worked on games like Call of Duty: Modern Warfare 3. "All this takes away from the artist's original design intent on the character and its shape language, with what pretty much functions on a surface level as a 'Snapchat filter.'"

[4]

Nvidia Teases DLSS 5 and Gamers Aren't Impressed

Nvidia opened its GTC conference with a keynote by CEO Jensen Huang, revealing the company's latest tech. Among the raft of AI developments, gamers were treated to the upcoming version of the company's AI-powered upscaling and optimization technology, DLSS (Deep Learning Super Sampling), touted as the "biggest breakthrough in computer graphics." Nvidia published a video illustrating how DLSS 5 can enhance graphics in Resident Evil Requiem, Starfield and other games, showing before-and-after takes. But gamers weren't thrilled. In fact, the response to DLSS 5 more closely resembled a collective backlash, replete with memes, ridicule and outrage. Gamers were quick to point out that DLSS 5 transformed the original graphics into something vastly different. Some called the visuals "AI slop" because they look like "yassified" AI-generated filters. Many worry that DLSS 5 could deviate from a creator's specific artistic vision. Critics also fear that if this technology becomes the industry standard, video game graphics might start to look the same, losing their unique visual identity. "Everything about this is a betrayal of these game's artistry," said YouTuber The Sphere Hunter in a post on X Monday. "Painting over handcrafted, intentional 3D art with shiny, wrinkly, sunken-in, porous, puckered, fraudulent, filtered nonsense is deeply disrespectful. If you want this, just watch gen-AI videos all day." Countless memes mocking the tech's exaggerated features flooded the internet. Others on social media parodied the effects DLSS 5 could produce in other games. In a Q&A on Tuesday, Huang addressed the backlash from gamers, calling them "completely wrong." Huang underlined that DLSS 5 "enhances and adds generative capability, but it doesn't change the artistic control" and that "it's in the direct control of the game developer." 
The team at Digital Foundry, which specializes in game technology and hardware reviews, called it "disruptive and transformative" and was generally positive about it, though it noted some hiccups. "[The images] looked a little bit uncanny, I would say, but definitely the overall portrayal of those characters is much more sophisticated," said Oliver Mackenzie, video producer and writer for Digital Foundry. Bethesda's official X account replied to comments from members of Digital Foundry about Starfield and The Elder Scrolls IV: Oblivion Remastered, both published by Bethesda. "This is a very early look, and our art teams will be further adjusting the lighting and final effect to look the way we think works best for each game. This will all be under our artists' control, and totally optional for players," the publisher said. DLSS 5 is set to be released sometime in the fall. Nvidia first released its DLSS tech back in 2018 with its RTX 2080 card: The RTX architecture brought Tensor cores, which accelerate the calculations used by the DLSS AI, to Nvidia's GeForce gaming cards. The deep learning technology was designed to upscale images and video from low resolution in real time to achieve higher frame rates. Gamers weren't impressed at first, but later versions of the technology did perform better in games that supported it. DLSS 4, released last year and updated to 4.5 as of January, made significant improvements to detail rendering, reducing motion artifacts, boosting frame rates, and generating more realistic lighting via path tracing (which incorporates interactions with ray-traced lighting). DLSS 5 works a bit differently than previous versions of the technology. According to Nvidia, DLSS 5 shifts from processing simple pixels to understanding 3D elements. By deconstructing characters into specific components -- such as skin, hair and clothing -- the AI can render them more consistently. 
This results in faster performance and much more realistic details, especially for textures and lighting. Game developers control how DLSS 5 enhances images and to what degree, ensuring it matches the game's aesthetic. The demo video showcased some positive enhancements, but others looked like sweeping changes to the characters and the environment. On Monday, Nvidia released a list of games slated to support DLSS 5. Nvidia has yet to provide a list of GPUs that will support the new technology. In an FAQ, the company says it will release a list of supported cards closer to launch.

[5]

Nvidia's DLSS 5 is like motion smoothing for video games, but worse

Yesterday Nvidia revealed its latest upscaling tech called DLSS 5, which it described as "the company's most significant breakthrough in computer graphics since the debut of real-time ray tracing in 2018." Sounds good, until you actually see it. According to Nvidia, the tech "infuses pixels with photoreal lighting and materials," but all anyone seemed to notice was that it turned recognizable faces into something resembling AI slop. Resident Evil Requiem protagonist Grace got a makeover that would make her look at home in a Tilly Norwood video. The Hogwarts Legacy kids looked like they'd been wrung through an Instagram filter. Even Liverpool captain Virgil van Dijk, a very real and famous person, had his features warped and became just some other dude with DLSS 5. This "significant breakthrough" imbues everything with a particular look that's become synonymous with AI-generated art. It's sort of like motion smoothing, if motion smoothing went a step further and changed people's faces -- and it's making everything look the same. It's important to note that the next time you play Requiem on a PC, Grace won't suddenly look like she was ripped out of a Grok Imagine demo. DLSS 5 doesn't launch until the fall, it'll require some beefy hardware to operate, and it is an optional feature. But it is a technology that is being pushed by one of the most valuable companies in the world, and it has support from major video game developers. And they all seem content with associating their games with a very particular aesthetic. In a statement on Nvidia's announcement blog, Bethesda boss Todd Howard said that "with DLSS 5 the artistic style and detail shine through without being held back by the traditional limits of real-time rendering," while noting that the feature will be available in Starfield. 
Jun Takeuchi, executive producer on many of Capcom's biggest blockbusters, including Requiem, said that "DLSS 5 represents another important step in pushing visual fidelity forward, helping players become even more immersed in the world of Resident Evil." It's a little strange to hear that some of the most influential names in games have decided that it's cool for Nvidia to replace their carefully crafted characters with generic AI-powered versions. In a follow-up tweet, Bethesda noted that what we're seeing is a "very early look," and that the studio's "art teams will be further adjusting the lighting and final effect to look the way we think works best for each game." So maybe the version of DLSS 5 that's available in the fall will look very different. But what we are seeing now points to a bleak future. AI has infiltrated nearly every aspect of our lives, and one of the most frustrating ways has been on an aesthetic level. AI-generated faces are an amalgamation of countless images, which are then used to spit out a sort of homogenized ideal. It's typically easy to identify thanks to a handful of telltale signs: unnaturally smooth skin and uniform features, perpetually cheerful eyes, a smiling mouth with full lips, perfectly styled hair that looks synthetic, small noses, and HDR-style lighting that highlights every contour. On their own, these can be typical facial features, but when every AI face has all or most of them, we start veering into the Uncanny Valley. That's why so many people reacted strongly to the faces in Nvidia's announcement: They don't just look bad, they look the same as everything else. That same aesthetic is prevalent everywhere from Instagram feeds to YouTube thumbnails, and it's been inching its way from social networks to more traditional forms of entertainment and culture. I've yet to see a good AI-generated film, and yet they keep coming, and you can identify them from a single frame. 
Nvidia's new tech is the most visible example of that aesthetic infiltrating games. There are a number of reasons why seeing AI mangle an artist's work is troublesome for games in particular. The industry has been ravaged by layoffs and studio closures following some very expensive misplaced bets and a post-pandemic slowdown, so the potential for replacing human work with slop doesn't sit well. It's also a medium where a subset of the audience has some very backward ideas about what a normal human woman looks like, so making existing characters somehow both more generic and more cartoonish through an AI tool is extremely problematic. Since the announcement, there have been a number of indie developers who have come out to lambast DLSS 5 through memes and more explicitly negative statements. And while a large percentage of developers seem to be against the idea of using generative AI in their games, with some smaller studios going so far as to use "AI free" as a marketing label, it's also true that many of those in decision-making positions at larger developers don't feel the same way. That's why we have the likes of Howard and Takeuchi espousing this technology while at the same time showing a demo that people can't stop making fun of. Grace's face rendered through DLSS 5 is an early vision of what things could look like if the adoption becomes more widespread. And if that happens, being a good friend might mean turning it off when you visit, just like motion smoothing.

[6]

Nvidia CEO says he's 'empathic' to DLSS 5 concerns -- Jensen Huang doubles down on defense while decrying 'AI slop'

More of the same talking points, but it seems Nvidia has seen the backlash. Nvidia CEO Jensen Huang is softening his tone in response to the backlash that followed comments he made at GTC 2026, where he said gamers were "completely wrong" in their criticisms of DLSS 5, as first reported by Tom's Hardware. During a recent appearance on the Lex Fridman podcast, Huang seemed more sympathetic to the vocal crowd that has framed DLSS 5 as "AI slop." "I think their perspective makes sense," Huang said. "And I could see where they're coming from because I don't love AI slop myself. You know, all of the AI-generated content increasingly looks similar, and they're all beautiful... so I'm empathic toward what they're thinking. That's just not what DLSS 5 is trying to do." Although Huang is striking a more conciliatory tone, much of his response is similar to what we heard at GTC. "The artist determines the geometry, we are completely truthful to the geometry... so every single frame, it enhances, but it doesn't change anything." There was some confusion about how DLSS 5 worked when it was first announced, and although its inner workings still aren't clear on a technical level, Huang has said that it isn't a general-purpose generative AI model. He describes it as "content-controlled generative AI." On the other end of the spectrum, Huang also said that it isn't a post-processing filter. The technical details of DLSS 5 live somewhere between those two, and we likely won't know them until later this year when the feature is set to release. "The question about enhancing, DLSS 5... in the future, you could even prompt it. You know, I want it to be a toon shader. I want it to look like this, kind of. You could even give it an example and it would generate in the style of that, all consistent with the artistry, the style, the intent of the artist," Huang continued. "All of that is done for the artist so they can create something that is more beautiful but still in the style that they want." 
Although the talking points about DLSS 5 remain unchanged, it seems that Huang has at least heard the criticism. "I think that they got the impression that the games are going to come out the way the games are... and then we're going to post-process it. That's not what DLSS is intended to do." Huang also asserted that DLSS is "integrated" with the artist, and suggested that it would put the power of generative AI in the hands of artists working in game development, although we've already seen generative AI show up in shipping releases, from Clair Obscur: Expedition 33 to the recently launched Crimson Desert. Up to this point, DLSS hasn't been a tool developers have much interaction with. It's not post-processing, either, but it comes late in the rendering chain and is largely governed by Nvidia's models and various DLSS presets. Although DLSS 5 looks like it's doing a lot, Huang said that it's just another tool, not an essential feature. "The gamers might also appreciate that, in the last couple of years, we introduced skin shaders to game developers, and many of those games have skin shaders that include sub-surface scattering that makes skin look more skin-like... [DLSS 5] is just one more tool. They can decide what to use," Huang said, ending the conversation about DLSS 5. Immediately after, without missing a beat, he said 1993's Doom was the most influential video game ever made.

[7]

Nvidia's CEO On DLSS 5 Backlash: The Haters Are Wrong

Some gamers may hate Nvidia's DLSS 5 and how it uses AI to add ultrarealistic effects to PC games. But the company's CEO, Jensen Huang, says the critics are "wrong." "We created the technology, we didn't create the art," Huang said, describing DLSS 5 as merely a tool game developers can choose to use. In a Q&A with journalists at the company's GTC event, Huang was asked about the initial harsh reception to DLSS 5, which uses a new AI "neural rendering" model to add photorealism to game characters, objects and environments. The company has described it as the biggest leap in gaming graphics in years. However, some consumers have mockingly compared DLSS 5 to the AI-powered face filters in social media apps. Others, including IGN, have argued the technology represents a "slap in the face" to the art of video game design. In the Q&A, Huang was specifically asked about how DLSS 5 allegedly creates "worse, homogenous" imagery or imposes Nvidia's view on how games should look. In response, Nvidia's CEO said: "First of all, they're completely wrong." Huang then alluded to how DLSS 5 was designed to understand 3D characters, environments and their motion to add a new level of realism, as opposed to ham-fisted AI face filters. "As I explain very carefully, DLSS 5 infuses controllability of geometry and textures and everything about the game with generative AI. Now, you can still fine-tune the generative AI so that you could make it your artistic style. And so all of that is up to you. We created the technology, we didn't create the art," he said. "DLSS 5, because it's geometry-controlled, it's conditioned by the ground truth of the game, it enhances and adds generative capability to it, but it doesn't change artistic control," he also said. "It's not post-processing at the frame level, it's generative control at the geometry level." 
"This is very different from generative AI," he later added. "It's content controlled generative AI. That's why we call it neural rendering." Nvidia also emphasized similar points during our hands-on with DLSS 5. Although the technology functions as a proprietary model, the company says game developers will be able to fine-tune the neural rendering effect to their liking. Still, some gamers, including PCMag staff members, say that DLSS 5 seems to go overboard with the AI-added effects, creating hyper-real faces that give uncanny valley vibes. For now, Nvidia noted in an FAQ: "DLSS 5 at GTC is an early preview and the model is still being optimized. We will share these details closer to release in fall 2026." During his Q&A, Huang also mentioned ray tracing, which Nvidia introduced with its RTX 20-series GPUs back in 2018. He noted that its enhanced lighting and shadow effects initially faced criticism as well, but the technology improved and became mainstream in gaming over time. "Everybody poo-pooed it. Everybody said ray-tracing fubar. If we didn't have RTX today, doing full scene path-tracing, computer graphics wouldn't be what it is today," he added.

[8]

Bethesda says it's "further adjusting" the look of Starfield's DLSS 5 visuals following backlash

* Bethesda says it will tweak DLSS 5 lighting and effects in Starfield after fan backlash.
* DLSS 5 alters visuals beyond just upscaling, adding an AI sheen that many feel looks like AI slop.
* Nvidia says that developers will have full control over DLSS 5 and how it changes their games' visuals.

Bethesda says it will work on "further adjusting the lighting and final effect" of Nvidia's controversial DLSS 5 AI-enhanced visuals in Starfield, following backlash from fans who have heavily criticized the AI-slop-like look of characters' faces. Instead of just upscaling a title to a higher resolution like previous versions of the AI tech, DLSS 5 fundamentally changes a title's visuals, adding additional character detail and changing the environment's lighting and color. This shifts the game's visuals well beyond what its original developer envisioned, and in most cases, adds an unnecessary AI sheen to titles that, at least so far, makes them look worse. Nvidia's CEO Jensen Huang has already responded to the backlash, saying that gamers are "wrong" about DLSS 5 and that game developers will have the final say on how their games look with the feature enabled. Whether that statement proves entirely accurate remains to be seen.

The studio says the look of DLSS 5 in Starfield is under its "artistic control." Hopefully, the final aesthetic is significantly less intense.

Bethesda head Todd Howard initially called DLSS 5's impact "amazing" in an Nvidia marketing video focused on the AI feature. Now, in a recent post on X, the studio has pulled back on that initial enthusiasm, saying that, "This is a very early look, and our art teams will be further adjusting the lighting and final effect to look the way we think works best for each game." This means that at least in Starfield, DLSS 5's AI sheen will likely be dialed down to some extent. 
If Huang's statements about affording developers ample control over DLSS 5 are accurate, hopefully, the feature doesn't look as extreme as it currently does in every title we've seen so far. Nvidia recently confirmed that DLSS 4.5 with 6x Multi Frame Generation launches on March 31st for RTX 50-series GPUs. Starfield's upcoming Free Lanes Update adds new weapons, quests, points of interest, and a new vehicle to the space exploration title. It also allows players to travel through space rather than just fast-travel, a feature fans have requested since Starfield's release. A new paid expansion called Terran Armada is also coming to the game.

[9]

Jensen Huang says gamers are "completely wrong" about DLSS 5 -- Nvidia CEO responds to DLSS 5 backlash

At a press Q&A with Tom's Hardware at GTC 2026, Nvidia CEO Jensen Huang downplayed criticism of DLSS 5, the company's new use of AI and neural rendering to infer how certain features of games would look if they were more photorealistic. Since the debut of the feature, some critics have vocally complained on social media that the technology makes games look worse or homogeneous, or that it shows only Nvidia's view of the world. Much of the criticism has focused on the updated appearances of Resident Evil Requiem's Grace Ashcroft and Leon Kennedy. "Well, first of all, they're completely wrong," Huang said in a Q&A session in response to a question from Tom's Hardware editor-in-chief Paul Alcorn about the criticism. "The reason for that is because, as I have explained very carefully, DLSS 5 fuses controllability of the geometry and textures and everything about the game with generative AI," Huang continued. He added that developers can still "fine-tune the generative AI" to make it match their style, noting that DLSS 5 adds generative capability to the existing geometry of the game but that it "doesn't change the artistic control." "It's not post-processing, it's not post-processing at the frame level, it's generative control at the geometry level," he said. Huang also said that developers can try the tool and decide how they want to use it, suggesting that it's up to a developer whether to make a "toon shader" or see if the game should be "made of glass." "All of that is in the control -- direct control -- of the game developer," he said. "This is very different than generative AI; it's content-control generative AI. That's why we call it neural rendering." We'll see if the vocal gamers who say they dislike what they see change their minds as we see more. DLSS 5 is set to launch in the fall, and there will likely be far more demos of this technology that are more fully baked before then.

[10]

We Tried Nvidia's DLSS 5: Is It Just an AI Image Filter, or the Future of PC Gaming?

DLSS 5 on and off for the game The Elder Scrolls IV: Oblivion Remastered. (Credit: PCMag)

Remember the huge graphical leaps we'd get moving from one console generation to the next? Those days are over...or so we thought. Nvidia's newly announced -- and already controversial -- DLSS 5 may be the graphics jump that gamers have been waiting for. At Nvidia's GTC event, I was able to try a demo of DLSS 5, and the technology wowed me several times, making me wonder: Am I staring at the future of gaming graphics?

DLSS 5: Promise and Controversy

DLSS is best known for using AI models to increase a PC game's frame rate for smoother gameplay. But on Monday, Nvidia introduced DLSS 5, which uses a "neural rendering" model to add photorealistic effects. The company has been quietly developing the technology for over three years, and the result can make game characters feel startlingly alive by injecting even more shadows, textures, and definition over faces, clothes, and environments, creating a new sense of depth. But despite the improvements, Nvidia's DLSS 5 announcement has already received some backlash over concerns that the GPU maker is merely adding an Instagram-like image filter over game-character faces. Another criticism is that DLSS 5 is acting like an AI slop generator and allegedly forcing AI imagery on top of carefully crafted characters created by game developers. Those worries were on my mind as Nvidia gave me a closer look at DLSS 5 on Monday. But as I saw the technology in action, it also became clear to me that DLSS 5 could take computer graphics to a whole new level.

Eyes On With the Next DLSS

One thing is clear: DLSS 5 is no simple face filter. Video-game rocks and stones suddenly looked like rocks from real life. The same was true of trees, water, a medieval castle, the interior of Hogwarts School, and even an espresso machine: DLSS 5 added a new level of photorealism that traditional game rendering had struggled to achieve.
Another "wow" moment came when DLSS 5 was activated during a demo of The Elder Scrolls IV: Oblivion Remastered. Characters originally modeled two decades ago instantly began to look more like real people; their odd "potato" faces had vanished, replaced by fully fleshed-out faces with photorealistic hair, skin, eyes, and clothes. Sure, I was no longer seeing the ugly-but-charming facial models from before. But in return, DLSS 5 unlocked a new level of immersion. In Assassin's Creed Shadows, DLSS 5 turned the game's lush forests into something that seemed organic. The effects added even more variety to the light and texture of the dense foliage and rocks, making the depicted landscape indistinguishable from real-world photography. Crucially, the experience didn't feel fake or forced. Nor did it act like a conventional AI image filter, which can alter a person's face, but in a clumsy, heavy-handed way that masks over the original. Nvidia points to how DLSS 5's neural rendering is designed to understand 3D characters and objects, including colors, hair, fabric, skin, and movement, in addition to the surrounding environment. In other words, the technology is supposed to preserve game models before enhancing them.

The Power Question: What Will It Take to Run DLSS 5?

That all said, DLSS 5 remains a work-in-progress. In fact, Nvidia demoed the technology using not one but two GeForce RTX 5090 graphics cards -- each starting at $1,999, though actual pricing has since risen far higher due to the ongoing memory shortage. The goal is to optimize DLSS 5 so it can run on a single GPU. But in the demo I saw at GTC, one RTX 5090 card was used to render the game, while the other added neural rendering effects. That suggests DLSS 5 will need major tweaks to make it practical for the company's graphics cards. Nvidia will need to move quickly on its optimizations, since it plans to launch the technology this fall. We wouldn't be surprised if the initial launch is limited in scope.
Other big unknowns include whether DLSS 5 will introduce a major performance hit -- a potential irony, considering that DLSS was developed to boost frame rates on sometimes underpowered hardware. How DLSS 5 performs over an entire game is another major question. I was only able to briefly try out the feature in both Hogwarts Legacy and Oblivion, and my hands-on session was limited to walking around a single scene, rather than battling enemies or throwing magic spells. Understandably, some gamers may be skeptical or even alarmed, given the ethical issues and legal battles surrounding generative AI. At the same time, the PC market is reeling from an AI-driven memory shortage that risks undercutting DLSS 5 by inflating the cost of admission to buy Nvidia GPUs. But putting all that aside, I have to say DLSS 5 displayed the most realistic gaming graphics I've ever seen -- and I'm looking forward to experiencing more. Once you see DLSS 5 in action, it's hard to deny the potential it holds. In the meantime, Nvidia is already responding to some of the backlash, explaining that game developers will have full artistic control over DLSS 5 and can fine-tune the model to their liking. Some major developers, including Bethesda, Capcom, and Ubisoft, are already on board and preparing to support the technology in their own games.

[11]

Nvidia's DLSS 5 is the (glossy) subject of memes and backlash from gamers

Upgraded graphics in video games sound like they would be popular amongst players and enthusiasts, but Nvidia is finding that the opposite appears to be true with its latest tech. The company announced at a conference Monday that the new version of its artificial intelligence technology designed to boost performance will enable game developers to deliver "photoreal computer graphics previously only achieved in Hollywood visual effects." DLSS (Deep Learning Super Sampling) is Nvidia's image enhancement technology. First released in 2018, the technology was initially used to upscale resolution, but now it can generate entirely new frames. It has been integrated into over 750 games, according to the company. DLSS 5 will arrive this fall, but Nvidia presented a sample of what games will look like with the new technology earlier this week. So why and how are gamers mad about what the company calls its "most significant breakthrough in computer graphics" in recent years? Because the examples it used in a presentation look -- as those on the internet would say -- "yassified," or heavily edited in an attempt to look more attractive, often to the point of comedy. A brief clip of the character Grace Ashcroft from Resident Evil Requiem shows a before and after shot of her with DLSS 5 both off and on, and the difference is significant. While some aspects of the image vastly improve its appearance, like enhanced details and texture in the background, the character's face looks significantly different. With DLSS 5, she has plumper lips, the bags under her eyes don't appear as dark and it looks like she's wearing makeup. As the sample clips continue, more characters from Hogwarts Legacy, Starfield and EA Sports FC get similar treatment.
"DLSS 5 is the GPT moment for graphics -- blending handcrafted rendering with generative AI to deliver a dramatic leap in visual realism while preserving the control artists need for creative expression," Nvidia founder and CEO Jensen Huang said in a release Monday. Many gamers responded with criticism, with one commenter on YouTube writing, "The obsession with fidelity over art direction is reaching terminal levels." Some felt the technology undermines artistic intent from the game designers, changing lighting choices and facial features instead of simply enhancing the image. Some also said the clips with DLSS 5 on had a general uncanny feeling, featuring hallmarks of AI-generated imagery. Others responded with memes. One person posted on X the famous Great Depression-era photograph "Migrant Mother" side by side with a heavily edited version in which the once despondent woman is flashing a bright smile and sporting heavy makeup. The text reads "Nvidia presents DLSS 5." DLSS 5 has prompted a new meme template, and many other posts follow a similar format: two similar images appear side by side, one clearly edited, often with accompanying text like "DLSS 5 off vs. DLSS 5 on." One post uses the popular and often-memed image of actor Kevin James and juxtaposes it next to a version of the photo in which his face looks entirely different. Others take images that were clearly designed to be in an animated, cartoon-like style and put them next to an unsettlingly realistic version of that image. In a comment pinned to its YouTube video showcasing what the technology can do, Nvidia said "game developers have full, detailed artistic control over DLSS 5's effects to ensure they maintain their game's unique aesthetic." Huang also responded to the criticism during a press Q&A on Tuesday, saying that critics are "completely wrong."
"The reason for that is because, as I have explained very carefully, DLSS 5 fuses controllability of the geometry and textures and everything about the game with generative AI," he said in response to a question about the criticism from Tom's Hardware. Developers can still "fine-tune the generative AI" to make it match their style, he said, adding that DLSS 5 "doesn't change the artistic control." Nvidia said DLSS 5 will come to games including Assassin's Creed Shadows, Delta Force, Justice, Phantom Blade Zero, Sea of Remnants and several other titles when it arrives in the fall.

[12]

Gamers are right to be disgusted by NVIDIA's DLSS 5

You can sum up the gamer response to NVIDIA's DLSS 5 announcement with the ever-relevant Fallout 4 meme: "Everyone disliked that." Across social media and Reddit last night, I couldn't find anyone who's genuinely positive about the potential for DLSS 5, which uses AI to add "photorealistic" lighting and materials to in-game models and environments. Instead, it's mostly complaints about the feature being another avenue for AI slop. And you know what? I agree. It's not unusual to see gamers being reflexively angry about new technology on the internet, especially when it's being pitched by NVIDIA as the "biggest breakthrough in computer graphics" since its RTX 20-series GPUs arrived in 2018 with real-time ray tracing. There was plenty of suspicion around DLSS's original AI upscaling model, as well as the "fake" frames generated by later iterations. But the few demos we've seen of DLSS 5 basically look like "yassified" AI filters for popular games. Leon and Grace from Resident Evil: Requiem have more distinct facial and hair detail, but they look a bit too slick. There are more wrinkles on an old woman in Hogwarts Legacy. And the face, hair and clothing from a Starfield character gain an uncanny sheen. None of the demos have the immediate impact of the Star Wars real-time ray tracing short ILMxLab produced with NVIDIA seven years ago. That demonstration showed us glorious reflections and lighting effects we'd never seen before in real-time. The DLSS 5 demos, on the other hand, don't look much different from the AI filters that make you look more presentable for Zoom calls. There's no genuine excitement for DLSS 5, just NVIDIA telling us that it's groundbreaking. There's also plenty of concern about DLSS 5 straying from an artist's original intent, as well as a potential homogenization of game visuals if every developer starts using the feature. 
NVIDIA claims developers will have "detailed controls for intensity, color grading and masking," which will help DLSS 5 stay in line with a game's aesthetic. But we don't have any direct developer experience with the feature yet -- some artists may want far more control than NVIDIA wants to give. The difference between DLSS 5 and earlier versions of NVIDIA's upscaling is like the difference between generative AI and more traditional machine learning models. NVIDIA relied on the latter to make low-resolution textures and models appear sharper, and later to insert generated frames to smooth out gameplay and raise your fps count. As Wirecutter and former Polygon editor Arthur Gies points out, you could argue those features were in service of delivering what developers originally intended. But DLSS 5's neural model applies its concept of "photorealism" on top of what games are rendering -- it's like watching a Pixar movie that let OpenAI's Sora do a final visual pass. Part of the negative response towards DLSS 5 may stem from a widespread anti-gen AI sentiment, but that doesn't devalue the criticisms either. Similar to AI generated text, images and video, there's a dehumanizing aspect about DLSS 5. It can erase the work of human artists (despite how much control NVIDIA claims they have), and it also feels like a calculated attempt to appeal to gamers who just want shinier graphics. NVIDIA showed off how generative AI could be used to create dialog and voices for NPCs last year at CES, but that was also widely disliked (and I called it a genuine nightmare). Of course, I can't fully judge DLSS 5 until I see it in action beyond a short demo. But I think the visceral disgust is an important indicator that many gamers aren't onboard with the AI-powered future NVIDIA is trying to sell us. And perhaps the idea of chasing "photorealism" may be a bit of a fool's errand.
It may be appropriate for some games, but as Nintendo and indie PC devs have shown, you can also make some of the best games of all time without striving for realism. Tears of the Kingdom could use a better framerate and higher resolution textures, but it certainly doesn't need DLSS 5.

[13]

Nvidia CEO Jensen Huang says gamers calling DLSS 5 AI slop are "completely wrong"

A hot potato: Do you think DLSS 5 makes games resemble AI slop? That it's little more than an AI filter inserted into titles that neither wanted nor needed it? If you are one of these many people, Nvidia boss Jensen Huang wants you to know that you're "completely wrong." Few technologies have faced as much criticism upon their reveals as DLSS 5. The vast majority of gamers are aghast at the way it gives characters a typical AI-generated uncanny valley appearance - especially Resident Evil Requiem's Grace Ashcroft and Leon Kennedy - as opposed to the "photorealistic" look Nvidia claims. Tom's Hardware editor-in-chief Paul Alcorn asked Huang about the criticism in a recent Q&A session. "Well, first of all, they're completely wrong," Huang said. "The reason for that is because, as I have explained very carefully, DLSS 5 fuses controllability of the geometry and textures and everything about the game with generative AI." Huang then repeated Nvidia's previous disclaimer: that developers have full, detailed artistic control over DLSS 5's effects to ensure they maintain their game's unique aesthetic, and that they can "fine tune the generative AI" to match the intended style. "It's not post-processing, it's not post-processing at the frame level, it's generative control at the geometry level," he said. Huang added that it's up to developers to use DLSS 5 however they like. "This is very different than generative AI; it's content-control generative AI. That's why we call it neural rendering," he concluded. We recently published Ryan Shrout's post on DLSS 5. The analyst saw the technology running and was very impressed, emphasizing that it's not just a face filter but a tool that amplifies good rendering. Despite Huang's explanations, everyone has their own opinion on DLSS 5.
An interesting Reddit post argues its biggest problem is not that it looks "too AI" or betrays artistic intent, but that its tone mapping is overly aggressive, creating an ugly, overcooked HDR effect that distorts lighting, color, and mood. User Veedrac argues that the relighting underneath is often genuinely strong, but that DLSS 5's heavy-handed HDR-style processing muddies the result. By blending DLSS 5 with elements of the original image - such as restoring some of the original saturation, lightness, and darker tones - the post says it is possible to keep the improved lighting while making scenes look more natural and faithful. After comparing several images, Veedrac concludes that many of DLSS 5's gains are real, but are being overshadowed by bad tone mapping that Nvidia should dial back. This isn't the first time Huang has clapped back at critics of something his company is heavily invested in. In January, the CEO said the relentless negativity around AI is hurting society and has "done a lot of damage."
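The blending idea in that Reddit post can be illustrated with a minimal sketch. This is not Veedrac's actual workflow, which is only described in outline here; it assumes frames are flat lists of RGB pixels with channels in the 0 to 1 range, uses Python's standard `colorsys` module, and the function name `blend_toward_original` and its weight parameters are hypothetical. It keeps the hue of the DLSS 5 frame while pulling lightness and saturation back toward the original render, which is one simple way to restore some of the original saturation, lightness, and darker tones.

```python
import colorsys
from typing import List, Tuple

RGB = Tuple[float, float, float]  # assumption: channels are floats in [0, 1]


def lerp(a: float, b: float, t: float) -> float:
    """Linear interpolation: t=0 returns a, t=1 returns b."""
    return a + (b - a) * t


def blend_toward_original(
    enhanced: List[RGB],
    original: List[RGB],
    light_weight: float = 0.5,
    sat_weight: float = 0.5,
) -> List[RGB]:
    """Hypothetical helper: keep the enhanced frame's hue, but pull its
    lightness and saturation back toward the original render.

    A weight of 0 keeps the enhanced value; a weight of 1 uses the
    original value. Pulling lightness back is what restores darker tones
    that an aggressive tone map may have lifted.
    """
    if len(enhanced) != len(original):
        raise ValueError("frames must have the same number of pixels")
    out: List[RGB] = []
    for (er, eg, eb), (o_r, o_g, o_b) in zip(enhanced, original):
        hue, e_light, e_sat = colorsys.rgb_to_hls(er, eg, eb)
        _, o_light, o_sat = colorsys.rgb_to_hls(o_r, o_g, o_b)
        light = lerp(e_light, o_light, light_weight)
        sat = lerp(e_sat, o_sat, sat_weight)
        out.append(colorsys.hls_to_rgb(hue, light, sat))
    return out


if __name__ == "__main__":
    # A lifted gray from the "enhanced" frame vs. a darker original gray:
    # with the default weights the result is a gray roughly halfway between.
    print(blend_toward_original([(0.8, 0.8, 0.8)], [(0.2, 0.2, 0.2)]))
```

Working in a hue/lightness/saturation space rather than blending raw RGB values is a design choice for the sketch: it lets the enhanced frame's color shifts survive while only the overcooked brightness and saturation are dialed back.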

[14]

Nvidia faces backlash over 'breakthrough' AI graphics feature

A new feature from chip-maker Nvidia that promises cinematic-quality graphics using AI has prompted a backlash online, despite the company claiming it would "reinvent" what is possible in video games. Nvidia said the DLSS 5 tool, which will be rolled out this autumn, would allow games to have "photoreal computer graphics previously only achieved in Hollywood visual effects". In images shared with the media, the tech was shown radically changing the appearance of characters and environments in games such as Resident Evil Requiem and Hogwarts Legacy. But some industry professionals said its use of AI went too far, making graphics feel airbrushed and hollow. "Clearly this is a massive glow-up for environments," said video game critic Alex Donaldson on Bluesky. "The character stuff is uncanny & weird tho, & it feels like artistic expression risks being squeezed out." Jeff Talbot, a concept artist at Gunfire Games, posted: "This is NOT the direction games should be going in. Each DLSS 5 shot looked worse and had less character than the original." Nvidia has become a household name because of the advanced microchips it makes for AI data centres. But the firm was originally focused on gaming and is still a driving force of innovation in the industry. Unveiling DLSS 5 at its main annual conference in Silicon Valley, Nvidia said the tech was the company's most "significant breakthrough" in computer graphics since it introduced real-time ray tracing in 2018. Ray tracing is a rendering technique that transforms the look of light, shadows and reflections in games. The tech giant said DLSS 5 would use AI to generate "photoreal" graphics of things like hair, fabric and skin, along with more realistic environmental lighting conditions. "We are reinventing computer graphics once again," said Nvidia boss Jensen Huang. 
"DLSS 5 is the [Chat]GPT moment for graphics - blending hand-crafted rendering with generative AI to deliver a dramatic leap in visual realism while preserving the control artists need for creative expression." Nvidia said the tech was supported by major publishers and game developers including Bethesda, CAPCOM and Warner Bros. Games. It comes amid growing anger among pockets of the gaming community about the increased use of AI-generated content in titles, which has resulted in some studios scrapping games or promising to limit their use of the technology. Running With Scissors, the publisher behind the Postal shooter franchise, pulled a forthcoming game after critics said it had used AI-generated graphics. The role-playing game Clair Obscur: Expedition 33 won Game of the Year at the Indie Game Awards, but was then disqualified after it emerged its developer had experimented with AI-generated images but ultimately not used them. Some, however, have defended AI content, arguing it is pushing the industry forwards. Charlie Guillemot, joint chief executive of Vantage Studios, which makes Assassin's Creed Shadows, said DLSS 5 would make the game feel more immersive. "The way it renders lighting, materials and characters changes what we can promise to players. On Assassin's Creed Shadows, it's letting us build the kind of worlds we've always wanted to."

[15]

Nvidia's AI tech for improving game graphics still has some growing up to do

DLSS 5 doesn't always look bad, and developers can control intensity and color grading to ensure the results match their vision for their games.

Nvidia's DLSS is a clutch of machine learning-powered image rendering technologies that come in handy for boosting the frame rate in your games and improving lighting and image quality. They use the processing power of graphics cards to make this happen on your computer. The latest version, DLSS 5, is described as a neural rendering model that "infuses pixels with photoreal lighting and materials," and Nvidia says it's the company's biggest advancement in computer graphics since it first cracked ray tracing (a technique for realistically simulating light in 3D scenes) back in 2018. "DLSS 5 is the GPT moment for graphics - blending handcrafted rendering with generative AI to deliver a dramatic leap in visual realism while preserving the control artists need for creative expression," said Jensen Huang, Nvidia's founder and CEO. Previous versions of DLSS made great advances in improving gaming experiences for players by leveraging machine learning to eke out more performance from the hardware you already have. Most recently, we got multi frame generation in DLSS 4.5, which essentially generates up to five additional frames for every frame your GPU renders, making for massive gains in smoothness and responsiveness. This is the sort of breakthrough that people would typically embrace with open arms, particularly when RAM and GPU prices are through the roof to the point of becoming inaccessible for most gamers looking to upgrade their rigs. With DLSS 5, Nvidia's doing something different. It uses an AI model to tack on lighting and materials to enhance frames, while maintaining consistency even at 4K resolution so you don't have weird artifacts hovering around on screen.
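The frame-generation arithmetic behind that claim is simple: each rendered frame is followed by up to five generated ones, six displayed frames in total, which matches the "6x Multi Frame Generation" label Nvidia uses for DLSS 4.5. A minimal sketch, assuming an idealized case with no generation overhead (real-world gains will be lower); the function name `displayed_fps` is hypothetical.

```python
def displayed_fps(rendered_fps: float, generated_per_rendered: int) -> float:
    """Idealized output frame rate: every rendered frame plus its generated
    frames reaches the screen. Overhead and added latency are ignored."""
    if generated_per_rendered < 0:
        raise ValueError("generated_per_rendered must be non-negative")
    return rendered_fps * (1 + generated_per_rendered)


if __name__ == "__main__":
    # 30 rendered fps with 5 generated frames per rendered frame.
    print(displayed_fps(30, 5))  # 180
```

Note that generated frames raise the displayed frame rate, not the rate at which the game itself simulates and responds to input.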
Nvidia says it understands that game tech should help precisely represent the developer's artistic intent - but the implementation seems to be veering away from that drastically enough to draw plenty of negative reactions (check out Kotaku's coverage, and head to the comments in Digital Foundry's analysis). You can see the AI slop effect in the overly sharpened facial features in Nvidia's clips of Starfield and Resident Evil Requiem, and even in Hogwarts Legacy. There are some bits that don't look all that awful, like lamp posts and cauldrons close to the foreground - but what you see are imagined details from a bunch of algorithms superimposed onto painstakingly created elements to look 'better.' What seems to be happening is that we're seeing a lot of unnatural details that are detracting from the experience. It also appears to add wholly different treatments to certain scenes: grim environments can end up seeming a lot brighter and less foreboding to walk through. All that said, it's worth noting that developers will have control over intensity, color grading and masking applied in their titles with DLSS 5 switched on, which means they'll have a say in how their games end up looking with AI-generated enhancements. The company also notes it'll only arrive this (Northern Hemisphere) fall, which gives it - and the studios behind DLSS 5-supporting titles like Starfield, Assassin's Creed Shadows, and Where Winds Meet - time to gather and respond to players' feedback before rolling the tech out widely. Hopefully they're all listening.

[16]

Does Nvidia's Slop-ified DLSS 5 Game Lighting Make Any Sense?

Nvidia finally reveals what's actually going on with its upscaler, and it's much less impressive than it first seemed. Nvidia’s newfangled AI-based “neural rendering” technology, DLSS 5, dramatically modifies (aka “slop-ifies”) game visuals with the help of AI. Nvidia promised this technology would revolutionize in-game lighting at its most fundamental level. After hearing from lighting experts, developers, and Nvidia itself, it turns out that DLSS 5 isn’t nearly as impressive as all that. Both gamers and game developers were none too happy about DLSS 5 “slop-ifying” faces in games like Resident Evil Requiem, Starfield, and Hogwarts Legacy. For its part, Nvidia was adamant this technology was not modifying characters at the “geometry level.” On Thursday, YouTuber Daniel Owen went back and forth with Nvidia’s own “GeForce Evangelist” Jacob Freeman about what’s going on inside DLSS 5. To summarize, it’s a sophisticated, ultra-fast AI image generator. Freeman confirmed DLSS 5 is only analyzing a “2D frame plus motion vectors as input” and feeding that information into a generative AI model. Essentially, the AI is only analyzing the surface information of each rendered frame and using motion vectors to determine what the next frame will look like. These motion vectors are data points that capture how far in-game objects have moved from one frame to the next. Nvidia’s AI uses these vectors to estimate the positions of those objects and make motion look smoother. The AI doesn’t have access to intrinsic scene information, such as PBR (physically based rendering) data. PBR helps inform a game engine of what materials are in a scene, such as wood, metal, etc. Instead, with DLSS 5, “materials are inferred from the rendered frame.” The AI merely guesses what each object should look like, then papers over the frame with its own interpretation. Changing a scene's textures and lighting may also disrupt how players perceive the environment.
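To make the motion-vector idea concrete, here is a toy sketch in Python. It is a deliberate simplification and not a description of how DLSS 5 works internally: game engines typically supply a per-pixel motion-vector buffer, whereas this example tracks a single object's screen position, and the names `Vec2`, `motion_vector`, and `extrapolate` are hypothetical. It shows a motion vector as the difference between an object's positions in two consecutive frames, plus a naive constant-velocity guess at where the object will be next.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Vec2:
    """A 2D screen-space position or displacement, in pixels."""
    x: float
    y: float

    def __add__(self, other: "Vec2") -> "Vec2":
        return Vec2(self.x + other.x, self.y + other.y)

    def __sub__(self, other: "Vec2") -> "Vec2":
        return Vec2(self.x - other.x, self.y - other.y)


def motion_vector(prev_pos: Vec2, curr_pos: Vec2) -> Vec2:
    """How far the object moved between the previous and current frame."""
    return curr_pos - prev_pos


def extrapolate(curr_pos: Vec2, mv: Vec2) -> Vec2:
    """Naive guess at the next position, assuming constant on-screen velocity."""
    return curr_pos + mv


if __name__ == "__main__":
    prev, curr = Vec2(100.0, 50.0), Vec2(104.0, 47.0)
    mv = motion_vector(prev, curr)
    print(mv)                     # Vec2(x=4.0, y=-3.0)
    print(extrapolate(curr, mv))  # Vec2(x=108.0, y=44.0)
```

The constant-velocity assumption is exactly where such guesses break down: when objects or the camera change direction, the predicted position is wrong, which is one reason motion-heavy scenes are a harder test than the mostly static demos described here.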
In a follow-up video posted by Digital Foundry, the AI reinterprets two ceramic-seeming cups on a table and decides they are completely made of metal. Gizmodo reached out to Nvidia to confirm our interpretations of Freeman’s comments. We’ll update this story if we hear back. It's likely inaccurate to say the AI is interpreting a “screenshot” of each scene, as Owen put it in his video. However, it remains unclear at which stage of the rendering process the AI takes its information. “This is a very early preview of the tech,” Freeman responded when asked whether the AI was capable of reinterpreting a scene. In a Q&A hosted at GTC 2026, Nvidia CEO Jensen Huang was adamant that the technology doesn’t result in homogenized graphics in games. He then went on to describe the technology in vague terms that don’t mean much or anything to the wider gaming public. “DLSS 5 fuses the controllability of the geometry and textures and everything about the game with generative AI,” Huang is recorded saying at the Q&A. “It’s not post-processing; it’s not post-processing at the frame level; it’s generative control at the geometry level.” The Nvidia CEO has a tendency to fall into technobabble when detailing his company’s products. He initially described DLSS 5 and its “neural rendering” technology as “combined 3D graphics - structured data - with generative AI - probabilistic computing.” Huang’s comments seem to fly directly in the face of Freeman’s description. DLSS 5 is not remapping new textures and lighting onto 3D objects. It’s spitting out AI-generated 2D images at such a rapid rate that it can keep up with a game’s frame rate. As with all generative techniques in games such as multi-frame gen, this will likely increase latency and lead to odd artifacts within scenes, especially with objects in motion. DLSS 5 lighting may look more “realistic” in the sense that it reflects accurately off objects in a scene.
The issue is that the AI will not understand the artistic direction behind each moment in a game. The one thing missing from this debate is whether the lighting makes any sense outside of programming and gaming circles. Gizmodo reached out to Jonathan Harris, a local independent photographer and filmmaker based in New York, to get his take on DLSS 5's realism. “In some cases, the lighting does look better, but at the same time, you lose the creative edge,” Harris said. “It seems like it's optimizing light.” Gizmodo showed the indie filmmaker two screenshots of the environmental lighting in one of Nvidia’s demo scenes. He pointed out that the one with DLSS 5 enabled looked as if it were trying to resemble an overcast sky. The AI even added clouds that weren’t there in the original frame. Left, one of Nvidia's demo scenes with DLSS 5 on; right, the same scene with DLSS 5 off. © Nvidia “Things do seem to look sharper, which can be nice, but in a game like Resident Evil, which is supposed to have a little bit of fog and haze, the lighting overall is just a bit brighter... and that may work against the tone of the game,” Harris said. Nvidia said in its FAQ that game devs will have the ability to change color grading and mask off parts of scenes. This means creators could keep the AI from modifying the light and texture on specific characters or objects in a frame. One Redditor pointed out that the issue with Nvidia’s original DLSS 5 images may be more due to the AI’s awful tone mapping. By toning down the overbaked colors in the original screenshots, the characters seem to fit much better with the original games. Other than color grading, developers may not have control over whether the AI can add elements to a scene that weren’t there before.
In a thread on X, longtime game dev and ex-Ubisoft programmer Muhammad Moniem said “it is an AI trained post-processing filter.” While he stopped short of calling it “slop,” he also said, “This is not an Instagram filter by any mean [sic]! This is a TikTok filter.” Let’s get one thing straight. There’s a reason why many of these DLSS 5-modified characters look horrendous. They all bear too much resemblance to AI-generated Instagram and TikTok videos that have flooded users’ feeds. Whether you actually wanted a “photorealistic” game or not, these AI-infused frames miss the mark by miles. While we’ve seen hands-on coverage from some outlets like Digital Foundry and PC Guide, most of the footage was cut up and spliced. Digital Foundry’s original video of the tech showcased a few static environments and character models. The only extended video of DLSS 5 we’ve seen comes from the YouTube channel HotHardware. The problem with this footage is that Nvidia seems to prevent people from seeing the lighting effects when the characters are in motion. The Nvidia staff will turn off DLSS 5, move to another end of the room in games like Hogwarts Legacy and Starfield, then turn the upscaler back on. Nvidia's staff said that the model is getting information “from what’s on screen and what’s moving on screen.” What’s still unclear is how fast it will be able to do this when the player’s camera is moving as well. Nvidia showed DLSS 5 to an extremely limited press pool, and it's done a very poor job explaining how the technology even works. The game and DLSS 5 model were running on two Nvidia GeForce RTX 5090 GPUs. One graphics card was dedicated to running the AI model exclusively. Nvidia told multiple outlets it was already testing DLSS 5 running on a single GPU. Despite all these lingering questions we have about whether it will look good or even run on any but the highest-end GPUs, Nvidia still intends to launch DLSS 5 this fall. The wider developer community seems much less on board.
“A lot of these companies take the time to high-res 3D scan these people’s faces,” said Mike York, an animator who has worked on games like Red Dead Redemption 2 and GTA V, in a YouTube video. “They want to get those original textures. They want to get that little scar underneath the eye… [DLSS 5 is] squashing over somebody's hard work.” Our friends at Kotaku quoted a whole assortment of game developers who do not like DLSS 5 or what it represents for the industry. Nvidia’s CEO showed this technology off at the company's own AI-centric GTC conference, even though Nvidia had the opportunity to bring it to developers at GDC (Game Developers Conference) 2026, which took place just a few weeks prior. Nvidia was so focused on AI that it ignored everything the gaming community actually wants from today's games. It was never about achieving "photorealism." It was about creating an experience that matters, that impacts the players, and that adds something to the world. You know: art.

[17]

Nvidia's CEO says gamers are "wrong" about DLSS 5's AI slop backlash

Patrick O'Rourke is XDA's News Editor and Entertainment Segment Lead. Previously, he was Pocket-lint's Editor-in-Chief, the Editor-in-Chief of Canadian tech publication MobileSyrup, and earlier in his career, he worked as the technology editor at the Financial Post and Postmedia. He's based in Toronto. Over the past 15 years, he's written thousands of articles. Patrick has also interviewed dozens of tech industry executives and covered GDC, E3, Gamescom, WWDC, Apple keynotes, Samsung Unpacked events and more. Patrick has a BA in journalism from Toronto Metropolitan University.

Summary:
DLSS 5 uses generative AI to change geometry, textures, and lighting, not just to upscale visuals.
Nvidia insists developers keep artistic control and can fine-tune DLSS 5's generative effects.
Trailer comparisons show an otherworldly AI sheen; many fear DLSS 5 will bring "AI slop" to games.

At GTC 2026, Nvidia announced DLSS 5, a more advanced version of its AI upscaling technology, and the response has been resoundingly negative. Rather than just improving a game's resolution and frame rate with the help of AI, DLSS 5 actually changes a title's graphics, adding detail to characters' hair and faces, and even to a game's environment and lighting. The resulting effect... isn't great, to put it lightly: it adds an unnecessary AI sheen to visuals that many are calling AI slop. However, during a recent Q&A event at GTC, Nvidia CEO Jensen Huang argued that this impression is misguided. Taking a question from Tom's Hardware's Paul Alcorn, Huang said, "Well, first of all, they're completely wrong," and reiterated that game developers will have control over how DLSS 5 is implemented in their titles. "The reason for that is because, as I have explained very carefully, DLSS 5 fuses controllability of the geometry and textures and everything about the game with generative AI," said Huang.
He added that developers will be able to "fine-tune the generative AI" to match their style, and said that it "doesn't change the artistic control." "It's not post-processing, it's not post-processing at the frame level, it's generative control at the geometry level," said Huang. While this is likely accurate, the aesthetic changes shown in the DLSS 5 trailer Nvidia recently released don't really support that perspective or paint the upcoming AI upscaling technology in a positive light. For example, the Resident Evil Requiem transformation focused on Grace Ashcroft looks positively otherworldly with DLSS 5 on, and the same can be said for most of the comparisons, particularly the one featuring characters and environments in Starfield. If Huang's statements about the upcoming AI upscaling feature prove accurate, it will all come down to what the technology looks like when it launches, and the level of control developers will afford Nvidia over the look of their titles with DLSS 5 on. Either way, I can't help but feel like the wave of AI slop that has progressively infected the internet over the past few months is now coming for the gaming space. Nvidia recently confirmed that DLSS 4.5 with 6x Multi Frame Generation launches on March 31st for RTX 50-series GPUs.

[18]

Calling Nvidia's DLSS 5 "AI slop" completely misses the point of modern rendering

Another day, another DLSS version sows discord among the gaming masses. It takes me right back to the release of the first public version of DLSS, and all the negativity surrounding it. Yet today, only a vocal minority of gamers would deny that DLSS has been the killer feature of the generation. DLSS uses machine learning algorithms to intelligently upscale a rendered image so that it appears to be a higher resolution. This solved a major issue created by the shift from CRT monitors (which can display any arbitrary resolution without scaling artifacts) to flat-panel displays, which have a physical pixel grid. If you can't render your image at that "native" resolution, you need to apply a scaling solution, and before DLSS they all looked rather terrible. As DLSS matured, it reached the point of providing superior image quality to "native" rendering combined with the popular Temporal Antialiasing (TAA) technique. With DLSS 4.5, NVIDIA polished the final rough edges of the technology, all but fixing issues with image stability and disocclusion artifacts. It was such a big leap that people wondered why it was just a point-five release, but it turns out the answer is far more radical than we could have imagined.

What is DLSS 5? Imagining a better (game) world

DLSS 5 includes a new technology that uses AI to rework the lighting in a game so that it takes a major leap towards photorealism. It does not change the geometry or texture work, but "imagines" what the game would look like if rendered with extremely high-quality light. NVIDIA's official release video shows examples in action. The end result is simply beyond anything that current hardware can achieve using traditional rendering methods. It pushes these real-time games into the realm of pre-rendered CG. Digital Foundry's preliminary analysis video shows that this is a real implementation in a real game.
The results are consistent, and the game runs as normal; it just looks different. As with real-time ray tracing, this uses neural networks to make possible something that simply cannot be done by brute force at the moment. Now, it's worth noting that this demo uses not one but two RTX 5090 cards: one to run the game, and one to apply the DLSS pass to the graphics. Obviously, though, the intent is for this technology to run on a single card when it's released to the public.

Why are gamers complaining? New thing is scary

The reaction to DLSS 5 online has been interesting, to say the least. The most vocal reactions have been negative. The term "AI slop" was thrown around quite liberally, as you might expect. However, the key theme was "artistic intent": the idea that DLSS 5 changes the art of the game so that it no longer looks the way the game developers intended. I can't argue that it doesn't make the games look different, but the implication that NVIDIA just put out a bunch of demos using games by major studios without their consent or approval is laughable. Indeed, not only did studios like Bethesda and Capcom give their blessing, they worked on the DLSS 5 implementation in their games themselves! NVIDIA was also quick to point out in a pinned comment under the announcement video that DLSS 5 wasn't a "filter" or some magic on/off switch:

Important to note with this technology advance - game developers have full, detailed artistic control over DLSS 5's effects to ensure they maintain their game's unique aesthetic. The SDK includes things like intensity, color grading and masking off places where the effect shouldn't be applied. It's not a filter - DLSS 5 inputs the game's color and motion vectors for each frame into the model, anchoring the output in the source 3D content.
It essentially builds on what we've seen with ray reconstruction and the original denoising process for RTX. It provides another option for the lighting in the game. Another complaint I saw was the one leveled at generative AI products like Midjourney: that the training was based on stolen work. Except that NVIDIA trains DLSS on extremely high-quality real-time rendering of game footage it generates itself. It's NVIDIA's supercomputer resources that make DLSS what it is, not the GPUs we buy, which have simple tensor cores to run the resulting algorithms.

The results matter more than the methods. It's all fake, my dudes

Setting all of this rush to prejudgment aside, my biggest frustration is with the strange purism that some people in the gaming world express when it comes to how AI is being used. The "fake frames" and "fake pixels" camps railed against DLSS in its initial form. They only want raw, native pixels like nature intended, apparently. This entire framing misses the point that the "real" game rendering technologies they are defending consist of a mountain of kludges and shortcuts game developers have invented over the years to get as close to their intent as possible. What does it matter what sort of math, or what type of processor, is responsible for the image on screen? A computer geek like me might be interested in the nuts and bolts, sure.
But as a gamer, I care about the results above all. Does it look good? Does it play well? These are important questions. And I do care about whether I'm getting the intended experience. After all, I'm a guy who buys CRT monitors and TVs so that I can see how games made for those displays are supposed to look. I think using existing games to showcase DLSS 5 may have been a mistake on NVIDIA's part. It might have been better to show off a game designed from the ground up with DLSS 5 as part of its rendering solution. Either way, this is a smart way to achieve a multi-generational leap in visual fidelity. The only question is whether the AMD-powered consoles most people play on will once again feel outdated at launch, just as the PlayStation 5 and Xbox Series consoles did after NVIDIA blindsided AMD with RTX and DLSS.

[19]

We got a first look at Nvidia's DLSS 5 and the future of neural rendering at GTC -- the results can be impressive, but there's work to do