Pentagon Investigates AI Role in Missile Strike That Killed 175 at Iranian School

2 Sources

[1]

Outdated Targeting Data Blamed for Strike on Iranian School as Pentagon Fights for Autonomous Weapons

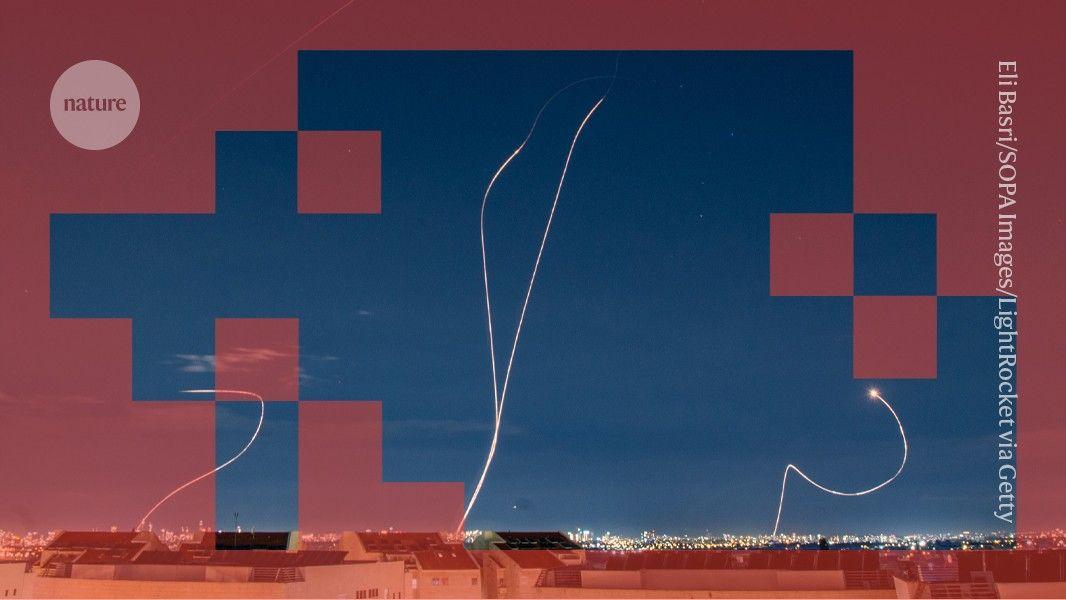

An investigation by the U.S. military into America's missile strike on an Iranian school will likely blame outdated targeting information, according to a new report from the New York Times. The attack killed at least 175 people, most of them children, and President Donald Trump has previously tried to blame Iran for hitting the school.

On Feb. 28, the first day of the war, the U.S. launched a Tomahawk missile at Shajarah Tayyebeh elementary school in Minab, Iran, while allegedly trying to hit targets at a nearby Iranian military base. The preliminary findings of the investigation by the U.S. have found that the school used to be part of the base, though reports differ about when the building was converted into a school. NBC News reports that the school building was segmented from the base, run by the Islamic Revolutionary Guards Navy, roughly 15 years ago. The New York Times notes that a fence was erected between the base and the school sometime between 2013 and 2016. Washington Post open source investigations reporter Evan Hill notes on X that colorful murals at the school were visible from Google Earth at least eight years ago, calling into question how anyone with access to satellite imagery may have mistaken the school for a legitimate military target.

The Defense Intelligence Agency labeled the school as a target and passed that information to U.S. Central Command, according to the Times. Investigators "do not yet fully understand" how the bad information was transmitted, and investigators are reportedly looking at how the National Geospatial-Intelligence Agency, which examines satellite images for the military and intelligence community, may have been involved.

After the strike on the school, there was widespread speculation that AI might be to blame for the bad targeting information. Anonymous officials told the Times that AI was "unlikely" to be the cause of the strike, suggesting instead it was probably human error. Anthropic's AI model Claude is used by the U.S. military, though the U.S. has now labeled the company as a "supply chain risk." The Pentagon has never before designated a U.S. company as a supply chain risk, and Anthropic has now filed suit, but the Pentagon is still using Claude as it's phased out over the next six months. Hegseth ordered the designation of Anthropic as a supply chain risk because the AI company refused to drop guardrails that prohibit Claude from being used for mass domestic surveillance and for fully autonomous weapons. The Pentagon's designation has caused other companies that do business with the U.S. government to reconsider their contracts with Anthropic, and some have characterized the Trump regime's moves as attempted corporate murder of an American AI company.

Trump has tried to blame Iran for the missile strike on the school, though even Hegseth refused to back up the president recently during a talk with reporters on Air Force One. "We think it was done by Iran. They're very inaccurate, as you know, with their munitions. They have no accuracy whatsoever. It was done by Iran," Trump said March 7. Hegseth merely said it was under investigation and insisted that the U.S. does not target civilians. But the Defense Secretary has frequently belittled adherence to "stupid" rules of engagement and denounced so-called "woke" policies.

Anthropic plans to open a permanent office in Washington D.C., according to a new report from Axios, and there's still hope in some circles for a resolution to the conflict with the Pentagon. But it's hard to see a scenario where Trump and his cronies would ease up the pressure unless Anthropic pledged to allow mass domestic surveillance and the use of autonomous weapons.

More than 1,800 people have died since the start of the war in Iran, according to the New York Times, including 7 U.S. service members. But the number that U.S. commentators are most fixated on is oil prices, as that seems like the most likely thing that will potentially pressure Trump to end the war. The current national average for a gallon of gas is $3.57, according to AAA, up significantly from $2.98 just before the war started. The International Energy Agency said Wednesday that member countries would release 400 million barrels of oil from their emergency reserves, the largest ever. But it remains to be seen whether that will put a dent in prices at the pump.

[2]

US Military Investigating Whether AI Was Involved in Bombing Elementary School in Iran

Commercial satellite imagery captured last week shows the eerie devastation following the bombing of an Iranian elementary school. At least 175 people, including a large number of young schoolgirls, were killed in the attack. Haunting drone footage showed excavators digging dozens of graves for the victims. The airstrike, allegedly part of an offensive targeting an Iranian military complex nearby, provided a grotesque example of the horrors unfolding since the beginning of the US-Israel war on Iran late last month.

The massacre also raised a grim technological question. It had already been reported that the US military has been using Anthropic's Claude to select targets during the attacks on Iran -- and, strikingly, the Pentagon refused to confirm or deny whether AI had any role in the school's bombing when Futurism asked.

At first, neither the US nor Israel wanted to take blame for the carnage. US president Donald Trump desperately tried to steer clear as well, claiming that Iran had murdered its own children in cold blood.

Now, as the New York Times reports, US officials have confirmed that a US military Tomahawk missile strike was indeed responsible for the bloodbath at the school. According to a preliminary finding, officers at the US Central Command "created the target coordinates for the strike using outdated data provided by the Defense Intelligence Agency," sources briefed on the matter told the newspaper.

Strikingly, the NYT also reports that the military is investigating whether "any artificial intelligence models, data crunching programs or other technical intelligence gathering means were to blame for the mistaken targeting of the school." Claude, the newspaper's sources noted, works with the National Geospatial-Intelligence Agency's Maven Smart System to "identify points of interest for military intelligence officers."

Regardless of the outcome of the investigation, officials told the paper, it was ultimately "human error" to bomb the school, regardless of how the target was selected in the first place. According to the NYT's own analysis of historical satellite imagery that dates back to 2013, it's likely Central Command officials may have been working with outdated information. The imagery shows that the school was fenced off from the nearby military base between 2013 and 2016.

Despite the Trump administration officially labeling Anthropic's AI chatbot as a "supply chain risk," a move that sent shockwaves through the AI industry, the military has continued to rely on the chatbot during the offensive.

The U.S. military is investigating whether AI played a role in a Tomahawk missile strike on an Iranian elementary school that killed at least 175 people, mostly children. Preliminary findings point to outdated targeting data, but questions persist about Anthropic's Claude AI, which the Pentagon uses for target selection despite designating the company a supply chain risk over its refusal to remove guardrails against autonomous weapons.

Pentagon Investigation Centers on Outdated Targeting Data and AI Role

The U.S. military is conducting an investigation into a Tomahawk missile strike on February 28 that hit Shajarah Tayyebeh elementary school in Minab, Iran, killing at least 175 people, most of them children [1]. Preliminary findings suggest that outdated targeting data provided by the Defense Intelligence Agency led U.S. Central Command to create target coordinates for what was believed to be part of an Iranian military base [2]. However, the school building had been separated from the Islamic Revolutionary Guards Navy base roughly 15 years ago, with a fence erected between 2013 and 2016 [1].

Source: Futurism

Crucially, the Pentagon is investigating whether AI models, including Anthropic's Claude, contributed to the targeting failure [2]. When asked directly, the Pentagon refused to confirm or deny whether AI had any role in the Iranian school bombing [2]. Anonymous officials told the New York Times that AI was "unlikely" to be the cause, suggesting instead it was probably human error [1].

Anthropic's Claude and the Maven Smart System Under Scrutiny

The investigation has brought attention to AI in military applications, specifically how Claude works with the National Geospatial-Intelligence Agency's Maven Smart System to "identify points of interest for military intelligence officers" [2]. Investigators are examining how the National Geospatial-Intelligence Agency, which analyzes satellite imagery for the military and intelligence community, may have been involved in transmitting faulty targeting information [1].

Open source investigations raise questions about the failure. Colorful murals at the school were visible from Google Earth at least eight years ago, calling into question how anyone with access to satellite imagery could have mistaken the school for a legitimate military target [1]. Whatever the investigation concludes, officials maintain that bombing the school was ultimately human error, regardless of how the target was selected [2].

Pentagon Autonomous Weapons Dispute Escalates with Supply Chain Risk Designation

The tragedy unfolds against a backdrop of escalating tensions between the Pentagon and Anthropic. Defense Secretary Pete Hegseth designated Anthropic as a supply chain risk because the AI company refused to drop guardrails that prohibit Claude from being used for mass domestic surveillance and for fully autonomous weapons [1]. This marks the first time the Pentagon has designated a U.S. company as a supply chain risk [1].

Despite the designation, the U.S. military has continued using Claude during operations, with a planned phase-out over six months [1][2]. Anthropic has filed suit against the designation, which has caused other companies that do business with the U.S. government to reconsider their contracts with Anthropic; some have characterized the Trump administration's moves as attempted corporate murder of an American AI company [1].

Implications for Military AI Deployment and Civilian Safety

The incident raises urgent questions about accountability when AI systems assist in targeting decisions that result in civilian deaths. While officials attribute the strike to outdated data and human error, the investigation into whether AI models contributed to the tragedy highlights the opacity surrounding AI in military applications. More than 1,800 people have died since the start of the conflict, including 7 U.S. service members [1].

President Donald Trump initially attempted to blame Iran for hitting the school, claiming "We think it was done by Iran. They're very inaccurate, as you know, with their munitions" [1]. However, even Hegseth refused to back up these claims, stating only that the matter was under investigation and insisting the U.S. does not target civilians [1].

As Anthropic plans to open a permanent office in Washington D.C., observers see little prospect for resolution unless the company agrees to remove its guardrails against autonomous weapons and mass surveillance [1]. The investigation's findings will likely shape future debates about the role of AI in targeting decisions and the balance between technological capabilities and ethical constraints in military operations.

Summarized by

Navi
