Reddit's AI Chatbot 'Answers' Sparks Controversy with Dangerous Health Advice

5 Sources

[1]

Can't Trust Chatbots Yet: Reddit's AI Was Caught Suggesting Heroin for Pain Relief

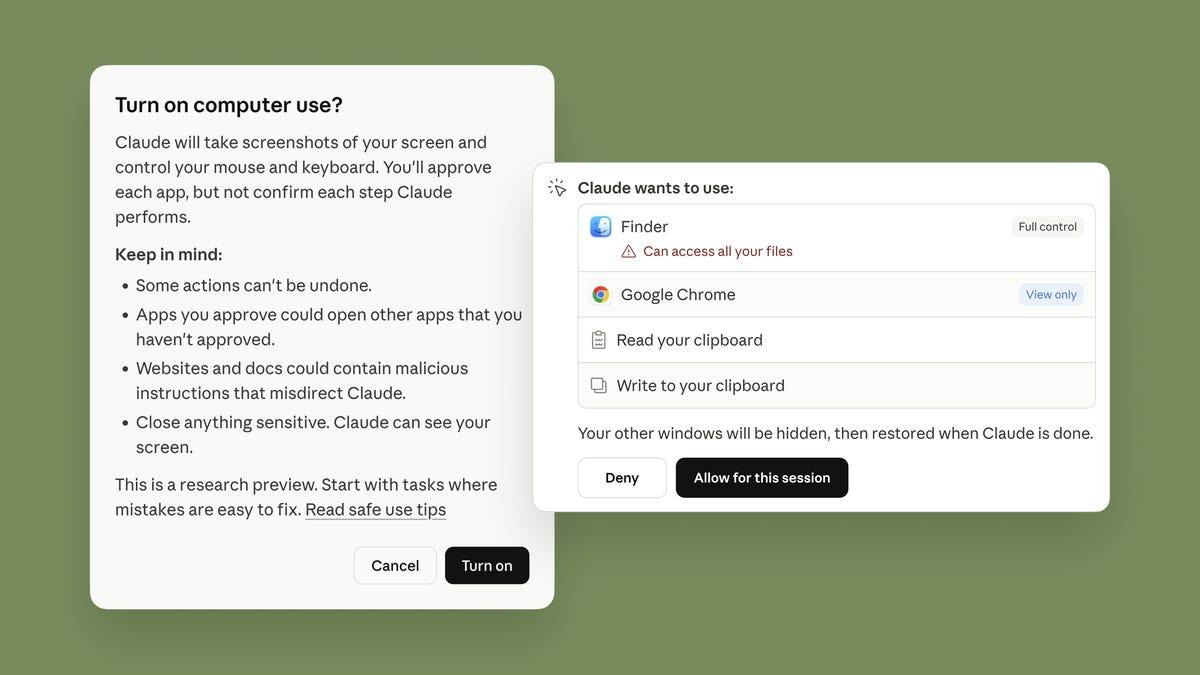

Jibin is a tech news writer based in Ahmedabad, India, who loves breaking down complex information for a broader audience.

AI chatbots have had a long history of hallucinating, and Reddit's version, called Answers, has now joined the list after it recommended heroin to a user seeking pain relief. As 404Media reports, the issue was flagged by a healthcare worker on a subreddit for moderators. For chronic pain, the user caught Answers suggesting a post that claimed "Heroin, ironically, has saved my life in those instances." In another question regarding pain relief, the user found the chatbot recommending kratom, a tree extract that's illegal in multiple states. The FDA has also warned against it "because of the risk of serious adverse events, including liver toxicity, seizures, and substance use disorder." Reddit Answers works like Gemini or ChatGPT, except it pulls information from its own user-generated content. Initially, it opened in a separate tab from the homepage, but recently, Reddit has been testing the chatbot within conversations. The user who reported the issue found Reddit Answers posting harmful medical advice under health-related subreddits, and the moderators there didn't have the option to disable it. After the user and 404Media raised their concerns with Reddit, the platform decided to stop it from appearing under sensitive discussions. Google's AI Overviews and ChatGPT have also provided questionable health advice, like using "non-toxic glue" on pizzas to keep the cheese from sliding off.

[2]

Moderators call for AI controls after Reddit Answers suggests heroin for pain relief

We've seen artificial intelligence give some pretty bizarre responses to queries as chatbots become more common. Today, Reddit Answers is in the spotlight after a moderator flagged the AI tool for providing dangerous medical advice that they were unable to disable or hide from view. The mod saw Reddit Answers suggest that people experiencing chronic pain stop taking their current prescriptions and take high-dose kratom, which is an unregulated substance that is illegal in some states. The user said they then asked Reddit Answers about other medical questions. They received potentially dangerous advice for treating neo-natal fever alongside some accurate actions as well as suggestions that heroin could be used for chronic pain relief. Several other mods, particularly from health-focused subreddits, replied to the original post adding their concerns that they have no way to turn off or flag a problem when Reddit Answers has provided inaccurate or dangerous information in their communities. A representative from Reddit told 404 Media that Reddit Answers had been updated to address some of the mods' concerns. "This update ensures that 'Related Answers' to sensitive topics, which may have been previously visible on the post detail page (also known as the conversation page), will no longer be displayed," the spokesperson told the publication. "This change has been implemented to enhance user experience and maintain appropriate content visibility within the platform." We've reached out to Reddit for additional comment about what topics are being excluded but have not received a reply at this time. While the rep told 404 Media that Reddit Answers "excludes content from private, quarantined and NSFW communities, as well as some mature topics," the AI tool clearly doesn't seem equipped to properly deliver medical information, much less to handle the snark, sarcasm or potential bad advice that may be given by other Redditors. 
Aside from the latest move to not appear on "sensitive topics," it doesn't seem like Reddit plans to provide any tools to control how or when AI is being shown in subreddits, which could make the already-challenging task of moderation nearly impossible.

[3]

Reddit's AI Suggests That People Suffering Chronic Pain Try Opioids

Tech companies are rushing to deploy AI everywhere. But because glaring issues remain with the tech, it's almost inevitable that these efforts lead to public embarrassment when the AI malfunctions. In one of the latest examples, Reddit's new AI was caught making an extraordinarily bad suggestion: that DIY heroin use might be an effective strategy for pain management. As flagged by 404 Media, a user noticed while browsing the r/FamilyMedicine subreddit that the site's "Reddit Answers" AI was proffering "approaches to pain management without opioids." On its face, that's a nice idea, since overprescription of opioid painkillers led to a severe and ongoing addiction crisis. But the Reddit AI's first suggestion was to try kratom, an herbal extract from a plant called Mitragyna speciosa that currently exists in a legal gray area and is associated with significant addiction and health risks, in addition to being ineffective at pain treatment. Intrigued, the Reddit user followed up by asking the AI whether there was any medical rationale to use heroin for pain management. "Heroin and other strong narcotics are sometimes used in pain management, but their use is controversial and subject to strict regulations," the bot replied. "Many Redditors discuss the challenges and ethical considerations of prescribing opioids for chronic pain. One Redditor shared their experience with heroin, claiming it saved their life but also led to addiction: 'Heroin, ironically, has saved my life in those instances.'" For obvious reasons, that's an appallingly bad answer. Though doctors still prescribe opioid medications when absolutely necessary, heroin emphatically isn't one of them; though there are nuanced discussions among physicians on when it's appropriate to treat chronic pain with such addictive substances, the bot's chirpy answer -- and especially its suggestion that heroin can be lifesaving -- is profoundly inappropriate. For what it's worth, Reddit agreed. 
After 404 reached out with questions, the company patched its AI system to prevent it from weighing in on "controversial topics." "We rolled out an update designed to address and resolve this specific issue," a spokesperson told 404. "This update ensures that 'Related Answers' to sensitive topics, which may have been previously visible on the post detail page (also known as the conversation page), will no longer be displayed. This change has been implemented to enhance user experience and maintain appropriate content visibility within the platform." It's better than nothing -- but in a more careful tech sector, wouldn't the system have launched with better guardrails, instead of relying on random users and journalists to flag the issue?

[4]

Reddit's AI thinks Heroin is a health tip

What happened: Well, Reddit's new AI chatbot is already causing chaos. The bot, called "Answers," is supposed to help people by summarising information from old posts. According to a report by 404media, the latest chatbot by Reddit is now suggesting hard narcotics to deal with chronic pain. When someone asked it about dealing with chronic pain, it pointed to a user comment that said, "Heroin, ironically, has saved my life in those instances." And it gets worse. Someone else asked it a question, and it recommended kratom, an herbal extract that's banned in some places and has been linked to some serious health problems. The scariest part is that Reddit is testing this bot right inside of active conversations, so its terrible advice is showing up where everyone can see it. The people in charge of these communities, the moderators, said they couldn't even turn the feature off.

Why is this important: This is a perfect, if terrifying, example of one of the biggest problems with AI right now. The bot doesn't actually understand anything. It's just really good at finding and repeating what humans have written online - and it can't tell the difference between a helpful tip, a sarcastic joke, or a genuinely dangerous suggestion. The real danger is that it's putting this stuff right into the middle of conversations where real, vulnerable people are looking for help, and it presents the information as if it's a fact.

Why should I care: So why does this matter to you? Because it shows just how risky these AI tools can be when they're let loose without a leash, especially when it comes to something as serious as your health. Even if you'd never ask a chatbot for medical advice, its bad suggestions can start to pollute online communities, making it harder to know who or what to trust.

What's next: After people (rightfully) freaked out, Reddit confirmed they're pulling the bot from any health-related discussions. But they've been pretty quiet about whether they're putting any real safety filters on the bot itself. So, for now, the problem is patched, not necessarily solved.

[5]

Reddit's AI chatbot suggests users try heroin

If you were hoping AI chatbots could replace Dr. Google, Reddit's latest experiment suggests... maybe not yet. Reddit Answers, the platform's AI assistant, recently made headlines for recommending heroin as a pain reliever. As reported by 404Media, the bizarre advice came to light when a healthcare worker spotted the response and flagged it on a subreddit for moderators. In one interaction, the chatbot pointed to this post: "Heroin, ironically, has saved my life in those instances." In another question, it even suggested kratom, a tree extract that's illegal in multiple states and flagged by the FDA "because of the risk of serious adverse events, including liver toxicity, seizures, and substance use disorder." Reddit Answers isn't your standard AI. Instead of relying solely on curated databases, it draws from Reddit's vast pool of user-generated content. That makes it quirky, sometimes insightful. But clearly, not always safe. Originally confined to a separate tab, Answers is now being tested within regular conversations, which is how this particular episode went unnoticed... until now. After the report went public, Reddit responded by reducing the AI's visibility under sensitive health-related chats. Moderators had no control over the advice themselves, highlighting just how experimental the tool still is. Of course, Reddit's bot isn't the first to stumble. Other bots like Google's AI Overviews and ChatGPT have also delivered questionable health guidance, including "tips" like using non-toxic glue on pizzas to stop cheese from sliding off. Clearly, when it comes to medical advice, AIs are entertaining, but humans still have the upper hand. So maybe don't ask it for medical advice just yet.

Reddit's AI-powered chatbot 'Answers' has come under fire for suggesting heroin and kratom as pain relief options. The incident highlights the ongoing challenges and risks associated with AI-generated content in sensitive areas like healthcare.

Reddit's AI Chatbot Recommends Dangerous Substances for Pain Relief

Reddit's artificial intelligence-powered chatbot, 'Answers,' has found itself at the center of a controversy after recommending heroin and kratom as pain relief options. The incident has raised serious concerns about the reliability and safety of AI-generated content, particularly in sensitive areas such as healthcare advice [1].

Source: GameReactor

The Controversial Recommendations

A healthcare worker on a subreddit for moderators flagged the issue after noticing Answers suggesting potentially harmful substances for pain management. In one instance, the chatbot referenced a post claiming, "Heroin, ironically, has saved my life in those instances" [2]. In another response, Answers recommended kratom, a tree extract that is illegal in multiple states and has been warned against by the FDA due to risks of liver toxicity, seizures, and substance use disorder [3].

How Reddit Answers Works

Unlike traditional AI chatbots, Reddit Answers draws information from the platform's vast pool of user-generated content. Initially, it opened in a separate tab from the homepage, but Reddit has been testing the chatbot within conversations, potentially exposing users to unfiltered and potentially dangerous advice [4].

Source: Digital Trends

Moderators' Concerns and Lack of Control

The incident has highlighted a significant issue for Reddit moderators, particularly those overseeing health-related subreddits. Moderators found themselves unable to disable or flag problematic AI-generated content, raising concerns about their ability to maintain the safety and accuracy of information in their communities [2].

Reddit's Response and Updates

Following the reports and concerns raised by moderators and media outlets, Reddit has taken steps to address the issue. A spokesperson stated that they have rolled out an update to ensure that 'Related Answers' to sensitive topics will no longer be displayed on the post detail page [5]. This change aims to enhance user experience and maintain appropriate content visibility within the platform.

Broader Implications for AI in Healthcare

This incident is not isolated to Reddit, as other AI chatbots like Google's AI Overviews and ChatGPT have also provided questionable health advice in the past [1]. It underscores the ongoing challenges faced by AI systems in distinguishing between helpful information, sarcasm, and potentially dangerous suggestions, especially in sensitive areas like healthcare [4].

The Future of AI Chatbots and Content Moderation

As AI continues to be integrated into various platforms, the Reddit Answers controversy serves as a stark reminder of the need for robust safeguards and human oversight. The incident raises questions about the balance between innovation and user safety, as well as the role of content moderation in an increasingly AI-driven online landscape [3].

Summarized by Navi
