Uber expands AWS deal to run ride-matching on Amazon's AI chips, challenging cloud rivals

4 Sources

[1]

Uber is the latest to be won over by Amazon's AI chips | TechCrunch

On Tuesday, Amazon announced that Uber was expanding its contract for AWS cloud services to run more of its ride-sharing features on Amazon's chips. Uber will particularly expand its use of AWS's Graviton (a low-power, ARM-based server CPU) and start a new trial testing Trainium3, AWS's Nvidia competitor AI chip. This deal is a bit less about a long-term threat to Nvidia than it is a thorough thumbing of the nose by Amazon at AWS's cloud competitors, Google and Oracle. While Uber historically ran its own data centers, back in 2023, the ride-hailing company famously signed giant, multi-year cloud computing deals with Oracle and Google. The idea was to move the majority of its I.T. infrastructure off its own datacenters and onto these two clouds, it said. Even in December, Uber publicly reiterated that goal, writing in a blog post: "In February 2023, Uber began transitioning from on-premise data centers to the cloud using OCI and Google Cloud Platform, taking on the dual challenge of shifting massive workloads and introducing Arm-powered compute instances into a previously x86-dominated environment." Uber particularly called out in that post the use of the ARM chips made by Ampere in Oracle's cloud. This is where things get interesting. If you want a crash course in how inter-tangled Silicon Valley can be, take a look at the history of Ampere. Ampere was founded by former Intel bigwig Renee James after she was not promoted to CEO at the chipmaker. She pulled all her strings, including her power at her then-job as an investor at private equity firm Carlyle, and her board seat position at Oracle, to raise the cash to start this company. Oracle owned about one-third of the company, and James had to give up her status as an independent Oracle director because of that investment. (James was, by the way, a key board person who helped vote in Oracle's $9.3 billion purchase of NetSuite in 2016, a company where Larry Ellison was a major stockholder. 
That deal sparked an unsuccessful shareholder lawsuit alleging Oracle overpaid for it.) In December, Ampere's major competitor Softbank acquired it, and Oracle sold its stake for a handsome $2.7 billion pre-tax gain. James left Oracle's board at the end of 2024 and is no longer working at Ampere. Oracle is raising money as fast as it can to build data centers for OpenAI and Stargate. Ellison said Oracle sold Ampere because he believed designing chips in-house for its data centers was no longer a competitive advantage. It prefers to buy the chips and has signed massive deals with Nvidia. It's worth noting that Oracle, Softbank, and Nvidia are also part of OpenAI's orbit of circular deals that are supposed to fund the model maker's massive data center build-out. But now AWS is announcing it has nabbed a bigger contract from one of Oracle's star customers, Uber, because it has in-house-designed chips. Uber joins Anthropic, OpenAI, and Apple as big tech companies that have signed on or increased their usage of AWS because of these AI chips. In December, Amazon CEO Andy Jassy said Trainium was already a multibillion-dollar business.

[2]

Uber bets on Amazon's custom chips to boost AI efforts

April 7 (Reuters) - Uber (UBER.N) is using Amazon's (AMZN.O) custom chips to speed up computing and train artificial intelligence models, the cloud giant said on Tuesday, as the ride-hailing firm seeks advanced hardware to handle growing digital workloads. The deal expands the companies' existing cloud partnership by enabling Uber to use Amazon Web Services' Graviton chips to support smoother rides and deliveries and Trainium processors to train AI models that power its apps. Uber is working to optimize its digital interface, accelerate ride-matching and personalize user experiences to attract users and gain a competitive edge. Amazon, meanwhile, is investing heavily in growing the appeal of its custom chips and attracting enterprise customers to capitalize on booming demand for AI model training and inference. Reporting by Zaheer Kachwala in Bengaluru

[3]

Uber joins Amazon's Trainium roster with AWS expansion deal

In short: Uber has expanded its AWS contract to run real-time ride-matching infrastructure on Amazon's Graviton4 processor and is piloting AI model training on Trainium3, joining Anthropic, OpenAI, and Apple on a customer list that is becoming the clearest evidence yet that Amazon's custom silicon strategy is working. Uber's infrastructure runs on milliseconds. Every time a rider opens the app, a system called Trip Serving Zones determines which drivers to consider, how to weight them, and how quickly to return a match, all before the user has finished watching the loading animation. At Uber's scale, which reached more than 40 million trips a day in 2025 across 72 countries, the compute cost of that operation is substantial and the latency tolerance is essentially zero. On 7 April 2026, the company announced it is moving more of that workload to AWS, running Trip Serving Zones on Amazon's Graviton4 processor and beginning a pilot to train AI models on Trainium3. It is the latest addition to a roster of significant technology companies choosing Amazon's custom silicon over the default, and for Amazon's chip programme, arguably the most operationally consequential customer yet. The announcement covers two distinct workloads. Trip Serving Zones, Uber's real-time infrastructure for matching riders and drivers, will run on Graviton4, Amazon's ARM-based processor designed for high-throughput, low-latency compute. The workload is not AI in any generative sense; it is infrastructure, and its demands are closer to telecommunications switching than to model inference. What it requires is responsiveness under load, particularly during demand spikes when ride volumes surge and the matching system must scale without introducing delay. Separately, Uber is beginning a pilot to train AI models on Trainium3 using data from its accumulated trip history. 
The company has recorded 13.567 billion trips over its lifetime and serves more than 200 million monthly active users, generating a continuous stream of behavioural data on driver allocation, estimated arrival times, demand patterns, and route optimisation. Training AI on that dataset is a longer-term initiative, but the economics of Trainium3 make the pilot financially rational even before any performance case is made. Kamran Zargahi, Uber's vice-president of engineering, described the operational rationale plainly. "Uber operates at a scale where milliseconds matter. Moving more Trip Serving workloads to AWS gives us the flexibility to match riders and drivers faster and handle delivery demand spikes without disruption." On the AI side, Zargahi said the company was "building a technology foundation that will make every Uber experience smarter, so we can keep our focus where it belongs: on the people who use Uber every day." Rich Geraffo, vice-president and managing director for North America at AWS, framed the partnership in terms of Uber's real-time demands: "Uber is one of the most demanding real-time applications in the world, and we're proud to be an important part of the infrastructure powering their global operations." The AWS deal is the third major cloud relationship Uber has entered in the past three years. In 2023, the company signed two separate seven-year agreements, one with Oracle Cloud Infrastructure and one with Google Cloud, as part of an exit from its own data centres. That multicloud strategy was framed as a hedge against vendor lock-in and a way to match specific workloads to the clouds best suited to run them. Adding AWS completes a picture in which Uber is, effectively, a significant customer of all three major hyperscalers simultaneously. 
The practical consequence of that structure is that Uber has unusual leverage in negotiations with each provider and unusual freedom to route workloads toward whichever platform offers the best performance-cost ratio for a given function. Moving Trip Serving Zones to Graviton4 is a statement about where AWS currently sits on that curve for high-frequency, latency-sensitive infrastructure. The Trainium3 pilot is a more tentative signal, a test of whether Amazon's AI training economics can compete with the GPU-based infrastructure Uber already has access to through its existing cloud relationships. Trainium3 is Amazon's third-generation AI training accelerator, and its specifications make the cost argument straightforward. Each chip delivers 2.517 petaflops in MXFP8 precision, with 144 GB of HBM3e memory and 4.9 terabytes per second of memory bandwidth. At scale, Trainium3 runs at roughly 30 to 50 per cent of the cost of comparable Nvidia H100 or H200 hardware. The UltraServer configuration allows up to 144 accelerators to be networked together, delivering approximately 362 MXFP8 petaflops, a cluster capable of training frontier-scale models. The cost differential is the headline, but the underlying argument is about workload fit. Training large models on proprietary trip data does not require the same interoperability demands as inference in production environments, where software ecosystems, CUDA toolchains, and integration dependencies have historically made Nvidia hardware the default. In training contexts, where the workflow is more controlled and the cost per training run compounds across thousands of experiments, the case for custom silicon is more straightforward. The AI chip acceleration that defined 2025 created the volume of Trainium deployments Amazon needed to mature its tooling, and Uber's pilot arrives at a moment when that software ecosystem is meaningfully more capable than it was 18 months ago. 
Uber joins a short but strategically significant group of Trainium customers. Anthropic has committed to using more than one million Trainium chips across Amazon's Project Rainier cluster. OpenAI, despite its close relationship with Microsoft, included Trainium capacity as part of its $50 billion AWS commitment. Apple has publicly praised Trainium's performance for its own training workloads. The pattern across those customers is consistent: they are all organisations with large, proprietary datasets, predictable training workflows, and sufficient scale to justify the engineering investment of moving off GPU-default infrastructure. The depth of capital flowing into AI infrastructure, illustrated by commitments like OpenAI's $50 billion AWS deal, is also forcing every AI-dependent company to evaluate whether their compute costs are sustainable, a pressure that makes Trainium's price advantage more compelling over time. For Amazon, each addition to the Trainium customer roster performs a dual function: it validates the chip commercially and it builds the software tooling that makes the next adoption easier. Uber's use case, training on proprietary operational data at scale, is different enough from Anthropic's frontier model training to expand the range of workloads Amazon can credibly claim Trainium handles well. That breadth matters as Amazon competes for the next wave of enterprise AI infrastructure decisions. The AI infrastructure deals reshaping the industry's capital structure are not being won solely on chip performance; they are being won on the combination of performance, cost, ecosystem maturity, and the confidence that comes from seeing who else is on the same platform. Every Trainium announcement is, in some sense, a Nvidia story. Amazon's custom silicon programme exists because the economics and strategic dependencies of GPU dominance have become uncomfortable for the companies that rely on it most. 
Uber's pilot is a small data point in a larger pattern of enterprises exploring what alternatives to Nvidia's stack look like in practice. The competitive response has not been passive: Nvidia's NVLink Fusion strategy, which opens its high-speed interconnect to third-party silicon including Marvell's custom AI accelerators, is a direct attempt to absorb the custom silicon movement into Nvidia's ecosystem rather than compete with it head-on. The logic is that even if customers build or buy non-Nvidia training chips, they remain inside Nvidia's networking fabric and software dependencies. How much of Uber's AI training ultimately migrates to Trainium will depend on the pilot results, and on whether Amazon's tooling closes the remaining gaps with the CUDA ecosystem that has made Nvidia hardware the path of least resistance for most AI engineering teams. What the announcement does establish is that Uber is testing those gaps seriously rather than treating them as a given. For an industry that has spent three years talking about Nvidia alternatives without producing many at scale, a 40-million-trips-per-day test environment is as real-world a proof of concept as Amazon could ask for.

[4]

Uber Collaborates with AWS to Strengthen Real-Time Trip Matching Systems

Uber is expanding its infrastructure and artificial intelligence (AI) capabilities on Amazon Web Services (AWS). Uber is using AWS Graviton instances to support more of its Trip Serving Zones, the real-time infrastructure behind every ride and delivery, and has started pilot training some AI models on Trainium, enabling faster rider and delivery matching, global demand handling, and smarter, more personalized experiences for millions of daily users.

Uber is expanding its AWS contract to run real-time ride-matching infrastructure on Amazon's Graviton4 processor and piloting AI model training on Trainium3. The move adds Uber to a growing roster of major tech companies choosing Amazon's custom silicon over Nvidia, while simultaneously challenging Google Cloud and Oracle in the cloud computing battle.

Uber Shifts Critical Infrastructure to AWS AI Chips

Uber announced on Tuesday that it is expanding its cloud services agreement with Amazon Web Services to run more of its ride-sharing features on Amazon's custom chips [1]. The ride-hailing company will particularly expand its use of AWS's Graviton4, a low-power ARM-based server CPU, and start a new trial testing Trainium3, AWS's competitor to Nvidia in the AI training chip market [2]. This cloud partnership expansion marks a strategic shift for Uber, which operates at a scale where milliseconds matter and compute cost efficiency directly impacts profitability.

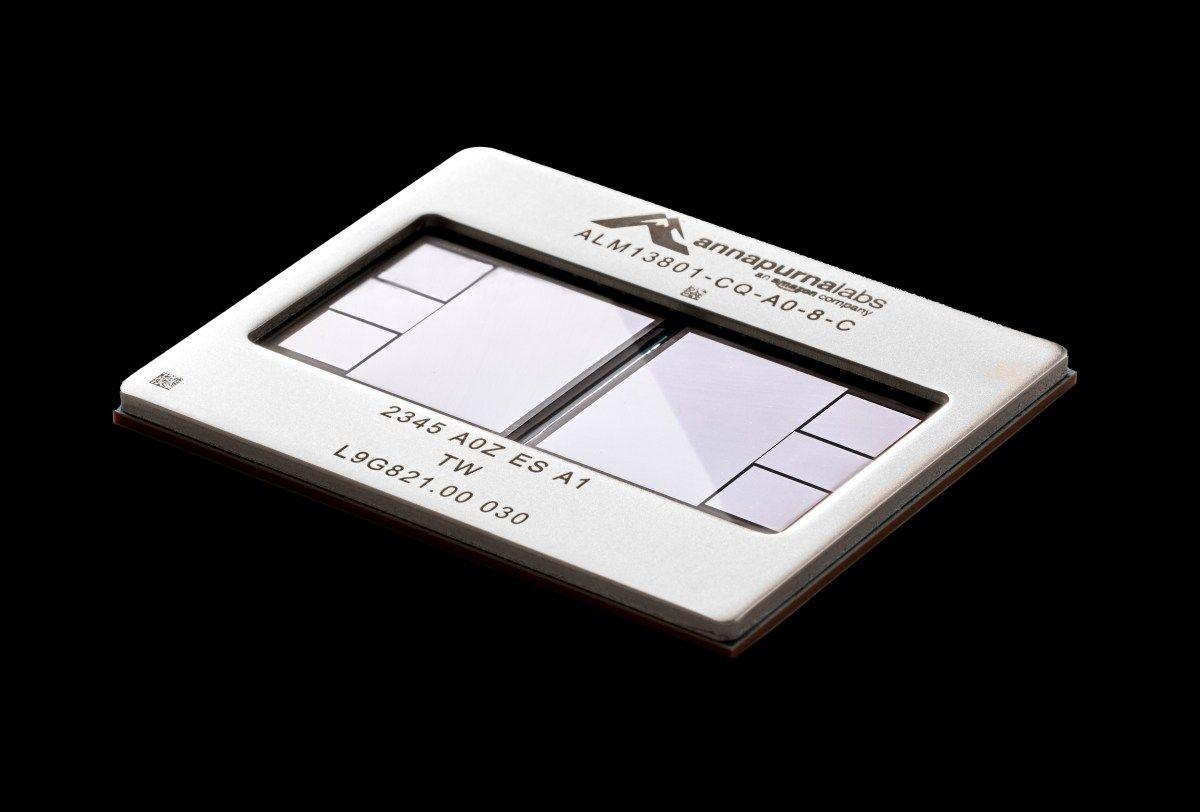

Source: DT

The deal enables Uber to use Amazon Web Services' Graviton and Trainium chips to support smoother rides and deliveries while training AI models that power its apps [2]. At Uber's scale, which reached more than 40 million trips a day in 2025 across 72 countries, the infrastructure demands are substantial and latency tolerance is essentially zero [3]. Every time a rider opens the app, a system called Trip Serving Zones determines which drivers to consider, how to weight them, and how quickly to return a match, all before the user has finished watching the loading animation.

Real-Time Trip Matching Gets Performance Boost

The announcement covers two distinct workloads. Trip Serving Zones, Uber's real-time infrastructure for matching riders and drivers, will run on Graviton4, Amazon's ARM-based processor designed for high-throughput, low-latency compute [3]. The workload requires responsiveness under load, particularly during demand spikes when ride volumes surge and the matching system must scale without introducing delay. Kamran Zargahi, Uber's vice-president of engineering, described the operational rationale plainly: "Uber operates at a scale where milliseconds matter. Moving more Trip Serving workloads to AWS gives us the flexibility to match riders and drivers faster and handle delivery demand spikes without disruption" [3].

Uber is working to optimize its digital interface, accelerate ride-matching and personalize user experiences to attract users and gain a competitive edge [2]. The company has recorded 13.567 billion trips over its lifetime and serves more than 200 million monthly active users, generating a continuous stream of behavioral data on driver allocation, estimated arrival times, demand patterns, and route optimization [3].

AI Model Training on Trainium3 Begins

Separately, Uber is beginning a pilot for AI model training on Trainium3 using data from its accumulated trip history [3]. Training AI on that dataset is a longer-term initiative, but the economics of Trainium3 make the pilot financially rational. Each Trainium3 chip delivers 2.517 petaflops in MXFP8 precision, with 144 GB of HBM3e memory and 4.9 terabytes per second of memory bandwidth. At scale, Trainium3 runs at roughly 30 to 50 per cent of the cost of comparable Nvidia H100 or H200 hardware [3].
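As a rough check on those figures, the short Python sketch below multiplies the per-chip numbers out to the 144-accelerator UltraServer configuration that source [3] describes, and applies the quoted 30 to 50 per cent cost range to a placeholder GPU bill. The function names and the placeholder bill of 100 units are illustrative assumptions, not anything published by AWS or Uber.

```python
# Figures as quoted in source [3]; names below are hypothetical, for illustration.
PFLOPS_PER_CHIP = 2.517        # MXFP8 petaflops per Trainium3 chip
HBM_GB_PER_CHIP = 144          # GB of HBM3e per chip
CHIPS_PER_ULTRASERVER = 144    # maximum accelerators per UltraServer
COST_RATIO_RANGE = (0.30, 0.50)  # Trainium3 cost as a fraction of H100/H200 cost


def ultraserver_totals(chips=CHIPS_PER_ULTRASERVER):
    """Return (aggregate MXFP8 petaflops, aggregate HBM in decimal TB)."""
    total_pflops = chips * PFLOPS_PER_CHIP
    total_hbm_tb = chips * HBM_GB_PER_CHIP / 1000
    return total_pflops, total_hbm_tb


def trainium_cost_range(gpu_cost):
    """Apply the quoted 30-50% ratio to a GPU bill (any currency unit)."""
    low, high = COST_RATIO_RANGE
    return gpu_cost * low, gpu_cost * high


pflops, hbm_tb = ultraserver_totals()
print(f"UltraServer: {pflops:.1f} PFLOPS, {hbm_tb:.1f} TB HBM3e")

# Placeholder bill of 100 units, an assumption chosen only to show the range.
low, high = trainium_cost_range(100.0)
print(f"Equivalent Trainium3 cost: {low:.0f} to {high:.0f} units")
```

The aggregate throughput comes to about 362 petaflops, which matches the "approximately 362 MXFP8 petaflops" figure in source [3]; the cost range is only as reliable as the quoted ratio.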

Source: TechCrunch

Amazon is investing heavily in growing the appeal of its custom chips and attracting enterprise customers to capitalize on booming demand for AI model training and inference [2].

Multi-Cloud Strategy Creates Competitive Leverage

The AWS deal is the third major cloud relationship Uber has entered in the past three years. While Uber historically ran its own data centers, back in 2023, the ride-hailing company signed giant, multi-year cloud computing deals with Oracle and Google Cloud [1]. The idea was to move the majority of its infrastructure off its own data centers and onto these two clouds. Even in December, Uber publicly reiterated that goal, writing in a blog post about transitioning from on-premise data centers to the cloud using Oracle Cloud Infrastructure and Google Cloud Platform [1].

This multi-cloud strategy means Uber is effectively a significant customer of all three major hyperscalers simultaneously. The practical consequence is that Uber has unusual leverage in negotiations with each provider and unusual freedom to route workloads toward whichever platform offers the best performance-cost ratio for a given function [3]. Moving Trip Serving Zones to Graviton4 is a statement about where AWS currently sits on that curve for high-frequency, latency-sensitive infrastructure.

Source: The Next Web

Amazon's Silicon Strategy Gains Momentum

Uber joins Anthropic, OpenAI, and Apple as big tech companies that have signed on or increased their usage of AWS because of these AI chips [1]. In December, Amazon CEO Andy Jassy said Trainium was already a multibillion-dollar business. The deal is a direct challenge by Amazon to AWS's cloud competitors, Google and Oracle. For Uber, the aim, in Zargahi's words, is "building a technology foundation that will make every Uber experience smarter, so we can keep our focus where it belongs: on the people who use Uber every day" [3]. Rich Geraffo, vice-president and managing director for North America at AWS, framed the partnership in terms of Uber's real-time demands: "Uber is one of the most demanding real-time applications in the world, and we're proud to be an important part of the infrastructure powering their global operations" [3]. The Trainium3 pilot is a test of whether Amazon's AI training economics can compete with the GPU-based infrastructure Uber already has access to through its existing cloud relationships, with implications for how enterprise customers evaluate alternatives to Nvidia for model inference and training at scale.

Summarized by

Navi
