Apple's AI Vision: AirPods with Built-in Cameras in Active Development

10 Sources

[1]

Is Apple working on AirPods with cameras? Here's what we know so far

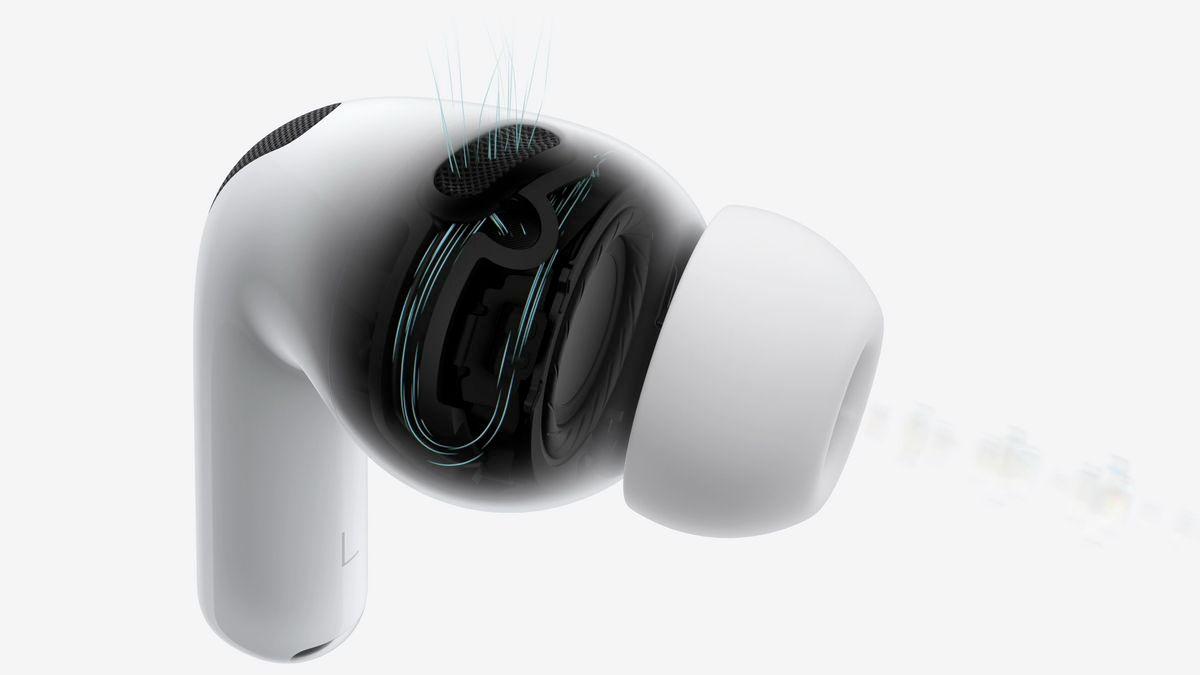

Potential features include real-time object recognition, contextual Siri assistance, and in-air gesture controls. Apple has been enhancing AirPods for a while, as the recent hearing aid feature shows. Now the latest reports suggest that Apple is working on new AirPods with integrated cameras, aimed at enhancing AI capabilities and spatial audio. According to Mark Gurman's report, the new AirPods are under development and may debut with the AirPods Pro 4 (no sooner than 2027).

The main motivation behind AirPods with cameras could be to strengthen Apple's AI ecosystem. The report stated that the new AirPods would be inspired by Visual Intelligence features and may let users interact with their surroundings in smarter ways via camera sensors and AI. This way, users may be able to ask Siri contextual questions about their environment without pulling out their iPhones. According to Gurman, this would essentially follow the smart glasses path, but without actual glasses. Given how well AirPods sell, this could be a big push for Apple's AI strategy, offering real-time information, object recognition, and enhanced Siri responses through external cameras.

Analyst Ming-Chi Kuo previously suggested that the cameras could work in sync with the Apple Vision Pro and future headsets, with the goal of improving spatial audio by tracking the user's movements and delivering a better audio experience. He also said the cameras could enable "in-air gesture control" for AirPods, potentially allowing users to control music, calls, and Siri functions with hand movements. Apple has yet to confirm the concept, which may face serious challenges related to battery life, form factor, quality, privacy, and misuse.

It remains to be seen how Apple plans to launch the product. As for timing, the Bloomberg report stated that the camera-equipped AirPods could launch in 2027, alongside the AirPods Pro 4.

[2]

Apple AirPods With Inbuilt Cameras in Development: Mark Gurman

Apple recently launched a handful of new devices, including the iPhone 16e, the MacBook Air with M4, and a new iPad Air. With those products out, the Cupertino-based tech giant seems to have shifted its focus to pushing the boundaries of audio technology. According to Bloomberg's Mark Gurman, Apple is exploring ways to include tiny cameras in its future AirPods, and the technology is likely to debut on the AirPods Pro 4.

In the latest edition of his Power On newsletter, Gurman states that Apple is 'actively developing' new AirPods with built-in cameras. The technology is unlikely to be included in the upcoming AirPods Pro 3, but Apple is said to be working on it for future generations. AirPods with integrated cameras could provide details about the wearer's surroundings, and the gathered data could feed AI features. Gurman claims that the new version of the AirPods Pro will use external cameras and artificial intelligence (AI) to understand the outside world and provide information to the user. "This would essentially be the smart glasses path -- but without actual glasses," he said. This would let users ask Siri about their surroundings directly through the earbuds.

By putting cameras into its popular AirPods, Apple is said to be eyeing an expansion of its Visual Intelligence features into the earbuds. The technology debuted on the iPhone 16 lineup through the Camera Control button. According to Gurman, Apple will not unveil this technology until 2027, and it is likely to debut on the AirPods Pro 4. Apple is also said to be considering introducing new smart glasses in the same year.

The report bolsters previous claims made by TF Securities analyst Ming-Chi Kuo, who suggested last year that cameras in future AirPods could act as infrared sensors. These are said to offer an enhanced spatial audio experience with the Apple Vision Pro and could also enable "in-air gesture control."

[3]

AirPods with camera? Learn about Apple's plan with their audio wearable

Apple is reportedly developing a future version of AirPods with built-in cameras, expected to launch around 2027. According to Bloomberg's Mark Gurman, these AirPods will use external cameras and AI to analyze the user's surroundings, offering features similar to smart glasses without requiring a headset. This aligns with Apple's broader AI strategy, which includes advancements like Camera Control on the iPhone 16.

While details remain scarce, the move suggests a shift toward more advanced wearable technology. Apple currently sells three different versions of AirPods, and adding a camera could mark a significant departure from the line's traditional focus on audio. Gurman reports that Apple is actively working on a version of AirPods with built-in cameras. While this technology won't be part of the upcoming AirPods Pro 3, expected to launch this year, it is being developed for future models. According to Gurman, the cameras are meant to improve the AirPods' ability to recognize and interact with their surroundings.

With the iPhone 16 lineup, Apple introduced Camera Control, a new button that lets users capture photos and adjust settings and that also unlocks a feature called Visual Intelligence. Apple aims to bring similar technology to AirPods as part of its broader AI strategy. According to Bloomberg, Apple is unlikely to introduce this technology before 2027, potentially alongside the launch of the AirPods Pro 4.

The company is also considering developing smart glasses, similar to Meta's Ray-Bans, as a way to leverage its investment in the visual intelligence technology behind Vision Pro. This system, designed to scan and interpret the user's surroundings, could enable a range of new interactive features.

[4]

Camera-toting AirPods with Apple Intelligence said to be in active development - but the idea may be too flawed to take off

We've been hearing for a while now that Apple is working on camera-equipped AirPods, and a new report says that they're in "active development". The report, from Bloomberg, doesn't go into any more detail, but it ties in with previous reports from the same source that say Apple sees camera-equipped earbuds as an interim step until AI-packing smart glasses are practical and affordable.

I'm not so sure, because just as with Vision Pro, there are some very significant obstacles ahead. And some of those obstacles are literal obstacles rather than metaphorical ones.

Let's assume that Apple can do the tech equivalent of putting a quart into a pint pot, shrinking some of the tech that currently takes up so much room in Ray-Ban Meta smart glasses enough to fit in an AirPod. That in itself is a big ask (it's one reason why Vision Pro is so big and why Apple's own smart glasses are still years away, if they arrive at all), but even if Apple solves it, there are still significant obstacles to overcome.

The thing about in-ear cameras is that they need to be able to see beyond your ears. And if you're not a short-haired man in California, that means there are potential obstructions: long hair is the most obvious one, of course, but for reasons of warmth, religion or fashion there are also hoods, hats and other fabrics to think about.

There's also the same question that, for me at least, applies to the Vision Pro. Yes, it's magical and clever and amazing and all the other superlatives. But what is it actually for? What will it actually do to make your life better and to justify the price tag?

The answer, inevitably, appears to be AI. But right now AI is frequently hopeless, and Apple Intelligence is hopeless-er, so much so that the only reason I haven't turned it off on my iPhone is that doing so makes Siri on my HomePods completely unusable. And I'm not alone. In December 2024, some 73% of iPhone owners and 87% of Samsung phone owners said that AI added "little to no value" to their devices. Perhaps this is why Apple has delayed the launch of the full AI-infused Siri while it develops it further.

Apple has a long tradition of launching devices without fully understanding their most impactful purpose. It did it with the iPad, and again with the Apple Watch; both products took a while to find their niches. I worry that unless they're designed to enhance another product, such as Apple's smart glasses, eyeballing AirPods may follow a similar trajectory. Cameras in smart glasses make sense, privacy issues aside. But cameras in your ears may be too limited a prospect to ever really take off.

[5]

Apple's AI vision via AirPods camera is in active development

Apple is bolstering its future Apple Intelligence efforts by working on AirPods with built-in cameras.

One of the many ways Apple has advanced Apple Intelligence is Visual Intelligence, a feature that uses an iPhone 16 to identify real-world items and get details about them. However, Apple is also considering a version that doesn't involve pointing an iPhone camera at things in the first place.

According to Mark Gurman in Sunday's newsletter for Bloomberg, Apple is "actively developing" a product that combines AirPods with cameras. The idea is for the cameras to provide data on the surrounding environment that can be used in AI features. For example, a query asking where the user is located may use scans of local signs to determine an approximate location, or a user could be told which way a shop is based on storefront imagery. Gurman doesn't go into detail, but does state that the company is working on the earbuds.

Putting cameras in AirPods may sound like an unusual approach, but the concept has surfaced in rumors multiple times. Aside from being brought up in late January by Gurman, it also appeared in claims from December and October, with a potential arrival anticipated two to three years away.

The cameras may not be full-color cameras at all, but could instead be infrared sensors used for depth mapping. Such a system could be used more for navigation, and could potentially use less power than full video cameras.

While some may prefer adding cameras to smart glasses, such as the repeatedly rumored Apple Glass, adding cameras to AirPods could be a better move overall. For a start, the viewing angle could be much broader than that of the front-facing camera typical of existing smart glasses.

For the design of smart glasses, weight is a major factor. Adding hardware and batteries adds weight to a typically lightweight item, which can become uncomfortable to wear for long periods. Apple already has one audio product that adds an extra feature as a minor tangent: the Beats Powerbeats Pro 2 include a heart-rate sensor in each earbud that feeds data back to an iPhone, in a design that could feasibly carry extra weight thanks to its earhook.

There's also the possibility of users enjoying smart glasses-like features without needing to wear glasses of any form, or at least without having to swap out their traditional frames if they are regular spectacle wearers. Not everyone likes the idea of wearing glasses at all, hence the existence of contact lenses as an alternative. The term "glasshole" springs to mind here.

By offering most of the functionality, barring visual elements, smart earbuds that can "see" the environment and provide audio feedback could be a viable alternative to smart glasses. If Apple can make the idea work well enough to impress the public, it could be onto a winner.

[6]

Apple is 'actively developing' AirPods with cameras: What to expect - 9to5Mac

According to Bloomberg's Mark Gurman, Apple is 'actively developing' a version of AirPods with integrated cameras. This tech is unlikely to appear in AirPods Pro 3, which are expected to debut this year, but it's nonetheless in the pipeline.

Apple wants your AirPods to better understand your environment, but why? With the iPhone 16 lineup, Apple introduced Camera Control. This new button is great for taking photos and adjusting camera settings, but it also unlocked a new feature: Visual Intelligence. Visual Intelligence is a powerful tool that helps users learn about the world around them and take action based on their physical context. You can add an event flyer to your calendar, for example, or tap into the power of ChatGPT or Google to learn about something you don't understand.

According to Gurman, Apple wants to bring similar integration to AirPods, mainly to bolster its position in the AI race: "Apple is working on a new version of the AirPods Pro that uses external cameras and artificial intelligence to understand the outside world and provide information to the user. This would essentially be the smart glasses path -- but without actual glasses." With this integration on AirPods, you could ask Siri about your surroundings without even taking your iPhone out of your pocket.

Supply chain analyst Ming-Chi Kuo has also floated the idea of Apple using these cameras to integrate with other products. Specifically, they could be used for better spatial awareness while listening to audio with Apple Vision Pro: "The new AirPods is expected to be used with Vision Pro and future Apple headsets to enhance the user experience of spatial audio and strengthen the spatial computing ecosystem. For example, when a user is watching a video with Vision Pro and wearing this new AirPods, if users turn their heads to look in a specific direction, the sound source in that direction can be emphasized to enhance the spatial audio/computing experience."

This suggestion in particular is a bit odd, but given Kuo's credibility, it's worth mentioning. He also said that these cameras could enable "in-air gesture control" for AirPods. According to Bloomberg, Apple won't debut this tech until 2027 at the earliest, likely alongside AirPods Pro 4.

[7]

New report says Apple is working on Meta-style smart glasses and AirPods with cameras

Apple has barreled into 2025 with a bevy of new products, including the upgraded M3 iPad Air and the iPhone 16e, and the most exciting gadgets are still on the horizon. Bloomberg's Mark Gurman dropped a big recap report on Apple's start to 2025, but buried in it was news about Apple's next step with audio (and video). He claims that Apple is "actively developing" a new pair of AirPods with integrated cameras. It's apparently part of an internal Apple push to explore more smart wearables, including smart glasses and AirPods.

The rumor mill has gone back and forth on whether Apple is even working on smart glasses. This report hints that Apple is working on a device similar to Meta's Ray-Ban smart glasses. As Gurman suggests, Apple could tightly integrate its AirPods with a set of AI glasses. These would not be AR glasses, as that technology is still coming together. "The cameras would help power AI features by gathering information on the surrounding environment," Gurman wrote.

Meta has sold 2 million pairs of its Ray-Ban glasses so far, which is not bad for a nascent product category. They can be used for listening to music, taking photos and recording video, but also for accessing Meta AI on the go, whether you're looking for quick answers or trying to pick out an outfit. As Gurman writes, "if Apple can bring its design prowess, offer AirPods-level audio quality and tightly integrate the glasses with the iPhone, I think the company would have a smash hit. It's mind-boggling that Apple hasn't gotten there yet."

When Apple introduced the iPhone 16 lineup, the company also added the new Camera Control button, which unlocks the Apple Intelligence feature Visual Intelligence, though that didn't turn on until iOS 18.2 in December. Visual Intelligence, similar to Google Lens, uses AI to help you learn about things you see in the world. For example, you can scan an event flyer with your camera to add the event to your calendar. It can also tap into ChatGPT or Google search to find more information about what the camera shows. Presumably, you could ask Siri about your surroundings without needing to actually use your iPhone.

The problem is that Apple has publicly delayed its revamped Siri and is reportedly struggling to get it off the ground. A lot hinges on Apple making Siri work the way it pitched last summer at WWDC 2024. At the earliest, we might now see this when iOS 19 launches in the fall, but that isn't guaranteed, and it could be pushed to 2026. This would likely have a downstream effect on all of Apple's other products, including the much-rumored smart home hub.

Some reports have pointed toward a 2026 release for the AirPods with cameras, though Gurman has both hinted at a 2025 release and pointed toward a 2027 release, possibly alongside a set of smart glasses. It's a fairly large window and, as mentioned, largely depends on whether Apple can get its AI tools under control.

If Apple doesn't launch camera-equipped AirPods this year, reports indicate that the AirPods Pro 3 would arrive with a number of other upgrades. These include new sensors for health and temperature tracking, making them more like a health wearable such as a smartwatch than just earbuds. The buds may also get a redesigned control system.

[8]

Apple Still Planning New AirPods With Tiny Cameras

Apple continues to explore the idea of releasing camera-equipped AirPods in the future, according to Bloomberg's Mark Gurman. "Apple also is still actively developing a product that would combine AirPods with cameras," he wrote. "The cameras would help power AI features by gathering information on the surrounding environment, as I've reported before." He previously said the "cameras" would technically be infrared sensors.

In a June 2024 blog post, Apple supply chain analyst Ming-Chi Kuo said Apple planned to mass-produce new AirPods with infrared cameras by 2026. He said the infrared camera component would be similar to the iPhone's Face ID receiver. Kuo said the new AirPods with infrared cameras would provide an enhanced spatial audio experience with Apple's Vision Pro headset. "For example, when a user is watching a video with Vision Pro and wearing this new AirPods, if users turn their heads to look in a specific direction, the sound source in that direction can be emphasized to enhance the spatial audio/computing experience," wrote Kuo. The infrared cameras could potentially enable "in-air gesture control" as well, letting users interact with devices through hand movements. If the alleged 2026 mass-production timeframe stays on schedule, the new AirPods with infrared cameras could launch in 2026 or 2027.

[9]

Apple reportedly planning to add this radical feature into Airpods

AirPods are not just a simple audio accessory for Apple. The company sold more than $18 billion worth of AirPods in 2023, and has already laid out plans to incorporate new health features into the earbuds, such as checking a user's temperature, heart-rate monitoring, and other biometric data. These possible upcoming features would complement the hearing aid feature that Apple added to the earbuds last year.

There is, however, another feature Apple is working on for AirPods, and it could be another game changer. Apple is working on adding cameras to its AirPods, according to a report from Bloomberg. The cameras would reportedly power AI features by gathering data from the environment. The report doesn't specify whether this feature would show up on the AirPods or AirPods Pro, or whether cameras would be added to the larger AirPods Max, which could easily accommodate one. Apple didn't immediately respond to a request for confirmation about the report.

Like other potential new features for AirPods and Apple devices, these advancements rely on AI. Apple's venture into AI, however, has faced challenges. Last September, Apple released the iPhone 16, which it promoted as built for Apple Intelligence. Apple had big plans for Apple Intelligence at the time but has since faced a major setback. On Thursday, an Apple spokesperson told Daring Fireball that the planned update to Siri, which would make it a true AI assistant, has been delayed. The company says it plans to release this Siri overhaul later this year. It's unclear whether Apple will unveil the new Siri at its Worldwide Developers Conference this June.

The delay of the new Siri has also affected the development of a new Apple device. A smart home hub, designed to function as an iPad-like home control device, has been pushed back from an expected March reveal because it depends on the overhauled Siri, according to Bloomberg.

[10]

AirPods with cameras would be a good match for contextual Siri

According to recent rumors, Apple has been exploring the idea of putting cameras in AirPods. Although this project probably won't see the light of day any time soon, I can't stop imagining how well AirPods with cameras would pair with the promised contextual Siri, which Apple recently delayed.

Let's start with the rumors. Bloomberg's Mark Gurman reported this week that Apple has been "actively developing" new AirPods with built-in cameras. Although this isn't the first report about AirPods with cameras, it not only corroborates the rumor but also reveals more details about how these cameras would be used.

Of course, when we talk about cameras, most people probably think of lenses used to capture high-resolution photos and videos, just as we do on the iPhone. But I'm sure Apple doesn't plan to let people take pictures by pointing their ears at someone. So what would these cameras be used for? Well, cameras are sensors, and they can be used for a lot of things. For instance, iPhones have an infrared camera in addition to the main front-facing camera. This second sensor captures the user's face even in the dark, which is what makes Face ID work.

With that in mind, we can think of a few possibilities. Analyst Ming-Chi Kuo once suggested that Apple wants to use the cameras in AirPods to enhance the spatial audio experience by better detecting the user's surroundings. Kuo also suggested that the cameras could enable "in-air gesture control" for AirPods, something that would be particularly useful for enhancing the Vision Pro experience, for example.

But my biggest bet for AirPods with cameras is artificial intelligence. According to Bloomberg, Apple has been experimenting with combining these sensors in AirPods with AI to "understand the outside world and provide information to the user." The idea is similar to what Meta's glasses already offer with Meta AI, but in wireless earbuds. Imagine asking your AirPods about the place you're in without having to take your iPhone out of your pocket. And why not give users crucial alerts, such as when a vehicle is coming toward them?

But there's one feature that would be a killer for these future AirPods: integration with the new contextual Siri. On iOS, the new contextual Siri will learn things about you based on your photos, chats, emails, calendar events, and more, and can then provide answers based on this personal context. Yes, I know Apple has officially delayed this feature, but imagine something like it for AirPods with cameras. Instead of using personal data from your device, Siri could use the cameras in the AirPods to capture and learn about the user's surroundings. Then you could ask Siri not just about the place you're in at the moment, but about something you saw hours or even days ago.

Of course, there's a huge privacy concern when it comes to always recording everything around you, but I believe Apple would be the right company to build a feature like this with privacy in mind. What about you? Would you like to have a feature like this? Let me know in the comments section below.

Apple is reportedly developing AirPods with integrated cameras, aiming to enhance AI capabilities and spatial audio. This technology could debut with AirPods Pro 4, potentially launching around 2027.

Apple's Vision for AI-Enhanced AirPods

Apple is reportedly working on a groundbreaking development in wearable technology: AirPods with built-in cameras. This innovation, which could debut with the AirPods Pro 4 around 2027, aims to enhance AI capabilities and spatial audio experiences [1].

Potential Features and Capabilities

The camera-equipped AirPods are expected to offer several advanced features:

- Real-time object recognition

- Contextual Siri assistance

- In-air gesture controls

- Enhanced spatial audio experiences

These features would allow users to interact with their surroundings more intelligently, potentially asking Siri contextual questions about their environment without needing to use their iPhones [2].

Integration with Apple's AI Ecosystem

The development of camera-equipped AirPods aligns with Apple's broader AI strategy. It builds upon the Visual Intelligence features introduced in the iPhone 16 lineup, extending this technology to wearable devices [3].

Challenges and Considerations

While the concept is innovative, it faces several potential challenges:

- Battery life and form factor constraints

- Privacy concerns

- Practical limitations (e.g., long hair or headwear obstructing cameras)

- User adoption and perceived value

Some analysts question whether the technology will provide sufficient value to justify its development and potential cost [4].

Alternative to Smart Glasses

The camera-equipped AirPods could serve as an alternative to smart glasses, offering similar functionality without the need for a headset. This approach might appeal to users who are hesitant to wear glasses or prefer a less visible wearable device [5].

Future Implications

If successful, this technology could revolutionize how users interact with AI assistants and their environment. It may also pave the way for more advanced wearable AI devices in the future, potentially influencing the development of Apple's rumored smart glasses project.

Summarized by Navi
