Arm unveils first in-house AI chip, securing Meta and OpenAI as early partners for data centers

3 Sources

[1]

ARM's first in-house AI chip draws Meta and OpenAI interest

Industry looks beyond x86 for efficient large-scale AI data center deployment

* Arm enters silicon production with a CPU designed for large-scale AI workloads
* New AGI CPU doubles rack performance compared with traditional x86 systems
* Meta and OpenAI adopt Arm chip for next-generation infrastructure

Arm has extended its compute platform into production silicon for the first time with the introduction of what it calls the "next evolution of the Arm compute platform," the AGI CPU. The company says the CPU is designed specifically for AI data centers, supporting agentic AI workloads, which involve continuously running agents capable of reasoning, planning, and acting. The processor features up to 136 Neoverse V3 cores per CPU, with 6GB/s memory bandwidth per core and sub-100ns latency, allowing higher workload density and improved system efficiency.

Performance and capacity

The Arm AGI CPU promises deterministic performance under sustained load with a 300-watt TDP and a dedicated core per program thread. The processor supports air-cooled 1U server chassis with up to 8,160 cores per rack, and liquid-cooled deployments reaching 45,000 cores per rack. Compared with x86 CPUs, the Arm AGI CPU can provide more than double the performance per rack, supporting larger AI workloads while remaining energy efficient. These capabilities aim to improve compute density, accelerator utilization, and overall infrastructure efficiency.

Meta serves as the lead partner and co-developer of the Arm AGI CPU, integrating it with its Meta Training and Inference Accelerator (MTIA) to optimize data center performance. Early commercial adoption also includes the likes of OpenAI, Cerebras, Cloudflare, Positron, Rebellions, SAP, and SK Telecom. Arm is collaborating with OEMs and ODMs such as Lenovo, Supermicro, Quanta Computer, and ASRock Rack to deliver early systems, with broader availability expected in the second half of 2026.
More than 50 industry leaders across hyperscale, cloud, semiconductor, memory, networking, software, and system design sectors support the CPU's rollout. "Over the last decade, we've partnered closely with Arm in building Graviton here at AWS, and it's been a remarkable success -- the majority of compute capacity AWS added to our fleet in 2025 was powered by Graviton," said James Hamilton, SVP and Distinguished Engineer, Amazon. "This collaboration has been great for both companies, and Graviton continues to deliver better price/performance for our customers." Industry partners also pointed to the broader infrastructure implications of the new CPU. "The new Arm AGI CPU will further unlock the Arm ecosystem for a broad range of customers, creating new opportunities for everyone..." said Charlie Kawwas, President, Semiconductor Solutions Group, Broadcom Inc. "As Broadcom builds the world's most capable XPU and networking solutions for hyperscalers...our partnership with Arm has enabled us to move with unmatched intent and speed." The Arm AGI CPU is intended to serve as a foundation for agentic AI workloads, enabling organizations to deploy AI tools at scale while maintaining high efficiency. The processor supports large-scale deployment of AI applications, including accelerator management, control plane processing, and cloud- or enterprise-based API and task hosting. That said, the Arm AGI CPU's success will depend on data center adoption, integration with existing accelerators and memory, and proven performance gains over alternatives.

[2]

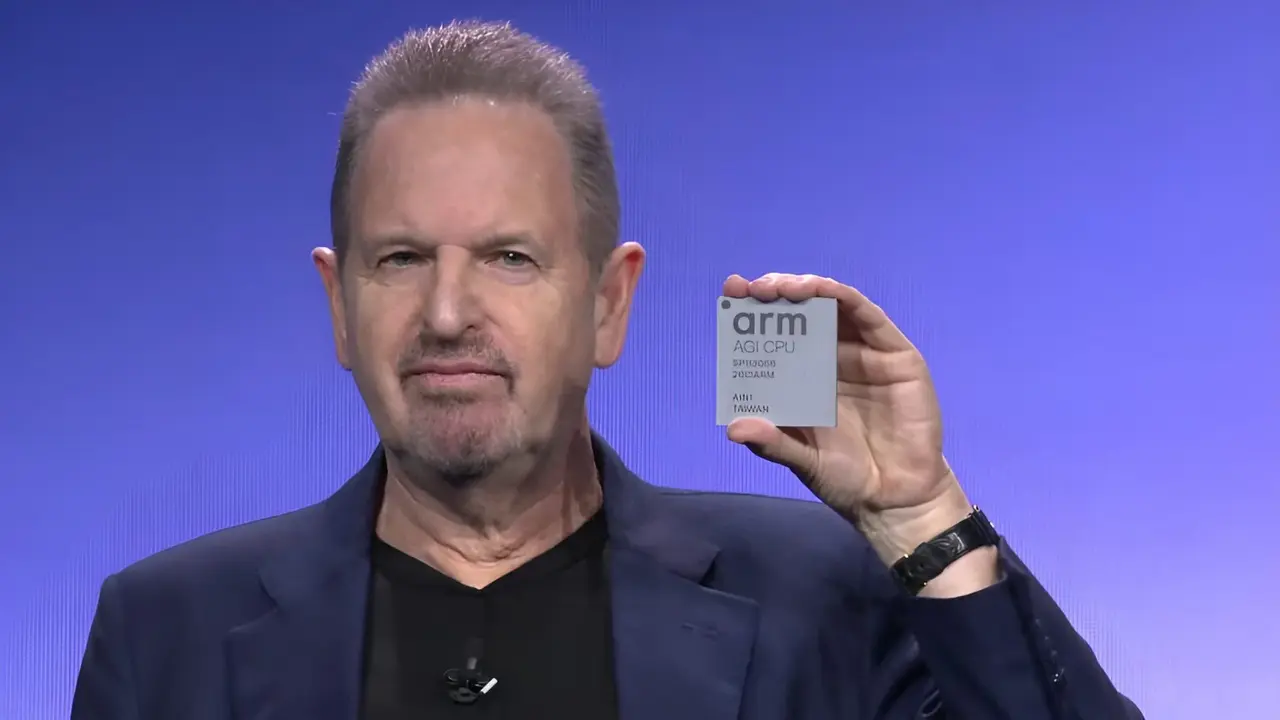

Arm creates history by building its first-ever CPU, the ARM AGI

TL;DR: Arm announced its first production silicon chip, the Arm AGI CPU, designed for data center AI applications with up to 136 Neoverse V3 cores and TSMC's 3nm process. Partnering with Meta and over 50 companies, Arm aims to challenge x86 systems by delivering higher performance per rack for agentic AI infrastructure.

Arm products have been ubiquitous in consumer electronics for quite some time now, but the company never actually produced the silicon itself. Over its 35-year history, the business model has been based on IP licensing rather than production. However, on March 24th, 2026, during the Arm Everywhere keynote, Arm made history by announcing its first-ever production silicon chip, the Arm AGI CPU. There have been rumblings in the industry over the past year or so about Arm finally entering the merchant silicon market, but nothing really came of it until now.

Arm has collaborated with Meta on this project, and the partnership aims to optimize Arm's AGI infrastructure for Meta's extensive family of apps. Moreover, the two will collaborate on future generations of the AGI CPU, according to Arm.

The Arm AGI processor is the first product of a new data center focused silicon lineup. It can have up to 136 Neoverse V3 cores running at up to 3.7GHz, with dedicated 2MB L2 cache per core. The CPU has been manufactured using TSMC's 3nm process and has a 300-watt TDP. The cores use a dual-chiplet design with 96 lanes of PCIe Gen6 as well. This is serious hardware, hyper-focused on AI applications.

"AI has fundamentally redefined how computing is built and deployed. Agentic computing is accelerating that change. Today marks the next phase of the Arm compute platform and a defining moment for our company. With the expansion into delivering production silicon with our Arm AGI CPU, we are giving partners more choices, all built on Arm's foundation of high-performance, power-efficient computing, to support agentic AI infrastructure at global scale."
- Arm CEO, Rene Haas

It is interesting to note that Arm has opted to produce a data center focused agentic AI CPU, when the entire industry is crowding around GPUs for this purpose. Arm has placed its chips on the expectation that the CPU-to-GPU ratio is about to change in agentic AI applications, and that step is quite bold. According to Arm's keynote, data centers are expected to require more than four times the current CPU capacity per gigawatt to support agent-driven applications.

Moreover, Arm's foray into silicon production should ring alarm bells for the current x86 manufacturers, AMD and Intel. According to Arm, its system can deliver 2x the performance per rack compared to the latest x86 systems, though, of course, this claim will need to be verified.

Arm's partner Meta is deploying the AGI CPU with its custom MTIA (Meta Training and Inference Accelerator) silicon, and additional deployments have been confirmed. Other partners include Cerebras, Cloudflare, F5, OpenAI, Positron, Rebellions, SAP, and SK Telecom. Arm says "more than 50 companies" have lined up for deployment. It will be interesting to see how Arm manages to compete with Intel, AMD, and NVIDIA for a piece of the AI pie.

[3]

ARM's CEO Rene Haas Says the 'AGI CPU' Will Bite Into the x86 Dominance, Brutally Referring to Intel as "Historic"

ARM's CEO appears confident in the company's entry into the server CPU segment, claiming that the AGI CPU is designed to counter x86's dominance in the industry.

ARM made a rather unusual announcement at its recent keynote, where CEO Rene Haas announced a transition from a mere IP company to a compute provider and unveiled its first-ever server CPU, the AGI CPU. ARM's decision has been met with skepticism, but Haas's revenue projections have made everyone happy, since the AGI CPU is said to generate roughly $15 billion in annual revenue by 2031, driven by its rack-scale configuration. Interestingly, ARM's CEO sat with WIRED to talk about the company's new venture, and here are the fundamentals behind it:

So why would we build a chip? When you're a compute platform company, there are times when the ecosystem benefits from you physically building something. We've seen this in the past, whether it's Microsoft building a Surface laptop that helps the Windows ecosystem, while HP and Dell and Lenovo are still building laptops; or whether it's Google building a Pixel phone, but meanwhile, Samsung still builds Android phones.
- ARM's Rene Haas

ARM's CEO claims that their entry as a compute provider is ultimately a way to expand the influence of the firm's IP offerings to a wider market, thereby increasing the company's customer TAM. Haas cites Microsoft's Windows and Surface laptop shipments, claiming that the latter has increased adoption of the company's OS. He says that with the AGI CPU, the ARM ecosystem becomes much stronger, and it also serves the agentic AI sector, where the need for processing units has significantly risen in the past few months due to agent orchestration and management workloads.

Drawing parallels with Microsoft is a sensible move from ARM's CEO, but it's important to note that ARM has helped its competitors develop capable solutions for different markets. Specifically, when we talk about datacenters, ARM's IP dominates in CPU offerings from NVIDIA and Amazon, which means that the AGI CPU has emerged in a market where ARM is both the 'foe and the fellow'. Given how closely ARM works with its customers, there may be skepticism among them about sharing chip designs and architectures, now that ARM is a direct competitor.

The ARM-Qualcomm fiasco in the mobile segment is a clear indicator that you cannot compete in and serve similar markets, and this could become a problem for the IP provider in the future. Interestingly, ARM's CEO was asked about the conflict of interest with partners like NVIDIA, to which he responded:

Question: You're referring to the fact that it's Intel's x86 architecture versus Arm architecture. So you don't think you'll piss off your pal, Jensen, but that AMD and Intel may have some response to this.

ARM's CEO: I use "piss off" as tongue-in-cheek. It's beneficial to the Arm ecosystem and it's beneficial to Jensen that we build a chip. If you've got [Nvidia's] Vera chip, which is a great product, and you've got Arm AGI CPU, which is a great product, it's not great for Intel and AMD, that's all I know.

ARM is confident in its ability to compete with x86 in the server CPU segment with its AGI CPU, yet questions remain about its adoption prospects. Right now, the lead customer for the solution is Meta, which will integrate it into its rack offerings, likely with the MTIA ASICs. Haas does mention customers like SK hynix, Cisco, SAP, and Cloudflare, but, yet again, the adoption concern isn't only driven by the clients ARM can bring; it also depends on whether the firm can sustain production. The AGI CPU is being fabbed at TSMC using the 3nm process, and we know how difficult it has been to secure capacity recently.

The AGI CPU is a great step by ARM and the company's manufacturing team, yet the current focus is on whether the firm can capture market share, and there are several caveats to doing so, especially when competing with players that have been in the industry for decades.

Arm has launched its first production silicon chip, the AGI CPU, marking a historic shift from IP licensing to compute provider. Designed for agentic AI workloads with up to 136 Neoverse V3 cores, the chip promises double the performance per rack compared to x86 systems. Meta serves as lead partner while OpenAI joins over 50 companies in early adoption, with broader availability expected in late 2026.

Arm Enters Production Silicon Market with First Server CPU

Arm has made history by announcing its first-ever production silicon chip, the Arm AGI CPU, fundamentally transforming the company's 35-year business model from IP licensor to compute provider [2]. Unveiled during the Arm Everywhere keynote on March 24th, 2026, this in-house AI chip represents what Arm calls the "next evolution of the Arm compute platform" [1]. The processor is designed specifically for AI data centers, supporting agentic AI workloads that involve continuously running agents capable of reasoning, planning, and acting [1].

Technical Specifications Challenge x86 Dominance

The Arm AGI CPU features up to 136 Neoverse V3 cores running at speeds up to 3.7GHz, with dedicated 2MB L2 cache per core [2]. Manufactured using TSMC's 3nm process, the processor delivers 6GB/s memory bandwidth per core with sub-100ns latency, enabling higher workload density and improved system efficiency [1]. The chip operates at a 300-watt TDP and uses a dual-chiplet design with 96 lanes of PCIe Gen6 [2]. In terms of deployment capacity, air-cooled 1U server chassis can support up to 8,160 cores per rack, while liquid-cooled deployments can reach 45,000 cores per rack [1]. Arm claims superior performance per rack, delivering more than double the performance compared to x86 CPUs, positioning the chip to challenge x86 dominance in large-scale AI data center workloads [1].
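The rack-level figures above can be cross-checked with simple arithmetic. The following Python sketch is illustrative only: the `CpuSpec` class and the helper function names are hypothetical, and it assumes that every CPU carries the maximum 136 cores, that the 6GB/s per-core bandwidth scales linearly across all cores, and that each CPU draws its full 300-watt TDP. The derived totals are not figures stated by Arm.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CpuSpec:
    """Published per-CPU figures for the Arm AGI CPU (maximum configuration)."""
    cores: int = 136                     # up to 136 Neoverse V3 cores
    bandwidth_per_core_gbs: float = 6.0  # 6GB/s memory bandwidth per core
    tdp_watts: int = 300                 # 300-watt TDP


def cpus_per_rack(cores_per_rack: int, spec: CpuSpec) -> float:
    """CPUs implied by a cores-per-rack figure (assumes fully populated CPUs)."""
    return cores_per_rack / spec.cores


def cpu_bandwidth_gbs(spec: CpuSpec) -> float:
    """Aggregate memory bandwidth per CPU (assumes linear per-core scaling)."""
    return spec.cores * spec.bandwidth_per_core_gbs


def rack_cpu_power_kw(cpu_count: float, spec: CpuSpec) -> float:
    """CPU-only power per rack at full TDP; excludes memory, NICs, accelerators."""
    return cpu_count * spec.tdp_watts / 1000


if __name__ == "__main__":
    spec = CpuSpec()
    air = cpus_per_rack(8_160, spec)      # air-cooled figure from the article
    liquid = cpus_per_rack(45_000, spec)  # liquid-cooled figure from the article
    print(f"Air-cooled CPUs per rack:    {air:.2f}")
    print(f"Liquid-cooled CPUs per rack: {liquid:.2f}")
    print(f"Bandwidth per CPU:           {cpu_bandwidth_gbs(spec):.0f} GB/s")
    print(f"Air-cooled CPU power:        {rack_cpu_power_kw(air, spec):.1f} kW")
```

Under these assumptions, the air-cooled figure works out to exactly 60 CPUs per rack, about 816GB/s of aggregate memory bandwidth per CPU, and 18kW of CPU power per rack. The 45,000-core liquid-cooled figure does not divide evenly by 136, which suggests it is a rounded number.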

Meta and OpenAI Lead Early Adoption Wave

Meta serves as the lead partner and co-developer of the Arm AGI CPU, integrating it with its Meta Training and Inference Accelerator (MTIA) to optimize data center performance [1]. The partnership extends beyond deployment, with both companies collaborating on future generations of the processor [2]. OpenAI joins the early commercial adoption roster alongside Cerebras, Cloudflare, F5, Positron, Rebellions, SAP, and SK Telecom [1][2]. More than 50 industry leaders across hyperscale, cloud, semiconductor, memory, networking, software, and system design sectors support the CPU's rollout [1]. Arm is collaborating with OEMs and ODMs including Lenovo, Supermicro, Quanta Computer, and ASRock Rack to deliver early systems, with broader availability expected in the second half of 2026 [1].

Strategic Shift Raises Questions About Competition

Rene Haas explained the strategic rationale behind Arm's entry into the merchant silicon market, drawing parallels with Microsoft building Surface laptops while partners like HP and Dell continue producing Windows devices [3]. "When you're a compute platform company, there are times when the ecosystem benefits from you physically building something," Haas stated [3]. The company projects the AGI CPU will generate roughly $15 billion in annual revenue by 2031, driven by its rack-scale configuration [3]. However, this move creates potential conflicts of interest with partners like NVIDIA and Amazon, who already use Arm's IP in their own data center CPU offerings [3]. When questioned about competing with NVIDIA, Haas responded confidently: "If you've got [Nvidia's] Vera chip, which is a great product, and you've got Arm AGI CPU, which is a great product, it's not great for Intel and AMD, that's all I know" [3].

Industry Implications for Agentic AI Infrastructure

Arm's decision to produce a data center-focused CPU for agent orchestration represents a bold bet on shifting infrastructure requirements. According to Arm's keynote, data centers are expected to require more than four times the current CPU capacity per gigawatt to support agent-driven applications [2]. This positions the company differently from competitors focused primarily on GPU-centric architectures. Charlie Kawwas, President of Semiconductor Solutions Group at Broadcom, noted that "the new Arm AGI CPU will further unlock the Arm ecosystem for a broad range of customers, creating new opportunities for everyone" [1]. The processor's success will depend on securing TSMC 3nm manufacturing capacity, achieving proven performance gains over alternatives from Intel and AMD, and navigating the delicate balance between being both competitor and supplier to major tech companies [3].

Summarized by

Navi