AI in recruitment transforms job interviews as candidate fraud and deepfakes break hiring systems

3 Sources

[1]

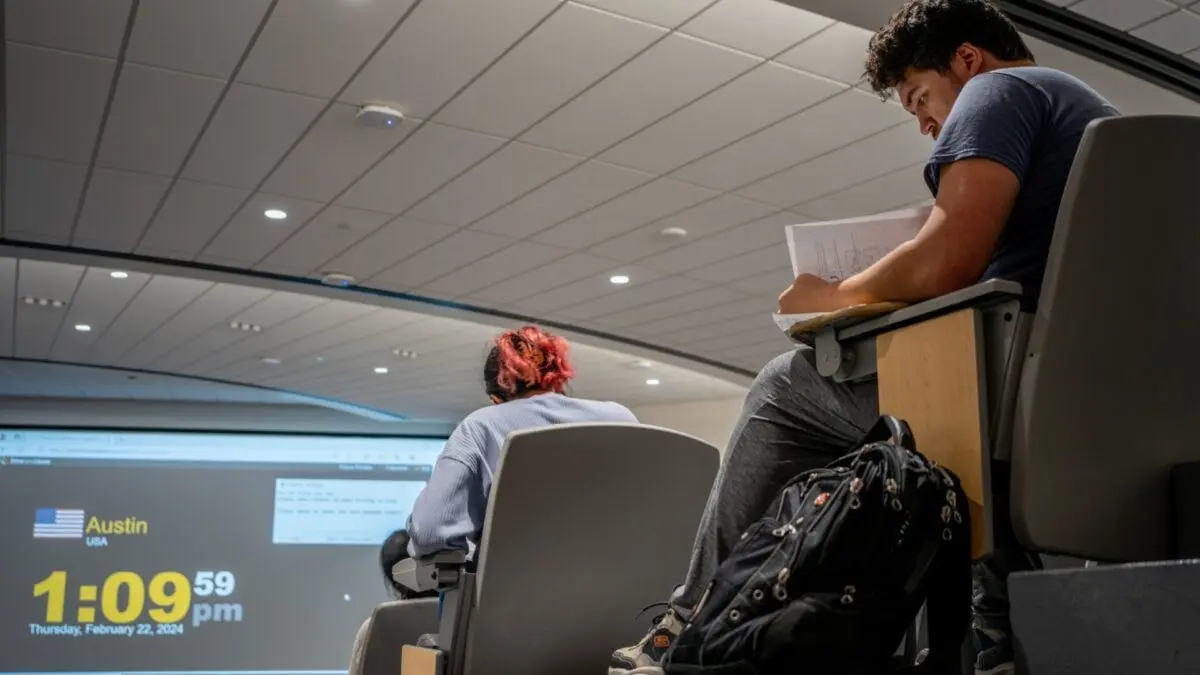

Why recruiters are making interviews 'AI-free zones'

As soon as Michael Kienle becomes accustomed to one use of AI, jobseekers think up improbable new ways to sneak it into the application process. "We know they're using it to write their CVs, their application letters," says Kienle, global vice-president for talent acquisition at L'Oréal. But recently candidates have become more brazen. One of his recruiters told him a jobseeker had used AI in a video interview, simply repeating answers the bot would provide. The deception was spotted because "the answers didn't come naturally", explains Kienle.

Candidates reading AI-generated answers in interviews is just one of the unforeseen consequences employers are reporting as the technology rips through the jobs market. As vacancies shrink, the ease of making applications has left employers overwhelmed with candidates but often without the meaningful information they need to tell promising hires apart from AI slop. The din and distrust are prompting some companies to reassess their approach to hiring and training: introducing hidden difficulties into application forms, adding practical tests and banning online exams to prevent cheating, as well as increasing their own use of AI screening tools.

"I think AI has actually pushed the interview process back to being more human focused," says David Brown, chief executive of recruiter Hays Americas. "Employers are struggling with deciphering between resumes, because now it's a lot easier to submit en masse and they all look like great fits. From a candidate perspective, it's how do I stand out?"

In response, L'Oréal decided to "sanctuarise the interview" as a first principle, says Kienle. Beyond a basic transcription tool, which candidates can opt out of, he says AI is not used in interviews. "It will be in person, person to person . . . 45 minutes or one hour . . . that is an AI-free zone." The second principle is to ensure all candidates have at least one face-to-face interview before they start at the company.
Kienle says he meets each member of his 200-person recruiting team and puts them all through two years of training focused on L'Oréal's particular recruitment needs. "If you have good recruiters, experienced recruiters, they do not ask the typical questions," he says. "It's all about authenticity: if you just repeat things that AI told you, it's not authentic, it's not you. [The recruiters] have to know the personality, who they are talking to."

While accounting firm EY encourages applicants to use AI to help them prepare, "when you're in an interview and assessment we want to hear the real you and [it] is really not permitted", says Irmgard Naudin ten Cate, EY's global head of talent acquisition. EY is prioritising in-person interaction and has trained more than 20,000 interviewers to "stress-test candidates' thinking", spotting answers that may have been prepared by AI without independent thought or real knowledge. Recruiters can identify AI answers if they "probe correctly", says Naudin ten Cate. "We don't want to know what you've done, we want to know how you think, how you make decisions, how you handle conflicts . . . It's that type of question . . . [where we can] detect rehearsed answers."

February data from Deel, an HR platform, showed more than 40 per cent of employers had extended probation periods because they were finding it harder to assess people's true skills during the application process. Around three-quarters of surveyed senior HR leaders had noticed a steep rise in AI-generated applications, and a similar proportion deemed CVs and cover letters less reliable than two years ago. "AI has widened the gap between how candidates present themselves and how they perform," says Matt Monette, UK&I lead at Deel. "Employers are telling us they can only understand real capability once someone starts the job."

The issue is also extending beyond hiring.
The Association of Chartered Certified Accountants, the world's largest accounting body, said last year that it would require candidates to sit assessments in person, ending the online exams that have been running since the pandemic, as AI has made it harder to combat cheating.

Rigorous testing does not mean excluding AI, however. Some employers want would-be hires to prove they can use it. Consultancy McKinsey is running a pilot that asks candidates to use its AI tool Lilli to analyse a case study as one of the tasks testing aptitude, curiosity and judgment during the application process. Mayank Gupta, chief executive of CaseBasix, a company that prepares McKinsey candidates, said in January that other firms such as Boston Consulting Group and Bain were likely to incorporate AI into the training process.

Many candidates say they have turned to AI partly in response to its use by recruiters. A rise in automated screening means many jobseekers find applications are quickly rejected with minimal human interaction, prompting them to send even more, in a vicious circle of AI applying and screening.

Employers admit AI will play a role in filtering applications. But some are holding back on rolling out tools more widely, in part due to concerns about whether they can adequately assess candidates or comply with legislation that demands protections against bias and against the sharing or unnecessary processing of personal information.

Recruiters are also starting to make candidates jump through more hoops in the early stages of recruitment in an attempt to weed out the least serious. Advertising agency VML, for example, was typically receiving about 400 applications for an entry-level role but finding that only 10 per cent fitted the brief. Last year, after it asked candidates to create a 60-second video saying why they were a good fit, 40 per cent dropped out, according to Graham Powell-Symon, talent acquisition director.

"Trying to decipher whether they're actually viable candidates for the role is very time consuming. You have to be more rigorous," he says. "Anybody who's got the commitment to make a video likely has some degree of commitment to us as a business."

VML and other employers including Virgin Group are also asking candidates to use new tools developed by start-ups such as Vizzy to create profiles that showcase the professional achievements typically found on CVs, alongside richer details such as psychometric test scores and portfolios. Sarah Lock, recruitment lead at Virgin Group, says the tool helps inform interviews, which now "place more emphasis on real-world thinking and values" and take a more conversational approach. "We're seeing more applicants than ever before, but less real differentiation," she says. "It helps us cut through the sameness [so] by the time candidates reach interview . . . we already have a much more rounded view of who they are. That allows interviews to go deeper."

Some employers are looking for other ways to engage candidates. For example, L'Oréal's Brandstorm, an international competition where participants compete on real-life business scenarios to win a work placement, has been running for decades, but in the past three years registrations have tripled to 4,000 in the UK. "It's a very important source of recruitment," says Kienle.

EY has created virtual job simulations, where would-be applicants can practise online role plays setting out what consultants do and what it might be like to work at EY, and which it says about 50,000 students have completed. Naudin ten Cate notes that 88 per cent of applicants who participate in the simulation say they are confident during their interview as a result. For EY, it has the benefit of engaging would-be hires early on, so anxious young jobseekers are more likely to make targeted rather than scattergun applications. "We engage with the person . . . guiding someone through it versus having this whole big funnel at the top," she says. "There's less noise in the system, and for the [applicant], it's a better experience."

[2]

Gen Z is using AI in job interviews as graduate unemployment climbs

The class of 2025 graduated into the worst entry-level job market in five years. Now a growing number of them are using AI tools during live job interviews, and a cottage industry of startups is rushing to sell them the means to do it. Whether that constitutes cheating or common sense depends on which side of the hiring table you sit on, but the numbers behind the trend are not in dispute.

Unemployment among recent college graduates aged 22 to 27 climbed to 5.7 per cent by the end of 2025, according to the Federal Reserve Bank of New York, well above the 4.2 per cent national rate. Underemployment, which measures graduates working in jobs that do not require a degree, hit 42.5 per cent, its highest level since 2020. The tech sector, once the default destination for ambitious graduates, shed roughly 245,000 jobs in 2025, according to tracking data from Layoffs.fyi and TrueUp. Another 59,000 have gone in the first three months of 2026.

The graduates who entered this market did so having watched an older cohort get hired, promoted, and then laid off at companies like Meta, Amazon, and Google in the space of 18 months. The lesson they drew was not subtle: competence and loyalty are insufficient protection. And so they arrived armed with a technology that their universities had spent four years telling them to learn.

The phenomenon surfaced this week in a press release from LockedIn AI, a startup that sells a product called DUO: a service that combines real-time AI transcription of interview questions with a live human coach who can see the candidate's screen and provide strategic guidance during the conversation. The press release, distributed via GlobeNewswire, was framed as a trend piece about generational resilience. It was, more precisely, a product advertisement.

LockedIn AI is not alone. Its founder, Kagehiro Mitsuyami, also co-founded Final Round AI, a similar product.
Both companies have faced questions about the authenticity of their marketing: reviews on Trustpilot appear to be AI-generated, and independent reviewers have noted that the software can be visible to interviewers when candidates switch between windows. A Gartner survey of 3,000 job seekers found that six per cent admitted to interview fraud, including having someone else impersonate them. Fifty-nine per cent of hiring managers suspect candidates of using AI to misrepresent themselves.

The market for these tools is growing precisely because the conditions that created them are getting worse, not better. The National Association of Colleges and Employers found that 45 per cent of employers characterised the job market for the class of 2026 as "fair," down from "good" the previous year. Hiring projections for new graduates are essentially flat, at 1.6 per cent growth. For candidates submitting dozens of applications and receiving interview invitations at rates below two per cent, the temptation to use every available advantage is considerable.

The most effective argument in favour of AI-assisted interviewing is not about fairness in the abstract. It is about a specific inconsistency in how technology companies treat AI. Google's chief executive, Sundar Pichai, disclosed during an April 2025 earnings call that more than 30 per cent of the company's new code is now generated with AI assistance, up from 25 per cent six months earlier. Amazon, Microsoft, and Meta all encourage their engineers to use AI coding tools daily. Applicant tracking systems powered by AI screen and reject resumes before a human ever reads them. The hiring pipeline is automated from end to end, except on the candidate's side.

For graduates who spent their university years being told that AI fluency would define their careers, being asked to pretend the technology does not exist during a 45-minute interview feels less like a test of competence and more like a test of compliance.
The companies asking them to do so are, in many cases, the same ones that will expect them to use AI tools from their first day on the job.

This argument has real force, but it also has limits. There is a difference between using AI to write code more efficiently and using AI to answer questions about your own experience, judgment, and problem-solving ability. An interview is, at least in theory, a conversation designed to evaluate what a candidate knows and how they think. Outsourcing those answers to a language model, or to a human coach whispering through an earpiece, undermines the purpose of the exercise regardless of how unfair the exercise may be.

Companies are already adapting. In-person interview rounds rose from 24 per cent in 2022 to 38 per cent in 2025, according to hiring industry data. Seventy-two per cent of recruiting leaders now conduct at least one in-person stage specifically to combat AI-assisted fraud. Some firms have moved to whiteboard exercises, pair programming sessions, and unstructured conversations that are harder to augment with real-time tools.

The deeper question is whether the interview itself is the right mechanism for evaluating candidates in an AI-saturated labour market. If the goal is to assess what a candidate can produce with the tools they will actually use on the job, then banning those tools during the evaluation makes little sense. If the goal is to assess raw cognitive ability and domain knowledge, then AI assistance defeats the purpose entirely. Most interviews attempt to do both, which is why the current system satisfies no one.

What is clear is that the class of 2025 did not create this problem. They inherited a job market reshaped by pandemic-era overhiring, aggressive cost-cutting, and an AI revolution that is simultaneously creating and destroying opportunity at a pace that neither employers nor candidates have fully absorbed. Their decision to use AI in interviews is not rebellion. It is the predictable behaviour of rational actors in a system that has told them, repeatedly and in every other context, that AI is not optional. The fact that the system now objects to them taking that message seriously is, at minimum, worth examining.

[3]

The deepfake candidate: How AI is breaking the hiring process

Organisations that rushed to adopt automated, AI-led hiring are discovering that the very technology that speeded up recruitment and made it more scalable is now making it easier to game the HR system. Advances in generative AI and deepfake tools have turned candidate impersonation from a fringe concern into a systemic risk, one that HR systems are not designed to detect.

A July 2025 Gartner survey of 3,000 job candidates found 6% admitted to participating in interview fraud, either posing as someone else or having someone else pose as them in an interview. According to the research firm, one in four candidate profiles worldwide will be fake by 2028.

Consider this example. On March 24, Bengaluru-based InCruiter uncovered a sophisticated deepfake impersonation attempt during a hiring process for a global fintech and private credit client. The client had deployed InCruiter's AI interview software, which enables fully automated interviews without a human recruiter present. During one such session, a candidate responded to technical questions fluently, with nothing initially appearing amiss. However, InCruiter's continuous deepfake detection system flagged subtle visual anomalies: early signs of a synthetic identity overlay. Further analysis confirmed that the person on screen was not the real candidate, but an AI-generated avatar designed to mimic their appearance and voice, likely to bypass automated evaluation. The platform identified behavioural and visual inconsistencies throughout the interview and generated a detailed proctoring report with timestamped evidence, trust scores, and flagged indicators. This allowed the client to quickly review the case and reject the candidate before they advanced further.

Broader shift

The shift to remote hiring accelerated during the pandemic, with companies leaning heavily on AI interview platforms, asynchronous video assessments, and automated screening tools.
Firms like HireVue and Pymetrics built entire business models around the promise of objective, bias-free hiring. But with generative AI tools, candidates may not be who, or what, they appear to be.

Today, a job applicant does not need advanced technical skills to deploy deception. Off-the-shelf tools can clone voices, generate real-time answers, and even manipulate facial expressions during video calls. Startups and open-source projects alike have made it trivial to run a parallel AI assistant during interviews, feeding answers through voice modulation or subtle on-screen prompts. In more sophisticated cases, deepfake overlays can replace a candidate's face entirely. Cybersecurity firm iProov reported a surge in "presentation attacks," where synthetic identities are used to bypass identity verification systems.

The implications go beyond a few bad hires. In technical roles especially, companies risk onboarding individuals who lack the claimed expertise, potentially compromising product quality, security, and team productivity. Deepfake interview fraud is already embedded in hiring cycles. Historically, cheating in online interviews was estimated at 10-15%, but with the launch of its deepfake detection technology in early 2026, InCruiter found fraudulent activity in 25-30% of suspicious sessions, nearly double what even experienced human interviewers previously identified. In regulated industries such as finance or healthcare, the stakes are even higher, because compliance violations could follow.

In 2023, for example, several US-based tech firms reported cases where candidates passed multiple rounds of AI-led interviews, only to be exposed later when their on-the-job performance didn't match their interview proficiency. In some instances, the person who showed up for work wasn't even the same individual who appeared on video during the hiring process.
According to InCruiter, IT and tech account for roughly 60% of deepfake fraud cases, followed by BFSI (15%), BPOs and KPOs (10%), startups (10%), and manufacturing and core sectors (5%). Crucially, deepfake fraud does not discriminate by company size but targets process weaknesses, making any organisation running virtual interviews susceptible.

"AI-led interviews are rapidly becoming the future of hiring at scale," Anil Agarwal, founder and CEO of InCruiter, said in a press statement. "But as organisations automate, they must prepare for more sophisticated fraud. We've facilitated over 15 million interview minutes across enterprise clients, and even before launching deepfake detection, 10-15% of interviews showed clear signs of cheating." Agarwal claims his company's technology can help recruiters detect fraudulent activity in 25-30% of suspicious sessions.

Addressing the situation

The problem is that hiring systems optimised for efficiency are colliding with technologies optimised for imitation. AI interview tools are trained to evaluate structured responses, tone, and behavioural cues, but generative AI is increasingly capable of mimicking all three. The result is a feedback loop where machines are effectively interviewing other machines.

Employers need a multi-layered approach to mitigate hiring fraud, built around clear expectations, smarter assessments, and continuous validation. First, organisations must define and communicate what constitutes acceptable AI use, advises Gartner, while making candidates aware of fraud detection mechanisms and potential legal consequences. Second, the assessment process itself should be designed to surface misconduct, with recruiters trained to spot evasive behaviour and hiring workflows that include safeguards such as in-person interviews. Gartner found that 62% of candidates are more likely to apply when such interviews are required. Finally, fraud prevention should extend beyond initial screening.
Employers need system-level checks, including stronger background verification, risk-based monitoring, and embedded tools like identity verification and anomaly detection, ensuring integrity across the entire hiring lifecycle rather than relying on one-time scrutiny, according to Gartner.

Companies are beginning to respond, but solutions remain patchy. Some are reintroducing in-person rounds or live technical assessments. Others are investing in liveness detection and biometric verification tools. Platforms like Onfido and Persona are seeing increased demand for identity checks embedded within hiring workflows. However, these fixes come with trade-offs. More rigorous verification can slow down hiring and reintroduce biases that AI tools were meant to eliminate. There are also privacy concerns, especially in regions with strict data protection laws.

AI-led hiring therefore needs a fundamental redesign. Just as email systems had to evolve to combat spam, recruitment platforms may need to build "trust layers" that verify not just identity, but authenticity in real time. This could include continuous verification during interviews, AI systems trained to detect synthetic behaviour, or hybrid models that combine human judgment with machine efficiency. In a world where anyone can sound like an expert and look like a perfect candidate, organisations must rethink what it means to truly know who they are hiring.


Companies are declaring job interviews AI-free zones after discovering candidates using generative AI to answer questions in real time. From deepfake candidate impersonation to AI-generated submissions overwhelming recruiters, the technology is forcing employers to rethink hiring. L'Oréal now puts each of its 200 recruiters through two years of training, while Gartner warns one in four candidate profiles worldwide will be fake by 2028.

Companies Turn Job Interviews Into AI-Free Zones

AI in recruitment has reached a tipping point. Michael Kienle, global vice-president for talent acquisition at L'Oréal, recently learned from one of his recruiters that a candidate had simply repeated AI-generated answers during a video interview [1]. The deception was spotted because "the answers didn't come naturally," but the incident reflects a broader crisis in hiring. Companies are now declaring job interviews AI-free zones, prioritizing human interaction and authenticity over efficiency as candidate fraud reaches alarming levels.

L'Oréal has implemented a two-principle approach: no AI in interviews beyond a basic transcription tool that candidates can opt out of, and at least one face-to-face interview before any hire starts [1]. Kienle meets each member of his 200-person recruiting team and puts them through two years of training focused on L'Oréal's particular recruitment needs. "If you have good recruiters, experienced recruiters, they do not ask the typical questions," he explains, emphasizing that authenticity matters more than polished responses.


Deepfake Candidate Impersonation Becomes Systemic Risk

The problem extends far beyond candidates reading scripted answers. On March 24, Bengaluru-based InCruiter uncovered a sophisticated deepfake impersonation attempt during a hiring process for a fintech client using its fully automated AI interview software [3]. An AI-generated avatar mimicked a real candidate's appearance and voice to bypass automated evaluation. InCruiter's deepfake detection system flagged subtle visual anomalies and behavioral inconsistencies, generating timestamped evidence that allowed the client to reject the candidate before they advanced further.

A July 2025 Gartner survey of 3,000 job candidates found 6 per cent admitted to participating in interview fraud, either posing as someone else or having someone else pose as them [2][3]. Gartner warns that one in four candidate profiles worldwide will be fake by 2028. InCruiter reports that IT and tech account for roughly 60 per cent of deepfake fraud cases, followed by BFSI at 15 per cent, with fraudulent activity detected in 25-30 per cent of suspicious sessions [3].

Gen Z Embraces AI Tools in Job Interviews Amid Dire Market

The class of 2025 graduated into the worst entry-level job market in five years. Unemployment among recent college graduates aged 22 to 27 climbed to 5.7 per cent by the end of 2025, according to the Federal Reserve Bank of New York, well above the 4.2 per cent national rate [2]. Underemployment hit 42.5 per cent, its highest level since 2020. The tech sector shed roughly 245,000 jobs in 2025, with another 59,000 lost in the first three months of 2026, according to tracking data from Layoffs.fyi and TrueUp.

Gen Z candidates are turning to startups such as LockedIn AI, whose DUO service combines real-time AI transcription of interview questions with a live human coach who provides strategic guidance during the interview, and Final Round AI, a similar product co-founded by LockedIn AI's founder [2]. The ethical debate centers on a specific inconsistency: Google's CEO, Sundar Pichai, disclosed during an April 2025 earnings call that more than 30 per cent of the company's new code is now generated with AI assistance, yet candidates are expected to avoid AI tools in job interviews [2]. For graduates told that AI fluency would define their careers, the restriction feels like a test of compliance rather than competence.

Employers Struggle With Assessing True Skills

February data from Deel, an HR platform, showed more than 40 per cent of employers had extended probation periods because they were finding it harder to assess people's true skills during the application process [1]. Around three-quarters of surveyed senior HR leaders had noticed a steep rise in AI-generated applications, and a similar proportion deemed CVs and cover letters less reliable than two years ago. "AI has widened the gap between how candidates present themselves and how they perform," says Matt Monette, UK&I lead at Deel.

Accounting firm EY has trained more than 20,000 interviewers to "stress-test candidates' thinking," spotting answers that may have been prepared by generative AI without independent thought [1]. Irmgard Naudin ten Cate, global head of talent acquisition at EY, explains that recruiters can identify AI answers if they "probe correctly," asking how candidates think, make decisions, and handle conflicts rather than what they've done. In-person interview rounds rose from 24 per cent in 2022 to 38 per cent in 2025, with 72 per cent of recruiting leaders now conducting at least one in-person stage specifically to combat AI-assisted fraud [2].

Screening Tools and Identity Verification Under Pressure

The rise of automated screening tools and applicant tracking systems has created a vicious circle. Many candidates say they turned to AI partly in response to recruiters using it, as automated systems quickly reject applications with minimal human interaction [1]. This prompts jobseekers to send even more applications, overwhelming employers while giving them little meaningful information to identify promising hires. Fifty-nine per cent of hiring managers suspect candidates of using AI to misrepresent themselves [2].


The Association of Chartered Certified Accountants, the world's largest accounting body, said last year it would require candidates to sit assessments in person, ending online exams that have run since the pandemic, as generative AI has made it harder to combat cheating [1]. Meanwhile, consultancy McKinsey is running a pilot that asks candidates to use its AI tool Lilli to analyze a case study, testing aptitude, curiosity and judgment while embracing the technology [1]. The distrust and complexity signal a fundamental shift: hiring systems optimized for efficiency are colliding with technologies optimized for imitation [3], forcing companies to choose between speed and authenticity in skills assessment.

Summarized by

Navi
