Epstein victims sue Google and Trump administration over AI Mode exposing personal information

3 Sources

[1]

Epstein victims sue Google, Trump administration for disclosing personal information

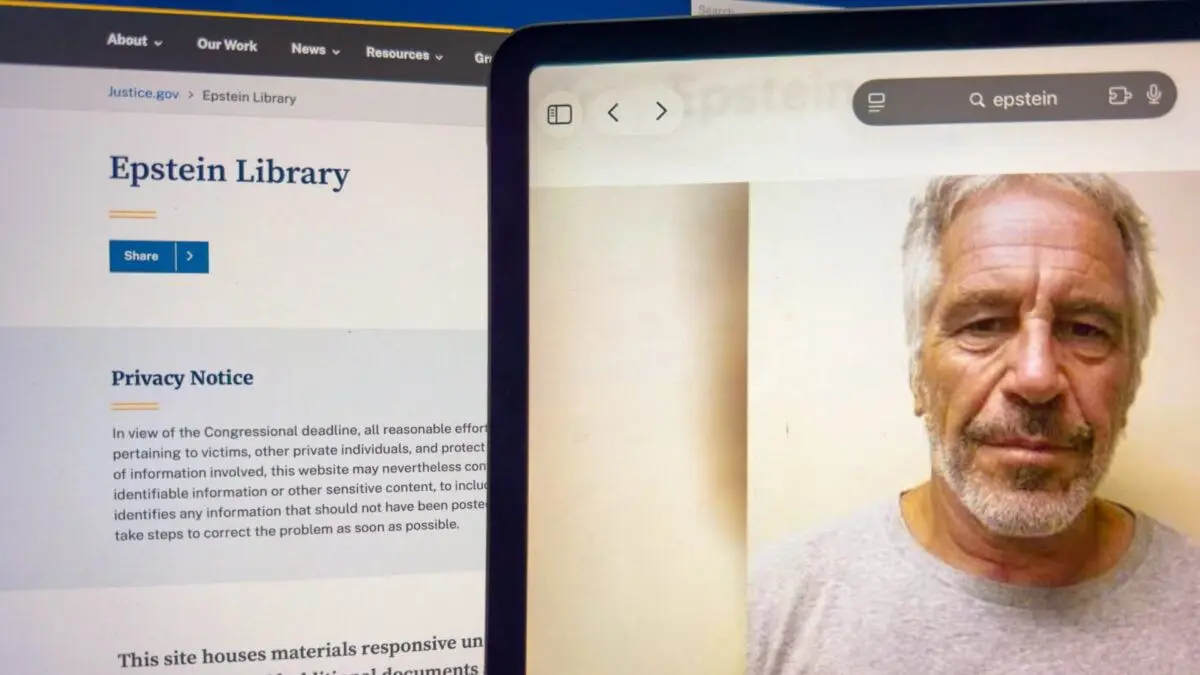

A tablet screen displays a portrait of Jeffrey Epstein beside the U.S. Department of Justice website page titled Epstein Library, Feb. 11, 2026.

A victim of notorious sex predator Jeffrey Epstein filed a class action lawsuit on behalf of herself and other survivors against the Trump administration and Google for allegedly wrongfully disclosing and publishing personal information about them.

The suit, filed on Thursday in the Northern District of California, where Google is headquartered, claims the U.S. Justice Department "outed" about 100 Epstein survivors in late 2025 and early 2026, and that even after the government acknowledged the mistake and withdrew the information, "online entities like Google continuously republish it, refusing victim's pleas to take it down."

With respect to Google, the suit says the company's core search engine and its artificial intelligence summary feature called AI Mode were responsible for publishing victims' personal information. "Survivors now face renewed trauma," the suit says. "Strangers call them, email them, threaten their physical safety, and accuse them of conspiring with Epstein when they are, in reality, Epstein's victims."

The complaint was filed by an Epstein victim who used the pseudonym Jane Doe. After months of pressure, the DOJ earlier this year released more than 3 million additional pages of documents related to Epstein, including images and videos. In August 2019, Epstein killed himself in a jail in New York City, weeks after being arrested on federal child sex trafficking charges.

In taking on Google, the plaintiffs are testing whether a major safety net for internet companies and social media sites has its limitations. Section 230 of the Communications Decency Act governs internet speech and has long allowed major platforms in the U.S. to avoid liability for content appearing on their websites and apps.

With the explosion of AI-generated content and new controversies emerging regarding the publishing of non-consensual sexual images, including so-called deepfake porn, internet giants face a fresh new challenge in defending their turf. Earlier this month, Google was sued in a wrongful death case by a 36-year-old man's father, who alleged the company's Gemini chatbot convinced his son to attempt a "mass casualty attack" and to eventually commit suicide.

The lawsuit from Epstein survivors alleges Google "intentionally," through its design, fueled harassment by hosting information about the victims, and said its AI Mode feature "is not a neutral search index."

The complaint comes after two jury verdicts this week -- both against Meta and one involving Google's YouTube -- concluded that the online platforms are failing to adequately police their sites for content that's causing real-life harm. New Mexico Attorney General Raúl Torrez, who spearheaded his state's case against Meta, told CNBC this week that "there's a distinct possibility that these cases motivate Congress to re-examine Section 230 and, if not eliminate it, dramatically revise it."

The latest suit claims Google's AI-generated content revealed personal information about the victims. It said Google's AI Mode responded to queries asking for such details. The complaint alleges that the government has failed to force tech platforms to take down materials in the past, allowing for the exposure of victims' information. "As a part of this response, generated repeatedly on multiple platforms and across various devices, Google's AI Mode included Plaintiff's full name, displayed her full email address, and generated a hypertext link allowing anyone to send direct email to Plaintiff with the click of a button," the suit says.

Representatives from Google and the Trump administration did not immediately respond to requests for comment.

[2]

Epstein Victims Sue Google, Claim AI Mode Exposed Personal Information

A victim of Jeffrey Epstein filed a class action lawsuit on Thursday against Google, saying that the company's AI Mode feature published personal information on the sex trafficker's victims.

In response to legislative action, the Department of Justice began releasing more than 3 million pages of evidence in its case against Epstein in batches late last year into early this year. But the roll-out has been deemed problematic, with some predators' names redacted while several survivors' identities were outed in improper redactions.

"The United States, acting through the DOJ, made a deliberate policy choice to prioritize rapid, large-volume disclosure over protection of Epstein survivors’ privacy," according to the lawsuit filed in U.S. District Court for the Northern District of California.

The lawsuit claims that the survivors have not only had to relive their trauma but have also been victims of harassment since their information was made public. Though the DOJ later removed the errors, the information was kept online by Google's AI search function, AI Mode, the plaintiff claims.

"Even after the government acknowledged the disclosure violated the rights of survivors and withdrew the information, online entities like Google continuously republish it, refusing victim's pleas to take it down," the lawsuit says.

Upon searching the name of the plaintiff, who goes by "Jane Doe," as well as the names of other victims she is representing with this lawsuit, Google's AI Mode displayed their "full name, contact information, cities of residence, and association with Jeffrey Epstein," the suit alleges. In the plaintiff's case, the AI also "generated a hypertext link allowing anyone to send direct email to Plaintiff with the click of a button."

The lawsuit claims that the victim notified Google of the problem on multiple occasions over the past two months to no avail. "Despite receiving actual notice of the violations, the substantial harm caused by its continued dissemination, and the status of many Class members as sexual abuse survivors entitled to heightened privacy protections under the law, Google has failed and refuses to remove, de-index, or block access to the offending materials," the lawsuit claims.

"Notably, several other publicly available AI tools that generate content by analyzing online sources, such as ChatGPT, Claude, and Perplexity, provided no victim-related information whatsoever in similar repeated testing."

Unlike Google search, AI Mode "is not a neutral search index; it is an active recommender and content generator," the lawsuit argues, and could be pleaded as "actionable doxxing."

The lawsuit comes at the end of a week when tech giants' legal responsibility for online content has been tested. Meta and Google were found liable in a social media addiction trial in Los Angeles on Wednesday, and Meta was found liable in an online child safety trial in New Mexico on Tuesday. Both lawsuits were deemed landmark suits that could turn into watershed moments in the way online free speech is regulated in the United States.

Currently, under Section 230 of the Communications Decency Act, big tech giants like Google that operate these online platforms are relieved of any liability for content posted by third parties. With this week's rulings against Meta and Google, the protection tech giants receive from Section 230 is now seriously challenged.

Section 230's applicability to AI has been a topic of contention. Sen. Ron Wyden, who helped write the law, told Gizmodo in January that AI chatbots are not protected by it.

The Department of Justice and Google did not immediately respond to Gizmodo's request for comment.

[3]

Victim of Jeffrey Epstein files class-action lawsuit against Google

There's more legal trouble brewing for Google, as a victim of Jeffrey Epstein has filed a class action suit against the world's largest search engine, alleging its AI Mode improperly published personal information about sex trafficking victims.

The problem began with the Department of Justice, whose rollout of the Epstein files, following the passage of the Epstein Files Transparency Act last year, was riddled with hasty redactions, which often protected the identities of alleged perpetrators while the identities of victims were left unconcealed. The DOJ, however, has acknowledged the errors and removed the personal information from its website.

The problem now lies with Google, and more specifically, its artificial intelligence, which trawled the initial, unredacted document dump and still hosts the sensitive personal information of sex trafficking victims. "Even after the government acknowledged the disclosure violated the rights of survivors and withdrew the information, online entities like Google continuously republish it, refusing [the] victim's pleas to take it down," the lawsuit reads.

The allegations don't stop there. Not only did Google knowingly refuse to remove the sensitive information, which includes "full name, contact information, cities of residence, and association with Jeffrey Epstein," but the AI also allegedly "generated a hypertext link allowing anyone to send direct email to Plaintiff with the click of a button."

Worse still for Google, the lawsuit alleges that other artificial intelligence companies did not improperly publish victim information: "Notably, several other publicly available AI tools that generate content by analyzing online sources, such as ChatGPT, Claude, and Perplexity, provided no victim-related information whatsoever in similar repeated testing."

This latest lawsuit comes on the heels of a damning Los Angeles jury ruling that found both Meta and Google-owned YouTube liable for "designing products that addict and harm children," prioritizing online engagement over the well-being of their users. At the time of writing, Google has not issued a public statement on the lawsuit, but a verdict in this trial could set important precedents for privacy protections in the age of AI, with implications that would ripple across the tech landscape.

A class-action lawsuit filed by Jeffrey Epstein survivors targets Google and the Trump administration for allegedly disclosing and republishing personal information about victims. The suit claims Google's AI Mode feature continues to display sensitive details including names, email addresses, and contact information despite repeated requests for removal.

Google Lawsuit Targets AI Mode for Exposing Epstein Victims' Data

A victim of Jeffrey Epstein filed a class-action lawsuit on Thursday in the Northern District of California against Google and the Trump administration, alleging both entities wrongfully disclosed and published personal information about survivors of sex trafficking [1]. The complaint, brought by a plaintiff using the pseudonym Jane Doe, claims that the Department of Justice "outed" approximately 100 Epstein victims in late 2025 and early 2026 through improper redactions in document releases [2]. While the DOJ later acknowledged the errors and removed the information from its website, the lawsuit alleges that Google's AI Mode continues to republish sensitive details, refusing victims' pleas to take it down [3].

AI-Generated Content Reveals Sensitive Details Despite Removal Requests

The lawsuit specifically targets Google's AI summary feature, arguing that AI Mode is "not a neutral search index" but rather "an active recommender and content generator" [2]. According to the complaint, when users searched for the plaintiff's name and other victims' names, Google's AI Mode displayed their "full name, contact information, cities of residence, and association with Jeffrey Epstein" [2]. In the plaintiff's case, the AI even "generated a hypertext link allowing anyone to send direct email to Plaintiff with the click of a button" [1]. The lawsuit claims the victim notified Google of the problem on multiple occasions over the past two months to no avail [2].

Other AI Platforms Avoided Publishing Victim Information

What makes this Google lawsuit particularly damaging is the allegation that other AI platforms did not surface the same data. The complaint notes that "several other publicly available AI tools that generate content by analyzing online sources, such as ChatGPT, Claude, and Perplexity, provided no victim-related information whatsoever in similar repeated testing" [2]. This comparison suggests that Google's AI Mode may have design flaws or may lack privacy protections that competitors have implemented, raising questions about platform responsibility in the age of AI-generated content.

DOJ Document Release Created Initial Privacy Crisis

The problem originated when the Department of Justice released more than 3 million pages of documents related to Jeffrey Epstein earlier this year, following months of pressure and legislative action [1]. The lawsuit alleges that "The United States, acting through the DOJ, made a deliberate policy choice to prioritize rapid, large-volume disclosure over protection of Epstein survivors' privacy" [2].

The rollout was riddled with hasty redactions that often protected alleged perpetrators' identities while leaving survivors' information in unredacted files [3]. The exposed personal information has led to renewed trauma for survivors, with the suit stating: "Strangers call them, email them, threaten their physical safety, and accuse them of conspiring with Epstein when they are, in reality, Epstein's victims" [1].

Section 230 Protections Face Fresh Challenge

This case tests whether Section 230 of the Communications Decency Act, which has long shielded internet companies from liability for third-party content, extends to AI-generated content [1].

The lawsuit comes at a critical moment, following two jury verdicts this week, both against Meta and one also involving Google's YouTube, that concluded online platforms are failing to adequately police their sites for harmful content [1]. New Mexico Attorney General Raúl Torrez told CNBC that "there's a distinct possibility that these cases motivate Congress to re-examine Section 230 and, if not eliminate it, dramatically revise it" [1]. Senator Ron Wyden, who helped write Section 230, has stated that AI chatbots are not protected by it [2]. A ruling in this case could establish important precedents for privacy protections in the AI era, with implications affecting data removal policies and content generator oversight across the tech industry. The lawsuit's claim of "actionable doxxing" and allegations that Google "intentionally" fueled harassment through its design choices signal that courts may soon need to define new boundaries for platform liability in cases involving AI systems and sensitive personal information [1][2].

Summarized by Navi
